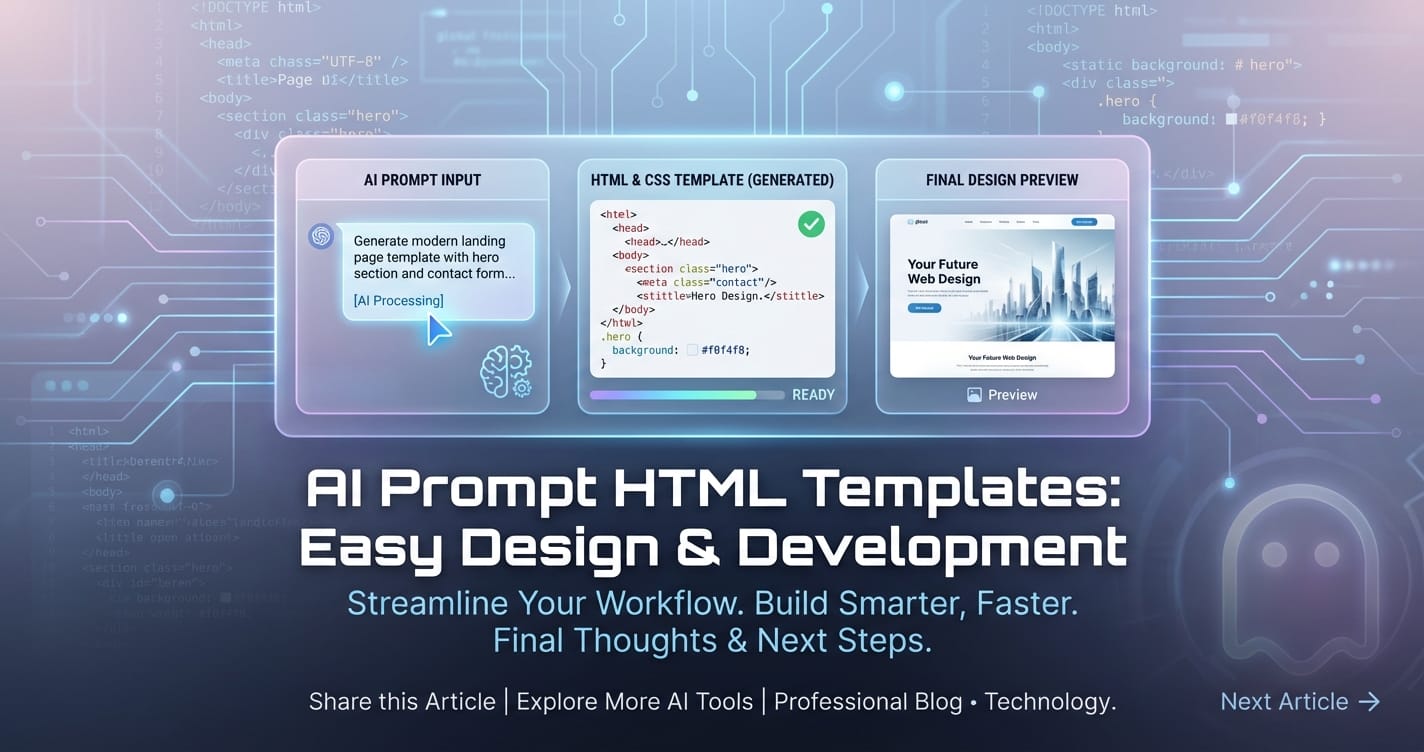

AI Prompt HTML Templates: Easy Design & Development

In the rapidly evolving landscape of artificial intelligence, the ability to effectively communicate with large language models (LLMs) has transitioned from an esoteric skill to a cornerstone of modern software development. Prompt engineering, the art and science of crafting inputs to elicit desired outputs from AI, is at the heart of this communication. Yet, as AI applications proliferate, the manual, ad-hoc approach to prompt creation quickly becomes unsustainable, leading to inconsistencies, maintenance nightmares, and a steep learning curve for developers and designers alike. This is where the concept of AI Prompt HTML Templates emerges as a powerful paradigm, offering a structured, intuitive, and highly efficient pathway to designing and developing sophisticated AI interactions.

The promise of "easy design & development" isn't merely about superficial aesthetics; it delves into creating a robust, scalable, and manageable system for harnessing AI's power. This involves not only the visual and interactive elements that users or developers engage with – the HTML templates themselves – but also the critical backend infrastructure that orchestrates these interactions. At the core of this infrastructure lie sophisticated mechanisms for managing conversational state and semantic understanding, such as the Model Context Protocol, and robust routing and governance layers like the LLM Gateway. Together, these components transform the complex dance of AI integration into a streamlined, predictable, and remarkably accessible process.

This comprehensive exploration will delve into the synergistic relationship between user-friendly HTML templates and the powerful underlying technologies that make them shine. We will unpack how structured templates simplify the frontend experience, while simultaneously elucidating the crucial roles of context management and API gateways in ensuring seamless, efficient, and scalable AI operations. Our journey will highlight how these elements, when combined strategically, unlock a new era of AI application development, making it not just powerful, but genuinely easy to design and develop.

The Dawn of Prompt Engineering and Its Growing Pains

The advent of highly capable large language models has democratized access to sophisticated AI functionalities, from content generation and code assistance to complex data analysis and customer service. At the heart of interacting with these models is the "prompt" – the instruction or query that guides the AI's response. Initially, prompt engineering was often a manual, iterative process, where developers or data scientists would experiment with different wordings, structures, and examples to achieve the desired output. This trial-and-error approach, while insightful in its early stages, quickly reveals its limitations as projects scale.

Consider a scenario where an organization needs to deploy hundreds of AI-powered microservices, each requiring unique prompts for specific tasks. Managing these prompts as raw strings embedded within code becomes a significant burden. Small changes in model behavior, updates to an organization's brand voice, or the need to localize content for different regions would necessitate painstaking manual modifications across countless files. This manual effort not only introduces a high probability of errors but also drastically slows down development cycles and innovation. The lack of standardization also creates inconsistencies in AI output, making it difficult to maintain quality and reliability across different applications. Developers find themselves constantly re-solving similar problems, struggling with version control for prompts, and facing challenges in collaborating effectively on AI logic. These growing pains underscore an undeniable truth: the future of AI application development demands a more structured, systematic, and user-centric approach to prompt management. It requires moving beyond individual, ad-hoc prompt crafting to a more industrialized, template-driven methodology that embraces design principles and robust infrastructure.

HTML Templates as the Canvas for AI Prompts: Structuring Creativity

At its most fundamental, an AI Prompt HTML Template is a standardized, reusable structure, often rendered as a web form or interactive component, designed to facilitate the creation, customization, and deployment of AI prompts. Far from being just static text fields, these templates are dynamic interfaces that abstract away the complexity of raw prompt engineering, allowing developers and even non-technical users to design AI interactions with unprecedented ease and consistency. They act as the visual and interactive "canvas" upon which AI prompts are painted, ensuring that every brushstroke – every parameter, every piece of context, every instruction – is placed deliberately and effectively.

The power of HTML templates for AI prompts lies in their ability to standardize inputs, enforce structure, and guide users through the prompt creation process. Imagine a template for a "Product Description Generator." Instead of users manually typing out a lengthy prompt like "Write a compelling product description for a new gadget. It should be eco-friendly, target young professionals, highlight its long battery life, and compare it to existing smartwatches," the HTML template would present clear input fields: "Product Name," "Key Features (checkboxes or multi-select)," "Target Audience (dropdown)," "Desired Tone (slider)," and "Word Count (numeric input)." Each of these fields directly maps to a variable or a specific instruction within the underlying prompt structure. When the user fills out the form, the template dynamically constructs the optimized prompt string, ensuring all necessary components are present and correctly formatted for the LLM.
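As a rough illustration of how form fields map onto a prompt, the sketch below uses Python's standard-library `string.Template`. The field names (`product_name`, `features`, `audience`, `tone`, `word_count`), the template wording, and the sample product "EcoWatch S2" are hypothetical choices for this example, not part of any particular framework.

```python
from string import Template

# Hypothetical prompt template for the "Product Description Generator" form.
# Each $placeholder corresponds to one HTML input field.
PRODUCT_DESCRIPTION_TEMPLATE = Template(
    "Write a $tone product description of about $word_count words for "
    "$product_name, aimed at $audience. Highlight these features: $features."
)


def build_prompt(form: dict) -> str:
    """Turn submitted form values into a complete prompt string."""
    return PRODUCT_DESCRIPTION_TEMPLATE.substitute(
        product_name=form["product_name"].strip(),
        # Multi-select fields arrive as lists; join them into readable text.
        features=", ".join(form["features"]),
        audience=form["audience"],
        tone=form["tone"],
        # Numeric inputs arrive as strings from HTML forms.
        word_count=int(form["word_count"]),
    )


form_data = {
    "product_name": "EcoWatch S2",  # hypothetical product
    "features": ["eco-friendly materials", "long battery life"],
    "audience": "young professionals",
    "tone": "compelling",
    "word_count": "120",
}
print(build_prompt(form_data))
```

Because both the form lookups and `substitute` raise `KeyError` when a value is missing, an incomplete submission fails loudly instead of silently producing a partial prompt.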

Key Benefits of Template-Driven Prompt Design:

- Consistency and Standardization: Templates ensure that prompts adhere to predefined structures and best practices. This eliminates variability in prompt construction, leading to more predictable and consistent AI outputs across different applications or users. For a large enterprise, maintaining a consistent brand voice or technical standard in AI-generated content is paramount, and templates provide the mechanism to enforce this.

- Ease of Use and Accessibility: By presenting clear, guided input fields, templates lower the barrier to entry for prompt engineering. Non-technical users, such as marketing specialists, content creators, or customer service agents, can leverage AI without needing deep expertise in prompt syntax or LLM capabilities. This democratizes access to AI, allowing a broader range of personnel to integrate AI into their daily workflows.

- Reusability and Efficiency: Once a well-designed template is created for a specific task (e.g., email drafting, code generation, data summarization), it can be reused across countless instances. This drastically reduces development time and effort, as developers no longer need to craft prompts from scratch for similar functionalities. It also simplifies maintenance; if a core instruction needs to be updated, it's done once in the template definition, not across multiple disparate prompt strings.

- Collaboration and Version Control: Templates provide a centralized asset for managing prompt logic. Teams can collaborate on refining prompt structures, iterate on design, and easily track changes using standard version control systems. This structured approach prevents "prompt drift" where different team members might inadvertently use slightly varied prompts for the same task, leading to inconsistent results.

- Dynamic Prompt Generation: Beyond static text, HTML templates can incorporate advanced logic, conditional rendering, and dynamic data binding. This allows for the creation of highly adaptive prompts that adjust based on user input, historical data, or external variables. For instance, a template might include a conditional block that adds specific instructions if a user selects a "formal" tone versus an "informal" one, or includes extra details if a product is marked as "new."
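The conditional blocks described in the last item can be sketched in a few lines of plain Python. The instruction wording and the `tone`/`is_new` parameters are assumptions made for illustration; a real system might express the same logic in a template engine instead.

```python
def assemble_prompt(base: str, tone: str, is_new: bool) -> str:
    """Assemble a prompt from a base instruction plus conditional blocks."""
    parts = [base]
    # Conditional block selected by the template's hypothetical "tone" field.
    if tone == "formal":
        parts.append("Use a formal, professional register and avoid slang.")
    else:
        parts.append("Use a friendly, conversational register.")
    # Extra detail is added only when the product is flagged as new.
    if is_new:
        parts.append("Mention that this product is a new release.")
    return " ".join(parts)


print(assemble_prompt("Describe the product.", "formal", True))
```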

Designing Effective AI Prompt HTML Templates:

Crafting a good template goes beyond merely creating a form. It involves thoughtful design principles that consider both the human user and the AI model:

- Clarity and Simplicity: Each input field should be clearly labeled and its purpose evident. Avoid jargon. The template should guide the user intuitively without overwhelming them with too many options simultaneously.

- Granularity and Abstraction: Break down complex prompt elements into granular, manageable inputs. Abstract away the underlying prompt syntax, allowing users to focus on the content and intent. For example, instead of asking for "LLM temperature setting," provide options like "Creativity Level: Low, Medium, High."

- Validation and Guidance: Implement frontend validation to ensure inputs are in the correct format or within acceptable ranges. Provide inline help text or tooltips to explain specific fields and their impact on the AI's response.

- Flexibility and Customization: While templates enforce structure, they should also allow for custom inputs or "free text" areas where users can add specific nuances not covered by predefined fields. This balances structure with creative freedom.

- Preview and Feedback: Incorporating a real-time preview of the constructed prompt or even a simulated AI response within the template can significantly enhance the user experience, allowing for immediate feedback and iterative refinement.
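A minimal sketch of two of these principles, validation and abstraction, follows. It assumes a hypothetical form with `product_name`, `word_count`, and `creativity` fields; the 50 to 500 word range and the temperature values behind "Low, Medium, High" are illustrative assumptions, since sensible ranges differ by model. Validation like this usually runs in the browser as well; the Python version stands in for the server-side check, which should not be skipped because client-side checks can be bypassed.

```python
# Hypothetical mapping from the user-facing "Creativity Level" to a sampling
# temperature. The numbers are illustrative, not recommendations for any model.
CREATIVITY_TO_TEMPERATURE = {"Low": 0.2, "Medium": 0.7, "High": 1.0}


def validate_form(form: dict) -> list[str]:
    """Return human-readable errors; an empty list means the form is valid."""
    errors: list[str] = []
    if not str(form.get("product_name", "")).strip():
        errors.append("Product Name is required.")
    try:
        word_count = int(form.get("word_count", ""))
    except (TypeError, ValueError):
        errors.append("Word Count must be a whole number.")
    else:
        # Assumed acceptable range for this example.
        if not 50 <= word_count <= 500:
            errors.append("Word Count must be between 50 and 500.")
    if form.get("creativity") not in CREATIVITY_TO_TEMPERATURE:
        errors.append("Creativity Level must be Low, Medium, or High.")
    return errors


def generation_settings(form: dict) -> dict[str, float]:
    """Translate a validated form into the model parameter the user never sees."""
    return {"temperature": CREATIVITY_TO_TEMPERATURE[form["creativity"]]}
```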

The journey from a user filling out an HTML template to receiving a coherent AI response is facilitated by powerful backend mechanisms. The seemingly simple act of interacting with a web form belies the intricate orchestration happening behind the scenes, ensuring that the AI understands the request not just as a static string, but within its broader operational context. This brings us to the crucial role of the Model Context Protocol and the LLM Gateway – the invisible backbone that empowers these templates.

The Invisible Backbone: Model Context Protocol (MCP)

While HTML templates provide the user-friendly interface for crafting prompts, the true intelligence of an AI interaction lies in the AI's ability to understand the prompt within a broader context. This is where the Model Context Protocol (MCP) and the underlying concept of a context model become indispensable. An LLM, by itself, is stateless; each new prompt is often treated as an isolated event. However, for any meaningful, multi-turn conversation, personalized interaction, or complex task that builds upon previous information, the AI needs memory – a coherent understanding of what has been said, what has been done, and what the current operational environment entails. The MCP defines the standardized means by which this crucial contextual information is captured, organized, and delivered to the LLM, ensuring that prompts are not just understood literally, but semantically and situationally.

A context model is essentially a structured representation of all relevant information pertinent to an ongoing AI interaction. This can include a vast array of data points:

- Conversational History: Previous turns in a dialogue, including user inputs and AI responses.

- User Profile: Preferences, demographics, historical actions, and personal information.

- Application State: Current screen, active features, selected items, or ongoing tasks within the application.

- Environmental Data: Time of day, location, device type, or external API data (e.g., weather, stock prices).

- System Instructions: Persistent directives about the AI's persona, tone, safety guidelines, or specific limitations.

- External Knowledge: Relevant documents, databases, or RAG (Retrieval-Augmented Generation) snippets retrieved based on the current query.

The Model Context Protocol is the set of rules and data formats that govern how this context model is created, updated, serialized, and transmitted. It's not just about concatenating strings; it's about strategically structuring data to maximize the LLM's understanding while adhering to token limits and computational efficiency. A well-designed MCP will specify:

- Schema for Context Objects: How different types of context (e.g., user_profile, chat_history, system_directives) are structured.

- Versioning and Prioritization: Mechanisms for updating context, resolving conflicts, and prioritizing crucial information.

- Compression and Summarization: Strategies to distill large amounts of historical data into a concise, relevant summary that fits within the LLM's context window.

- Dynamic Context Injection: How context elements derived from real-time events or user actions (e.g., form submissions from an HTML template) are seamlessly integrated.
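To make the idea of a context schema concrete, here is a hypothetical context object and one possible serialization into the role-tagged message list that many chat-style LLM APIs accept. The class name, field names, and serialization layout are assumptions for illustration; they are not taken from any published protocol specification.

```python
from dataclasses import dataclass, field


@dataclass
class ContextModel:
    """Hypothetical context object; field names are illustrative only."""
    system_directives: list[str]
    user_profile: dict[str, str] = field(default_factory=dict)
    chat_history: list[tuple[str, str]] = field(default_factory=list)  # (role, text)
    retrieved_docs: list[str] = field(default_factory=list)

    def to_messages(self, user_prompt: str) -> list[dict[str, str]]:
        """Serialize the context plus the new prompt into a chat-style message list."""
        system_text = "\n".join(self.system_directives)
        if self.user_profile:
            profile = "; ".join(f"{k}={v}" for k, v in sorted(self.user_profile.items()))
            system_text += f"\nUser profile: {profile}"
        if self.retrieved_docs:
            system_text += "\nReference material:\n" + "\n".join(
                f"- {doc}" for doc in self.retrieved_docs
            )
        messages = [{"role": "system", "content": system_text}]
        # Prior turns keep their original roles and order.
        messages += [{"role": role, "content": text} for role, text in self.chat_history]
        messages.append({"role": "user", "content": user_prompt})
        return messages
```

Keeping each context type in its own field, rather than in one growing string, is what makes prioritization and summarization possible later: each part can be trimmed or replaced independently before serialization.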

Why Context is Crucial for AI Prompts:

- Coherence and Continuity: Without context, an AI cannot maintain a coherent conversation. Each turn would be an isolated query. MCP ensures that the AI remembers past interactions, allowing for natural, flowing dialogues where references to previous statements are understood.

- Personalization: By incorporating user-specific data into the context model, the AI can tailor its responses, recommendations, and information delivery. For example, a travel planning template might use MCP to inject a user's preferred travel dates and destinations from their profile, even if not explicitly stated in the current prompt.

- Accuracy and Relevance: Context helps the AI disambiguate ambiguous queries and provide more relevant answers. If a user asks "Tell me more about it," the "it" is resolved by understanding the preceding conversation within the context model.

- Task Automation and Workflow Integration: In complex workflows, MCP enables the AI to track progress, understand the current stage of a multi-step process, and remember parameters set in earlier steps, which might have been captured through different input fields in an HTML template.

- Adherence to Safety and Brand Guidelines: System-level instructions, such as safety policies or brand tone requirements, can be persistently injected into the context model via MCP, ensuring that every AI interaction complies with organizational standards.

Challenges in Context Management and How MCP Addresses Them:

- Token Limits: LLMs have finite context windows. MCP addresses this through intelligent summarization, chunking, and selective retrieval strategies, ensuring that only the most relevant context is passed to the model.

- Context Staleness: Over time, some context might become irrelevant. MCP protocols can define aging mechanisms or explicit ways to refresh/reset context.

- Security and Privacy: Handling sensitive user data within the context requires robust MCPs that specify encryption, anonymization, and access control mechanisms, especially when transmitting data across different components.

- Performance Overhead: Building and maintaining a rich context model can introduce latency. Efficient MCP implementations are optimized for speed, often using caching and incremental updates.
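One simple way to respect a token limit is recency-based truncation: keep the newest turns that fit a budget and drop the rest. The sketch below assumes a crude whitespace word count as the token estimate; a real implementation would use the target model's tokenizer and might summarize the dropped turns rather than discard them.

```python
def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer (an assumption of this sketch).
    return len(text.split())


def trim_history(history: list[str], budget: int) -> list[str]:
    """Keep the most recent turns whose combined estimated size fits the budget."""
    kept: list[str] = []
    used = 0
    for turn in reversed(history):  # walk from newest to oldest
        cost = estimate_tokens(turn)
        if used + cost > budget:
            break  # older turns would overflow the budget
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```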

Imagine an AI assistant built using HTML templates for various tasks. A template for "customer support ticket summarization" might accept raw customer text. The MCP would then augment this text with the customer's purchase history, previous interactions with support, and the product category, all delivered in a structured format alongside the raw prompt. This rich context allows the LLM to generate a far more insightful and actionable summary than if it only processed the customer's text in isolation. The HTML template makes it easy for the support agent to initiate the summarization, while the MCP ensures the AI has all the necessary background information.

LLM Gateways: Orchestrating Prompt Execution

Once a prompt is meticulously crafted via an HTML template and enriched with context through the Model Context Protocol, it needs a robust, secure, and efficient pathway to reach the appropriate Large Language Model. This is precisely the role of an LLM Gateway. An LLM Gateway acts as a sophisticated intermediary, a control plane between your application (which generated the prompt via a template) and one or more underlying LLM providers (e.g., OpenAI, Anthropic, custom fine-tuned models). It's far more than a simple proxy; it's an intelligent orchestrator that adds a critical layer of management, security, and optimization to your AI infrastructure. Without an LLM Gateway, every application would need to independently manage connections, credentials, rate limits, and model specifics for each AI service it consumes, leading to a tangled and unmanageable mess.

Why an LLM Gateway is Essential for Prompt-Driven Applications:

- Unified API Interface: LLMs from different providers often have varying APIs, request/response formats, and authentication mechanisms. An LLM Gateway abstracts away these differences, providing a single, consistent API endpoint for your applications. This means your HTML templates and the backend logic that processes them can interact with a unified interface, regardless of which LLM ultimately processes the prompt. This drastically simplifies development and makes switching between or combining models seamless.

- Security and Access Control: Exposing API keys or direct access to LLMs from every application is a major security risk. An LLM Gateway centralizes API key management, implements robust authentication and authorization policies, and can even act as a firewall, scrutinizing incoming prompts for malicious content or data exfiltration attempts before forwarding them to the LLMs. This ensures that sensitive data in prompts (or in the context model) is handled securely.

- Rate Limiting and Load Balancing: LLM providers impose strict rate limits. An LLM Gateway can enforce global or per-user rate limits, preventing applications from hitting quotas and ensuring fair access. When dealing with multiple LLMs or multiple instances of the same model, the gateway can intelligently route requests to distribute load, optimize performance, and ensure high availability, preventing any single model from becoming a bottleneck.

- Cost Optimization and Management: Different LLMs have different pricing structures. An LLM Gateway can implement intelligent routing logic to select the most cost-effective model for a given prompt, based on factors like performance, accuracy, and price. It also provides centralized cost tracking, allowing organizations to monitor LLM usage and expenditure across all their applications.

- Observability and Analytics: Knowing what prompts are being sent, how LLMs are responding, and where performance bottlenecks occur is crucial. Gateways offer comprehensive logging, monitoring, and analytics capabilities, providing insights into prompt usage patterns, error rates, latency, and token consumption. This data is invaluable for debugging, optimizing AI interactions, and making informed decisions about model selection.

- Prompt Versioning and A/B Testing: As prompt engineering evolves, you'll need to iterate on prompt structures and content. An LLM Gateway can manage different versions of prompts, allowing you to deploy updates seamlessly and even A/B test different prompt variations to determine which performs best in terms of desired output or user satisfaction. This is particularly powerful when coupled with HTML templates, as different template versions can map to different underlying prompt versions managed by the gateway.

- Data Caching and Response Optimization: For frequently asked questions or repetitive prompts, a gateway can cache LLM responses, significantly reducing latency and costs. It can also apply post-processing transformations to LLM outputs before returning them to the application, ensuring consistency in data format or filtering unwanted content.
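Two of these gateway functions, rate limiting and response caching, are sketched below. The token bucket is a standard rate-limiting technique; the class names, parameters, and exact-match cache key are illustrative assumptions, not how any specific gateway (APIPark included) implements them. A production cache would also need expiry and should usually be limited to deterministic requests, since a cached answer replays one sample from a non-deterministic model.

```python
import hashlib
import time


class TokenBucket:
    """Token-bucket limiter: refills `rate` tokens per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock  # injectable so the limiter can be tested deterministically
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class ResponseCache:
    """Exact-match cache keyed on the (model, prompt) pair; no expiry in this sketch."""

    def __init__(self):
        self._store: dict[str, str] = {}

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        return hashlib.sha256(f"{model}\x00{prompt}".encode()).hexdigest()

    def get(self, model: str, prompt: str):
        return self._store.get(self._key(model, prompt))

    def put(self, model: str, prompt: str, response: str) -> None:
        self._store[self._key(model, prompt)] = response
```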

Integrating APIPark as a Leading LLM Gateway Solution:

In this context, APIPark, an open-source AI gateway and API management platform, illustrates the capabilities of an advanced LLM Gateway. APIPark is designed to address the very challenges outlined above, making the management, integration, and deployment of AI and REST services remarkably simple.

APIPark's relevance to "Easy Design & Development" of AI Prompt HTML Templates is clear:

- Quick Integration of 100+ AI Models & Unified API Format: APIPark offers a unified management system that standardizes the request data format across various AI models. This means your HTML templates and the backend logic don't need to worry about the specifics of OpenAI vs. Anthropic vs. a custom model. You design your prompt template once, and APIPark handles the conversion and routing, simplifying your development stack significantly.

- Prompt Encapsulation into REST API: One of APIPark's most compelling features is its ability to quickly combine AI models with custom prompts to create new, ready-to-use REST APIs. Imagine an HTML template that takes inputs for sentiment analysis. APIPark can take that templated prompt, encapsulate it, and expose it as a simple REST endpoint. Your frontend application, built with HTML templates, simply calls this API, abstracting away all the underlying AI complexity. This transforms complex prompt engineering into easily consumable microservices.

- End-to-End API Lifecycle Management: From design to publication and monitoring, APIPark provides comprehensive lifecycle management for these AI-driven APIs. This means that prompts, once encapsulated, can be versioned, traffic-managed, and load-balanced just like any other enterprise API, ensuring stability and scalability for your template-driven AI applications.

- Performance and Observability: With high TPS (Transactions Per Second) and detailed API call logging, APIPark ensures that your AI prompt templates, even under heavy load, deliver reliable and traceable interactions. Its data analysis capabilities are crucial for optimizing prompt performance and understanding long-term trends, directly impacting the effectiveness of your prompt designs.

By leveraging an LLM Gateway like APIPark, organizations can move beyond ad-hoc AI integrations to a standardized, secure, and highly efficient framework for deploying AI, enabling the "easy design & development" promised by structured AI Prompt HTML Templates. It acts as the intelligent traffic controller, ensuring that every prompt, meticulously designed on the frontend, reaches its destination efficiently and securely, and that every response is handled with precision.


Integrating Templates, MCP, and LLM Gateways for a Seamless Workflow

The true power of AI Prompt HTML Templates for "easy design & development" is unlocked when they are seamlessly integrated with a robust Model Context Protocol (MCP) and an intelligent LLM Gateway. This creates an end-to-end workflow that transforms complex AI interactions into a predictable, manageable, and highly efficient process. The synergy between these three components is what truly enables developers and designers to build sophisticated AI applications with speed and confidence.

Let's visualize the journey of an AI prompt from the user interface to the LLM and back:

- User Interaction via HTML Template:

  - A user (or developer) interacts with a user-friendly HTML template, which could be a web form, a content editor, or a custom application interface.

  - They input specific data into fields like "Topic," "Tone," "Keywords," "Target Audience," or free-form text areas, all designed to guide the AI.

  - Client-side validation (JavaScript within the HTML) gives immediate feedback and catches common input errors, improving the initial quality of the prompt components.

- Prompt Construction & Context Enrichment (Backend/MCP Logic):

  - Upon submission, the data from the HTML template is sent to a backend application server.

  - Here, the Model Context Protocol comes into play. The backend logic retrieves relevant contextual information:

    - User history: Previous interactions, preferences, or profile data.

    - Application state: The current task, workflow stage, or object being worked on.

    - System instructions: Global guidelines for the AI's persona, safety, or formatting.

    - External data: Information retrieved from databases, external APIs, or RAG systems based on the user's input.

  - The backend then dynamically constructs the final, optimized prompt string. This involves merging the user's template inputs with the rich contextual data, adhering to a predefined prompt structure that maximizes the LLM's understanding while staying within token limits. For instance: "{system_persona} {user_preferences} Generate a {tone} product description for {product_name} highlighting {features}."

  - This is where the MCP ensures that all necessary background information is bundled effectively with the core prompt request.

- Orchestration via LLM Gateway:

  - The fully constructed and context-rich prompt is then sent to the LLM Gateway.

  - The Gateway acts as the central hub:

    - Authentication & Authorization: It verifies the request's credentials and ensures the calling application has permission to access AI services.

    - Rate Limiting: It checks for and enforces API call limits, preventing abuse and ensuring service stability.

    - Intelligent Routing: Based on configuration (e.g., specific prompt keywords, required model capabilities, cost considerations, or available capacity), the Gateway routes the prompt to the most appropriate LLM (e.g., a high-accuracy, expensive model for critical tasks, or a faster, cheaper model for drafts).

    - Logging & Monitoring: It logs every request, response, latency, and token usage, providing a comprehensive audit trail and data for performance analysis.

    - Caching: If the prompt (or a similar one with identical context) has been processed recently, the Gateway might return a cached response, reducing latency and cost.

- LLM Processing:

  - The selected LLM receives the prompt and its accompanying context.

  - It processes the request, generating a response based on its training data and the detailed instructions provided.

- Response Processing & Display (Backend & HTML Template):

  - The LLM's raw response is returned to the LLM Gateway, which might apply post-processing (e.g., filtering, reformatting).

  - The Gateway sends the processed response back to the backend application server.

  - The backend server further processes the LLM's output, perhaps integrating it with other application data or transforming it into a format suitable for the frontend.

  - Finally, the transformed AI response is sent back to the client-side HTML template, which then dynamically renders the output (e.g., displaying the generated product description in a text area, populating a list of suggestions, or updating a chatbot interface).
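The "Intelligent Routing" step in the gateway stage can be sketched as a cheapest-adequate-model rule. The model names, prices, and quality tiers below are hypothetical placeholders, and real gateways typically weigh further signals such as latency and current load.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # illustrative price, not a real quote
    quality_tier: int          # 1 = draft quality, 3 = highest quality
    available: bool = True


def route(options: list[ModelOption], required_tier: int) -> ModelOption:
    """Pick the cheapest available model that meets the required quality tier."""
    candidates = [m for m in options if m.available and m.quality_tier >= required_tier]
    if not candidates:
        raise LookupError("no available model satisfies the required tier")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)
```

A draft request (tier 1) lands on the cheapest model, while a critical task (tier 3) is forced onto the premium one; if a mid-tier model is unavailable, traffic falls through to the next adequate option.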

Practical Implementation Strategies and Architectures:

- Modular Design: Each component (HTML templates, MCP logic, LLM Gateway) should be developed as a distinct, loosely coupled module. This allows for independent development, testing, and scaling.

- API-First Approach: The backend that processes HTML template input should communicate with the LLM Gateway through clean, well-documented APIs. Similarly, the Gateway should offer applications one consistent API, whichever LLM sits behind it.

- Event-Driven Architectures: For more complex, asynchronous AI workflows, an event-driven approach (e.g., using message queues) can ensure robustness and scalability, especially when dealing with long-running LLM tasks or multiple intermediate AI steps.

- Configuration over Code: Maximize the use of configuration files for defining prompt structures within templates, MCP rules, and LLM Gateway routing logic. This reduces the need for code changes when updating AI interactions.

- Observability Stack: Integrate logging, monitoring, and tracing tools across all components to gain full visibility into the AI interaction pipeline, from template submission to final response.
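As a small example of "configuration over code," the prompt text, its version, and a routing hint can all live in a configuration document that the application only reads. The JSON layout and keys here are assumptions for illustration; with this arrangement, changing a prompt means editing configuration rather than redeploying code.

```python
import json
from string import Template

# Hypothetical configuration; in practice this might live in a versioned file.
CONFIG_JSON = """
{
  "templates": {
    "email_draft": {
      "version": "v2",
      "prompt": "Draft a $tone email to $recipient about $topic.",
      "route": {"required_tier": 1}
    }
  }
}
"""


def render_from_config(config_text: str, template_id: str, values: dict) -> tuple[str, str, int]:
    """Render a prompt and look up its routing hint purely from configuration."""
    config = json.loads(config_text)
    entry = config["templates"][template_id]
    prompt = Template(entry["prompt"]).substitute(values)
    return entry["version"], prompt, entry["route"]["required_tier"]
```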

This integrated approach makes the "easy design & development" of AI prompts a tangible reality. Developers can focus on crafting intuitive HTML interfaces and refining prompt logic, confident that the underlying MCP will manage context intelligently and the LLM Gateway will orchestrate the interaction with the AI models securely and efficiently. This level of abstraction and automation empowers organizations to rapidly build, deploy, and scale sophisticated AI applications without getting bogged down in the minutiae of AI infrastructure.

Advanced Topics and Future Trends

As AI prompt engineering matures, the synergy between HTML templates, Model Context Protocols, and LLM Gateways will continue to evolve, ushering in advanced capabilities and addressing emerging challenges. The future promises even greater sophistication in how we design, develop, and deploy AI interactions.

Dynamic Templates and Personalized Prompts:

The next generation of AI Prompt HTML Templates will move beyond static forms to highly dynamic, adaptive interfaces. Imagine templates that:

- Self-adjust: Based on initial user inputs or even historical data, the template can dynamically reconfigure its fields, adding or removing sections to guide the user more effectively towards an optimal prompt. For example, if a user selects "marketing content," new fields for "target demographic" and "call to action" might appear.

- Learn and Optimize: Over time, these templates could leverage user feedback and LLM output quality metrics (tracked by the LLM Gateway) to suggest better prompt structures or default values, effectively becoming self-optimizing prompt generators.

- Deep Personalization: By tightly integrating with the Model Context Protocol, templates can pre-populate fields with highly personalized data (e.g., user's recent activity, preferred tone from past interactions), making prompt creation an almost effortless, tailored experience. This takes personalization beyond just the AI's response to the very act of crafting the prompt itself.
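The self-adjusting and pre-populating behaviors can be approximated with a form schema that reveals extra fields per content type and fills defaults from profile data. The schema, field names, and profile keys below are hypothetical.

```python
# Hypothetical form schema: every form shows the base fields, and some
# content types reveal additional ones.
BASE_FIELDS = ["topic", "tone"]
EXTRA_FIELDS = {
    "marketing content": ["target_demographic", "call_to_action"],
    "technical documentation": ["audience_expertise"],
}


def visible_fields(content_type: str) -> list[str]:
    """Return the fields the form should display for the selected content type."""
    # `+` builds a new list, so BASE_FIELDS itself is never mutated.
    return BASE_FIELDS + EXTRA_FIELDS.get(content_type, [])


def prefill(fields: list[str], profile: dict[str, str]) -> dict[str, str]:
    """Pre-populate each visible field from profile data, leaving unknown ones blank."""
    return {name: profile.get(name, "") for name in fields}


print(prefill(visible_fields("marketing content"), {"tone": "upbeat"}))
```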

The Role of Observability and Analytics in Prompt Performance:

The sophisticated logging and data analysis capabilities of an LLM Gateway will become increasingly critical. Beyond basic error rates and latency, future analytics will focus on:

- Prompt Effectiveness Metrics: Analyzing token usage, output coherence, relevance scores (via human or AI feedback loops), and user satisfaction for different prompt structures. This allows developers to quantitatively compare different template designs and prompt engineering strategies.

- Contextual Impact Analysis: Understanding how specific context elements (managed by the Model Context Protocol) influence LLM responses. For example, does adding customer purchase history consistently lead to more relevant support responses?

- Cost vs. Performance Optimization: Detailed breakdowns of which LLMs are being used for which types of prompts, their associated costs, and their performance, enabling data-driven decisions on routing and model selection.

- Anomaly Detection: Automatically flagging unusual prompt patterns or unexpected LLM responses that might indicate issues with template design, context injection, or even subtle model drift.

This kind of granular data, readily available through platforms like APIPark, empowers developers to not just deploy, but continuously improve their AI interactions.
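A per-version summary of gateway logs is one simple starting point for comparing template designs. The log record fields (`template_version`, `latency_ms`, `prompt_tokens`, `completion_tokens`, `rating`) are an assumed schema for this sketch, not the output format of any particular gateway.

```python
from collections import defaultdict
from statistics import mean


def summarize_by_version(records: list[dict]) -> dict[str, dict[str, float]]:
    """Aggregate hypothetical gateway log records per template version."""
    grouped: dict[str, list[dict]] = defaultdict(list)
    for rec in records:
        grouped[rec["template_version"]].append(rec)
    summary = {}
    for version, recs in grouped.items():
        summary[version] = {
            "requests": len(recs),
            "avg_latency_ms": mean(r["latency_ms"] for r in recs),
            "avg_total_tokens": mean(r["prompt_tokens"] + r["completion_tokens"] for r in recs),
            # "rating" stands in for a human or AI feedback score.
            "avg_rating": mean(r["rating"] for r in recs),
        }
    return summary
```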

Ethical Considerations in Prompt Design and Context Management:

As AI becomes more deeply integrated into critical applications, the ethical implications of prompt design and context management come into sharp focus:

- Bias Mitigation: HTML templates must be designed to guide users away from inputs that might lead to biased AI outputs. The Model Context Protocol needs robust mechanisms to filter or transform sensitive contextual data to prevent the perpetuation of societal biases.

- Privacy and Data Security: The vast amount of data that can be ingested into a context model (user data, historical interactions) necessitates stringent privacy controls. LLM Gateways will play a crucial role in enforcing data governance policies, anonymizing sensitive information, and ensuring compliance with regulations like GDPR and CCPA. Templates might incorporate explicit consent mechanisms for data usage.

- Transparency and Explainability: While not fully solved by templates, future prompt designs, supported by detailed context and gateway logs, should aim to provide more transparency into why an AI generated a particular response. This could involve prompting the AI to explain its reasoning or showing which contextual elements were most influential.

- Prevention of Misuse: Templates and gateway controls can be designed to prevent the generation of harmful, illegal, or unethical content, acting as guardrails for AI usage. The Model Context Protocol can carry system-level guardrail instructions, informed by red-teaming exercises, that help the AI avoid undesirable behaviors.
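As a minimal sketch of anonymizing data before it leaves the application, the function below masks e-mail addresses and phone-like numbers with regular expressions. The patterns are deliberately simple assumptions: they will miss many kinds of personal data and may also flag other long digit runs such as dates, so they illustrate the idea rather than provide compliance with any regulation.

```python
import re

# Deliberately simple, illustrative patterns; real PII detection needs far more
# (names, addresses, national IDs, locale-specific formats).
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Matches digit runs of 9+ characters with common phone separators; this can
# also catch non-phone numbers such as ISO dates.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


print(redact("Contact jane.doe@example.com or +1 (555) 123-4567 today."))
```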

The future of AI prompt engineering lies in creating increasingly intelligent, adaptive, and responsible systems. The evolution of AI Prompt HTML Templates, backed by sophisticated Model Context Protocols and robust LLM Gateways, is paving the way for a world where AI development is not just easy, but also effective, ethical, and continuously optimized. These advancements will empower a new generation of builders to unlock the full, transformative potential of artificial intelligence across every industry.

Building a Robust AI Prompt System: A Comprehensive Guide

Developing a truly effective and scalable AI prompt system requires a deliberate approach, integrating user-facing components with powerful backend infrastructure. Below is a detailed guide outlining the key considerations and components, illustrating how HTML templates, Model Context Protocols, and LLM Gateways fit together.

The Model Context Protocol is a crucial part of this system. It isn't just about providing the current input; it gives the AI a memory and an understanding of the ongoing interaction. It manages conversational state (remembering prior turns in a multi-turn dialogue), tracks user preferences, and integrates external data relevant to the current query. This rich contextualization allows for more coherent, relevant, and personalized AI responses. Without it, even the most carefully designed HTML template would yield disjointed and ineffective AI interactions, because the AI would lack the background it needs to grasp the user's intent.
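The responsibilities listed above (system directives, user preferences, prior turns, external data) can be sketched as a small per-session context object. This is not an implementation of any published protocol specification: `ConversationContext`, its fields, and the `max_messages` limit are hypothetical, and the output simply uses the common chat format of `role`/`content` message dictionaries.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ConversationContext:
    """Hypothetical per-session context: directives, preferences, history, external data."""
    system_directive: str
    preferences: dict = field(default_factory=dict)
    external_facts: list = field(default_factory=list)
    max_messages: int = 6  # keep only the most recent messages to bound prompt size
    history: deque = field(init=False)

    def __post_init__(self):
        # A bounded deque silently drops the oldest message once full.
        self.history = deque(maxlen=self.max_messages)

    def record(self, role: str, content: str) -> None:
        """Remember one message ('user' or 'assistant')."""
        self.history.append({"role": role, "content": content})

    def build_messages(self, user_input: str) -> list:
        """Assemble a chat-style message list for the next LLM call."""
        system = self.system_directive
        if self.preferences:
            prefs = "; ".join(f"{k}: {v}" for k, v in sorted(self.preferences.items()))
            system += f"\nUser preferences: {prefs}"
        if self.external_facts:
            system += "\nRelevant data:\n" + "\n".join(f"- {f}" for f in self.external_facts)
        return (
            [{"role": "system", "content": system}]
            + list(self.history)
            + [{"role": "user", "content": user_input}]
        )
```

Bounding history with `deque(maxlen=...)` is the simplest way to keep prompts within a model's context window; production systems often summarize older turns instead of discarding them.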

The Role of an LLM Gateway

The journey from a well-structured prompt and its associated context to a successful AI response is often fraught with infrastructure challenges. An LLM Gateway serves as the central orchestration point, a sophisticated intermediary that manages the flow of requests between your application and various Large Language Models. It is critical for ensuring that AI prompt HTML templates, even those generating complex prompts, translate into reliable, secure, and cost-effective AI interactions.

An LLM Gateway provides essential functionalities that simplify the development and deployment of AI-powered applications:

- Unified Access: It abstracts away the diverse APIs and authentication methods of different LLM providers, offering a single, consistent interface to your applications. This means your HTML template backend doesn't need to worry about the specific idiosyncrasies of OpenAI, Anthropic, or a custom fine-tuned model; the gateway handles it all.

- Security & Governance: The gateway centralizes API key management, enforces access control, and can apply data masking or content filtering to prompts and responses. This is paramount for protecting sensitive data and ensuring compliance with organizational policies.

- Performance & Scalability: It implements rate limiting, load balancing across multiple LLMs or instances, and caching mechanisms to optimize response times and handle high traffic volumes efficiently.

- Cost Management: By routing requests to the most cost-effective LLM based on usage, performance, and specific prompt characteristics, the gateway helps control expenditures. It also provides detailed analytics for cost tracking.

- Observability: Comprehensive logging of all requests, responses, errors, and token usage provides invaluable insights into AI application performance, aiding debugging and optimization.

- Prompt Versioning & A/B Testing: The gateway can manage different versions of prompts and even facilitate A/B testing of various prompt engineering strategies, allowing continuous improvement without disrupting live applications.
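Several of these gateway responsibilities can be illustrated with a deliberately small, in-process Python sketch: one `complete()` entry point (unified access), routing to the cheapest model that has a required capability (cost management), a response cache and a sliding-window rate limit (performance), and a call log (observability). `MiniGateway`, `ModelRoute`, the model names, and the prices are hypothetical, and the provider adapters are plain functions standing in for real SDK calls; this is not APIPark's API.

```python
import hashlib
import json
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelRoute:
    """One backend model behind the gateway (names and prices are made up)."""
    name: str
    cost_per_1k_tokens: float
    capabilities: set
    call: Callable[[str], str]  # provider-specific adapter behind one signature


class MiniGateway:
    """Toy gateway: unified entry point, cheapest-capable routing, caching, logging."""

    def __init__(self, routes, max_requests_per_minute=60):
        self.routes = routes
        self.max_rpm = max_requests_per_minute
        self.cache = {}
        self.log = []
        self.window = []  # monotonic timestamps of recent upstream calls

    def _allow(self, now):
        # Sliding one-minute window: drop old timestamps, then check the budget.
        self.window = [t for t in self.window if now - t < 60]
        if len(self.window) >= self.max_rpm:
            return False
        self.window.append(now)
        return True

    def complete(self, prompt, required_capability="chat"):
        key = hashlib.sha256(json.dumps([prompt, required_capability]).encode()).hexdigest()
        if key in self.cache:  # cached answers do not count against the rate limit
            self.log.append({"model": None, "cached": True})
            return self.cache[key]
        candidates = [r for r in self.routes if required_capability in r.capabilities]
        if not candidates:
            raise ValueError(f"no model supports {required_capability!r}")
        route = min(candidates, key=lambda r: r.cost_per_1k_tokens)
        if not self._allow(time.monotonic()):
            raise RuntimeError("rate limit exceeded")
        started = time.perf_counter()
        answer = route.call(prompt)
        self.log.append({
            "model": route.name,
            "cached": False,
            "latency_s": time.perf_counter() - started,
        })
        self.cache[key] = answer
        return answer
```

Because every provider is wrapped in the same `call(prompt) -> str` signature, swapping or adding a model only changes the route list, not the code that builds prompts from templates.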

In essence, the LLM Gateway is the traffic controller and quality assurance manager for your AI interactions. It ensures that the creative input from your HTML templates and the intelligent context from your Model Context Protocol are delivered to the right AI model, at the right time, with optimal performance and security.
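The prompt versioning and A/B testing capability usually depends on assigning each user to a variant deterministically, so the same user keeps seeing the same prompt version across requests. Below is a minimal sketch, assuming a hash-based split; `PROMPT_VERSIONS`, the experiment name, and the prompt texts are hypothetical.

```python
import hashlib

# Hypothetical prompt versions registered for one template.
PROMPT_VERSIONS = {
    "v1": "Answer the customer's question concisely:\n{question}",
    "v2": "You are a friendly support agent. Answer step by step:\n{question}",
}


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Deterministically bucket a user; `split` is the share routed to v2."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    # Keep 53 bits so the division is exact in a float and stays in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return "v2" if bucket < split else "v1"


def render_prompt(user_id: str, question: str, experiment: str = "support-tone") -> tuple[str, str]:
    """Return the assigned version and the filled-in prompt text."""
    version = assign_variant(user_id, experiment)
    return version, PROMPT_VERSIONS[version].format(question=question)
```

Hashing `experiment:user_id` instead of storing assignments means any gateway instance computes the same variant without shared state, and renaming the experiment reshuffles users for the next test.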

Practical Implementation Strategies

Building a robust AI prompt system requires a well-thought-out architecture:

- Frontend (HTML Templates):

  - User Experience (UX) Focus: Design intuitive and guided interfaces for prompt creation. Use clear labels, tooltips, and validation.

  - Modularity: Create reusable template components for common prompt elements (e.g., tone selectors, entity extractors).

  - Dynamic UI: Employ JavaScript frameworks (React, Vue, Angular) to create responsive, dynamic templates that adapt based on user input or previous AI responses.

- Backend (Application Logic & Model Context Protocol):

  - Prompt Orchestrator: A dedicated service that receives template data, enriches it with context (via MCP), and constructs the final prompt payload.

  - Context Management System: A database or cache storing user profiles, historical interactions, application state, and system directives. This system should exchange context with the Prompt Orchestrator as defined by the Model Context Protocol.

  - Data Security & Privacy: Implement encryption for sensitive context data and ensure strict access controls.

- LLM Gateway:

  - Unified Endpoint: Configure a single API endpoint that your backend services call for all AI interactions.

  - Routing Logic: Define rules for routing prompts to specific LLMs based on their type, cost, performance, or required capabilities.

  - Policy Enforcement: Implement policies for authentication, authorization, rate limiting, and content moderation.

  - Monitoring & Analytics: Integrate with your existing observability stack to capture detailed metrics on AI usage and performance.
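The frontend template and the Prompt Orchestrator in this architecture can be sketched together: a single template definition drives both the HTML form and the prompt assembled from its submitted fields plus session context. `SUMMARY_TEMPLATE`, `render_form`, `build_payload`, and the payload shape are assumptions made for this example, not a prescribed format; the resulting payload is what the backend would hand to the gateway's unified endpoint.

```python
from html import escape
from string import Template

# Hypothetical template definition shared by the form and the orchestrator.
SUMMARY_TEMPLATE = {
    "id": "summarize-v2",
    "required": ["document", "audience"],
    "defaults": {"tone": "neutral", "max_words": "150"},
    "text": Template(
        "Summarize the following for a $audience audience in a $tone tone, "
        "using at most $max_words words.\n\n$document"
    ),
}


def render_form(template: dict) -> str:
    """Render a minimal HTML form whose field names match the template placeholders."""
    rows = []
    for name in template["required"] + list(template["defaults"]):
        required = " required" if name in template["required"] else ""
        default = escape(str(template["defaults"].get(name, "")))
        rows.append(
            f'<label>{escape(name)} <input name="{escape(name)}" '
            f'value="{default}"{required}></label>'
        )
    return f'<form data-template="{escape(template["id"])}">' + "".join(rows) + "</form>"


def build_payload(template: dict, form_data: dict, context: dict) -> dict:
    """Validate submitted fields, fill the template, and attach session context."""
    missing = [f for f in template["required"] if not str(form_data.get(f, "")).strip()]
    if missing:
        raise ValueError(f"missing required fields: {', '.join(missing)}")
    values = {**template["defaults"], **form_data}  # user input overrides defaults
    prompt = template["text"].substitute(values)
    messages = [{"role": "system", "content": context.get("system", "You are a helpful assistant.")}]
    messages += context.get("history", [])
    messages.append({"role": "user", "content": prompt})
    return {
        "template_id": template["id"],
        "messages": messages,
        "metadata": {"user_id": context.get("user_id")},
    }
```

Keeping the required fields in the template definition lets the browser (through the `required` attribute) and the orchestrator (through `build_payload`) enforce the same rules, so a malformed prompt is rejected before it costs any tokens.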

This multi-layered approach ensures that your AI applications are not only powerful and flexible but also maintainable, scalable, and secure. The upfront investment in structuring your prompt engineering through templates, context protocols, and gateways pays dividends in terms of faster development cycles, improved AI reliability, and ultimately, a better user experience.

Conclusion: The Path to Effortless AI Application Development

The journey through the intricate world of AI prompt engineering reveals a clear trajectory towards more structured, efficient, and user-friendly development paradigms. The initial ad-hoc experimentation with prompts has given way to a sophisticated ecosystem where AI Prompt HTML Templates serve as the intuitive frontend, offering a standardized canvas for crafting AI interactions. These templates, far from being mere superficial elements, are foundational to democratizing prompt engineering, making it accessible to a broader audience and ensuring consistency across diverse applications.

Yet, the true magic unfolds when these elegantly designed templates are underpinned by robust technical infrastructure. The Model Context Protocol provides the crucial intelligence, allowing AI models to understand prompts not in isolation, but within a rich, dynamic, and personalized operational context. This protocol transforms stateless LLM interactions into coherent, multi-turn dialogues and context-aware task executions, moving beyond simple question-answering to genuinely intelligent collaboration.

Completing this powerful triad is the LLM Gateway, the essential orchestrator that ensures seamless, secure, and optimized delivery of these context-rich prompts to the appropriate AI models. Acting as a central control plane, the gateway manages complexities such as API abstraction, security, rate limiting, cost optimization, and vital observability. Tools like APIPark, an open-source AI gateway, exemplify how a unified platform can encapsulate prompts into manageable APIs and streamline the entire AI lifecycle, making the backend as "easy" to manage as the frontend is to design.

Together, AI Prompt HTML Templates, the Model Context Protocol, and the LLM Gateway forge a synergistic relationship that transforms the daunting task of AI application development into an experience defined by "easy design & development." This integrated approach empowers developers and enterprises to move rapidly from concept to deployment, building AI-powered solutions that are not only effective and scalable but also maintainable, cost-efficient, and continuously optimizable. As AI continues its relentless advance, this structured methodology will be the bedrock upon which the next generation of intelligent applications is built, unlocking unprecedented levels of innovation and efficiency across every sector. The future of AI is not just about powerful models, but about the intelligent infrastructure that makes them truly usable, accessible, and transformative for everyone.

Frequently Asked Questions (FAQs)

1. What are AI Prompt HTML Templates and why are they important? AI Prompt HTML Templates are standardized, reusable web forms or interactive components designed to guide users in crafting effective prompts for Large Language Models (LLMs). They are crucial because they bring structure, consistency, and ease of use to prompt engineering, abstracting away complex syntax, reducing errors, and enabling both technical and non-technical users to design sophisticated AI interactions efficiently. They ensure that prompts adhere to best practices and provide dynamic inputs for personalization.

2. How does the Model Context Protocol (MCP) enhance AI Prompt HTML Templates? The Model Context Protocol defines how relevant information—such as conversational history, user preferences, application state, and system instructions—is captured, structured, and delivered to an LLM alongside a prompt. When integrated with HTML templates, MCP ensures that the prompts generated are not only based on user input but are also enriched with a deep understanding of the ongoing interaction and environment. This leads to more coherent, personalized, and accurate AI responses, enabling multi-turn conversations and complex task execution that would be impossible with isolated prompts.

3. What role does an LLM Gateway play in the easy design and development of AI prompts? An LLM Gateway acts as an intelligent intermediary between your application (which uses HTML templates to generate prompts) and various LLM providers. It simplifies development by offering a unified API, abstracting away differences between models. More importantly, it provides critical functionalities like security (centralized API key management, access control), performance optimization (rate limiting, load balancing, caching), cost management, and comprehensive observability (logging, analytics). This ensures that prompts crafted via templates are delivered securely, efficiently, and cost-effectively to the right LLM, making the entire AI backend robust and easy to manage.

4. Can I use AI Prompt HTML Templates with multiple different AI models from various providers? Yes, absolutely. This is one of the primary benefits when combined with an LLM Gateway. An LLM Gateway like APIPark provides a unified API interface that abstracts away the specific characteristics of different AI models (e.g., OpenAI, Anthropic, custom models). This means your HTML templates and the backend logic that processes them can be designed to a single interface, and the LLM Gateway will intelligently route the prompt to the appropriate model based on your configuration, simplifying integration and offering flexibility to switch or combine models without re-architecting your application.

5. How do these components (Templates, MCP, LLM Gateway) contribute to "easy design & development"? They contribute synergistically. HTML Templates make the frontend design and user input for prompts intuitive and consistent. The Model Context Protocol streamlines the backend logic for enriching prompts with necessary context, ensuring AI understands the full intent. The LLM Gateway then simplifies the deployment, management, and operational aspects of connecting to AI models, handling security, performance, and cost. By abstracting complexity at each layer, developers can focus on application features and prompt creativity, accelerating development cycles and making AI integration significantly easier and more reliable from end to end.

🚀 You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is written in Go (Golang), which gives it strong performance and keeps development and maintenance costs low. You can deploy APIPark with a single command:

```bash
curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh
```

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.