API Gateway Main Concepts Explained Simply

In the rapidly evolving landscape of modern software development, characterized by the proliferation of microservices, cloud-native architectures, and a growing reliance on external integrations, the concept of an API Gateway has transitioned from a niche architectural pattern to an indispensable component. As applications become increasingly distributed, interacting with dozens, if not hundreds, of distinct backend services, the sheer complexity of managing these interactions can quickly overwhelm development teams and introduce significant operational overhead. It's no longer just about exposing functionalities; it's about doing so securely, efficiently, resiliently, and in a manner that fosters innovation rather than hindering it.

The traditional approach of allowing client applications to directly communicate with numerous backend services presents a multitude of challenges. These range from fragmented security policies and inconsistent error handling to difficulties in performance optimization and the arduous task of monitoring a sprawling network of service calls. Imagine a bustling city with countless individual shops, each requiring its own entrance, security guard, and information desk. Navigating such a city would be a nightmare. Now, envision a grand central station or a towering commercial building that funnels all traffic through a single, well-managed entrance, where security checks are consolidated, information is readily available, and directions are clear. This analogy provides a glimpse into the transformative role of an API Gateway.

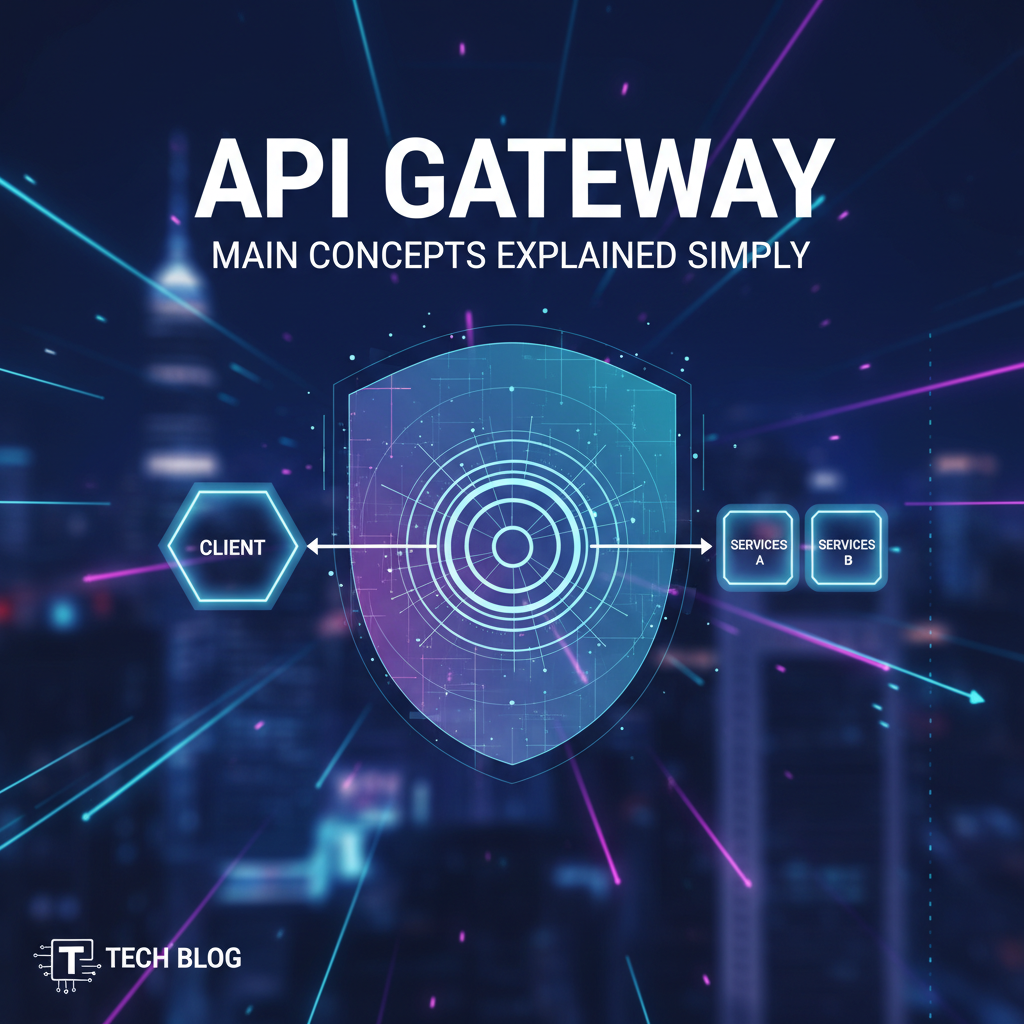

At its core, an API Gateway acts as a single, unified entry point for all incoming API requests, mediating between client applications and backend services. It is a critical piece of infrastructure that simplifies the way clients interact with complex microservice architectures, abstracting away the internal complexities and presenting a streamlined, consistent interface. This article aims to demystify the fundamental concepts surrounding API Gateways, delving into their purpose, core functionalities, architectural considerations, and the profound benefits they offer to organizations striving for robust, scalable, and secure digital platforms. By understanding these principles, developers, architects, and business leaders alike can harness the full potential of this powerful architectural pattern to build more resilient and adaptable systems in the digital age.

Part 1: What is an API Gateway? The Foundation.

To truly grasp the significance of an API Gateway, we must first establish a clear and comprehensive understanding of its definition and foundational role within a modern system architecture. In essence, an API Gateway is a server that acts as an API frontend, sitting between clients and a collection of backend services. It accepts API calls, routes them to the appropriate backend service, and returns the response from the backend service to the client. This seemingly simple description belies a profound impact on how distributed systems are designed, managed, and scaled.

Think of an API Gateway as the sophisticated concierge or the vigilant security checkpoint at a high-security facility. When a visitor (client application) arrives, they don't wander aimlessly searching for a specific department or individual (backend service). Instead, they approach the concierge or the security desk (the API Gateway). This central point verifies their identity (authentication), determines if they have permission to access the requested area (authorization), and then directs them precisely to their destination. The visitor never needs to know the intricate layout of the facility, the specific internal protocols, or the exact location of the person they wish to meet. All they interact with is the central entry point, which handles all the internal navigation and security on their behalf.

The historical context for the emergence of the API Gateway pattern is deeply intertwined with the evolution of software architectures. In the era of monolithic applications, a single, large codebase handled all functionalities. Clients would interact directly with this monolith. While simple to deploy, monoliths became increasingly difficult to scale, maintain, and innovate upon as applications grew in complexity. The advent of microservices architectures offered a compelling alternative: breaking down monolithic applications into smaller, independent, loosely coupled services, each responsible for a specific business capability. This paradigm shift brought tremendous benefits in terms of agility, independent deployment, and technological diversity.

However, the microservices revolution also introduced new challenges. Suddenly, a client application that once communicated with a single monolith now needed to interact with potentially dozens or hundreds of distinct microservices. This led to:

- Increased Network Latency: Multiple network hops for a single logical operation.
- Complex Client-Side Logic: Clients had to know the addresses and specific APIs of many services.
- Fragmented Cross-Cutting Concerns: Implementing security, logging, monitoring, and rate limiting independently for each microservice became a daunting and error-prone task.
- Version Management Headaches: Coordinating changes across numerous services and client applications was a nightmare.

It became clear that directly exposing every microservice to clients was not a sustainable or scalable approach. This realization gave birth to the gateway pattern. The API Gateway emerged as the elegant solution to these challenges, providing a centralized point of control and management for all external API traffic. It encapsulates the system's internal architecture, allowing clients to make a single, clean request to the gateway, which then orchestrates the necessary calls to the various backend services. This abstraction dramatically simplifies client development, enhances security, improves performance, and centralizes the management of common concerns. In essence, the API Gateway transforms a tangled web of service interactions into a coherent, manageable, and secure access layer, making it an indispensable component for any robust microservice deployment.

Part 2: Why Do We Need an API Gateway? The Imperatives.

Understanding what an API Gateway is provides the conceptual foundation, but appreciating why it's become a cornerstone of modern distributed systems unveils its true value. The necessity for an API Gateway stems directly from the complexities introduced by microservices and the demands of building scalable, secure, and resilient applications in today's digital landscape. Its role is not merely an optional convenience; for many organizations, it is an essential imperative that addresses critical architectural and operational challenges.

Abstraction and Decoupling

One of the most compelling reasons for deploying an API Gateway is its ability to provide a powerful layer of abstraction. In a microservices architecture, backend services are often designed and deployed independently. This means their internal APIs, data models, and network locations can change without warning. Directly exposing these internal service details to client applications creates a tight coupling that makes evolution difficult. Any change in a backend service's interface or location would necessitate corresponding updates in all client applications, leading to brittle systems and a slow pace of innovation.

The API Gateway acts as a facade, shielding clients from the intricate details of the backend. Client applications interact only with the gateway's stable, public-facing API, regardless of how many services are involved in fulfilling a request or how those services are structured internally. If a backend service is refactored, split, or replaced, the API Gateway can be updated to reflect these changes without affecting the clients. This decoupling empowers backend teams to iterate and evolve their services rapidly without fear of breaking external integrations, significantly accelerating development cycles and reducing the cost of change. It transforms a complex, multi-service interaction into a single, straightforward request from the client's perspective.

Centralized Management of Cross-Cutting Concerns

In a distributed system, many functionalities are required across multiple services but are not part of any single service's core business logic. These are known as cross-cutting concerns, and they include authentication, authorization, rate limiting, logging, monitoring, and caching. Without an API Gateway, each microservice would have to implement these functionalities independently. This leads to:

- Duplication of Effort: Every team re-implements similar logic, wasting valuable development time.
- Inconsistency: Different services might implement these concerns in slightly different ways, leading to security vulnerabilities or unpredictable behavior.
- Increased Complexity: Each service becomes more complex, making it harder to maintain and test.

An API Gateway provides a centralized location to implement and enforce these cross-cutting concerns. Instead of scattering security policies, rate limits, or logging mechanisms across dozens of microservices, they are consolidated at the gateway. This not only eliminates redundancy but also ensures consistency and simplifies management. A single change to the gateway's configuration can instantly update a policy across all exposed APIs, dramatically improving operational efficiency and reducing the risk of errors.
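To make the idea of a single enforcement point concrete, here is a minimal Python sketch of a gateway modeled as a chain of middleware functions wrapped around a backend handler. Requests and responses are plain dicts, and the `X-API-Key` header, the `demo-key` value, and the `orders_backend` handler are hypothetical stand-ins; real gateways usually express the same chain through configuration or plugins rather than hand-written functions.

```python
import functools
from typing import Callable, Dict, List

Request = Dict[str, object]
Response = Dict[str, object]
Handler = Callable[[Request], Response]
# A middleware receives the request plus the next handler in the chain.
Middleware = Callable[..., Response]

access_log: List[str] = []


def build_pipeline(middlewares: List[Middleware], backend: Handler) -> Handler:
    """Wrap `backend` so middlewares run in list order (first = outermost)."""
    handler = backend
    for mw in reversed(middlewares):
        handler = functools.partial(mw, next_handler=handler)
    return handler


def log_requests(req: Request, next_handler: Handler) -> Response:
    # Outermost layer: sees every response, including rejections from inner layers.
    resp = next_handler(req)
    access_log.append(f"{req['method']} {req['path']} -> {resp['status']}")
    return resp


def require_api_key(req: Request, next_handler: Handler) -> Response:
    # Hypothetical check against a single hard-coded key.
    headers = req.get("headers", {})
    if headers.get("X-API-Key") != "demo-key":
        return {"status": 401, "body": "unauthorized"}
    return next_handler(req)


def orders_backend(req: Request) -> Response:
    # Stand-in for the real service: it contains no security or logging code.
    return {"status": 200, "body": "orders"}


gateway = build_pipeline([log_requests, require_api_key], orders_backend)

if __name__ == "__main__":
    gateway({"method": "GET", "path": "/orders", "headers": {"X-API-Key": "demo-key"}})
    gateway({"method": "GET", "path": "/orders", "headers": {}})
    print(access_log)
```

In this shape, adding a policy such as rate limiting means inserting one more function into the list, not editing every service behind the gateway.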

Enhanced Security

Security is paramount in any application, especially those exposing APIs to external consumers. The API Gateway serves as the first line of defense against malicious attacks and unauthorized access. By centralizing security enforcement, it provides a robust shield for backend services that might otherwise be vulnerable if directly exposed.

Key security functions performed by an API Gateway include:

- Authentication: Verifying the identity of the client (e.g., using API keys, OAuth 2.0, JWT tokens).
- Authorization: Determining if the authenticated client has permission to access the requested resource or perform the requested action.
- IP Whitelisting/Blacklisting: Controlling access based on client IP addresses.
- Threat Protection: Filtering out common web attack patterns (e.g., SQL injection, cross-site scripting) before they reach backend services.
- SSL/TLS Termination: Handling encrypted connections, offloading this CPU-intensive task from backend services.

This centralized security posture means that backend microservices can focus purely on their business logic, relying on the gateway to handle the arduous task of securing the perimeter. This significantly reduces the attack surface and ensures consistent security policies across the entire API ecosystem. Platforms like APIPark, for instance, offer features for managing API access, including subscription approval flows and independent access permissions for each tenant: callers must subscribe to an API and await administrator approval before they can invoke it, which helps prevent unauthorized API calls and potential data breaches.

Improved Performance and Scalability

While the API Gateway introduces an additional hop in the request path, it can paradoxically improve overall system performance and scalability through several mechanisms:

- Caching: The gateway can cache responses from backend services, serving subsequent identical requests directly from its cache. This dramatically reduces the load on backend services and slashes response times for frequently accessed data.
- Load Balancing: When multiple instances of a backend service are running, the gateway can intelligently distribute incoming requests across them, ensuring optimal resource utilization and preventing any single instance from becoming a bottleneck.
- Request/Response Transformation: It can optimize data formats or combine multiple backend responses into a single, more efficient response for the client, reducing client-side processing and network traffic.
- Connection Pooling: The gateway can maintain persistent connections to backend services, reducing the overhead of establishing new connections for every request.

By offloading these performance-enhancing tasks, the API Gateway allows backend services to focus on processing business logic more efficiently, leading to better overall system throughput and responsiveness, especially under high load. Because the gateway itself can be deployed as a horizontally scaled cluster, it can absorb large-scale traffic rather than becoming the bottleneck.

Simplified Client Development

For client developers, interacting with a distributed system without an API Gateway can be a nightmare. They would need to manage multiple endpoints, handle diverse authentication mechanisms for different services, and piece together data from various sources. The API Gateway simplifies this considerably.

Clients interact with a single, consistent endpoint, regardless of the underlying complexity. The gateway aggregates functionalities, transforms responses, and standardizes interaction patterns, presenting a clean and intuitive API to clients. This reduces the learning curve for client developers, accelerates front-end development, and minimizes the chances of integration errors. It offers a "single pane of glass" through which clients view the entire backend system.

Better Observability

In a complex microservices environment, understanding the flow of requests and pinpointing issues can be incredibly challenging. The API Gateway provides a centralized point for capturing vital operational data:

- Centralized Logging: All incoming requests and outgoing responses can be logged at the gateway, providing a unified audit trail and simplifying debugging and compliance efforts.
- Metrics Collection: Performance metrics such as latency, throughput, and error rates can be collected and aggregated, offering a holistic view of the system's health.
- Distributed Tracing: The gateway can inject correlation IDs into requests, allowing for end-to-end tracing across multiple microservices.

This consolidated observability data is invaluable for monitoring system health, identifying performance bottlenecks, and rapidly troubleshooting issues. Solutions such as APIPark record comprehensive details of every API call and offer data analysis capabilities that display long-term trends and performance changes, helping businesses carry out preventive maintenance before issues occur. This level of insight is crucial for maintaining system stability and data security.

Agnosticism and Protocol Translation

Modern applications often employ a variety of communication protocols and API styles – REST, GraphQL, gRPC, SOAP, and more. A well-designed API Gateway can act as a protocol translator, allowing clients to communicate using one protocol (e.g., HTTP/REST) while backend services utilize another (e.g., gRPC). This flexibility enables organizations to choose the best protocol for each service without forcing client applications to adapt to diverse communication methods. It makes the gateway a versatile adaptor, future-proofing integrations and enabling heterogeneous service landscapes.

In summary, the API Gateway is far more than just a proxy; it is a strategic architectural component that addresses the inherent complexities of distributed systems. It acts as an intelligent intermediary, empowering organizations to build more secure, scalable, performant, and maintainable applications, while simultaneously enhancing the developer experience for both backend and client teams. Its benefits are profound and touch upon every aspect of the software development lifecycle, making it an indispensable tool for navigating the demands of the digital age.

Part 3: Core Concepts and Functionalities of an API Gateway.

The true power of an API Gateway lies in its rich set of functionalities, each designed to address specific challenges in managing and exposing APIs. While the general concept of a single entry point is straightforward, the capabilities embedded within a robust gateway are sophisticated and multifaceted. Let's delve into the core concepts and functionalities that make an API Gateway an indispensable component.

Routing and Request Forwarding

At its most fundamental level, an API Gateway must be able to receive an incoming request from a client and accurately direct it to the appropriate backend service. This process is known as routing and request forwarding. The gateway examines the incoming request, analyzing elements such as the URL path, HTTP method, headers, and query parameters, to determine which backend service should handle it.

- Path-based Routing: This is the most common method, where different URL paths are mapped to different backend services. For example, requests to `/users` might go to the User Service, while `/products` go to the Product Catalog Service.

- Header-based Routing: The gateway can inspect specific HTTP headers in the request to decide where to route it. This is often used for versioning (e.g., `X-API-Version: 2`) or for directing requests to different environments (e.g., `X-Environment: staging`).

- Query-Parameter-based Routing: Similar to header-based, but using query parameters to determine the target service.

- Service Discovery Integration: In dynamic microservices environments where service instances frequently scale up or down and change network locations, the API Gateway integrates with a service discovery mechanism (e.g., Consul, Eureka, Kubernetes DNS). Instead of hardcoding backend service addresses, the gateway queries the service registry to find healthy instances of the target service, ensuring requests are always sent to available endpoints.

Effective routing is the backbone of any API Gateway, ensuring that client requests are efficiently and accurately delivered to the correct backend processing units.
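As an illustration of path-based routing combined with service discovery, the sketch below picks the most specific matching prefix and then rotates through the instances listed for that service. The route table, service names, and IP addresses are hypothetical, and the in-memory `REGISTRY` dict only stands in for a live registry such as Consul or Kubernetes DNS.

```python
import itertools
from typing import Dict, Iterator, List, Optional, Tuple

# Hypothetical route table: path prefix -> logical service name.
ROUTES: Dict[str, str] = {
    "/users": "user-service",
    "/products": "product-catalog-service",
    "/products/reviews": "review-service",
}

# Stand-in for a service registry; the addresses are made up, and a real
# gateway would refresh them continuously as instances come and go.
REGISTRY: Dict[str, List[str]] = {
    "user-service": ["10.0.0.11:8080", "10.0.0.12:8080"],
    "product-catalog-service": ["10.0.1.21:8080"],
    "review-service": ["10.0.2.31:8080"],
}

# One rotating cursor per service so consecutive requests spread across instances.
_cursors: Dict[str, Iterator[str]] = {
    name: itertools.cycle(addrs) for name, addrs in REGISTRY.items()
}


def match_route(path: str) -> Optional[str]:
    """Return the service whose prefix matches `path` most specifically."""
    best: Optional[Tuple[str, str]] = None
    for prefix, service in ROUTES.items():
        # Match whole segments so "/users" does not capture "/usersettings".
        if path == prefix or path.startswith(prefix + "/"):
            if best is None or len(prefix) > len(best[0]):
                best = (prefix, service)
    return best[1] if best else None


def forward_target(path: str) -> Optional[str]:
    """Resolve a request path to a concrete upstream URL, or None (-> 404)."""
    service = match_route(path)
    if service is None:
        return None
    return f"http://{next(_cursors[service])}{path}"
```

Longest-prefix matching lets a narrower route such as `/products/reviews` override a broader one without depending on the order of entries in the table.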

Authentication and Authorization

Centralizing security at the gateway is one of its most critical advantages. It removes the burden of implementing security logic from individual microservices, making the entire system more secure and easier to manage.

- Authentication: The process of verifying the identity of the client making the request. The API Gateway can support various authentication schemes:

- API Keys: Simple tokens passed in headers or query parameters for client identification.

- OAuth 2.0/OpenID Connect: OAuth 2.0 is an industry-standard framework for delegated authorization, and OpenID Connect adds an identity layer on top of it. Both commonly involve JSON Web Tokens (JWTs), which the gateway can validate.

- Mutual TLS (mTLS): For highly secure internal communication, where both client and server authenticate each other using certificates.

- Authorization: Once a client is authenticated, the gateway determines if that client has the necessary permissions to access the requested resource or perform the requested action. This often involves:

- Role-Based Access Control (RBAC): Checking if the client's role (e.g., "admin," "user," "guest") has access rights to the specific API endpoint.

- Policy Enforcement: Applying predefined security policies based on user attributes, resource sensitivity, or contextual information.

By handling authentication and authorization, the API Gateway acts as a robust gatekeeper, ensuring that only legitimate and authorized requests reach the backend services, which can then focus solely on executing their business logic without reimplementing security checks.

Rate Limiting and Throttling

To prevent abuse, ensure fair usage, and protect backend services from being overwhelmed by a sudden surge in traffic, API Gateways implement rate limiting and throttling.

- Rate Limiting: Restricts the number of requests a client can make to an API within a defined time window (e.g., 100 requests per minute per API key). If the limit is exceeded, subsequent requests are rejected, often with an HTTP 429 Too Many Requests status.

- Purpose: Helps mitigate denial-of-service (DoS) attacks, controls resource consumption, and enforces usage tiers for different subscription levels.

- Throttling: Similar to rate limiting but often involves queuing requests or slowing down response times rather than outright rejecting them, especially when the backend is under stress.

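Common algorithms for implementing rate limiting include:

- Fixed Window: A simple approach where a counter is incremented within a fixed time window and reset when the window ends. It is cheap, but a client can send up to twice the limit around a window boundary.
- Sliding Window Log: Tracks the timestamps of requests within a rolling window, avoiding those boundary bursts at the cost of more memory per client.
- Token Bucket/Leaky Bucket: A token bucket permits bursts up to the bucket's capacity while enforcing an average refill rate; a leaky bucket drains requests at a constant rate, smoothing bursts out.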

Granularity can vary, allowing limits to be applied per user, per API endpoint, per IP address, or globally across the gateway. This functionality is critical for maintaining the stability and availability of APIs under varying load conditions.
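A token bucket is compact enough to sketch directly. The version below keeps one in-memory bucket per key, so the key choice (API key, IP, user ID) sets the granularity, and it returns the status code a gateway would send. The `rate`/`capacity` parameters and the injectable `clock` (used here for deterministic testing) are illustrative; a clustered gateway would typically keep these counters in a shared store instead of process memory.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Permits bursts of up to `capacity` requests, refilling at `rate` tokens/second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start full so an idle client can burst
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Add tokens for the elapsed time, capped at the bucket's capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class RateLimiter:
    """Keeps one bucket per key (API key, client IP, user ID, ...)."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.buckets: Dict[str, TokenBucket] = {}

    def check(self, key: str) -> int:
        """Return 200 to forward the request or 429 Too Many Requests to reject it."""
        if key not in self.buckets:
            self.buckets[key] = TokenBucket(self.rate, self.capacity, self.clock)
        return 200 if self.buckets[key].allow() else 429
```

With `rate=1.0` and `capacity=3`, a client can fire three requests at once, is then refused, and regains one request per second; other keys are unaffected.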

Request/Response Transformation

The API Gateway can act as a powerful translator and manipulator of API traffic, adapting requests and responses to suit the needs of both clients and backend services.

- Header Manipulation: Adding, removing, or modifying HTTP headers in both incoming requests and outgoing responses. This is useful for injecting security tokens, setting caching directives, or passing correlation IDs.

- Payload Transformation: Modifying the body of the request or response. This can involve:

- Data Format Conversion: Converting JSON to XML, or vice versa, if clients and services use different formats.

- Field Filtering/Masking: Removing sensitive data from responses before sending them to clients, or ensuring clients only receive necessary fields.

- Data Enrichment: Adding information to a request before forwarding it to a backend service, or aggregating data from multiple services into a single, unified response for the client (often called "API Composition" or "API Aggregation").

- Protocol Translation: Enabling clients to use one protocol (e.g., REST over HTTP) while the backend service uses another (e.g., gRPC).

These transformation capabilities allow for greater flexibility and decoupling, ensuring that backend services can evolve their internal APIs without necessarily breaking existing client integrations.
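The sketch below illustrates three of the transformations above on plain dicts: header manipulation (stripping the client's `Cookie` and ensuring an `X-Correlation-ID`), recursive field masking, and a simple API composition that merges a user record with an order list. The sensitive field names, the header policy, and `compose_profile` are hypothetical choices made for the example.

```python
import uuid
from typing import Any, Dict, List

# Hypothetical response fields that must never leave the internal network.
SENSITIVE_FIELDS = {"password_hash", "ssn"}


def transform_request_headers(headers: Dict[str, str]) -> Dict[str, str]:
    """Drop the client's Cookie header and ensure a correlation ID is present."""
    out = {k: v for k, v in headers.items() if k.lower() != "cookie"}
    # Keep an ID the client already sent; otherwise mint one for tracing.
    out.setdefault("X-Correlation-ID", str(uuid.uuid4()))
    return out


def mask_fields(payload: Any) -> Any:
    """Recursively remove sensitive keys from a JSON-like response body."""
    if isinstance(payload, dict):
        return {k: mask_fields(v) for k, v in payload.items() if k not in SENSITIVE_FIELDS}
    if isinstance(payload, list):
        return [mask_fields(item) for item in payload]
    return payload


def compose_profile(user: Dict[str, Any], orders: List[Dict[str, Any]]) -> Dict[str, Any]:
    """API composition: merge two backend responses into one client-facing body."""
    return {
        "user": mask_fields(user),
        "order_count": len(orders),
        "recent_orders": [mask_fields(o) for o in orders[:3]],
    }
```

Because masking happens at the gateway, the backend can keep returning its full internal model while clients only ever see the filtered view.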

Caching

To reduce latency and decrease the load on backend services, API Gateways can implement caching. When a client requests data that has been previously fetched, the gateway can serve the response directly from its cache instead of forwarding the request to the backend.

- Benefits:

- Reduced Latency: Faster response times for cached data.

- Decreased Backend Load: Backend services are hit less frequently, freeing up resources.

- Improved Scalability: The system can handle more requests without scaling backend services proportionally.

- Considerations:

- Cache Invalidation: A critical challenge is ensuring cached data remains fresh. Strategies include time-to-live (TTL), event-driven invalidation, or explicit cache purges.

- Cache Scope: Caches can be global (shared across all gateway instances) or local (per gateway instance).

Caching is particularly effective for static or infrequently changing data, dramatically enhancing the performance and efficiency of the API ecosystem.
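A local (per-instance) cache with TTL expiry and explicit purging can be sketched as follows. Keying on the path alone is a simplification assumed for the example; real gateways usually include the query string and any headers the response varies on. Only `GET` requests are cached here, and the `fetch` callback stands in for the call to the backend.

```python
import time
from typing import Any, Callable, Dict, Hashable, Optional, Tuple


class TTLCache:
    """Per-instance response cache whose entries expire after `ttl_seconds`."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}

    def get(self, key: Hashable) -> Optional[Any]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._store[key]  # lazily evict the stale entry
            return None
        return value

    def put(self, key: Hashable, value: Any) -> None:
        self._store[key] = (self.clock() + self.ttl, value)

    def purge(self, key: Hashable) -> None:
        """Explicit invalidation, e.g. after a write to the same resource."""
        self._store.pop(key, None)


def cached_get(cache: TTLCache, method: str, path: str,
               fetch: Callable[[str], Any]) -> Any:
    if method != "GET":  # only cache safe, read-only requests
        return fetch(path)
    hit = cache.get(path)
    if hit is not None:
        return hit
    value = fetch(path)
    cache.put(path, value)
    return value
```

TTL bounds how stale a response can get without any coordination, while `purge` covers the event-driven invalidation case when the gateway learns that a resource changed.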

Load Balancing

When multiple instances of a backend service are available, the API Gateway acts as an intelligent load balancer, distributing incoming requests across these instances. This ensures that no single service instance becomes overloaded, improving overall system reliability and throughput.

- Algorithms: Common load balancing algorithms include:

- Round Robin: Distributes requests sequentially to each server in the pool.

- Least Connections: Directs traffic to the server with the fewest active connections.

- Weighted Round Robin: Assigns a "weight" to each server, directing more traffic to more capable servers.

- IP Hash: Directs requests from the same client IP to the same server, useful for maintaining session stickiness.

- Health Checks: The gateway continuously monitors the health of backend service instances, automatically removing unhealthy instances from the load balancing pool and reintroducing them when they recover.

Load balancing at the gateway level is crucial for achieving high availability and scalability in microservices architectures, ensuring consistent performance even under heavy traffic.
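The four algorithms listed above, plus health-based removal, fit in one small class. This is an in-memory sketch with hypothetical instance names; the weighted variant uses the "smooth" weighted round-robin scheme (add each weight, pick the highest, subtract the total), which interleaves picks instead of sending runs of consecutive requests to the heaviest server.

```python
import hashlib
from typing import Dict, List


class BackendPool:
    """Tracks weights, health, and open connections for one service's instances."""

    def __init__(self, weights: Dict[str, int]) -> None:
        self.weights = dict(weights)             # weight 1 everywhere = plain round robin
        self.healthy = set(weights)              # maintained by health checks
        self.active = {s: 0 for s in weights}    # open connections per instance
        self._current = {s: 0 for s in weights}  # state for smooth weighted round robin
        self._rr_index = 0

    def mark(self, server: str, healthy: bool) -> None:
        """Called by a health checker to remove or restore an instance."""
        if healthy:
            self.healthy.add(server)
        else:
            self.healthy.discard(server)

    def _candidates(self) -> List[str]:
        servers = [s for s in self.weights if s in self.healthy]
        if not servers:
            raise RuntimeError("no healthy backends")  # gateway would answer 503
        return servers

    def round_robin(self) -> str:
        servers = self._candidates()
        server = servers[self._rr_index % len(servers)]
        self._rr_index += 1
        return server

    def least_connections(self) -> str:
        return min(self._candidates(), key=lambda s: self.active[s])

    def weighted_round_robin(self) -> str:
        # Raise every candidate by its weight, pick the highest,
        # then lower the winner by the total weight.
        servers = self._candidates()
        total = sum(self.weights[s] for s in servers)
        for s in servers:
            self._current[s] += self.weights[s]
        best = max(servers, key=lambda s: self._current[s])
        self._current[best] -= total
        return best

    def ip_hash(self, client_ip: str) -> str:
        # Same IP -> same instance, as long as the healthy set does not change.
        servers = self._candidates()
        digest = hashlib.sha256(client_ip.encode()).digest()
        return servers[int.from_bytes(digest[:4], "big") % len(servers)]
```

Note that plain IP hashing remaps many clients whenever an instance joins or leaves; consistent hashing is the usual refinement when stickiness must survive pool changes.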

API Versioning

As APIs evolve, changes are inevitable. Managing these changes gracefully, without disrupting existing client applications, is crucial. API Gateways facilitate API versioning, allowing multiple versions of an API to coexist.

- Versioning Strategies:

- URL Path Versioning: Including the version number directly in the URL (e.g., `/v1/users`, `/v2/users`).

- Header Versioning: Using a custom HTTP header (e.g., `X-API-Version: 1`).

- Query Parameter Versioning: Appending the version as a query parameter (e.g., `/users?api-version=1`).

The API Gateway routes requests to the appropriate backend service version based on the client's specified version. This allows for backward compatibility, enabling older clients to continue using an older API version while newer clients adopt the latest, ensuring a smoother transition during API evolution.
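The sketch below resolves the version from any of the three strategies and maps it to a backend. The precedence (path, then header, then query parameter), the default of version 1 for clients that specify nothing, and the backend host names are all assumptions made for illustration.

```python
from typing import Dict, Tuple
from urllib.parse import parse_qs, urlsplit

# Hypothetical mapping of API version -> backend deployment.
BACKENDS: Dict[str, str] = {"1": "users-v1.internal", "2": "users-v2.internal"}
DEFAULT_VERSION = "1"  # clients that send no version keep the old behavior


def resolve_version(url: str, headers: Dict[str, str]) -> Tuple[str, str]:
    """Return (backend, path) using the URL path, then header, then query parameter."""
    parts = urlsplit(url)
    segments = parts.path.strip("/").split("/")
    first = segments[0]
    if first.startswith("v") and first[1:].isdigit():
        # URL path versioning: strip "/vN" before forwarding.
        version = first[1:]
        path = "/" + "/".join(segments[1:])
    else:
        path = parts.path
        query_version = parse_qs(parts.query).get("api-version", [DEFAULT_VERSION])[0]
        version = headers.get("X-API-Version") or query_version
    backend = BACKENDS.get(version)
    if backend is None:
        raise LookupError(f"unsupported API version: {version}")
    return backend, path
```

Keeping a default version is what lets older clients that never adopted versioning continue to work while newer clients opt in explicitly.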

Logging, Monitoring, and Analytics

Centralized observability is a significant benefit of using an API Gateway. It provides a single point to collect comprehensive data about all API interactions, which is invaluable for operational insights and troubleshooting.

- Detailed Logging: The gateway can log every detail of each incoming request and outgoing response, including timestamps, client IP addresses, request headers, response codes, and latency. This data is critical for auditing, debugging, and security analysis.

- Metrics Collection: Beyond raw logs, the gateway can extract and aggregate key performance indicators (KPIs) such as:

- Request rates (requests per second/minute)

- Error rates (e.g., 4xx, 5xx responses)

- Average/P99 latency

- Throughput (data transferred per second)

- Analytics Dashboards: Integrating with monitoring tools (e.g., Prometheus, Grafana, ELK Stack), the gateway's data can populate dashboards, providing real-time visibility into API performance and usage trends.

- Alerting: Setting up alerts based on predefined thresholds (e.g., high error rates, elevated latency) ensures that operational teams are immediately notified of potential issues.
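To show how raw gateway logs become the KPIs and alerts above, here is a minimal aggregation over one time window of `(status_code, latency_ms)` records. It uses the nearest-rank definition of P99, and the alert thresholds (1% 5xx, 500 ms P99) are hypothetical defaults; monitoring systems such as Prometheus compute percentiles differently (e.g., from histograms), so the numbers will not match exactly.

```python
from typing import Dict, List, Tuple


def summarize(records: List[Tuple[int, float]], window_seconds: float) -> Dict[str, float]:
    """Aggregate (status_code, latency_ms) pairs logged by the gateway in one window."""
    n = len(records)
    if n == 0:
        return {"rps": 0.0, "error_rate_4xx": 0.0, "error_rate_5xx": 0.0,
                "avg_ms": 0.0, "p99_ms": 0.0}
    latencies = sorted(latency for _, latency in records)
    rank = (99 * n + 99) // 100  # nearest-rank P99: ceil(0.99 * n), 1-based
    return {
        "rps": n / window_seconds,
        "error_rate_4xx": sum(400 <= s < 500 for s, _ in records) / n,
        "error_rate_5xx": sum(s >= 500 for s, _ in records) / n,
        "avg_ms": sum(latencies) / n,
        "p99_ms": latencies[rank - 1],
    }


def alerts(summary: Dict[str, float], max_5xx_rate: float = 0.01,
           max_p99_ms: float = 500.0) -> List[str]:
    """Compare a summary against (hypothetical) alert thresholds."""
    fired = []
    if summary["error_rate_5xx"] > max_5xx_rate:
        fired.append("high 5xx rate")
    if summary["p99_ms"] > max_p99_ms:
        fired.append("elevated p99 latency")
    return fired
```

Two slow 503 responses out of a hundred are enough to trip both alerts here, while the average latency still looks moderate, which is why tail percentiles are tracked alongside averages.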

As previously highlighted, platforms like APIPark excel in this area, offering detailed API call logging and powerful data analysis tools that enable businesses to quickly trace and troubleshoot issues, understand long-term performance trends, and proactively maintain system stability. This comprehensive approach to observability is fundamental for operational excellence.

Circuit Breaking and Retries

Resilience is key in distributed systems. API Gateways can implement patterns like circuit breakers and retries to prevent cascading failures and improve system robustness.

- Circuit Breaker: If a backend service consistently fails or becomes unresponsive, the gateway can "trip" a circuit breaker, temporarily preventing further requests from being sent to that service. Instead, it might return a default error response or fall back to an alternative. This gives the failing service time to recover without being overwhelmed by a flood of new requests, preventing a cascading failure throughout the system.

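- Retries: For transient errors (e.g., network glitches, temporary service unavailability), the gateway can automatically retry a failed request a limited number of times before declaring it a permanent failure. This improves the reliability of API calls without requiring client-side retry logic. Retries are generally safe only for idempotent requests, and they should be spaced out with backoff so they do not add load to a service that is already struggling.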

These resilience patterns significantly enhance the fault tolerance of the entire microservices ecosystem.
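Both patterns can be sketched in a few lines. The breaker below opens after `threshold` consecutive failures, fails fast while open, and lets a single trial call through once `reset_after` seconds have passed (the half-open state). The retry helper uses exponential backoff and only retries the exception types it is given. All parameter values are illustrative, and this single-threaded version ignores the concurrency a real gateway must handle.

```python
import time
from typing import Callable, Optional, Tuple, Type, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling the backend while the circuit is open."""


class CircuitBreaker:
    def __init__(self, threshold: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold, self.reset_after, self.clock = threshold, reset_after, clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                # Fail fast; the gateway could map this to a 503 or a fallback.
                raise CircuitOpenError("circuit open")
            # Otherwise half-open: let this one trial request through.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # a success closes the circuit
        return result


def retry(fn: Callable[[], T], attempts: int = 3, base_delay: float = 0.1,
          retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry transient errors with exponential backoff; meant for idempotent calls."""
    for attempt in range(attempts):
        try:
            return fn()
        except retry_on:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * (2 ** attempt))
    raise ValueError("attempts must be at least 1")
```

`CircuitOpenError` is deliberately not in the default `retry_on` tuple, so an open circuit is not hammered by retries.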

Traffic Management (Advanced)

Beyond basic routing, advanced API Gateways offer sophisticated traffic management capabilities crucial for modern deployment strategies and progressive delivery.

- A/B Testing: Splitting traffic between two variants of a service or feature (A and B), typically the existing version and a new one, so that their performance or user experience can be compared under real-world conditions.

- Canary Releases: Gradually rolling out a new version of a service to a small subset of users (the "canary") before making it available to everyone. The gateway can precisely control the traffic split, enabling quick rollback if issues are detected.

- Blue/Green Deployments: Maintaining two identical production environments ("Blue" and "Green"). While "Blue" serves live traffic, "Green" is updated with a new version. The gateway then instantly switches all traffic from "Blue" to "Green" once testing is complete, offering zero-downtime deployments and easy rollback.

These capabilities allow for low-risk deployments, faster iteration, and better control over the release cycle, significantly improving development agility and reliability.
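A common way for a gateway to split traffic is to hash a stable client attribute into one of 100 buckets, so each user consistently sees the same version. The sketch assumes a user ID is available on the request and uses a hypothetical salt per rollout; the same bucketing serves A/B tests, and a blue/green cutover is simply moving the percentage from 0 to 100.

```python
import hashlib


def pick_version(user_id: str, canary_percent: int,
                 salt: str = "checkout-v2-rollout") -> str:
    """Deterministically send `canary_percent`% of users to the canary."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket 0..99 per user
    return "canary" if bucket < canary_percent else "stable"
```

Because a user's bucket never changes, raising the percentage only adds users to the canary; nobody flips back and forth between versions mid-rollout, and rolling back means setting the percentage to 0.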

Security Policies (WAF-like functionality)

Some API Gateways incorporate functionalities similar to Web Application Firewalls (WAFs) to provide an additional layer of security.

- Threat Protection: Detecting and blocking common web attack vectors like SQL injection, cross-site scripting (XSS), command injection, and more, before they reach the backend services.

- DDoS Protection: Implementing mechanisms to mitigate distributed denial-of-service attacks by detecting and filtering malicious traffic patterns.

- Content Filtering: Inspecting request and response payloads for sensitive information or malicious content.

These advanced security policies provide comprehensive protection at the perimeter, safeguarding backend systems from a wide range of cyber threats. APIPark, for example, enhances security by allowing for the creation of multiple teams (tenants), each with independent applications, data, user configurations, and security policies, while sharing underlying infrastructure to improve resource utilization and reduce operational costs.

In summary, the API Gateway is a powerful, feature-rich component that provides far more than just simple request forwarding. Its extensive set of core functionalities addresses virtually every aspect of API management, from security and performance to resilience and operational observability. By centralizing these critical concerns, it not only simplifies the architecture of distributed systems but also empowers organizations to build more robust, scalable, and secure digital experiences.

Part 4: Deployment Models and Architectures.

The way an API Gateway is deployed and integrated into a system architecture can vary significantly depending on the specific needs of an organization, the chosen cloud environment, and the complexity of the microservices landscape. Understanding these different deployment models is crucial for making informed architectural decisions.

Centralized Gateway (Traditional)

The most common and traditional deployment model involves a single, centralized API Gateway instance (or a highly available cluster of instances) sitting at the edge of the network. All external client requests are routed through this central gateway before reaching any backend services.

- Characteristics:

- Acts as the sole entry point for all external traffic.

- Manages all cross-cutting concerns for the entire API portfolio.

- Often deployed as a dedicated service or a reverse proxy.

- Advantages:

- Simplified client-side interaction (one endpoint for everything).

- Consistent policy enforcement across all APIs.

- Easier to monitor and manage due to a single point of control.

- Disadvantages:

- Can become a single point of failure if not properly configured for high availability.

- Potential performance bottleneck under extreme load if not adequately scaled.

- Can become a monolithic component itself, requiring careful management as the API landscape grows.

This model is suitable for many organizations, especially those starting with a microservices architecture or those with a relatively stable set of APIs.
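At its simplest, the routing core of a centralized gateway is a table that maps path prefixes to backend services. The sketch below uses hypothetical service names and longest-prefix matching on whole path segments. That is one common convention, not the only one. Real gateways layer the cross-cutting policies described earlier on top of a lookup like this.

```python
from typing import Dict, Optional

# Hypothetical route table for one centralized gateway; names and ports are illustrative.
ROUTES: Dict[str, str] = {
    "/users": "http://user-service:8080",
    "/orders": "http://order-service:8080",
    "/orders/returns": "http://returns-service:8080",
}


def resolve_upstream(path: str) -> Optional[str]:
    """Return the upstream whose route prefix is the longest match for `path`."""
    best: Optional[str] = None
    for prefix in ROUTES:
        # Match on whole path segments so "/users" does not capture "/usersettings".
        if path == prefix or path.startswith(prefix + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    return ROUTES[best] if best is not None else None
```

For example, `resolve_upstream("/orders/returns/17")` resolves to the returns service because the more specific prefix wins. An unmatched path returns `None`, which a gateway would typically turn into a 404.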

Sidecar Gateway (with Service Mesh)

In more advanced microservices deployments, particularly those utilizing a service mesh (like Istio, Linkerd, or Consul Connect), the concept of an API Gateway often integrates or overlaps with the service mesh's ingress capabilities. A sidecar pattern involves deploying a proxy alongside each service instance.

- Characteristics:

- The service mesh primarily handles intra-service communication (service-to-service).

- An ingress gateway (often a component of the service mesh) handles external traffic entering the mesh.

- Each service also has a "sidecar proxy" that manages its outbound and inbound traffic.

- Advantages:

- Decouples the perimeter gateway from internal communication concerns.

- Leverages the service mesh for advanced traffic management, observability, and security within the cluster.

- The ingress gateway becomes leaner, primarily focusing on external exposure.

- Disadvantages:

- Adds significant complexity to the infrastructure.

- Requires deep understanding of service mesh concepts.

- Overhead of deploying and managing sidecar proxies.

This model is typically adopted by large enterprises with complex, highly dynamic microservices environments where fine-grained control over internal traffic is as critical as external access management.
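In a service mesh, the sidecar is a separate proxy process (Envoy, in Istio's case) that intercepts traffic transparently, so the service code stays unaware of it. The Python sketch below only simulates the kind of policy such a proxy applies to outbound calls: it retries transport failures with exponential backoff. The class name and defaults are hypothetical. In a real mesh, this behavior is declared in mesh configuration rather than written into the service.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class SidecarRetryPolicy:
    """Hypothetical stand-in for the retry policy a sidecar applies to outbound calls."""

    def __init__(self, max_attempts: int = 3, base_delay: float = 0.05):
        if max_attempts < 1:
            raise ValueError("max_attempts must be at least 1")
        self.max_attempts = max_attempts
        self.base_delay = base_delay

    def call(self, outbound: Callable[[], T]) -> T:
        last_error: Optional[ConnectionError] = None
        for attempt in range(self.max_attempts):
            try:
                return outbound()
            except ConnectionError as err:  # retry transport-level failures only
                last_error = err
                if attempt < self.max_attempts - 1:
                    # Exponential backoff: base_delay, 2x, 4x, ...
                    time.sleep(self.base_delay * (2 ** attempt))
        assert last_error is not None
        raise last_error
```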

Embedded Gateway (Library)

In some specialized scenarios, particularly within simpler applications or as part of a bespoke internal framework, the "gateway" functionality might not be a separate service but rather a library embedded directly within an application or a limited set of applications.

- Characteristics:

- Gateway logic is implemented as part of the application code.

- Not a distinct network component.

- Advantages:

- Very low latency as there's no additional network hop.

- Tight integration with application logic.

- Disadvantages:

- Lacks centralized management and visibility.

- Duplication of effort if multiple applications need similar gateway functions.

- Scalability and robustness are tied to the host application.

- Not suitable for exposing a large number of diverse services externally.

This approach is less common for full-fledged external API Gateways and more typical for internal service aggregation within a single application or specific domain.
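As a rough sketch of the embedded model, gateway-style checks can live inside the application as a decorator. This removes the separate process and the extra network hop. The `X-API-Key` header name, the in-memory `API_KEYS` table, and the `(status, body)` response tuple are all assumptions made for illustration.

```python
import functools
from typing import Callable, Dict, Tuple

# Hypothetical key store; a real application would consult a database or identity provider.
API_KEYS: Dict[str, str] = {"key-abc": "mobile-app"}

Response = Tuple[int, str]
Handler = Callable[[Dict[str, str]], Response]


def embedded_gateway(handler: Handler) -> Handler:
    """Run gateway-style authentication in-process before the handler executes."""

    @functools.wraps(handler)
    def wrapper(headers: Dict[str, str]) -> Response:
        client = API_KEYS.get(headers.get("X-API-Key", ""))
        if client is None:
            return 401, "missing or invalid API key"
        return handler(headers)

    return wrapper


@embedded_gateway
def get_profile(headers: Dict[str, str]) -> Response:
    return 200, "profile data"
```

The drawback listed above shows up directly here: every application that needs this check must import and apply the same decorator, and nothing gives a central view of its behavior.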

Cloud-Managed Gateways

Cloud providers offer fully managed API Gateway services that abstract away much of the infrastructure management, allowing users to focus on configuration.

- Examples:

- AWS API Gateway: Integrates seamlessly with other AWS services (Lambda, EC2, S3), offering powerful features like serverless APIs, caching, custom authorizers, and WebSocket support.

- Azure API Management: Provides a comprehensive solution for publishing, securing, transforming, maintaining, and monitoring APIs, with strong integration into Azure's ecosystem.

- Google Cloud Apigee: An enterprise-grade platform for developing and managing APIs, offering analytics, monetization, and advanced security.

- Advantages:

- No gateway servers to provision, patch, or manage; the provider operates the underlying infrastructure.

- High availability and scalability built-in.

- Deep integration with cloud ecosystem services.

- Reduced operational overhead.

- Disadvantages:

- Vendor lock-in: tied to a specific cloud provider.

- Cost can scale rapidly with usage.

- Less control over the underlying infrastructure.

- May have limitations in customization compared to self-hosted options.

Cloud-managed gateways are an excellent choice for organizations that are heavily invested in a particular cloud ecosystem and want to offload infrastructure management.

Open-Source Gateways

For organizations seeking more control, flexibility, or specific functionalities, numerous powerful open-source API Gateway solutions are available.

- Examples:

- Kong Gateway: A popular, highly performant open-source API Gateway that can run on any infrastructure, extensible with plugins.

- Tyk Open Source API Gateway: Another robust option offering a comprehensive set of features and a strong community.

- Ocelot: A .NET Core-based API Gateway specifically for microservices architectures that use .NET.

- Envoy Proxy: Although widely used as the sidecar proxy in service meshes such as Istio, it can also function as an edge gateway with proper configuration.

APIPark stands out as a compelling open-source API Gateway and API management platform, particularly for organizations leveraging AI. Unlike generic gateways, APIPark is specifically designed to simplify the integration and invocation of over 100 AI models, offering a unified API format for AI invocation. This standardization means that changes in underlying AI models or prompts do not impact application microservices, greatly simplifying AI usage and reducing maintenance costs. Its quick deployment with a single command line (curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh) and impressive performance (over 20,000 TPS with modest resources) make it a strong contender for both general API management and specialized AI workloads. It further distinguishes itself with features like prompt encapsulation into REST APIs, end-to-end API lifecycle management, and independent API and access permissions for each tenant, providing enterprise-grade capabilities in an open-source package.

- Advantages of Open-Source Gateways:

- Full control over the software and infrastructure.

- Customization and extensibility through plugins or direct code modifications.

- No vendor lock-in.

- Community support and vibrant ecosystems.

- No licensing fees, which can lower total cost for teams that already have the skills to operate the gateway in-house.

- Disadvantages:

- Requires internal expertise for deployment, maintenance, and scaling.

- Higher operational overhead compared to managed services.

- Responsibility for security patches and upgrades rests with the user.

Choosing the right deployment model and specific API Gateway solution requires a careful evaluation of an organization's architectural needs, operational capabilities, budget constraints, and long-term strategic goals. Whether opting for a cloud-managed service to minimize overhead or an open-source solution like APIPark for maximum control and specialized AI integration, the core functionalities of an API Gateway remain consistently valuable across all models.


Part 5: API Gateway vs. Other Related Concepts.

The concept of an API Gateway often overlaps with, or is confused with, other networking and architectural components. While there are functional similarities, understanding the distinct roles and primary responsibilities of each is crucial for designing a coherent and efficient system. Let's clarify the differences between an API Gateway and a Load Balancer, a Reverse Proxy, and a Service Mesh.

API Gateway vs. Load Balancer

- Load Balancer (Primary Role): A load balancer's primary function is to efficiently distribute incoming network traffic across a group of backend servers or server pools. Its goal is to maximize throughput, minimize response time, and prevent any single server from becoming overloaded. It operates mainly at Layer 4 (TCP) or Layer 7 (HTTP) of the OSI model, focusing on the health and capacity of backend instances.

- Key Responsibilities: Traffic distribution (round robin, least connections, etc.), health checks of backend servers, session persistence.

- Focus: High availability and scalability by distributing network load.

- API Gateway (Primary Role): While an API Gateway can perform load balancing, its role is far more comprehensive. It is a Layer 7 (Application Layer) component that acts as an intelligent intermediary, handling much more than just traffic distribution.

- Key Responsibilities (beyond load balancing): Authentication, authorization, rate limiting, request/response transformation, API versioning, caching, logging, monitoring, and potentially API composition/aggregation.

- Focus: API management, security, resilience, and developer experience by providing a single, consistent facade to backend services.

- Similarities & Differences:

- Overlap: Both often sit at the edge of a network and direct traffic to backend services. Both perform health checks and can handle high availability.

- Distinction: A load balancer is essentially a "smart traffic cop" whose main job is to direct vehicles efficiently. An API Gateway is a "concierge and security guard" who not only directs visitors but also verifies their identity, checks their permissions, provides additional services (like currency exchange or information), and manages the overall experience.

- Conclusion: You typically need both. A load balancer might sit in front of the API Gateway to distribute traffic across multiple gateway instances, ensuring the gateway itself is highly available and scalable. The API Gateway then distributes traffic to specific microservices, adding its layer of API management functionalities.
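The load balancer's side of that split is small enough to sketch. The round-robin balancer below rotates across gateway instances and skips any that are marked unhealthy. It never examines who the caller is or which API is being requested; that work is left to the gateway. The instance names are hypothetical, and health is set manually here instead of by real probes.

```python
import itertools
from typing import Dict, List


class RoundRobinBalancer:
    """Minimal load-balancer sketch: rotate over healthy backends only."""

    def __init__(self, backends: List[str]):
        self.backends = backends
        self.healthy: Dict[str, bool] = {b: True for b in backends}
        self._cycle = itertools.cycle(backends)

    def mark(self, backend: str, is_healthy: bool) -> None:
        # In practice this is driven by periodic health-check probes.
        self.healthy[backend] = is_healthy

    def next_backend(self) -> str:
        # Try each backend at most once per call before giving up.
        for _ in range(len(self.backends)):
            candidate = next(self._cycle)
            if self.healthy[candidate]:
                return candidate
        raise RuntimeError("no healthy backends")
```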

API Gateway vs. Reverse Proxy

- Reverse Proxy (Primary Role): A reverse proxy server sits in front of one or more web servers, intercepting client requests before they reach the origin server. Its primary function is to retrieve resources on behalf of a client from one or more servers.

- Key Responsibilities: Load balancing, SSL/TLS termination, caching static content, security (basic firewall functionality), URL rewriting, compression.

- Focus: Enhancing security, performance, and reliability of web servers. It hides the identities of origin servers and can perform some transformations.

- API Gateway (Primary Role): As established, an API Gateway is a specialized type of reverse proxy, but with a significantly expanded scope and intelligence tailored specifically for APIs.

- Key Responsibilities: All the API management features discussed earlier (auth, authz, rate limiting, transformation, versioning, etc.).

- Focus: Holistic API lifecycle management and abstraction for microservices.

- Similarities & Differences:

- Overlap: An API Gateway is conceptually a reverse proxy, as it intercepts requests and forwards them to backend services. Many API Gateway solutions are built on top of or incorporate reverse proxy technology (e.g., Nginx, Envoy).

- Distinction: A reverse proxy is a general-purpose tool that primarily concerns itself with HTTP/HTTPS traffic forwarding and optimization. An API Gateway is a highly specialized reverse proxy specifically designed to understand, manage, and augment API interactions. It's application-aware in a way a generic reverse proxy typically isn't. A reverse proxy might forward all requests for `api.example.com` to a single backend server; an API Gateway would intelligently route `api.example.com/users` to the User Service, authenticate the user, rate limit their calls, and transform the response.

- Conclusion: All API Gateways are reverse proxies, but not all reverse proxies are API Gateways. The API Gateway extends the core reverse proxy functionality with rich API-specific intelligence.
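The contrast between the two can be sketched in a few lines. In the hypothetical code below, `reverse_proxy` sends every request to one origin. By contrast, `api_gateway` first checks a bearer token and then routes by path prefix. The upstream URLs and the in-memory token set are placeholders. A real gateway would validate tokens against an identity provider or verify JWT signatures.

```python
from typing import Dict, Optional, Tuple

# Hypothetical upstreams and tokens, for illustration only.
ORIGIN = "http://web-backend:8080"
API_ROUTES = {"/users": "http://user-service:8080",
              "/orders": "http://order-service:8080"}
VALID_TOKENS = {"token-123"}


def reverse_proxy(path: str) -> str:
    """Generic reverse proxy: every path goes to the same origin server."""
    return ORIGIN


def api_gateway(path: str, headers: Dict[str, str]) -> Tuple[int, Optional[str]]:
    """API-aware handling: authenticate, then route by path prefix.

    Returns (status, upstream); status 200 means "forward to upstream".
    """
    auth = headers.get("Authorization", "")
    token = auth[len("Bearer "):] if auth.startswith("Bearer ") else None
    if token not in VALID_TOKENS:
        return 401, None
    for prefix, upstream in API_ROUTES.items():
        if path == prefix or path.startswith(prefix + "/"):
            return 200, upstream
    return 404, None
```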

API Gateway vs. Service Mesh

- Service Mesh (Primary Role): A service mesh is a dedicated infrastructure layer that handles service-to-service communication within a microservices application. It typically consists of a "data plane" (proxies like Envoy deployed as sidecars next to each service instance) and a "control plane" that manages and configures these proxies.

- Key Responsibilities: Secure, reliable, and observable internal communication between microservices (e.g., traffic management, circuit breaking, retries, mutual TLS, tracing, metrics collection for internal calls).

- Focus: Solving the challenges of inter-service communication within a cluster, making service calls reliable and observable.

- API Gateway (Primary Role): The API Gateway is responsible for managing external client traffic and acting as the entry point to the entire microservices system.

- Key Responsibilities: External API management concerns like authentication for external clients, rate limiting external consumers, API versioning for public APIs, and external client-specific transformations.

- Focus: The edge of the application, managing how external consumers interact with the system.

- Similarities & Differences:

- Overlap: Both use proxies and address similar cross-cutting concerns (traffic management, security, observability). However, they operate at different boundaries.

- Distinction: The API Gateway is the "front door" of the application, handling north-south traffic (external clients to services). The service mesh handles "internal corridors" within the application, managing east-west traffic (service-to-service). While an ingress gateway (often part of a service mesh solution) might handle the initial entry, the API Gateway often provides higher-level API management specific to external consumers.

- Conclusion: They are complementary, not competing technologies. A robust microservices architecture often benefits from both. The API Gateway manages the public face of the APIs, while the service mesh ensures efficient and secure communication between the internal services that fulfill those API requests. An API Gateway might even integrate with the service mesh, passing requests to the mesh's ingress, which then routes them internally.

In essence, while these components share some fundamental networking principles and may even incorporate similar underlying technologies, their architectural intent, primary responsibilities, and the boundaries at which they operate are distinctly different. An API Gateway is purpose-built for the comprehensive management and secure exposure of APIs to external consumers, providing a crucial layer of abstraction and control in a distributed system.

Part 6: Choosing the Right API Gateway.

Selecting the appropriate API Gateway solution is a critical decision that can significantly impact the performance, security, scalability, and overall maintainability of your microservices architecture. There's no one-size-fits-all answer, as the "best" gateway depends heavily on your organization's specific requirements, existing infrastructure, team expertise, and strategic goals. A thorough evaluation process considering various factors is essential.

Factors to Consider:

- Features and Functionalities:

- Core Requirements: Does it support the essential API management features you need immediately (e.g., routing, authentication, rate limiting, caching, logging)?

- Advanced Needs: Do you require more sophisticated capabilities like request/response transformation, API versioning, GraphQL federation, gRPC proxying, advanced traffic management (A/B testing, canary releases), or integration with a service mesh?

- Specialized Use Cases: Are there unique requirements, such as AI model integration and unified invocation (as offered by APIPark), or specific compliance needs?

- Performance and Scalability:

- Throughput: What kind of request volume (requests per second/minute) do you anticipate? Does the gateway demonstrate the ability to handle peak loads efficiently?

- Latency: How much latency does the gateway add to each request? Is this acceptable for your application's responsiveness requirements?

- Scalability Model: How easily can the gateway scale horizontally (add more instances) or vertically (increase resources for existing instances) to meet growing demand? APIPark, for example, reports over 20,000 TPS on an 8-core CPU with 8GB of memory and supports cluster deployment. Published figures like these are a useful starting point, but confirm them with benchmarks that reflect your own traffic.

- Deployment Flexibility:

- Environment: Where do you plan to deploy the gateway? On-premises, in a specific cloud (AWS, Azure, GCP), across multiple clouds (hybrid/multi-cloud), or within a Kubernetes cluster?

- Installation Ease: How straightforward is the deployment process? Some open-source solutions like APIPark offer quick-start scripts for rapid setup, which can be a significant advantage for quick adoption and testing.

- Infrastructure Requirements: What are the underlying resource needs (CPU, memory, storage)?

- Ease of Use and Configuration:

- Developer Experience (DX): How easy is it for developers to define APIs, configure routing rules, and apply policies?

- Management Interface: Does it offer a user-friendly GUI, a powerful CLI, or a robust declarative configuration (e.g., YAML, JSON) for managing the gateway?

- Documentation and Community: Is there comprehensive documentation, active community support, or readily available commercial support?

- Ecosystem and Integrations:

- Monitoring/Logging: How well does it integrate with your existing monitoring, logging, and tracing tools (e.g., Prometheus, Grafana, ELK Stack, Jaeger)?

- Security Tools: Does it integrate with your identity providers (IdP) or other security solutions?

- CI/CD: Can its configuration be easily integrated into your continuous integration and continuous deployment pipelines?

- Cost:

- Licensing: Is it open-source (potentially free, but with operational costs), or does it have commercial licensing fees?

- Operational Costs: For self-hosted solutions, consider the cost of infrastructure, maintenance, and dedicated personnel. For cloud-managed solutions, evaluate the pricing model based on requests, data transfer, and features used.

- Support: Are professional support contracts available and within budget? Many open-source products, including APIPark, offer commercial versions with advanced features and professional technical support for enterprises.

- Specific Needs (e.g., AI Integration):

- If your organization is heavily investing in artificial intelligence, a specialized gateway designed for AI models, like APIPark, might offer significant advantages. APIPark's ability to quickly integrate over 100 AI models and provide a unified API format for AI invocation can dramatically simplify the management and deployment of AI services, reducing maintenance costs and accelerating AI adoption. Its prompt encapsulation feature, allowing users to quickly combine AI models with custom prompts to create new APIs (e.g., sentiment analysis), is a distinct offering.
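Throughput and scalability requirements can be turned into a rough instance count. The sketch below is back-of-the-envelope arithmetic under these assumptions:

- Per-instance throughput comes from your own benchmark. A vendor figure, such as the 20,000 TPS quoted for APIPark, is only a starting point.
- Instances are planned to run at about 60% utilization to leave headroom.
- One spare instance is added so the cluster survives losing a node.

```python
import math


def gateway_instances(peak_rps: float, per_instance_rps: float,
                      target_utilization: float = 0.6, spare: int = 1) -> int:
    """Estimate gateway instances for a peak load, with headroom and N+spare redundancy."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    # Plan each instance to run below saturation.
    usable_rps = per_instance_rps * target_utilization
    return math.ceil(peak_rps / usable_rps) + spare
```

Consider a 50,000 requests-per-second peak with 20,000 per instance. `gateway_instances(50_000, 20_000)` returns 6: five instances carry the load at 60% utilization, plus one spare.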

Comparative Table of API Gateway Types/Features:

To aid in the selection process, here's a simplified comparison of common API Gateway types based on the factors discussed:

| Feature/Criteria | Cloud-Managed Gateway (e.g., AWS API Gateway) | Open-Source Gateway (e.g., Kong, APIPark) | Custom-Built Gateway (Internal) |
|---|---|---|---|
| Infrastructure Mgmt. | Fully managed by cloud provider | Self-managed; requires internal expertise for ops & scaling | Entirely managed and maintained by your team |
| Initial Setup Time | Fast (configuration-based) | Moderate (deployment + configuration); APIPark is very fast with its quick-start script | Slow (design, develop, test, deploy) |
| Scalability | Excellent; automatic scaling built-in | Excellent, but requires manual configuration/orchestration (e.g., Kubernetes) | Varies; depends on implementation and team capability |
| Features | Broad feature set, often with deep cloud ecosystem integrations | Comprehensive, highly customizable via plugins; specialized options (e.g., APIPark for AI) | Fully custom; only features you build |
| Cost Model | Pay-as-you-go (requests, data, features); can be high at scale | Infrastructure cost + operational overhead; professional support is extra | High upfront development cost; ongoing maintenance; infrastructure cost |
| Control & Flexibility | Limited to provider's offerings | High; full control over code and deployment | Maximum; complete control |
| Vendor Lock-in | High (tied to cloud provider) | Low to None | None |
| Support | Cloud provider's support plans | Community support; commercial support often available (e.g., APIPark commercial) | Internal team support |
| Best For | Cloud-native organizations, rapid prototyping, minimal ops overhead | Organizations seeking control, specific features, AI integration (APIPark), cost-efficiency | Highly unique, niche requirements not met by existing solutions; internal-only use |

The decision on which API Gateway to adopt is strategic. For organizations heavily reliant on a specific cloud provider and looking for minimal operational overhead, a cloud-managed solution might be ideal. For those seeking maximum control, customization, and cost efficiency, or with specialized needs like AI integration, an open-source solution like APIPark presents a compelling alternative, offering enterprise-grade features with the flexibility of open-source. A careful assessment against these factors will guide you toward the API Gateway that best aligns with your architectural vision and business objectives.

Part 7: Best Practices for Implementing an API Gateway.

Implementing an API Gateway effectively goes beyond simply deploying the software; it involves strategic planning, thoughtful design, and adherence to best practices to maximize its benefits and avoid common pitfalls. A well-implemented API Gateway can transform your microservices architecture, while a poorly managed one can introduce new complexities and bottlenecks.

1. Start Simple and Iterate:

Don't try to implement every possible feature of an API Gateway from day one. Begin by addressing the most critical needs, such as basic routing, authentication, and perhaps rate limiting. As your understanding grows and your system evolves, gradually introduce more advanced functionalities like caching, transformation, or advanced traffic management. An iterative approach allows your team to gain experience, validate the value of each feature, and avoid over-engineering, which can lead to unnecessary complexity and slower development.
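A first-iteration rate limiter can be quite small. The sketch below is a classic token-bucket limiter, shown as one possible algorithm rather than the one any particular gateway uses. The default rate and capacity, and the `check_rate_limit` helper keyed by API key, are hypothetical. State lives in a single process's memory, so a clustered gateway would need a shared store to enforce a global limit.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Token bucket: refills at `rate` tokens per second, allows bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start full so an idle client can burst
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per API key, held in this gateway instance's memory.
_buckets: Dict[str, TokenBucket] = {}


def check_rate_limit(api_key: str, rate: float = 5.0, capacity: float = 10.0) -> bool:
    if api_key not in _buckets:
        _buckets[api_key] = TokenBucket(rate, capacity)
    return _buckets[api_key].allow()
```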

2. Design for High Availability and Fault Tolerance:

The API Gateway is a critical component, often acting as a single point of entry. Any downtime for the gateway can mean downtime for your entire application. Therefore, designing for high availability is paramount:

- Redundancy: Deploy multiple instances of your gateway across different availability zones or regions.

- Load Balancing: Place a robust load balancer in front of your gateway instances to distribute traffic and handle failover.

- Health Checks: Configure aggressive health checks for your gateway instances to quickly detect and remove unhealthy ones from the pool.

- Auto-Scaling: Leverage auto-scaling groups in cloud environments to automatically adjust the number of gateway instances based on traffic load.

Implementing resilience patterns like circuit breakers and retries within the gateway for backend service calls further enhances overall system stability, preventing cascading failures.
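Below is a minimal sketch of the circuit-breaker pattern. After `failure_threshold` consecutive backend failures, the breaker fails fast. Once `reset_timeout` seconds have passed, it lets a trial request through (the half-open state). A success closes the circuit, and a failure reopens it. Thresholds and names are hypothetical. The class is single-threaded and not safe for concurrent use as written.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Closed -> open after N consecutive failures -> half-open after a cooldown."""

    def __init__(self, failure_threshold: int = 5, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Open: reject without touching the struggling backend.
                raise RuntimeError("circuit open: failing fast")
            # Cooldown elapsed: half-open, so this request is a trial.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or reopen after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```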

3. Implement Robust Monitoring and Alerting:

The API Gateway is a prime location for collecting critical operational data. Maximize this by implementing comprehensive monitoring and alerting:

- Centralized Logging: Ensure all incoming requests and outgoing responses are logged centrally (e.g., to an ELK stack, Splunk, or cloud logging services). This provides an invaluable audit trail and aids in debugging.

- Key Metrics: Monitor crucial metrics such as request rates, error rates (HTTP 4xx/5xx), average latency, P99 latency, CPU utilization, memory usage, and network I/O.

- Dashboards: Create intuitive dashboards to visualize the health and performance of your gateway and the APIs it exposes.

- Actionable Alerts: Set up alerts for deviations from normal behavior (e.g., sudden spikes in errors, high latency, resource exhaustion) that notify the relevant teams, enabling rapid response to incidents.

APIPark's detailed logging and powerful data analysis capabilities are excellent examples of how to achieve this level of observability.
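To make the key metrics concrete, here is a sketch that summarizes one window of gateway log records into 4xx and 5xx rates and a nearest-rank P99 latency, then decides whether to alert. The `(status, latency_ms)` record shape and the 1% / 500 ms thresholds are assumptions. Real deployments usually compute these figures in the monitoring system rather than in hand-written code.

```python
from typing import Dict, List, Tuple

# Hypothetical log record: (HTTP status code, latency in milliseconds).
Record = Tuple[int, float]


def p99_latency(records: List[Record]) -> float:
    """Nearest-rank 99th percentile of latency."""
    latencies = sorted(ms for _, ms in records)
    rank = (99 * len(latencies) + 99) // 100  # integer ceil(0.99 * n), 1-based
    return latencies[rank - 1]


def evaluate_window(records: List[Record], max_5xx_rate: float = 0.01,
                    max_p99_ms: float = 500.0) -> Dict[str, object]:
    """Summarize one window of gateway logs and decide whether to alert."""
    if not records:
        raise ValueError("empty window")
    total = len(records)
    rate_4xx = sum(1 for s, _ in records if 400 <= s < 500) / total
    rate_5xx = sum(1 for s, _ in records if s >= 500) / total
    p99 = p99_latency(records)
    return {
        "rate_4xx": rate_4xx,  # tracked, but usually client-caused, so not alerted on here
        "rate_5xx": rate_5xx,
        "p99_ms": p99,
        "alert": rate_5xx > max_5xx_rate or p99 > max_p99_ms,
    }
```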

4. Regularly Review and Update Security Policies:

The API Gateway is your first line of defense. Its security configurations should not be set once and forgotten:

- Audit Regularly: Periodically review authentication mechanisms, authorization rules, IP whitelists/blacklists, and threat protection policies.

- Stay Updated: Keep the gateway software patched and updated to protect against known vulnerabilities.

- Principle of Least Privilege: Expose only the backend operations that clients actually need through the gateway, and grant each client only the permissions it requires.

- Security by Design: Integrate security considerations into the design and configuration process, rather than treating them as an afterthought. This includes ensuring all tenants have independent and secure configurations, as is facilitated by platforms like APIPark.
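An IP allowlist check, one of the policies worth auditing regularly, can be expressed with Python's standard `ipaddress` module. The networks below are a placeholder documentation range and a private range, not recommendations. The sketch assumes `client_ip` has already been obtained from a trusted source. Reading it from a client-supplied header such as `X-Forwarded-For` without validation would let callers spoof it.

```python
import ipaddress
from typing import Iterable, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]

# Hypothetical allowlist: a partner's egress range and an internal subnet.
ALLOWED_NETWORKS = [ipaddress.ip_network(c) for c in ("203.0.113.0/24", "10.0.0.0/8")]


def is_allowed(client_ip: str, networks: Iterable[Network] = ALLOWED_NETWORKS) -> bool:
    """Return True if the client address falls inside any allowlisted network."""
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        return False  # malformed addresses are rejected, not let through
    # Membership tests across IPv4/IPv6 simply return False.
    return any(addr in net for net in networks)
```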

5. Version Your APIs Judiciously:

APIs evolve, but breaking changes can disrupt client applications. Implement a clear API versioning strategy through the gateway:

- Consistent Approach: Choose a consistent versioning method (e.g., URL path, header, query parameter) and stick to it.

- Support Older Versions: Plan to support older API versions for a defined period to allow clients ample time to migrate. The gateway makes this manageable by routing requests to the appropriate backend service version.

- Clear Communication: Clearly communicate API changes and deprecation schedules to your consumers.
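Here is a sketch of URL-path versioning, one of the methods listed above. The gateway strips a `/v1` or `/v2` prefix and forwards the remaining path to the matching backend deployment. The backend URLs and the `VERSIONED_BACKENDS` table are hypothetical. Unknown versions return `None`, which a gateway would typically translate into a 404.

```python
import re
from typing import Optional, Tuple

# Hypothetical mapping from API version to backend deployment.
VERSIONED_BACKENDS = {
    "v1": "http://orders-v1:8080",  # older version, kept during the migration window
    "v2": "http://orders-v2:8080",
}
_VERSION_PREFIX = re.compile(r"^/(v\d+)(/.*)?$")


def route_by_version(path: str) -> Optional[Tuple[str, str]]:
    """Return (backend, path without version prefix), or None if unroutable."""
    match = _VERSION_PREFIX.match(path)
    if not match:
        return None
    backend = VERSIONED_BACKENDS.get(match.group(1))
    if backend is None:
        return None
    return backend, match.group(2) or "/"
```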

6. Automate Deployment and Configuration:

Treat your API Gateway configuration as code. This means:

- Version Control: Store all gateway configurations (routing rules, policies, etc.) in a version control system (e.g., Git).

- CI/CD Integration: Integrate gateway deployment and configuration changes into your continuous integration and continuous deployment pipelines. This ensures consistency, reduces manual errors, and speeds up the release process.

- Infrastructure as Code (IaC): Use tools like Terraform, Ansible, or Kubernetes operators to manage the gateway's infrastructure and deployment. Even quick-start scripts like APIPark's can be integrated into automated deployment processes.
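Treating configuration as code also means it can be checked automatically before deployment. The sketch below validates a hypothetical JSON route file for missing fields and duplicate paths. The `routes`, `path`, `upstream`, and `methods` fields are assumptions, not any product's schema. A CI job could run it against the file in the repository and fail the build when the returned list is non-empty.

```python
import json
from typing import List, Set

REQUIRED_FIELDS = ("path", "upstream", "methods")


def validate_routes(config_text: str) -> List[str]:
    """Return a list of problems in a JSON route config; an empty list means it passes."""
    problems: List[str] = []
    routes = json.loads(config_text).get("routes", [])
    seen: Set[str] = set()
    for i, route in enumerate(routes):
        for field in REQUIRED_FIELDS:
            if field not in route:
                problems.append(f"route {i}: missing '{field}'")
        path = route.get("path")
        if path is not None:
            if not path.startswith("/"):
                problems.append(f"route {i}: path must start with '/'")
            if path in seen:
                problems.append(f"route {i}: duplicate path '{path}'")
            seen.add(path)
    return problems
```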

7. Consider Developer Experience:

The API Gateway primarily serves client applications. A good gateway enhances, rather than hinders, the client developer experience:

- Clear Documentation: Ensure the APIs exposed through the gateway are well-documented.

- Consistent Interfaces: Strive for consistent URL structures, error formats, and authentication mechanisms across your APIs.

- Self-Service: If possible, provide a developer portal (which APIPark also offers) where developers can explore APIs, subscribe, and manage their API keys.

- Meaningful Errors: Return clear and actionable error messages from the gateway when requests fail, helping developers debug issues quickly.
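One way to make gateway errors consistent and machine-readable is the Problem Details format standardized in RFC 9457 (which obsoletes RFC 7807), served with the `application/problem+json` media type. The helper below is a minimal sketch; the function name and example values are illustrative.

```python
import json
from typing import Dict, Optional, Tuple


def problem_response(status: int, title: str, detail: str,
                     type_uri: str = "about:blank",
                     instance: Optional[str] = None) -> Tuple[int, Dict[str, str], str]:
    """Build an RFC 9457 problem-details error: (status, headers, JSON body)."""
    body = {"type": type_uri, "title": title, "status": status, "detail": detail}
    if instance is not None:
        body["instance"] = instance  # identifies this specific occurrence
    headers = {"Content-Type": "application/problem+json"}
    return status, headers, json.dumps(body)
```

For example, a request rejected by rate limiting could return `problem_response(429, "Too Many Requests", "Rate limit of 100 requests per minute exceeded.")`. This gives every client the same error shape, whichever backend sits behind the route.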

By embracing these best practices, organizations can fully leverage the power of an API Gateway to build highly available, secure, performant, and easily manageable microservices architectures that drive innovation and deliver exceptional user experiences.

Conclusion

The journey through the core concepts of an API Gateway reveals it to be far more than a simple proxy or a fancy load balancer. It stands as a pivotal architectural pattern, an intelligent orchestrator that is indispensable in the intricate landscape of modern distributed systems, particularly those built on microservices. From abstracting backend complexities and centralizing critical cross-cutting concerns like security and rate limiting, to enhancing performance through caching and ensuring system resilience with advanced traffic management, the API Gateway fundamentally redefines how organizations expose and manage their digital capabilities.

We've explored its foundational definition as a unified entry point, delving into the imperative reasons for its adoption: the need for abstraction, robust security, improved scalability, simplified client development, and centralized observability. The detailed examination of its core functionalities, including sophisticated routing, stringent authentication and authorization, dynamic request/response transformations, and advanced resilience patterns, underscores its multifaceted role as a guardian, an enhancer, and a manager of an organization's API ecosystem.

Furthermore, by differentiating the API Gateway from related concepts like load balancers, reverse proxies, and service meshes, we've clarified its unique position at the edge of the system, primarily governing external interactions while complementing internal service-to-service communication. The discussion on various deployment models, from traditional centralized instances to cloud-managed services and versatile open-source solutions like APIPark, highlights the flexibility available to organizations in choosing a gateway that aligns with their specific technical and business needs, especially those leveraging AI technologies.

Ultimately, the successful implementation of an API Gateway is a strategic endeavor guided by best practices—starting simple, prioritizing high availability, investing in robust monitoring, maintaining vigilant security, managing API versions gracefully, automating operations, and prioritizing developer experience. When thoughtfully deployed and diligently managed, an API Gateway empowers organizations to unlock the full potential of their microservices architectures, fostering agility, security, and scalability. It transforms a potentially chaotic network of services into a cohesive, performant, and secure platform, paving the way for sustained innovation in an increasingly interconnected digital world. The API Gateway is not just a trend; it is a foundational pillar for resilient and future-proof digital infrastructure.

Frequently Asked Questions (FAQs)

- What is the primary difference between an API Gateway and a traditional Reverse Proxy? An API Gateway is a specialized type of reverse proxy, but it offers a significantly expanded set of functionalities tailored specifically for managing APIs. While a reverse proxy primarily forwards HTTP/HTTPS traffic and might handle basic caching or SSL termination, an API Gateway adds layers of intelligence like authentication, authorization, rate limiting, API versioning, request/response transformation, and API composition. It understands the context of API calls and manages the entire API lifecycle, acting as a smart facade for microservices.

- Why can an API Gateway improve performance despite adding an extra network hop? While an API Gateway introduces an additional hop, it often improves overall system performance through several optimizations. It can implement caching for frequently requested data, reducing the load on backend services and speeding up response times. It also performs load balancing across multiple service instances, preventing bottlenecks. Furthermore, it can transform responses, aggregate data from multiple services into a single, optimized payload, and handle connection pooling, all of which contribute to reduced latency and increased throughput for the client.

- Is an API Gateway necessary for every microservices architecture? While not strictly "necessary" for the smallest, simplest microservices deployments (where a direct client-to-service communication might initially suffice), an API Gateway quickly becomes indispensable as the number of microservices and client applications grows. Without it, managing cross-cutting concerns (security, logging, rate limiting) becomes fragmented, client-side logic becomes complex, and evolving backend services can cause significant disruption. For production-grade, scalable, and secure microservices, an API Gateway is widely considered a best practice and a critical component.

- How does an API Gateway help with API security? The API Gateway acts as the first line of defense by centralizing security enforcement. It performs authentication (verifying client identity via API keys, OAuth 2.0 access tokens, or JWTs) and authorization (checking whether the client has permission to access the requested resource). It can also enforce rate limits to curb abuse and soften application-layer denial-of-service attempts (large volumetric DDoS attacks generally require network-level protection in front of the gateway), apply IP whitelisting/blacklisting, terminate SSL/TLS connections, and even provide basic Web Application Firewall (WAF) functionality that filters out common attack patterns before they reach backend services. This consolidated security posture protects individual microservices and ensures that policies are applied consistently.

- Can an API Gateway be used with AI models and how? Yes, an API Gateway can be highly beneficial when working with AI models, especially in a microservices context. Platforms like APIPark are specifically designed as AI gateways. They can:

  - Unify AI API Formats: Standardize the invocation format across diverse AI models (e.g., models from providers such as OpenAI and Google AI, as well as custom models), simplifying client integration.

  - Manage Access & Cost: Centralize authentication and authorization, and track usage costs for each AI model.

  - Prompt Encapsulation: Let developers wrap complex prompts or chained AI calls in simple REST API endpoints.

  - Traffic Management & Caching: Apply rate limiting and caching to AI model invocations, which can be expensive and resource-intensive, to control cost and improve performance.

  - Lifecycle Management: Provide end-to-end management for AI-powered APIs, from design to deprecation.
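The "Unify AI API Formats" capability above amounts to translating one provider-neutral request into each provider's native request body. The Python sketch below illustrates the idea under stated assumptions: the `UnifiedRequest` type, the `"<provider>/<model>"` naming scheme, the `ADAPTERS` table, and the `translate` function are all hypothetical, and the two payload shapes are simplified approximations of chat-style and "contents/parts"-style model APIs, not exact provider schemas and not APIPark's actual format.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass
class UnifiedRequest:
    """Hypothetical provider-neutral request that clients send to the gateway."""
    model: str   # assumed naming scheme: "<provider>/<model name>"
    prompt: str


def to_chat_style(model: str, prompt: str) -> Dict[str, Any]:
    # Simplified chat-style body (assumption, not a full provider schema):
    # the model name travels inside the payload.
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}


def to_parts_style(model: str, prompt: str) -> Dict[str, Any]:
    # Simplified "contents/parts"-style body (assumption): in this style the model
    # is usually selected via the request URL, so `model` is not placed in the body.
    return {"contents": [{"role": "user", "parts": [{"text": prompt}]}]}


# Hypothetical mapping from provider prefix to the adapter for its payload style.
ADAPTERS: Dict[str, Callable[[str, str], Dict[str, Any]]] = {
    "openai": to_chat_style,
    "google": to_parts_style,
}


def translate(req: UnifiedRequest) -> Tuple[str, Dict[str, Any]]:
    """Split "<provider>/<model>" and build that provider's request body."""
    provider, sep, model = req.model.partition("/")
    if not sep or provider not in ADAPTERS:
        raise ValueError(f"unsupported model identifier: {req.model!r}")
    return provider, ADAPTERS[provider](model, req.prompt)


print(translate(UnifiedRequest("openai/example-model", "Summarize this ticket.")))
print(translate(UnifiedRequest("google/example-model", "Summarize this ticket.")))
```

A gateway would then send the translated body to the chosen provider's endpoint with that provider's credentials. Clients only ever see the unified shape, so switching models becomes a gateway configuration change rather than a client code change.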
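To make the reverse-proxy-versus-gateway distinction from the first answer above concrete, the following sketch uses the same path-prefix routing for both, but lets only the gateway apply an API-level policy (an API key check) before forwarding. Everything here is hypothetical and in-memory: the `ROUTES` and `API_KEYS` tables, the `X-API-Key` header name, and the functions, which return the chosen upstream URL instead of making real network calls. Routing `/v1` and `/v2` prefixes to different backends also hints at how a gateway can expose API versioning.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# Hypothetical routing table: public path prefix -> internal backend base URL.
ROUTES: Dict[str, str] = {
    "/v1/orders": "http://orders-v1.internal",
    "/v2/orders": "http://orders-v2.internal",
    "/v1/users": "http://users.internal",
}

# Hypothetical API keys accepted by the gateway, mapped to client names.
API_KEYS: Dict[str, str] = {"key-123": "mobile-app"}


@dataclass
class Request:
    path: str
    headers: Dict[str, str] = field(default_factory=dict)


def resolve_upstream(path: str) -> Optional[str]:
    """Longest-prefix match on the path; this is all a plain reverse proxy does."""
    matches = [p for p in ROUTES if path == p or path.startswith(p + "/")]
    if not matches:
        return None
    prefix = max(matches, key=len)
    return ROUTES[prefix] + path[len(prefix):]


def reverse_proxy(req: Request) -> Tuple[int, str]:
    """Forwards any request that matches a route, with no notion of the caller."""
    target = resolve_upstream(req.path)
    return (200, target) if target else (404, "no route")


def api_gateway(req: Request) -> Tuple[int, str]:
    """Same routing, but an API-level policy (API key authentication) runs first."""
    client = API_KEYS.get(req.headers.get("X-API-Key", ""))
    if client is None:
        return (401, "missing or invalid API key")
    target = resolve_upstream(req.path)
    return (200, target) if target else (404, "no route")


print(reverse_proxy(Request("/v1/orders/42")))  # (200, 'http://orders-v1.internal/42')
print(api_gateway(Request("/v1/orders/42")))    # (401, 'missing or invalid API key')
print(api_gateway(Request("/v2/orders/42", {"X-API-Key": "key-123"})))
```

The key design point is ordering: the gateway evaluates policy before routing, so unauthenticated traffic never reaches a backend, whereas the plain proxy forwards anything that matches a route.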
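The caching optimization from the performance answer above can be illustrated with a small time-to-live (TTL) cache placed in front of a backend call. This is a minimal Python sketch, assuming a hypothetical cache key made of method plus path and a hypothetical `fetch_product` backend call; the clock is injectable so that expiry can be demonstrated without actually waiting.

```python
import time
from typing import Callable, Dict, List, Tuple


class TTLCache:
    """Response cache whose entries expire `ttl` seconds after they are stored."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store: Dict[str, Tuple[float, str]] = {}

    def get_or_fetch(self, key: str, fetch: Callable[[], str]) -> str:
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]  # hit: the backend is not contacted at all
        value = fetch()  # miss or expired entry: call the backend once
        self._store[key] = (now, value)
        return value


# Hypothetical backend call; it records each invocation so hits can be counted.
backend_calls: List[str] = []


def fetch_product() -> str:
    backend_calls.append("GET /products/42")
    return '{"id": 42, "name": "widget"}'


fake_now = [0.0]
cache = TTLCache(ttl=30.0, clock=lambda: fake_now[0])
cache.get_or_fetch("GET /products/42", fetch_product)  # miss -> backend call
cache.get_or_fetch("GET /products/42", fetch_product)  # hit -> served from cache
fake_now[0] = 31.0                                      # 31 s later: entry expired
cache.get_or_fetch("GET /products/42", fetch_product)  # miss again -> backend call
print(len(backend_calls))  # 2
```

In a real gateway the cache key would usually also account for request headers that change the response (for example, the caller's identity or `Accept-Language`); otherwise one client's data could be served to another.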
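Rate limiting, mentioned in the security answer above, is commonly implemented with a token bucket: each client's bucket refills at a fixed rate up to a maximum burst size, and a request is allowed only if a token is available. This Python sketch assumes one bucket per API key; the class names, the keys, and the rate and capacity values are illustrative rather than taken from any particular gateway, and the clock is injectable for testing.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Token bucket: `rate` tokens are added per second, up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start full so short bursts are allowed
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class RateLimiter:
    """Keeps one bucket per client key (e.g. an API key), as a gateway might."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.buckets: Dict[str, TokenBucket] = {}

    def allow(self, client_key: str) -> bool:
        bucket = self.buckets.get(client_key)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[client_key] = bucket
        return bucket.allow()


fake_now = [0.0]
limiter = RateLimiter(rate=1.0, capacity=3.0, clock=lambda: fake_now[0])
print([limiter.allow("key-123") for _ in range(4)])  # [True, True, True, False]
print(limiter.allow("key-456"))                       # True: separate bucket
fake_now[0] = 1.0                                     # one second later
print(limiter.allow("key-123"), limiter.allow("key-123"))  # True False
```

When `allow` returns `False`, a gateway would typically reject the request with HTTP 429 (Too Many Requests), often with a `Retry-After` header. In a horizontally scaled gateway, the buckets would need to live in shared storage rather than in each instance's memory, or each instance would enforce only its own share of the limit.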

🚀 You can securely and efficiently call the OpenAI API through APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in about 5 minutes.

APIPark is written in Go (Golang), and its developers cite strong performance and low development and maintenance costs. You can deploy APIPark with a single command:

```shell
curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh
```

In my experience, the successful-deployment screen appears within 5 to 10 minutes. You can then log in to APIPark with your account.

Step 2: Call the OpenAI API.