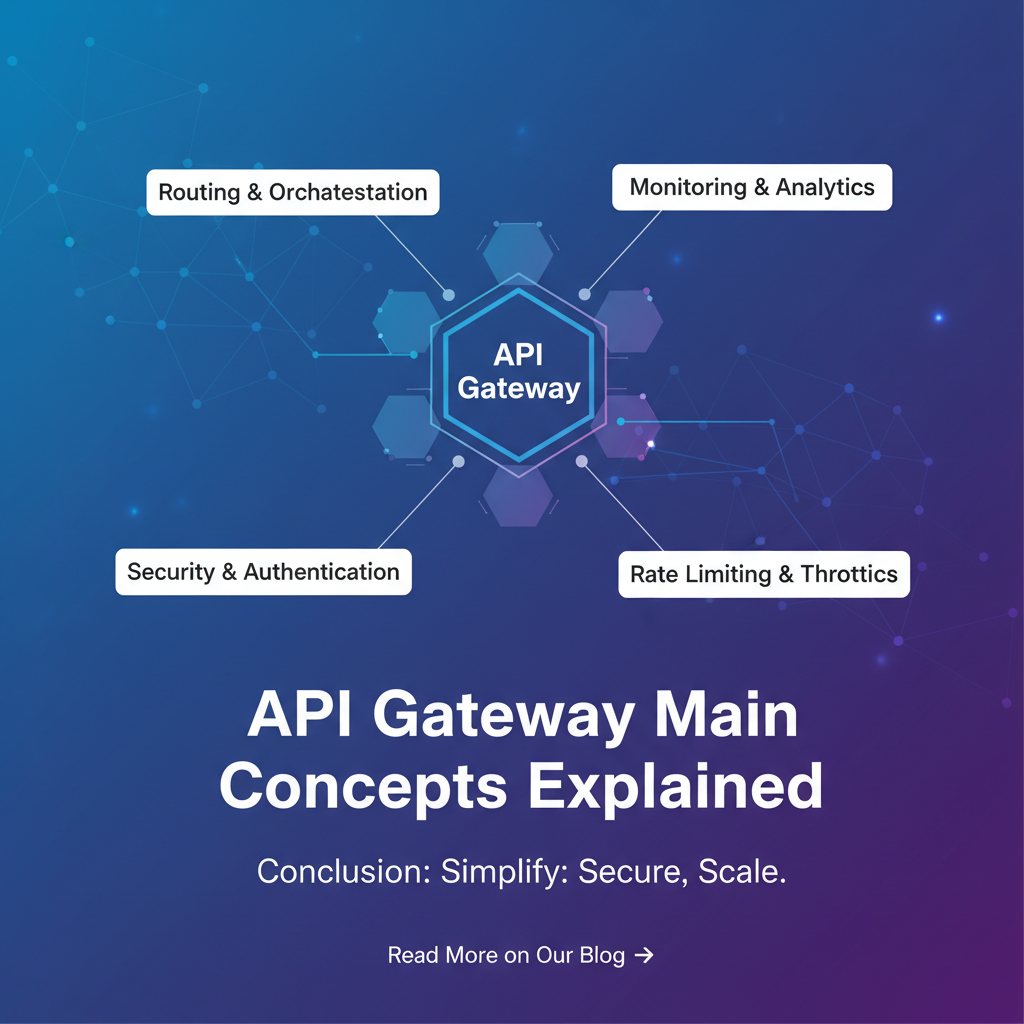

API Gateway Main Concepts Explained

In the rapidly evolving landscape of software architecture, where monolithic applications have given way to intricate constellations of microservices and distributed systems, the humble API Gateway has ascended to become an indispensable component. It stands as the vigilant sentinel, the intelligent traffic controller, and the unwavering security guard at the digital frontier of modern applications. No longer just a simple proxy, today's API Gateway is a sophisticated orchestrator, crucial for managing the complexity, ensuring the security, and enhancing the performance of vast and interconnected digital ecosystems. This comprehensive exploration delves into the fundamental concepts underpinning an API Gateway, dissecting its core functionalities, unearthing its myriad benefits, addressing the inherent challenges, and peering into its promising future.

1. The Genesis of the API Gateway – Why Do We Need It?

To truly appreciate the significance of an API Gateway, one must first understand the architectural shifts that necessitated its creation. For decades, the software world largely operated on the paradigm of monolithic applications – single, large, self-contained units of code encompassing all functionalities. While straightforward to develop and deploy in their nascent stages, these monoliths invariably became unwieldy giants as they scaled. Adding new features meant redeploying the entire application, even minor bugs could bring down the whole system, and technological innovation was stifled by the rigid boundaries of a single codebase and technology stack.

The early 2010s saw the widespread adoption of microservices architecture, a paradigm shift championed by pioneers like Netflix and Amazon. The core idea was to break down monolithic applications into smaller, independent, loosely coupled services, each responsible for a specific business capability, running in its own process, and communicating with others over a network. This shift promised a myriad of benefits: enhanced agility, allowing individual teams to develop and deploy services independently; improved scalability, enabling specific services to be scaled up or down based on demand; greater resilience, as the failure of one service would not necessarily cascade and cripple the entire system; and technological diversity, empowering teams to choose the best tools for their specific service.

However, this newfound freedom came with its own set of complexities. A single client application, perhaps a mobile app or a web frontend, that once communicated with a single monolithic backend, now faced the daunting task of interacting with dozens, or even hundreds, of individual microservices. Imagine an e-commerce application where product details are fetched from one service, user profiles from another, order history from a third, payment processing from a fourth, and recommendations from a fifth.

Initially, clients would directly call each relevant microservice. This "client-to-microservice" communication pattern, while seemingly direct, quickly revealed severe limitations:

- Increased Client-Side Complexity: The client application became burdened with the responsibility of knowing the network locations (IP addresses, ports) of multiple services, understanding different API contracts, handling service discovery, and composing responses from disparate sources. This led to "fat clients" that were difficult to maintain and evolve.

- Security Risks: Exposing all internal microservices directly to external clients created a larger attack surface. Each service would need to handle its own authentication, authorization, and rate limiting, leading to duplicated effort and potential inconsistencies in security policies.

- Network Overhead (N+1 Problem): A single user interaction might require multiple network round trips from the client to various backend services, increasing latency and consuming more client-side resources. For instance, displaying a single product page might require fetching product data, reviews, related items, and inventory from different services.

- Refactoring Challenges: As microservices evolved, their internal APIs might change. Without an intermediary, every client consuming these services would need to be updated, creating a tight coupling that negated many of the benefits of microservices.

- Cross-Cutting Concerns: Implementing features like request logging, monitoring, caching, and rate limiting uniformly across all microservices became a herculean task, leading to fragmented operational visibility and inconsistent quality of service.

It became abundantly clear that a new architectural component was needed—an intelligent intermediary that could abstract away the complexities of the microservices backend from the client, act as a unified entry point, and enforce critical cross-cutting concerns. This necessity birthed the concept of the API Gateway. It emerged as the elegant solution to bring order and efficiency to the chaotic beauty of distributed systems, transforming a direct, scattered communication model into a controlled, efficient, and secure interaction pathway.

2. Defining the API Gateway – More Than Just a Proxy

At its heart, an API Gateway is a single, unified entry point for all client requests into an API ecosystem. It acts as a reverse proxy that receives incoming API calls, processes them according to defined policies, and routes them to the appropriate backend microservices or functions. However, to simply call it a reverse proxy would be a significant understatement, akin to calling a sophisticated airport control tower merely a tall building with windows. While it performs proxying, its capabilities extend far beyond basic request forwarding.

Unlike traditional reverse proxies or load balancers, which typically operate at the transport layer (Layer 4) or perform only simple routing at the application layer (Layer 7), an API Gateway works at Layer 7 with much deeper awareness of API semantics. It understands the nuances of HTTP requests, API contracts, security tokens, and business logic. It's designed specifically for the unique demands of API traffic, offering a rich suite of functionalities that are critical for managing modern, distributed applications.

Think of the API Gateway as the central reception desk and security checkpoint for a large, sprawling corporate campus (your microservices backend). Instead of visitors (clients) trying to locate individual departments (microservices) directly, they all arrive at the main entrance. Here, the gateway performs several crucial tasks:

- Identity Verification: Checks the visitor's credentials (authentication and authorization).

- Traffic Management: Directs the visitor to the correct department based on their request, ensuring no single department is overwhelmed.

- Policy Enforcement: Ensures the visitor adheres to campus rules (rate limiting, security policies).

- Service Aggregation: If a visitor needs information from multiple departments, the reception desk might gather it all and present a single, consolidated response, saving the visitor multiple trips.

- Logging and Monitoring: Records who visited, when, and for what purpose, providing a comprehensive audit trail.

This intelligent intermediary position allows the API Gateway to centralize a wide array of cross-cutting concerns that would otherwise need to be redundantly implemented in each backend service or handled laboriously by clients. It decouples the client from the internal architecture of the microservices, creating a more robust, scalable, and secure system. It provides a consistent, simplified API interface to external consumers, shielding them from the underlying complexities, changes, and heterogeneity of the backend. In essence, it transforms a collection of individual services into a coherent and manageable API product.

3. Core Concepts and Fundamental Features of an API Gateway

The power of an API Gateway lies in its comprehensive suite of features, each designed to address specific challenges in API management. Let's delve into these core concepts with significant detail, exploring their mechanisms and implications.

3.1. Routing and Request Forwarding

The most fundamental responsibility of an API Gateway is to direct incoming requests to the correct backend service. This seemingly simple task is crucial for decoupling clients from service locations and enabling dynamic, flexible architectures.

- Mechanism: When a request arrives at the gateway, it inspects various parts of the HTTP request – the URL path, HTTP headers, query parameters, or even the HTTP method – to determine which backend service should handle it.

- Path-Based Routing: This is the most common method. For example, a request to `/users/{id}` might be routed to a "User Service," while `/products/{id}` goes to a "Product Service." The gateway might rewrite the path before forwarding it, stripping off the `/users` prefix so the backend service only sees `/` or `/{id}`.

- Host-Based Routing: Useful for multi-tenant architectures or when different subdomains correspond to different services. `api.example.com` might go to one set of services, while `admin.example.com` goes to another.

- Header-Based Routing: More advanced routing can be based on custom HTTP headers. For instance, an `X-API-Version` header could direct requests to different versions of a service, facilitating canary deployments or A/B testing.

- Dynamic Routing: Modern API Gateways can integrate with service discovery mechanisms (like Consul, Eureka, Kubernetes Service Discovery) to dynamically locate available instances of backend services. If a service scales up or down, or moves to a different network address, the gateway automatically updates its routing table without manual intervention. This is essential for highly elastic and fault-tolerant microservices environments.

- Implications: This centralization of routing logic simplifies client development, as clients only need to know the gateway's address. It also allows developers to refactor backend services, change their network locations, or even rewrite them in different languages, all without impacting external clients, as long as the API Gateway's public interface remains consistent. The gateway becomes a stable abstraction layer over an ever-changing backend.
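Path-based routing with prefix stripping can be sketched in a few lines of Python. This is only an illustration, not how any particular gateway implements it: the `Route` type, the `resolve` function, and the upstream URLs are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Route:
    prefix: str                # public path prefix matched at the gateway
    upstream: str              # hypothetical backend base URL
    strip_prefix: bool = True  # drop the prefix before forwarding


# Illustrative routing table; the hostnames are made up.
ROUTES = [
    Route("/users", "http://user-service.internal:8080"),
    Route("/products", "http://product-service.internal:8080"),
]


def resolve(path: str, routes: List[Route] = ROUTES) -> Optional[str]:
    """Return the upstream URL for a public path, or None if no route matches."""
    # Try longer prefixes first so a more specific route wins over a general one.
    for route in sorted(routes, key=lambda r: len(r.prefix), reverse=True):
        if path == route.prefix or path.startswith(route.prefix + "/"):
            rest = path[len(route.prefix):] if route.strip_prefix else path
            return route.upstream + (rest or "/")
    return None
```

With this table, `resolve("/users/42")` yields `http://user-service.internal:8080/42`, so the backend sees only `/42`, while an unknown path such as `/orders` yields `None` and would typically be answered with a 404.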

3.2. Authentication and Authorization

Security is paramount for any API, and the API Gateway acts as the primary enforcement point, centralizing and streamlining security concerns. This is one of its most critical functions, relieving individual microservices from the burden of repeatedly verifying client identities and permissions.

- Authentication: This is the process of verifying the identity of the client making the request. The API Gateway can support various authentication mechanisms:

  - API Keys: Simple tokens often included in headers or query parameters. The gateway validates the key against a repository of known, active keys.

  - OAuth2 / OpenID Connect: Industry-standard protocols for delegated authorization. The gateway can act as a resource server, validating JWTs (JSON Web Tokens) issued by an Authorization Server. It inspects the token's signature, expiration, and issuer.

  - Mutual TLS (mTLS): A higher level of security where both the client and the server (the gateway) authenticate each other using X.509 certificates.

  - Basic Authentication: Username and password, often used for internal or less sensitive APIs.

- Authorization: Once a client's identity is verified, authorization determines what that client is allowed to do.

  - Role-Based Access Control (RBAC): The gateway can inspect roles or scopes embedded in the authentication token (e.g., JWT claims) and match them against predefined access policies. For example, an "admin" role might access `/admin` endpoints, while a "user" role can only access `/user/{id}`.

  - Attribute-Based Access Control (ABAC): More granular authorization based on various attributes of the user, resource, and environment.

- Policy Enforcement: The gateway applies these security policies uniformly across all incoming requests. If a request fails authentication or authorization, the gateway immediately rejects it, often with a 401 Unauthorized or 403 Forbidden status, preventing unauthorized access from even reaching the backend services.

- Implications: Centralizing authentication and authorization at the gateway significantly reduces the attack surface and ensures consistent security enforcement. It frees backend service developers from implementing these complex security mechanisms, allowing them to focus on core business logic. Furthermore, it simplifies credential management and key rotation processes. For robust security management, platforms like APIPark offer robust authentication and authorization features, enabling enterprises to manage access granularly, integrating seamlessly with various identity providers and ensuring that only legitimate and authorized requests reach sensitive backend services.
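The role check and the 401/403 distinction described above might look roughly like the following Python sketch. It assumes the token has already been verified (signature, expiration, issuer) and its claims decoded into a dictionary. The `POLICIES` table, the `roles` claim name, and the `authorize` function are hypothetical.

```python
from typing import Optional

# Hypothetical policy table: path prefix -> roles allowed to call it.
POLICIES = {
    "/admin": {"admin"},
    "/user": {"admin", "user"},
}


def authorize(claims: Optional[dict], path: str) -> int:
    """Return the HTTP status the gateway would apply: 200 (forward), 401, or 403."""
    if claims is None:
        # No valid token was presented, so the caller is unauthenticated.
        return 401
    roles = set(claims.get("roles", []))
    for prefix, allowed in POLICIES.items():
        if path == prefix or path.startswith(prefix + "/"):
            # Authenticated, but the role may still be insufficient.
            return 200 if roles & allowed else 403
    # Deny by default when no policy covers the path.
    return 403
```

Denying by default is a deliberate choice in this sketch: a path that nobody wrote a policy for is rejected instead of being silently exposed.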

3.3. Rate Limiting and Throttling

To protect backend services from overload, abuse, and denial-of-service (DoS) attacks, API Gateways implement rate limiting and throttling.

- Rate Limiting: This feature restricts the number of requests a client can make to an API within a specific time window. If a client exceeds this limit, subsequent requests are rejected, usually with a 429 Too Many Requests status code, until the window resets.

  - Fixed Window: A straightforward approach where requests are counted within a fixed time interval (e.g., 100 requests per hour). All requests within that hour count towards the limit, regardless of when they occur.

  - Sliding Window: A more flexible approach that tracks requests over a moving time window, often by maintaining a log of recent request timestamps. This avoids the "burst problem" at the edge of fixed windows.

  - Leaky Bucket / Token Bucket: These algorithms offer smoother control over request rates. The leaky bucket allows requests to "leak out" at a steady rate, while the token bucket grants tokens at a fixed rate, with requests only processed if a token is available.

- Throttling: While often used interchangeably with rate limiting, throttling usually implies a more dynamic adjustment of limits, often based on system load or client tiers. For instance, premium subscribers might have higher rate limits than free-tier users.

- Purpose:

  - Resource Protection: Prevents a single client from monopolizing server resources, ensuring fair access for all users.

  - Cost Control: Helps manage infrastructure costs by preventing excessive usage that might trigger auto-scaling events or consume expensive third-party API credits.

  - Abuse Prevention: Deters malicious activities like brute-force attacks or data scraping.

- Implications: Rate limiting is critical for maintaining service availability and stability. It provides a defensive barrier, ensuring that even under heavy load or attack, the core backend services can continue to operate reliably for legitimate users. By offloading this responsibility from individual services, the gateway simplifies their development and improves their efficiency.
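As an illustration of the token bucket algorithm mentioned above, here is a minimal single-process sketch in Python. The class and parameter names are assumptions made for the example. A production gateway would usually keep one bucket per client and often store the state in a shared store, which is not shown here.

```python
import time


class TokenBucket:
    """Tokens refill at `rate` per second up to `capacity`; each request spends one."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock              # injectable so the bucket can be tested
        self.tokens = float(capacity)   # start full, allowing an initial burst
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Credit the tokens earned since the last call, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False                    # caller would answer 429 Too Many Requests
```

The capacity controls how large a burst is tolerated, while the rate sets the sustained throughput. This separation is the main reason the token bucket is often preferred over a fixed window.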

3.4. Traffic Management and Load Balancing

Beyond simply routing requests, an API Gateway actively manages the flow of traffic to optimize performance, ensure high availability, and build resilience into the system.

- Load Balancing: When multiple instances of a backend service are running (common in microservices architectures for scalability and redundancy), the gateway distributes incoming requests across these instances.

  - Algorithms: Common algorithms include:

    - Round Robin: Distributes requests sequentially to each service instance in turn.

    - Least Connections: Directs traffic to the instance with the fewest active connections, aiming to balance load more dynamically.

    - IP Hash: Routes requests from the same client IP address to the same backend instance, which can be useful for session stickiness (though often handled at other layers).

    - Weighted Load Balancing: Assigns different weights to instances, directing more traffic to more powerful or stable instances.

- Circuit Breakers: A critical resilience pattern. If a backend service becomes unhealthy or unresponsive, the gateway can "open" a circuit, preventing further requests from being sent to that failing service. Instead, it can immediately return an error or a fallback response, protecting the service from being overwhelmed and allowing it time to recover. After a configured timeout, the circuit moves to a "half-open" state, allowing a few test requests to see if the service has recovered.

- Retries and Timeouts: The gateway can be configured to automatically retry failed requests (within defined limits and often with exponential backoff) or to enforce timeouts for backend service responses. This prevents clients from waiting indefinitely for a bogged-down service and improves the overall responsiveness of the system.

- Traffic Shifting (Canary Deployments/A/B Testing): API Gateways can intelligently shift a small percentage of traffic to a new version of a service (canary deployment) or to a different variant (A/B testing) to monitor its performance and stability before rolling it out to all users. This minimizes the risk associated with new deployments.

- Implications: Robust traffic management capabilities make the system highly available and fault-tolerant. They allow for seamless scaling, graceful degradation during failures, and safe, incremental deployments of new features, all while ensuring optimal performance for end-users.
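The closed, open, and half-open states of a circuit breaker can be sketched as follows. This is a simplified, single-threaded Python illustration with hypothetical names and thresholds. Real implementations usually track failure rates over a window and limit how many trial requests pass in the half-open state.

```python
import time


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    @property
    def state(self) -> str:
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # timeout elapsed, a trial request is allowed
        return "open"

    def call(self, func, fallback):
        if self.state == "open":
            return fallback()   # fail fast without touching the backend
        try:
            result = func()
        except Exception:
            self.failures += 1
            # A failed trial in half-open, or too many failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Here `func` stands for the call to the backend and `fallback` for whatever the gateway returns instead, such as a cached response or a 503 error body.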

3.5. Request/Response Transformation

The API Gateway can modify API requests and responses on the fly, acting as a powerful mediator between disparate systems and simplifying client-side logic.

- Header Manipulation: Add, remove, or modify HTTP headers in both requests and responses. This can be used for injecting correlation IDs for tracing, adding security headers, or stripping sensitive information.

- Payload Transformation: Change the structure or content of the request or response body. This is incredibly useful for:

  - Protocol Translation: If a client expects a RESTful API but the backend service is gRPC or SOAP, the gateway can perform the necessary translation.

  - Data Format Conversion: Converting JSON to XML, or vice-versa, to accommodate different client or backend requirements.

  - Data Enrichment/Reduction: Adding additional data to a response from another internal service before sending it to the client, or stripping unnecessary data from a backend response to reduce payload size.

  - Version Translation: Allowing older clients to interact with newer backend service versions by transforming requests and responses to match the expected format.

- Query Parameter Transformation: Add, remove, or modify query parameters before forwarding a request to the backend.

- API Composition and Aggregation: Perhaps one of the most powerful transformation capabilities. A single API Gateway endpoint can trigger multiple calls to different backend services, aggregate their responses, and then compose a single, unified response tailored for the client. For example, a "Get User Dashboard" API call might trigger requests to a "User Profile Service," "Order History Service," and "Recommendation Service," combine the results, and send them back to the client in one go.

- Implications: This feature significantly simplifies client development by providing a tailored, consolidated API experience. It allows for greater flexibility in backend evolution, as internal API changes can be masked by the gateway's transformation rules, preventing breaking changes for clients. It also reduces network chattiness between client and backend, improving latency.
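The "Get User Dashboard" aggregation can be illustrated with a short Python sketch that fans out to three services in parallel and merges the results. The three `fetch_*` functions are stand-ins invented for this example. In a real gateway they would be HTTP or gRPC calls with timeouts and error handling.

```python
from concurrent.futures import ThreadPoolExecutor


# Stand-ins for backend calls; the returned data is made up for illustration.
def fetch_profile(user_id):
    return {"id": user_id, "name": "Ada"}


def fetch_orders(user_id):
    return [{"order_id": 1, "total": 25.0}]


def fetch_recommendations(user_id):
    return ["keyboard", "monitor"]


def get_user_dashboard(user_id: int) -> dict:
    """Call the three services concurrently and compose one response."""
    with ThreadPoolExecutor(max_workers=3) as pool:
        profile = pool.submit(fetch_profile, user_id)
        orders = pool.submit(fetch_orders, user_id)
        recommendations = pool.submit(fetch_recommendations, user_id)
        # result() blocks until each call finishes and re-raises its exception.
        return {
            "profile": profile.result(),
            "orders": orders.result(),
            "recommendations": recommendations.result(),
        }
```

Because the calls run concurrently, the client-visible latency is roughly that of the slowest backend call, not the sum of all three.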

3.6. Monitoring, Logging, and Analytics

Visibility into API traffic is crucial for understanding system health, troubleshooting issues, and making informed business decisions. The API Gateway serves as a central point for capturing this vital data.

- Centralized Logging: Every request passing through the gateway can be logged, capturing details such as:

  - Request timestamp, source IP, client ID.

  - Requested endpoint and HTTP method.

  - Latency (time taken by gateway and backend service).

  - HTTP status codes and response sizes.

  - Backend service details (which service instance handled the request).

  - Error messages and stack traces.

  These logs are invaluable for debugging, auditing, and security analysis.

- Metrics and Telemetry: The gateway can expose various metrics, such as:

  - Request rates (requests per second/minute).

  - Error rates (number of 4xx/5xx responses).

  - Latency percentiles (P99, P95, P50).

  - CPU, memory, and network usage of the gateway itself.

  These metrics are typically exported to monitoring systems (e.g., Prometheus, Datadog) for real-time dashboards and alerting.

- Distributed Tracing Integration: API Gateways can inject correlation IDs (like `X-Request-ID` or `traceparent` headers) into requests before forwarding them to backend services. These IDs are propagated through the entire call chain, allowing developers to trace a single request's journey across multiple microservices, providing end-to-end visibility and simplifying the diagnosis of complex distributed problems.

- Analytics: By aggregating and analyzing the collected logs and metrics, API Gateways can provide powerful insights into API usage patterns, performance trends, and potential bottlenecks. This data can inform business decisions, capacity planning, and feature prioritization.

- Implications: Centralized monitoring and logging provide a holistic view of the entire API ecosystem. It enables proactive problem detection, faster root cause analysis, and helps ensure service level agreements (SLAs) are met. Comprehensive logging and powerful data analysis are hallmarks of advanced API management solutions, and APIPark excels in this domain, providing detailed API call logs and insightful analytics to help businesses track performance and identify trends proactively, often detecting issues before they impact end-users.
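Correlation ID injection is simple enough to show directly. The Python sketch below keeps an incoming `X-Request-ID` if the client supplied one and otherwise generates a new ID. The function name is hypothetical, and the sketch covers only the simple `X-Request-ID` case, not the structured `traceparent` format.

```python
import uuid


def with_request_id(headers: dict) -> dict:
    """Return a copy of the headers that is guaranteed to carry a request ID."""
    forwarded = dict(headers)
    # HTTP header names are case-insensitive, so accept any spelling.
    has_id = any(name.lower() == "x-request-id" for name in forwarded)
    if not has_id:
        forwarded["X-Request-ID"] = uuid.uuid4().hex
    return forwarded
```

The gateway would log this ID with every entry it writes and forward it unchanged, so that log lines from different services can be joined on the same value.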

3.7. Caching

Caching at the API Gateway level is a powerful optimization technique that significantly improves performance and reduces the load on backend services.

- Mechanism: When a client requests data that is frequently accessed and does not change often, the gateway can store a copy of the response in its internal cache. Subsequent requests for the same data can then be served directly from the cache, bypassing the backend service entirely.

- Cache Policies: Gateways support various caching policies:

  - Time-to-Live (TTL): Responses are cached for a specific duration.

  - Cache-Control Headers: Honoring HTTP `Cache-Control` headers from backend services.

  - Invalidation: Mechanisms to explicitly clear cached items when underlying data changes.

- Benefits:

  - Reduced Latency: Serving responses from cache is significantly faster than making a round trip to a backend service, especially across networks.

  - Reduced Backend Load: Less traffic reaches backend services, freeing up their resources and reducing operational costs.

  - Improved Scalability: The gateway can handle a larger volume of requests without stressing the backend.

- Considerations: Careful management of cache invalidation is crucial to prevent serving stale data. Caching is best suited for idempotent `GET` requests for data that is relatively static or can tolerate slight delays in freshness.

- Implications: Caching can dramatically enhance the user experience by providing faster API responses, particularly for frequently accessed public APIs. It's an essential tool for scaling APIs efficiently and reducing infrastructure strain.
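A TTL policy with explicit invalidation can be sketched in Python as below. The class name, the cache key shape, and the in-memory dictionary are assumptions for illustration. A real gateway would also bound the cache size and respect `Cache-Control` directives, which this sketch omits.

```python
import time


class TTLCache:
    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (expires_at, response)

    def get_or_fetch(self, key, fetch):
        """Serve from cache while fresh; otherwise call `fetch` and store the result."""
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]                      # cache hit: backend is bypassed
        response = fetch()                       # cache miss or expired entry
        self._store[key] = (now + self.ttl, response)
        return response

    def invalidate(self, key):
        """Drop an entry explicitly, for example after the underlying data changed."""
        self._store.pop(key, None)
```

A key such as `("GET", "/products/1")` keeps the cache restricted to safe, repeatable reads. Anything that varies per user would need the user identity in the key as well.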

3.8. Security Policies (Beyond Authentication/Authorization)

While authentication and authorization are foundational, API Gateways offer a broader spectrum of security features to protect against various threats.

- Web Application Firewall (WAF) Capabilities: Many API Gateways incorporate WAF-like functionalities to protect against common web vulnerabilities identified by the OWASP Top 10, such as SQL injection, cross-site scripting (XSS), and security misconfigurations. They can inspect request payloads for malicious patterns and block suspicious requests.

- DDoS Protection: While dedicated DDoS protection services typically operate upstream, API Gateways can contribute by detecting and mitigating certain types of application-layer DDoS attacks through advanced rate limiting, IP blacklisting, and anomaly detection.

- Bot Detection and Mitigation: Identify and block automated bots that might be scraping data, performing credential stuffing, or launching other malicious activities.

- Input Validation: Enforce schema validation on incoming request bodies to ensure they conform to expected formats, rejecting malformed requests before they reach backend services.

- TLS/SSL Termination: Offload the computationally intensive task of encrypting and decrypting API traffic (HTTPS) from backend services to the gateway. This centralizes certificate management and improves backend service performance.

- Threat Protection: Implement various rules to detect and block threats like XML/JSON bomb attacks, oversized payloads, or invalid API request structures that could exploit vulnerabilities or consume excessive resources.

- Implications: By consolidating a comprehensive suite of security policies at the edge, the API Gateway forms a robust first line of defense, significantly enhancing the overall security posture of the API ecosystem. This centralized security simplifies compliance and reduces the likelihood of security vulnerabilities in individual microservices.
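Two of the threat-protection checks above, oversized payloads and deeply nested JSON, can be approximated with the Python sketch below. The limits and function names are hypothetical. The sketch parses the body first and measures depth afterwards, which is a simplification: a hardened gateway would bound depth during parsing.

```python
import json

MAX_BYTES = 1_000_000  # hypothetical size limit, tune per API
MAX_DEPTH = 20         # hypothetical nesting limit


def nesting_depth(value) -> int:
    """Depth of nested objects and arrays; scalars count as 0."""
    if isinstance(value, dict):
        children = value.values()
    elif isinstance(value, list):
        children = value
    else:
        return 0
    return 1 + max((nesting_depth(child) for child in children), default=0)


def check_payload(raw: bytes) -> int:
    """Return 200 if acceptable, 413 if too large, 400 if malformed or too deep."""
    if len(raw) > MAX_BYTES:
        return 413  # reject before spending any effort on parsing
    try:
        body = json.loads(raw)
    except (ValueError, RecursionError):
        return 400  # invalid JSON, bad encoding, or nesting too deep to parse
    return 400 if nesting_depth(body) > MAX_DEPTH else 200
```

Checking the size before parsing matters here, because the parse itself is the expensive step an attacker is trying to trigger.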

4. Advanced API Gateway Patterns and Considerations

As architectures mature, API Gateways often participate in more sophisticated patterns.

4.1. Backend for Frontend (BFF)

The BFF pattern proposes creating a separate API Gateway (or a set of gateways) specifically tailored for each type of client application (e.g., one for web, one for iOS, one for Android).

- Problem Solved: General-purpose APIs might not perfectly suit the specific data requirements or interaction patterns of different client applications. A mobile app might need a much more condensed data set than a web application, or a different sequence of API calls. Directly exposing a generic API to diverse clients forces clients to over-fetch data, make multiple requests, or perform complex data transformations themselves.

- Mechanism: Each BFF acts as an API Gateway optimized for a particular client. It fetches data from various backend microservices, aggregates and transforms it, and then presents it in a format precisely matching that client's needs.

- Benefits:

  - Reduced Client-Side Complexity: Clients become "thin" as they no longer need to compose multiple responses or perform extensive data transformations.

  - Optimized Performance: Tailored responses minimize payload size and the number of network requests from the client.

  - Decoupling: Changes in backend services only affect the relevant BFF, not all clients.

  - Team Autonomy: Frontend teams can own and evolve their respective BFFs independently, matching their deployment cycles to their client applications.

- Implications: While adding another layer of gateway, the BFF pattern significantly improves developer experience for frontend teams and optimizes the performance and maintainability of client applications, especially in environments with diverse client types.

4.2. Service Mesh vs. API Gateway

A common point of confusion arises when discussing API Gateways and service meshes, as both handle traffic, security, and observability in microservices. However, they address different concerns and are typically complementary.

- API Gateway (North-South Traffic): Primarily deals with north-south traffic – external traffic originating from clients outside the microservices cluster, flowing into the cluster. Its focus is on API exposition, public API management, and protecting the edge of the system. It handles concerns like authentication for external clients, rate limiting for public consumers, API versioning, and client-specific API aggregation.

- Service Mesh (East-West Traffic): Primarily deals with east-west traffic – internal traffic flowing between microservices within the cluster. It's embedded within the application infrastructure, usually as sidecar proxies next to each service instance (e.g., Istio, Linkerd). Its focus is on service-to-service communication, providing advanced traffic control (retries, timeouts, circuit breakers for internal calls), mTLS for internal service authentication, fine-grained access policies between services, and deep observability of inter-service communication.

- Complementary Roles: They are not mutually exclusive; in fact, they often work together. The API Gateway acts as the first line of defense and the entry point for external consumers, passing authenticated and authorized requests into the service mesh. The service mesh then takes over, managing the internal communication between microservices, ensuring resilience and security for east-west traffic.

- Implications: Understanding the distinct roles of API Gateways and service meshes is crucial for designing robust and scalable microservices architectures. The gateway handles the external interface, while the service mesh governs the internal fabric, together forming a powerful control plane for distributed systems.

4.3. Deployment Strategies

The way an API Gateway is deployed can vary significantly based on architectural preferences, scalability requirements, and the chosen technology.

- In-Process vs. Out-of-Process:

  - In-Process: The gateway functionality is implemented as a library or module within each microservice itself. This avoids an extra network hop but distributes gateway logic, making centralized management harder. Less common for full API Gateway functionality.

  - Out-of-Process: The API Gateway is deployed as a separate, independent service or cluster of services, distinct from the backend microservices. This is the predominant model, allowing for centralized configuration, scaling, and management of gateway functionalities.

- Monolithic Gateway vs. Distributed Gateways:

  - Monolithic Gateway: A single, central API Gateway handles all API traffic for the entire application. Simpler to manage initially but can become a bottleneck and a single point of failure if not properly scaled.

  - Distributed/Layered Gateways: Multiple API Gateways are deployed. This could be a combination of a central gateway for public APIs and smaller, domain-specific gateways or BFFs for different consumer types, or even one per team/business domain for greater autonomy. This improves resilience and reduces the blast radius of failures.

- Cloud-Native Deployments: In containerized environments like Kubernetes, API Gateways are often deployed as containerized applications, leveraging Kubernetes features for scaling, self-healing, and service discovery. Ingress controllers in Kubernetes can act as a basic API Gateway, but dedicated API Gateways offer far richer functionality.

- Edge Deployment: For low-latency requirements or to handle APIs from IoT devices, gateway functionalities might be pushed closer to the network edge, leveraging content delivery networks (CDNs) or edge computing platforms.

- Implications: The choice of deployment strategy impacts scalability, resilience, latency, and management overhead. A well-thought-out deployment strategy ensures the API Gateway itself doesn't become a bottleneck or a single point of failure, enabling it to effectively support the underlying microservices architecture. For quick deployment, platforms like APIPark offer single-command-line installation, allowing developers to set up a robust API Gateway rapidly and efficiently, often within minutes, which is beneficial for both development and production environments.


5. The Benefits of Implementing an API Gateway

The adoption of an API Gateway brings a multitude of advantages that profoundly impact the efficiency, security, and scalability of modern application architectures.

5.1. Centralized Control and Management

By acting as a single entry point, the API Gateway consolidates the management of policies, rules, and configurations that apply to all API traffic. Instead of scattering rate limits, security policies, or logging configurations across dozens of microservices, these concerns can be defined, enforced, and audited in one place. This centralization significantly reduces operational overhead, ensures consistency, and simplifies compliance with regulatory requirements. It provides a "single pane of glass" view into the API landscape, making it easier for administrators to monitor, update, and govern APIs.

5.2. Enhanced Security

Security is arguably the most compelling benefit. The API Gateway acts as a crucial perimeter defense, protecting backend services from direct exposure to potentially malicious external traffic. It centralizes authentication, authorization, and various threat protection mechanisms, ensuring that every request is thoroughly vetted before it reaches the core business logic. This consolidation means security experts can focus their efforts on hardening a single, well-defined component rather than distributing security concerns across numerous services, thereby reducing the attack surface and minimizing the risk of security vulnerabilities. It also facilitates the implementation of advanced security features like mTLS and sophisticated WAF rules across the entire API estate.

5.3. Improved Performance and Scalability

Through intelligent features like caching, load balancing, and rate limiting, API Gateways significantly boost the performance and scalability of the overall system. Caching reduces latency for frequently accessed data and offloads backend services. Load balancing distributes traffic efficiently across service instances, preventing bottlenecks and ensuring high availability. Rate limiting protects services from being overwhelmed, allowing them to maintain stable performance even under peak loads. By handling these cross-cutting concerns, the gateway allows backend services to focus solely on their core business logic, making them leaner, faster, and more efficient.
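To make the rate-limiting idea concrete, here is a minimal sketch of a token-bucket limiter, one common way to cap request rates per client. The class names, the per-client keying, and the injectable `clock` parameter are illustrative assumptions, not the API of any particular gateway product.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Permits bursts of up to `capacity` requests, refilled at `refill_rate` tokens per second."""

    def __init__(self, capacity: float, refill_rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock
        self.tokens = capacity          # start with a full bucket
        self.last_refill = clock()

    def allow(self) -> bool:
        # Add the tokens earned since the last call, capped at capacity.
        now = self.clock()
        elapsed = now - self.last_refill
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last_refill = now
        # Spend one token per request if one is available.
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class RateLimiter:
    """Keeps one bucket per client identifier (hypothetically, an API key)."""

    def __init__(self, capacity: float, refill_rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock
        self.buckets: Dict[str, TokenBucket] = {}

    def allow(self, client_id: str) -> bool:
        bucket = self.buckets.get(client_id)
        if bucket is None:
            bucket = TokenBucket(self.capacity, self.refill_rate, self.clock)
            self.buckets[client_id] = bucket
        return bucket.allow()
```

A gateway would typically answer a rejected request with HTTP 429. This sketch keeps state in one process; a clustered gateway would likely need a shared store for the counters.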

5.4. Simplified Client Development

Clients (web browsers, mobile apps, other services) no longer need to know the intricate topology of the backend microservices. They interact with a single, consistent API Gateway interface, simplifying their codebase and reducing development complexity. The gateway can aggregate responses from multiple services, transform data formats, and tailor responses for specific client types (e.g., via the BFF pattern), effectively shielding clients from internal architectural changes. This abstraction makes client applications easier to build, maintain, and evolve, accelerating time-to-market for new features.
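The aggregation described above can be sketched as a single gateway handler that fans out to several backends concurrently and merges the results. The three `fetch_*` functions are hypothetical stand-ins for real service calls and return canned data so the example is self-contained.

```python
import asyncio
from typing import Any, Dict, List


async def fetch_user(user_id: str) -> Dict[str, Any]:
    # Hypothetical stand-in for a call to a user service.
    await asyncio.sleep(0)
    return {"id": user_id, "name": "Ada"}


async def fetch_orders(user_id: str) -> List[Dict[str, Any]]:
    # Hypothetical stand-in for a call to an order service.
    await asyncio.sleep(0)
    return [{"order_id": "o-1", "total": 42.0}]


async def fetch_recommendations(user_id: str) -> List[str]:
    # Hypothetical stand-in for a call to a recommendation service.
    await asyncio.sleep(0)
    return ["sku-7", "sku-9"]


async def get_dashboard(user_id: str) -> Dict[str, Any]:
    """Issue the three backend calls concurrently and merge them into one response."""
    user, orders, recommendations = await asyncio.gather(
        fetch_user(user_id),
        fetch_orders(user_id),
        fetch_recommendations(user_id),
    )
    return {"user": user, "orders": orders, "recommendations": recommendations}
```

Calling `asyncio.run(get_dashboard("u-1"))` returns one combined payload, so the client makes a single round trip instead of three. A real handler would also need timeouts and a policy for partial failures.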

5.5. Greater Agility and Maintainability

The API Gateway decouples the client from the backend, creating a robust abstraction layer. This means that backend microservices can be refactored, updated, scaled, or even replaced without impacting client applications, as long as the gateway's public API contract remains stable. This architectural flexibility promotes agility, allowing development teams to iterate faster, experiment with new technologies, and deploy changes more frequently. The ability to manage API versions through the gateway also simplifies the process of rolling out updates and deprecating old APIs gracefully.

5.6. Better Observability

As a central traffic interceptor, the API Gateway provides an unparalleled vantage point for comprehensive monitoring, logging, and analytics. It can capture detailed logs for every request, expose vital performance metrics, and inject correlation IDs for distributed tracing. This centralized data collection offers a holistic view of API usage, performance, and errors across the entire ecosystem. This enhanced observability is invaluable for identifying bottlenecks, diagnosing issues, understanding user behavior, and making data-driven decisions about API evolution and infrastructure capacity planning.
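As a rough illustration of correlation-ID injection, the sketch below makes sure every forwarded request carries an ID and writes one structured log line per request. The header name `X-Correlation-ID` and the log fields are assumptions; conventions differ between organizations and tracing systems.

```python
import logging
import uuid
from typing import Dict

# Assumed header name; many teams use a different one.
CORRELATION_HEADER = "X-Correlation-ID"

logger = logging.getLogger("gateway")


def with_correlation_id(headers: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of the headers that is guaranteed to carry a correlation ID.

    Simplification: the lookup is case-sensitive, whereas real HTTP header
    names are case-insensitive.
    """
    forwarded = dict(headers)
    if not forwarded.get(CORRELATION_HEADER):
        forwarded[CORRELATION_HEADER] = str(uuid.uuid4())
    return forwarded


def log_request(method: str, path: str, status: int,
                latency_ms: float, headers: Dict[str, str]) -> None:
    """Emit one key=value log line that can be joined with backend logs by ID."""
    logger.info(
        "method=%s path=%s status=%d latency_ms=%.1f correlation_id=%s",
        method, path, status, latency_ms, headers.get(CORRELATION_HEADER, "-"),
    )
```

An ID supplied by the caller is preserved, so a request that already belongs to a trace keeps its identity across hops.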

6. Challenges and Best Practices for API Gateway Implementation

While the benefits are substantial, implementing an API Gateway is not without its challenges. Thoughtful design and adherence to best practices are crucial for a successful deployment.

6.1. Challenges

- Single Point of Failure (SPOF): Ironically, the API Gateway's strength as a central entry point can also be its greatest vulnerability. If the gateway goes down, all client communication with the backend services is interrupted.

  - Mitigation: This risk is mitigated through high-availability deployments, typically involving multiple gateway instances running in a cluster behind a load balancer, spread across different availability zones.

- Performance Bottleneck: As all API traffic flows through the gateway, it can become a performance bottleneck if not properly designed and scaled. The overhead of processing each request (authentication, routing, transformations) can introduce latency.

  - Mitigation: Use performant gateway software (e.g., those written in Go, Rust, or optimized C++), ensure sufficient computing resources (CPU, memory, network I/O), implement efficient caching, and continuously monitor performance metrics. Horizontal scaling of gateway instances is essential.

- Increased Latency: Even with efficient processing, an API Gateway adds at least one additional network hop between the client and the backend service, inherently increasing latency compared to direct service calls.

  - Mitigation: Optimize gateway configuration, minimize unnecessary processing steps, leverage caching aggressively, and strategically place gateway instances geographically closer to consumers or backend services.

- Complexity of Configuration and Management: A feature-rich API Gateway can have a complex configuration, especially when dealing with many APIs, advanced routing rules, security policies, and transformations. Managing this configuration, keeping it in sync with service changes, and deploying updates requires robust tools and processes.

  - Mitigation: Embrace Infrastructure as Code (IaC) for gateway configuration, use automated deployment pipelines, and leverage API management platforms that provide user-friendly interfaces or declarative configuration options.

- Overhead of Maintenance: Like any critical system component, the API Gateway requires ongoing maintenance, including software updates, security patching, and monitoring.

  - Mitigation: Choose a gateway solution with good community support or commercial backing, automate maintenance tasks where possible, and allocate dedicated resources for its upkeep.

6.2. Best Practices

- Design for High Availability and Fault Tolerance: Always deploy the API Gateway in a highly available configuration with redundancy, automatic failover, and self-healing capabilities. Use multiple instances behind a robust load balancer and distribute them across different data centers or cloud availability zones.

- Monitor Thoroughly: Implement comprehensive monitoring and alerting for the API Gateway itself and the traffic passing through it. Track key metrics like request rates, error rates, latency, and resource utilization. Set up alerts for anomalies and failures. Integrate with distributed tracing to gain end-to-end visibility.

- Automate Deployment and Configuration: Treat the API Gateway configuration as code. Use CI/CD pipelines to automate the deployment of gateway instances and the management of their configurations. This ensures consistency, repeatability, and reduces the risk of human error.

- Adopt a "Shift-Left" Security Approach: Integrate security testing and policy validation early in the development lifecycle. While the gateway is the enforcement point, ensuring backend services are inherently secure is equally important.

- Start Simple and Iterate: Don't try to implement every possible gateway feature on day one. Start with the most critical functionalities (routing, basic authentication) and gradually add more advanced features like rate limiting, caching, and transformations as needs arise.

- Choose the Right Gateway for Your Needs: The market offers a wide array of API Gateway solutions, from open-source options like Kong, Envoy, and APIPark, to commercial products from cloud providers (AWS API Gateway, Azure API Management, Google Cloud Apigee) and enterprise vendors. Consider factors like:

  - Features: Does it support the specific routing, security, and transformation capabilities you need?

  - Scalability and Performance: Can it handle your anticipated traffic volume with acceptable latency?

  - Deployment Flexibility: Can it be deployed in your preferred environment (on-prem, cloud, Kubernetes)?

  - Ecosystem and Integrations: How well does it integrate with your existing monitoring, logging, and identity management systems?

  - Cost and Support: What are the licensing costs, and what level of commercial support is available?

  - Open Source vs. Commercial: Open-source options like APIPark provide transparency, community-driven innovation, and often lower initial costs, making them excellent choices for many organizations, especially startups and those with specific customization needs. Commercial versions, however, typically offer more advanced features, professional support, and SLAs which are critical for large enterprises.

- Implement Effective API Versioning: Use the gateway to manage API versions, allowing multiple versions of an API to coexist while clients gradually migrate. This ensures backward compatibility and smooth transitions during API evolution.

- Decouple API Contracts from Backend Implementations: The gateway acts as a facade. Ensure that changes in backend service implementations do not break the public API contract exposed by the gateway.
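The versioning practice can be illustrated with a small routing sketch that picks an upstream from a version prefix in the URL path. Path-prefix versioning is only one of several schemes (headers and media types are also used), and the upstream addresses below are hypothetical placeholders.

```python
from typing import Dict, Optional, Tuple

# Hypothetical upstream addresses; a real deployment would likely use service discovery.
VERSION_UPSTREAMS: Dict[str, str] = {
    "v1": "http://orders-legacy.internal:8080",
    "v2": "http://orders.internal:8080",
}


def resolve_upstream(path: str) -> Optional[Tuple[str, str]]:
    """Map '/v2/orders/17' to (upstream base URL, '/orders/17').

    Returns None when the first path segment is not a known version,
    which a gateway could translate into a 404 response.
    """
    parts = path.lstrip("/").split("/", 1)
    upstream = VERSION_UPSTREAMS.get(parts[0])
    if upstream is None:
        return None
    # Strip the version prefix so the backend sees its own native path.
    remainder = "/" + parts[1] if len(parts) > 1 else "/"
    return upstream, remainder
```

Because clients only ever see the `/v1` and `/v2` prefixes, the service behind either entry can be replaced without changing the public contract.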

7. The Future of API Gateways

The API Gateway continues to evolve rapidly, driven by emerging architectural patterns, advanced technologies, and increasing demands for intelligence and automation.

- AI-Driven Management: The integration of Artificial Intelligence and Machine Learning promises to revolutionize API Gateway capabilities. AI can analyze vast amounts of API traffic data to predict load patterns, dynamically adjust rate limits, detect and mitigate sophisticated security threats in real-time, and even optimize API performance by learning optimal routing or caching strategies. This could lead to self-optimizing gateways that require minimal human intervention. For instance, an AI-powered gateway could automatically identify anomalous traffic spikes, distinguish legitimate surges from DDoS attacks, and react accordingly without explicit rule configuration.

- More Intelligent Traffic Routing: Future gateways will leverage advanced analytics and machine learning to make even smarter routing decisions. This could include routing based on geographical proximity, real-time service health, cost optimization (e.g., routing to cheaper cloud regions), or even user-specific profiles to deliver personalized experiences. Dynamic routing will become even more sophisticated, adapting to an ever-changing environment in real-time.

- Integration with Serverless Functions and Event-Driven Architectures: As serverless computing and event-driven architectures gain prominence, API Gateways are adapting to become native frontends for functions-as-a-service (FaaS) platforms. They will seamlessly integrate with event queues, message brokers, and serverless functions, enabling the creation of highly scalable and responsive event-driven APIs. This will simplify the exposure of ephemeral, function-based logic to external consumers.

- API Productization and Monetization: API Gateways are increasingly central to treating APIs as products. They will offer more robust features for API monetization, including flexible billing models (pay-per-call, tiered subscriptions), developer portals for API discovery and subscription, and granular usage analytics that feed into commercial strategies. They will empower businesses to more effectively package, market, and sell their digital services via APIs.

- Edge Computing and IoT Integration: As computing shifts closer to data sources, particularly in IoT and edge computing scenarios, API Gateways will need to be deployed at the very edge of the network. This involves lightweight, highly performant gateways capable of operating in resource-constrained environments, providing local processing, offline capabilities, and secure communication for edge devices.

- Standardization and Interoperability: Efforts towards greater standardization (e.g., OpenAPI Specification, GraphQL) will continue to influence API Gateway design, promoting better interoperability and simplifying API definition and consumption. Gateways will likely offer more out-of-the-box support for these standards, reducing the need for custom transformations.

The API Gateway is no longer a niche tool; it's a foundational pillar of modern digital infrastructure. Its evolution reflects the growing complexity and demands placed on API-driven businesses. As systems become more distributed, intelligent, and interconnected, the API Gateway will continue to play an increasingly critical role in making these intricate ecosystems manageable, secure, and performant.

Conclusion

In the intricate tapestry of modern distributed systems and microservices architectures, the API Gateway stands as an essential, sophisticated component. It is the intelligent front-door, the vigilant security guard, and the efficient traffic controller for all API interactions, abstracting the daunting complexity of backend services from the simplicity desired by client applications. From centralizing critical security policies like authentication and authorization to ensuring system resilience through rate limiting and load balancing, and from simplifying client-side development through transformations and aggregations to providing invaluable observability via logging and analytics, the API Gateway addresses a myriad of challenges inherent in highly distributed environments.

The journey from monolithic applications to agile microservices created a new set of communication and management complexities. The API Gateway emerged as the elegant solution, acting as a powerful mediator and orchestrator. It ensures consistent service quality, fortifies security postures, enhances performance, and accelerates the development lifecycle, allowing organizations to leverage the full power of their API-driven strategies.

While its implementation presents challenges such as potential bottlenecks and configuration complexity, these can be effectively mitigated through careful design, adherence to best practices, and the selection of appropriate technologies, including versatile open-source platforms like APIPark or robust commercial offerings. As the digital landscape continues its inexorable march towards greater intelligence, automation, and distributed computing, the API Gateway will continue to evolve, integrating cutting-edge AI capabilities and adapting to emerging architectural patterns. Far from being a mere intermediary, it is an indispensable strategic asset, empowering businesses to build, manage, and scale their API ecosystems with unprecedented efficiency and confidence, thereby truly unlocking the immense power of their digital services.

Key API Gateway Features Comparison

| Feature Category | Key Functionality | Primary Benefit | Example Use Case |
|---|---|---|---|
| Traffic Management | Routing, Load Balancing, Circuit Breakers, Retries | Ensures requests reach correct, healthy services. | Distributing requests for a "Product Service" across 5 instances. |
| Security | Authentication (API Keys, OAuth), Authorization (RBAC) | Protects backend services from unauthorized access. | Validating a JWT before allowing access to user profile data. |
| Performance | Rate Limiting, Throttling, Caching | Prevents overload, reduces latency, optimizes resource use. | Limiting a user to 100 requests/minute, caching popular product listings. |
| Transformation | Request/Response Modification, Aggregation | Simplifies client interaction, adapts to backend changes. | Combining user details, order history, and recommendations into one API call. |
| Observability | Logging, Monitoring, Analytics, Distributed Tracing | Provides insights into API usage, performance, errors. | Tracking average latency for GET /orders endpoint over time. |
| API Versioning | Allows multiple API versions to coexist | Smooth migration for clients, backward compatibility. | Routing v1 calls to legacy service and v2 to new service. |

5 Frequently Asked Questions (FAQs)

1. What is the fundamental difference between an API Gateway and a traditional Load Balancer or Reverse Proxy? While an API Gateway can perform functions similar to a load balancer or reverse proxy (like routing and traffic distribution), it works with awareness of API semantics and offers significantly more intelligence. Traditional load balancers primarily distribute traffic at the transport layer (Layer 4) or apply basic HTTP rules at Layer 7. A reverse proxy forwards client requests to backend servers. An API Gateway, however, understands API contracts, can perform complex transformations on request and response payloads, enforces granular security policies (authentication, authorization specific to APIs), handles rate limiting, caches API responses, and often aggregates calls from multiple backend services into a single response. It is specifically designed for API management in a microservices context, adding policy enforcement and orchestration logic, whereas load balancers and reverse proxies are more generic infrastructure components.

2. Is an API Gateway always necessary in a microservices architecture? While an API Gateway is highly recommended and almost universally adopted in mature microservices architectures, it's not strictly "always" necessary, especially for very small, greenfield projects with a limited number of services and clients. For simple internal APIs with known clients, direct client-to-service communication might suffice initially. However, as the number of microservices grows, the number of clients increases, or as external consumers need to access APIs, the complexities of security, routing, monitoring, and client-side orchestration quickly become unmanageable without a gateway. The benefits of centralized control, enhanced security, simplified client development, and improved observability typically outweigh the initial setup overhead for most real-world scenarios.

3. How does an API Gateway handle security, particularly authentication and authorization? An API Gateway centralizes security enforcement at the edge of the system. For authentication, it can validate various credentials, such as API keys, OAuth2 tokens (e.g., JWTs), or even perform mutual TLS (mTLS). It verifies the client's identity and checks the validity of their provided credentials. Once authenticated, for authorization, the gateway inspects the client's permissions (e.g., roles or scopes within a JWT) against predefined policies to determine if they are authorized to access the requested resource or perform the desired action. If a request fails either authentication or authorization, the gateway immediately rejects it, preventing unauthorized traffic from reaching backend services and thus significantly reducing the attack surface.
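The two steps in this answer, authentication followed by authorization, can be sketched with a hand-rolled check of an HS256-signed JWT using only the standard library. This is an illustration under simplifying assumptions (a shared secret, a space-separated `scope` claim, and a `sign_token` helper that exists only for the demo). A production gateway would normally rely on a maintained JWT library and often on asymmetric keys.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Any, Dict, Optional


def _b64url_decode(segment: str) -> bytes:
    # JWT segments omit base64 padding, so restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def sign_token(claims: Dict[str, Any], secret: bytes) -> str:
    """Demo-only helper that builds an HS256-signed token."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    signature = hmac.new(secret, f"{header}.{payload}".encode("ascii"), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(signature)}"


def authenticate(token: str, secret: bytes,
                 now: Optional[float] = None) -> Optional[Dict[str, Any]]:
    """Return the claims if the signature and expiry check out, otherwise None."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        if not isinstance(header, dict) or header.get("alg") != "HS256":
            return None
        expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode("ascii"),
                            hashlib.sha256).digest()
        # Constant-time comparison avoids leaking signature bytes through timing.
        if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
            return None
        claims = json.loads(_b64url_decode(payload_b64))
    except ValueError:
        # Covers malformed structure, bad base64, and invalid JSON.
        return None
    if not isinstance(claims, dict):
        return None
    exp = claims.get("exp")
    current = time.time() if now is None else now
    if isinstance(exp, (int, float)) and current >= exp:
        return None
    return claims


def authorize(claims: Dict[str, Any], required_scope: str) -> bool:
    """Check that the token grants the scope required by the requested route."""
    return required_scope in str(claims.get("scope", "")).split()
```

A gateway would typically answer a failed `authenticate` with 401 and a failed `authorize` with 403, so the backend never sees the rejected request.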

4. What is the difference between an API Gateway and a Service Mesh, and do I need both? An API Gateway and a service mesh address different, albeit related, concerns in a microservices architecture. An API Gateway primarily manages "north-south" traffic – requests coming from outside your microservices cluster (e.g., from web browsers, mobile apps) into your services. It focuses on external APIs, client-specific optimizations, and perimeter security. A service mesh (e.g., Istio, Linkerd) primarily manages "east-west" traffic – communication between microservices within your cluster. It focuses on internal service-to-service communication, providing advanced traffic control, resilience (retries, circuit breakers), security (mTLS), and observability for internal calls. In many complex microservices environments, you will likely need both: the API Gateway handles the external interface and security for inbound requests, and the service mesh ensures secure, resilient, and observable communication among the internal services.

5. Can an API Gateway become a performance bottleneck, and how is this mitigated? Yes, if not properly implemented and scaled, an API Gateway can indeed become a performance bottleneck, as all incoming API traffic must pass through it. This extra hop and the processing involved (authentication, routing, transformations) can introduce latency. Mitigation strategies include:

- Horizontal Scaling: Deploying multiple instances of the API Gateway behind a load balancer to distribute the load.

- High-Performance Software: Utilizing gateway solutions written in performant languages or optimized for high throughput (e.g., APIPark is engineered for high performance).

- Caching: Aggressively caching API responses for frequently accessed, non-volatile data to reduce backend calls.

- Efficient Configuration: Minimizing complex transformations and policy evaluations that are not strictly necessary.

- Resource Provisioning: Ensuring the gateway instances have ample CPU, memory, and network I/O resources.

- Continuous Monitoring: Actively monitoring gateway performance metrics to identify and address bottlenecks proactively.

By combining these strategies, API Gateways can be designed to handle massive traffic volumes without compromising overall system performance.
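Of the strategies listed, caching is the easiest to show in a few lines. The sketch below is a minimal in-memory cache with a fixed time-to-live; the class name, the cache key format, and the injectable `clock` are assumptions made for illustration. Real gateways usually also honor HTTP cache headers and bound the cache size.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Stores responses for `ttl_seconds` so repeated requests skip the backend."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        # Maps a cache key to (expiry timestamp, cached value).
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get_or_fetch(self, key: str, fetch: Callable[[], Any]) -> Any:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]             # still fresh: serve from cache
        value = fetch()                 # missing or expired: call the backend
        self._entries[key] = (now + self.ttl, value)
        return value
```

A key such as `"GET /products"` works for responses that are identical for every caller; per-user data would need the user identity in the key, or no caching at all.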

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.