Build & Orchestrate Microservices: A Definitive How-To

The landscape of software development is in perpetual motion, constantly evolving to meet the escalating demands for speed, scalability, and resilience. For decades, the monolithic architecture, where all components of an application are tightly coupled and run as a single service, served as the bedrock of enterprise software. While straightforward to develop and deploy in their nascent stages, these behemoths invariably encountered significant hurdles as applications scaled and business requirements shifted. Updates became arduous, deployments risky, and innovation often stifled by the sheer complexity and interconnectedness of the codebase. This inherent rigidity frequently led to a bottleneck effect, where the failure of one small component could cascade into a complete system outage, and scaling individual parts of the application became an impossibility, forcing the entire monolith to be scaled, often inefficiently.

In response to these systemic limitations, the microservices architectural style emerged as a transformative paradigm, promising a more agile, resilient, and scalable approach to building complex applications. Instead of a single, indivisible unit, microservices decompose an application into a collection of small, independent services, each operating within its own bounded context, responsible for a specific business capability, and communicating through lightweight mechanisms, most commonly via well-defined APIs. This shift empowers development teams to work autonomously, choose diverse technology stacks, and deploy services independently, accelerating the pace of innovation and drastically reducing the blast radius of potential failures. The ability to isolate components not only enhances fault tolerance but also streamlines maintenance and upgrades, as changes to one service do not necessitate redeployment of the entire application.

However, embracing microservices is not without its own intricate set of challenges. The simplicity of a single deployment is replaced by the complexity of a distributed system. Orchestrating these numerous, disparate services—managing their communication, ensuring data consistency, handling security, and maintaining operational visibility—becomes a paramount concern. Without a meticulous strategy for coordination and management, the promise of microservices can quickly devolve into a chaotic "distributed monolith," negating many of its touted benefits. This is precisely where the strategic deployment of an API gateway and sophisticated orchestration patterns become indispensable. An API gateway acts as the single entry point for all client requests, abstracting the internal architecture of the microservices and providing a unified façade for external consumers. It handles cross-cutting concerns like authentication, rate limiting, and request routing, significantly simplifying client-side logic and bolstering the overall security posture of the system.

This comprehensive guide is designed to be your definitive "how-to" for successfully building and orchestrating microservices. We will delve into the fundamental principles, best practices, and essential tools required to navigate the complexities of this architectural style. From designing individual services and crafting robust APIs to deploying resilient communication patterns and implementing sophisticated operational strategies, we will cover the entire lifecycle. Our exploration will emphasize how a well-structured gateway and thoughtful orchestration can transform a collection of independent services into a cohesive, high-performing, and easily manageable system, ultimately enabling organizations to harness the full potential of microservices to deliver exceptional value.

Chapter 1: Understanding the Microservices Paradigm

The journey into microservices begins with a profound understanding of its core tenets and why it represents a significant departure from traditional architectural styles. This paradigm shift is not merely about breaking down an application, but about rethinking how software is conceived, developed, deployed, and operated.

1.1 What are Microservices?

At its heart, a microservice is a small, autonomous service designed to perform a single, well-defined business function. Imagine an e-commerce application traditionally built as one massive codebase. In a microservices world, this monolith might be broken down into distinct services such as a "User Management Service," a "Product Catalog Service," an "Order Processing Service," a "Payment Service," and a "Notification Service." Each of these services is an independent unit.

Key characteristics that define microservices include:

- Autonomous and Decoupled: Each microservice operates independently and is loosely coupled with others. This means a change in one service ideally does not necessitate changes or redeployment in others, as long as the API contract between them remains stable. This autonomy empowers teams to develop, test, and deploy their services without complex coordination overhead.

- Bounded Contexts: Derived from Domain-Driven Design (DDD), each microservice typically owns a specific "bounded context." This refers to a logical boundary within which a particular domain model is defined and consistent. For instance, a "User" might have different attributes and behaviors in a "Billing Context" compared to a "Social Media Context." Each microservice encapsulates its own domain model, preventing domain logic leakage and reducing cognitive load.

- Single Responsibility Principle: Similar to its application in object-oriented programming, this principle suggests that each microservice should have one, and only one, reason to change. This ensures that services remain small, focused, and easier to understand and maintain. If a service starts accumulating too many responsibilities, it's often a sign that it needs further decomposition.

- Independent Deployment: Perhaps one of the most compelling advantages, microservices can be deployed independently of each other. This drastically reduces the risk associated with deployments, allows for more frequent releases, and enables teams to iterate faster on their specific components without affecting the entire application. A bug fix or a new feature in the "Product Catalog Service" can go live without waiting for or impacting the "Payment Service."

- Polyglot Persistence and Programming: Microservices enable teams to choose the best tool for the job. One service might use a relational database like PostgreSQL for structured data, while another might opt for a NoSQL database like MongoDB for flexible document storage, and yet another might use a graph database for complex relationships. Similarly, different services can be written in different programming languages (e.g., Java, Python, Go, Node.js), allowing teams to leverage specialized expertise or choose languages best suited for a particular task.

- Communication via Lightweight Mechanisms: Services communicate primarily through well-defined APIs, typically using HTTP/REST, gRPC, or asynchronous messaging queues. This contract-based communication is crucial for maintaining loose coupling.

In contrast, monolithic applications are single, large codebases where all modules are tightly integrated and share a single database. While initially simpler, they often become a development bottleneck, scaling challenge, and innovation inhibitor as they grow. Deploying a new feature, even a small one, often requires redeploying the entire application, leading to slower release cycles and higher risks.

1.2 Why Choose Microservices?

The decision to adopt microservices is a strategic one, often driven by the desire to overcome the inherent limitations of monolithic architectures and to unlock new levels of organizational agility and technical capability.

Advantages of Microservices:

- Enhanced Scalability: Individual microservices can be scaled independently based on their specific demand. If the "Product Catalog Service" experiences a surge in traffic, only that service needs additional resources, rather than the entire application. This optimizes resource utilization and cost.

- Improved Resilience and Fault Isolation: The failure of one microservice does not necessarily bring down the entire application. Due to their independent nature, a well-designed microservices architecture can isolate failures, allowing other services to continue operating. This significantly improves the overall fault tolerance and reliability of the system.

- Faster Development and Deployment Cycles: Small, focused teams can work on individual services concurrently, leading to faster development. Independent deployments mean features can be released more frequently and with less coordination overhead, accelerating time-to-market.

- Technology Heterogeneity (Polyglot Stacks): Teams are free to choose the most appropriate programming language, framework, and data store for each service. This fosters innovation, allows teams to leverage specialized expertise, and prevents vendor lock-in, enabling them to pick the optimal technology for a specific problem domain.

- Team Autonomy and Empowerment: Microservices promote smaller, cross-functional teams that "own" a service end-to-end, from development to deployment and operation. This ownership fosters greater accountability, expertise, and job satisfaction, leading to more efficient and motivated teams.

- Easier Maintenance and Understanding: Smaller codebases are inherently easier to understand, maintain, and refactor. New developers can onboard faster by focusing on a single service without needing to grasp the entirety of a massive monolith.

- Greater Innovation and Experimentation: The ability to develop and deploy services independently allows for quicker experimentation with new technologies and features. If an experiment fails, it can be rolled back or discarded without impacting the core application.

Disadvantages and Challenges (Briefly):

While the benefits are substantial, microservices introduce their own set of complexities that must be carefully managed:

- Distributed System Complexity: Managing a multitude of interconnected services introduces challenges like distributed logging, tracing, monitoring, and debugging. The network becomes a critical and often unreliable component.

- Data Consistency: Maintaining data consistency across multiple services, each with its own database, is significantly more complex than in a single-database monolith. Distributed transactions are difficult to implement and often lead to eventual consistency patterns.

- Operational Overhead: Deploying and managing numerous services requires robust automation for infrastructure provisioning, deployment, scaling, and monitoring. This often necessitates a mature DevOps culture and sophisticated tooling.

- Inter-service Communication Overhead: Network latency, serialization/deserialization, and potential for network failures add overhead to communication between services.

- Testing Complexity: Testing a distributed system is more involved than testing a monolith. Integration tests become more complex, and end-to-end tests need to account for multiple service interactions.

Despite these challenges, for organizations operating at scale, requiring high agility, and dealing with complex business domains, the strategic advantages of microservices often outweigh the inherent complexities, provided they are managed with a well-thought-out architectural and operational strategy.

Chapter 2: Designing Your Microservices Architecture

The success of a microservices adoption heavily relies on sound design principles. Rushing into breaking down an application without a clear strategy often leads to what's colloquially known as a "distributed monolith"—a system that suffers from the complexities of distribution without reaping the benefits of true microservices. This chapter outlines crucial design considerations.

2.1 Bounded Contexts and Domain-Driven Design (DDD)

Domain-Driven Design (DDD) is a software development approach that places the primary focus on the core business domain and domain logic. When applied to microservices, DDD becomes an indispensable tool for defining the boundaries of individual services, ensuring they are cohesive, loosely coupled, and aligned with distinct business capabilities.

The cornerstone of DDD for microservices is the concept of Bounded Contexts. A bounded context is a logical boundary within which a particular domain model is defined and consistent. Within this boundary, terms, entities, and values have specific meanings. For example, in an e-commerce system:

- A "Product" in the Product Catalog Context might include attributes like name, description, SKU, and image URLs.

- The same "Product" in the Inventory Context might only care about stock levels and location.

- And in the Shipping Context, a "Product" might only refer to its weight and dimensions for logistics calculations.

Each of these contexts represents a distinct responsibility and often translates directly into an individual microservice. By carefully identifying and defining these bounded contexts, you ensure that:

- Clear Ownership: Each service owns its domain model, preventing ambiguity and conflicts that arise when a single model is shared across different concerns.

- Reduced Coupling: Changes to the domain model in one context do not inadvertently affect another, as long as the public API contracts between services remain stable.

- Simplified Reasoning: Developers working within a service only need to understand the domain model relevant to their bounded context, reducing cognitive load.

Strategic DDD focuses on high-level design, identifying the core domains, subdomains (core, supporting, generic), and mapping them to bounded contexts. It also involves defining the relationships between these contexts, known as "Context Maps," which illustrate how services interact (e.g., Shared Kernel, Customer/Supplier, Conformist, Anti-Corruption Layer).

Tactical DDD then delves into the specifics within each bounded context, using patterns like Aggregates, Entities, Value Objects, and Repositories to structure the domain model effectively. By applying DDD principles, you lay a solid foundation for designing microservices that are truly autonomous and aligned with business needs, rather than arbitrary code divisions.

2.2 Service Decomposition Strategies

Deciding how to break down a monolithic application or design new services from scratch is one of the most critical and challenging aspects of microservices architecture. Poor decomposition can lead to tightly coupled services, complex transactions, and an operational nightmare. Several strategies can guide this process:

- Decomposition by Business Capability: This is arguably the most common and effective strategy. Services are organized around distinct business capabilities or functions that an organization performs. For example, in a retail system, you might have capabilities like "Order Management," "Customer Management," "Product Catalog," "Payment Processing," and "Shipping." Each capability can become a separate microservice. This aligns services with business value streams, making them more stable over time as business capabilities tend to change less frequently than technical concerns.

- Decomposition by Subdomain: Closely related to bounded contexts, this strategy involves identifying the core subdomains of your business (e.g., "Authentication," "Reporting," "Recommendation Engine") and making each a microservice. This is particularly useful for complex domains where different subdomains have highly specialized logic and data.

- Decomposition by Data: While less common as a primary strategy, sometimes services are decomposed based on the data they primarily manage. For instance, a "User Data Service" might manage all user-related information, regardless of which other services might consume it. This can be problematic if not carefully managed, as it can lead to tight coupling between services and the database. It's often better to view this as a consequence of capability-based decomposition rather than its primary driver, where each service owns its data.

- Decomposition by Transaction Boundary: Services should ideally encapsulate operations that form a single, atomic business transaction. If an operation spans multiple services, it indicates a potential issue with the service boundary or necessitates complex distributed transaction patterns like Sagas. Decomposing services to minimize cross-service transactions simplifies data consistency challenges.

- Strangler Fig Pattern: When migrating from a monolith, the Strangler Fig pattern is invaluable. Instead of a "big bang" rewrite, new functionality is built as microservices, and existing functionality is gradually extracted and re-implemented as microservices, while the monolith continues to operate. An API gateway (discussed in Chapter 4) is crucial here to route traffic either to the old monolith or the new microservices, slowly "strangling" the monolith until it can be retired.

The goal is to create services that are small enough to be easily managed by a single team, yet large enough to provide meaningful business value without excessive inter-service communication. It's an iterative process, and initial decompositions may need refinement as the system evolves.

2.3 Communication Patterns

Effective communication is the lifeblood of a microservices architecture. Without it, individual services are isolated islands, unable to contribute to the overall application functionality. Choosing the right communication pattern is crucial for maintaining performance, resilience, and loose coupling.

Broadly, communication patterns can be categorized as synchronous or asynchronous:

- Synchronous Communication:For synchronous calls, several resilience patterns are critical: * Timeouts: Prevent services from waiting indefinitely for a response. * Retries: Automatically re-attempt failed requests (with backoff strategies) for transient errors, but be cautious with non-idempotent operations. * Circuit Breakers: Prevent an application from repeatedly trying to invoke a service that is currently unavailable, thus protecting both the failing service and the calling service from cascading failures. When a service fails repeatedly, the circuit breaker "opens," and subsequent requests immediately fail without attempting to call the service. After a timeout, it transitions to a "half-open" state to test if the service has recovered. * Bulkheads: Isolate resources (e.g., thread pools, connection pools) used for calling different services, preventing a failure in one call from consuming all resources and affecting other calls.

- REST (Representational State Transfer): The most prevalent pattern, using HTTP as the underlying protocol. Services expose resources, and clients interact with them using standard HTTP methods (GET, POST, PUT, DELETE).

- Pros: Simplicity, ubiquity, statelessness (easy to scale), well-understood.

- Cons: Tight coupling in time (caller waits for a response), potential for cascading failures, latency.

- gRPC (Google Remote Procedure Call): A high-performance, open-source RPC framework. It uses Protocol Buffers for defining service contracts and HTTP/2 for transport.

- Pros: Language-agnostic, efficient (binary serialization, HTTP/2 multiplexing), built-in code generation for clients/servers, supports streaming.

- Cons: Steeper learning curve, requires HTTP/2, client-side tooling might be less mature than REST.

- REST (Representational State Transfer): The most prevalent pattern, using HTTP as the underlying protocol. Services expose resources, and clients interact with them using standard HTTP methods (GET, POST, PUT, DELETE).

- Asynchronous Communication:

- Message Queues (e.g., RabbitMQ, Apache Kafka, AWS SQS): Services communicate by sending and receiving messages via an intermediary broker. A sender publishes a message to a queue/topic, and one or more receivers consume it later.

- Pros: Decoupling (sender doesn't need to know about receiver), resilience (messages can be retried or stored), scalability (consumers can be added/removed independently), supports event-driven architectures.

- Cons: Increased complexity (message broker management), eventual consistency, difficult to trace message flow, requires idempotent consumers.

- Event Streaming (e.g., Apache Kafka): A specialized form of message queue that provides a durable, ordered, and replayable log of events. It's often used for event sourcing and real-time data pipelines.

- Pros: High throughput, fault-tolerant, provides historical context of events, supports complex data processing patterns.

- Cons: Higher operational complexity, steep learning curve.

- Message Queues (e.g., RabbitMQ, Apache Kafka, AWS SQS): Services communicate by sending and receiving messages via an intermediary broker. A sender publishes a message to a queue/topic, and one or more receivers consume it later.

Choosing between synchronous and asynchronous depends on the use case. Synchronous is suitable for requests that require an immediate response (e.g., retrieving user profile). Asynchronous is ideal for background tasks, notifications, long-running processes, and when strong decoupling is desired (e.g., order fulfillment, sending emails). Often, a hybrid approach is used, leveraging the strengths of both.

2.4 Data Management in Microservices

Data management is one of the most significant complexities introduced by microservices. In a monolith, a single, shared database simplifies transactions and ensures immediate consistency. In microservices, each service typically owns its data, leading to a distributed data landscape that requires careful planning.

- Database per Service Pattern:

- Principle: Each microservice manages its own private database. No other service should directly access another service's database. All communication must happen through the service's public API.

- Benefits:

- Loose Coupling: Services are truly autonomous and independent regarding their data schema and technology choice. A change in one service's database doesn't impact others.

- Polyglot Persistence: Enables choosing the best database technology (relational, NoSQL, graph, etc.) for a service's specific needs, optimizing performance and development.

- Scalability: Each database can be scaled independently.

- Challenges:

- Distributed Transactions: Operations that logically span multiple services (and thus multiple databases) become challenging. Traditional ACID transactions are not possible across service boundaries.

- Data Duplication and Consistency: Data needed by multiple services might be duplicated across their databases, leading to eventual consistency models.

- Handling Distributed Transactions (Saga Pattern):

- When an operation requires changes across multiple services, a Saga pattern is often employed to maintain data consistency in a distributed system. A Saga is a sequence of local transactions, where each transaction updates data within a single service and publishes an event that triggers the next step in the Saga.

- If any local transaction fails, the Saga executes a series of compensating transactions to undo the changes made by preceding successful transactions, ensuring atomicity across the distributed system.

- Types of Sagas:

- Choreography: Services orchestrate the Saga themselves by exchanging events. Each service listens for events and decides the next step. This is decentralized but can be harder to trace and debug in complex Sagas.

- Orchestration: A dedicated Saga orchestrator (a separate service) explicitly manages and coordinates the flow of the Saga. It sends commands to participant services and reacts to their responses/events. This provides a clearer view of the Saga's state and flow but introduces a potential single point of failure (though this can be mitigated).

- Eventual Consistency:

- Given the distributed nature of data, immediate consistency across all services is often impractical or impossible. Instead, microservices typically embrace eventual consistency, where data changes propagate through the system, and all replicas eventually become consistent.

- This requires designing services to be resilient to temporary inconsistencies and potentially using techniques like retries, idempotency, and conflict resolution strategies.

- For example, in an e-commerce system, when an order is placed, the "Order Service" might immediately persist the order, then publish an "OrderPlaced" event. The "Inventory Service" might then consume this event to update stock levels, and the "Payment Service" might process payment. These updates happen asynchronously, meaning for a brief period, the order might exist, but inventory might not yet be decremented.

Effective data management in microservices demands a shift in mindset from strict ACID compliance across the entire system to a more nuanced approach leveraging eventual consistency, robust eventing mechanisms, and patterns like Sagas to ensure business integrity. This strategic design ensures that while services are autonomous, the overall system functions as a coherent whole.

Chapter 3: Building Individual Microservices

Once the architectural canvas is sketched with bounded contexts and communication patterns, the next crucial step involves bringing individual microservices to life. This chapter focuses on the practical aspects of developing these discrete units, emphasizing best practices for their internal structure and external interactions.

3.1 Technology Stack Choices

One of the most appealing advantages of microservices is the freedom to choose the "best tool for the job," fostering a polyglot environment. This allows teams to select the programming language, framework, and data storage solution that is most suitable for a specific service's requirements, team expertise, and performance characteristics.

- Programming Languages:

- Java (Spring Boot): Extremely popular for enterprise-grade microservices due to its robust ecosystem, strong community support, extensive libraries, and frameworks like Spring Boot that simplify development and deployment with convention-over-configuration. It's well-suited for high-throughput, mission-critical services.

- Node.js (Express.js, NestJS): Ideal for I/O-bound, real-time applications and services requiring rapid development. Its asynchronous, event-driven nature makes it highly efficient for handling many concurrent connections, common in web-facing APIs.

- Python (Flask, Django): Favored for data science, machine learning, and rapid prototyping services. Its readability and extensive libraries make it excellent for services involving complex algorithms or data processing.

- Go (Gin, Echo): Gaining significant traction for its performance, concurrency models (goroutines), and static typing. It's often chosen for high-performance network services, infrastructure components, and areas where low-latency is critical.

- C# (.NET Core): Microsoft's cross-platform framework, offering strong performance and a familiar environment for developers coming from the .NET ecosystem, suitable for a wide range of enterprise applications.

- Frameworks: Beyond the language, specific frameworks streamline microservice development by providing common functionalities like dependency injection, routing, security, and configuration management. Examples include Spring Boot for Java, Express.js or NestJS for Node.js, Flask or Django for Python, Gin or Echo for Go, and .NET Core for C#.

- Polyglot Persistence: This refers to the practice of using different types of databases for different services.

- Relational Databases (PostgreSQL, MySQL, Oracle): Excellent for services requiring strong ACID properties, complex querying, and highly structured data (e.g., Order Management, User Accounts).

- NoSQL Databases (MongoDB, Cassandra, Redis, DynamoDB): Offer flexibility, horizontal scalability, and often better performance for specific data access patterns.

- Document Databases (MongoDB): For flexible, schema-less data (e.g., Product Catalog with varying attributes).

- Key-Value Stores (Redis, DynamoDB): For high-speed caching, session management, and simple data retrieval.

- Column-Family Stores (Cassandra): For massive amounts of structured data across many nodes, often used in time-series or IoT applications.

- Graph Databases (Neo4j): For data with complex relationships (e.g., social networks, recommendation engines).

The key is to select the most appropriate technology for each service based on its specific functional and non-functional requirements, rather than imposing a single stack across the entire architecture. This optimizes performance, reduces development friction, and allows teams to leverage their strengths.

3.2 API Design Best Practices

The API is the public contract of your microservice, defining how other services and clients interact with it. Well-designed APIs are crucial for loose coupling, ease of integration, and long-term maintainability. Poorly designed APIs can create significant friction and hinder the benefits of microservices.

- RESTful Principles: Adhere to the principles of REST (Representational State Transfer) for HTTP-based APIs:

- Resources: Design your APIs around resources (e.g.,

/products,/orders,/users), which are nouns, not verbs. - Standard HTTP Methods (Verbs): Use

GETfor retrieving resources,POSTfor creating new resources,PUTfor complete updates,PATCHfor partial updates, andDELETEfor removing resources. - Statelessness: Each request from a client to a server must contain all the information needed to understand the request. The server should not store any client context between requests.

- Idempotency: Repeatedly sending the same request should have the same effect.

GET,PUT, andDELETEare inherently idempotent.POSTis generally not, unless specifically designed to be. - Clear URIs: Use clear, hierarchical, and plural nouns for resource paths (e.g.,

/api/v1/products/123). Avoid actions in URIs (e.g.,/api/v1/getProductById). - Status Codes: Use appropriate HTTP status codes (2xx for success, 4xx for client errors, 5xx for server errors) to provide meaningful feedback to callers.

- Hypermedia as the Engine of Application State (HATEOAS): While often debated for its complexity, the idea is to include links in API responses to guide clients on possible next actions, making the API more discoverable and adaptable.

- Resources: Design your APIs around resources (e.g.,

- API Versioning: As services evolve, their APIs might change, potentially introducing breaking changes. Versioning helps manage these changes and ensures backward compatibility for existing clients.

- URI Versioning: Include the version number in the URI (e.g.,

/api/v1/products). This is explicit and easy to understand but can lead to URL proliferation. - Header Versioning: Pass the version in a custom HTTP header (e.g.,

X-API-Version: 1). Cleaner URIs but less discoverable. - Query Parameter Versioning: Include the version as a query parameter (e.g.,

/api/products?version=1). Can be confusing if not handled carefully. It's crucial to document your versioning strategy and provide clear migration paths.

- URI Versioning: Include the version number in the URI (e.g.,

- API Documentation (OpenAPI/Swagger): Comprehensive and up-to-date documentation is vital for microservices. Tools like OpenAPI (formerly Swagger) allow you to define your APIs in a language-agnostic, machine-readable format. This definition can then be used to:

- Generate interactive documentation (Swagger UI).

- Generate client SDKs in various languages.

- Perform automated testing.

- Enforce API contracts at build time. Automating documentation generation from code or API definitions ensures accuracy and reduces manual effort.

- Clear Contracts and Schema Enforcement: Define clear input and output schemas for your APIs, preferably using tools like JSON Schema or Protocol Buffers. This ensures that data exchanged between services adheres to expected formats, preventing integration issues. Services should validate incoming requests against these schemas.

- Error Handling: Provide consistent and informative error responses. Include error codes, clear messages, and potentially links to documentation for more details. Avoid exposing internal server details in error messages.

- Security: Incorporate security measures from the ground up, including authentication, authorization, input validation, and secure communication (HTTPS).

By adhering to these best practices, you create robust, maintainable, and easily consumable APIs that serve as strong contracts between your microservices, enabling true loose coupling.

3.3 Data Storage & Access

Each microservice typically owns its data, meaning it is responsible for persisting and accessing its specific data stores. This independence offers flexibility but also introduces design considerations.

- Choosing Appropriate Databases: As discussed under polyglot persistence, the choice of database should align with the specific data patterns and consistency requirements of the service.

- A service managing transactional financial data might opt for a traditional relational database (e.g., PostgreSQL, MySQL) to leverage ACID properties and mature SQL querying capabilities.

- A service handling large volumes of user activity logs or catalog data with flexible schemas might prefer a document database (e.g., MongoDB, Couchbase).

- A caching service or one requiring lightning-fast key-value lookups would benefit from in-memory stores (e.g., Redis, Memcached). The key is to avoid a "one-size-fits-all" approach and instead carefully evaluate the needs of each service.

- ORM vs. Direct SQL:

- Object-Relational Mappers (ORMs): Frameworks like Hibernate (Java), SQLAlchemy (Python), Entity Framework (.NET), and Sequelize (Node.js) map database tables to objects in your programming language.

- Pros: Speeds up development, abstracts database specifics, provides an object-oriented view of data, helps prevent SQL injection (if used correctly).

- Cons: Can introduce performance overhead for complex queries, "impedance mismatch" between object model and relational model, can hide underlying SQL performance issues.

- Direct SQL/Query Builders: Writing raw SQL queries or using lightweight query builders (e.g., JDBCTemplate in Spring, Knex.js in Node.js).

- Pros: Full control over query optimization, often better performance for complex or highly specific queries, avoids ORM overhead.

- Cons: More verbose, requires deeper SQL knowledge, potential for SQL injection if not careful with parameter binding, less abstraction. The choice often comes down to the complexity of the data access patterns. For simple CRUD operations, ORMs are often efficient. For complex reports, specific performance tuning, or when working with NoSQL databases, direct access or specialized client libraries are often preferred.

- Object-Relational Mappers (ORMs): Frameworks like Hibernate (Java), SQLAlchemy (Python), Entity Framework (.NET), and Sequelize (Node.js) map database tables to objects in your programming language.

- Data Migration: As services evolve, their data schemas will change. Managing these schema migrations independently for each service is critical. Tools like Flyway (Java) or Alembic (Python) help manage database migrations in a version-controlled, automated manner. Each service should manage its own schema migrations without interfering with other services.

- Data Seeding and Test Data: For development and testing, services need access to relevant data. Strategies for data seeding (populating databases with initial data) and generating realistic test data become important to ensure reliable testing of individual services.

By carefully considering data storage and access mechanisms for each microservice, development teams can optimize performance, maintain autonomy, and ensure the long-term health of their data-driven applications.

3.4 Security Considerations

Security is not an afterthought in microservices; it must be ingrained into the design and development process from the very beginning. The distributed nature of microservices introduces new attack vectors and complexities compared to a monolithic application.

- Authentication and Authorization:

- Authentication: Verifying the identity of a user or another service.

- OAuth 2.0: A standard for delegated authorization, often used with OpenID Connect (OIDC) for authentication. A dedicated Identity Provider (IdP) service (e.g., Keycloak, Auth0, Okta) handles user authentication and issues access tokens (e.g., JWTs) to clients.

- JWT (JSON Web Tokens): Self-contained tokens used to securely transmit information between parties. Once issued by the IdP, a JWT can be passed with each request to microservices. Services can validate the token cryptographically without needing to call the IdP for every request, improving performance.

- Authorization: Determining what an authenticated user or service is allowed to do.

- Role-Based Access Control (RBAC): Assigning roles (e.g., "admin," "user," "guest") to users and defining permissions for each role.

- Attribute-Based Access Control (ABAC): More granular, using attributes of the user, resource, and environment to make access decisions.

- Policy Enforcement: Typically, the API gateway (discussed in Chapter 4) handles initial authentication and can enforce some coarse-grained authorization policies. Fine-grained authorization, however, is best handled within each individual microservice, as it understands its specific resources and business logic.

- Service-to-Service Authentication: Microservices also need to authenticate with each other. This can be achieved using client credentials flow in OAuth 2.0, mutual TLS (mTLS), or dedicated API keys for internal calls, ensuring only authorized services can communicate.

- Authentication: Verifying the identity of a user or another service.

- Input Validation:

- Every service must rigorously validate all incoming data, whether from external clients or other internal services. This prevents common vulnerabilities like SQL injection, cross-site scripting (XSS), and buffer overflows.

- Validation should occur at the edge (e.g., API gateway) for basic sanity checks and within the receiving microservice for business logic-specific validation.

- Secure Communication (HTTPS/TLS):

- All communication, both external (client to API gateway) and internal (service-to-service), should be encrypted using HTTPS/TLS. This protects data in transit from eavesdropping and tampering.

- Mutual TLS (mTLS) provides an even stronger security posture for service-to-service communication by requiring both the client and server to authenticate each other using certificates.

- Principle of Least Privilege:

- Each microservice, and the underlying infrastructure it runs on, should only have the minimum necessary permissions to perform its function. For example, a service that only reads product data should not have write access to the product database.

- Secrets Management:

- Sensitive information (database credentials, API keys, encryption keys) should never be hardcoded or stored directly in code repositories. Use dedicated secrets management solutions like HashiCorp Vault, AWS Secrets Manager, Azure Key Vault, or Kubernetes Secrets (with proper encryption).

- Logging and Monitoring for Security Events:

- Implement comprehensive logging of security-related events (failed login attempts, unauthorized access attempts, data breaches). Centralized logging and monitoring tools (discussed in Chapter 5) are crucial for detecting and responding to security incidents effectively.

- Regular Security Audits and Penetration Testing:

- Periodically audit your microservices for vulnerabilities, conduct penetration testing, and perform code reviews with a security focus.

By integrating these security practices into every stage of development and operation, organizations can build a robust, secure microservices architecture that protects sensitive data and maintains trust.

APIPark is a high-performance AI gateway that allows you to securely access the most comprehensive LLM APIs globally on the APIPark platform, including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more.Try APIPark now! 👇👇👇

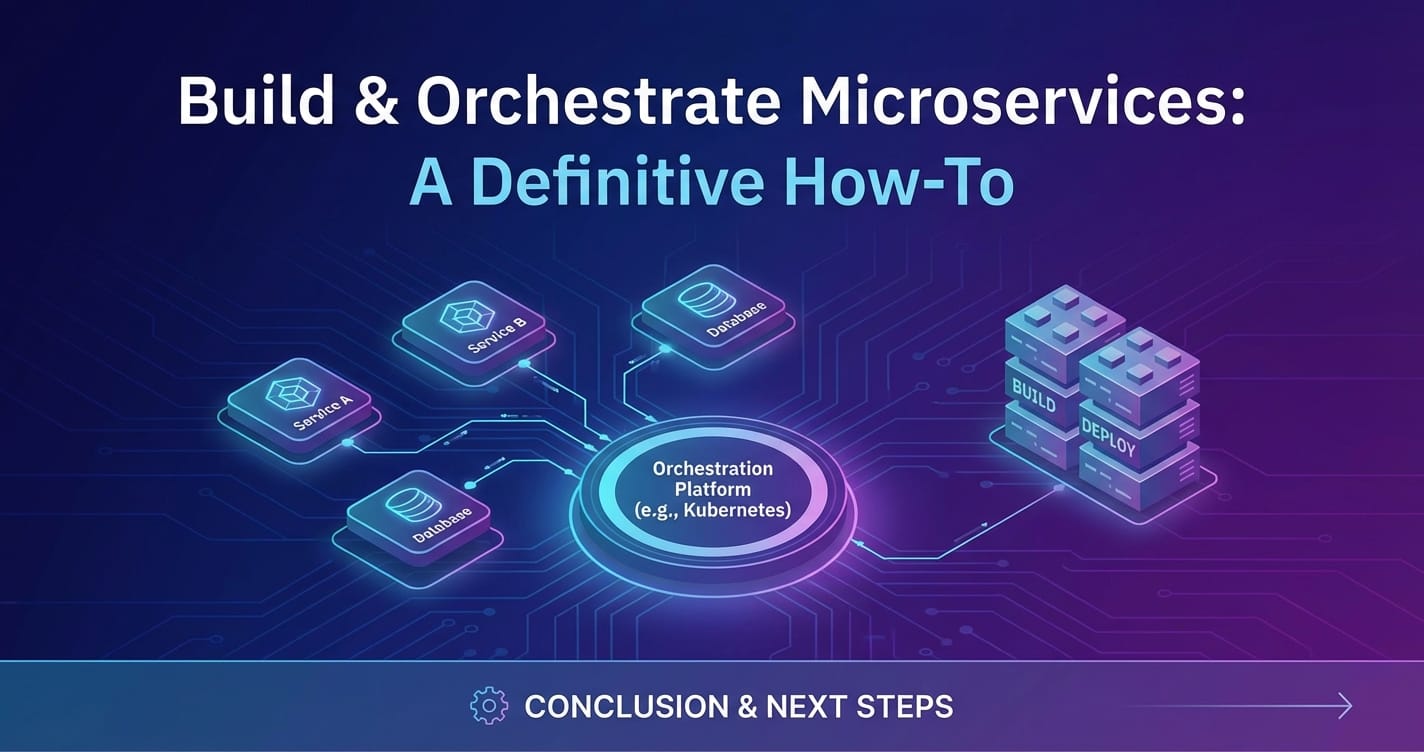

Chapter 4: Orchestrating Microservices: The Critical Layer

Building individual microservices is only half the battle. The true challenge and power of this architectural style lie in effectively orchestrating these disparate units to work harmoniously as a cohesive system. This orchestration layer manages the complexities of communication, security, discovery, and resilience across the entire microservices ecosystem.

4.1 The Role of an API Gateway

In a microservices architecture, an API gateway is an absolutely critical component, acting as the single entry point for all client requests. Without a well-designed API gateway, clients would need to interact with multiple services directly, leading to complex client-side logic, increased network calls, and a host of security and management challenges.

An API gateway serves as a facade, abstracting the internal architecture of the microservices from the external clients. Instead of clients knowing about all individual services, they simply interact with the gateway, which then intelligently routes requests to the appropriate backend service.

Key functions and benefits of an API gateway include:

- Request Routing: The primary function of an API gateway is to route incoming requests to the correct microservice based on the request URL, headers, or other parameters. This allows for dynamic routing, service versioning, and blue/green deployments.

- Authentication and Authorization Enforcement: The API gateway is the ideal place to handle initial authentication and authorization checks. It can validate user tokens (e.g., JWTs), apply rate limiting, and enforce access control policies before forwarding requests to backend services, offloading this burden from individual microservices.

- Rate Limiting and Throttling: To protect microservices from excessive traffic and potential denial-of-service attacks, the gateway can enforce rate limits on requests, preventing any single client from overwhelming the system.

- Protocol Translation: Clients might use different protocols (e.g., HTTP/1.1, WebSocket), while internal services might use gRPC or other specialized protocols. The API gateway can perform protocol translation, providing a consistent interface to clients.

- Caching: The gateway can cache responses for frequently accessed data, reducing the load on backend services and improving response times for clients.

- Load Balancing: Distributes incoming traffic across multiple instances of a microservice, ensuring high availability and optimal resource utilization.

- Request/Response Transformation: The gateway can modify requests before forwarding them to services (e.g., adding headers, transforming payload formats) and similarly transform responses before sending them back to clients. This can help decouple client-side contracts from internal service contracts.

- Monitoring and Logging Aggregation: The API gateway provides a centralized point to collect metrics, logs, and traces for all incoming requests, offering invaluable insights into system performance and behavior. This simplifies monitoring and troubleshooting in a distributed environment.

- Service Composition/Aggregation: For complex client UIs that need data from multiple microservices, the API gateway can aggregate responses from several services into a single response, simplifying client-side development.

Benefits of using an API Gateway:

- Decoupling Clients from Services: Clients don't need to know the number, location, or protocol of individual microservices, simplifying client development and allowing internal service refactoring without affecting external consumers.

- Enhanced Security: Centralized security enforcement improves the overall posture of the application.

- Simplified Client Code: Clients interact with a single endpoint, reducing complexity.

- Centralized Control: Provides a choke point for managing cross-cutting concerns.

- Improved Performance: Through caching, load balancing, and potentially reducing round trips.

Challenges: The API gateway itself can become a single point of failure and a potential bottleneck if not designed for high availability and performance. It requires careful deployment, monitoring, and scaling.

For robust api gateway functionality and comprehensive api management, especially in an AI-driven landscape, platforms like APIPark offer sophisticated solutions. APIPark, as an open-source AI gateway and API management platform, excels in streamlining the management and integration of both AI and REST services. It provides a unified API format for AI invocation, ensuring that changes in AI models or prompts do not affect the application or microservices, thereby simplifying AI usage and maintenance costs. Furthermore, APIPark supports end-to-end API lifecycle management, assisting with design, publication, invocation, and decommissioning. Its robust security features, such as API resource access requiring approval, help prevent unauthorized API calls and potential data breaches, ensuring that your microservices ecosystem is both efficient and secure. With performance rivaling Nginx, APIPark can achieve over 20,000 TPS on modest hardware, supporting cluster deployment to handle large-scale traffic, and offers detailed API call logging for quick tracing and troubleshooting.

4.2 Service Discovery

In a dynamic microservices environment, service instances are constantly being created, destroyed, and scaled. Their network locations (IP addresses and ports) are not fixed. Clients and the API gateway cannot rely on hardcoded configurations to find and communicate with other services. This is where service discovery becomes essential.

Service discovery is the process of automatically detecting the network locations of service instances. There are two main patterns:

- Client-Side Discovery:

- Mechanism: The client (or the API gateway) queries a service registry to get a list of available instances for a particular service. It then uses a load-balancing algorithm (e.g., round-robin) to select an instance and make a request directly.

- Tools: Netflix Eureka, Apache ZooKeeper, HashiCorp Consul. These tools provide a registry where services register themselves upon startup and deregister upon shutdown. They also offer a health check mechanism to remove unhealthy instances from the registry.

- Pros: Simpler network architecture, direct communication between client and service.

- Cons: Client-side logic for discovery and load balancing must be implemented in every client or language, leading to potential inconsistencies and higher development overhead.

- Server-Side Discovery:

- Mechanism: Clients make requests to a single, well-known service locator (e.g., a load balancer or a proxy). The service locator is responsible for querying the service registry, routing the request to an available service instance, and potentially performing load balancing.

- Tools: Kubernetes Service (built-in discovery and load balancing), AWS ELB/ALB (Elastic Load Balancer/Application Load Balancer).

- Pros: Simpler client-side logic (clients just hit the load balancer), centralized management of discovery and routing.

- Cons: The service locator itself can be a single point of failure if not highly available, and it adds an extra network hop.

Most modern container orchestration platforms like Kubernetes incorporate server-side discovery natively. When a service is deployed on Kubernetes, it automatically gets a stable internal DNS name, and Kubernetes's internal load balancer handles routing requests to healthy pods, abstracting away the underlying IP addresses. Regardless of the pattern, a robust service discovery mechanism is fundamental for enabling dynamic scaling and resilience in a microservices architecture.

4.3 Configuration Management

In a microservices environment, managing configurations (database connection strings, API keys, logging levels, feature toggles, environment-specific settings) becomes complex due to the sheer number of services and environments (development, staging, production). Hardcoding these values is unsustainable and insecure. Centralized configuration management is crucial.

- Externalized Configuration: The principle is to separate configuration from application code. Services should fetch their configuration at startup or dynamically at runtime, rather than having it bundled with the deployment artifact.

- Key Features of a Configuration Management System:

- Centralized Storage: A single source of truth for all configurations across all services.

- Version Control: Track changes to configurations, allowing rollback to previous versions.

- Environment-Specific Configuration: Easily manage different configurations for different environments.

- Dynamic Updates: Allow services to refresh their configurations without requiring a restart.

- Security for Sensitive Data (Secrets Management): Integration with secrets management tools to securely store and retrieve sensitive configuration values.

- Tools for Configuration Management:

- Spring Cloud Config (Java): A widely used solution that provides a centralized server to manage externalized configurations for microservices. It typically uses Git repositories to store configurations, offering version control and easy environment switching.

- HashiCorp Consul: A multi-purpose tool offering service discovery, health checking, and a distributed key-value store for configuration. Services can subscribe to configuration changes and react dynamically.

- Kubernetes ConfigMaps and Secrets: Kubernetes provides native resources for managing configuration data.

- ConfigMaps: For non-sensitive configuration data (e.g., application settings, environment variables).

- Secrets: For sensitive data (e.g., database passwords, API keys), stored in an encrypted form. Pods can consume these as environment variables or mounted files.

- Dedicated Configuration Services: Some organizations build custom configuration services or use commercial products tailored to their specific needs.

By centralizing configuration management, teams can ensure consistency, reduce operational errors, improve security, and enable quicker adaptation to changing requirements across their microservices ecosystem.

4.4 Inter-Service Communication & Resilience Patterns

Beyond basic communication mechanisms, designing for resilience in inter-service communication is paramount. The network is inherently unreliable, and microservices must be built to withstand transient failures, service degradation, and even complete outages of dependent services without cascading into system-wide failure.

- Circuit Breakers:

- Concept: Prevents an application from repeatedly trying to invoke a service that is currently unavailable or performing poorly. It acts like an electrical circuit breaker: if current (requests) repeatedly fails, it "trips" and opens the circuit, preventing further requests from reaching the faulty service.

- How it works: When a certain threshold of failures (e.g., 5 failures in 10 requests) is met within a given time window, the circuit breaker opens. Subsequent requests immediately fail (fast-fail) without attempting to call the failing service. After a configurable timeout, it transitions to a "half-open" state, allowing a few test requests to pass through. If these succeed, the circuit closes; otherwise, it opens again.

- Benefits: Prevents cascading failures, provides immediate feedback to the calling service, gives the failing service time to recover, reduces resource consumption on the calling service.

- Tools: Hystrix (legacy, but influential), Resilience4j (Java), Polly (.NET), various libraries in other languages. Many API gateways also incorporate circuit breaker patterns.

- Retries and Timeouts:

- Retries: For transient errors (e.g., network glitches, temporary service unavailability), retrying a failed request can often lead to success.

- Important considerations:

- Idempotency: Only retry idempotent operations (GET, PUT, DELETE) or those designed to be safe for multiple executions. Retrying a non-idempotent POST (e.g., creating an order) can lead to duplicates.

- Exponential Backoff: Instead of immediate retries, wait progressively longer between attempts (e.g., 1s, 2s, 4s, 8s). This prevents overwhelming a recovering service and allows it time to stabilize.

- Max Retries: Set a maximum number of retries to prevent infinite loops.

- Important considerations:

- Timeouts: Prevent services from waiting indefinitely for a response from a dependent service. If a request doesn't receive a response within a specified duration, it's considered a failure.

- Implementation: Apply timeouts at various layers: network client, HTTP client library, and within the business logic.

- Retries: For transient errors (e.g., network glitches, temporary service unavailability), retrying a failed request can often lead to success.

- Bulkheads:

- Concept: Isolates different parts of the application to prevent failures in one area from impacting others. Inspired by shipbuilding, where bulkheads divide the hull into watertight compartments.

- Implementation: In microservices, this often means isolating resource pools (e.g., thread pools, connection pools) for calls to different external services. If a call to "Service A" consumes all its dedicated threads/connections due to latency, it won't deplete the resources needed for calls to "Service B."

- Benefits: Prevents resource exhaustion and cascading failures, improves fault isolation.

- Messaging Patterns (e.g., Kafka, RabbitMQ):

- Concept: Using asynchronous message brokers for inter-service communication enhances resilience.

- How it works: A sender publishes a message to a queue/topic without waiting for a direct response. A receiver consumes the message when it's ready.

- Benefits:

- Decoupling: Services are decoupled in time and space, meaning the sender doesn't need to be aware of the receiver's availability.

- Durability: Messages can be persisted in the broker, ensuring they are not lost even if consumers are down.

- Load Leveling: Handles spikes in traffic by buffering messages, allowing consumers to process them at their own pace.

- Event-Driven Architectures: Facilitates complex event-driven workflows and data synchronization.

By strategically implementing these resilience patterns, microservices architectures can become significantly more robust, capable of gracefully handling failures and maintaining operational stability even under adverse conditions.

4.5 Event-Driven Architecture (EDA) & Sagas

Event-Driven Architecture (EDA) is a powerful paradigm that complements microservices by promoting even looser coupling and enabling complex, asynchronous workflows. When combined with the Saga pattern, it provides a robust solution for managing distributed transactions and achieving eventual consistency.

- Event-Driven Architecture (EDA):

- Concept: Instead of direct synchronous calls, services communicate by publishing and consuming "events." An event represents a significant change of state within a service (e.g., "OrderCreated," "PaymentProcessed," "ProductStockUpdated").

- Mechanism: Services publish events to an event broker (like Kafka or RabbitMQ). Other interested services subscribe to these events and react to them. The publisher typically doesn't know or care who the consumers are.

- Benefits:

- High Decoupling: Services are completely decoupled from each other, only interacting via events. This allows services to evolve independently.

- Scalability: Event brokers are designed for high throughput and can handle a large number of publishers and subscribers.

- Real-time Processing: Enables real-time responsiveness to system changes.

- Auditability: The event log provides a durable, ordered record of all changes, useful for auditing, debugging, and analytics.

- Asynchronous Processing: Long-running processes or tasks that don't require an immediate response can be handled efficiently in the background.

- Challenges:

- Eventual Consistency: Data consistency is achieved over time, not immediately. This requires careful design of business processes to handle temporary inconsistencies.

- Complexity: Debugging event flows across multiple services can be challenging.

- Event Schema Management: Maintaining consistent event schemas across services is crucial.

- Sagas for Distributed Transactions:

- As discussed briefly in Chapter 2, a Saga is a sequence of local transactions, where each local transaction updates the database within a single service and publishes an event to trigger the next step. If any step fails, compensating transactions are executed to undo the previous steps.

- Choreography vs. Orchestration:

- Choreography-based Saga: Each service involved in the Saga listens for events and publishes new events upon completion of its local transaction, effectively "choreographing" the flow themselves.

- Pros: Simpler to implement for small Sagas, decentralized.

- Cons: Can be hard to understand and manage for complex Sagas, difficult to track the overall state of the Saga.

- Orchestration-based Saga: A dedicated orchestrator service (Saga orchestrator) is responsible for coordinating the entire Saga. It sends commands to participant services, collects their responses, and decides the next action.

- Pros: Clear separation of concerns, easier to manage and monitor complex Sagas, better error handling, simpler to add new participants.

- Cons: The orchestrator can become a centralized component (though it should be stateless and highly available), potential for increased complexity in the orchestrator itself.

- Choreography-based Saga: Each service involved in the Saga listens for events and publishes new events upon completion of its local transaction, effectively "choreographing" the flow themselves.

EDA and Sagas are powerful patterns for building highly scalable, resilient, and loosely coupled microservices architectures, particularly for complex business processes that span multiple service boundaries. They require a shift in thinking from immediate, strong consistency to embracing eventual consistency and designing for asynchronous interactions.

Chapter 5: Deployment, Monitoring, and Operations

Building microservices is one thing; deploying, operating, and maintaining them reliably in production is another entirely. The distributed nature of microservices multiplies the complexity of these operational aspects. A robust DevOps culture, coupled with advanced tooling, is essential for success.

5.1 Containerization (Docker)

Containerization has become virtually synonymous with microservices deployment. Docker, the most popular containerization platform, provides a standardized way to package applications and their dependencies into isolated units called containers.

- How Docker Works:

- Docker Image: A lightweight, standalone, executable package that includes everything needed to run a piece of software, including the code, a runtime, system tools, libraries, and settings.

- Docker Container: A running instance of a Docker image. It's an isolated environment that shares the host OS kernel but has its own filesystem, network stack, and process space.

- Benefits for Microservices:

- Portability: A Docker container runs consistently across any environment (developer's laptop, staging server, production cloud) that has Docker installed. This eliminates "it works on my machine" issues.

- Isolation: Each microservice runs in its own container, isolated from other services and the host system. This prevents dependency conflicts and ensures that changes to one service don't inadvertently affect others.

- Consistency: Standardized packaging ensures that the runtime environment for each service is consistent from development to production.

- Efficiency: Containers are much lighter weight and start faster than traditional virtual machines, leading to better resource utilization.

- Version Control: Docker images are versioned, allowing for easy rollback to previous, stable versions of a service.

- Simplified Deployment: Once an image is built, deploying a microservice simply means running its container.

By containerizing microservices, organizations can streamline their development-to-production pipeline, improve environment consistency, and simplify the process of scaling and managing numerous services.

5.2 Orchestration Platforms (Kubernetes)

While Docker is excellent for packaging individual microservices, managing hundreds or thousands of containers manually across a cluster of servers is impractical. Container orchestration platforms automate the deployment, scaling, and management of containerized applications. Kubernetes has emerged as the de facto standard in this space.

- What Kubernetes Does: Kubernetes (K8s) is an open-source system for automating deployment, scaling, and management of containerized applications. It provides a platform for running and coordinating containers across a cluster of machines.

- Key Concepts in Kubernetes:

- Pods: The smallest deployable unit in Kubernetes. A Pod typically contains one or more tightly coupled containers that share network and storage resources. A microservice usually runs within one or more Pods.

- Deployments: A higher-level abstraction that manages the desired state of your Pods. It ensures that a specified number of replica Pods are always running and handles rolling updates and rollbacks.

- Services: An abstract way to expose a group of Pods as a network service. A Kubernetes Service provides a stable IP address and DNS name for your microservice, regardless of which Pods are actually running behind it. This is Kubernetes's built-in service discovery mechanism.

- Ingress: Manages external access to services in a cluster, typically HTTP/HTTPS. It can provide URL-based routing, load balancing, and SSL termination, effectively acting as an API gateway for external traffic into the cluster.

- ReplicaSets: Ensures a stable set of replica Pods are running at any given time.

- Namespaces: Provides a mechanism for isolating groups of resources within a single cluster.

- Benefits for Microservices:

- Automated Deployment and Scaling: Kubernetes automates the deployment of new service versions and can automatically scale the number of Pods up or down based on demand or predefined metrics.

- Self-Healing: If a Pod crashes, becomes unhealthy, or a node fails, Kubernetes automatically restarts or reschedules the Pods to healthy nodes, ensuring high availability.

- Service Discovery and Load Balancing: As mentioned, Kubernetes provides native service discovery and load balancing, simplifying inter-service communication.

- Rolling Updates and Rollbacks: Enables seamless updates of microservices without downtime and provides easy mechanisms to revert to a previous version if issues arise.

- Resource Management: Efficiently allocates CPU, memory, and other resources across the cluster.

- Secrets and Configuration Management: Built-in features (ConfigMaps, Secrets) for managing sensitive and non-sensitive configuration data.

Adopting Kubernetes significantly reduces the operational burden of managing a complex microservices architecture, allowing teams to focus more on developing business logic rather than infrastructure concerns.

5.3 CI/CD Pipelines

Continuous Integration (CI) and Continuous Delivery/Deployment (CD) pipelines are fundamental to realizing the agility benefits of microservices. They automate the entire software release process, from code commit to production deployment.

- Continuous Integration (CI):

- Principle: Developers frequently merge their code changes into a central repository (e.g., Git). Each merge triggers an automated build and test process.

- Steps:

- Code Commit: Developer pushes code to a shared repository.

- Automated Build: The CI server compiles the code, resolves dependencies, and creates executable artifacts (e.g., Docker images for microservices).

- Automated Testing: Runs unit tests, integration tests, and static code analysis to quickly identify defects.

- Feedback: Provides immediate feedback to developers on the success or failure of the build and tests.

- Benefits: Detects integration issues early, improves code quality, reduces the risk of complex merges.

- Continuous Delivery (CD):

- Principle: An extension of CI, ensuring that software is always in a deployable state, ready to be released to production at any time, typically through a manual approval gate.

- Steps (after CI):

- Artifact Storage: Stores build artifacts (e.g., Docker images in a container registry).

- Automated Deployment to Staging/Testing Environments: Deploys the artifacts to various test environments (e.g., QA, UAT) for further automated and manual testing.

- Automated Acceptance Testing: Runs end-to-end tests, performance tests, and security scans.

- Release Approval: A manual gate for business stakeholders to approve the release to production.

- Continuous Deployment (CD):

- Principle: An advanced form of CD where every change that passes all automated tests and quality gates is automatically deployed to production without manual intervention.

- Benefits: Faster time-to-market, reduced manual errors, constant flow of value to users. Requires a very high level of automation, trust in automated testing, and robust monitoring.

- Deployment Strategies for Microservices:

- Rolling Updates: Gradually replaces old instances of a service with new ones, ensuring continuous availability. Kubernetes handles this natively.

- Blue/Green Deployments: Maintains two identical production environments ("blue" and "green"). New versions are deployed to the inactive environment (e.g., "green"), thoroughly tested, and then traffic is switched from "blue" to "green" instantly, minimizing downtime and providing an easy rollback path.

- Canary Releases: A small percentage of live traffic is routed to the new version of a service (the "canary"), while the majority of traffic still goes to the old version. If the canary performs well, more traffic is gradually shifted until all traffic is on the new version. This allows for real-world testing with minimal impact.

- Tools for CI/CD:

- Jenkins, GitLab CI/CD, GitHub Actions, Azure DevOps, CircleCI, Travis CI. These tools orchestrate the pipeline stages, integrate with version control systems, and execute scripts for building, testing, and deploying microservices.

A well-implemented CI/CD pipeline is the backbone of efficient microservices development and operations, enabling rapid, reliable, and frequent releases.

5.4 Centralized Logging

In a microservices architecture, logs are scattered across dozens or hundreds of independent services, often running on different hosts. Aggregating, storing, searching, and analyzing these logs from a central location is absolutely crucial for troubleshooting, monitoring, and understanding system behavior.

- Challenges of Distributed Logging:

- Scattered Logs: Logs generated by different services are on different machines, often in different formats.

- Correlation: Tracing a single user request across multiple services requires a way to correlate log entries from different services.

- Volume: The sheer volume of logs generated can be overwhelming.

- Centralized Logging Solutions:

- ELK Stack (Elasticsearch, Logstash, Kibana): One of the most popular open-source solutions.

- Logstash: Collects logs from various sources, processes them (parses, filters, transforms), and sends them to Elasticsearch.

- Elasticsearch: A distributed, RESTful search and analytics engine that stores and indexes log data, making it highly searchable.

- Kibana: A data visualization dashboard for Elasticsearch, allowing users to query, analyze, and visualize log data through interactive dashboards.

- Promtail/Loki/Grafana: Loki is a horizontally scalable, highly available, multi-tenant log aggregation system inspired by Prometheus. It uses labels to index logs, making it very efficient. Promtail is the agent that ships logs to Loki, and Grafana is used for visualization.

- Commercial Solutions: Splunk, Datadog, Sumo Logic, New Relic Logs, AWS CloudWatch Logs. These often provide more advanced features, enterprise support, and integration with other monitoring tools.

- ELK Stack (Elasticsearch, Logstash, Kibana): One of the most popular open-source solutions.

- Best Practices for Logging:

- Structured Logging: Log data in a structured format (e.g., JSON) rather than plain text. This makes it machine-readable and easier to parse and query.

- Correlation IDs: Implement a mechanism to pass a unique correlation ID (e.g., a Request ID) across all services involved in a single user request. This ID should be included in every log entry, enabling you to trace the entire flow of a request through the distributed system.

- Contextual Information: Include relevant context in log entries (e.g., service name, host, user ID, tenant ID, transaction ID) to aid in debugging.

- Logging Levels: Use appropriate logging levels (DEBUG, INFO, WARN, ERROR, FATAL) to filter logs based on severity.

- Avoid Sensitive Data: Never log sensitive information (passwords, PII, credit card numbers) directly.

- Asynchronous Logging: Implement asynchronous logging to avoid blocking application threads, especially in high-throughput services.

Centralized logging transforms log data from scattered text files into an actionable source of truth for understanding and diagnosing issues within a microservices ecosystem.

5.5 Distributed Tracing

While centralized logging helps in understanding what happened within individual services, distributed tracing provides an end-to-end view of a request's journey across multiple microservices. In a complex call graph, a single user action might invoke dozens of services. Without tracing, debugging latency or errors in such a scenario is incredibly difficult.

- Concept: Distributed tracing tracks the path of a request as it flows through various services. It creates a "trace" which is a collection of "spans."

- Trace: Represents a single end-to-end operation, typically initiated by a client request.

- Span: Represents a logical unit of work within a trace, such as an HTTP request to another service, a database query, or a method execution. Spans have a start and end time, a name, and can have parent-child relationships to indicate causality.

- How it Works:

- When a request enters the system (e.g., via the API gateway), a unique

trace_idis generated. - This

trace_id(along with aspan_idfor the current operation and aparent_span_idif applicable) is propagated through headers in every inter-service call. - Each service, upon receiving the request, creates its own span (with its own

span_id) and sends its trace data to a centralized tracing system. - The tracing system stitches together all these spans using the

trace_idandparent_span_idrelationships to reconstruct the full path and timing of the request.

- When a request enters the system (e.g., via the API gateway), a unique

- Benefits:

- Performance Bottleneck Identification: Quickly identify which service or operation is causing latency in an end-to-end request.

- Error Localization: Pinpoint exactly where an error occurred in a distributed transaction.

- Service Dependency Mapping: Visualize the call graph of your services and understand their dependencies.

- Improved Observability: Provides deep insights into the behavior and performance of the entire system.

- Tools:

- Jaeger (OpenTracing/OpenTelemetry compatible): An open-source, end-to-end distributed tracing system that helps monitor and troubleshoot complex microservices-based architectures.

- Zipkin: Another open-source distributed tracing system, inspired by Google's Dapper.

- OpenTelemetry: A vendor-agnostic set of APIs, SDKs, and tools for generating, collecting, and exporting telemetry data (traces, metrics, logs). It aims to standardize how observability data is produced and consumed.

- Commercial Solutions: Datadog APM, New Relic, AWS X-Ray, Google Cloud Trace.

Implementing distributed tracing is a non-negotiable requirement for effectively operating and debugging complex microservices environments.

5.6 Monitoring and Alerting

Even with robust design and deployment, things can go wrong in production. Comprehensive monitoring and proactive alerting are critical for maintaining the health, performance, and availability of microservices.

- Key Monitoring Pillars (The Four Golden Signals):

- Latency: The time it takes to service a request (average, median, 99th percentile).

- Traffic: How much demand is being placed on your service (requests per second, network I/O).

- Errors: The rate of requests that fail (e.g., HTTP 5xx responses, exceptions).

- Saturation: How "full" your service is (CPU utilization, memory usage, disk I/O, network bandwidth).

- Metrics Collection:

- Application Metrics: Custom metrics emitted by your microservices (e.g., number of orders processed, database query times, cache hit ratios, queue depths).

- System Metrics: Infrastructure-level metrics (CPU, memory, disk, network usage) of the hosts and containers running your services.

- Business Metrics: Metrics directly related to business outcomes (e.g., conversion rates, revenue per hour).

- Tools for Metrics Collection and Visualization:

- Prometheus: A powerful open-source monitoring system that scrapes metrics from configured targets at specified intervals, stores them, and provides a flexible query language (PromQL).

- Grafana: A leading open-source platform for analytics and interactive visualization. It integrates with Prometheus (and many other data sources) to create rich, customizable dashboards for monitoring.

- Commercial Solutions: Datadog, New Relic, Dynatrace, AWS CloudWatch, Google Cloud Monitoring. These often provide agents for metrics collection, advanced AI-powered anomaly detection, and unified dashboards.

- Health Checks:

- Each microservice should expose a health endpoint (e.g.,

/healthor/actuator/healthin Spring Boot) that indicates its operational status. This can be a simple "up" or more detailed, checking database connectivity, message broker status, etc. - Kubernetes uses these health checks (liveness and readiness probes) to determine if a Pod is healthy and ready to receive traffic.

- Each microservice should expose a health endpoint (e.g.,

- Alerting:

- Define Thresholds: Set clear thresholds for metrics that, when breached, indicate a problem (e.g., error rate > 5%, CPU usage > 90% for 5 minutes).

- Alerting Rules: Configure alerting rules in your monitoring system (e.g., Prometheus Alertmanager) to trigger notifications.

- Notification Channels: Send alerts to appropriate channels (Slack, PagerDuty, email, SMS) based on severity.

- Minimize Noise: Carefully tune alerts to avoid "alert fatigue." Only alert on actionable items.

- Incident Response: Establish clear runbooks and processes for responding to alerts and resolving incidents.

Table: Comparison of Common Monitoring Tool Categories

| Feature/Category | Prometheus/Grafana (Open Source Stack) | Commercial APM Tools (e.g., Datadog, New Relic) | Cloud Provider Monitoring (e.g., AWS CloudWatch) |

|---|---|---|---|

| Primary Focus | Time-series metrics collection, alerting, visualization. | End-to-end application performance monitoring (APM), user experience, business metrics. | Infrastructure, application, and log monitoring within a specific cloud ecosystem. |

| Data Collection | Pull-based (scraping from exporters), flexible custom metrics. | Agents installed on hosts/containers, auto-instrumentation, push-based. | Agents, native integrations with cloud services, some custom metrics. |

| Distributed Tracing | Integrates with Jaeger/Zipkin via OpenTelemetry. | Built-in, often with auto-instrumentation for popular frameworks. | Cloud-specific tracing (e.g., AWS X-Ray). |

| Logging Integration | Integrates with Loki/ELK stack. | Integrated log management and analysis. | Integrated log management (e.g., CloudWatch Logs). |

| Alerting | Robust alerting with Alertmanager, highly customizable. | Advanced anomaly detection, predictive alerting, incident management. | Rule-based alerting, some ML-driven anomaly detection. |

| Deployment | Self-hosted, requires management of infrastructure. | SaaS model, managed by vendor. | PaaS model, managed by cloud provider. |

| Cost | Free (software), but incurs infrastructure and operational costs. | Subscription-based, scales with usage/hosts. | Pay-as-you-go for metrics, logs, and dashboards. |

| Ease of Use | Requires some expertise to set up and configure. | Generally high, especially with auto-instrumentation. | Varies, generally good for cloud-native services. |

| Vendor Lock-in | Low (open standards, community-driven). | Moderate to high (specific agents, proprietary dashboards). | High (tied to specific cloud provider services). |