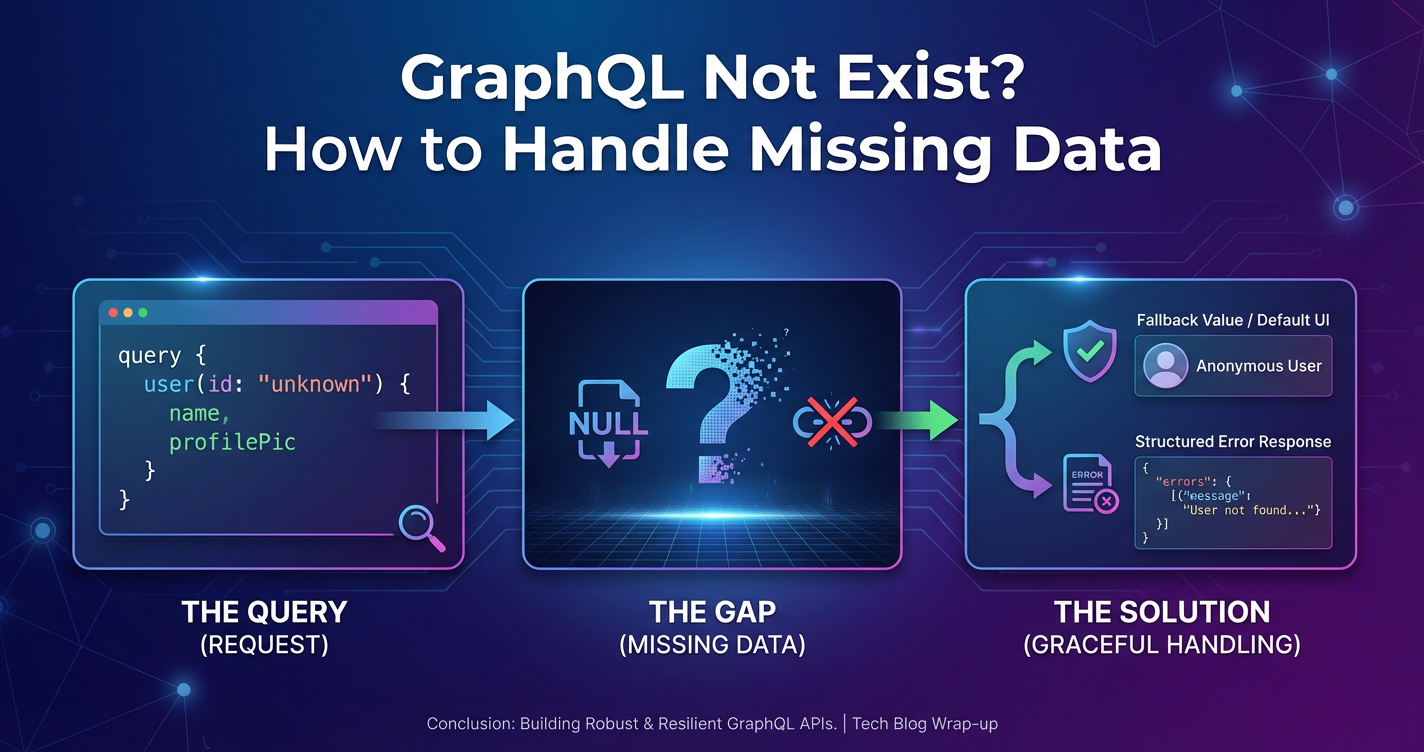

GraphQL Not Exist? How to Handle Missing Data

The modern application landscape is fundamentally shaped by data. From real-time dashboards to personalized user experiences, the ability to efficiently and reliably access, process, and display information is paramount. For a significant period, GraphQL has emerged as a powerful paradigm, lauded for its ability to allow clients to precisely request the data they need, reducing over-fetching and under-fetching, and simplifying complex data graph interactions. Its single endpoint model and declarative nature have brought considerable efficiencies to front-end development, offering a compelling alternative to traditional RESTful APIs for specific use cases. The elegance of a client defining its data requirements, receiving a tailored response, and navigating relationships within a unified graph has been a transformative force for many organizations. This paradigm shift addressed many frustrations developers faced with fixed-structure REST endpoints, where combining data from multiple resources often required several distinct API calls or complex server-side aggregation. GraphQL provided a language for data, enabling a more semantic and intuitive interaction with back-end systems, moving the locus of control over data shape closer to the consumer.

However, the world of software architecture is rarely monolithic. While GraphQL offers distinct advantages, its adoption isn't universal, nor is it always the optimal solution for every problem. There are myriad scenarios where GraphQL might "not exist" within an organization's technology stack, or where its implementation might not be feasible, desirable, or mature enough to address all data-related challenges. Legacy systems, organizational inertia, specific infrastructure constraints, the nature of the data itself, or simply a strategic decision to stick with proven REST or RPC patterns can all mean that GraphQL isn't the primary, or even a secondary, data fetching mechanism. In such environments, or even in hybrid ones where GraphQL coexists with other apis, the fundamental challenge of "handling missing data" remains critically important. Data can be missing for a multitude of reasons: network instability, incorrect requests, schema changes, upstream service failures, or simply the inherent absence of certain information. The ability to gracefully manage these situations, ensuring application resilience and a smooth user experience, transcends any single api technology. It requires a comprehensive approach encompassing robust api design, intelligent api gateway configurations, resilient client-side logic, and pervasive observability. This article will delve deep into these strategies, providing a holistic framework for navigating the complexities of data handling when the sophisticated graph-fetching capabilities of GraphQL are not at your disposal, or when data simply isn't there, regardless of the fetching mechanism. We will explore how a well-designed api architecture, underpinned by strategic gateway deployment and meticulous data handling practices, forms the bedrock of reliable and performant applications in a data-driven world.

The GraphQL Paradigm: Strengths, Scenarios, and Strategic Choices

To fully appreciate the alternatives and the broader challenges of data handling, it's essential to understand the core strengths that propelled GraphQL into prominence. At its heart, GraphQL is a query language for your API, and a server-side runtime for executing those queries by using a type system you define for your data. This fundamental design choice empowers clients to specify exactly what data they need, no more, no less, and in a single request. This contrasts sharply with traditional REST APIs, where a client might need to make multiple requests to different endpoints (/users, /users/{id}/posts, /posts/{id}/comments) to gather related information, or might receive a fixed, often verbose, payload from a single endpoint that contains fields it doesn't require.

The primary benefits of GraphQL are multifaceted. Firstly, it drastically reduces over-fetching, where the client receives more data than it actually needs. This is particularly crucial for mobile applications operating on limited bandwidth or for scenarios where data payload size directly impacts performance and cost. Secondly, it virtually eliminates under-fetching, where a client has to make multiple requests to retrieve all necessary data. Instead, related resources can be fetched in a single, complex query, simplifying client-side logic and reducing network round trips. Thirdly, GraphQL provides a strong type system, which offers inherent data validation and a clear contract between client and server. This schema acts as a single source of truth, enabling better collaboration between front-end and back-end teams, facilitating code generation, and making API evolution more manageable. Finally, the ability to introspect the schema allows developers to explore the API's capabilities dynamically, enhancing developer experience.

Despite these compelling advantages, GraphQL isn't a panacea, nor is it universally adopted. Several factors can lead to scenarios where GraphQL might not be present in an organization's ecosystem or might not be the chosen solution for a particular problem.

Legacy Systems and Incremental Adoption: Many enterprises operate on a foundation of well-established, often decades-old, legacy systems. These systems frequently expose data through existing RESTful APIs, SOAP web services, or even direct database access. Migrating such systems wholesale to GraphQL can be an enormous undertaking, fraught with risks, high costs, and significant disruption. In such environments, maintaining and extending existing apis often takes precedence, and any GraphQL adoption might be incremental, starting with new services rather than overhauling the old.

Simpler Use Cases and Overhead: For straightforward applications with minimal data relationships or a limited number of data models, the overhead of setting up and maintaining a GraphQL server (with its schema definition, resolvers, and potentially complex N+1 query problem solutions) might outweigh its benefits. A few well-designed REST endpoints could be simpler, quicker to implement, and perfectly adequate. The "developer experience" argument for GraphQL sometimes overlooks the initial learning curve and operational complexity for teams not already familiar with its paradigm.

Architectural Preferences and Skill Sets: Some organizations simply have a strong preference for REST or RPC-style apis, deeply rooted in their architectural principles and team skill sets. Investing in a new technology like GraphQL requires not only technical implementation but also training, documentation, and a cultural shift. Teams accustomed to the statelessness and clear resource-oriented nature of REST might find the graph-oriented approach less intuitive for certain types of interactions.

Caching Challenges: While GraphQL excels at fetching specific data, caching at the HTTP layer can be more complex than with REST. REST leverages HTTP caching mechanisms (ETags, Last-Modified, Cache-Control) which are well-understood and widely supported by proxies and browsers. GraphQL, often relying on a single POST endpoint, makes traditional HTTP caching more challenging, pushing caching logic to the application layer or specialized GraphQL caching solutions, which adds another layer of complexity.

Operational Complexity and Monitoring: Operating a GraphQL service can introduce new monitoring and performance challenges. Debugging complex GraphQL queries that aggregate data from multiple microservices can be more intricate than debugging individual REST calls. Performance bottlenecks might be harder to pinpoint without specialized tooling. Ensuring efficient resolver execution and preventing abusive, deep queries requires careful design and robust security measures.

Alternative Data Access Patterns: Beyond REST and GraphQL, other data access patterns persist and thrive. gRPC, for instance, offers high-performance, strongly typed, contract-first communication, especially beneficial for inter-service communication in microservice architectures. WebSockets provide persistent, bi-directional communication, ideal for real-time data streaming. Message queues (like Kafka or RabbitMQ) facilitate asynchronous data exchange, crucial for event-driven architectures where immediate responses are not required. Each of these patterns serves specific purposes and contributes to a diverse data landscape, none of which are replaced by GraphQL.

Therefore, whether due to legacy constraints, simpler requirements, architectural choices, or the presence of other specialized apis, organizations frequently find themselves relying on paradigms where GraphQL's inherent data fetching capabilities are not available. This reality underscores the universal importance of robust data handling strategies, particularly concerning "missing data," irrespective of the chosen api technology. The core challenge is not about the query language, but about the reliability and integrity of the data itself, and how gracefully applications can adapt when that reliability is compromised.

The Core Challenge: Handling Missing Data in APIs

The concept of "missing data" in the context of APIs is far more pervasive and nuanced than it might initially seem. It encompasses a broad spectrum of scenarios, from a simple null value to a complete service outage, each demanding a specific approach to detection, mitigation, and recovery. Effectively handling these situations is not merely a matter of preventing application crashes; it's about maintaining data integrity, ensuring a smooth user experience, preserving business logic accuracy, and safeguarding against potential security vulnerabilities.

Defining "Missing Data" in API Contexts

"Missing data" can manifest in various forms when interacting with an api:

- Null Values or Empty Fields: A field expected to contain data might return

nullor an empty string/array. For instance, a user profile API might return{"email": null, "phone_number": []}. This often indicates optional data or data that hasn't been provided. - Missing Fields/Keys: An entire field or key might be absent from the API response object. If an API contract typically includes a

last_login_datefield, but it's missing for certain users, this represents missing data. - Empty Arrays/Collections: An API endpoint designed to return a list of items (e.g.,

/products/{id}/reviews) might return an empty array[]if no items exist. While technically present, the content is missing. - HTTP Status Codes Indicating Absence (404 Not Found, 204 No Content): A 404 response explicitly states that the requested resource does not exist. A 204 response indicates that the server successfully processed the request but is not returning any content, which, in certain contexts, can be interpreted as a form of "missing data" for a client expecting a payload.

- Network Errors and Timeouts: The client might fail to receive any response due to network connectivity issues, server unavailability, or exceeding a predefined timeout. This means the entire data response is missing.

- Malformed or Invalid Responses: The API might return data, but it might not conform to the expected format (e.g., invalid JSON, incorrect data types, unexpected structure). While not strictly "missing," the data is unusable, effectively making it missing from the application's perspective.

- Authentication/Authorization Failures (401 Unauthorized, 403 Forbidden): While these indicate access issues, from the application's perspective, the requested data is inaccessible and thus missing.

- Rate Limiting (429 Too Many Requests): Temporarily prevents data access.

- Server Errors (5xx Series): Indicate an issue on the server side, resulting in the inability to retrieve requested data.

Why Handling Missing Data is Critical

The implications of poorly handled missing data are far-reaching and can severely impact an application's reliability, usability, and correctness:

- User Experience Degradation: Users encounter broken UIs, empty sections, or cryptic error messages. This leads to frustration, distrust, and a perception of a poorly built application. Imagine a product detail page where the price or image is missing, or a user profile that fails to load entirely.

- Application Crashes and Unpredictable Behavior: Client-side code often makes assumptions about the presence and structure of data. If a field expected to be a string suddenly becomes

nullor is entirely absent, it can lead toTypeErrors,ReferenceErrors, or other runtime exceptions, crashing the application or parts of it. - Incorrect Business Logic: Missing data can subtly corrupt business logic. If a calculation relies on a financial figure that comes back as

nullor0unexpectedly, the resulting calculation will be incorrect, potentially leading to financial discrepancies or flawed decision-making. - Security Vulnerabilities: In some extreme cases, unhandled missing data, especially in authentication or authorization contexts, could be exploited. If a system implicitly grants access when a permission check fails due to missing data, it presents a significant vulnerability. More commonly, errors due to missing data can expose internal system details through stack traces, aiding attackers.

- Increased Debugging Time and Operational Costs: When applications frequently crash or behave unexpectedly due to missing data, developers and operations teams spend significant time reproducing, diagnosing, and fixing these issues. This increases development costs and distracts from feature development.

- Data Inconsistency and Trust Issues: If an application silently proceeds with incomplete data, it can lead to inconsistent states across different parts of the system or even across different users. Over time, this erodes trust in the application and the data it presents.

Root Causes of Missing Data

Understanding the causes is key to implementing effective handling strategies:

- Data Source Issues: The ultimate source of truth (database, third-party service, file system) might legitimately not have the data. This could be due to incomplete user input, data migration errors, or intentional omission.

- Integration Problems: When an

apiaggregates data from multiple internal or external services, a failure in any one of those upstream services can result in partial or entirely missing data in the finalapiresponse. - Schema Mismatches and API Evolution:

apis evolve. Fields are added, deprecated, or removed. If clients aren't updated in sync withapichanges, they might request or expect data that no longer exists or comes in a different format, leading to missing data perceptions. - Network Instability and Latency: Unreliable network connections, high latency, or intermittent outages can prevent responses from reaching the client or cause requests to time out before a response is received.

- Client-Side Errors: Malformed requests, incorrect parameters, or invalid

apikeys from the client can lead the server to return errors or no data at all. - Configuration Errors: Incorrect server configurations, firewall rules, or load balancer settings can intermittently prevent

apitraffic from reaching its destination, leading to perceived missing data.

Given the prevalence and criticality of these challenges, it becomes abundantly clear that a proactive and multi-layered approach to handling missing data is not merely a best practice but a fundamental requirement for building resilient and reliable software systems.

Robust Strategies for API Design and Data Fetching: Pre-emptive Measures

Before data even reaches a client, meticulous API design and robust server-side practices can significantly mitigate the problems associated with missing data. These pre-emptive measures establish a strong foundation, making subsequent handling at the api gateway and client layers far more manageable.

Schema Definition and Validation: The Contractual Agreement

A clear and enforceable api contract is the first line of defense against data inconsistencies and unexpected omissions. * OpenAPI/Swagger for REST: For RESTful apis, comprehensive OpenAPI (formerly Swagger) specifications are indispensable. These declarative documents precisely define endpoints, expected request formats (parameters, headers, body), and, critically, the exact structure of response payloads for both success and error scenarios. They specify data types, required fields, optional fields, minimum/maximum lengths, regular expression patterns, and enumerations. * Strictness vs. Flexibility: The schema should clearly differentiate between required fields and optional fields. For required fields, the API should return a client error (e.g., 400 Bad Request) if they are missing from a request. For optional fields, the client should be prepared to handle their absence or null values. * JSON Schema: Beneath OpenAPI, JSON Schema provides the granular mechanism for validating JSON data structures. It allows for complex validation rules, ensuring that data conforms to expected types and formats, thereby catching malformed data at the earliest possible stage. * Benefits: A well-defined schema serves as documentation, facilitates automated client code generation, enables server-side request/response validation before processing or sending, and provides a shared understanding for both API producers and consumers. By validating incoming requests against the schema, incorrect or incomplete client data can be rejected immediately, preventing deeper system errors. Conversely, validating outgoing responses ensures that the API is always adhering to its published contract, preventing unexpected data shapes or missing required fields from reaching clients.

API Versioning: Managing Evolution Gracefully

apis are living entities; they evolve. New features necessitate new data, existing fields might change their semantics, or old fields might become obsolete. Without a thoughtful versioning strategy, these changes can easily lead to clients encountering missing data or unexpected formats. * Strategies: Common versioning strategies include URL versioning (e.g., /v1/users, /v2/users), header versioning (Accept: application/vnd.myapi.v2+json), or query parameter versioning (?api-version=2). * Deprecation Policies: Critical to versioning is a clear deprecation policy. When fields or entire endpoints are to be removed, they should first be marked as deprecated in a new api version, with ample warning periods (e.g., 6-12 months). During this period, older versions should continue to function, giving clients time to migrate. Once the deprecation period ends, the field or endpoint can be removed, and clients on the older version will then legitimately experience missing data, but it will be an expected missing data event, rather than a surprise. This controlled evolution prevents abrupt breakages.

Error Handling Standards: Consistent and Informative Responses

When things go wrong, the API's response should be as informative and consistent as possible. Poor error handling can make it difficult for clients to understand why data is missing or what actions to take. * HTTP Status Codes: Leverage the rich set of HTTP status codes to convey the general nature of the error (e.g., 400 Bad Request for client-side input validation, 404 Not Found for missing resources, 401 Unauthorized, 403 Forbidden, 429 Too Many Requests, 500 Internal Server Error for unhandled server issues, 503 Service Unavailable for temporary outages, 504 Gateway Timeout). * Standardized Error Body: Beyond the status code, the response body should provide more detailed, machine-readable information. A common practice is to use a standardized error object structure across all apis, perhaps inspired by RFC 7807 (Problem Details for HTTP APIs). This typically includes: * type: A URI that identifies the problem type (e.g., https://example.com/probs/out-of-credit). * title: A short, human-readable summary of the problem type. * status: The HTTP status code (for redundancy). * detail: A human-readable explanation specific to this occurrence of the problem. * instance: A URI that identifies the specific occurrence of the problem (e.g., a request ID). * errors: An optional array or object for field-specific validation errors. * Trace IDs: Include a correlation or trace ID in every api response (even successful ones) and log it internally. When a client encounters an error or missing data, they can provide this ID to support, allowing operations teams to quickly locate relevant logs and diagnose the issue, whether it's an upstream service failure or a data anomaly.

Graceful Degradation and Fallbacks: Resilience in Action

Architecting for failure means anticipating that some data will be unavailable at times. Graceful degradation ensures that an application remains functional, albeit with reduced features or data, rather than completely failing. * Service Isolation: Design services so that the failure of one doesn't bring down the entire application. If a "recommendations" service is down, the core product browsing experience should still function. * Default Values: When a piece of data is missing, define sensible default values. If a user's avatar URL is null, display a generic placeholder image. If a product description is missing, display "Description unavailable." * Pre-computed Fallbacks: For critical but potentially unavailable data, consider pre-computing or caching fallback values. If the real-time stock price feed is down, display the last known price with a disclaimer. * Feature Toggles: Use feature flags to selectively disable parts of the UI or functionality that rely on unstable or unavailable apis, preventing a degraded experience from becoming a broken one.

Idempotency: Safe Retries for Data Consistency

Idempotency means that making the same request multiple times has the same effect as making it once. This is crucial for handling network glitches or timeouts where the client isn't sure if a request succeeded. * Design for Idempotency: For POST requests that create resources, use a unique idempotency key (often a UUID) provided by the client. The server stores this key and, if it sees the same key again for a POST, it returns the original successful response without re-processing. PUT requests (for updates) and DELETE requests are inherently idempotent if they operate on a resource by its identifier. GET requests are always idempotent. * Impact on Missing Data: If a client times out waiting for a response, it can safely retry an idempotent request without fear of creating duplicate records or unintended side effects. This reduces the perception of missing data (because the data eventually gets processed) and improves data consistency.

Pagination, Filtering, and Sorting: Efficient Data Retrieval

Poorly managed data retrieval can lead to perceived missing data, performance bottlenecks, or even api abuse. * Pagination: Always paginate large result sets. Sending thousands of records in a single api response is inefficient, memory-intensive, and prone to timeouts, making the entire dataset effectively "missing" for the client. Common methods include offset-based (offset, limit) and cursor-based (after, first). * Filtering: Allow clients to filter data based on various criteria (e.g., status=active, category=electronics). This reduces the amount of irrelevant data transmitted, making the relevant data more readily available and improving performance. * Sorting: Provide options for clients to sort results. This helps clients display data in a meaningful order, making it easier to find what they need, rather than sifting through an unorganized mass. * Benefits: These mechanisms ensure that clients receive precisely the subset of data they need, improving api performance, reducing bandwidth usage, and preventing scenarios where clients struggle to process or display overwhelmingly large payloads.

Data Transformation and Harmonization: Bridging Disparate Sources

In a microservices world, data often originates from multiple, heterogeneous sources with different schemas, naming conventions, and data formats. * Server-Side Aggregation: The api layer (or dedicated aggregation services) can be responsible for fetching data from multiple internal services, transforming it into a consistent format, and combining it into a single, cohesive response for the client. This effectively abstracts away the complexity of the underlying data landscape. * Harmonization Layers: Implement data harmonization layers that normalize values (e.g., standardizing date formats, currency codes, or geographical units), rename fields to a consistent standard, and resolve relationships between disparate datasets. This ensures that the client receives data that is consistent and ready for consumption, even if it was "missing" from a particular upstream service in the expected format.

Caching Strategies: Speed and Resilience

Caching is a powerful technique to reduce load on backend services, decrease latency, and provide data resilience. * HTTP Caching: Leverage standard HTTP caching headers (Cache-Control, Expires, ETag, Last-Modified) for GET requests where data doesn't change frequently. Proxies and browsers can then serve cached responses without hitting the origin server. * Application-Level Caching: Implement in-memory caches (e.g., Redis, Memcached) within your api services to store frequently accessed data. This can provide rapid responses even if the original data source is slow or temporarily unavailable (stale-while-revalidate). * Content Delivery Networks (CDNs): For static assets or publicly accessible api responses, CDNs can cache data geographically closer to users, significantly reducing latency and acting as a fallback if the origin server experiences issues. * Benefits: Caching not only boosts performance but also acts as a crucial buffer. If a backend database or service becomes temporarily unreachable, the api can still serve stale data from its cache, preventing a complete outage and reducing the perception of missing data for the end-user.

Circuit Breakers and Timeouts: Preventing Cascading Failures

In distributed systems, the failure of one service can quickly cascade and bring down others. Circuit breakers and timeouts are essential patterns for resilience. * Timeouts: Configure aggressive timeouts for all outgoing HTTP calls to dependent services. If a service doesn't respond within a reasonable timeframe, the calling service should stop waiting and handle the timeout (e.g., return a fallback, retry, or propagate an error). This prevents threads from hanging indefinitely and consuming resources. * Circuit Breakers: Implement the circuit breaker pattern (e.g., using libraries like Hystrix or Resilience4j). When a dependency starts failing repeatedly (e.g., a high error rate or consecutive timeouts), the circuit breaker "opens," preventing further calls to that dependency for a defined period. During this open state, requests are immediately failed (or fall back to a cached response) without even attempting to call the unhealthy service. After a configurable "half-open" state, a few requests are allowed through to check if the service has recovered. * Impact on Missing Data: These patterns prevent a slow or failing dependency from consuming all resources, allowing the overall system to remain responsive and preventing complete data unavailability due to cascading failures. Instead of the entire api becoming unresponsive, only the data from the problematic dependency might be missing, and the system can gracefully handle that specific absence.

By meticulously implementing these server-side and api design strategies, organizations can significantly reduce the likelihood and impact of missing data. They create a robust and predictable api ecosystem that is resilient to various failures, forming the bedrock upon which reliable client applications can be built.

APIPark is a high-performance AI gateway that allows you to securely access the most comprehensive LLM APIs globally on the APIPark platform, including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more.Try APIPark now! 👇👇👇

The Pivotal Role of the API Gateway

In modern, often microservice-based architectures, the API Gateway has evolved from a simple reverse proxy to an indispensable component for managing, securing, and optimizing api traffic. When GraphQL isn't the primary data orchestration layer, or when dealing with a multitude of diverse apis (including legacy REST, gRPC, and perhaps even internal AI services), a sophisticated api gateway becomes even more critical. It acts as a single entry point for clients, abstracting the complexities of the backend services, and crucially, offering powerful capabilities to address the challenge of missing data.

What is an API Gateway?

An api gateway sits between clients and a collection of backend services. It serves as a single ingress point, consolidating various cross-cutting concerns that would otherwise need to be implemented in each individual service. This includes:

- Request Routing: Directing incoming client requests to the appropriate backend service based on the request path, host, or other criteria.

- Load Balancing: Distributing incoming traffic across multiple instances of backend services to ensure high availability and performance.

- Authentication and Authorization: Centralizing security concerns by verifying client identities and their permissions before forwarding requests to backend services.

- Rate Limiting: Protecting backend services from abuse or overload by restricting the number of requests a client can make within a specific timeframe.

- Monitoring and Logging: Providing a centralized point for capturing and aggregating

apitraffic metrics and logs, offering visibility into the health and performance of the entireapilandscape.

Advanced Functions for Handling Missing Data

Beyond its fundamental roles, a modern api gateway significantly enhances an organization's ability to handle and mitigate missing data scenarios:

- Data Transformation and Protocol Translation:

- One of the most powerful features of an

api gatewayis its ability to transform requests and responses on the fly. In environments with heterogeneous backend services, data formats and schemas often vary. Agatewaycan normalize incoming client requests before routing them to a specific backend service, ensuring compatibility. More importantly, it can transform disparate backend responses into a consistent, unified format before sending them back to the client. This means that even if an upstream service provides data in a slightly different structure or omits a non-critical field, thegatewaycan apply rules to inject default values, rename fields, or restructure the JSON/XML payload, making the data appear consistent to the client. This ability to "fill in the gaps" or "harmonize" data at the edge greatly reduces the burden on client applications to deal with various backend idiosyncrasies.

- One of the most powerful features of an

- Schema Enforcement and Validation:

- Just as individual services benefit from schema validation, an

api gatewaycan enforce schema adherence at the edge. It can validate incoming request bodies and query parameters against defined schemas (e.g., OpenAPI specifications) before routing them. This prevents malformed requests from ever reaching backend services, reducing their processing load and preventing errors caused by invalid input. Similarly, some advancedgateways can validate outgoing responses against predefined contracts, ensuring that backend services always return data in the expected format. If a backend accidentally omits a required field or sends an incorrect data type, thegatewaycan intercept this, log the error, and either return a standardized error to the client or even attempt to apply a transformation rule to fix it.

- Just as individual services benefit from schema validation, an

- Response Aggregation and Fan-out/Fan-in:

- While not a full GraphQL replacement, a sophisticated

api gatewaycan mimic some of its aggregation capabilities. For requests that require data from multiple backend services, thegatewaycan execute a "fan-out" pattern: sending parallel requests to several upstream services, waiting for their responses, and then aggregating (or "fan-in") these responses into a single, cohesive payload for the client. If one of the backend services fails or returns partial data, thegatewaycan be configured to:- Return a partial response (e.g., the data from the successful services).

- Inject default values for the missing data from the failed service.

- Return a specific error message indicating which parts of the data are unavailable, allowing the client to gracefully handle the absence. This provides a significant layer of resilience and reduces the client's burden of making multiple

apicalls and then manually stitching together responses.

- While not a full GraphQL replacement, a sophisticated

- Caching at the Gateway Level:

- The

api gatewayis an ideal location for implementing caching. By caching frequently accessedapiresponses, thegatewaycan serve requests directly from its cache without forwarding them to backend services. This drastically reduces latency, decreases the load on backend infrastructure, and provides a powerful mechanism for data resilience. If a backend service becomes temporarily unavailable or slow, thegatewaycan still serve stale-but-available data from its cache, configured with appropriateCache-Controlheaders and TTLs. This ensures a consistent user experience even during temporary backend disruptions.

- The

- Error Orchestration and Centralized Error Handling:

- Different backend services might return errors in inconsistent formats. The

api gatewaycan standardize these error responses, transforming various internal error messages into a single, consistent, and client-friendly error structure. This makes it easier for client applications to parse and react to errors, reducing the development effort required for error handling. Furthermore, thegatewaycan be configured to detect specific backend error codes (e.g., a 5xx series error) and respond with a graceful fallback, a custom error message, or even retry the request to a different instance of the service, proactively preventing missing data from reaching the client.

- Different backend services might return errors in inconsistent formats. The

- Monitoring, Logging, and Analytics:

- All

apitraffic flowing through thegatewaycan be meticulously logged and monitored. This provides an invaluable single source of truth for understandingapiusage, performance, and error rates. Detailed logs can capture request and response payloads, latency, HTTP status codes, and any transformations applied. This data is critical for:- Proactive Detection: Identifying spikes in error rates or latency that might indicate an upstream service is struggling to provide data.

- Root Cause Analysis: Quickly tracing the path of a request and diagnosing why data might be missing (e.g., Is it a client error? A

gatewaymisconfiguration? A backend service failure?). - Performance Optimization: Analyzing traffic patterns to optimize caching strategies or identify bottlenecks.

- Security Auditing: Tracking all

apiaccess and detecting unusual patterns that might indicate malicious activity.

- All

For organizations grappling with complex api landscapes and diverse data sources, particularly when embracing AI models, an advanced api gateway becomes paramount. Tools like APIPark offer comprehensive solutions, not just for managing AI and REST services, but also for standardizing API formats, enabling prompt encapsulation into REST apis, and providing robust end-to-end API lifecycle management. Its capability to integrate over 100+ AI models and unify api invocation formats addresses significant data handling challenges, ensuring that even when data structures might vary at the source (e.g., different AI model apis having distinct input/output formats), a consistent and reliable interface is presented to the consumer. APIPark's features, such as powerful data analysis and detailed api call logging, directly contribute to the observability needed to detect and diagnose missing data scenarios efficiently. Its high performance and ability to support cluster deployment also mean it can handle the scale required to ensure consistent data delivery even under heavy load, further minimizing the likelihood of perceived data absence due to performance bottlenecks. The api gateway acts as a crucial control point, providing the flexibility and resilience needed to maintain data integrity and availability across a heterogeneous api ecosystem, bridging the gap when more specialized solutions like GraphQL are not employed across the board.

Client-Side Strategies for Data Resilience

Even with robust api design and a powerful api gateway, the client application remains the final frontier for handling missing data. It's the client's responsibility to gracefully interpret, display, and react to data inconsistencies, ensuring that the user experience remains as smooth and uninterrupted as possible. These client-side strategies are often the most visible aspect of an application's resilience.

Defensive Programming: Guarding Against the Unknown

The most fundamental client-side strategy is to write code that anticipates and safely handles the absence or malformation of data. * Null Checks and Optional Chaining: Never assume data will be present. Explicitly check for null or undefined values before attempting to access properties. Modern JavaScript offers optional chaining (?.) which is incredibly useful for safely accessing nested properties. For example, instead of user.address.street, use user?.address?.street. This prevents runtime errors when intermediate properties are missing. * Default Values: When a piece of data might be missing or null, provide a sensible default. This can be done using the logical OR operator (||) or nullish coalescing operator (??) in JavaScript, or similar constructs in other languages. For example, const userName = userData?.name ?? 'Guest';. * Type Guards and Runtime Validation: While static typing (e.g., TypeScript) helps catch type mismatches at compile time, runtime validation is still crucial, especially when consuming external apis. Libraries like Zod, Yup, or Joi allow you to define schemas for expected api responses and validate incoming data. If the data doesn't conform, you can handle the invalid structure gracefully, perhaps by ignoring the problematic fields, logging a warning, or displaying an error message. * Error Boundaries (React) or Try-Catch Blocks: Isolate potentially error-prone code within error boundaries (in frameworks like React) or try-catch blocks. This prevents a single error due to missing data from crashing the entire application. Instead, only the affected component or section fails gracefully, perhaps displaying a localized error message or a fallback UI.

UI/UX Considerations: Informing and Guiding the User

How an application communicates missing data to the user is as important as how it handles it technically. * Loading States (Skeletons, Spinners): While data is being fetched, display clear loading indicators. Skeleton screens (placeholder UIs that mimic the structure of the content) are particularly effective as they reduce perceived loading times and prepare the user for the incoming content. * Empty States: If an api legitimately returns an empty list (e.g., no search results, no items in a cart), display a thoughtfully designed "empty state" message or illustration. This informs the user that there's no data yet and often provides calls to action (e.g., "Start adding products," "Adjust your filters"). A blank screen is a terrible user experience. * Meaningful Error Messages: When an api call fails or critical data is missing, display user-friendly error messages that explain what happened (in simple terms), suggest possible solutions (e.g., "Check your internet connection," "Try again later"), and provide contact information for support if needed. Avoid cryptic technical jargon. * Retry Mechanisms: For transient network errors or timeouts, provide a "Retry" button. This empowers the user to attempt fetching the data again without leaving the page or refreshing the entire application. * Partial Content Display with Disclaimers: If an application can still function with partial data, display what's available and clearly indicate which parts are missing. For example, "Product details loaded, but reviews are currently unavailable."

Client-Side Caching & Persistence: Local Data Resilience

Clients can store data locally to improve performance and provide resilience against api unavailability. * Browser Caching (HTTP Cache, LocalStorage, SessionStorage): Leverage the browser's built-in HTTP cache for GET requests where api responses include appropriate Cache-Control headers. For more explicit control, LocalStorage and SessionStorage can store non-sensitive data (e.g., user preferences, form drafts) that can persist across sessions or tabs. * IndexedDB: For larger amounts of structured data, IndexedDB provides a powerful, low-level API for client-side storage, suitable for offline-first applications or those that need to perform complex queries on local data. * State Management Libraries with Persistence: Many modern front-end frameworks use state management libraries (e.g., Redux, Vuex, Zustand). These can be configured with persistence layers to save parts of the application state to local storage, allowing the app to reload with some data already present even if api calls fail on startup. * Benefits: Client-side caching reduces reliance on continuous api calls, speeding up initial loads and providing a fallback source of data if the api becomes unreachable, thus reducing instances of perceived "missing data."

Offline-First Approaches: Service Workers and Background Sync

For critical applications, an offline-first strategy offers the highest level of resilience against missing data due to network unavailability. * Service Workers: These are powerful client-side proxies that sit between the browser and the network. They can intercept network requests, cache assets and api responses, and serve them from the cache even when the network is down. * Cache-First, Network-Fallback: A common strategy is to first check the cache for data; if present, serve it immediately. Concurrently (or afterward), fetch fresh data from the network and update the cache. This provides instant loading (no perceived missing data) and keeps data up-to-date. * Background Sync: Service Workers can queue requests when offline and then automatically resend them when network connectivity is restored. This allows users to perform actions (e.g., create a post, send a message) even without a connection, with the api call being deferred until connectivity returns. * Benefits: Offline-first design effectively eliminates "missing data" due to network problems, providing a seamless experience regardless of internet connectivity.

By combining defensive programming with thoughtful UI/UX, robust client-side caching, and advanced offline capabilities, applications can transform potentially disruptive "missing data" scenarios into minor inconveniences, or even unnoticeable events, ensuring a resilient and user-centric experience. The client-side layer is where all the efforts from api design and gateway orchestration ultimately converge to deliver value to the end-user.

Observability and Continuous Improvement

The journey to effectively handle missing data doesn't end with initial design and deployment. It requires continuous vigilance, monitoring, and a feedback loop to identify emerging issues, refine strategies, and proactively improve the system's resilience. Observability, the ability to understand the internal state of a system by examining its external outputs, is paramount in this regard.

Comprehensive Logging and Monitoring: The Eyes and Ears of Your System

Detailed logging and real-time monitoring are the bedrock of any observability strategy, especially crucial for detecting and diagnosing missing data issues. * Granular API Call Logging: Every api request and response should be logged with sufficient detail, ideally at various points in the stack: * Client-side: Log api call initiation, success, and failure, including HTTP status codes, error messages, and response times. * API Gateway: This is a critical choke point. Log all incoming requests, their routing decisions, outgoing requests to backend services, and the corresponding responses. Capture request/response headers, body sizes, latency, and any transformations applied. APIPark, for example, provides comprehensive logging capabilities, recording every detail of each api call, which is invaluable for quickly tracing and troubleshooting issues. * Backend Services: Log request processing, interactions with databases or other dependencies, and the final response generation. * Structured Logging: Use structured logging (e.g., JSON format) to make logs easily parsable and queryable by log aggregation tools (e.g., ELK Stack, Splunk, Datadog). This allows for efficient searching, filtering, and analysis. * Metrics Collection: Collect key performance indicators (KPIs) at every layer: * Request Rates: Total api calls per second/minute. * Error Rates: Percentage of api calls returning 4xx or 5xx errors. Track specific error codes (e.g., 404s, 500s). * Latency: Average, p95, p99 response times for api calls. * Resource Utilization: CPU, memory, network I/O of api gateways and backend services. * Cache Hit Ratios: For any caching layers. * Benefits: By systematically logging and monitoring these metrics, operations and development teams can gain real-time insights into api health, quickly identify anomalies (e.g., a sudden spike in 404s or 500s, increased latency for a specific endpoint), and pinpoint where data might be missing or delayed in the system.

Alerting: Proactive Notification of Anomalies

Monitoring is passive; alerting is proactive. It ensures that the right people are notified immediately when critical issues arise, preventing minor problems from escalating into major outages. * Threshold-Based Alerts: Configure alerts based on predefined thresholds for key metrics. Examples include: * API error rate exceeding 5% for more than 5 minutes. * Latency of a critical api endpoint exceeding 500ms for 10 consecutive minutes. * Number of 404 (Not Found) responses increasing rapidly. * Backend service CPU utilization consistently above 80%. * Impact on Missing Data: Timely alerts enable teams to react quickly to situations where data might be missing or inaccessible. For instance, an alert on a sudden surge of 503 (Service Unavailable) errors from a specific backend could indicate a failure to provide data, prompting immediate investigation. An alert on a high number of 404s for a previously working endpoint could signal an accidental deletion or a client making incorrect requests.

Distributed Tracing: Understanding the Full Request Path

In microservice architectures, a single client request can trigger a complex chain of calls across multiple services, databases, and api gateways. Distributed tracing provides an end-to-end view of these interactions. * Trace IDs Propagation: A unique trace ID is generated at the entry point (e.g., api gateway or client) and propagated through all subsequent service calls. * Span Collection: Each step in the request's journey (e.g., api gateway processing, service A call, database query, service B call) is recorded as a "span," with its own duration, metadata, and parent-child relationships. * Visualization: Tools like Jaeger, Zipkin, or OpenTelemetry visualize these traces, showing the latency of each span and highlighting bottlenecks or failures in the request path. * Benefits for Missing Data: If a client reports missing data, a trace can reveal exactly where the data was dropped or failed to be retrieved within the distributed system. Was it a timeout accessing the database? A misconfigured downstream service? Or an error in the api gateway's transformation logic? Tracing provides the granular visibility needed to answer these questions quickly.

Powerful Data Analysis: Unveiling Patterns and Trends

Beyond real-time monitoring and tracing, historical data analysis is crucial for long-term improvement and proactive maintenance. * Trend Analysis: Analyze historical call data to display long-term trends and performance changes. APIPark, for instance, excels at this, helping businesses with preventive maintenance before issues occur. This can reveal seasonal spikes in traffic that strain certain apis, or gradual increases in latency that suggest a growing bottleneck. * Correlation: Correlate api performance and error rates with deployments, configuration changes, or even external events. This helps in understanding the root causes of performance degradations or increased missing data incidents. * Anomaly Detection: Use machine learning techniques to detect unusual patterns in api usage or error rates that might not trigger simple threshold alerts. This can uncover subtle issues before they become critical. * Usage Patterns: Understand how clients are consuming your apis. Are certain endpoints frequently requested but rarely returning useful data? Are clients making unnecessarily complex queries? This data can inform api design improvements.

Feedback Loops and Continuous Improvement

The insights gained from observability tools must feed back into the development lifecycle. * Regular Review Meetings: Schedule regular operational reviews where teams discuss api performance, error trends, and incidents related to missing data. * Post-Incident Reviews (PIRs): For every significant incident involving missing data or api unavailability, conduct a thorough PIR to understand the root cause, identify contributing factors, and define actionable improvements (e.g., update api schema, implement a new gateway policy, enhance client-side fallback). * Refining API Design: Use insights from missing data incidents to refine api schemas (e.g., making optional fields more explicit, adding default values), improve error messages, or introduce new endpoints that provide data more reliably. * Updating Gateway Configurations: Leverage the api gateway's flexibility to implement new transformation rules, caching policies, or rate limits based on observed data patterns. * Client-Side Iteration: Educate client developers on common api error patterns and best practices for defensive programming and UI/UX for missing data.

By embracing a culture of continuous observability and improvement, organizations can evolve their api landscape to be exceptionally resilient to missing data, ensuring that their applications remain robust, performant, and reliable even in the face of inevitable challenges. This iterative process is the hallmark of mature api governance and a commitment to providing an outstanding user experience.

Conclusion

The notion of "GraphQL Not Exist?" prompts a vital exploration into the broader, foundational challenges of data handling in modern software ecosystems. While GraphQL offers an elegant solution for precise data fetching, its absence from a given architectural stack, or even its coexistence with other paradigms, underscores a universal truth: data integrity, availability, and resilience are paramount, regardless of the specific api technology employed. The problem of "missing data" is not a fringe concern but a pervasive reality stemming from diverse sources – from network instability and schema mismatches to upstream service failures and inherent data scarcity.

This comprehensive analysis has demonstrated that effectively managing missing data requires a multi-faceted, layered approach, starting with the very design of the apis themselves. Robust server-side strategies, including meticulous schema definition, intelligent api versioning, standardized error handling, and the implementation of patterns like graceful degradation, idempotency, and circuit breakers, establish the initial bulwark against data inconsistencies. These pre-emptive measures build an api contract that is both explicit and resilient, laying the groundwork for predictable interactions.

Central to this resilience, especially in heterogeneous api environments, is the API Gateway. Acting as a sophisticated control plane at the edge of the network, the api gateway transcends mere traffic routing. Its advanced capabilities in data transformation, schema enforcement, response aggregation, and centralized error orchestration are instrumental in harmonizing disparate data sources and shielding clients from backend complexities and failures. Platforms like APIPark exemplify how a modern api gateway can unify the management of diverse services, including AI models, providing consistency and reliability where data structures might otherwise vary wildly. Its robust logging and analytical capabilities further empower organizations to gain deep insights into api health, proactively identify issues, and address them before they impact users.

Finally, the client-side plays an indispensable role in closing the loop on data resilience. Through defensive programming techniques, thoughtful UI/UX design for error and empty states, judicious client-side caching, and even embracing offline-first architectures with service workers, applications can present a seamless and robust experience to the end-user, even when confronted with partial or entirely absent data.

In essence, the goal is not merely to fetch data, but to ensure a reliable, consistent, and user-friendly data experience across the entire application stack. This demands continuous vigilance through comprehensive observability – logging, monitoring, alerting, and distributed tracing – which provides the necessary insights to drive an iterative process of improvement. By embracing these holistic strategies, organizations can build software systems that are not just functional, but truly resilient, inspiring trust and delivering uninterrupted value in a world where data is both omnipresent and, at times, elusive. The conversation is not whether GraphQL exists, but how we proactively engineer our systems to thrive in a complex, data-driven reality, leveraging every tool at our disposal, from granular api design to intelligent api gateways and resilient client implementations.

5 FAQs

- What are the primary reasons an organization might not use GraphQL, and how does this impact data handling?

- Organizations might opt out of GraphQL due to existing legacy REST/RPC systems, the overhead for simpler use cases, specific architectural preferences, caching complexities, or a lack of team expertise. This impacts data handling by requiring clients to interact with multiple

apiendpoints, potentially leading to over/under-fetching, and necessitates robust server-side data aggregation, transformation, and stringent API Gateway policies to ensure data consistency and availability.

- Organizations might opt out of GraphQL due to existing legacy REST/RPC systems, the overhead for simpler use cases, specific architectural preferences, caching complexities, or a lack of team expertise. This impacts data handling by requiring clients to interact with multiple

- How can an API Gateway specifically help in handling "missing data" scenarios beyond basic routing?

- An

api gatewaysignificantly aids by performing data transformation (normalizing responses from diverse backends), schema enforcement (validating inputs and outputs), response aggregation (combining data from multiple services), and centralized error orchestration (standardizing error messages). It also provides critical caching at the edge and comprehensive logging, which are crucial for improving data availability and diagnosing missing data issues.

- An

- What is the importance of "idempotency" in API design when dealing with potential missing data?

- Idempotency ensures that making the same

apirequest multiple times has the same effect as making it once. This is vital when handling missing data due to network timeouts or intermittent failures because the client can safely retry a request (e.g., aPOSTwith an idempotency key) without fear of creating duplicate resources or unintended side effects. This improves the reliability of data delivery and reduces the perception of data absence.

- Idempotency ensures that making the same

- How do client-side strategies contribute to a resilient application experience when data is missing?

- Client-side strategies are the final layer of defense. They include defensive programming (null checks, optional chaining, default values), thoughtful UI/UX (loading states, empty states, clear error messages, retry mechanisms), and client-side caching/persistence (LocalStorage, IndexedDB). Advanced approaches like offline-first design with Service Workers ensure that applications remain functional and provide data even in the absence of network connectivity, significantly mitigating the impact of missing data on the user experience.

- Why is "observability" critical for continuous improvement in handling missing data?

- Observability, encompassing detailed logging, real-time monitoring, alerting, and distributed tracing, is crucial because it provides deep insights into the internal state of the system. It allows teams to proactively detect anomalies (e.g., spikes in 404s or 5xx errors), quickly diagnose the root cause of missing data (e.g., a specific service failure or a

gatewaymisconfiguration), and understand long-term performance trends. These insights feed back into the development cycle, enabling continuous refinement ofapidesign,api gatewayconfigurations, and client-side resilience strategies.

- Observability, encompassing detailed logging, real-time monitoring, alerting, and distributed tracing, is crucial because it provides deep insights into the internal state of the system. It allows teams to proactively detect anomalies (e.g., spikes in 404s or 5xx errors), quickly diagnose the root cause of missing data (e.g., a specific service failure or a

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.