How to Asynchronously Send Information to Two APIs

In the vast and interconnected landscape of modern software development, applications rarely exist in isolation. They are intricate tapestries woven from countless services, both internal and external, each serving a specific purpose. From processing user authentication to updating inventory, sending notifications, or integrating with third-party payment gateways, the interaction with Application Programming Interfaces (APIs) forms the very backbone of today's digital experiences. As these systems grow in complexity and the demand for instant responsiveness escalates, the traditional synchronous model of API communication, where one operation must fully complete before the next can begin, often becomes a bottleneck, a chokepoint that can degrade performance, exhaust resources, and ultimately frustrate users.

Imagine a scenario where a single user action, such as submitting an order on an e-commerce platform, needs to trigger a cascade of operations: updating the order database, decrementing inventory, notifying the shipping department, sending an email confirmation to the customer, and perhaps even logging the transaction for analytical purposes. If each of these calls to various apis were executed sequentially, waiting for the full round-trip of each request and response, the user would experience significant delays. This lag can lead to abandoned carts, a perception of unreliability, and a general loss of business. The inherent limitations of this blocking pattern necessitate a more sophisticated approach: asynchronous communication.

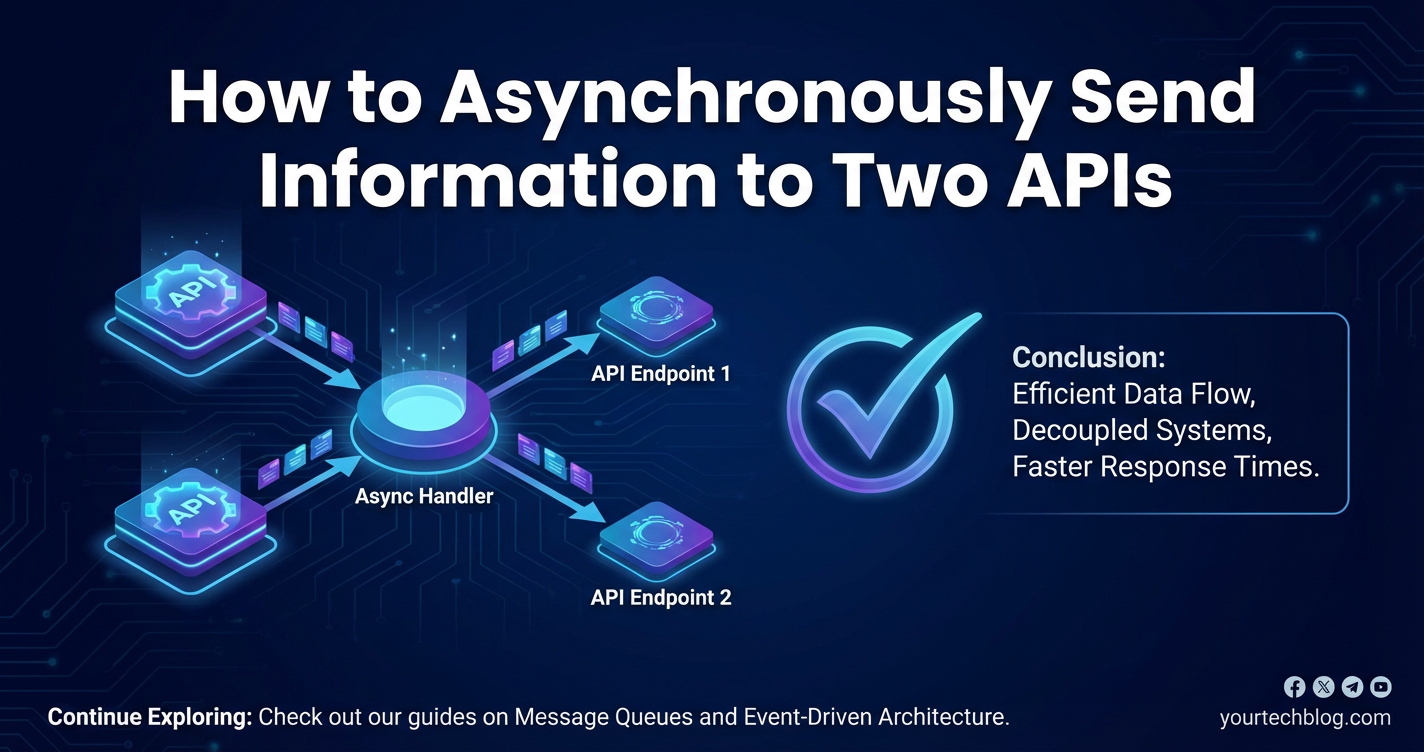

Asynchronous processing revolutionizes how applications interact with multiple services, particularly when information needs to be dispatched to two or more independent apis. Instead of halting all other operations while awaiting a response, an asynchronous system dispatches a request and then immediately frees itself to perform other tasks. The response, when it eventually arrives, is handled by a callback mechanism, a promise resolution, or a message consumed from a queue, without ever blocking the main flow of execution. This paradigm shift not only dramatically improves the responsiveness and user experience of an application but also significantly enhances its scalability, resilience, and overall efficiency, making it an indispensable technique for any developer grappling with the challenges of distributed systems. This article will delve deep into the "why" and "how" of asynchronously sending information to two APIs, exploring the underlying principles, practical architectures, common technologies, and best practices that underpin this crucial architectural pattern, including the pivotal role played by an api gateway in orchestrating such complex interactions.

Understanding Synchronous vs. Asynchronous API Communication

Before we embark on the journey of sending information asynchronously to multiple APIs, it is fundamental to grasp the distinction between synchronous and asynchronous communication models. This foundational understanding will illuminate why the latter is overwhelmingly preferred for modern, performance-critical applications, especially when integrating with numerous external services.

The Nature of Synchronous API Calls

In a synchronous communication model, the execution of a program or a process pauses and waits for an initiated operation to complete before it can proceed to the next step. When an application makes a synchronous api call, it essentially sends a request and then enters a waiting state. It remains in this state, consuming resources and doing nothing else useful, until the api server responds, or until a timeout occurs.

Consider an analogy: you are trying to bake a cake. In a synchronous world, if you need to buy eggs from the store, you would drive to the store, buy the eggs, come back home, and only then would you start measuring flour. While you are driving to the store and waiting in line, your cake preparation is completely halted. Similarly, in software, a synchronous api call means your application is idle, waiting for a remote server to process its request, communicate over the network, and return a response.

Pros of Synchronous Communication: * Simplicity: The flow of control is straightforward and easy to reason about. Each step follows the previous one logically. * Predictability: The order of operations is guaranteed. * Debugging: Tracing execution paths can be simpler since operations happen sequentially.

Cons of Synchronous Communication: * Performance Bottlenecks: The primary drawback. Long-running api calls or network latency directly translate into application delays. If one api call takes 500ms, your application waits 500ms. If you have two such calls, it's 1000ms. * Resource Inefficiency: While waiting, the thread or process making the api call remains active but unproductive, holding onto memory and CPU cycles that could otherwise be used for other tasks. * Poor User Experience: In user-facing applications, synchronous operations can lead to frozen UIs, unresponsive interfaces, and frustrating wait times. * Cascading Failures: A single slow or failing api can block an entire process, potentially impacting other parts of the system that rely on that process. * Scalability Challenges: To handle more concurrent requests, you often need more threads or processes, which consume more resources and have an upper limit.

When is synchronous communication appropriate? In very specific, often internal scenarios where the latency is negligible, or where strict sequential dependency is paramount and immediate failure feedback is critical. However, for calls to external services, or even distinct internal microservices over a network, synchronous calls are generally detrimental to performance and scalability.

The Power of Asynchronous API Calls

Asynchronous communication, by contrast, is a non-blocking model. When an application makes an asynchronous api call, it dispatches the request and immediately continues with its other tasks, without waiting for the response. The application registers a mechanism (like a callback function, a Promise, or an await instruction in conjunction with async) to handle the response whenever it arrives.

Returning to our baking analogy: in an asynchronous world, when you realize you need eggs, you call the store and ask them to set aside a carton for you, perhaps even arrange for delivery. While you wait for the eggs to be ready or delivered, you can continue measuring flour, preheating the oven, or preparing other ingredients. Your primary task of baking is not halted by the dependency on eggs. Similarly, an asynchronous api call allows your application to send a request to one api, then immediately send a request to a second api, and then perhaps perform some local computation, all while waiting for the network responses to arrive independently.

Pros of Asynchronous Communication: * Enhanced Responsiveness: The application's main thread is never blocked, leading to a fluid and responsive user experience. * Improved Scalability: Non-blocking I/O allows a single thread or process to manage many concurrent api calls, drastically increasing throughput with fewer resources. This is particularly evident in environments like Node.js or with Python's asyncio. * Efficient Resource Utilization: Resources are not tied up waiting; they are used for productive work, leading to better overall system efficiency. * Fault Tolerance: If one api call is slow or fails, it doesn't necessarily block other operations or bring down the entire system. Independent error handling and retry mechanisms can be implemented. * Decoupling: Services can operate more independently, reducing interdependencies and making the system more modular and easier to maintain.

Core Concepts in Asynchronous Programming: * Callbacks: Functions passed as arguments to other functions, to be executed once the asynchronous operation is complete. * Promises: Objects that represent the eventual completion (or failure) of an asynchronous operation and its resulting value. They provide a cleaner way to handle callbacks and chain asynchronous operations. * Async/Await: Syntactic sugar built on Promises, making asynchronous code look and feel more like synchronous code, improving readability and maintainability. * Event Loops: A programming construct that waits for and dispatches events or messages in a program. Often used in single-threaded asynchronous environments. * Message Queues: A powerful pattern for robust asynchronous communication between distributed services, offering decoupling and durability.

In virtually all modern distributed systems, and especially when dealing with external apis, asynchronous communication is not merely an option but a necessity. It is the cornerstone of building high-performance, scalable, and resilient applications that can gracefully handle the inherent latencies and potential unreliability of network interactions.

Why Asynchronously Send Information to Two APIs?

The need to communicate with multiple APIs is a commonplace requirement in today's interconnected software ecosystem. However, the specific decision to dispatch information to two distinct apis in an asynchronous manner often stems from a combination of performance, reliability, and architectural considerations. This approach allows an application to perform multiple, independent operations concurrently, without one waiting for the other, which unlocks significant advantages across various common scenarios.

Common Scenarios Demanding Asynchronous Multi-API Dispatch

- Data Duplication and Synchronization Across Disparate Systems: Often, a single piece of information needs to be stored or updated in multiple systems that serve different purposes. For example:

- E-commerce Order Fulfillment: When a customer places an order, the order details must be recorded in the primary order management system. Simultaneously, the inventory system needs to update stock levels, and a separate customer relationship management (CRM) system might need to create or update the customer's purchase history. These are typically independent services, and waiting for each to respond synchronously would introduce unacceptable delays.

- User Registration: Upon a new user signing up, their details are stored in the main authentication database. Concurrently, their profile might be added to an email marketing platform, an analytics service for tracking, and perhaps an internal billing system. Each of these actions can happen in parallel.

- Content Management: When an article is published, it might be saved to a database, and also pushed to a search index

api(like Elasticsearch or Algolia) to make it immediately searchable, and potentially to a caching service.

- Event-Driven Architectures and Fan-out Patterns: In an event-driven system, a single event (e.g., "ProductUpdated" or "UserCreated") can trigger multiple downstream reactions.

- Notification Systems: A user action (e.g., a transaction completing) might trigger a notification

apito send an SMS and simultaneously a differentapito send an email. These are distinct communication channels handled by different services, and neither should block the other. - Microservices Orchestration: In a microservices landscape, one service might publish an event that multiple other services are interested in. For instance, a "Payment Processed" event might trigger a "Generate Invoice" service and a "Update Customer Loyalty Points" service. Asynchronously sending information (the event itself) to the

apis of these services allows for maximum decoupling and parallel execution. This is a classic fan-out pattern where a single input leads to multiple parallel outputs.

- Notification Systems: A user action (e.g., a transaction completing) might trigger a notification

- Auditing, Logging, and Analytics: Many operations require a primary action and a secondary, non-critical action related to auditing or analytics.

- Transaction Logging: While the core transaction proceeds, a separate, non-blocking call can be made to an

apithat records detailed logs in an audit trail system or a data lake for later analysis. If the loggingapiis temporarily unavailable, the primary transaction should still succeed. - Performance Metrics: Alongside delivering core service functionality, applications might asynchronously push performance metrics or user behavior data to an analytics

apito avoid impacting the user's primary interaction.

- Transaction Logging: While the core transaction proceeds, a separate, non-blocking call can be made to an

- Third-Party Integrations: Integrating with external vendors often involves communicating with multiple, independent

apis.- Payment Gateways: When processing a payment, an application might send card details to a payment processor

api. In parallel, it might send order details to a fraud detectionapifrom a different vendor. The payment process itself should ideally not wait for the fraud check to complete, or at least the initial submission to the payment gateway can be non-blocking. - Shipping Services: An order dispatch could trigger a call to a primary carrier

api(e.g., FedEx) to generate a shipping label, and simultaneously another call to a customsapifor international shipments, or a different logistics partner for specialized deliveries.

- Payment Gateways: When processing a payment, an application might send card details to a payment processor

Key Advantages of Asynchronous Dispatch to Two APIs

- Superior Performance and Responsiveness: The most immediate and tangible benefit. By initiating both

apicalls almost simultaneously, the overall latency observed by the user or the calling system is significantly reduced. The total time taken is dictated by the longest of the twoapicalls, rather than their sum. - Enhanced Resilience and Fault Isolation: If one of the two

apis is slow, unresponsive, or fails, the otherapicall can still proceed without being blocked. This allows for independent error handling, retries, or even gracefully degraded functionality. The failure of a non-criticalapi(e.g., analytics logging) doesn't have to prevent the success of a critical one (e.g., order placement). - Improved Resource Utilization: The application's resources (CPU, memory, network connections) are used more efficiently. Threads or processes aren't idling; they are either dispatching requests or performing other work, leading to higher throughput.

- Increased Decoupling and Modularity: Separating concerns into independent

apicalls, especially when facilitated by message queues or event buses, promotes architectural decoupling. Services become more modular, easier to develop, test, and deploy independently. - Better User Experience: For user-facing applications, this translates directly into a faster, smoother, and more reliable experience, which is paramount for retention and satisfaction.

The decision to asynchronously send information to two APIs is therefore a strategic one, driven by the desire to build highly performant, resilient, and scalable systems that can gracefully navigate the complexities of distributed environments. It's an architectural choice that embraces concurrency to overcome the inherent limitations of sequential processing, allowing applications to react swiftly and reliably to dynamic operational demands.

Architectural Patterns and Technologies for Asynchronous API Calls

Implementing asynchronous communication, particularly when targeting multiple APIs, requires careful consideration of architectural patterns and the selection of appropriate technologies. The choice often depends on factors such as the scale of the application, the criticality of the data, the desired level of decoupling, and the underlying programming environment. We'll explore various approaches, from client-side techniques to robust server-side solutions, and the critical role of an api gateway.

Client-Side Asynchrony (Browser/Mobile)

While the focus is often on server-side logic, client-side applications (web browsers, mobile apps) also frequently need to make parallel asynchronous calls to multiple APIs to populate a UI quickly or send data.

Promise.all(): This is a powerful construct for sending multiple independent requests concurrently.Promise.all()takes an iterable of Promises and returns a single Promise that resolves when all of the input Promises have resolved, or rejects if any of them reject.

JavaScript fetch API and XMLHttpRequest (XHR): These are the fundamental mechanisms for making HTTP requests from a browser. Modern JavaScript heavily leverages Promises and the async/await syntax to manage these asynchronous operations gracefully.```javascript async function sendDataToTwoAPIsClientSide(data) { const api1Url = 'https://api.example.com/service1/data'; const api2Url = 'https://api.example.com/service2/log';

try {

const [response1, response2] = await Promise.all([

fetch(api1Url, {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify(data.primary)

}),

fetch(api2Url, {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify(data.secondary) // Different data or partial data

})

]);

// Check responses

if (!response1.ok) throw new Error(`API 1 failed: ${response1.statusText}`);

if (!response2.ok) throw new Error(`API 2 failed: ${response2.statusText}`);

const result1 = await response1.json();

const result2 = await response2.json();

console.log('API 1 Response:', result1);

console.log('API 2 Response:', result2);

return { result1, result2 };

} catch (error) {

console.error('Failed to send data to APIs:', error);

// Handle specific errors for each API if needed

throw error;

}

}// Example Usage: // sendDataToTwoAPIsClientSide({ primary: { key: 'value' }, secondary: { log: 'event' } }); ``` This approach ensures that both API calls are initiated almost simultaneously, and the client waits only for the longest one to complete.

Server-Side Asynchrony (Backend)

The server-side offers more robust and diverse mechanisms for asynchronous communication, often leveraging operating system features or specialized libraries.

1. Threads/Processes (Traditional Concurrency)

Many traditional programming languages (Java, C#, Python, Ruby) allow developers to spawn new threads or processes to handle concurrent tasks. When sending data to two APIs, one could theoretically create a new thread for each api call.

- Thread Pools: Managing individual threads can be complex and resource-intensive. Thread pools provide a more efficient way to manage a fixed number of worker threads that can execute tasks concurrently.

- Pros: Familiar model for many developers, robust for CPU-bound tasks.

- Cons: High overhead for I/O-bound tasks (like

apicalls), thread switching costs, complex synchronization issues (deadlocks, race conditions), and scalability limitations due to context switching and memory footprint per thread. This approach is generally less efficient for large numbers of concurrent I/O operations compared to non-blocking I/O.

2. Event Loops and Non-blocking I/O (Modern Concurrency)

This model is particularly effective for I/O-bound operations like api calls. Instead of blocking a thread while waiting for I/O, the system registers a callback and continues processing other tasks. When the I/O operation completes, the callback is placed in an event queue to be processed by the event loop.

- Node.js: A single-threaded JavaScript runtime built on the V8 engine, famously uses an event loop for non-blocking I/O.

- Python

asyncio: Python's standard library for writing concurrent code using theasync/awaitsyntax, based on an event loop.

Go Goroutines: Lightweight, multiplexed green threads managed by the Go runtime. They are excellent for concurrent I/O and CPU-bound tasks.```python import asyncio import httpx # A modern async HTTP client for Pythonasync def send_data_to_two_apis_server_side_async(data_primary, data_secondary): api1_url = 'https://api.example.com/primary' api2_url = 'https://api.example.com/secondary'

async with httpx.AsyncClient() as client:

tasks = [

client.post(api1_url, json=data_primary),

client.post(api2_url, json=data_secondary)

]

responses = await asyncio.gather(*tasks, return_exceptions=True) # Gather results concurrently

results = []

for i, res in enumerate(responses):

if isinstance(res, httpx.Response):

if res.status_code == 200:

print(f"API {i+1} Success: {res.json()}")

results.append(res.json())

else:

print(f"API {i+1} Failed with status {res.status_code}: {res.text}")

results.append({'error': f'API {i+1} failed', 'status': res.status_code})

else:

print(f"API {i+1} Exception: {res}")

results.append({'error': f'API {i+1} exception', 'details': str(res)})

return results

Example Usage:

asyncio.run(send_data_to_two_apis_server_side_async({"user": "Alice"}, {"log": "signup"}))

`` This model allows for highly scalable and efficient handling of numerous concurrentapi` calls using minimal threads.

3. Message Queues (Robust Asynchrony and Decoupling)

For maximum decoupling, resilience, and scalability, message queues are an excellent solution. They introduce an intermediary layer between the producer of information and the consumers (the APIs).

- Concept:

- A "producer" (your application) publishes a message (the information to be sent) to a queue or topic.

- The message broker (e.g., RabbitMQ, Apache Kafka, AWS SQS, Azure Service Bus) stores the message reliably.

- "Consumers" (separate services designed to interact with each target

api) subscribe to the queue/topic. - When a consumer picks up a message, it then calls its respective

api.

- Workflow for Two APIs:

- Your application publishes a message (e.g., "OrderPlaced" event) to a central message queue.

- Two separate consumer services are listening:

- Consumer A: Consumes the message, processes it, and then makes an

apicall toAPI 1(e.g., Inventory Service). - Consumer B: Consumes the same message (or a different message if fanned out by the broker), processes it, and then makes an

apicall toAPI 2(e.g., CRM Service).

- Consumer A: Consumes the message, processes it, and then makes an

- Benefits:

- Decoupling: The sending application doesn't need to know about the target APIs or their availability. It just sends a message.

- Resilience: If an

apior a consumer service is down, the message remains in the queue and can be processed later (retries). - Scaling: Consumers can be scaled independently of the producer.

- Load Balancing: Message brokers can distribute messages across multiple instances of consumer services.

- Eventual Consistency: Data might not be updated across all systems instantaneously, but it will eventually become consistent.

- Trade-offs: Adds architectural complexity, introduces another component to manage (the message broker), and requires careful handling of message processing and idempotency.

4. Serverless Functions

Cloud serverless platforms (AWS Lambda, Azure Functions, Google Cloud Functions) provide an excellent environment for asynchronous api interactions, particularly in event-driven architectures.

- Concept: A serverless function can be triggered by various events (e.g., an HTTP request, a new message in a queue, a file upload). Once triggered, it executes a specific piece of code.

- For Two APIs:

- A single incoming event (e.g., an HTTP POST request to your API Gateway, or a message in an SQS queue) triggers a Lambda function.

- Within that Lambda function, you can make two parallel asynchronous calls to your target APIs using the

async/awaitpattern (similar to the Python or Node.js examples above). - Alternatively, the initial function could fan out to trigger two separate Lambda functions, each responsible for calling one specific

api. This offers even greater isolation. - Orchestration services like AWS Step Functions can coordinate complex multi-step workflows involving multiple serverless functions and

apicalls, adding state management and robust error handling.

The Role of an API Gateway

In architectures involving multiple apis, especially when dealing with asynchronous interactions and diverse backend services, an api gateway emerges as a critical component. An api gateway acts as a single entry point for all clients, abstracting the complexity of the backend services. It centralizes functionalities that would otherwise be duplicated across multiple services or require complex client-side logic.

- Centralized Routing and Traffic Management: An api gateway can receive a single request and then intelligently route it to one or more backend services. This includes load balancing and versioning.

- Authentication and Authorization: It can enforce security policies across all

apis, offloading this responsibility from individual microservices. - Rate Limiting and Throttling: Protects backend services from being overwhelmed by too many requests.

- Request/Response Transformation: Modifies request or response payloads to suit different client needs or backend service contracts.

- Cross-Cutting Concerns: Handles logging, monitoring, caching, and analytics uniformly.

- Orchestration and Fan-out: A sophisticated api gateway can be configured to take a single incoming request and fan it out to multiple internal or external

apis in parallel. It can then aggregate the responses (if needed, though this article focuses on sending) or simply dispatch and acknowledge the successful dispatch.

For complex scenarios where you're sending information to two or more APIs, an api gateway doesn't necessarily make the internal logic of asynchronous calls easier (e.g., writing async/await code), but it simplifies the management and exposure of these complex interactions to external consumers. It acts as a facade, making a multi-api interaction appear as a single, well-defined api endpoint.

Consider a platform like ApiPark. As an open-source AI gateway and API management platform, APIPark is designed precisely for managing, integrating, and deploying AI and REST services. In the context of asynchronously sending information to two APIs, APIPark can play several pivotal roles:

- Unified API Management: It provides a single pane of glass to manage both API 1 and API 2, even if they are from different providers or internal teams. Its "End-to-End API Lifecycle Management" helps regulate the entire process from design to invocation.

- Traffic Forwarding and Load Balancing: If your internal service that initiates the two async calls is behind APIPark, or if APIPark itself is configured to fan out, it can efficiently manage the traffic to the downstream

apis. - Security and Access Control: APIPark's features like "API Resource Access Requires Approval" and "Independent API and Access Permissions for Each Tenant" are crucial when orchestrating calls to multiple sensitive

apis, ensuring that only authorized requests proceed. - Monitoring and Logging: When you have complex asynchronous flows, tracking each

apicall becomes challenging. APIPark's "Detailed API Call Logging" and "Powerful Data Analysis" features are invaluable. They record every detail of eachapicall, allowing you to trace, troubleshoot, and analyze the performance of your asynchronous dispatches to both APIs, ensuring system stability and data security. - Performance: With "Performance Rivaling Nginx," APIPark can handle the high throughput required for managing numerous asynchronous

apicalls efficiently, even under heavy load, ensuring the gateway itself doesn't become a bottleneck.

In essence, while your application code might handle the direct async/await calls to two APIs, an api gateway like ApiPark provides the surrounding infrastructure to make these interactions secure, manageable, observable, and scalable, especially as the number of APIs and their interactions grow. It simplifies the operational burden and enhances the overall reliability of your multi-API asynchronous architecture.

APIPark is a high-performance AI gateway that allows you to securely access the most comprehensive LLM APIs globally on the APIPark platform, including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more.Try APIPark now! 👇👇👇

Implementation Strategies and Code Examples (Conceptual)

Having understood the "why" and explored various architectural patterns, let's now delve into concrete implementation strategies for asynchronously sending information to two APIs. We'll examine basic parallel execution, the more robust event-driven approach using message queues, and the advanced orchestration capabilities of an api gateway.

1. Parallel Execution (Basic async/await or Promises)

This is the most direct and common method for concurrent execution within a single process or thread utilizing non-blocking I/O. It's suitable when the two api calls are relatively independent and you need to get responses as quickly as possible.

Core Idea: Initiate both api calls almost simultaneously and wait for both to complete. The total time taken will be roughly the duration of the longest api call, plus any network overhead and local processing time.

Python with asyncio and httpx:

import asyncio

import httpx

import json # For illustrative data

async def send_parallel_api_requests(primary_data, secondary_data):

"""

Sends data to two distinct APIs in parallel using Python's asyncio.

"""

api_endpoint_1 = "https://api.example.com/process_order" # Hypothetical order processing API

api_endpoint_2 = "https://api.example.com/log_audit" # Hypothetical audit logging API

async with httpx.AsyncClient() as client:

# Create coroutine tasks for each API call

task1 = client.post(api_endpoint_1, json=primary_data, timeout=10) # 10-second timeout

task2 = client.post(api_endpoint_2, json=secondary_data, timeout=5) # Shorter timeout for non-critical audit

print("Initiating parallel API calls...")

try:

# Await both tasks. asyncio.gather runs them concurrently.

# return_exceptions=True ensures that if one task fails, the other can still complete,

# and the exception is returned as a result instead of crashing asyncio.gather.

results = await asyncio.gather(task1, task2, return_exceptions=True)

response1_result = results[0]

response2_result = results[1]

# --- Process API 1 Response ---

if isinstance(response1_result, httpx.Response):

if response1_result.is_success:

print(f"API 1 (Order Process) Succeeded: Status {response1_result.status_code}")

print(f"API 1 Body: {response1_result.json()}")

# Further processing for primary_data success

else:

print(f"API 1 (Order Process) Failed: Status {response1_result.status_code}, Error: {response1_result.text}")

# Handle primary_data failure: log, trigger retry, notify admin etc.

elif isinstance(response1_result, Exception):

print(f"API 1 (Order Process) Exception: {type(response1_result).__name__} - {response1_result}")

# Handle connection errors, timeouts for primary_data

# --- Process API 2 Response ---

if isinstance(response2_result, httpx.Response):

if response2_result.is_success:

print(f"API 2 (Log Audit) Succeeded: Status {response2_result.status_code}")

print(f"API 2 Body: {response2_result.json()}")

# Audit logging is often non-critical, so a success is good, failure might be just logged.

else:

print(f"API 2 (Log Audit) Failed: Status {response2_result.status_code}, Error: {response2_result.text}")

# Audit logging failure might be acceptable, but still log for investigation.

elif isinstance(response2_result, Exception):

print(f"API 2 (Log Audit) Exception: {type(response2_result).__name__} - {response2_result}")

# Handle audit logging connection errors, timeouts.

return {

"api1_response": response1_result.json() if isinstance(response1_result, httpx.Response) and response1_result.is_success else None,

"api2_response": response2_result.json() if isinstance(response2_result, httpx.Response) and response2_result.is_success else None,

"errors": [str(r) for r in results if isinstance(r, Exception) or (isinstance(r, httpx.Response) and not r.is_success)]

}

except Exception as e:

print(f"An unexpected error occurred during parallel API calls: {e}")

raise # Re-raise or handle as appropriate

# Example Usage

async def main():

order_data = {"order_id": "ORD12345", "user_id": "U001", "items": [{"prod_id": "P001", "qty": 2}]}

audit_data = {"event_type": "OrderPlaced", "timestamp": "2023-10-27T10:30:00Z", "user_id": "U001"}

print("\n--- Scenario 1: Both APIs succeed ---")

# For a real run, mock httpx requests or use actual endpoints

# For demonstration, assume mocks or successful calls.

# results = await send_parallel_api_requests(order_data, audit_data)

# print("Final Results:", results)

print("\n--- Scenario 2: One API fails (conceptual) ---")

# Imagine API 1 returns 500 or times out, while API 2 succeeds.

# The return_exceptions=True in asyncio.gather would allow API 2 to complete.

# The error handling within the function would differentiate between success/failure.

if __name__ == "__main__":

# In a real application, this would be integrated into a web framework or background task runner.

# asyncio.run(main())

print("Conceptual code: To run, replace example.com with real endpoints or use mocking.")

print("The primary mechanism is `await asyncio.gather(task1, task2, ...)`")

JavaScript with Promise.all (Frontend or Node.js Backend):

async function sendParallelApiRequestsJS(primaryData, secondaryData) {

const apiEndpoint1 = "https://api.example.com/process_payment"; // Payment API

const apiEndpoint2 = "https://api.example.com/notify_customer"; // Notification API

console.log("Initiating parallel API calls...");

try {

const [response1, response2] = await Promise.allSettled([ // Promise.allSettled ensures all promises settle, even if some reject

fetch(apiEndpoint1, {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify(primaryData)

}),

fetch(apiEndpoint2, {

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: JSON.stringify(secondaryData)

})

]);

let paymentResult = null;

let notificationResult = null;

const errors = [];

// Process Payment API Response

if (response1.status === 'fulfilled') {

if (response1.value.ok) {

paymentResult = await response1.value.json();

console.log("Payment API Succeeded:", paymentResult);

} else {

const errorText = await response1.value.text();

errors.push(`Payment API Failed: Status ${response1.value.status}, Error: ${errorText}`);

console.error(`Payment API Failed: Status ${response1.value.status}, Error: ${errorText}`);

}

} else { // response1.status === 'rejected'

errors.push(`Payment API Exception: ${response1.reason.message}`);

console.error("Payment API Exception:", response1.reason);

}

// Process Notification API Response

if (response2.status === 'fulfilled') {

if (response2.value.ok) {

notificationResult = await response2.value.json();

console.log("Notification API Succeeded:", notificationResult);

} else {

const errorText = await response2.value.text();

errors.push(`Notification API Failed: Status ${response2.value.status}, Error: ${errorText}`);

console.error(`Notification API Failed: Status ${response2.value.status}, Error: ${errorText}`);

}

} else { // response2.status === 'rejected'

errors.push(`Notification API Exception: ${response2.reason.message}`);

console.error("Notification API Exception:", response2.reason);

}

return { paymentResult, notificationResult, errors };

} catch (unexpectedError) {

console.error("An unexpected error occurred:", unexpectedError);

throw unexpectedError;

}

}

// Example Usage (conceptual)

// const paymentDetails = { amount: 100, currency: 'USD', card_token: 'tok_abc' };

// const customerNotification = { customer_id: 'CUST001', message: 'Your payment was successful!' };

//

// sendParallelApiRequestsJS(paymentDetails, customerNotification)

// .then(results => console.log("Final Results:", results))

// .catch(err => console.error("Unhandled error:", err));

console.log("Conceptual JS code: To run, replace example.com with real endpoints or use mocking.");

console.log("The primary mechanism is `await Promise.allSettled([promise1, promise2, ...])`");

Error Handling for Parallel Calls: The key challenge with parallel calls is managing failures. * asyncio.gather(..., return_exceptions=True) in Python and Promise.allSettled() in JavaScript are crucial because they ensure that all tasks/promises are run to completion, even if some fail. This prevents a single failure from immediately terminating the entire parallel operation. * You must then iterate through the results and handle successes and failures for each individual api call. This allows for partial success scenarios (e.g., payment processed, but notification failed).

2. Event-Driven with Message Queues

This strategy offers maximum decoupling and robustness, ideal for critical, long-running, or highly distributed systems where immediate api response is not strictly required for the originating system.

Conceptual Flow:

- Producer (Your Application):

- Creates a

messagecontaining the necessary data. - Publishes this

messageto a specific topic or queue in a Message Broker (e.g., Kafka, RabbitMQ, SQS). - The producer's work is done; it does not wait for any

apiresponses.

- Creates a

- Message Broker:

- Receives and durably stores the message.

- Distributes the message to relevant consumers. For two

apis, this might involve:- One message triggering two different queues/topics.

- One message in a topic being consumed by two different consumer groups.

- Consumer Service A (for API 1):

- A dedicated microservice or worker process.

- Subscribes to messages from the broker.

- When it receives a message, it extracts the relevant data.

- Makes an

apicall toAPI 1(e.g., Inventory Updateapi). - Handles

API 1's response, including retries for transient failures, and potentially moving messages to a Dead-Letter Queue (DLQ) for persistent failures. - Acknowledges the message to the broker upon successful processing.

- Consumer Service B (for API 2):

- Another dedicated microservice or worker process.

- Subscribes to the same or a different queue/topic.

- When it receives a message, it extracts data.

- Makes an

apicall toAPI 2(e.g., CRM Updateapi). - Handles

API 2's response, retries, and DLQ. - Acknowledges the message.

Advantages: * Extreme Decoupling: Producer is entirely unaware of consumers and APIs. * High Resilience: Messages are durable. If an api or consumer is down, messages are re-queued or retried. * Scalability: Consumers can be scaled independently, horizontally. * Load Leveling: Brokers smooth out traffic spikes. * Auditability: Message brokers often provide logs and monitoring of messages.

Considerations: * Eventual Consistency: Data updates across systems are not immediate but eventually consistent. * Increased Complexity: Requires setting up and managing a message broker and separate consumer services. * Idempotency: Consumers must be designed to handle receiving and processing the same message multiple times without adverse effects (critical for retries).

3. API Gateway as an Orchestrator (Advanced)

While the previous methods focus on logic within your application or services, an advanced api gateway can sometimes take on the orchestration role itself, particularly for simple fan-out patterns or request transformations.

Scenario: A client makes a single request to the api gateway. The gateway is configured to process this request, extract necessary data, and then independently forward portions of this data (or the entire data) to two different backend apis.

How an API Gateway Facilitates:

- Single Entry Point: External clients interact with one

apiendpoint provided by the gateway. - Request Transformation: The gateway can transform the incoming request payload into the specific formats required by API 1 and API 2.

- Parallel Backend Calls: Advanced gateways or custom gateway plugins can execute multiple backend calls in parallel based on a single incoming request.

- Response Aggregation (Optional): If the client needs a combined response, the gateway can wait for both backend

apis to respond, combine their outputs, and send a single response back to the client. (Though for this article's focus on "sending information," this aggregation might be secondary). - Simplified Client Logic: The client doesn't need to know about the two backend

apis or manage their asynchronous calls; the gateway handles that complexity.

Example (Conceptual Gateway Configuration Logic):

# Hypothetical API Gateway Configuration (e.g., for Kong, Apigee, or APIPark custom plugins)

paths:

/order-submission:

post:

x-gateway-policies:

- name: request-transformer # Transform client request for backend A

config:

add:

headers:

X-Client-ID: "{request.headers.client-id}"

rename:

body:

clientOrderId: "orderId"

- name: fan-out-executor # Custom plugin or built-in fan-out capability

config:

targets:

- service: order-processing-service # Points to API 1

path: /v1/orders

method: POST

body: "{transformed_request.body}" # Use transformed body for API 1

timeout: 10000ms

is_critical: true

- service: analytics-logging-service # Points to API 2

path: /v1/events/order_placed

method: POST

body: "{transformed_request.body.audit_log_data}" # Use a subset for API 2

timeout: 5000ms

is_critical: false # Allow this call to fail without impacting primary

In this scenario, the api gateway handles the asynchronous fan-out to order-processing-service and analytics-logging-service. It abstracts this concurrency from the client. For platforms like ApiPark, its "Prompt Encapsulation into REST API" feature, while primarily for AI models, suggests the underlying capability to define custom API logic that could potentially include such fan-out or orchestration rules. Its "End-to-End API Lifecycle Management" would also be critical for managing these orchestrated APIs, ensuring their design, publication, invocation, and monitoring are all streamlined. The "Detailed API Call Logging" and "Powerful Data Analysis" in APIPark become indispensable here for tracing requests as they fan out to multiple backend services, providing visibility into the success or failure of each leg of the asynchronous journey.

Choosing between these implementation strategies depends on your specific needs: * Parallel Execution (async/await): Best for immediate, direct calls from within a single application component where response times are critical and the two apis are well-known. * Message Queues: Ideal for high reliability, extreme decoupling, and systems where eventual consistency is acceptable. Excellent for background tasks and handling large volumes of events. * API Gateway Orchestration: Useful when you want to simplify client-side logic, centralize control, and apply consistent policies to multi-API interactions at the edge of your system.

Each method has its place in a well-designed asynchronous architecture. Often, a combination of these patterns is employed across different layers of an application to achieve the desired balance of performance, resilience, and manageability.

Challenges and Best Practices in Asynchronous Multi-API Communication

While asynchronous communication offers significant advantages, especially when sending information to two APIs, it also introduces a new set of challenges that must be carefully addressed. Neglecting these aspects can lead to subtle bugs, data inconsistencies, and operational headaches. Adhering to best practices is paramount for building robust and reliable asynchronous systems.

1. Idempotency

Challenge: When making asynchronous calls, especially with retry mechanisms, there's a risk that the same operation might be executed multiple times. If an api call fails after the operation was performed on the server but before the success response was received by the client, a retry would duplicate the operation. For operations like "charge a customer" or "create a user," this can lead to serious issues.

Best Practice: * Design Idempotent APIs: An api operation is idempotent if executing it multiple times with the same parameters has the same effect as executing it once. * For POST requests that create resources, use unique identifiers (e.g., a client-generated UUID idempotency_key) in the request body or header. The server should use this key to detect duplicate requests and return the original successful response without re-executing the operation. * For PUT requests (updates), they are often naturally idempotent if they replace the entire resource. * For DELETE requests, they are idempotent as deleting an already deleted resource typically has no further effect. * Implement Idempotency Keys: Include a unique, client-generated ID in your requests (e.g., X-Idempotency-Key header). The backend api should store this key for a defined period (e.g., 24 hours) and ensure that for any subsequent request with the same key, it returns the original result without re-processing.

2. Error Handling and Retries

Challenge: Asynchronous calls are inherently exposed to network issues, service unavailability, and transient errors. Without proper error handling, a single api failure could lead to data loss or an incomplete operation across your system.

Best Practices: * Robust Error Capture: Use try-catch blocks, Promise.catch(), or return_exceptions=True with asyncio.gather() to gracefully capture errors from each api call. * Distinguish Error Types: Differentiate between transient errors (e.g., network timeout, 5xx server errors, rate limits) that might succeed on retry, and permanent errors (e.g., 4xx client errors, invalid data) that typically won't. * Retry Mechanisms: * Exponential Backoff: When retrying transient failures, wait for increasingly longer periods between attempts (e.g., 1s, 2s, 4s, 8s). This prevents overwhelming the api and gives it time to recover. * Jitter: Introduce a small, random delay to the backoff strategy to prevent multiple clients from retrying simultaneously, creating a "thundering herd" problem. * Max Retries and Max Total Time: Define a maximum number of retries or a total time limit after which the operation is considered a permanent failure. * Circuit Breakers: Implement a circuit breaker pattern. If an api repeatedly fails, the circuit breaker "opens," preventing further requests to that api for a period. This allows the failing api to recover without being hammered by more requests and prevents cascading failures in your system. * Dead-Letter Queues (DLQs): For message queue-based asynchronous patterns, if a consumer repeatedly fails to process a message after multiple retries, move that message to a DLQ. This prevents poison pills from blocking the main queue and allows for manual inspection and reprocessing of failed messages.

3. Monitoring and Observability

Challenge: In asynchronous, distributed systems, tracking the flow of data across multiple api calls can be incredibly difficult. A user action might trigger several parallel api calls, and pinpointing where a failure occurred or why a transaction is slow requires a holistic view.

Best Practices: * Distributed Tracing: Implement a distributed tracing system (e.g., OpenTelemetry, Jaeger, Zipkin). This involves propagating a unique trace ID across all services and api calls involved in a single logical transaction. This allows you to visualize the entire request flow, identify bottlenecks, and pinpoint failures across services. * Comprehensive Logging: * Log key events for each api call: initiation, success, failure, response times, request payloads (sensitive data obfuscated), and response codes. * Ensure logs are structured (JSON) and include the trace ID for correlation. * Centralize logs in a log management system (e.g., ELK Stack, Splunk, Datadog) for easy searching and analysis. * Metrics and Alerting: * Collect metrics for each api call: success rate, error rate, latency, throughput. * Set up alerts for critical thresholds (e.g., high error rate for a primary api, excessive latency for a critical path). * Health Checks: Implement /health or /status endpoints for your services and the APIs they depend on to monitor their availability.

This is where a robust api gateway and management platform like ApiPark truly shines. APIPark's "Detailed API Call Logging" automatically records every detail of each api call, including request/response bodies, status codes, and latency. This centralized logging is critical for debugging complex asynchronous flows. Furthermore, its "Powerful Data Analysis" capabilities can analyze historical call data to display long-term trends and performance changes, helping businesses perform preventive maintenance and identify issues before they become critical. By providing a unified view of all api traffic, APIPark significantly enhances the observability of asynchronous multi-API interactions.

4. Data Consistency

Challenge: When asynchronously updating data across two or more independent services, achieving strong transactional consistency (all or nothing) can be difficult or impossible without distributed transactions, which are notoriously complex and often avoided. The default is often eventual consistency.

Best Practices: * Embrace Eventual Consistency: For many scenarios (e.g., updating a search index and a primary database), it's acceptable for the data to be temporarily out of sync as long as it eventually becomes consistent. Design your system assuming this. * Saga Pattern: For business transactions that span multiple services, consider the Saga pattern. A saga is a sequence of local transactions, where each transaction updates data within a single service and publishes an event to trigger the next step. If a step fails, compensating transactions are executed to undo the changes made by preceding steps. This ensures consistency without distributed transactions. * Data Validation: Perform rigorous validation on incoming data to minimize inconsistencies from incorrect inputs.

5. Security

Challenge: Each api call, whether synchronous or asynchronous, must be secure. When fanning out to multiple apis, ensuring each request carries appropriate authentication and authorization credentials, and that sensitive data is protected, becomes crucial.

Best Practices: * Authentication & Authorization: * Use robust authentication mechanisms (e.g., OAuth2, JWTs, API Keys) for every api call. * Ensure that each api receives only the necessary permissions (Least Privilege Principle). * If using an api gateway, centralize authentication and authorization there to simplify backend services. ApiPark offers features for independent api and access permissions for each tenant, and resource access requiring approval, which are vital for this. * Data Encryption: Encrypt data in transit (HTTPS/TLS) and at rest. * Input Validation and Output Encoding: Protect against common web vulnerabilities like SQL injection, XSS, and broken authentication. * Secrets Management: Securely store and manage API keys, database credentials, and other sensitive information using dedicated secrets management services (e.g., AWS Secrets Manager, HashiCorp Vault). Avoid hardcoding secrets.

6. Resource Management and Throttling

Challenge: Asynchronous calls can quickly consume network connections, CPU, and memory if not properly managed, especially when dealing with high volumes or slow upstream APIs.

Best Practices: * Connection Pooling: Use HTTP client libraries with connection pooling to efficiently manage and reuse network connections. * Concurrency Limits: Implement concurrency limits on your api calls to prevent overwhelming your own system or the target apis. * Rate Limiting: Respect the rate limits of external APIs. If an api imposes a limit (e.g., 100 requests per minute), implement client-side rate limiting to stay within those bounds. An api gateway can also enforce rate limiting at the edge for incoming requests to your services.

By proactively addressing these challenges and integrating these best practices, developers can harness the full power of asynchronous communication to build highly performant, resilient, and scalable applications that effectively interact with multiple APIs. This systematic approach transforms potential pitfalls into opportunities for building more robust and manageable systems.

Comparison of Asynchronous Techniques

To provide a concise overview, let's compare the asynchronous techniques discussed for sending information to two APIs based on several key criteria.

| Feature / Technique | Parallel async/await (e.g., Promise.all) |

Message Queues (e.g., Kafka, RabbitMQ) | API Gateway Orchestration (e.g., APIPark, Kong) |

|---|---|---|---|

| Coupling | Medium (Caller directly invokes APIs) | Low (Producer decouples from Consumers/APIs) | Low (Client decouples from backend APIs) |

| Resilience | Moderate (Caller handles individual API failures) | High (Messages persisted, retries, DLQs) | Moderate (Gateway handles some failures, retries) |

| Scalability | Moderate (Limited by single process/thread concurrency) | High (Consumers scale independently) | High (Gateway handles high traffic, can scale) |

| Complexity | Low to Medium (Relatively straightforward to implement) | High (Requires broker and consumer services) | Medium to High (Gateway configuration, plugins) |

| Latency | Low (Direct calls, simultaneous execution) | Medium to High (Message broker overhead) | Low (Direct calls from gateway) |

| Error Handling | Manual error handling for each call | Built-in retry/DLQ mechanisms in consumers | Gateway-specific error handling/policies |

| Primary Use Case | Quick, parallel execution of independent tasks | Event-driven architecture, background tasks, high throughput | Centralized API management, facade for microservices, simplified client |

| Idempotency | Must be handled in client code and target APIs | Must be handled in consumer services and target APIs | Must be handled in gateway and target APIs |

| Observability | Requires careful logging/tracing in application | Broker monitoring, consumer logs, distributed tracing | Centralized logging, metrics, and tracing by gateway |

This table illustrates that no single solution is universally superior; the best choice depends on the specific requirements, constraints, and existing architecture of your application. Often, a hybrid approach combining these techniques offers the most comprehensive solution. For instance, your application might use async/await to send a message to a queue, and then consumer services use async/await to call their respective APIs, all managed and monitored by an api gateway like ApiPark.

Conclusion

In the dynamic and demanding landscape of modern software, the ability to effectively communicate with multiple APIs is not merely an optional feature but a fundamental necessity. As applications become increasingly distributed, modular, and reliant on external services, the limitations of traditional synchronous communication become glaringly apparent, leading to performance bottlenecks, poor user experiences, and scalability challenges. The deliberate choice to asynchronously send information to two or more APIs represents a powerful architectural decision that directly addresses these issues, fostering systems that are inherently more responsive, resilient, and efficient.

We have explored the stark contrast between synchronous and asynchronous paradigms, highlighting why the latter is the cornerstone of high-performance distributed systems. From client-side Promise.all() to server-side async/await patterns, and from the robust decoupling offered by message queues to the centralized control provided by an api gateway, developers have a rich toolkit at their disposal to implement concurrent API interactions. Each technique offers distinct advantages, catering to different requirements for coupling, resilience, and operational complexity.

Successfully navigating the complexities of asynchronous multi-API communication, however, demands more than just writing non-blocking code. It requires a deep understanding of potential challenges such as idempotency, comprehensive error handling with retries and circuit breakers, and the critical need for robust monitoring and observability. Adhering to best practices in data consistency, security, and resource management is equally vital to ensure the long-term stability and reliability of your applications.

In this intricate dance of data across various services, platforms like ApiPark emerge as invaluable allies. As an open-source AI gateway and API management platform, APIPark simplifies the entire lifecycle of API governance. It offers unified management, ensures security, provides crucial monitoring through detailed API call logging and powerful data analysis, and delivers the performance required to handle the high throughput of orchestrated asynchronous api calls. By leveraging such powerful tools, enterprises can transform the challenge of managing multiple API integrations into a seamless and efficient process.

Ultimately, mastering the art of asynchronously sending information to multiple APIs is about building a future-proof architecture – one that can adapt to evolving business needs, scale effortlessly under load, and provide an uninterrupted, high-quality experience for its users. As the digital world continues to intertwine, the principles and practices discussed here will remain at the forefront of designing and developing the next generation of interconnected applications.

5 Frequently Asked Questions (FAQs)

1. What is the primary benefit of asynchronously sending information to two APIs instead of synchronously? The primary benefit is significantly improved performance and responsiveness. When sending information asynchronously, your application initiates both API calls almost simultaneously and does not wait for each one to complete before starting the next. This means the total time taken is dictated by the longest of the two API calls, rather than the sum of their individual durations, leading to a much faster overall operation and a more fluid user experience.

2. What are common scenarios where I would need to send information to two APIs asynchronously? Common scenarios include data duplication or synchronization across disparate systems (e.g., updating a primary database and a search index), event-driven architectures where a single event triggers multiple independent downstream services (e.g., user signup triggering welcome email and analytics update), auditing and logging (sending data to a primary service and a separate logging service), and complex third-party integrations (e.g., sending payment details to a gateway and order details to an inventory system).

3. What are the main technical approaches to implement asynchronous multi-API calls? There are several main approaches: * Parallel Execution within a single process: Using language features like async/await (e.g., Python's asyncio.gather, JavaScript's Promise.allSettled) to make concurrent HTTP requests. * Message Queues: Using a message broker (e.g., Kafka, RabbitMQ) where your application publishes a message, and separate consumer services pick up the message to call their respective APIs. This offers high decoupling and resilience. * Serverless Functions: Leveraging cloud functions (e.g., AWS Lambda) that can make parallel API calls or trigger other functions in response to an event. * API Gateway Orchestration: An advanced API gateway (like ApiPark) can be configured to receive a single client request and fan it out to multiple backend APIs concurrently.

4. What are the key challenges when implementing asynchronous multi-API communication? Key challenges include ensuring idempotency (preventing duplicate operations if retries occur), robust error handling and retry mechanisms (with exponential backoff and circuit breakers), effective monitoring and observability (using distributed tracing and centralized logging), managing data consistency (especially with eventual consistency models), and maintaining security across all API interactions.

5. How can an API Gateway like APIPark assist in managing asynchronous calls to multiple APIs? An API gateway like ApiPark plays a critical role by centralizing the management, security, and monitoring of API interactions. It can route requests, enforce authentication and authorization, apply rate limiting, and even orchestrate fan-out patterns to multiple backend APIs. Specifically, APIPark's "Detailed API Call Logging" and "Powerful Data Analysis" features are invaluable for gaining visibility into the complex flow of asynchronous requests, helping to trace, troubleshoot, and analyze performance across multiple services, thereby enhancing overall system stability and data security.

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.