How to Use Python Requests Module for Data Queries

The digital landscape of today is fundamentally interconnected, a vast web of services and applications constantly exchanging information. At the heart of this intricate ecosystem lies the API (Application Programming Interface), serving as the universal language through which different software components communicate. Whether you're fetching real-time stock quotes, integrating a payment gateway, automating social media posts, or gathering data for scientific analysis, chances are you're interacting with an API. For Python developers, the requests module stands out as the de facto standard for making HTTP requests, offering a human-friendly and incredibly powerful way to engage with these APIs and query web data. This comprehensive guide will delve deep into the requests library, exploring its capabilities from basic data retrieval to advanced API interactions, ensuring you can harness its full potential for any data querying task.

I. Introduction: The Gateway to Web Data with Python Requests

In the realm of programming, interacting with web services is a cornerstone of building modern applications. Before requests existed, developers often relied on Python's built-in urllib module (and urllib2 in Python 2), which, while functional, had a steep learning curve and verbose syntax. This complexity often obscured the core task of sending and receiving data, making web interactions cumbersome and prone to subtle errors. Then came requests. Born from the desire for simplicity and elegance, requests changed how Python programs communicate over HTTP. It abstracts away the complexities of making web requests, offering an intuitive and Pythonic interface that feels natural to use.

Imagine you're building an application that needs to fetch information about a country from a public database, or perhaps post a status update to a social media platform, or even integrate with a cutting-edge AI model hosted on an Open Platform. In all these scenarios, your Python program needs to 'talk' to another server. requests acts as your program's voice, enabling it to articulate various requests, send data, and interpret the responses it receives. Its design philosophy emphasizes ease of use without sacrificing power, making it the preferred choice for tasks ranging from simple data scraping to intricate OpenAPI integrations. This guide will meticulously walk you through every significant aspect of using requests, transforming you into a master of web data queries.

II. Setting Up Your Environment: Getting Started with Requests

Before embarking on our journey into the world of web data querying, the very first step is to ensure that the requests library is installed and ready for action within your Python environment. Python's robust package management system, pip, makes this process remarkably straightforward and quick.

To install requests, open your terminal or command prompt and execute the following command:

pip install requests

This command instructs pip to download the requests package from the Python Package Index (PyPI) and install it into your active Python environment. If you are working within a virtual environment (which is highly recommended for managing project dependencies), ensure that the virtual environment is activated before running the pip install command. This practice helps to isolate your project's dependencies, preventing conflicts with other projects or the global Python installation.

Once the installation is complete, you can verify it by opening a Python interpreter (simply type python or python3 in your terminal) and attempting to import the library:

import requests

print("Requests module imported successfully!")

If no ImportError occurs and you see the success message, you're all set! The requests library is now available for use in your Python scripts, ready to empower your applications with web connectivity. With this foundational step complete, we can now turn our attention to the underlying principles of HTTP, which form the bedrock of requests's functionality.

III. The Anatomy of an HTTP Request: A Foundation for Data Queries

To effectively use the requests library, it's crucial to grasp the fundamental concepts of HTTP (Hypertext Transfer Protocol), which is the protocol underlying all web communication. Every interaction you make with requests is essentially building and sending an HTTP request and then processing the HTTP response. Understanding these components will provide clarity and control over your data queries.

3.1 HTTP Methods: The Verbs of Web Communication

HTTP methods, often referred to as HTTP verbs, define the type of action you want to perform on a resource identified by a URL. Each method has a specific semantic meaning, guiding how the server should interpret the request. The most common methods include:

- GET: This is the most frequently used method. It's employed to retrieve data from a specified resource. GET requests should only retrieve data and have no other effect on the data (i.e., they should be "idempotent" and "safe"). Examples include fetching a webpage, querying a database for records, or retrieving a list of items from an API endpoint.

- POST: Used to submit data to a specified resource, often causing a change in state or a creation of a new resource on the server. When you fill out a form on a website and submit it, that's typically a POST request. In API interactions, POST is used to create new entities, such as a new user, a new product, or a new comment.

- PUT: Used to update a resource or create it if it doesn't exist. PUT requests are "idempotent," meaning that making the same request multiple times will have the same effect as making it once (it will replace the resource with the specified data each time).

- DELETE: Used to remove a specified resource. Like PUT, DELETE requests are also idempotent.

- PATCH: A more recent addition, used to apply partial modifications to a resource. Unlike PUT, which requires sending the complete representation of the resource, PATCH allows sending only the changes you want to apply.

- HEAD: Asks for a response identical to that of a GET request, but without the response body. This is useful for retrieving metadata about a resource (like content type, content length, last modified date) without transferring the entire content, saving bandwidth.

- OPTIONS: Used to describe the communication options for the target resource. Clients can specify a URL for the OPTIONS method, or an asterisk (*) to refer to the entire server.

Choosing the correct HTTP method is crucial for proper API design and interaction, aligning with the principles of REST (Representational State Transfer) architecture that many modern web services follow.
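As a rough illustration of how these verbs map onto the library, the sketch below builds one request per method without sending anything. The URL is a hypothetical endpoint, and Request(...).prepare() is used only so the example runs offline; in everyday code you would more likely call helpers such as requests.get() or requests.post().

```python
import requests

URL = "https://api.example.com/v1/users/123"  # hypothetical endpoint, never contacted

METHODS = ["GET", "POST", "PUT", "DELETE", "PATCH", "HEAD", "OPTIONS"]

# Request(...).prepare() builds the request object without sending it.
prepared = {method: requests.Request(method, URL).prepare() for method in METHODS}

for method, request in prepared.items():
    print(f"{request.method:8} {request.url}")
```

Each prepared request carries the verb and the target URL, which is what the server uses to decide how to treat the call.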

3.2 URLs, Endpoints, and Resources

At the core of every HTTP request is the Uniform Resource Locator (URL), which uniquely identifies a resource on the web. An API often exposes various "endpoints," each being a specific URL that corresponds to a particular resource or function.

- URL: The complete address, e.g., https://api.example.com/v1/users/123.

- Base URL: The common part of all URLs for a given API, e.g., https://api.example.com/v1/.

- Endpoint: The specific path appended to the base URL that points to a particular resource or collection, e.g., /users or /users/123.

- Resource: The actual data or service that the URL identifies, e.g., a collection of users or a specific user's profile.

Understanding how APIs structure their URLs is essential for constructing correct data queries. OpenAPI specifications often meticulously document these endpoints, making it easier for developers to interact with an Open Platform.
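One way to combine a base URL with endpoint paths is the standard library's urljoin. This is a minimal sketch, assuming the example base URL from the list above; endpoint_url is a hypothetical helper name. It strips a leading slash first, because urljoin treats a path starting with / as relative to the host and would drop the /v1/ prefix.

```python
from urllib.parse import urljoin

BASE_URL = "https://api.example.com/v1/"  # example base URL; the trailing slash matters


def endpoint_url(path: str) -> str:
    """Join an endpoint path onto BASE_URL (hypothetical helper)."""
    # A leading slash would make urljoin discard the "/v1/" part of the base.
    return urljoin(BASE_URL, path.lstrip("/"))


users_url = endpoint_url("/users")
user_url = endpoint_url("users/123")
print(users_url)
print(user_url)
```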

3.3 Request Headers: Context and Metadata

Request headers are key-value pairs that provide additional information about the request or the client making the request. They are not part of the request body but are sent alongside it. Common headers include:

- User-Agent: Identifies the client software making the request (e.g., your browser, a Python script).

- Content-Type: Specifies the media type of the request body (e.g., application/json, application/x-www-form-urlencoded). Crucial for POST/PUT requests.

- Accept: Informs the server about the media types the client prefers to receive in the response.

- Authorization: Contains credentials to authenticate the client with the server, often an API key or a token.

- Cache-Control: Directives for caching mechanisms.

Properly setting headers is often vital for successful API interactions, particularly for authentication and content negotiation.

3.4 Request Body: The Payload of Information

For methods like POST, PUT, and PATCH, a request often includes a "request body" or "payload." This is where the actual data you want to send to the server resides. The format of this data is specified by the Content-Type header. Common formats include:

- JSON (application/json): The most prevalent format for modern APIs due to its simplicity and readability. Python dictionaries can be easily converted to JSON strings.

- Form Data (application/x-www-form-urlencoded or multipart/form-data): Used for submitting traditional HTML forms. The multipart/form-data type is specifically used for file uploads.

- XML (application/xml): Less common in newer APIs but still found in older or enterprise systems.

3.5 Response: The Server's Reply

After sending an HTTP request, the server processes it and sends back an HTTP response. A response consists of:

- Status Code: A three-digit number indicating the outcome of the request. Understanding status codes is fundamental for robust error handling.

  - 2xx (Success): e.g., 200 OK (request succeeded), 201 Created (resource created).
  - 3xx (Redirection): e.g., 301 Moved Permanently.
  - 4xx (Client Error): e.g., 400 Bad Request, 401 Unauthorized, 403 Forbidden, 404 Not Found.
  - 5xx (Server Error): e.g., 500 Internal Server Error, 503 Service Unavailable.

- Response Headers: Similar to request headers, these provide metadata about the response (e.g., Content-Type of the response body, Date, Server).

- Response Body: The actual data returned by the server, often in JSON, XML, HTML, or plain text format. This is the data you're typically querying for.
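The status classes can be expressed in a few lines of code. The sketch below uses only the standard library; status_class is a hypothetical helper name, and it simply groups a code by its first digit.

```python
from http import HTTPStatus


def status_class(code: int) -> str:
    """Map a status code to the class named by its first digit (hypothetical helper)."""
    classes = {
        1: "informational",
        2: "success",
        3: "redirection",
        4: "client error",
        5: "server error",
    }
    return classes.get(code // 100, "unknown")


for code in (200, 201, 301, 404, 503):
    # HTTPStatus supplies the standard reason phrase for each code.
    print(code, HTTPStatus(code).phrase, "->", status_class(code))
```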

Here's a concise table summarizing common HTTP methods and their typical use cases:

| HTTP Method | Purpose | Idempotent | Safe | Typical Use Case |
|---|---|---|---|---|
| GET | Retrieve data from a resource | Yes | Yes | Fetching a webpage, getting a list of products, querying user data. |
| POST | Submit data to a resource; create a new resource | No | No | Creating a new user account, submitting a form, adding an item to a database. |
| PUT | Update an existing resource or create if it doesn't exist | Yes | No | Replacing an entire user profile, updating all fields of a product record. |
| DELETE | Remove a specified resource | Yes | No | Deleting a user account, removing a specific blog post. |
| PATCH | Apply partial modifications to a resource | No | No | Updating only the email address of a user, changing the status of an order. |
| HEAD | Retrieve headers only, no body | Yes | Yes | Checking if a resource exists, getting metadata like Content-Length before downloading. |
| OPTIONS | Describe communication options for the target resource | Yes | Yes | Determining what HTTP methods are allowed on a resource, checking CORS preflight requests. |

With this foundational understanding of HTTP, we can now confidently move on to using requests to construct and send these requests, interpreting the responses to extract the data we need.

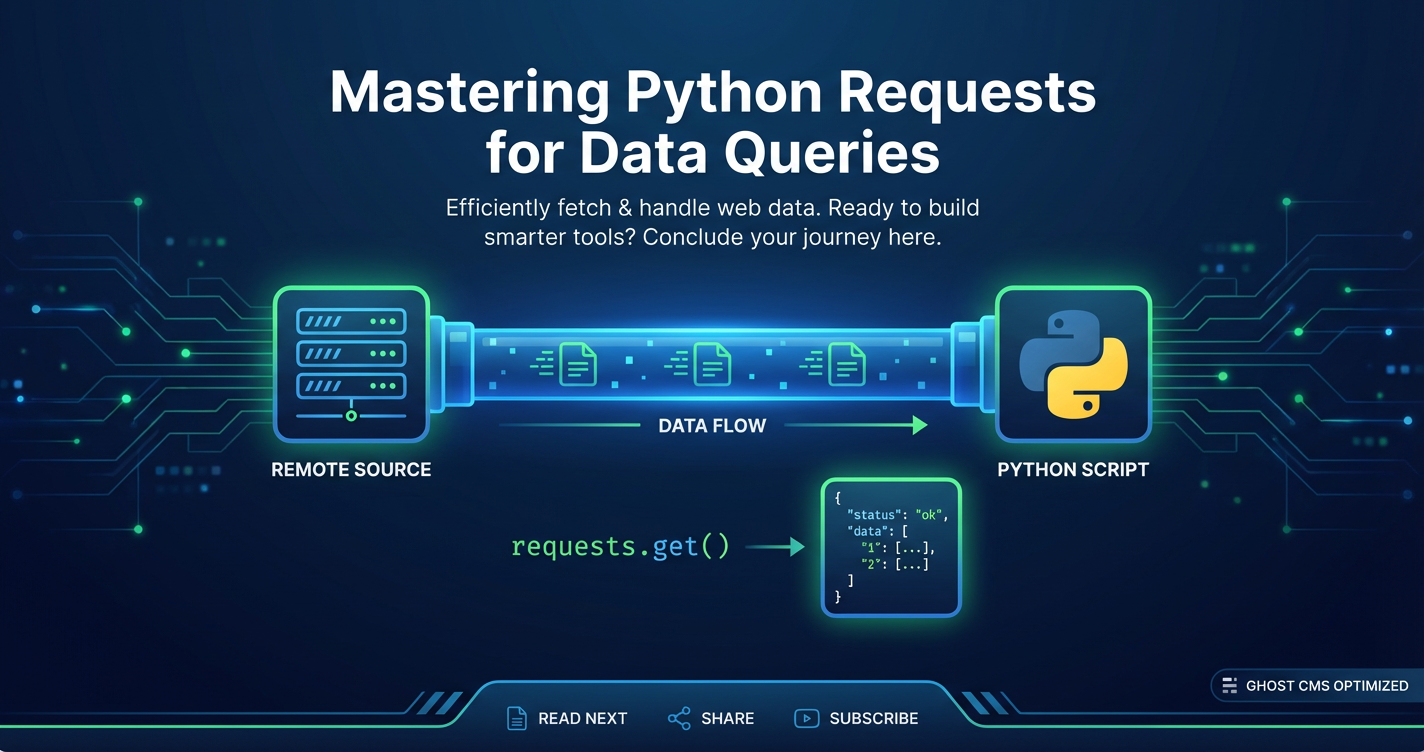

IV. Mastering Basic Data Queries with requests.get()

The GET method is the workhorse of web data retrieval, forming the basis of countless data querying operations. The requests library makes performing GET requests incredibly simple and intuitive.

4.1 Simple GET Requests

The most basic GET request involves providing just the URL of the resource you wish to retrieve.

import requests

# Example: Fetching data from a public API

url = 'https://jsonplaceholder.typicode.com/posts/1' # A mock API endpoint

response = requests.get(url)

# The 'response' object holds all the server's reply

print(f"Status Code: {response.status_code}")

print(f"Content Type: {response.headers['Content-Type']}")

print(f"Response Body (first 200 chars):\n{response.text[:200]}...")

In this example, requests.get(url) sends an HTTP GET request to the specified URL. The returned response object is a powerful container for all the information sent back by the server, allowing us to inspect the status code, headers, and the body of the response.
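One detail worth adding in practice: requests does not time out unless you pass a timeout value, so a stalled server can block a script for a long time. The sketch below wraps the call above in a small function; fetch_post and its get parameter are hypothetical names, and the injectable get exists only so the function can be exercised without network access.

```python
import requests


def fetch_post(post_id, *, get=requests.get, timeout=10.0):
    """Return one post as a dict (hypothetical helper).

    `get` defaults to requests.get and can be swapped for a stub in tests.
    """
    url = f"https://jsonplaceholder.typicode.com/posts/{post_id}"
    response = get(url, timeout=timeout)  # give up instead of waiting indefinitely
    response.raise_for_status()           # turn 4xx/5xx responses into exceptions
    return response.json()
```

Calling fetch_post(1) would perform the same GET request as the example above, with a ten second limit applied to the connection and to each read.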

4.2 Query Parameters: Building Dynamic URLs

Often, when querying data from an API, you need to filter, sort, or paginate the results. This is achieved using query parameters, which are appended to the URL after a question mark ?, with key-value pairs separated by ampersands &. requests simplifies this by allowing you to pass a dictionary to the params argument, which it then automatically encodes and appends to the URL.

import requests

# Example: Searching for articles on a mock API
base_url = 'https://jsonplaceholder.typicode.com/posts'
query_parameters = {
    'userId': 1,
    '_limit': 3  # Get only 3 posts for user 1
}

response = requests.get(base_url, params=query_parameters)

print(f"Request URL: {response.url}")  # See how requests built the URL
print(f"Status Code: {response.status_code}")
print(f"Response Body:\n{response.text}")
# Expected URL: https://jsonplaceholder.typicode.com/posts?userId=1&_limit=3

Using the params dictionary is not only cleaner but also handles URL encoding automatically, preventing potential issues with special characters in your query values. This is particularly useful when interacting with an Open Platform that offers extensive filtering capabilities through its API.
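To see the encoding without contacting a server, a request can be prepared and inspected. This is a small sketch against a hypothetical search endpoint; the parameter names are made up for illustration.

```python
import requests

search_url = "https://api.example.com/search"  # hypothetical endpoint, never contacted
params = {
    "q": "python requests",  # the space has to be encoded
    "tag": ["http", "api"],  # a list becomes a repeated key
    "page": None,            # None values are left out of the URL
}

# prepare() builds the final URL without sending the request.
prepared = requests.Request("GET", search_url, params=params).prepare()
print(prepared.url)
```

The printed URL shows the space encoded, the tag key repeated once per list item, and no page parameter at all.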

4.3 Accessing Response Data: .text, .content, .json()

The response object provides several convenient ways to access the returned data:

- response.text: This attribute returns the content of the response as a Unicode string. requests guesses the encoding of the response based on HTTP headers, making it suitable for most text-based content like HTML, XML, or plain text.

    print(type(response.text))  # <class 'str'>

- response.content: This attribute returns the content of the response in bytes. This is useful when dealing with non-textual data like images or audio files, or when you need explicit control over decoding.

    # Example: Downloading a small image (replace with a real image URL)
    image_url = 'https://www.python.org/static/community_logos/python-logo-master-v3-TM.png'
    img_response = requests.get(image_url)
    if img_response.status_code == 200:
        with open('python_logo.png', 'wb') as f:
            f.write(img_response.content)
        print("Image downloaded successfully!")

- response.json(): If the response body contains JSON data, this method parses it into a Python dictionary or list. This is an incredibly common operation when interacting with modern RESTful APIs. If the content is not valid JSON, it raises a JSON decoding error (requests.exceptions.JSONDecodeError in recent versions of requests), which is a subclass of ValueError.

    json_response = requests.get('https://jsonplaceholder.typicode.com/posts/1')
    if json_response.status_code == 200:
        data = json_response.json()
        print(type(data))  # <class 'dict'>
        print(f"Post Title: {data['title']}")
        print(f"Post Body: {data['body']}")
    else:
        print(f"Failed to fetch data: {json_response.status_code}")

Check the Content-Type header (e.g., application/json) before calling response.json(). The method tries to parse the body whatever the declared type is, so an HTML error page will make it raise.
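The advice about checking the content type can be folded into a small helper. This is a sketch under the assumption that returning None is an acceptable way to signal "no usable JSON" in your application; parse_json_or_none is a hypothetical name.

```python
import requests


def parse_json_or_none(response: requests.Response):
    """Return the parsed JSON body, or None if the response is not usable JSON."""
    content_type = response.headers.get("Content-Type", "")
    if "json" not in content_type.lower():
        return None  # e.g. an HTML error page
    try:
        return response.json()
    except ValueError:
        # JSON decoding errors raised by response.json() are ValueError subclasses.
        return None
```

A caller can then write data = parse_json_or_none(response) and branch on whether data is None instead of wrapping every call in its own try block.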

4.4 Handling Status Codes: response.status_code and response.raise_for_status()

As mentioned in the HTTP anatomy section, the status code is paramount for understanding the outcome of your request.

- response.status_code: This attribute provides the integer status code. You should always check this value to determine if your request was successful before attempting to process the response data.

    if response.status_code == 200:
        print("Request successful!")
        # Process data
    elif response.status_code == 404:
        print("Resource not found.")
    else:
        print(f"An error occurred: {response.status_code}")

- response.raise_for_status(): This convenient method raises an HTTPError for 4xx or 5xx status codes. This is a quick way to check for errors and halt execution if something went wrong on the server side or with the client's request. It does nothing for any status code below 400.

    try:
        response = requests.get(url, timeout=10)
        response.raise_for_status()  # Raises an HTTPError for 4xx or 5xx responses
        print("Request successful.")
        data = response.json()
        # Process data
    except requests.exceptions.HTTPError as e:
        print(f"HTTP Error: {e}")
    except requests.exceptions.RequestException as e:
        print(f"An error occurred: {e}")

Using raise_for_status() within a try-except block is a robust pattern for error handling in data queries. Keep the request call itself inside the try block as well: connection failures and timeouts are raised by requests.get(), not by raise_for_status(), and the RequestException handler catches them.
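To check which status codes actually trigger raise_for_status(), the sketch below builds Response objects by hand and sets only their status code. Constructing a Response manually is done here purely for an offline demonstration; outcome is a hypothetical helper name.

```python
import requests


def outcome(status_code: int) -> str:
    """Report whether raise_for_status() raises for the given status code."""
    response = requests.Response()  # built by hand, nothing is sent
    response.status_code = status_code
    try:
        response.raise_for_status()
    except requests.exceptions.HTTPError:
        return "raised"
    return "ok"


results = {code: outcome(code) for code in (200, 204, 304, 404, 503)}
print(results)
```

Only the 4xx and 5xx codes raise; a 3xx code such as 304 passes through silently, so redirects and "not modified" replies still need an explicit check where they matter.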

V. Sending Data: POST, PUT, and PATCH Requests

While GET requests are for retrieving data, POST, PUT, and PATCH are used to send data to the server, typically to create or update resources. These operations are crucial for interacting with APIs that manage dynamic content, user data, or system configurations.

5.1 requests.post(): Creating New Resources

The POST method is primarily used to send data to a server to create a new resource or perform a specific action that changes the server's state. When using requests.post(), you typically pass the data you want to send in one of two ways: data for form-encoded content or json for JSON payloads.

5.1.1 Sending Form-Encoded Data with data

Historically, web forms submit data using the application/x-www-form-urlencoded content type. requests allows you to send data in this format by passing a dictionary to the data parameter. requests will automatically encode this dictionary into the correct format and set the Content-Type header.

import requests

url = 'https://jsonplaceholder.typicode.com/posts'  # Endpoint to create a new post
new_post_data = {
    'title': 'My New Blog Post',
    'body': 'This is the content of my brand new blog post.',
    'userId': 1
}

response = requests.post(url, data=new_post_data)

print(f"Status Code: {response.status_code}")  # Expect 201 Created
if response.status_code == 201:
    print("New post created successfully!")
    print(response.json())  # The API often returns the created resource with an ID
else:
    print(f"Failed to create post. Response: {response.text}")

In this example, the dictionary new_post_data is converted into title=My+New+Blog+Post&body=... and sent as the request body. While still used, application/x-www-form-urlencoded is less common for modern RESTful APIs compared to JSON.

5.1.2 Sending JSON Data with json

For most modern APIs, particularly those following the REST architectural style, data is exchanged using JSON (JavaScript Object Notation). requests makes sending JSON data effortless with the json parameter. When you use json=your_dict, requests automatically serializes your Python dictionary (or list) into a JSON string and sets the Content-Type header to application/json. This is the recommended way to send structured data to an OpenAPI endpoint.

import requests

url = 'https://jsonplaceholder.typicode.com/posts'
new_post_json = {
    'title': 'Another JSON Post',
    'body': 'This post was sent as a JSON payload.',
    'userId': 2
}

response = requests.post(url, json=new_post_json)

print(f"Status Code: {response.status_code}")
if response.status_code == 201:
    print("New JSON post created successfully!")
    print(response.json())
else:
    print(f"Failed to create JSON post. Response: {response.text}")

This method is incredibly convenient as it abstracts away the need to manually json.dumps() your dictionary and set the Content-Type header, streamlining interactions with Open Platform services.
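The difference between data and json is easiest to see by preparing both requests and inspecting them before anything is sent. This is a sketch against a hypothetical endpoint; the third request shows the manual route that json= is meant to spare you.

```python
import json

import requests

url = "https://api.example.com/posts"  # hypothetical endpoint, never contacted
payload = {"title": "Hello World", "userId": 1}

# Same dictionary, two encodings.
form_request = requests.Request("POST", url, data=payload).prepare()
json_request = requests.Request("POST", url, json=payload).prepare()

print(form_request.headers["Content-Type"], form_request.body)
print(json_request.headers["Content-Type"], json_request.body)

# The manual equivalent of json=: serialize yourself and set the header.
manual_request = requests.Request(
    "POST",
    url,
    data=json.dumps(payload),
    headers={"Content-Type": "application/json"},
).prepare()
```

The form-encoded body is a query-string-style text, while the JSON body is the serialized dictionary; in both cases requests sets the matching Content-Type header for you.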

5.2 requests.put(): Updating or Replacing Resources

The PUT method is used to update an existing resource or, if the resource does not exist at the specified URL, to create it. A key characteristic of PUT is that it is "idempotent," meaning that multiple identical PUT requests will result in the same state as a single PUT request. When you use PUT, you are typically sending the complete updated representation of the resource.

import requests

# Assume post ID 1 already exists
post_id = 1
url = f'https://jsonplaceholder.typicode.com/posts/{post_id}'
updated_post_data = {
    'id': post_id,  # Often, PUT requests expect the ID in the body as well
    'title': 'Updated Post Title by PUT',
    'body': 'The entire content of the post has been replaced with this new body.',
    'userId': 1
}

response = requests.put(url, json=updated_post_data)

print(f"Status Code: {response.status_code}")  # Expect 200 OK or 204 No Content
if response.status_code in [200, 204]:
    print(f"Post {post_id} updated successfully!")
    if response.status_code == 200:  # Some APIs return the updated resource
        print(response.json())
else:
    print(f"Failed to update post {post_id}. Response: {response.text}")

Notice that for a PUT request, you send the entire resource's data, even if only a small part has changed. This is a crucial distinction from PATCH.

5.3 requests.patch(): Partially Updating Resources

The PATCH method is designed for applying partial modifications to a resource. Instead of sending the full resource representation as with PUT, you send only the specific changes you want to apply. This can be more efficient, especially for large resources where only a few fields need to be modified. However, not all APIs support PATCH, so it's important to consult the API documentation (which might be specified using an OpenAPI document).

import requests

post_id = 1
url = f'https://jsonplaceholder.typicode.com/posts/{post_id}'

# We only want to update the title, not the body or userId
partial_update_data = {
    'title': 'Partially Updated Title with PATCH'
}

response = requests.patch(url, json=partial_update_data)

print(f"Status Code: {response.status_code}")  # Expect 200 OK
if response.status_code == 200:
    print(f"Post {post_id} partially updated successfully!")
    print(response.json())  # API usually returns the full updated resource
else:
    print(f"Failed to partially update post {post_id}. Response: {response.text}")

PATCH is an excellent choice when dealing with resources where only minor adjustments are frequently made, minimizing the data sent over the network. Always refer to the specific API's documentation to understand whether it supports PATCH and what format the partial update payload should take.
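The contrast between PUT and PATCH can be modelled locally with two plain functions. This is a deliberately simplified sketch of how a server might apply each method, not a description of any particular API; apply_put and apply_patch are hypothetical names, and real services may use richer patch formats.

```python
def apply_put(stored: dict, payload: dict) -> dict:
    """PUT: the payload becomes the whole resource."""
    return dict(payload)


def apply_patch(stored: dict, payload: dict) -> dict:
    """PATCH: only the supplied fields change, the rest is kept."""
    return {**stored, **payload}


post = {"id": 1, "title": "Old title", "body": "Old body", "userId": 1}
change = {"title": "New title"}

after_put = apply_put(post, change)
after_patch = apply_patch(post, change)
print(after_put)
print(after_patch)
```

Sending only the changed field with PUT would, under this model, wipe the other fields, which is why a PUT payload normally carries the complete resource.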

VI. Deleting Resources: requests.delete()

The DELETE method is straightforward: it's used to request the removal of a specified resource identified by a URL. Like PUT, DELETE requests are idempotent, meaning that repeatedly calling DELETE on the same resource will have the same outcome as calling it once (the resource will remain deleted after the first successful attempt, and subsequent attempts will typically yield a 404 Not Found or 204 No Content).

import requests

# Assuming we want to delete a specific post, e.g., post with ID 1
post_to_delete_id = 1
url = f'https://jsonplaceholder.typicode.com/posts/{post_to_delete_id}'

response = requests.delete(url)

print(f"Status Code: {response.status_code}")

# A successful DELETE often returns 200 OK (with an empty body or a success message)
# or 204 No Content (if the server has no content to send back).
if response.status_code in [200, 204]:
    print(f"Resource {post_to_delete_id} deleted successfully!")
    if response.text:
        print(f"Response body: {response.text}")  # Might contain a confirmation message
else:
    print(f"Failed to delete resource {post_to_delete_id}. Response: {response.text}")

# On a real API, a GET for the deleted resource should now return 404 Not Found.
# JSONPlaceholder only simulates writes, so this request still returns 200 here.
get_response = requests.get(url)
print(f"Status after delete attempt: {get_response.status_code}")

When performing DELETE operations, it's particularly important to implement robust error handling and potentially confirmation steps in user-facing applications, as data deletion is often irreversible. The clarity and simplicity of requests.delete() make this critical operation manageable.

VII. Request Headers: Customizing Your Interaction

Beyond the URL and the request body, HTTP headers play a vital role in every web interaction. They provide essential metadata about the request, the client, and the expected response, influencing how the server processes your data query. requests allows for easy manipulation of these headers using the headers parameter, which accepts a dictionary of key-value pairs.

7.1 Setting Custom Headers

You might need to set custom headers for various reasons, such as:

- API Keys/Tokens for Authentication: Many APIs require an API key or a Bearer token passed in the Authorization header.

- Specifying Content Type: Although requests often infers Content-Type for json and data parameters, explicit control might be needed for specific scenarios.

- User-Agent String: Identifying your application to the server, which can be useful for debugging or adhering to API usage policies.

- Accept Header: Specifying the preferred media type for the response (e.g., application/json).

import requests

url = 'https://api.github.com/users/octocat'  # Public GitHub API

# Define custom headers
custom_headers = {
    'User-Agent': 'MyPythonApp/1.0 (Contact: myemail@example.com)',
    'Accept': 'application/vnd.github.v3+json',  # Request GitHub API v3 specific JSON format
    # 'Authorization': 'token YOUR_GITHUB_TOKEN'  # Example for authenticated requests
}

response = requests.get(url, headers=custom_headers)

print(f"Status Code: {response.status_code}")
if response.status_code == 200:
    print("GitHub user data:")
    user_data = response.json()
    print(f"Name: {user_data.get('name')}")
    print(f"Bio: {user_data.get('bio')}")
else:
    print(f"Failed to fetch user data. Response: {response.text}")

In this example, we're not only identifying our application with a User-Agent but also specifically requesting a particular version and format of the GitHub API's response using the Accept header. This level of control is crucial for advanced API integrations, especially with OpenAPI compliant services that might offer multiple content types or versions.

7.2 Common Headers and Their Role

- User-Agent: While browsers send their own User-Agent, it's good practice for your script to identify itself. Some APIs or websites might block requests without a User-Agent or from unrecognized ones.

- Content-Type: Essential for POST, PUT, and PATCH requests that include a body. It tells the server how to parse the data you're sending (e.g., application/json, application/x-www-form-urlencoded, multipart/form-data). requests usually handles this automatically when you use json=... or files=..., but explicit setting might be needed.

- Authorization: The backbone of secure API interaction. This header carries authentication credentials like API keys, OAuth tokens (e.g., Bearer YOUR_TOKEN), or Basic Authentication (the word Basic followed by the base64 encoding of username:password). We will elaborate on authentication in the next section.

- Accept: Informs the server about the type of response data the client can handle or prefers. For example, Accept: application/json tells the server you prefer a JSON response.

- Referer: Indicates the URL of the page that linked to the resource being requested. Some APIs use this for security or tracking.

Thoughtful use of headers ensures your requests are correctly interpreted by the server and helps you receive the desired data format, making your data queries more precise and reliable, especially when navigating complex Open Platform environments.
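It can help to look at the headers requests supplies on its own and at how custom headers are stored. The sketch below prints the library defaults via requests.utils.default_headers() and then prepares a request with custom headers; the URL and header values are placeholders, and nothing is sent.

```python
import requests

# Headers that a requests session starts with when you set none yourself.
defaults = requests.utils.default_headers()
for name, value in defaults.items():
    print(f"{name}: {value}")

custom_headers = {"User-Agent": "MyPythonApp/1.0", "Accept": "application/json"}
prepared = requests.Request(
    "GET", "https://api.example.com/data", headers=custom_headers
).prepare()

# Header names are case-insensitive, so any capitalization finds the value.
print(prepared.headers["user-agent"])
```

The default User-Agent identifies the library itself, which is one reason to set your own when an API's usage policy asks clients to identify themselves.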

VIII. Authentication: Securing Your Data Queries

Accessing data from many APIs, particularly those requiring private information or managing user accounts, necessitates authentication. This process verifies your identity or your application's identity to the server, granting you permission to perform certain actions or access specific data. requests provides flexible mechanisms to handle various authentication schemes.

8.1 Basic HTTP Authentication

Basic authentication is one of the simplest forms of authentication. It involves sending a username and password (base64 encoded) in the Authorization header. While simple, it's not secure over unencrypted HTTP connections (always use HTTPS!). requests offers a convenient auth parameter for this.

import requests
from requests.auth import HTTPBasicAuth

# Example for a mock API that supports Basic Auth
# (Note: jsonplaceholder does not support auth, this is a conceptual example)
auth_url = 'https://api.example.com/protected/resource'
username = 'myuser'
password = 'mypassword'

# Pass a tuple (username, password) to the auth parameter
response = requests.get(auth_url, auth=(username, password))

# Alternatively, explicitly use HTTPBasicAuth:
# response = requests.get(auth_url, auth=HTTPBasicAuth(username, password))

print(f"Status Code: {response.status_code}")
if response.status_code == 200:
    print("Successfully authenticated and fetched data.")
    print(response.json())
elif response.status_code == 401:
    print("Authentication failed. Invalid credentials.")
else:
    print(f"An error occurred: {response.text}")

For more complex authentication schemes like Digest Authentication, requests also provides HTTPDigestAuth in requests.auth.
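To make the "base64 encoded" part concrete, the sketch below prepares a request with the auth tuple and compares the resulting header with one built by hand. The credentials and URL are the placeholders from the example above, and the request is never sent.

```python
import base64

import requests

username, password = "myuser", "mypassword"  # placeholder credentials

prepared = requests.Request(
    "GET", "https://api.example.com/protected/resource", auth=(username, password)
).prepare()
header = prepared.headers["Authorization"]

# The same value built by hand: "Basic " + base64("username:password")
manual = "Basic " + base64.b64encode(f"{username}:{password}".encode()).decode()

print(header)
print(header == manual)
```

Because base64 is an encoding rather than encryption, anyone who can read the header can recover the password, which is why Basic authentication should only travel over HTTPS.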

8.2 Token-Based Authentication (API Keys and Bearer Tokens)

This is by far the most common authentication method for modern RESTful APIs.

- Bearer Tokens (OAuth 2.0): After a successful OAuth authentication flow, an API provides an access token (a "bearer token"). This token is then included in the Authorization header for all subsequent requests to protected resources. The format is typically Authorization: Bearer YOUR_ACCESS_TOKEN.

```python
import requests

access_token = 'YOUR_OAUTH_ACCESS_TOKEN'  # Obtain this from an OAuth flow
protected_api_url = 'https://api.example.com/user/profile'

headers_with_token = {
    'Authorization': f'Bearer {access_token}',
    'Accept': 'application/json'
    # Other headers like User-Agent can also be included
}

response = requests.get(protected_api_url, headers=headers_with_token)

print(f"Status Code: {response.status_code}")
if response.status_code == 200:
    print("Successfully fetched user profile with Bearer token.")
    print(response.json())
elif response.status_code == 401:
    print("Unauthorized. Token might be invalid or expired.")
else:
    print(f"Error fetching profile: {response.text}")
```

While requests itself doesn't implement the full OAuth 2.0 flow (which involves redirects, user consent, etc.), it's perfectly capable of using the access token once acquired. For managing complex OAuth flows, libraries like requests-oauthlib can be integrated.

- API Keys: Often a long, randomly generated string, an API key is passed either as a query parameter in the URL or, more securely, in a custom HTTP header (e.g., X-API-Key or Authorization).

```python
import requests

api_key = 'YOUR_SECRET_API_KEY'  # Replace with your actual API key

# Example: API key in header
api_url_header = 'https://api.example.com/data'
headers_with_api_key = {
    'X-API-Key': api_key
}
response_header = requests.get(api_url_header, headers=headers_with_api_key)

# Example: API key as query parameter
api_url_param = 'https://api.example.com/data'
params_with_api_key = {
    'api_key': api_key
}
response_param = requests.get(api_url_param, params=params_with_api_key)

print(f"Header Auth Status: {response_header.status_code}")
print(f"Param Auth Status: {response_param.status_code}")
```
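An alternative to building the Authorization header by hand on every call is a small auth class passed through the auth parameter. The sketch below subclasses requests.auth.AuthBase; BearerAuth is a name chosen for this example rather than something the library ships, and the token and URL are placeholders.

```python
import requests
from requests.auth import AuthBase


class BearerAuth(AuthBase):
    """Attach a bearer token to each request (illustrative helper)."""

    def __init__(self, token: str):
        self.token = token

    def __call__(self, request):
        # requests calls this with the prepared request just before sending.
        request.headers["Authorization"] = f"Bearer {self.token}"
        return request


prepared = requests.Request(
    "GET",
    "https://api.example.com/user/profile",
    auth=BearerAuth("YOUR_OAUTH_ACCESS_TOKEN"),
).prepare()
print(prepared.headers["Authorization"])
```

The same object can be passed as auth=BearerAuth(token) to requests.get(), which keeps token handling in one place if the header format ever changes.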

8.3 Storing Credentials Securely

Crucially, never hardcode sensitive credentials like API keys or tokens directly into your script for production environments. Best practices include:

- Environment Variables: Load credentials from environment variables (os.environ).

- Configuration Files: Use a .ini or .env file (e.g., with python-dotenv) that is excluded from version control.

- Secret Management Services: For enterprise applications, use dedicated secret management services like AWS Secrets Manager, Google Secret Manager, or HashiCorp Vault.
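As a minimal sketch of the environment-variable approach, the helper below reads a key at call time and builds the header from it. The variable name EXAMPLE_API_KEY and the function name build_auth_headers are assumptions made for illustration, not names any API requires.

```python
import os


def build_auth_headers(env_var: str = "EXAMPLE_API_KEY") -> dict:
    """Build an X-API-Key header from an environment variable.

    EXAMPLE_API_KEY is a hypothetical variable name; use whatever your
    deployment defines.
    """
    api_key = os.environ.get(env_var)
    if not api_key:
        # Fail loudly instead of sending an unauthenticated request.
        raise RuntimeError(f"Environment variable {env_var} is not set")
    return {"X-API-Key": api_key}


# Usage (not executed here):
# response = requests.get("https://api.example.com/data", headers=build_auth_headers())
```

Raising early when the variable is missing tends to be easier to debug than a 401 from the server later on.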

Proper authentication is the gateway to accessing valuable data and features offered by an Open Platform or a private API. Understanding these methods and their secure implementation is paramount for any robust data querying application.

IX. Working with Sessions: Efficiency and State Management

Every time you make a call like requests.get() or requests.post(), a new connection to the server is typically established. While fine for occasional requests, repeatedly opening and closing connections can introduce overhead and slow down your application, especially when interacting with the same host multiple times. This is where requests.Session comes into play.

A Session object allows you to persist certain parameters across multiple requests, such as cookies, authentication credentials, and custom headers. More importantly, it reuses the underlying TCP connection to the same host, which significantly improves performance by avoiding the overhead of establishing a new connection for each request (HTTP persistent connections, or keep-alive).

9.1 Why Use requests.Session?

- Performance: Reusing TCP connections (connection pooling) reduces latency and CPU usage, especially for numerous requests to the same domain.

- Cookie Management: A Session object automatically handles and persists cookies received from the server across subsequent requests. This is crucial for maintaining state, such as being logged in to a website.

- Default Parameters: You can set default headers, authentication, or proxy configurations on a Session object, and these will apply to all requests made through that session, reducing redundancy.
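To see how session defaults combine with per-request values without touching the network, one option is to prepare a request and inspect it. This is only a sketch; the URL is a placeholder that is never contacted.

```python
import requests

session = requests.Session()
session.headers.update({"User-Agent": "MyPersistentApp/1.0"})

# Build a request without sending it. Per-request headers are merged with
# the session-level defaults and take precedence when both define a key.
request = requests.Request(
    "GET",
    "https://api.example.com/items",  # hypothetical URL, never contacted
    headers={"Accept": "application/json"},
)
prepared = session.prepare_request(request)

print(prepared.headers["User-Agent"])  # value inherited from the session
print(prepared.headers["Accept"])      # value supplied for this request
session.close()
```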

9.2 Basic Session Usage

import requests

# Create a session object
s = requests.Session()

# Set default headers for the session
s.headers.update({
    'User-Agent': 'MyPersistentApp/1.0',
    'Accept-Language': 'en-US,en;q=0.5'
})

# Make multiple requests using the session
try:
    # First request: httpbin sets the cookie and then redirects to /cookies
    response1 = s.get('https://httpbin.org/cookies/set/sessioncookie/12345')
    print(f"Response 1 Status: {response1.status_code}")
    # The cookie is set on the redirect response, so read it from the session's cookie jar
    print(f"Cookie stored on the session: {s.cookies.get('sessioncookie')}")

    # Second request to a different endpoint on the same domain
    # This request will reuse the connection and automatically send the cookie received earlier
    response2 = s.get('https://httpbin.org/cookies')
    print(f"Response 2 Status: {response2.status_code}")
    print(f"Cookies sent in Request 2: {response2.json().get('cookies')}")

    # You can also set authentication for the entire session
    s.auth = ('user', 'pass')
    response3 = s.get('https://httpbin.org/basic-auth/user/pass')
    print(f"Response 3 Status (with session auth): {response3.status_code}")
except requests.exceptions.RequestException as e:
    print(f"An error occurred: {e}")

# Explicitly closing with s.close() releases the pooled connections promptly.
# This is good practice, especially in long-running applications or when done with the session.
s.close()

In this example, response2 automatically sends the sessioncookie that was set by the server in response1 because both requests were made using the same Session object. Furthermore, response3 correctly authenticates using the auth credentials set on the session.

9.3 Using Sessions with Context Managers

For cleaner resource management, especially ensuring that connections are properly closed, requests.Session objects can be used within a with statement, which acts as a context manager. This ensures that s.close() is called automatically when the block is exited, even if errors occur.

import requests

with requests.Session() as s:
    s.headers.update({
        'User-Agent': 'MyContextManagedApp/1.0',
        'Accept': 'application/json'
    })

    try:
        r1 = s.get('https://jsonplaceholder.typicode.com/users/1')
        r1.raise_for_status()
        print(f"User 1: {r1.json()['name']}")

        r2 = s.get('https://jsonplaceholder.typicode.com/todos/1')
        r2.raise_for_status()
        print(f"Todo 1: {r2.json()['title']}")
    except requests.exceptions.HTTPError as e:
        print(f"HTTP Error: {e}")
    except requests.exceptions.RequestException as e:
        print(f"General Request Error: {e}")

print("Session closed automatically.")

Using requests.Session is a best practice for any application that makes multiple HTTP requests to the same domain, from simple scripts interacting with an Open Platform to complex microservices querying various APIs. It significantly enhances efficiency and simplifies state management.

X. Handling Complex Data: File Uploads and Streaming

Beyond simple JSON or form data, requests is also adept at handling more complex data types, such as files for uploads and large binary streams for efficient downloads. These capabilities are essential for many modern web applications, from managing user-generated content to processing big datasets from an API.

10.1 File Uploads

Uploading files (e.g., images, documents, CSVs) to a server is a common task. requests simplifies this by allowing you to pass a files dictionary to POST or PUT methods. This dictionary maps the form field name to the file content. requests automatically handles the multipart/form-data encoding, which is the standard for file uploads.

You can specify a file in several ways:

- From an open file object: {'fieldname': open('filename', 'rb')}. The file object should be opened in binary read mode ('rb').

- From a tuple: {'fieldname': ('filename.txt', open('filename.txt', 'rb'), 'text/plain', {'Expires': '0'})}. This allows specifying the filename, content, content type, and custom headers.

- From a string: {'fieldname': ('filename.txt', 'my text content', 'text/plain')}. The string content will be treated as the file data.

import requests
import os

# Create a dummy file for upload
dummy_file_path = 'dummy_upload.txt'
with open(dummy_file_path, 'w') as f:
    f.write('This is a test file for upload via requests.')

# URL of a service that accepts file uploads (e.g., httpbin.org/post)
upload_url = 'https://httpbin.org/post'

try:
    with open(dummy_file_path, 'rb') as f:
        # 'uploadFile' is the name of the form field the server expects the file under.
        # It's usually defined in the API documentation.
        files = {'uploadFile': f}

        # You can also send other form data alongside the file
        other_data = {'description': 'A simple text file upload'}

        response = requests.post(upload_url, files=files, data=other_data)

    print(f"Status Code: {response.status_code}")
    if response.status_code == 200:
        print("File uploaded successfully!")
        print(response.json()['files'])  # httpbin shows the uploaded file
        print(response.json()['form'])  # Shows other form data
    else:
        print(f"Failed to upload file. Response: {response.text}")
except requests.exceptions.RequestException as e:
    print(f"An error occurred during file upload: {e}")
finally:
    # Clean up the dummy file
    if os.path.exists(dummy_file_path):
        os.remove(dummy_file_path)

When working with file uploads to a real API, always consult its documentation to determine the correct field name for the file and any other required form parameters. This functionality is vital for building applications that interact with an Open Platform for user content or data processing.
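If you want to check how a files dictionary is encoded before pointing it at a real server, a prepared request can be inspected locally. The sketch below uses the tuple form with in-memory string content; the field name report, the filename, and the URL are all illustrative assumptions.

```python
import requests

files = {
    # (filename, content, content type): all three values are illustrative
    "report": ("report.csv", "id,name\n1,Ada\n", "text/csv"),
}
request = requests.Request(
    "POST",
    "https://api.example.com/upload",  # hypothetical endpoint, never contacted
    files=files,
    data={"description": "Quarterly report"},
)
prepared = request.prepare()

# The Content-Type header carries the multipart boundary chosen by requests.
print(prepared.headers["Content-Type"])
# The encoded body contains one part per form field and per file.
print(b'filename="report.csv"' in prepared.body)
```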

10.2 Streaming Downloads for Large Files

When downloading very large files, such as a multi-gigabyte dataset from an API or a high-resolution video, it's generally a bad idea to load the entire content into memory at once. Doing so can quickly exhaust your system's RAM, leading to crashes or performance issues. requests provides a streaming capability that allows you to download content piece by piece, processing it as it arrives without holding the entire file in memory.

To enable streaming, set stream=True in your request call. Then, you can iterate over response.iter_content() (for raw bytes) or response.iter_lines() (for line-by-line processing of text).

import requests

# Example: Downloading a large file (e.g., a public dataset or a large image)
# Replace with an actual large file URL if testing performance
large_file_url = 'https://speed.hetzner.de/100MB.bin'  # A dummy 100MB binary file for testing
output_filename = 'downloaded_large_file.bin'

try:
    with requests.get(large_file_url, stream=True) as response:
        response.raise_for_status()  # Raise an exception for HTTP errors

        total_size = int(response.headers.get('content-length', 0))
        downloaded_size = 0
        chunk_size = 8192  # 8KB chunks

        print(f"Starting download of {total_size / (1024*1024):.2f} MB...")

        with open(output_filename, 'wb') as f:
            for chunk in response.iter_content(chunk_size=chunk_size):
                if chunk:  # Filter out keep-alive new chunks
                    f.write(chunk)
                    downloaded_size += len(chunk)
                    # Optional: print progress
                    print(f"\rDownloaded {downloaded_size / (1024*1024):.2f} MB of {total_size / (1024*1024):.2f} MB", end='')

    print("\nDownload complete!")
except requests.exceptions.RequestException as e:
    print(f"An error occurred during download: {e}")

The iter_content(chunk_size) method yields chunks of bytes, which you can then write to a file, process incrementally, or pass to another stream. This efficient approach prevents memory overload and is indispensable when dealing with high-volume data querying from an API or an Open Platform that serves large datasets.
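As one example of processing chunks incrementally rather than writing them to disk, the sketch below computes a SHA-256 checksum from any iterable of byte chunks. The helper name sha256_of_chunks is an assumption for illustration; it is written against a plain iterable so it can be tried without a network connection.

```python
import hashlib
from typing import Iterable


def sha256_of_chunks(chunks: Iterable[bytes]) -> str:
    """Hash a stream of byte chunks without holding the whole payload in memory."""
    digest = hashlib.sha256()
    for chunk in chunks:
        if chunk:  # skip empty chunks
            digest.update(chunk)
    return digest.hexdigest()


# Usage with a streamed response (not executed here):
# with requests.get(url, stream=True) as response:
#     response.raise_for_status()
#     checksum = sha256_of_chunks(response.iter_content(chunk_size=8192))
```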

XI. Error Handling and Robustness in API Interactions

In the imperfect world of network communication and server-side logic, errors are inevitable. A robust data querying application doesn't just make requests; it anticipates, detects, and gracefully handles potential issues. requests provides a comprehensive set of exceptions and features to build resilient API interactions.

11.1 requests.exceptions: Understanding Error Types

requests defines a hierarchy of exceptions that you can catch to differentiate between various types of errors:

- requests.exceptions.RequestException: This is the base exception for all requests-related errors. Catching this will cover almost any issue that can arise from a requests call.

- requests.exceptions.ConnectionError: Raised when there's a network problem (e.g., DNS failure, refused connection, no internet). This typically occurs before the request reaches the server.

- requests.exceptions.HTTPError: Raised for 4xx or 5xx HTTP status codes (client or server errors). requests does not raise this on its own; it is triggered by calling response.raise_for_status().

- requests.exceptions.Timeout: Raised if a request exceeds its configured timeout.

- requests.exceptions.TooManyRedirects: Raised if a request exceeds the configured maximum number of redirections.

- requests.exceptions.URLRequired: Raised when a valid URL is required to make a request but none was supplied. Malformed URLs usually surface as related exceptions such as MissingSchema or InvalidURL.

import requests

invalid_url = 'http://nonexistent-domain-12345.com'
timeout_url = 'http://httpbin.org/delay/10'  # This endpoint delays response by 10 seconds
server_error_url = 'http://httpbin.org/status/500'  # Simulates a server error
not_found_url = 'http://httpbin.org/status/404'  # Simulates a resource not found

def make_robust_request(url, timeout=5):
    try:
        response = requests.get(url, timeout=timeout)
        response.raise_for_status()  # Check for HTTP errors (4xx or 5xx)
        print(f"Successfully fetched {url} - Status: {response.status_code}")
        return response.json() if 'json' in response.headers.get('Content-Type', '') else response.text
    except requests.exceptions.HTTPError as e:
        print(f"HTTP Error for {url}: {e} (Status Code: {e.response.status_code})")
    except requests.exceptions.Timeout as e:
        # Caught before ConnectionError because ConnectTimeout inherits from both
        print(f"Timeout Error for {url}: Request timed out after {timeout} seconds.")
    except requests.exceptions.ConnectionError as e:
        print(f"Connection Error for {url}: {e}")
    except requests.exceptions.RequestException as e:
        print(f"An unexpected Requests error occurred for {url}: {e}")
    except Exception as e:
        print(f"An unexpected non-Requests error occurred for {url}: {e}")
    return None

print("--- Testing invalid URL ---")
make_robust_request(invalid_url)

print("\n--- Testing timeout ---")
make_robust_request(timeout_url, timeout=3)  # Will timeout

print("\n--- Testing server error ---")
make_robust_request(server_error_url)

print("\n--- Testing not found ---")
make_robust_request(not_found_url)

print("\n--- Testing successful request ---")
make_robust_request('https://httpbin.org/get')

This structured try-except block demonstrates how to catch specific exceptions, allowing you to provide tailored error messages or implement specific recovery logic based on the nature of the failure.
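Because the order of except clauses depends on which exception inherits from which, it can help to check the relationships directly. The short sketch below only inspects the classes and makes no requests; the dictionary name hierarchy is just a label chosen for this example.

```python
import requests

exc = requests.exceptions

# Each entry records whether the first class is a subclass of the second.
hierarchy = {
    "HTTPError -> RequestException": issubclass(exc.HTTPError, exc.RequestException),
    "ConnectTimeout -> ConnectionError": issubclass(exc.ConnectTimeout, exc.ConnectionError),
    "ConnectTimeout -> Timeout": issubclass(exc.ConnectTimeout, exc.Timeout),
    "ReadTimeout -> ConnectionError": issubclass(exc.ReadTimeout, exc.ConnectionError),
    "SSLError -> ConnectionError": issubclass(exc.SSLError, exc.ConnectionError),
}

for relation, holds in hierarchy.items():
    print(f"{relation}: {holds}")
```

Since a connect timeout is both a ConnectionError and a Timeout, whichever of those two handlers appears first will catch it.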

11.2 Retry Mechanisms: Handling Transient Errors

Network glitches, temporary server overload, or rate limits can cause transient errors that might resolve themselves if the request is retried after a short delay. Implementing a retry mechanism significantly enhances the robustness of your data querying.

A simple retry logic can be implemented manually:

import requests
import time

def retry_request(url, max_retries=3, delay_seconds=2):
    for attempt in range(max_retries):
        try:
            response = requests.get(url, timeout=5)
            response.raise_for_status()
            print(f"Attempt {attempt + 1}: Request to {url} successful.")
            return response.json()
        except requests.exceptions.HTTPError as e:
            # 4xx codes generally indicate a client-side error that won't resolve on retry,
            # so only 5xx responses are retried.
            if 400 <= e.response.status_code < 500:
                print(f"Attempt {attempt + 1}: Client-side error ({e.response.status_code}), not retrying.")
                break
            print(f"Attempt {attempt + 1}: Server error - {e}.")
        except (requests.exceptions.ConnectionError, requests.exceptions.Timeout) as e:
            print(f"Attempt {attempt + 1}: Error - {e}.")

        # Wait only if another attempt is still to come
        if attempt < max_retries - 1:
            print(f"Retrying in {delay_seconds} seconds...")
            time.sleep(delay_seconds)

    print(f"Failed to fetch {url}.")
    return None

# Example with a URL that always fails with a server error.
# A real API might return a 500 and then a 200 on a later attempt;
# httpbin.org/status/500 is static, so every attempt here fails.
print("\n--- Testing retry mechanism (conceptual server error) ---")
retry_request('http://httpbin.org/status/500', max_retries=2, delay_seconds=1)

# Simulating "failure, then success" requires a dedicated mock server.
# This call simply shows the success path, which normally returns on the first attempt.
print("\n--- Testing retry mechanism (successful request) ---")
retry_request('https://httpbin.org/get', max_retries=2, delay_seconds=1)

For more sophisticated retry strategies (e.g., exponential backoff, handling specific status codes), consider mounting requests.adapters.HTTPAdapter, which ships with requests itself, configured with urllib3's Retry class.

from requests.adapters import HTTPAdapter
from urllib3.util.retry import Retry
import requests

def session_with_retries():
    s = requests.Session()
    retries = Retry(total=3,  # Max number of retries
                    backoff_factor=0.1,  # Base for exponential backoff; exact delays depend on the urllib3 version
                    status_forcelist=[500, 502, 503, 504],  # Which status codes to retry on
                    allowed_methods=['GET'])  # Which HTTP methods to retry (urllib3 1.26+)
    s.mount('http://', HTTPAdapter(max_retries=retries))
    s.mount('https://', HTTPAdapter(max_retries=retries))
    return s

print("\n--- Testing session with built-in retries ---")
session = session_with_retries()

try:
    # This will trigger retries for 500, 502, 503, 504
    # Since httpbin.org/status/500 always returns 500, the retries are exhausted and
    # requests raises RetryError (a RequestException), so the second handler below runs
    response = session.get('http://httpbin.org/status/500')
    response.raise_for_status()
    print("Request successful (unexpected for 500 status)!")
except requests.exceptions.HTTPError as e:
    print(f"HTTP Error after retries: {e}")
except requests.exceptions.RequestException as e:
    print(f"General Requests error after retries: {e}")

# Example for a request that should succeed normally
try:
    response = session.get('https://httpbin.org/get')
    response.raise_for_status()
    print(f"Successful request via retry-enabled session: {response.status_code}")
except requests.exceptions.RequestException as e:
    print(f"Error for successful request: {e}")

Implementing robust error handling and retry mechanisms is paramount for any production-ready application that relies on external APIs, particularly when interacting with an Open Platform where network conditions or service availability might fluctuate. This ensures your data queries are resilient and can recover from transient issues.

XII. Proxies and SSL/TLS Verification

When making web requests, especially in enterprise environments, for security, or for bypassing certain network restrictions, you might need to route your traffic through a proxy server. Furthermore, ensuring the authenticity and integrity of the server you're communicating with is crucial, which is where SSL/TLS verification comes in. requests offers robust support for both.

12.1 Using Proxies

A proxy server acts as an intermediary for requests from clients seeking resources from other servers. When using a proxy, your request goes to the proxy, which then forwards it to the target server, and the response comes back through the proxy to you. This can be used for:

- Security: Masking your IP address from the target server.

- Access Control: Bypassing geographic restrictions or internal network firewalls.

- Logging/Monitoring: Network administrators can monitor traffic.

requests allows you to configure proxies using the proxies parameter, which takes a dictionary mapping protocols (http, https) to proxy URLs.

import requests

# Example proxy configuration (replace with your actual proxy)
# The dictionary keys refer to the scheme of the URL you are requesting:
# 'http' applies to http:// URLs and 'https' to https:// URLs.
proxies = {
    'http': 'http://10.10.1.10:3128',
    'https': 'http://10.10.1.10:1080',  # HTTPS requests are commonly tunneled through an HTTP proxy
    # For authenticated proxies:
    # 'http': 'http://user:pass@10.10.1.10:3128'
}

test_url = 'http://httpbin.org/get'  # This will show your origin IP, or the proxy's IP if successful
https_test_url = 'https://httpbin.org/get'  # Test with HTTPS

try:
    print("--- Testing HTTP request with proxy ---")
    response_http = requests.get(test_url, proxies=proxies, timeout=5)
    response_http.raise_for_status()
    print(f"HTTP Request via proxy successful. Origin IP: {response_http.json().get('origin')}")

    print("\n--- Testing HTTPS request with proxy ---")
    response_https = requests.get(https_test_url, proxies=proxies, timeout=5)
    response_https.raise_for_status()
    print(f"HTTPS Request via proxy successful. Origin IP: {response_https.json().get('origin')}")
except requests.exceptions.ProxyError as e:
    print(f"Proxy Error: Could not connect to proxy. Check proxy configuration: {e}")
except requests.exceptions.ConnectionError as e:
    print(f"Connection Error: {e}. Check network connectivity or proxy availability.")
except requests.exceptions.RequestException as e:
    print(f"An error occurred: {e}")

For persistent proxy usage across multiple requests, you can set proxies on a requests.Session object. Also, requests respects the HTTP_PROXY and HTTPS_PROXY environment variables by default if no explicit proxies parameter is provided.
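A minimal sketch of session-level proxy configuration follows. The proxy addresses are placeholders, and nothing is sent over the network. Setting trust_env to False is an optional choice shown here under the assumption that you want the session to ignore proxy-related environment variables entirely.

```python
import requests

session = requests.Session()

# Placeholder proxy addresses; replace with your own.
session.proxies.update({
    "http": "http://10.10.1.10:3128",
    "https": "http://10.10.1.10:1080",
})

# Optional: stop requests from consulting environment settings such as
# HTTP_PROXY / HTTPS_PROXY for this session.
session.trust_env = False

print(session.proxies)
session.close()
```

If environment proxy variables are set and trust_env is left at its default, they may take precedence over session.proxies, so passing proxies per request or disabling trust_env are the more predictable options.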

12.2 SSL/TLS Verification: Ensuring Trust

SSL/TLS (Secure Sockets Layer/Transport Layer Security) is the standard technology for establishing an encrypted link between a web server and a client. When you access a website via https://, your browser verifies the server's SSL certificate to ensure it's communicating with the legitimate server and that the connection is secure.

requests performs SSL verification by default for all HTTPS requests. This means it will check the server's SSL certificate against a set of trusted Certificate Authorities (CAs) that are bundled with certifi (which requests uses). If the certificate is invalid, expired, or issued by an untrusted CA, requests will raise an SSLError.

import requests

# Default behavior: SSL verification is ON
secure_url = 'https://www.google.com'
invalid_ssl_url = 'https://self-signed.badssl.com/'  # A known site with an invalid SSL cert

print("--- Testing secure URL (verification ON) ---")
try:
    response = requests.get(secure_url)
    response.raise_for_status()
    print(f"Successfully connected to {secure_url} with SSL verification.")
except requests.exceptions.SSLError as e:
    print(f"SSL Error for {secure_url}: {e}")
except requests.exceptions.RequestException as e:
    print(f"Other error for {secure_url}: {e}")

print("\n--- Testing invalid SSL URL (verification ON) ---")
try:
    response = requests.get(invalid_ssl_url)
    response.raise_for_status()
    print(f"Successfully connected to {invalid_ssl_url} with SSL verification (this is unexpected!).")
except requests.exceptions.SSLError as e:
    print(f"SSL Error for {invalid_ssl_url}: {e} (Expected error, connection blocked due to untrusted cert).")
except requests.exceptions.RequestException as e:
    print(f"Other error for {invalid_ssl_url}: {e}")

Disabling SSL Verification (verify=False): While requests allows you to disable SSL verification by setting verify=False, this is highly discouraged in production environments as it makes your application vulnerable to man-in-the-middle attacks. Only use verify=False for local development, testing with self-signed certificates, or when you fully understand and accept the security risks.

import urllib3

# Continues from the previous example (requests and invalid_ssl_url are already defined)
print("\n--- Testing invalid SSL URL (verification OFF - NOT RECOMMENDED) ---")

# Suppress the InsecureRequestWarning that verify=False would otherwise emit
urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

try:
    response = requests.get(invalid_ssl_url, verify=False)
    response.raise_for_status()
    print(f"Successfully connected to {invalid_ssl_url} with SSL verification DISABLED.")
    print(f"Response content length: {len(response.content)}")
except requests.exceptions.RequestException as e:
    print(f"Error even with verification OFF: {e}")

Custom CA Certificates: For specific scenarios, such as communicating with an internal server that uses a corporate CA not included in the default certifi bundle, you can specify a path to a custom CA bundle file (.pem format) using the verify parameter: requests.get(url, verify='/path/to/your/custom_ca_bundle.pem'). This allows you to maintain security while accommodating custom infrastructure.

Properly managing proxies and robustly verifying SSL certificates are critical components of secure and compliant data querying, especially when interacting with sensitive data or within tightly controlled network environments from an Open Platform perspective.

XIII. Advanced Scenarios and Best Practices for Data Querying

Having covered the core functionalities of requests, let's explore advanced scenarios and best practices that elevate your data querying capabilities, making your applications more efficient, compliant, and maintainable.

13.1 API Rate Limiting: How to Detect and Handle It

Many APIs, particularly those from public or Open Platform providers, impose rate limits to prevent abuse, ensure fair usage, and maintain service stability. Exceeding these limits typically results in 429 Too Many Requests HTTP status codes.

To handle rate limits gracefully:

- Read API Documentation: The API documentation will usually specify the rate limits and how they are communicated (e.g., via headers like X-RateLimit-Limit, X-RateLimit-Remaining, X-RateLimit-Reset).

- Monitor Headers: Check response.headers for rate limit information.

- Implement Delays: If a 429 is received, or if X-RateLimit-Remaining is low, pause execution using time.sleep(). Some APIs even provide a Retry-After header indicating when to retry.

import requests
import time

# GitHub's public API enforces rate limits, especially for unauthenticated clients.
# In a real scenario, replace this with the rate-limited API endpoint you are calling.
rate_limited_url = 'https://api.github.com/users/octocat/repos'

def make_rate_limited_request(url, max_attempts=5):
    for attempt in range(max_attempts):
        response = requests.get(url)

        if response.status_code == 429:
            retry_after = response.headers.get('Retry-After')
            if retry_after:
                # Assumes Retry-After is given in seconds; some servers send an HTTP date instead
                wait_time = int(retry_after)
                print(f"Rate limit hit! Waiting for {wait_time} seconds before retrying.")
                time.sleep(wait_time + 1)  # Add a small buffer
            else:
                # Fallback if Retry-After header is missing
                print(f"Rate limit hit! No Retry-After header, waiting {2**attempt} seconds.")
                time.sleep(2**attempt)  # Exponential backoff
        elif response.status_code == 200:
            print(f"Request successful on attempt {attempt + 1}.")
            # Check GitHub's actual rate limit headers for informational purposes
            print(f"GitHub Rate Limit Remaining: {response.headers.get('X-RateLimit-Remaining')}")
            print(f"GitHub Rate Limit Reset: {time.ctime(int(response.headers.get('X-RateLimit-Reset', 0)))}")
            return response.json()
        else:
            response.raise_for_status()  # Raise for other HTTP errors

    print(f"Failed to complete request after {max_attempts} attempts due to rate limiting.")
    return None

# make_rate_limited_request(rate_limited_url)  # Uncomment to test with GitHub's rate limits (might require token for higher limits)

print("Demonstrating rate limit handling (conceptual; the call above is commented out).")
print("You would typically replace rate_limited_url with a real API endpoint that has rate limits.")

For complex rate limiting, consider libraries like ratelimit or backoff that provide decorators for automatic handling.
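The Retry-After header can carry either a number of seconds or an HTTP date, so a small parsing helper avoids a crash when int() meets a date string. The function below is a sketch using only the standard library; its name and the optional now parameter (added to make it easy to test) are assumptions for this example.

```python
import time
from email.utils import parsedate_to_datetime
from typing import Optional


def seconds_from_retry_after(value: Optional[str], now: Optional[float] = None) -> Optional[float]:
    """Convert a Retry-After header value into a number of seconds to wait.

    Returns None when the header is missing or cannot be parsed.
    """
    if not value:
        return None
    value = value.strip()
    if value.isdigit():
        return float(value)  # delay given directly in seconds
    try:
        retry_at = parsedate_to_datetime(value)  # HTTP-date form
    except (TypeError, ValueError):
        return None
    current = time.time() if now is None else now
    return max(0.0, retry_at.timestamp() - current)


# Usage (not executed here):
# wait = seconds_from_retry_after(response.headers.get("Retry-After"))
# time.sleep(wait if wait is not None else 2 ** attempt)
```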

13.2 Pagination: Iterating Through API Responses

Many APIs return large datasets in chunks, or "pages," rather than all at once. This is called pagination. You typically fetch one page at a time, then use information from the response (like a next_page_url or offset/limit parameters) to request the next page until all data is retrieved.

Common pagination strategies include:

- Offset/Limit: GET /items?offset=0&limit=100

- Page Number/Page Size: GET /items?page=1&page_size=50

- Cursor/Token-based: GET /items?cursor=some_token (more resilient to data changes)

- Link Headers: Some APIs provide Link headers pointing to next, prev, first, last pages.

import requests

# Example: Paginated data from a mock API
# This API uses _page and _limit for pagination
base_url = 'https://jsonplaceholder.typicode.com/comments'
all_comments = []
page = 1
limit = 10  # Number of items per page

while True:
    params = {'_page': page, '_limit': limit}
    response = requests.get(base_url, params=params)
    response.raise_for_status()

    current_page_comments = response.json()
    if not current_page_comments:
        break  # No more comments, reached the end

    all_comments.extend(current_page_comments)
    print(f"Fetched page {page} with {len(current_page_comments)} comments. Total fetched: {len(all_comments)}")
    page += 1

print(f"\nTotal comments fetched across all pages: {len(all_comments)}")
# print(all_comments[:5])  # Print first 5 comments

Building a robust pagination loop is crucial for exhaustively querying data from an API, ensuring you don't miss any records from an Open Platform's vast datasets.
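Cursor-based pagination follows the same loop shape but passes a token instead of a page number. The generator below is a sketch under stated assumptions: the response keys items and next_cursor are hypothetical, and the page-fetching function is injected so the loop can be exercised without a network call.

```python
from typing import Callable, Iterator, Optional


def iter_cursor_items(fetch_page: Callable[[Optional[str]], dict]) -> Iterator[object]:
    """Yield items from every page, following the cursor until it runs out.

    fetch_page receives the current cursor (None for the first page) and
    returns a dict with hypothetical keys "items" and "next_cursor".
    """
    cursor = None
    while True:
        page = fetch_page(cursor)
        yield from page.get("items", [])
        cursor = page.get("next_cursor")
        if not cursor:
            break


# Usage with requests (not executed here; the endpoint is a placeholder):
# def fetch_page(cursor):
#     params = {"cursor": cursor} if cursor else {}
#     response = requests.get("https://api.example.com/items", params=params, timeout=10)
#     response.raise_for_status()
#     return response.json()
#
# for item in iter_cursor_items(fetch_page):
#     print(item)
```

Keeping the HTTP call separate from the loop makes it straightforward to swap in a Session or add retries later.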

13.3 Working with Different Data Formats (JSON, XML, CSV)

While JSON is dominant, you might encounter other formats:

- JSON: response.json() is your best friend.

- XML: Use response.text and then parse with a dedicated XML library like xml.etree.ElementTree (built-in) or lxml (third-party).

```python
import requests
import xml.etree.ElementTree as ET

xml_url = 'https://www.w3schools.com/xml/note.xml'  # Example XML
try:
    response = requests.get(xml_url)
    response.raise_for_status()
    root = ET.fromstring(response.text)
    to_node = root.find('to')
    from_node = root.find('from')
    heading_node = root.find('heading')
    body_node = root.find('body')

    if to_node is not None and from_node is not None:
        print(f"XML Note To: {to_node.text}")
        print(f"XML Note From: {from_node.text}")
        print(f"XML Note Heading: {heading_node.text}")
        print(f"XML Note Body: {body_node.text}")
    else:
        print("Could not find 'to' or 'from' nodes in XML.")
except requests.exceptions.RequestException as e:
    print(f"Error fetching or parsing XML: {e}")
except ET.ParseError as e:
    print(f"XML Parse Error: {e}")
```

- CSV: Use response.text and Python's csv module or pandas.read_csv for direct processing.

```python
import requests
import io
import csv
import pandas as pd

csv_url = 'https://raw.githubusercontent.com/plotly/datasets/master/api_docs/mt_cars.csv'
try:
    response = requests.get(csv_url)
    response.raise_for_status()

    # Using csv module
    csv_file = io.StringIO(response.text)
    reader = csv.reader(csv_file)
    header = next(reader)
    print(f"CSV Header: {header}")
    first_row = next(reader)
    print(f"First Data Row: {first_row}")

    # Using pandas
    # pd.read_csv takes a file-like object or a path, so wrap the text in StringIO
    df = pd.read_csv(io.StringIO(response.text))
    print("\nDataFrame head:")
    print(df.head())
except requests.exceptions.RequestException as e:
    print(f"Error fetching or parsing CSV: {e}")
```

Choosing the right parsing tool is crucial for efficient data extraction, especially when consuming diverse data streams from a complex Open Platform.

13.4 Integrating with OpenAPI Specifications

OpenAPI (formerly Swagger) is a language-agnostic specification for describing RESTful APIs. When an API provides an OpenAPI document, it acts as a comprehensive blueprint, detailing all available endpoints, their HTTP methods, required parameters (query, path, header, body), request/response data models, and authentication schemes.

Integrating with OpenAPI specifications using requests means:

- Reduced Guesswork: You know exactly what URLs to hit, what parameters to send, and what response to expect, minimizing trial-and-error.

- Automated Client Generation: Tools exist that can generate requests-based client code directly from an OpenAPI specification, though for simple cases, manual interaction is fine.

- Validation: You can validate your requests against the API's schema before sending them, catching errors early.

While requests doesn't directly consume OpenAPI documents, understanding the specification vastly simplifies how you construct your requests calls, ensuring they are perfectly aligned with the API's design. This is particularly valuable for complex enterprise APIs or when building integrations on a large Open Platform.
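To make the idea concrete, the sketch below reads a small hand-written fragment shaped like an OpenAPI 3 document and uses it to assemble a prepared request. The spec contents, the build_request helper, and the example.com server are all illustrative assumptions; real documents are far richer, and nothing here is sent over the network.

```python
import requests

# A hand-written fragment in the shape of an OpenAPI 3 document (illustrative only).
spec = {
    "servers": [{"url": "https://api.example.com/v1"}],
    "paths": {
        "/users/{userId}/orders": {
            "get": {
                "parameters": [
                    {"name": "userId", "in": "path", "required": True},
                    {"name": "status", "in": "query", "required": False},
                ]
            }
        }
    },
}


def build_request(spec, path, method, values):
    """Assemble a PreparedRequest from the spec's path and query parameters."""
    operation = spec["paths"][path][method.lower()]
    url_path, query = path, {}
    for param in operation.get("parameters", []):
        name = param["name"]
        if name not in values:
            if param.get("required"):
                raise ValueError(f"Missing required parameter: {name}")
            continue
        if param["in"] == "path":
            url_path = url_path.replace("{" + name + "}", str(values[name]))
        elif param["in"] == "query":
            query[name] = values[name]
    url = spec["servers"][0]["url"] + url_path
    return requests.Request(method.upper(), url, params=query).prepare()


prepared = build_request(spec, "/users/{userId}/orders", "get", {"userId": 42, "status": "open"})
print(prepared.method, prepared.url)
```

Checking required parameters before sending is a lightweight form of the validation described above; dedicated OpenAPI tooling goes much further.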

13.5 The Role of API Management Platforms and APIPark

As developers increasingly interact with a multitude of APIs, often from diverse Open Platform providers, managing these integrations efficiently becomes paramount. This is where dedicated API management solutions shine. They help standardize, secure, document, and monitor APIs, whether you are providing them or consuming them. For instance, an Open Source AI Gateway and API Management Platform like APIPark provides robust tools to streamline API consumption and deployment.

APIPark assists developers by:

- Unifying API Formats: It can standardize request data formats across various AI models and REST services, meaning your requests calls don't need to change drastically even if the underlying API evolves. This simplifies your requests code and reduces maintenance.

- Lifecycle Management: From design to deprecation, APIPark helps manage the entire API lifecycle, ensuring stability and consistency, which translates to more reliable data queries from your requests-based applications.

- Security and Access Control: It can centralize authentication and authorization, making your requests to different APIs more secure and manageable, rather than juggling multiple token types. For instance, it allows for API resource access approval, preventing unauthorized calls even with valid credentials until an administrator approves a subscription.

- Performance and Scalability: As an AI gateway, APIPark can optimize API traffic, perform load balancing, and offer high performance (rivaling Nginx), meaning your requests calls will be handled efficiently, even under heavy load.

- Monitoring and Analysis: Detailed logging and powerful data analysis features in APIPark help you track every API call, diagnose issues that might affect your requests, and observe long-term trends, which is invaluable for debugging and optimizing your data querying strategies.

So, while requests is your powerful client for making individual API calls, platforms like APIPark provide the overarching infrastructure to manage a fleet of APIs, ensuring that your requests interactions are more efficient, secure, and maintainable, especially in complex enterprise or multi-API environments. This is a critical consideration for any organization serious about robust Open Platform integration and API governance.

13.6 Logging and Monitoring

For any application interacting with external services, robust logging is non-negotiable. Log your requests calls, responses (especially status codes and error messages), and any exceptions. This is vital for debugging, auditing, and understanding the behavior of your application and the APIs it interacts with.

import logging

import requests

logging.basicConfig(level=logging.INFO, format='%(asctime)s - %(levelname)s - %(message)s')

def logged_request(method, url, **kwargs):
    """Send a request, logging the call, the outcome, and any failure."""
    # Caution: params, data, and json can contain secrets; mask them before logging in production.
    logging.info(f"Making {method.upper()} request to: {url} with params: {kwargs.get('params')}, data: {kwargs.get('data')}, json: {kwargs.get('json')}")
    try:
        response = requests.request(method, url, **kwargs)
        logging.info(f"Received response for {url} - Status: {response.status_code}")
        response.raise_for_status()
        return response
    except requests.exceptions.HTTPError as e:
        # e.response is the response that made raise_for_status() fail.
        logging.error(f"HTTP Error for {url} (Status {e.response.status_code}): {e}. Response body: {e.response.text[:200]}...")
        raise  # Re-raise to allow higher-level handling
    except requests.exceptions.RequestException as e:
        logging.error(f"Network or other Requests Error for {url}: {e}")
        raise
    except Exception as e:
        logging.critical(f"Unexpected error during request to {url}: {e}")
        raise

try:
    response = logged_request('get', 'https://jsonplaceholder.typicode.com/posts/1', timeout=10)
    print(f"Post title: {response.json()['title']}")
    logged_request('get', 'https://jsonplaceholder.typicode.com/nonexistent', timeout=10)  # This will log an HTTP error
except Exception:
    pass  # Errors were already logged above; swallow them so the demo keeps running

This structured logging allows for easy traceability, especially when dealing with complex API workflows or debugging issues arising from an Open Platform integration.
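One caveat with logging request details is that params and headers frequently carry credentials. A minimal sketch of masking them before they reach the log follows. The redact and describe_call helpers and the SENSITIVE_KEYS set are illustrative names and an assumed list, not part of requests or the logging module, and the sketch only handles params and headers passed as dictionaries.

```python
import logging

# Assumed list of key names that should never be logged in clear text.
SENSITIVE_KEYS = {"authorization", "api_key", "apikey", "appid", "token", "password"}


def redact(mapping):
    """Return a copy of a dict with the values of sensitive keys masked."""
    if not mapping:
        return {}
    return {
        key: "***" if str(key).lower() in SENSITIVE_KEYS else value
        for key, value in mapping.items()
    }


def describe_call(method, url, **kwargs):
    """Build the log message for a request without leaking credentials."""
    return (
        f"Making {method.upper()} request to: {url} "
        f"with params: {redact(kwargs.get('params'))}, "
        f"headers: {redact(kwargs.get('headers'))}"
    )


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(asctime)s - %(levelname)s - %(message)s")
    logging.info(describe_call(
        "get",
        "https://api.example.com/items",  # placeholder URL
        params={"q": "London", "appid": "secret-key"},
        headers={"Authorization": "Bearer secret-token"},
    ))
```

A helper like this can be called at the top of a logging wrapper in place of interpolating the raw keyword arguments.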

13.7 Code Structure and Modularity

As your application grows, avoid embedding requests calls directly throughout your codebase. Instead, create dedicated API client modules or classes. This provides:

- Reusability: Define API methods once.

- Maintainability: If an API changes, you only update one place.

- Testability: Easier to mock and test API interactions.

# api_client.py
import requests

class MyApiClient:
    """Thin wrapper that keeps all HTTP details for one API in a single place."""

    def __init__(self, base_url, api_key=None, timeout=10):
        self.base_url = base_url.rstrip('/')
        self.timeout = timeout
        # A Session reuses connections and sends the shared headers on every call.
        self.session = requests.Session()
        if api_key:
            self.session.headers.update({'Authorization': f'Bearer {api_key}'})
        self.session.headers.update({'Accept': 'application/json', 'User-Agent': 'MyCustomPythonClient/1.0'})

    def _get(self, path, params=None):
        url = f"{self.base_url}{path}"
        response = self.session.get(url, params=params, timeout=self.timeout)
        response.raise_for_status()
        return response.json()

    def get_user(self, user_id):
        return self._get(f"/users/{user_id}")

    def create_post(self, title, body, user_id):
        url = f"{self.base_url}/posts"
        data = {'title': title, 'body': body, 'userId': user_id}
        response = self.session.post(url, json=data, timeout=self.timeout)
        response.raise_for_status()
        return response.json()

# main.py
# from api_client import MyApiClient
# client = MyApiClient("https://jsonplaceholder.typicode.com")
# user = client.get_user(1)
# print(f"Fetched user: {user['name']}")
# new_post = client.create_post("Hello from client!", "This is my first post.", 1)
# print(f"Created post with ID: {new_post['id']}")

This modular approach is crucial for building scalable and maintainable applications that interact with multiple APIs, especially when dealing with a complex Open Platform ecosystem.
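To illustrate the testability point, here is a minimal sketch of exercising a client without touching the network. It assumes a slimmed-down variant of the client that accepts its session as a constructor argument (PostsClient, its session parameter, and make_fake_session are hypothetical names introduced only for this example) and swaps in a unittest.mock.Mock where a requests.Session would normally go.

```python
from unittest.mock import Mock


class PostsClient:
    """Hypothetical client that receives its session instead of creating one."""

    def __init__(self, base_url, session):
        self.base_url = base_url.rstrip("/")
        self.session = session  # in production this would be a requests.Session()

    def get_user(self, user_id):
        response = self.session.get(f"{self.base_url}/users/{user_id}", timeout=10)
        response.raise_for_status()
        return response.json()


def make_fake_session(payload):
    """Build a stand-in session whose get() returns a canned JSON payload."""
    fake_response = Mock()
    fake_response.json.return_value = payload
    fake_response.raise_for_status.return_value = None
    fake_session = Mock()
    fake_session.get.return_value = fake_response
    return fake_session


def test_get_user_builds_url_and_returns_json():
    session = make_fake_session({"id": 1, "name": "Test User"})
    client = PostsClient("https://api.example.com/", session)

    user = client.get_user(1)

    # The client should return the parsed JSON and call the expected URL once.
    assert user == {"id": 1, "name": "Test User"}
    session.get.assert_called_once_with("https://api.example.com/users/1", timeout=10)


if __name__ == "__main__":
    test_get_user_builds_url_and_returns_json()
    print("ok")
```

Because every HTTP call goes through one object, replacing that object is enough to test the URL-building and response-handling logic in isolation.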

13.8 Security Considerations

Always prioritize security when dealing with APIs and data queries:

- HTTPS Everywhere: Always use https:// URLs to encrypt traffic. requests verifies SSL certificates by default; keep this enabled (verify=True).

- Secure Credential Storage: Never hardcode API keys, tokens, or passwords. Use environment variables, secure configuration files, or secret management services.

- Input Validation: If your application accepts user input that is then used in API calls, validate and sanitize it to prevent injection attacks or malformed requests.

- Least Privilege: Configure API keys/tokens with the minimum necessary permissions.

- Error Handling: Avoid exposing sensitive internal error details in public responses.

- Dependency Updates: Keep requests and its dependencies (like urllib3 and certifi) up to date to benefit from security patches.
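Two of these points lend themselves to a short sketch: reading a credential from the environment and validating user input before it goes into a query. The variable name MY_SERVICE_API_KEY, the helper names, and the allowed-character rule (ASCII letters only) are assumptions made for illustration; adapt them to what your API actually accepts.

```python
import os
import re

# Assumed rule: starts with an ASCII letter, then letters, spaces, and a few
# punctuation marks, up to 80 characters in total. Loosen for international names.
CITY_PATTERN = re.compile(r"[A-Za-z][A-Za-z .,'-]{0,79}")


def load_api_key(env_var="MY_SERVICE_API_KEY"):
    """Read an API key from the environment and fail loudly if it is missing."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Environment variable {env_var} is not set")
    return key


def clean_city_name(raw):
    """Trim and validate a user-supplied city name, raising ValueError if it looks wrong."""
    city = raw.strip()
    if not CITY_PATTERN.fullmatch(city):
        raise ValueError("City name contains unexpected characters or is too long")
    return city


if __name__ == "__main__":
    # For a local demo only: provide a dummy key if none is configured.
    os.environ.setdefault("MY_SERVICE_API_KEY", "demo-key-for-local-testing")
    params = {"q": clean_city_name("  New York "), "appid": load_api_key()}
    print(sorted(params))  # print only the parameter names, never the secret itself
```

Passing values through the params argument already URL-encodes them, so the validation here is an additional guard rather than the only line of defense.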

By adhering to these best practices, you can build robust, efficient, and secure Python applications that confidently query data from any web API or Open Platform.

XIV. Case Study: Interacting with a Public OpenAPI (e.g., OpenWeatherMap)

To solidify our understanding, let's walk through a practical example of interacting with a public API that adheres to OpenAPI principles. We'll use the OpenWeatherMap API to fetch current weather data for a city. (Note: You'll need to sign up for a free API key on their website, openweathermap.org, to run this example).

1. Sign Up and Get an API Key: Go to https://openweathermap.org/api and sign up for a free account. Once logged in, navigate to the "API keys" section to generate your personal API key. This key will be used to authenticate your requests.

2. Read the API Documentation: The documentation (often part of an OpenAPI specification) for OpenWeatherMap's Current Weather Data API (https://openweathermap.org/current) reveals:

- Endpoint: GET https://api.openweathermap.org/data/2.5/weather

- Required Parameters:

  - q: City name (e.g., London, New York)

  - appid: Your API key

- Optional Parameters:

  - units: metric, imperial, or standard (default)

  - lang: Language of the output

- Response Format: JSON

3. Construct the requests Call:

import os
import sys
import time

import requests

# Read the key from an environment variable so it never lands in source control.
# The placeholder fallback only exists to trigger the warning below.
OPENWEATHER_API_KEY = os.environ.get('OPENWEATHER_API_KEY', 'YOUR_OPENWEATHERMAP_API_KEY')

if OPENWEATHER_API_KEY == 'YOUR_OPENWEATHERMAP_API_KEY':
    print("WARNING: Set the OPENWEATHER_API_KEY environment variable to your actual OpenWeatherMap API key.")
    print("Sign up at openweathermap.org/api to get one.")
    sys.exit(1)

BASE_URL = "https://api.openweathermap.org/data/2.5/weather"
CITY_NAME = "London"  # Or any city you want
UNITS = "metric"  # 'metric' for Celsius, 'imperial' for Fahrenheit

def get_weather(city, api_key, units='standard'):
    """Return the parsed weather JSON for a city, or None if the request fails."""
    params = {
        'q': city,
        'appid': api_key,
        'units': units
    }
    try:
        response = requests.get(BASE_URL, params=params, timeout=10)
        response.raise_for_status()  # Raise an exception for HTTP errors
        return response.json()
    except requests.exceptions.HTTPError as e:
        # str(e) contains the full URL, API key included, so print only the status line.
        print(f"HTTP Error: {e.response.status_code} {e.response.reason}")
        try:
            details = e.response.json().get('message', 'No specific error message.')
        except ValueError:  # The error body was not valid JSON
            details = e.response.text[:200]
        print(f"Error details: {details}")
        return None
    except requests.exceptions.Timeout:
        # Checked before ConnectionError because a connect timeout is a subclass of both.
        print("Timeout Error: Request timed out.")
        return None
    except requests.exceptions.ConnectionError as e:
        # The exception text can include the query string, so report only its type.
        print(f"Connection Error: could not reach the API ({type(e).__name__})")
        return None
    except requests.exceptions.RequestException as e:
        print(f"An unexpected error occurred: {type(e).__name__}")
        return None

# Fetch weather data
weather_data = get_weather(CITY_NAME, OPENWEATHER_API_KEY, UNITS)

if weather_data:
    # The unit labels below assume UNITS = 'metric'.
    print(f"\n--- Current Weather in {CITY_NAME} ---")
    print(f"Description: {weather_data['weather'][0]['description'].capitalize()}")
    print(f"Temperature: {weather_data['main']['temp']} °C")
    print(f"Feels like: {weather_data['main']['feels_like']} °C")
    print(f"Humidity: {weather_data['main']['humidity']}%")
    print(f"Wind Speed: {weather_data['wind']['speed']} m/s")
    print(f"Cloudiness: {weather_data['clouds']['all']}%")
    print(f"Pressure: {weather_data['main']['pressure']} hPa")
    # sunrise/sunset are Unix timestamps in UTC; adding the 'timezone' offset (in seconds)
    # yields the city's local clock time, so the result is labeled as local, not UTC.
    sunrise = time.strftime('%H:%M:%S', time.gmtime(weather_data['sys']['sunrise'] + weather_data['timezone']))
    sunset = time.strftime('%H:%M:%S', time.gmtime(weather_data['sys']['sunset'] + weather_data['timezone']))
    print(f"Sunrise: {sunrise} (local time)")
    print(f"Sunset: {sunset} (local time)")
else:
    print(f"Could not retrieve weather data for {CITY_NAME}.")

# Example of an invalid city (to demonstrate error handling)
print("\n--- Testing with an invalid city ---")
invalid_city_data = get_weather("NonExistentCity12345", OPENWEATHER_API_KEY)

This case study demonstrates how to leverage requests to interact with a real-world API, highlighting parameter usage, JSON parsing, and robust error handling. By understanding the OpenAPI documentation, we could accurately construct our data queries and anticipate the response structure. This is a practical application of all the concepts we've discussed, bridging the gap between theoretical knowledge and real-world Open Platform interaction.
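To see exactly how a params dictionary like the one in the case study turns into a query string, one option is to build the request without sending it. The sketch below uses requests.Request and its prepare() method; preview_url is a hypothetical helper name and DEMO_KEY is a placeholder, not a working key.

```python
import requests

BASE_URL = "https://api.openweathermap.org/data/2.5/weather"


def preview_url(city, api_key, units="metric"):
    """Return the final URL requests would call, without making a network request."""
    request = requests.Request(
        "GET",
        BASE_URL,
        params={"q": city, "appid": api_key, "units": units},
    )
    # prepare() applies the same URL encoding that requests.get() would use.
    return request.prepare().url


if __name__ == "__main__":
    # DEMO_KEY is a placeholder; nothing is sent over the network here.
    print(preview_url("New York", "DEMO_KEY"))
```

This is handy while developing, but note that the resulting URL embeds the API key, so it should not be written to logs with a real credential.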

XV. Conclusion: Empowering Your Python Applications with Web Connectivity

The journey through the requests module reveals it to be far more than just a simple HTTP client; it is an indispensable tool that empowers Python developers to seamlessly interact with the vast and dynamic world of web services. From the fundamental act of retrieving data with GET to the complex operations of sending structured information with POST, PUT, and PATCH, requests consistently delivers a clean, intuitive, and highly Pythonic interface. Its elegant design abstracts away the intricate details of HTTP, allowing developers to focus on the logic of their applications rather than wrestling with low-level network protocols.

We've explored its core functionalities, such as managing query parameters, handling diverse response formats like JSON, XML, and CSV, and dealing with various authentication schemes. Beyond the basics, we delved into advanced features like Session objects for efficient, stateful interactions, the need for error handling and retry mechanisms to build resilient applications, and secure practices concerning proxies and SSL/TLS verification. Understanding API rate limits, pagination strategies, and OpenAPI specifications is equally important for streamlining API consumption. We've also seen how an API management platform like APIPark complements requests by providing a framework for governing and optimizing API interactions at scale, especially in complex environments involving numerous AI models and Open Platform integrations.