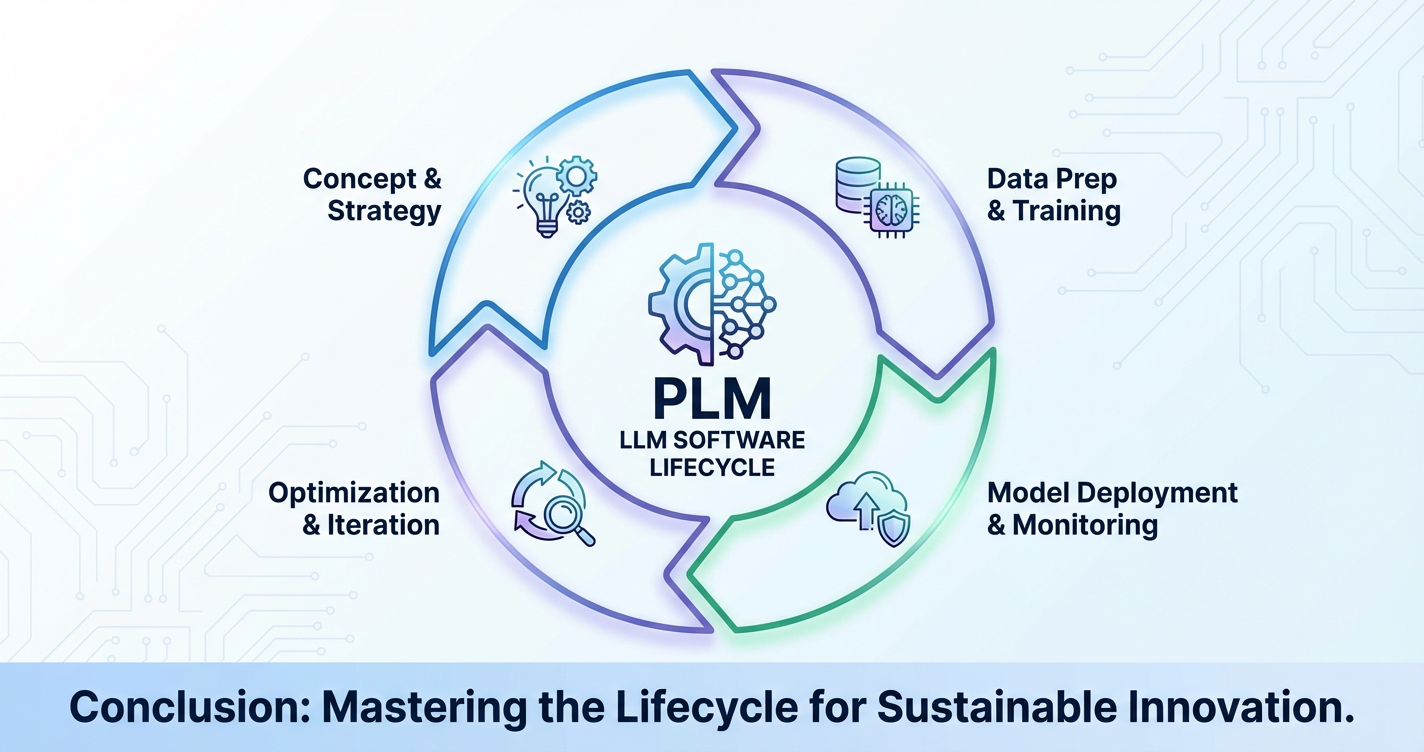

Product Lifecycle Management for LLM Software Development

Large Language Models (LLMs) have changed how software is built, bringing strong capabilities in natural language understanding, generation, and multi-step reasoning. From chatbots and content creation platforms to code assistants and data analysis tools, LLM-powered products are spreading quickly. However, integrating these powerful, often black-box models into production-grade software introduces challenges that traditional software development methodologies are not fully equipped to handle. The non-deterministic behavior of LLMs, their reliance on vast datasets, the complexities of prompt engineering, and the ethical considerations surrounding their deployment call for a robust and adaptable framework. This is where Product Lifecycle Management (PLM) becomes critical to the successful and sustainable development of LLM-powered software.

Product Lifecycle Management, traditionally applied to physical goods and conventional software, provides a structured approach to managing a product from its initial ideation through design, development, testing, deployment, maintenance, and eventual decommissioning. For LLM software, PLM must be extended to cover the specifics of artificial intelligence, machine learning operations (MLOps), and the continuous change inherent in models that are retrained, fine-tuned, or replaced over time. This framework ensures that LLM products are not only technically sound and performant but also align with user needs, adhere to ethical guidelines, remain cost-effective, and evolve effectively over their lifespan. Without a disciplined PLM approach, LLM projects risk spiraling into unmanageable complexity, failing to deliver value, or even causing unintended harm. This article examines each phase of PLM as it applies to LLM software development and highlights the role of architectural components such as LLM Gateways, AI Gateways, and API Gateways in streamlining this journey.

Phase 1: Ideation and Research – Laying the Foundational Stone

The journey of any successful product begins with a clear understanding of the problem it aims to solve and the market it intends to serve. For LLM software, this initial phase is particularly critical, as the technology, while powerful, is not a panacea, and its application must be purposeful and well-researched. This stage is about transforming a nascent idea into a viable concept, grounded in reality and foresight.

Market Research & Opportunity Identification

The first step involves a deep dive into the market to identify genuine pain points and opportunities where LLMs can offer unique or significantly enhanced solutions. This isn't merely about finding tasks LLMs can do, but rather tasks where they can deliver substantial value that existing solutions cannot. For instance, a basic chatbot can answer FAQs, but an LLM-powered conversational AI might understand nuanced user intent, personalize interactions, and even complete complex multi-step tasks, thereby revolutionizing customer service or technical support. This research involves:

- User Needs Analysis: Engaging with potential end-users through surveys, interviews, and focus groups to understand their current challenges, workflows, and aspirations. What repetitive tasks could be automated? What information is difficult to access or synthesize? What creative barriers could be overcome?

- Problem Validation: Ensuring the identified problem is significant enough to warrant an LLM solution. Is the problem widespread? Does it have a clear business impact? Is there a tangible benefit for users or organizations?

- Competitive Landscape Analysis: Examining existing solutions, both traditional and AI-driven. What are their strengths and weaknesses? Where are the gaps? How can an LLM-based product differentiate itself? This might involve analyzing existing AI assistants, content generation tools, or data analysis platforms to identify areas for improvement or entirely new service offerings.

- Emerging Trends: Keeping abreast of the latest advancements in LLM technology, research breakthroughs, and novel applications. The field is moving rapidly, and understanding the trajectory of LLMs (for example, larger context windows, stronger reasoning, multimodal inputs, or lower cost per token) can reveal new opportunities.

Thorough market research in this phase acts as a filter, preventing the investment of resources into solutions for non-existent problems or into areas where LLMs offer no discernible advantage over simpler, cheaper alternatives.

Technological Feasibility & Resource Assessment

Once a promising opportunity is identified, the next hurdle is to assess the technological viability and the resources required to bring the concept to life. LLM development is resource-intensive and requires a specific skill set.

- LLM Selection: This involves evaluating which specific LLM is best suited for the task. Choices range from proprietary models like OpenAI's GPT series or Google's Gemini, to open-source alternatives like Llama, Mistral, or Falcon. Factors influencing this decision include model size, performance on specific benchmarks (e.g., reasoning, coding, creativity), cost per token, latency, fine-tuning capabilities, and API availability. Some applications might require a highly specialized, fine-tuned model, while others can leverage general-purpose models with sophisticated prompt engineering.

- Data Requirements: LLMs thrive on data, and the success of an application often hinges on the quality and quantity of data available for training, fine-tuning, or retrieval-augmented generation (RAG). This assessment involves:

- Data Sourcing: Identifying where the necessary data resides (internal databases, public datasets, web scraping).

- Data Quality: Evaluating the cleanliness, accuracy, and relevance of the data. Poor quality data will inevitably lead to poor model performance.

- Data Volume: Estimating the amount of data needed for effective training or contextualization.

- Data Privacy & Security: Crucially, assessing the sensitivity of the data and ensuring compliance with regulations like GDPR, CCPA, or HIPAA. This impacts data handling, storage, and anonymization strategies.

- Computational Resources: LLM inference and especially fine-tuning demand significant computational power, typically GPUs. This assessment includes:

- Infrastructure Costs: Estimating cloud computing costs (e.g., AWS, Azure, GCP) for model hosting, inference, and potential fine-tuning.

- Scalability Needs: Projecting anticipated user load and ensuring the infrastructure can scale horizontally and vertically to meet demand without prohibitive costs or performance degradation.

- On-premise vs. Cloud: Deciding whether to deploy models in a private data center or leverage public cloud services, considering factors like security, cost, latency, and expertise.

- Talent Assessment: Building LLM software requires a diverse team:

- Machine Learning Engineers: To handle model deployment, optimization, and MLOps.

- Data Scientists: For data analysis, feature engineering, and model evaluation.

- Prompt Engineers: A new and increasingly vital role focused on crafting effective prompts, refining model behavior, and maximizing output quality without fine-tuning.

- Software Engineers: To build the surrounding application logic, user interfaces, and integrations.

- Domain Experts: To validate model outputs and guide development from a subject matter perspective.

- UX/UI Designers: To ensure intuitive and effective user interactions with the LLM.

This resource assessment provides a realistic picture of the investment required and helps determine if the project is feasible within allocated budgets and timelines.
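
The infrastructure cost estimate described above can start as simple arithmetic over expected traffic and token counts. The sketch below is illustrative only: `ModelPricing`, `monthly_inference_cost`, the prices, and the workload figures are all hypothetical placeholders, not real vendor rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPricing:
    """Hypothetical prices in USD per 1,000 tokens (not real vendor rates)."""
    input_per_1k: float
    output_per_1k: float


def monthly_inference_cost(requests_per_day: int,
                           avg_input_tokens: int,
                           avg_output_tokens: int,
                           pricing: ModelPricing,
                           days: int = 30) -> float:
    """Estimate monthly spend from average token counts per request."""
    cost_per_request = (
        avg_input_tokens / 1000 * pricing.input_per_1k
        + avg_output_tokens / 1000 * pricing.output_per_1k
    )
    return requests_per_day * days * cost_per_request


# Assumed workload: 10,000 requests/day, 800 input and 200 output tokens each.
example_pricing = ModelPricing(input_per_1k=0.50, output_per_1k=1.50)
estimate = monthly_inference_cost(10_000, 800, 200, example_pricing)
print(f"Estimated monthly inference cost: ${estimate:,.2f}")
```

Running the same function against several candidate models and traffic projections gives a rough first comparison before any infrastructure is provisioned. It ignores fine-tuning, storage, and self-hosted GPU costs, which would need their own line items.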

Ethical Considerations & Risk Assessment

Perhaps the most distinguishing aspect of PLM for LLM software is the paramount importance of ethical considerations and rigorous risk assessment from the outset. Unlike traditional software, LLMs can exhibit emergent behaviors, biases, and generate content that is factually incorrect, harmful, or even discriminatory. Ignoring these aspects at the ideation stage can lead to catastrophic consequences down the line.

- Bias Detection and Mitigation: LLMs learn from vast datasets, which often reflect societal biases present in the training data. This can lead to models generating biased or unfair outputs based on gender, race, socioeconomic status, or other attributes.

- Identification: Proactive assessment of potential biases in data sources and anticipated model behavior.

- Mitigation Strategies: Exploring techniques like data re-weighting, adversarial training, or controlled generation to reduce bias.

- Fairness Objectives: Defining what "fairness" means for the specific application and establishing metrics to measure it.

- Transparency and Explainability: Users often need to understand why an LLM produced a particular output, especially in critical applications (e.g., healthcare, finance).

- Design for Interpretability: While LLMs are inherently opaque, architectural decisions can be made to provide more context or evidence for generated responses (e.g., through RAG where sources are cited).

- Clear Disclosures: Informing users that they are interacting with an AI and setting appropriate expectations about its capabilities and limitations.

- Data Privacy and Security Implications: LLMs, particularly when fine-tuned on sensitive data or when used in conversational interfaces, pose significant privacy risks.

- Data Minimization: Collecting and processing only the data absolutely necessary.

- Anonymization/Pseudonymization: Implementing robust techniques to protect personally identifiable information (PII).

- Access Controls: Ensuring strict controls over who can access the training data, model parameters, and inference outputs.

- Security by Design: Building security features from the ground up, including secure API endpoints and encryption.

- Potential for Misuse or Harmful Applications: LLMs can be used to generate misinformation, deepfakes, or facilitate malicious activities like phishing or social engineering.

- Red Teaming: Proactively testing the model for vulnerabilities to malicious prompts or attempts to circumvent safety guardrails.

- Safety Filters: Implementing content moderation systems to detect and block harmful outputs.

- Responsible Use Policies: Establishing clear guidelines for how the product can and cannot be used.

- Regulatory Compliance: The regulatory landscape for AI is rapidly evolving (e.g., EU AI Act, various data privacy laws).

- Legal Review: Consulting legal experts to ensure the product complies with all relevant regulations regarding AI, data privacy, and industry-specific standards.

- Audit Trails: Designing the system to log relevant interactions and decisions to support audits and demonstrate compliance.

This comprehensive initial phase sets a strong foundation, not just for technical execution, but for building LLM software that is responsible, ethical, and aligned with societal values, minimizing costly rectifications later in the lifecycle.
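
To make the pseudonymization and audit-trail points concrete, here is a minimal sketch that redacts obvious PII from a prompt and replaces the user ID with a keyed hash before logging. The regular expressions are deliberately simple and will miss many real-world formats, and `redact_pii`, `pseudonymize`, and `audit_record` are hypothetical helper names; a production system would likely rely on a dedicated PII detection tool and managed secrets.

```python
import hashlib
import hmac
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def pseudonymize(user_id: str, secret: bytes) -> str:
    """Derive a stable pseudonym with HMAC-SHA256 so logs never hold raw IDs."""
    digest = hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def audit_record(user_id: str, prompt: str, secret: bytes) -> dict:
    """Build a log entry that is safe to retain for audits."""
    return {"user": pseudonymize(user_id, secret), "prompt": redact_pii(prompt)}


record = audit_record(
    "user-42",
    "Email jane.doe@example.com or call +1 (555) 010-9999 today.",
    b"demo-secret",  # assumption: a real key would come from a secrets manager
)
print(record)
```

Using a keyed hash rather than a plain hash means the pseudonym cannot be reproduced by anyone who lacks the secret, while the same user still maps to the same value across log entries.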

Phase 2: Design and Development – Building the Brains and the Body

With a validated concept and a clear understanding of the technological and ethical landscape, the PLM process moves into the design and development phase. This is where the abstract ideas are translated into concrete architectures, data strategies, and functional code. For LLM software, this involves a complex interplay between model selection, prompt engineering, data pipeline construction, and traditional software engineering, all orchestrated to create a cohesive and performant application.

System Architecture Design

The architectural choices made at this stage dictate the scalability, maintainability, and security of the LLM application. It’s about more than just picking an LLM; it’s about how that LLM integrates into a broader ecosystem.

- Choosing the Right LLM Interaction Strategy: This is a pivotal decision.

- Prompt Engineering: Leveraging powerful general-purpose LLMs directly via carefully crafted prompts. This approach is often quicker to implement and less resource-intensive than fine-tuning but requires expert prompt design to achieve desired behaviors.

- Fine-tuning: Adapting a pre-trained LLM to a specific task or dataset by further training it on a smaller, domain-specific dataset. This can significantly improve performance and reduce "hallucinations" for niche applications but is more costly and complex.

- Retrieval-Augmented Generation (RAG): Combining an LLM with a retrieval system that fetches relevant information from a knowledge base before generating a response. RAG is excellent for reducing factual errors, providing up-to-date information, and grounding responses in verifiable sources, making it a popular choice for enterprise applications.

- Hybrid Approaches: Often, a combination of these strategies yields the best results, such as fine-tuning for specific style and tone, then applying RAG for factual accuracy, and finally using prompt engineering for dynamic task execution.

- Designing the Interaction Layer (APIs, UIs): How will users and other systems interact with the LLM?

- API Design: For programmatic access, robust RESTful APIs or gRPC services are essential. These APIs must be well-documented, versioned, and secure. They abstract the underlying LLM complexity, allowing developers to integrate LLM capabilities into various applications without needing deep AI expertise.

- User Interfaces: For direct user interaction, designing intuitive and effective UIs is crucial. This includes conversational interfaces, input forms for structured prompts, and displays for model outputs, often with mechanisms for user feedback or corrections.

- Integration with Existing Systems: LLM applications rarely operate in isolation. They need to connect with databases, CRM systems, enterprise resource planning (ERP) platforms, and other internal or external services.

- Microservices Architecture: A common approach where the LLM component is encapsulated as a distinct service, communicating with other services via well-defined APIs. This enhances modularity and scalability.

- Event-Driven Architecture: For asynchronous communication and complex workflows, an event-driven design can be beneficial, allowing different services to react to LLM outputs or inputs.

- The Role of an API Gateway in LLM Integrations: As LLM-powered services mature, they often expose their capabilities through various APIs. A robust API Gateway becomes indispensable here. It acts as a single entry point for all API calls, handling routing, load balancing, authentication, and authorization. For LLM services, this means:

- Unified Access: Providing a consistent endpoint for different LLM models or different versions of the same model, abstracting away their distinct API specifications.

- Security: Enforcing authentication schemes (API keys, OAuth, JWT) and authorization policies to protect LLM endpoints from unauthorized access.

- Traffic Management: Implementing rate limiting to prevent abuse, applying quotas to manage consumption, and load balancing across multiple LLM inference instances for high availability and performance.

- Monitoring & Analytics: Centralizing logging of API calls, performance metrics, and usage patterns, which is crucial for cost tracking and operational insights.

- Version Control: Facilitating seamless updates and deprecation of LLM APIs without breaking client applications. This is especially vital in the rapidly evolving LLM landscape.

- In complex environments, a product such as APIPark can serve as a comprehensive API management platform that consolidates these gateway functions. It offers end-to-end API lifecycle management, covering design, publication, invocation, and decommissioning, so that LLM-powered services are managed efficiently and securely.

- Considering an AI Gateway for Specialized LLM/AI Service Management: While an API Gateway handles generic API traffic, an AI Gateway (which often encompasses the functionality of an LLM Gateway) is specifically tailored for AI services. It can provide:

- Model Abstraction: Presenting a unified API interface regardless of the underlying AI model (e.g., GPT-4, Llama 3, custom fine-tuned model).

- Prompt Templating and Management: Storing, versioning, and managing prompt templates centrally, allowing developers to change prompts without altering application code.

- Response Caching: Caching common LLM responses to reduce latency and API costs.

- Cost Optimization: Intelligent routing to the cheapest available model that meets performance requirements, or dynamic switching between models based on query complexity.

- Fallback Mechanisms: Automatically switching to a backup model if the primary model fails or becomes unavailable.

- Unified Authentication & Cost Tracking: Applying a single authentication scheme and a single cost-tracking view across diverse AI models, as offered by solutions like APIPark, which directly addresses the complexity of managing a portfolio of AI services.
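
Response caching and fallback, two of the AI Gateway capabilities listed above, can be sketched in a few lines. This is a toy in-process illustration, not how any particular gateway product is implemented: `AIGateway`, `flaky_primary`, and `backup` are hypothetical names, and the backends are plain functions standing in for real model clients.

```python
from typing import Callable, Dict, List, Tuple

ModelFn = Callable[[str], str]


class AIGateway:
    """Tries backends in preference order and caches successful responses."""

    def __init__(self, backends: List[Tuple[str, ModelFn]]) -> None:
        self._backends = backends           # ordered: primary first
        self._cache: Dict[str, str] = {}    # exact-match prompt cache
        self.calls: List[str] = []          # backend names invoked, for cost tracking

    def complete(self, prompt: str) -> str:
        if prompt in self._cache:
            return self._cache[prompt]
        errors = []
        for name, backend in self._backends:
            self.calls.append(name)
            try:
                result = backend(prompt)
            except Exception as exc:        # fall through to the next backend
                errors.append(f"{name}: {exc}")
                continue
            self._cache[prompt] = result
            return result
        raise RuntimeError("all backends failed: " + "; ".join(errors))


def flaky_primary(prompt: str) -> str:
    # Stand-in for a primary model that is currently unavailable.
    raise TimeoutError("primary model unavailable")


def backup(prompt: str) -> str:
    # Stand-in for a cheaper or self-hosted fallback model.
    return f"backup:{prompt}"


gateway = AIGateway([("primary", flaky_primary), ("backup", backup)])
first = gateway.complete("hello")    # primary fails, backup answers
second = gateway.complete("hello")   # served from cache, no backend call
print(first, second, gateway.calls)
```

An exact-match cache only helps with repeated identical prompts. A real gateway would also need cache expiry and some care around caching responses that contain user-specific data.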

Data Strategy and Pipeline

Data is the lifeblood of LLMs. A robust data strategy and pipeline are essential for providing the models with the quality information they need, whether for training, fine-tuning, or real-time RAG.

- Data Collection, Cleaning, and Labeling:

- Collection: Establishing automated or manual processes for gathering relevant data from identified sources. This might involve web scraping, accessing internal data lakes, or acquiring licensed datasets.

- Cleaning: Raw data is invariably messy. Cleaning involves removing duplicates, correcting errors, handling missing values, standardizing formats, and filtering out irrelevant or low-quality content. For LLMs, it also includes removing personally identifiable information (PII) unless that information is explicitly required and consented to.

- Labeling: For fine-tuning, data often needs to be labeled by human annotators to provide the model with supervised examples of desired inputs and outputs. Quality control for labeling is paramount.

- Data Versioning and Governance: In an LLM development cycle, data changes frequently.

- Version Control for Data: Implementing systems (like DVC or custom data registries) to track different versions of datasets used for training or evaluation. This ensures reproducibility and helps debug model performance issues.

- Data Lineage: Documenting the origin, transformations, and usage of data throughout its lifecycle.

- Access Control and Audit Trails: Restricting access to sensitive data and maintaining logs of who accessed what data and when, crucial for security and compliance.

- Building Robust Data Pipelines for Training/Fine-tuning/RAG: Automated pipelines are necessary to transform raw data into a format suitable for LLM consumption.

- ETL/ELT Processes: Extracting data from sources, transforming it (cleaning, pre-processing, embedding generation for RAG), and loading it into a data store accessible by the LLM application.

- Orchestration Tools: Using tools like Apache Airflow, Prefect, or Kubeflow Pipelines to manage and schedule complex data workflows, ensuring data freshness and consistency.

- Scalability: Designing pipelines to handle large volumes of data efficiently and scale with growing data requirements.
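
The transform step of such a pipeline can be illustrated with a small, dependency-free sketch: normalize whitespace, drop duplicates, split documents into overlapping word chunks suitable for embedding in a RAG index, and derive a content hash that serves as a dataset version. All function names and the chunk sizes here are assumptions chosen for illustration; embedding generation and loading into a store are left out.

```python
import hashlib
import re
from typing import Iterable, List


def clean(text: str) -> str:
    """Collapse runs of whitespace and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()


def deduplicate(docs: Iterable[str]) -> List[str]:
    """Drop empty documents and case-insensitive exact duplicates."""
    seen = set()
    unique = []
    for doc in docs:
        key = doc.lower()
        if doc and key not in seen:
            seen.add(key)
            unique.append(doc)
    return unique


def chunk(text: str, size: int, overlap: int) -> List[str]:
    """Split text into word windows of `size` that share `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    last_start = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + size]) for i in range(0, last_start, step)]


def dataset_version(chunks: List[str]) -> str:
    """Content hash that changes whenever the prepared data changes."""
    digest = hashlib.sha256("\n".join(chunks).encode("utf-8"))
    return digest.hexdigest()[:12]


raw_docs = ["  Hello   world ", "hello world", "", "a b c d e"]
docs = deduplicate(clean(d) for d in raw_docs)
chunks = [c for d in docs for c in chunk(d, size=4, overlap=1)]
print(chunks, dataset_version(chunks))
```

Recording the version hash alongside each training run or index build is one lightweight way to tie model behavior back to the exact data that produced it.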

Prompt Engineering & Model Customization

This is where the art and science of interacting with LLMs truly manifest.

- Developing Effective Prompts: Prompt engineering is about crafting precise instructions and contextual information for an LLM to elicit the desired output. This often involves:

- Clear Instructions: Providing unambiguous directives for the task.

- Context: Supplying relevant background information to guide the LLM's response.

- Examples (Few-shot learning): Including input-output pairs to demonstrate the desired format and style.

- Constraints: Specifying limitations on length, tone, or content.

- Iterative Refinement: Prompt engineering is an iterative process, requiring constant testing and adjustment.

- Strategies for Fine-tuning or RAG Implementation:

- Fine-tuning: If fine-tuning is chosen, the process involves selecting appropriate base models, preparing high-quality labeled datasets, configuring training parameters, and evaluating the fine-tuned model's performance on specific tasks. This requires careful management of model versions and training data versions.

- RAG Implementation: Building a RAG system involves selecting an appropriate vector database, developing robust indexing strategies for the knowledge base, designing retrieval algorithms, and integrating the retrieved context seamlessly into the LLM's prompt.

- Version Control for Prompts and Model Configurations: Just like code, prompts and model configurations (e.g., hyperparameters for fine-tuning, RAG settings) must be version-controlled.

- Prompt Registries: Storing prompts in a centralized, versioned system allows teams to track changes, revert to previous versions, and A/B test different prompt strategies.

- Configuration Management: Maintaining versioned configurations for LLM parameters, API keys, and deployment settings ensures reproducibility and simplifies rollback procedures.
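
A prompt registry does not need to be elaborate to be useful. The following sketch keeps an append-only list of template versions per prompt name and renders either the latest or a pinned version; `PromptRegistry` and its methods are hypothetical, and the in-memory storage stands in for whatever database or Git-backed store a team would actually use.

```python
from string import Template
from typing import Dict, List, Optional


class PromptRegistry:
    """Append-only store of prompt templates, versioned per prompt name."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}

    def register(self, name: str, template: str) -> int:
        """Add a new version and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        return len(versions)

    def render(self, name: str, version: Optional[int] = None, **variables: object) -> str:
        """Fill in the latest template, or a pinned version if one is given."""
        versions = self._versions[name]
        if version is None:
            version = len(versions)
        if not 1 <= version <= len(versions):
            raise KeyError(f"{name} has no version {version}")
        # substitute() raises KeyError if a placeholder is left unfilled.
        return Template(versions[version - 1]).substitute(**variables)


registry = PromptRegistry()
v1 = registry.register("summarize", "Summarize the text: $text")
v2 = registry.register("summarize", "Summarize the text in $limit words: $text")

latest = registry.render("summarize", text="LLM lifecycle notes", limit=20)
pinned = registry.render("summarize", version=1, text="LLM lifecycle notes")
print(latest)
print(pinned)
```

Because old versions are never overwritten, rolling back is a matter of pinning an earlier version number, and an A/B test can render two versions side by side for the same input.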

Development Practices for LLM-based Applications

Integrating LLMs into software requires a blend of traditional software engineering best practices with specialized MLOps principles.

- Modular Design: Breaking down the application into independent, loosely coupled modules. This includes separating the LLM interaction logic, data retrieval components, UI, and backend services. This modularity makes the system easier to develop, test, and maintain.

- Test-Driven Development (TDD) for Non-LLM Components: While full TDD for LLM outputs is challenging because those outputs are probabilistic, TDD remains invaluable for the surrounding application logic. Ensuring traditional components are robust reduces the surface area for bugs when integrating with the LLM.

- Experimentation Frameworks: Given the inherent uncertainty and iterative nature of LLM development, setting up frameworks for rapid experimentation is crucial. This allows developers to quickly test different prompts, model versions, and architectural choices, measure their impact, and iterate efficiently.

- CI/CD for Code and Model Updates: Continuous Integration/Continuous Deployment pipelines are essential for automating the build, test, and deployment processes.

- Code CI/CD: Standard pipelines for application code, ensuring new features and bug fixes are integrated and deployed regularly.

- Model CI/CD (MLOps): Extending CI/CD to models involves automating model training, validation, packaging, and deployment. This ensures that new model versions, fine-tunes, or prompt updates can be deployed reliably and frequently.

- Leveraging an LLM Gateway to Abstract Underlying Model Complexities: An LLM Gateway (often a specific form of AI Gateway) is a powerful tool in this phase. It lets developers interact with the LLM as a service, abstracting away the specifics of different models, their APIs, and even their deployment locations. Developers can therefore switch between LLMs (e.g., from GPT-3.5 to GPT-4, or to an open-source alternative like Llama) with minimal code changes, which facilitates experimentation and protects the application against model obsolescence or changes in pricing and availability. By standardizing the request data format across all AI models, the gateway keeps changes in models or prompts from affecting the application or its microservices, simplifying AI usage and reducing maintenance costs.
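
The idea of a standardized request format can be shown with a small adapter layer. In this sketch the application only ever builds a `ChatRequest`; per-model adapter functions translate it into the payload each backend expects. The two payload shapes and the model names `vendor-a-chat` and `local-model` are invented for illustration and do not reproduce any real provider's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class ChatRequest:
    """The single request shape the application code depends on."""
    system: str
    user: str
    max_tokens: int = 256


def to_messages_payload(req: ChatRequest, model: str) -> dict:
    # Hypothetical chat-style backend that takes a list of role-tagged messages.
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": req.system},
            {"role": "user", "content": req.user},
        ],
        "max_tokens": req.max_tokens,
    }


def to_single_prompt_payload(req: ChatRequest, model: str) -> dict:
    # Hypothetical completion-style backend that takes one flat prompt string.
    return {
        "model": model,
        "prompt": f"{req.system}\n\n{req.user}",
        "max_new_tokens": req.max_tokens,
    }


ADAPTERS: Dict[str, Callable[[ChatRequest, str], dict]] = {
    "vendor-a-chat": to_messages_payload,
    "local-model": to_single_prompt_payload,
}


def build_payload(req: ChatRequest, model: str) -> dict:
    """Translate the unified request into the shape the chosen model expects."""
    return ADAPTERS[model](req, model)


request = ChatRequest(system="You are concise.", user="Define PLM.")
chat_payload = build_payload(request, "vendor-a-chat")
flat_payload = build_payload(request, "local-model")
print(chat_payload)
print(flat_payload)
```

Switching models then means changing one string and, at most, adding one adapter, while the calling code stays untouched.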

This development phase is characterized by intense iteration, detailed architectural planning, and a strong emphasis on integrating cutting-edge AI capabilities with robust, scalable software engineering practices. The strategic use of gateways acts as a unifying layer, simplifying the complexities of disparate AI models and services.

Phase 3: Testing and Validation – Ensuring Quality and Reliability

The testing and validation phase is paramount for any software product, but it takes on a multifaceted and often more challenging dimension for LLM-powered applications. Due to the non-deterministic nature of LLMs, their potential for generating incorrect or biased outputs, and the sheer complexity of evaluating natural language, traditional testing methods must be augmented with specialized techniques. This phase ensures the LLM software not only performs as expected but also meets quality, safety, and ethical standards.

Unit and Integration Testing

Before delving into LLM-specific evaluations, the conventional software components of the application must be rigorously tested.

- Traditional Software Testing for Application Components: This includes:

- Unit Tests: Verifying that individual functions, classes, and modules of the application (e.g., data parsers, UI components, backend logic) work correctly in isolation.

- Integration Tests: Ensuring that different components of the application interact correctly with each other (e.g., the front-end communicating with the back-end, database interactions).

- API Testing: Validating the functionality, reliability, performance, and security of the APIs exposed by the LLM application. This involves checking request/response schemas, error handling, and authentication mechanisms, particularly for APIs managed by an API Gateway.

- Testing API Integrations: When an LLM application relies on external services or exposes its own functionalities via APIs, these integrations must be thoroughly tested.

- Gateway Functionality: If using an API Gateway, specific tests are needed to verify that routing rules are correct, security policies (authentication, authorization) are enforced, rate limits are applied, and traffic management functions work as intended.

- Error Handling: Testing how the application gracefully handles errors or unexpected responses from external APIs, including the LLM provider's API.

- Latency and Throughput: Measuring the performance of API calls to ensure they meet specified latency and throughput requirements.
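
Verifying that rate limits are applied is easier when the limiter's notion of time can be controlled by the test. The sketch below uses a token bucket, one common rate-limiting algorithm (not necessarily the one a given gateway uses), with the current time passed in explicitly so that checks are deterministic. `TokenBucket` and its parameters are hypothetical.

```python
class TokenBucket:
    """Token-bucket limiter with an explicit clock for deterministic tests."""

    def __init__(self, capacity: int, refill_per_second: float, now: float = 0.0) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)   # start with a full bucket
        self.last = now

    def allow(self, now: float) -> bool:
        """Return True and spend a token if the request is within the limit."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last = max(self.last, now)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Burst of four requests at t=0 against a bucket of three tokens.
bucket = TokenBucket(capacity=3, refill_per_second=1.0)
burst = [bucket.allow(now=0.0) for _ in range(4)]

# One second later a single token has been refilled.
after_refill = bucket.allow(now=1.0)
still_limited = bucket.allow(now=1.0)
print(burst, after_refill, still_limited)
```

The same pattern applies when testing a real gateway: send a burst that exceeds the configured limit, assert that the excess requests are rejected, then wait for the refill window and assert that traffic is accepted again.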

LLM-Specific Testing

This is where the unique challenges of LLM software become apparent. Evaluation must be comprehensive, covering functional correctness, performance, robustness, and crucially, ethical aspects.

- Functional Testing: Does the LLM provide relevant, accurate, and coherent responses for the intended use cases?

- Ground Truth Evaluation: For tasks with definitive correct answers (e.g., summarization of known facts, simple question answering), comparing LLM outputs against human-generated or expert-validated "ground truth" responses.

- Relevance and Coherence: For more open-ended generation tasks (e.g., creative writing, dialogue), human evaluators assess the relevance, coherence, fluency, and quality of the LLM's output.

- Hallucination Detection: Rigorous testing to identify instances where the LLM generates factually incorrect or nonsensical information, particularly important for RAG-based systems where grounding in retrieved facts is expected.

- Instruction Following: Verifying that the LLM adheres to complex instructions within prompts, including constraints on format, length, style, and content.

- Performance Testing: How well does the LLM application perform under various loads and conditions?

- Latency: Measuring the time it takes for the LLM to process a request and generate a response. This is critical for real-time applications.

- Throughput: Assessing the number of requests the system can handle per unit of time.

- Scalability: Testing the application's ability to maintain performance as user load increases, often involving load balancers and auto-scaling groups, with an API Gateway managing the distribution of requests efficiently.

- Resource Utilization: Monitoring CPU, GPU, memory, and network usage to identify bottlenecks and optimize infrastructure.

- Robustness Testing: How resilient is the LLM to unexpected or challenging inputs?

- Edge Cases and Out-of-Domain Inputs: Testing the LLM with unusual queries, malformed inputs, or topics outside its expected knowledge domain to observe its behavior and ensure it doesn't crash or generate nonsensical responses.

- Adversarial Prompts (Prompt Injection): Actively attempting to "jailbreak" the LLM or manipulate its behavior through cleverly crafted prompts that bypass safety mechanisms or elicit unintended responses. This requires a dedicated "red teaming" effort.

- Input Variations: Testing with different phrasing, synonyms, and grammatical structures to ensure consistent performance.

- Bias and Fairness Testing: This is a crucial ethical consideration that must be systematically evaluated.

- Demographic Parity: Testing if the LLM's outputs exhibit different levels of quality or bias when presented with inputs associated with different demographic groups (e.g., gender, race, age).

- Stereotype Amplification: Detecting if the LLM reinforces or amplifies harmful stereotypes present in its training data.

- Attribute Probing: Systematically querying the LLM with inputs designed to reveal hidden biases or preferences based on sensitive attributes.

- Mitigation Effectiveness: Testing the efficacy of any bias mitigation techniques implemented during development.

- Safety and Security Testing: Beyond robustness to adversarial prompts, this includes broader security concerns.

- Data Leakage: Testing if the LLM inadvertently reveals sensitive information from its training data or previous conversations.

- Toxic Output Generation: Ensuring the LLM does not generate hateful, violent, or otherwise harmful content.

- Compliance with Safety Guidelines: Verifying adherence to internal safety policies and external regulatory requirements.
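
Several of these checks can be automated with a small evaluation harness. The sketch below covers three of them under simplifying assumptions: ground-truth scoring via normalized exact match, an instruction-following check for a word limit, and a nearest-rank latency percentile. `stub_model` returns canned answers in place of a real LLM call, the latency samples are made-up numbers, and exact match is only appropriate for short factual answers.

```python
import math
import string
from typing import Callable, Sequence, Tuple


def normalize(text: str) -> str:
    """Lowercase, strip ASCII punctuation, and collapse whitespace."""
    table = str.maketrans("", "", string.punctuation)
    return " ".join(text.lower().translate(table).split())


def exact_match_rate(model: Callable[[str], str],
                     cases: Sequence[Tuple[str, str]]) -> float:
    """Fraction of cases whose normalized output equals the ground truth."""
    if not cases:
        raise ValueError("at least one test case is required")
    hits = sum(normalize(model(q)) == normalize(expected) for q, expected in cases)
    return hits / len(cases)


def within_word_limit(output: str, max_words: int) -> bool:
    """Instruction-following check for a length constraint."""
    return len(output.split()) <= max_words


def percentile(values: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile, e.g. pct=95 for p95 latency."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Canned answers standing in for a real model; one is deliberately wrong.
CANNED = {"Capital of France?": "Paris.", "2 + 2?": "4", "Largest planet?": "Saturn"}


def stub_model(question: str) -> str:
    return CANNED[question]


cases = [("Capital of France?", "paris"), ("2 + 2?", "4"), ("Largest planet?", "Jupiter")]
accuracy = exact_match_rate(stub_model, cases)
p95_ms = percentile([120, 80, 200, 95, 110], 95)   # hypothetical latencies in ms
print(f"exact match: {accuracy:.2f}, p95 latency: {p95_ms} ms")
```

Open-ended generation, bias probing, and red teaming still need human review or more sophisticated scoring. A harness like this mainly guards against regressions on the cases that can be checked mechanically.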

User Acceptance Testing (UAT)

The final stage of validation involves real users interacting with the LLM product in a simulated or actual operational environment.

- Real-world Scenario Testing: Users perform typical tasks they would accomplish with the LLM application. This goes beyond technical validation, focusing on usability, user experience, and whether the product truly solves their problems.

- Gathering Feedback for Iterative Improvements: Collecting qualitative and quantitative feedback from UAT participants. This includes:

- Direct Feedback: Surveys, interviews, and bug reports.

- Usage Data: Tracking how users interact with the LLM, which features they use most, and where they encounter difficulties.

- Human-in-the-Loop Feedback: Implementing mechanisms for users to rate LLM responses or provide corrections, which can then be used to fine-tune the model or refine prompts.

UAT is vital because LLMs often behave differently in real-world, complex scenarios than they do in isolated technical tests. It bridges the gap between technical validation and actual product utility, providing invaluable insights for further refinement before general release. This entire testing phase requires meticulous planning, a combination of automated and human-centric evaluation, and a commitment to continuous improvement, ensuring that the LLM software is not only functional but also safe, fair, and truly valuable to its users.
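
Human-in-the-loop ratings become actionable once they are aggregated against the prompt or model version that produced each response. The sketch below assumes a minimal feedback record with a version label and a thumbs-up flag; `Feedback` and `approval_by_version` are hypothetical names, and the sample data is invented.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Feedback:
    prompt_version: str   # which prompt or model version produced the response
    helpful: bool         # the user's thumbs-up / thumbs-down rating


def approval_by_version(feedback: Iterable[Feedback]) -> Dict[str, float]:
    """Share of responses rated helpful, grouped by prompt version."""
    counts = defaultdict(lambda: [0, 0])   # version -> [helpful, total]
    for item in feedback:
        counts[item.prompt_version][0] += int(item.helpful)
        counts[item.prompt_version][1] += 1
    return {version: helpful / total for version, (helpful, total) in counts.items()}


uat_feedback = [
    Feedback("v1", True), Feedback("v1", False),
    Feedback("v2", True), Feedback("v2", True),
    Feedback("v2", False), Feedback("v2", True),
]
rates = approval_by_version(uat_feedback)
print(rates)
```

With sample sizes this small the difference between versions is not meaningful. In practice the counts should be reported alongside the rates so that reviewers can judge how much weight to give them.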

Phase 4: Deployment and Operations – Bringing LLMs to Life

Once thoroughly tested and validated, the LLM software moves into the deployment and operations phase. This stage focuses on making the product accessible to end-users and ensuring its continuous availability, performance, security, and cost-efficiency in a production environment. For LLM applications, this means establishing robust MLOps practices that manage the model lifecycle alongside application code, with specialized gateway technologies playing a crucial role.

Deployment Strategies

The choice of deployment strategy significantly impacts scalability, cost, and management overhead.

- Cloud Deployment (SaaS, PaaS, IaaS): The most common approach for LLM-powered applications due to the high computational demands and need for scalability.

- Software as a Service (SaaS): The LLM application is hosted by a third-party provider and made available to users over the internet. This offloads infrastructure management to the provider.

- Platform as a Service (PaaS): Developers deploy their code to a platform that handles infrastructure, scaling, and runtime environments (e.g., AWS Elastic Beanstalk, Google App Engine). This offers more control than SaaS while reducing infrastructure burden.

- Infrastructure as a Service (IaaS): Developers provision virtual machines, storage, and networks (e.g., AWS EC2, Azure VMs) and manage the entire software stack. This provides maximum control but requires more operational expertise.

- On-premise Deployment: Some organizations choose to deploy LLM models and applications within their private data centers, often driven by strict data privacy requirements, regulatory compliance, or the need to leverage existing hardware investments. This offers greater control over data and infrastructure but entails higher upfront costs and operational complexity.

- Containerization (Docker, Kubernetes) for Scalability:

- Docker: Packaging the LLM inference server, application code, and all dependencies into lightweight, portable containers ensures consistency across different environments (development, staging, production) and simplifies deployment.

- Kubernetes (K8s): An open-source container orchestration platform that automates the deployment, scaling, and management of containerized applications. K8s is ideal for LLM applications as it can:

- Manage GPU resources: Efficiently allocate and deallocate GPUs for LLM inference.

- Handle auto-scaling: Automatically scale the number of LLM inference pods up or down based on traffic load.

- Facilitate blue/green or canary deployments: Enabling risk-averse deployment of new LLM versions or application updates.
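
The canary idea above can be illustrated with a minimal Python sketch. It assumes traffic is split by hashing a stable request key (here a hypothetical user ID) so that each user consistently sees the same version. The function name `pick_version` and the 10% share are illustrative choices, not part of Kubernetes. In practice this split is usually configured in an ingress controller or service mesh rather than in application code.

```python
import hashlib


def pick_version(request_key: str, canary_percent: int) -> str:
    """Return "canary" for roughly canary_percent% of keys, else "stable"."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    # Hash the key so the same user always lands in the same bucket (0-99).
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return "canary" if bucket < canary_percent else "stable"


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    share = sum(pick_version(u, 10) == "canary" for u in users) / len(users)
    print(f"canary share: {share:.1%}")
```

Raising `canary_percent` step by step moves more users to the new LLM version, and setting it back to 0 acts as a rollback.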

Monitoring and Observability

In a dynamic LLM environment, continuous monitoring is non-negotiable to ensure the application remains healthy, performs optimally, and continues to provide accurate outputs.

- Model Performance Monitoring:

- Drift Detection: Monitoring the statistical properties of input data over time to detect "data drift" (changes in the input distribution), and comparing model outputs against user feedback or labeled samples to detect "concept drift" (changes in the relationship between input and output). Either kind of drift can lead to model degradation.

- Accuracy Decay: Continuously evaluating the model's accuracy on a sample of live data or a recurring validation set. Anomalies might indicate a need for re-training or fine-tuning.

- Latency & Throughput: Tracking real-time inference latency and throughput to ensure the LLM service meets its performance SLAs.

- Token Usage & Cost: Monitoring the number of tokens consumed by the LLM (for both prompts and completions) to track costs, especially crucial for pay-per-token models.

- System Health Monitoring:

- Application Metrics: Monitoring traditional software metrics such as CPU usage, memory consumption, disk I/O, network traffic for all application components.

- Error Rates: Tracking error rates for API calls, internal service communications, and LLM responses.

- Service Uptime & Availability: Ensuring that all components of the LLM application are running and accessible.

- Logging and Auditing: Comprehensive logging is essential for debugging, performance analysis, security audits, and compliance.

- Detailed API Call Logging: Recording every detail of each API call made to the LLM application and its underlying LLM providers. This includes timestamps, request parameters, response data (or masked versions for privacy), user IDs, and originating IP addresses. This is critical for troubleshooting issues, understanding usage patterns, and maintaining a robust audit trail. Platforms that serve as an LLM Gateway or AI Gateway, like APIPark, excel in this area, providing detailed logging capabilities that record every aspect of API interactions. This allows businesses to quickly trace and troubleshoot issues in API calls, ensuring system stability and data security.

- Application Logs: Capturing logs from all application services, including warnings, errors, and informational messages.

- Infrastructure Logs: Collecting logs from servers, containers, and network devices.

- Centralized Logging: Aggregating logs from various sources into a centralized logging system (e.g., ELK Stack, Splunk, Datadog) for easy searching, analysis, and alerting.
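
As one possible way to make the drift detection item above concrete, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a live sample of a single numeric feature. The feature (prompt length in tokens), the function name `psi`, and the bin count are assumptions made for illustration. A PSI above roughly 0.2 is a common rule of thumb for a meaningful shift, not a fixed standard. This only covers drift in the inputs; detecting concept drift additionally needs labeled outcomes or user feedback.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = list(range(100, 200))  # hypothetical prompt lengths last month
    live = list(range(160, 260))      # hypothetical prompt lengths this week
    print("PSI vs. itself:", psi(baseline, baseline))
    print("PSI vs. live:  ", round(psi(baseline, live), 2))
```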

Security and Access Control

Given the sensitivity of data handled by LLMs and the potential for misuse, robust security measures are paramount.

- API Authentication and Authorization:

- Authentication: Verifying the identity of users or client applications attempting to access LLM APIs (e.g., API keys, OAuth 2.0, OpenID Connect).

- Authorization: Granting specific permissions to authenticated users or applications, ensuring they can only access the LLM features and data they are authorized for. An API Gateway is instrumental in enforcing these policies centrally. Solutions like APIPark allow for the activation of subscription approval features, ensuring that callers must subscribe to an API and await administrator approval before they can invoke it, preventing unauthorized API calls and potential data breaches.

- Rate Limiting, Traffic Management: Preventing abuse, ensuring fair usage, and protecting backend LLM services from being overwhelmed.

- Rate Limiting: Restricting the number of API requests a user or client can make within a specified time frame.

- Throttling: Gradually reducing the rate of requests from a client if they exceed predefined limits.

- Load Balancing: Distributing incoming API traffic across multiple instances of the LLM inference service to optimize resource utilization and prevent single points of failure. An API Gateway commonly handles these functions.

- Data Encryption (in transit and at rest): Protecting sensitive data from unauthorized access.

- In Transit: Using Transport Layer Security (TLS) for all communication between clients, the API Gateway, the application backend, and the LLM inference service.

- At Rest: Encrypting all data stored in databases, object storage, and disk volumes where LLM models or training data reside.

- Regular Security Audits and Penetration Testing: Periodically conducting security audits and ethical hacking (penetration testing) to identify vulnerabilities in the LLM application and its infrastructure.
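
The rate limiting item above is often implemented with a token bucket. The following is a minimal single-process sketch under that assumption; the class name `TokenBucket` and its parameters are illustrative, and a production gateway would typically keep this state in a shared store so that limits apply across instances.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and consume `cost` tokens if the request may proceed."""
        now = self.clock()
        # Refill according to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(rate_per_sec=2.0, capacity=5)
    print([bucket.allow() for _ in range(7)])
```

For LLM traffic, `cost` could be set to an estimated token count instead of 1, so that large prompts consume more of a client's budget than small ones.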

Scalability and Resilience

LLM applications need to handle fluctuating user loads and remain available even in the face of failures.

- Auto-scaling Infrastructure: Automatically adjusting computational resources (e.g., number of server instances, GPU clusters) based on real-time traffic and demand. This ensures performance during peak loads and optimizes costs during off-peak times. Kubernetes, as mentioned, is excellent for this.

- Disaster Recovery and High Availability:

- Redundancy: Deploying LLM services across multiple availability zones or regions to ensure that if one zone fails, others can take over seamlessly.

- Backup and Restore: Regularly backing up critical data (model checkpoints, configuration files) and having a clear plan for restoring services in case of a major outage.

- Failover Mechanisms: Implementing automatic failover to redundant instances or regions in case of primary system failure.

- Load Balancing: Essential for distributing incoming requests evenly across multiple healthy instances of the LLM service. This prevents any single instance from becoming a bottleneck and improves overall system responsiveness and fault tolerance. As highlighted, an API Gateway plays a critical role in intelligent load balancing. Platforms like APIPark, which claims performance rivaling that of Nginx and supports cluster deployment, are positioned to handle large-scale traffic for LLM applications, with a reported throughput of over 20,000 transactions per second (TPS) on modest resources.
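
To make the auto-scaling item above more tangible, the sketch below applies the scaling rule documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The metric (in-flight requests per pod), the replica bounds, and the function name are assumptions for illustration, and the sketch ignores the tolerance band and stabilization windows that the real autoscaler applies.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to metric pressure, within bounds."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 inference pods averaging 90 in-flight requests, with a target of 60 per pod.
    print(desired_replicas(4, 90, 60))
    # Traffic drops to 30 per pod, so the deployment can shrink.
    print(desired_replicas(4, 30, 60))
```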

The deployment and operations phase transforms the LLM software from a developed artifact into a living service. It is a continuous cycle of monitoring, optimizing, and securing, underpinned by robust MLOps practices and the strategic utilization of API Gateways, LLM Gateways, and AI Gateways to ensure efficient, reliable, and secure delivery of LLM capabilities to end-users.

Phase 5: Maintenance and Evolution – Continuous Improvement and Adaptation

The lifecycle of LLM software does not end with deployment; rather, it enters a critical phase of continuous maintenance and evolution. The dynamic nature of LLMs, coupled with rapidly changing user expectations, emerging ethical guidelines, and evolving technological advancements, necessitates an agile and iterative approach to keep the product relevant, performant, and secure. This phase is characterized by feedback loops, iterative updates, cost optimization, and proactive adaptation.

Continuous Improvement

The goal of continuous improvement is to refine the LLM product based on real-world usage and performance data.

- Feedback Loops from Users and Monitoring Data: Establishing systematic mechanisms to gather insights.

- User Feedback: Collecting direct feedback through in-app surveys, feature requests, bug reports, and customer support interactions. This qualitative data provides crucial context on user satisfaction, pain points, and desired features.

- Monitoring Data Analysis: Analyzing the extensive data collected during the operations phase (e.g., model performance metrics, system health logs, API call patterns). This quantitative data reveals trends, identifies performance bottlenecks, detects model drift, and highlights areas for optimization. For instance, detailed API call logging and powerful data analysis features within an AI Gateway or LLM Gateway like APIPark are invaluable here. They enable businesses to track long-term trends and performance changes, helping with preventive maintenance and informed decision-making before issues arise.

- Iterative Model Updates (Re-training, Fine-tuning): LLMs are not static entities. Their performance can degrade over time due to concept drift or changes in user behavior.

- Scheduled Re-training: Periodically re-training the base LLM or fine-tuning its layers on updated datasets to incorporate new information or adapt to evolving language patterns.

- Incremental Fine-tuning: Applying smaller, more frequent fine-tuning updates using newly acquired, labeled data to address specific performance issues or adapt to new use cases.

- Data Curation: Building a data curation pipeline that automatically identifies useful data points from user interactions (e.g., highly rated responses, corrected outputs) to feed back into the training process.

- Prompt Refinement: Even without model updates, significant improvements can often be achieved by refining prompts. This involves:

- A/B Testing Prompts: Experimenting with different prompt variations to see which yields the best results in terms of accuracy, relevance, and user satisfaction.

- Dynamic Prompting: Developing systems that can dynamically construct prompts based on user context, historical data, or retrieved information, moving beyond static templates.

- Meta-Prompting: Using one LLM to generate or refine prompts for another LLM, a technique that can enhance prompt quality and efficiency.
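
The prompt A/B testing item above can be sketched as follows. This is a simplified illustration, assuming users are split deterministically by a hash of their ID and that quality is measured by a 1 to 5 user rating. The prompt texts, `assign_variant`, and `RatingLog` are hypothetical names, and a real experiment would also need a statistical significance check before declaring a winner.

```python
import hashlib
from collections import defaultdict
from statistics import mean

# Two hypothetical prompt variants under test.
PROMPTS = {
    "A": "Summarize the following text in one sentence:\n{text}",
    "B": "You are a concise editor. Give a one-sentence summary of:\n{text}",
}


def assign_variant(user_id: str) -> str:
    """Deterministically assign a user to variant A or B (about 50/50)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return "A" if digest[0] % 2 == 0 else "B"


class RatingLog:
    """Collects user ratings per prompt variant."""

    def __init__(self):
        self._ratings = defaultdict(list)

    def record(self, variant: str, rating: int) -> None:
        self._ratings[variant].append(rating)

    def summary(self) -> dict:
        # Maps variant -> (number of ratings, mean rating).
        return {v: (len(r), mean(r)) for v, r in sorted(self._ratings.items())}


if __name__ == "__main__":
    log = RatingLog()
    for user, rating in [("alice", 5), ("bob", 3), ("carol", 4), ("dave", 4)]:
        variant = assign_variant(user)
        prompt = PROMPTS[variant].format(text="...")  # would be sent to the model
        log.record(variant, rating)
    print(log.summary())
```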

Version Management

Managing different versions of LLMs, prompts, and application code is crucial for stability, reproducibility, and enabling controlled evolution.

- Managing Different Versions of LLMs, Prompts, and Application Code:

- Model Registry: A centralized repository for storing, versioning, and managing different trained or fine-tuned LLM models. This allows for easy tracking of model lineage, metadata, and performance metrics.

- Prompt Registry: Similar to a model registry, but for prompts. It tracks all prompt variations, their associated performance metrics, and which application versions use them.

- Code Version Control: Using Git or similar systems for all application code, configuration files, and MLOps scripts.

- A/B Testing for New Features/Models: Deploying multiple versions of an LLM or application feature simultaneously to a subset of users and comparing their performance metrics (e.g., engagement, conversion rates, error rates). This allows for data-driven decision-making before rolling out changes to the entire user base.

- Deprecation Strategies: Planning for the graceful retirement of older LLM models or API versions.

- Notification: Clearly communicating deprecation timelines to users and developers.

- Migration Support: Providing tools or guidance to help users migrate to newer versions.

- Gradual Rollout: Phased deprecation, ensuring older versions continue to function for a period while users transition.
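
A prompt registry of the kind described above can be very small at its core. The sketch below is an in-memory illustration only; `PromptRegistry` and `PromptVersion` are hypothetical names, and a real registry would persist versions and link them to evaluation metrics and application releases.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int
    template: str
    checksum: str  # short content hash, useful for audit trails


class PromptRegistry:
    """Append-only store of prompt templates, versioned per prompt name."""

    def __init__(self):
        self._versions = {}  # name -> list of PromptVersion, oldest first

    def register(self, name: str, template: str) -> PromptVersion:
        versions = self._versions.setdefault(name, [])
        checksum = hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]
        # Re-registering identical text does not create a new version.
        if versions and versions[-1].checksum == checksum:
            return versions[-1]
        entry = PromptVersion(name, len(versions) + 1, template, checksum)
        versions.append(entry)
        return entry

    def get(self, name: str, version: Optional[int] = None) -> PromptVersion:
        """Return the latest version, or a pinned one if `version` is given."""
        versions = self._versions[name]
        return versions[-1] if version is None else versions[version - 1]


if __name__ == "__main__":
    registry = PromptRegistry()
    registry.register("summarize", "Summarize: {text}")
    registry.register("summarize", "Summarize in one sentence: {text}")
    print(registry.get("summarize").version, registry.get("summarize", 1).template)
```

Pinning a version in `get` is what lets an older application release keep using its original prompt while newer releases adopt the latest one.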

Cost Optimization

Operating LLM applications can be expensive, especially with high usage volumes. Continuous monitoring and optimization are essential.

- Monitoring API Call Costs: Tracking expenses incurred from external LLM providers (e.g., per-token costs, API quotas). This requires detailed logging and cost attribution mechanisms, often facilitated by an AI Gateway or LLM Gateway that can provide per-tenant or per-application cost breakdowns.

- Optimizing Inference Efficiency: Reducing the computational resources and time required for LLM inference.

- Model Quantization/Pruning: Techniques to reduce the size and computational footprint of LLMs without significantly compromising performance.

- Batching Requests: Processing multiple LLM requests together to improve GPU utilization and throughput, usually at the cost of slightly higher latency for individual requests.

- Hardware Optimization: Leveraging more efficient hardware (e.g., specialized AI accelerators) or optimizing cloud instance types.

- Resource Allocation Adjustments: Dynamically adjusting compute resources based on demand patterns. This includes fine-tuning auto-scaling rules and optimizing serverless function configurations to avoid over-provisioning or under-provisioning.

- Strategic Model Selection: Using smaller, more cost-effective LLMs for simpler tasks, reserving larger, more powerful models only for complex queries, potentially orchestrated by an LLM Gateway capable of intelligent routing.
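
The cost tracking and strategic model selection items above can be combined in a short sketch. Everything specific here is an assumption for illustration: the two model tiers, their per-token prices, and the keyword and length heuristic in `choose_model` are hypothetical, and real routing rules would be tuned against evaluation data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_prompt_tokens: float
    usd_per_1k_completion_tokens: float


# Hypothetical tiers and prices, not real provider pricing.
SMALL = ModelTier("small-model", 0.0005, 0.0015)
LARGE = ModelTier("large-model", 0.01, 0.03)

REASONING_HINTS = ("explain why", "step by step", "analyze")


def choose_model(prompt: str, max_small_words: int = 50) -> ModelTier:
    """Route long or reasoning-heavy prompts to the large model, the rest to the small one."""
    needs_reasoning = any(hint in prompt.lower() for hint in REASONING_HINTS)
    if needs_reasoning or len(prompt.split()) > max_small_words:
        return LARGE
    return SMALL


def call_cost(model: ModelTier, prompt_tokens: int, completion_tokens: int) -> float:
    """Cost in USD of a single call under per-token pricing."""
    return (prompt_tokens / 1000 * model.usd_per_1k_prompt_tokens
            + completion_tokens / 1000 * model.usd_per_1k_completion_tokens)


if __name__ == "__main__":
    for prompt in ("What is the capital of France?",
                   "Analyze this contract step by step and list the risks."):
        model = choose_model(prompt)
        print(model.name, f"{call_cost(model, 1000, 500):.4f} USD")
```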

Regulatory Compliance Updates

The regulatory landscape for AI is nascent but rapidly evolving. Staying compliant is an ongoing process.

- Adapting to Evolving AI Regulations and Ethical Guidelines: Continuously monitoring new laws (e.g., EU AI Act, national AI strategies), industry standards, and ethical recommendations from bodies like the OECD or NIST.

- Internal Policy Reviews: Regularly reviewing and updating internal policies and procedures to ensure they align with new regulations and best practices.

- Documentation and Auditability: Maintaining comprehensive documentation of LLM development processes, data provenance, model evaluations, and mitigation strategies to demonstrate compliance during audits. The detailed logging provided by an API Gateway or AI Gateway becomes a crucial asset for audit trails.

The maintenance and evolution phase ensures that LLM software remains a valuable asset, continuously adapting to new information, challenges, and opportunities while operating efficiently and responsibly. It’s a testament to the fact that LLM development is a continuous journey, not a destination.

Phase 6: Decommissioning – A Responsible Farewell

While often overlooked, the decommissioning phase is an integral part of a comprehensive Product Lifecycle Management strategy, especially for LLM software. Products, even highly successful ones, eventually reach the end of their useful life, whether due to technological obsolescence, shifting market demands, or the emergence of superior alternatives. For LLM applications, responsible decommissioning involves more than just turning off servers; it requires careful consideration of data retention, security, and knowledge transfer.

Data Archiving and Retention

Data used by or generated by an LLM application can be sensitive and subject to various regulations, even after the product is retired.

- Complying with Data Retention Policies: Adhering to internal policies and external regulations (e.g., GDPR, HIPAA, CCPA) regarding how long data must be retained. This typically involves identifying critical data (e.g., customer interaction logs, financial transaction data) and ensuring it is stored securely for the required duration.

- Secure Data Disposal: For data that is no longer required to be retained, implementing secure disposal methods. This includes:

- Data Anonymization/Pseudonymization: Before complete deletion, sensitive personal data might be anonymized or pseudonymized for future analytical or research purposes, if legally permissible and ethically sound.

- Physical Destruction: For on-premise deployments, physically destroying storage media to prevent data recovery.

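- Secure Erasure: For cloud or virtualized environments, where overwriting the underlying physical media is rarely possible, using techniques such as cryptographic erasure (destroying the encryption keys that protect the data) alongside the provider's documented deletion procedures.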

- Deletion from all Backups: Ensuring that data is not only deleted from active systems but also from all backup archives in accordance with retention policies.

- Model Archiving: Archiving final LLM model checkpoints and associated training data. This ensures reproducibility of results, provides a historical record, and allows for potential re-use or analysis in the future.
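
A retention policy like the one described above can be checked mechanically before disposal. The sketch below assumes each stored record carries a kind and a creation date. The retention periods in `RETENTION_DAYS` are placeholders, not legal guidance, and the function names are hypothetical.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days; real values come from legal and compliance review.
RETENTION_DAYS = {"interaction_log": 365, "billing_record": 7 * 365}


def disposal_due(kind: str, created: date, today: date) -> bool:
    """True once the retention period for this kind of record has passed."""
    return today > created + timedelta(days=RETENTION_DAYS[kind])


def plan_disposal(records, today: date):
    """Split record IDs into (keep, dispose) lists according to the policy."""
    keep, dispose = [], []
    for rec in records:
        target = dispose if disposal_due(rec["kind"], rec["created"], today) else keep
        target.append(rec["id"])
    return keep, dispose


if __name__ == "__main__":
    records = [
        {"id": "a", "kind": "interaction_log", "created": date(2023, 6, 1)},
        {"id": "b", "kind": "billing_record", "created": date(2023, 6, 1)},
    ]
    print(plan_disposal(records, today=date(2025, 1, 1)))
```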

System Shutdown

The process of shutting down the LLM application and its underlying infrastructure must be carefully planned and executed to minimize disruption and avoid residual costs.

- Graceful Degradation and Notification to Users:

- Advance Warning: Providing ample notice to users about the upcoming decommissioning, including dates, reasons, and any alternative solutions or migration paths.

- Phased Shutdown: Gradually reducing service availability or limiting functionality rather than an abrupt shutdown. This allows users to transition away from the product more smoothly.

- Clear Communication: Maintaining clear communication channels throughout the process to address user concerns and provide support.

- Resource Deallocation: Systematically deallocating all computational, storage, and networking resources associated with the LLM application.

- Cloud Resources: Ensuring all cloud instances, databases, storage buckets, and network configurations are terminated to prevent incurring unnecessary costs. This is a common oversight that can lead to significant post-decommissioning expenses.

- On-premise Resources: Powering down and repurposing or safely disposing of physical hardware.

- API Gateway Deconfiguration: Removing or disabling all API endpoints related to the decommissioned LLM application from the API Gateway to prevent further traffic or erroneous routing. This ensures that the gateway itself remains clean and efficient.

Lessons Learned

Every product lifecycle, including its ending, offers valuable lessons that can inform future development efforts.

- Documenting Insights for Future Projects: Compiling a comprehensive "post-mortem" report that captures key learnings from the entire lifecycle of the LLM product. This includes:

- Successes and Failures: What worked well? What didn't? Why?

- Technical Challenges: Unique LLM-related technical hurdles encountered and how they were addressed.

- Ethical Dilemmas: Any ethical issues that arose and the strategies employed to resolve them.

- Cost Management: Insights into LLM API costs, infrastructure optimization, and budgeting.

- Market Reception: What did market research reveal, and how did the product perform against those expectations?

- Team Dynamics: What were the organizational and team collaboration lessons?

- Knowledge Transfer: Ensuring that valuable expertise and insights gained from developing and operating the LLM product are formally documented and shared within the organization. This prevents repeating mistakes and accelerates future LLM initiatives. This might include creating best practices guides for prompt engineering, MLOps workflows, or LLM evaluation techniques.

The decommissioning phase, when handled with foresight and discipline, provides a responsible conclusion to the product's journey. It ensures that resources are freed up, data is managed ethically, and critical knowledge is preserved, paving the way for the next generation of innovative LLM-powered solutions.

The Indispensable Role of Gateways in LLM PLM

In the intricate tapestry of Product Lifecycle Management for LLM software development, various types of gateways emerge as crucial architectural components. These gateways simplify complex integrations, enhance security, optimize performance, and provide invaluable observability across the entire product lifecycle. While their functionalities can sometimes overlap, understanding their distinct roles—API Gateway, LLM Gateway, and AI Gateway—is key to building robust, scalable, and maintainable LLM applications.

API Gateway: The Front Door for All Services

At its core, an API Gateway acts as the single entry point for all client requests to a backend service, routing them to the appropriate microservice. For LLM applications, its traditional functions become even more critical:

- Traffic Management: An API Gateway effectively handles incoming request traffic, distributing it across multiple instances of LLM inference services to ensure high availability and responsiveness. This includes load balancing, throttling, and rate limiting to protect backend services from overload and abuse. As LLM usage scales, managing concurrent requests efficiently becomes paramount.

- Security Enforcement: It provides a crucial layer of security, enforcing authentication and authorization policies before requests reach the LLM. This means validating API keys, processing JWTs, and applying access control rules, ensuring only authorized applications or users can invoke LLM capabilities. This centralized security management simplifies development and enhances overall system resilience.

- Request/Response Transformation: API Gateways can modify requests and responses on the fly. For LLMs, this might involve transforming a client-specific request format into the LLM provider's expected format, or sanitizing LLM responses before sending them back to the client to remove sensitive information or ensure compliance.

- Monitoring and Analytics: By centralizing all API traffic, an API Gateway provides a rich source of data for monitoring. It logs every request, its response time, status code, and other metadata. This data is essential for understanding usage patterns, identifying performance bottlenecks, and tracking API consumption for cost management.

- Version Control: As LLMs evolve and new versions are deployed, an API Gateway can manage different API versions, allowing for seamless transitions for client applications. It can route requests to older versions for backward compatibility while new clients use the latest, enabling gradual adoption and minimizing disruption.
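
The monitoring and response transformation items above can be illustrated with a small structured logging sketch. It assumes the gateway emits one JSON record per call and masks e-mail addresses before anything is written. The field names, the regular expression, and the logger name `gateway.access` are illustrative assumptions, and real deployments usually need broader PII detection than a single pattern.

```python
import json
import logging
import re
from datetime import datetime, timezone

# Simple e-mail pattern used for masking; intentionally conservative.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

logger = logging.getLogger("gateway.access")


def mask(text: str) -> str:
    """Replace e-mail addresses so they never reach the log store."""
    return EMAIL_RE.sub("[EMAIL]", text)


def build_record(user_id: str, path: str, status: int, latency_ms: float,
                 prompt: str, response: str) -> dict:
    """Assemble one audit-friendly log record for an API call."""
    return {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "path": path,
        "status": status,
        "latency_ms": latency_ms,
        "prompt": mask(prompt),
        "response": mask(response),
    }


def log_call(**fields) -> None:
    """Emit the record as a single JSON line."""
    logger.info(json.dumps(build_record(**fields)))


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    log_call(user_id="u1", path="/v1/chat", status=200, latency_ms=120.5,
             prompt="Email alice@example.com the report", response="Done.")
```

One JSON object per line keeps the records easy to ingest into a centralized logging system.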

LLM Gateway: Specializing in Large Language Models

An LLM Gateway is a specialized form of an API Gateway, designed specifically to address the unique challenges of interacting with Large Language Models. It goes beyond generic API management to offer features tailored to the LLM ecosystem:

- Model Abstraction: One of the most significant benefits of an LLM Gateway is abstracting away the specifics of different LLM providers (e.g., OpenAI, Anthropic, Google, open-source models). Developers interact with a unified API, and the gateway handles the underlying model-specific request formats, authentication, and response parsing. This allows for easy switching between models based on cost, performance, or availability, reducing vendor lock-in and simplifying future model upgrades.

- Prompt Templating and Management: LLM Gateways often include features for managing prompt templates centrally. This means prompts can be version-controlled, A/B tested, and updated without requiring changes to the application code. It ensures consistency across different parts of an application and accelerates prompt engineering iterations.

- Caching: For repetitive queries or common prompts, an LLM Gateway can cache responses. This significantly reduces latency and lowers the cost of API calls to external LLM providers, as identical requests can be served directly from the cache.

- Cost Optimization and Intelligent Routing: LLM Gateways can be configured to route requests to different LLMs based on predefined rules. For example, simple queries might go to a cheaper, smaller model, while complex tasks are routed to a more powerful but more expensive LLM. This intelligent routing helps optimize costs while maintaining performance.

- Fallback Mechanisms: In case an LLM provider experiences an outage or a specific model fails, an LLM Gateway can automatically reroute requests to a backup model or provider, enhancing the resilience and availability of the LLM application.
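
The caching and fallback items above can be sketched together. This is a minimal illustration, not how any particular gateway product works: providers are represented as plain Python callables, `FallbackGateway` and `ProviderError` are hypothetical names, and the cache is an exact-match, in-memory dictionary with no expiry.

```python
import hashlib
from typing import Callable, Dict, List, Tuple


class ProviderError(Exception):
    """Raised by a provider call that fails (timeout, outage, quota)."""


Provider = Tuple[str, Callable[[str], str]]


class FallbackGateway:
    """Try providers in order and cache the first successful answer per prompt."""

    def __init__(self, providers: List[Provider]):
        self.providers = providers
        self.cache: Dict[str, Tuple[str, str]] = {}

    def complete(self, prompt: str) -> Tuple[str, str]:
        """Return (provider_name, text), served from cache when possible."""
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]
        errors = []
        for name, call in self.providers:
            try:
                text = call(prompt)
            except ProviderError as exc:
                errors.append(f"{name}: {exc}")
                continue  # fall through to the next provider
            self.cache[key] = (name, text)
            return self.cache[key]
        raise ProviderError("all providers failed: " + "; ".join(errors))


def primary(prompt: str) -> str:
    raise ProviderError("simulated outage")  # stand-in for a failing provider


def backup(prompt: str) -> str:
    return f"[backup] {prompt.upper()}"  # stand-in for a working provider


if __name__ == "__main__":
    gateway = FallbackGateway([("primary", primary), ("backup", backup)])
    print(gateway.complete("hello"))
    print(gateway.complete("hello"))  # second call is served from the cache
```

Exact-match caching fits best where repeated prompts are common and a previously generated answer is acceptable; for sampled, creative outputs it may be undesirable.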

AI Gateway: A Broader Vision for AI Services

An AI Gateway encompasses the functionalities of both an API Gateway and an LLM Gateway, extending its scope to manage a diverse portfolio of AI services beyond just LLMs. This might include vision models, speech-to-text, text-to-speech, traditional machine learning models, or specialized analytical AI services.

- Unified Access Point for All AI Services: An AI Gateway provides a consistent and unified interface for all AI capabilities within an organization, simplifying integration for developers who might need to combine LLMs with other AI tools.

- Centralized Authentication and Authorization for AI: It enforces security policies uniformly across all AI services, streamlining access management and ensuring compliance. This is especially useful in enterprise environments where multiple teams consume various AI APIs.

- Comprehensive Monitoring and Analytics for Diverse AI Workloads: An AI Gateway offers a holistic view of all AI service consumption, performance, and costs. This enables organizations to make data-driven decisions about their AI strategy, identify underperforming models, and optimize resource allocation across their entire AI ecosystem.

- Multi-tenancy Support: For larger organizations or SaaS providers, an AI Gateway can manage independent API and access permissions for different teams or tenants. This allows for resource sharing while maintaining strong isolation of data and configurations, improving resource utilization and reducing operational costs.

Synergy and the Role of APIPark

The true power lies in the synergy between these gateway types. An LLM-powered application might use a general API Gateway to manage access for its entire frontend, while internally, an AI Gateway (which functions as an LLM Gateway) specifically orchestrates interactions with various LLMs and other AI services. This layered approach creates a highly robust, secure, and flexible architecture.

Platforms like APIPark serve as an example of a unified solution, functioning as both an AI Gateway and an API Management Platform. It simplifies the integration of diverse AI models (more than 100 AI models) with a unified management system for authentication and cost tracking, directly addressing the complexities of managing a portfolio of AI services in an LLM PLM context. By offering a standardized API format for AI invocation, APIPark aims to ensure that underlying model changes do not disrupt applications, simplifying AI usage and reducing maintenance costs. Its end-to-end API Lifecycle Management capabilities, including prompt encapsulation into REST APIs, traffic forwarding, load balancing, and versioning, map onto what LLM software development needs to navigate the design, deployment, and evolution phases effectively. Furthermore, features like independent API and access permissions for each tenant, subscription approval for API access, performance rivaling Nginx, detailed API call logging, and powerful data analysis contribute to the security, scalability, and continuous improvement aspects of LLM PLM. This comprehensive approach helps enterprises manage, integrate, and deploy AI and REST services, so that their LLM products are well-governed from conception to retirement.

Challenges and Best Practices in LLM PLM

Navigating the Product Lifecycle Management for LLM software is fraught with unique challenges, but adherence to established best practices can significantly mitigate risks and enhance the likelihood of success. The rapid pace of innovation, the inherent non-determinism of generative AI, and the critical ethical dimensions demand a thoughtful and adaptive approach.

Key Challenges

- Rapid Pace of LLM Evolution: The LLM landscape is constantly changing, with new models, architectures, and capabilities emerging almost weekly. This makes long-term planning difficult and requires continuous adaptation, model retraining, and potentially re-architecting solutions. What is state-of-the-art today might be obsolete in a few months.

- Data Governance Complexity: Managing the vast and often sensitive data required for LLMs presents significant governance challenges. Ensuring data quality, privacy, security, compliance with regulations (like GDPR), and ethical sourcing throughout the data lifecycle is a monumental task. Data drift and concept drift further complicate matters, requiring continuous monitoring and retraining strategies.

- Explainability and Interpretability: LLMs are often referred to as "black boxes," making it difficult to understand why they produced a particular output. This lack of explainability poses challenges for debugging, auditing, ensuring fairness, and gaining user trust, especially in high-stakes applications like healthcare or finance.

- Managing Non-determinism: Unlike traditional software which typically produces deterministic outputs for given inputs, LLMs can generate varied responses even for identical prompts due to their probabilistic nature. This makes traditional testing methodologies less effective and complicates quality assurance and debugging processes.

- Cost Management: LLM inference, especially for large, proprietary models, can be expensive on a per-token basis. Scaling these services for high traffic can lead to substantial operational costs, requiring continuous optimization, intelligent routing, and efficient resource allocation. Training or fine-tuning large models also demands significant computational resources and capital investment.

- Skill Gap: The demand for talent with expertise in MLOps, prompt engineering, AI governance, and ethical AI far outstrips supply. Building and maintaining high-performing LLM products requires a multidisciplinary team with specialized skills that are difficult to acquire and retain.

- Ethical AI and Responsible Development: Ensuring fairness, transparency, privacy, and safety throughout the LLM lifecycle is a continuous challenge. Mitigating biases, preventing the generation of harmful content, guarding against prompt injection attacks, and ensuring compliance with evolving ethical guidelines require constant vigilance and proactive measures.
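
One way to approach the non-determinism challenge above is to test properties of the output over several samples instead of comparing against a single exact string. The sketch below assumes a hypothetical sentiment task with a JSON response. `fake_llm` is a random stand-in for a real model call, and the required fields are illustrative.

```python
import json
import random

ALLOWED_SENTIMENTS = {"positive", "negative", "neutral"}


def fake_llm(prompt: str, rng: random.Random) -> str:
    """Stand-in for a real model call; output varies from sample to sample."""
    return json.dumps({
        "sentiment": rng.choice(sorted(ALLOWED_SENTIMENTS)),
        "confidence": round(rng.uniform(0.5, 1.0), 2),
    })


def check_response(raw: str) -> bool:
    """Property check: valid JSON, known label, confidence within [0, 1]."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict):
        return False
    confidence = data.get("confidence")
    return (data.get("sentiment") in ALLOWED_SENTIMENTS
            and isinstance(confidence, (int, float))
            and 0.0 <= confidence <= 1.0)


def pass_rate(prompt: str, samples: int = 20, seed: int = 0) -> float:
    """Fraction of sampled responses that satisfy the property check."""
    rng = random.Random(seed)
    return sum(check_response(fake_llm(prompt, rng)) for _ in range(samples)) / samples


if __name__ == "__main__":
    print(pass_rate("Classify: 'The update made everything faster.'"))
```

A test suite can then assert a minimum pass rate per prompt, which tolerates wording differences while still catching structural regressions.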

Best Practices for LLM PLM

To overcome these challenges and harness the full potential of LLMs, organizations should adopt a set of best practices that integrate AI-specific considerations into a robust PLM framework.

- Adopt an Iterative, Agile Approach: Given the rapid evolution and experimental nature of LLMs, waterfall development is unsuitable. Embrace agile methodologies with short development sprints, frequent releases, and continuous feedback loops to adapt quickly to new information and changing requirements. This allows for quick experimentation with different models, prompts, and architectures.

- Prioritize Ethical AI and Responsible Development from Day One: Embed ethical considerations into every phase of the PLM, from ideation to decommissioning. Conduct proactive bias assessments, implement safety guardrails, ensure data privacy by design, and establish clear policies for responsible use. Regular red teaming and adversarial testing are crucial to identify and mitigate risks early.

- Invest in Robust Data Governance: Establish clear policies and processes for data collection, storage, cleaning, labeling, security, and access control. Implement data versioning and lineage tracking to ensure reproducibility and maintain data quality. For LLMs, this also means actively monitoring for data drift and concept drift and having strategies for periodic data refreshes and model retraining.

- Leverage MLOps and AIOps Principles: Apply Machine Learning Operations (MLOps) principles to automate and streamline the entire LLM lifecycle, from model experimentation and versioning to deployment, monitoring, and retraining. Extend this to AIOps for intelligent automation of IT operations using AI, optimizing performance and proactively addressing issues. This includes CI/CD pipelines for models, data pipelines, and prompt management.

- Embrace Modularity and Abstraction via Gateways: Design LLM applications with a modular architecture where the LLM interaction logic is decoupled from the core application. Utilize API Gateways, LLM Gateways, and AI Gateways to abstract away the complexities of different LLM providers, manage diverse AI services, enforce security, control traffic, and optimize costs. This architecture provides flexibility, reduces vendor lock-in, and simplifies future upgrades or model switches.

- Foster Cross-functional Collaboration: Success in LLM development requires close collaboration between ML engineers, data scientists, prompt engineers, software developers, product managers, legal experts, and ethicists. Breaking down silos and promoting interdisciplinary communication ensures a holistic approach to product development.

- Continuous Learning and Adaptation: The field of LLMs is dynamic. Dedicate resources to continuous learning, research, and experimentation. Stay informed about the latest breakthroughs, emerging best practices, and evolving regulatory landscapes. Building a culture of continuous learning enables the team and the product to adapt and thrive in this fast-paced environment.

- Implement Comprehensive Monitoring and Observability: Beyond traditional software monitoring, implement specialized observability for LLMs. Track model performance metrics (accuracy, latency, drift), token usage, costs, and ethical compliance metrics. Centralized logging and powerful data analysis tools provided by platforms like APIPark are essential for gaining insights and preempting issues.
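The gateway and observability practices above can be sketched together in a few lines. This is a simplified illustration, not how APIPark or any particular gateway is implemented: the `Provider` and `Gateway` classes, the stub providers, the prices, and the whitespace-based token count are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Provider:
    name: str
    complete: Callable[[str], str]   # hypothetical signature: prompt -> completion text
    price_per_1k_tokens: float       # illustrative price, not a real rate


@dataclass
class Gateway:
    providers: List[Provider]        # tried in order; later entries act as fallbacks
    usage: Dict[str, dict] = field(default_factory=dict)

    def complete(self, prompt: str) -> str:
        errors = []
        for provider in self.providers:
            try:
                text = provider.complete(prompt)
            except Exception as exc:  # any provider failure triggers fallback
                errors.append(f"{provider.name}: {exc}")
                continue
            self._record(provider, prompt, text)
            return text
        raise RuntimeError("all providers failed: " + "; ".join(errors))

    def _record(self, provider: Provider, prompt: str, text: str) -> None:
        # Crude whitespace token count; a real gateway would use the provider's tokenizer.
        tokens = len(prompt.split()) + len(text.split())
        stats = self.usage.setdefault(
            provider.name, {"calls": 0, "tokens": 0, "cost": 0.0})
        stats["calls"] += 1
        stats["tokens"] += tokens
        stats["cost"] += tokens / 1000 * provider.price_per_1k_tokens


def flaky(prompt: str) -> str:
    """Stub provider that always fails, to exercise the fallback path."""
    raise TimeoutError("upstream timed out")


def echo(prompt: str) -> str:
    """Stub provider that simply echoes the prompt."""
    return "echo: " + prompt


gateway = Gateway([Provider("primary", flaky, 0.03), Provider("backup", echo, 0.002)])
reply = gateway.complete("summarize the release notes")
print(reply, gateway.usage)
```

Because the application only ever calls `gateway.complete`, swapping or reordering providers does not touch application code, and the per-provider `usage` dictionary gives a single place to read call counts, token volume, and estimated spend.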

By acknowledging the unique challenges of LLM software and proactively implementing these best practices, organizations can navigate the complexities of LLM PLM more effectively, build innovative and responsible AI products, and achieve sustainable success in the evolving AI landscape.

Conclusion

Integrating Large Language Models into software development is a significant shift that opens up new possibilities for innovation and automation. Realizing that potential demands a disciplined, comprehensive approach to product management that goes beyond traditional software development methodologies. Product Lifecycle Management (PLM) for LLM software development is therefore a necessity rather than an optional framework: it is what carries a product from initial ideation through to responsible decommissioning.

Throughout this article, we have explored how each phase of PLM (Ideation and Research, Design and Development, Testing and Validation, Deployment and Operations, Maintenance and Evolution, and Decommissioning) must be adapted to the unique characteristics of LLMs. Every stage presents distinct challenges and opportunities: ethical considerations in the research phase, architectural decisions around model selection and prompt engineering, testing that accounts for non-deterministic outputs, and continuous monitoring of model performance and cost.

We have also highlighted the role of gateway technologies in streamlining this lifecycle. The API Gateway serves as the front door, ensuring secure, managed access to LLM services. The specialized LLM Gateway abstracts away the intricacies of individual models, facilitating experimentation, cost optimization, and resilience. The broader AI Gateway unifies the management of all AI services, providing a single point of enterprise-level AI governance. Platforms like APIPark show how an integrated AI Gateway and API Management Platform can provide end-to-end capabilities: quick integration of diverse AI models, unified API formats, lifecycle management, security, and analytics. Together, these capabilities improve efficiency, security, and data optimization for developers, operations personnel, and business managers alike.

As the LLM landscape continues to evolve at an astonishing pace, the ability to adapt, learn, and iterate rapidly will be a key differentiator. By embracing a holistic PLM framework, integrating cutting-edge MLOps practices, prioritizing ethical AI from the outset, and strategically leveraging powerful tools, organizations can build responsible, resilient, and truly transformative LLM-powered applications that deliver sustained value. The future of software is undeniably intertwined with AI, and a well-executed PLM strategy is the compass that will guide us through this exciting, yet challenging, new frontier.

Frequently Asked Questions (FAQs)

1. What are the key differences between traditional software PLM and LLM software PLM?

Traditional software PLM focuses heavily on deterministic outcomes, code quality, and structured data. LLM software PLM, however, must contend with non-deterministic outputs, probabilistic model behavior, vast unstructured data, unique ethical considerations (like bias and hallucination), the rapid evolution of foundational models, and ongoing prompt engineering challenges. It requires a significant emphasis on MLOps, continuous model monitoring, and specialized testing for robustness, fairness, and safety, often extending beyond conventional software quality assurance.

2. How do API Gateway, LLM Gateway, and AI Gateway differ and what is their combined role in LLM PLM?

- An API Gateway is a general-purpose traffic manager for all APIs, handling routing, security, and traffic control.

- An LLM Gateway is specialized for Large Language Models, abstracting specific LLM providers, managing prompts, caching responses, and optimizing costs for LLM interactions.

- An AI Gateway is a broader concept that encompasses LLM Gateway functionalities but also manages other AI services like vision or speech models, providing a unified access point for an organization's entire AI portfolio.

Together, these gateways form a layered architecture that centralizes security, streamlines development by abstracting underlying complexities, optimizes performance and cost, and provides crucial observability across the entire LLM application lifecycle, making the LLM product more manageable, scalable, and resilient.

3. What are the biggest ethical concerns in LLM software development and how does PLM address them?

The biggest ethical concerns include bias (reflecting and amplifying societal prejudices), hallucination (generating factually incorrect information), privacy (misuse of sensitive data), transparency (lack of explainability), and the potential for misuse (generating harmful content, misinformation). PLM addresses these by integrating ethical considerations into every phase:

- Ideation: Proactive risk assessment and defining ethical guidelines.

- Design: Implementing data minimization, privacy-by-design, and model interpretability features.

- Testing: Rigorous bias detection, fairness testing, and red-teaming for safety.

- Deployment & Operations: Continuous monitoring for harmful outputs, audit trails, and robust access controls.

- Maintenance & Evolution: Adapting to evolving regulations and refining mitigation strategies.

4. How can organizations effectively manage the high costs associated with LLM usage?

Effective cost management for LLM software involves several strategies throughout its PLM:

- Strategic Model Selection: Using smaller, cheaper models for simpler tasks and reserving larger models for complex ones, potentially orchestrated by an LLM Gateway.

- Prompt Optimization: Refining prompts to reduce token count for both input and output.

- Caching: Implementing response caching for common queries to reduce API calls to external providers.

- Batching Requests: Processing multiple LLM requests in a single call to improve efficiency.

- Fine-tuning (selectively): Fine-tuning open-source models can sometimes be more cost-effective than continuous use of expensive proprietary models for specific tasks.

- Infrastructure Optimization: Auto-scaling, choosing cost-efficient cloud instances, and optimizing inference efficiency (e.g., quantization).

- Monitoring and Analytics: Using tools (like those in APIPark) to track token usage and costs in real-time, identifying areas for optimization.
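Two of these strategies, model selection and response caching, are easy to illustrate. The sketch below is a simplified example under stated assumptions: the prices in `PRICES`, the four-characters-per-token estimate, the 200-token routing threshold, and the `stub` model function are all hypothetical and should be replaced with a real tokenizer, real pricing, and a real client.

```python
import hashlib
from typing import Callable, Dict

# Illustrative prices per 1,000 tokens; real provider pricing will differ.
PRICES = {"small": 0.0005, "large": 0.03}


def estimate_tokens(text: str) -> int:
    """Rough heuristic (about 4 characters per token); use a real tokenizer in practice."""
    return max(1, len(text) // 4)


def choose_model(prompt: str, threshold_tokens: int = 200) -> str:
    """Route short prompts to the cheap model and long ones to the large model."""
    return "small" if estimate_tokens(prompt) <= threshold_tokens else "large"


class CachedClient:
    def __init__(self, call_model: Callable[[str, str], str]):
        self.call_model = call_model      # hypothetical signature: (model, prompt) -> answer
        self.cache: Dict[str, str] = {}
        self.spend = 0.0                  # estimated spend in the same currency as PRICES
        self.hits = 0

    def ask(self, prompt: str) -> str:
        # Normalize before hashing so trivially different prompts share a cache entry.
        key = hashlib.sha256(prompt.strip().lower().encode("utf-8")).hexdigest()
        if key in self.cache:
            self.hits += 1
            return self.cache[key]        # cache hit: no model call, no cost
        model = choose_model(prompt)
        answer = self.call_model(model, prompt)
        tokens = estimate_tokens(prompt) + estimate_tokens(answer)
        self.spend += tokens / 1000 * PRICES[model]
        self.cache[key] = answer
        return answer


def stub(model: str, prompt: str) -> str:
    """Stand-in for a real model call."""
    return f"[{model}] answer"


client = CachedClient(stub)
first = client.ask("What is our refund policy?")
second = client.ask("what is our refund policy?  ")  # served from cache
print(first, second, client.hits, client.spend)
```

Exact-match caching like this only helps with repeated queries, and routing on prompt length is a crude proxy for task complexity. Both are starting points to be tuned against real traffic.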

5. Why is a continuous improvement loop essential for LLM software, and what does it involve?

A continuous improvement loop is essential because LLMs are dynamic: their performance can degrade over time (concept/data drift), new models emerge, and user needs evolve. It involves:

1. Collecting Feedback: Gathering user feedback and analyzing monitoring data (model performance, system health, API usage).

2. Analysis: Identifying areas for improvement, detecting model drift, or discovering new opportunities.

3. Iteration: Refining prompts, re-training/fine-tuning models with updated data, or developing new features.

4. Testing & Validation: Rigorously testing changes before deployment (including A/B testing).

5. Deployment: Rolling out updates with robust CI/CD pipelines.

This iterative cycle ensures the LLM product remains relevant, performant, secure, and aligned with user expectations throughout its lifespan.
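As one way to picture the analysis step of this loop, the sketch below flags possible drift when the rolling average of a quality score falls below a baseline. The `DriftMonitor` class, the baseline of 0.90, the window of 5, and the tolerance of 0.05 are illustrative assumptions. How the quality score itself is produced (user ratings, automated evaluation, or something else) is left open.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags drift when the rolling mean of a quality score drops below baseline - tolerance."""

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)  # keeps only the most recent `window` scores

    def record(self, score: float) -> bool:
        """Add one score; return True only when the window is full and its mean is too low."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        return window_full and mean(self.scores) < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.90, window=5, tolerance=0.05)
healthy = [monitor.record(s) for s in [0.91, 0.89, 0.92, 0.90, 0.88]]
degraded = [monitor.record(s) for s in [0.80, 0.78, 0.75, 0.79, 0.77]]
print(healthy, degraded)
```

A positive signal from a monitor like this would feed the iteration step: refining prompts, refreshing data, or retraining, followed by validation before rollout.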

🚀 You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.