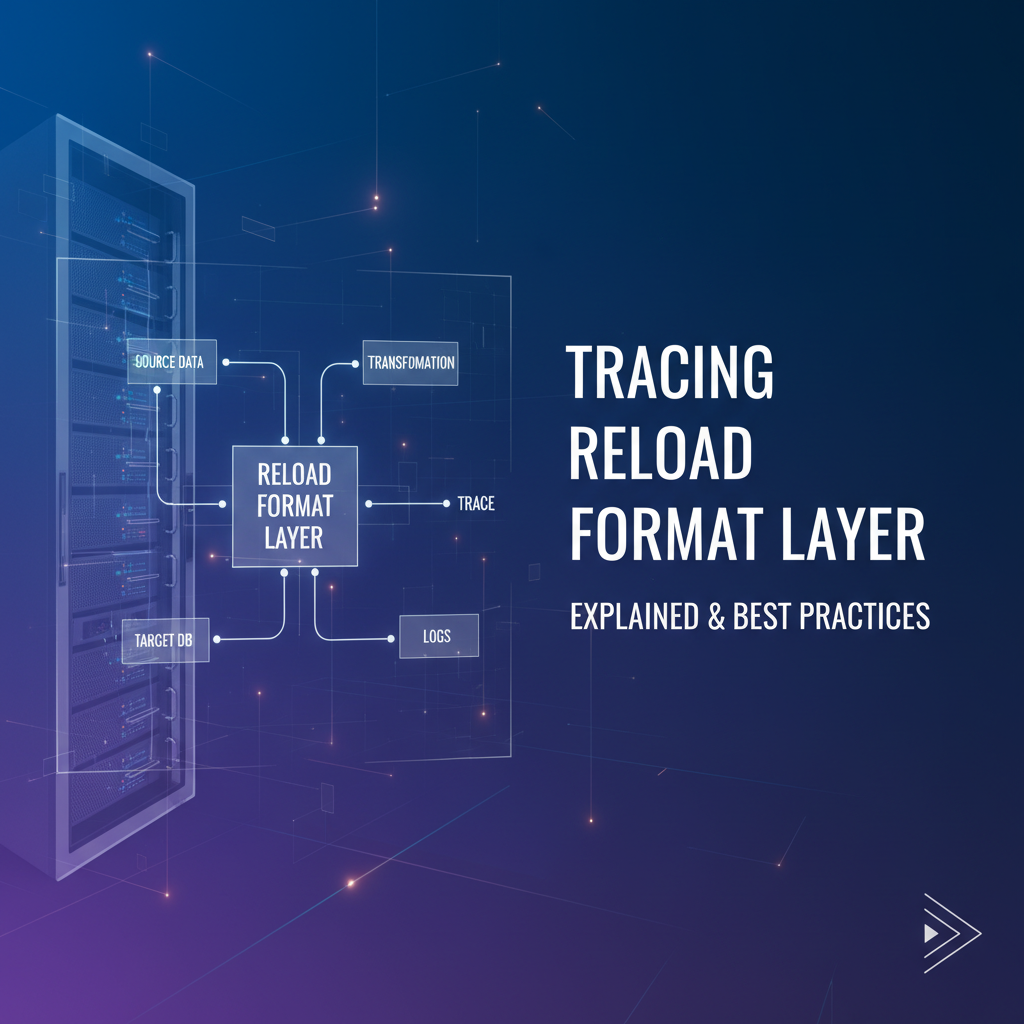

Tracing Reload Format Layer: Explained & Best Practices

In the intricate tapestry of modern software systems, particularly those that demand high availability, dynamic adaptability, and continuous evolution, the ability to update components without incurring significant downtime is paramount. This capability hinges critically on what we refer to as the "Reload Format Layer." It's the often-understated, yet profoundly impactful, mechanism responsible for interpreting, validating, and applying new configurations, data, or state changes to a running system. As artificial intelligence, especially large language models (LLMs), permeates every facet of technology, the complexity and importance of this layer have only intensified. Within the realm of AI, managing an evolving context efficiently and robustly is not merely a feature, but a foundational requirement, often orchestrated through sophisticated systems like the Model Context Protocol (MCP).

This comprehensive article embarks on a deep exploration of the Reload Format Layer. We will dissect its core components, understand its challenges, and unveil the best practices for implementing and, crucially, tracing its operations. Our journey will particularly highlight its relevance in the landscape of AI, examining how protocols like MCP manage the dynamic state that underpins intelligent interactions, and how meticulous tracing of these processes becomes indispensable for maintaining performance, reliability, and security in complex AI architectures. By the end, readers will possess a profound understanding of this critical infrastructure, equipped with insights to design, debug, and optimize systems that gracefully adapt to change, especially within the demanding world of artificial intelligence.

The Foundation: Understanding Reload Mechanisms in Modern Software Systems

Modern software applications are no longer static entities; they are dynamic, ever-evolving ecosystems designed to adapt to changing requirements, user loads, and underlying infrastructure. The concept of "reloading" is central to this paradigm, offering a vital alternative to full system restarts—a practice that is increasingly unacceptable in an era demanding continuous operation and minimal disruption. A reload mechanism allows for the application of updates—be they configuration changes, new code modules, data schema updates, or even new model weights—to a live system without taking it offline. This capability underpins the principles of continuous deployment, A/B testing, and rapid incident response, enabling organizations to iterate faster and maintain higher service availability.

The motivations behind implementing robust reload capabilities are diverse and compelling. Firstly, dynamic configuration management is a primary driver. Imagine a web server farm where changes to routing rules, caching policies, or authentication mechanisms need to be propagated across hundreds or thousands of instances. A full restart of each server would lead to significant downtime and potential service degradation. Reloading allows these changes to take effect almost instantaneously and transparently to the end-user. Secondly, hot-swapping of modules or services enables developers to deploy new features or bug fixes without redeploying the entire application. This is particularly relevant in microservices architectures, where individual services might need independent updates. Thirdly, A/B testing and experimentation often require subtle changes to application logic or data processing pipelines to be deployed to a subset of users, observed, and potentially rolled back or rolled out widely. Reloads facilitate this rapid iteration and controlled experimentation without affecting the broader user base. Lastly, data updates and schema migrations can sometimes be handled gracefully through reload mechanisms, especially when dealing with lookup tables, business rules, or machine learning models that need fresh data regularly.

However, the implementation of reload mechanisms is fraught with challenges that, if not addressed meticulously, can introduce more problems than they solve. Consistency is perhaps the most significant hurdle. When parts of a system are updated dynamically, ensuring that all components agree on the new state and that no conflicting states arise during the transition is critical. For instance, if a new configuration is partially applied, or if some services pick up the new configuration while others retain the old, the system can enter an inconsistent state, leading to unpredictable behavior, data corruption, or outright crashes. This necessitates careful orchestration and often transactional approaches to configuration updates.

Atomicity is another crucial consideration. An ideal reload operation should be atomic, meaning it either completes entirely and successfully, or it fails entirely and leaves the system in its original, known-good state. Partial updates that leave the system in an indeterminate state are incredibly dangerous and difficult to recover from. This often involves techniques like "two-phase commit" or "shadow deployment" where new configurations are loaded and validated in isolation before being switched over.
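As a rough illustration of the "load and validate in isolation, then switch over" idea, here is a minimal in-process sketch. It is a simple validate-then-swap, not a full two-phase commit, and the names `ConfigHolder` and `validate` (and the port rule) are assumptions made for this example.

```python
import json
import threading


def validate(cfg) -> None:
    """Hypothetical rule: 'port' must be an int in 1..65535."""
    port = cfg.get("port") if isinstance(cfg, dict) else None
    if not isinstance(port, int) or isinstance(port, bool) or not 1 <= port <= 65535:
        raise ValueError(f"invalid port: {port!r}")


class ConfigHolder:
    """Holds the active config; swaps it only after the candidate validates."""

    def __init__(self, initial: dict):
        self._lock = threading.Lock()
        self._active = initial

    def get(self) -> dict:
        with self._lock:
            return self._active

    def reload(self, raw: str) -> bool:
        """Parse and validate in isolation, then swap in a single step.

        Returns True if the new config was applied, False if it was rejected
        and the previous config was left untouched.
        """
        try:
            candidate = json.loads(raw)
            validate(candidate)
        except ValueError:  # json.JSONDecodeError is a ValueError subclass
            return False
        with self._lock:
            self._active = candidate
        return True


holder = ConfigHolder({"port": 8080})
print(holder.reload('{"port": 9090}'))   # True: applied
print(holder.reload('{"port": 70000}'))  # False: rejected, old config kept
print(holder.get())                      # {'port': 9090}
```

Because the candidate is never visible to readers until it has passed validation, a failed reload leaves the known-good state in place.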

State management during a reload is also complex. Many applications maintain internal state—user sessions, in-flight transactions, cached data. A reload must carefully manage this existing state to avoid data loss or corruption. For example, a web server reloading its configuration must gracefully handle active connections, ensuring that ongoing requests are completed with the old configuration before new requests are served with the updated one. This often involves concepts like graceful shutdowns for old worker processes and spinning up new ones with the updated configuration, allowing active operations to drain out.

Finally, performance implications cannot be overlooked. The act of reloading itself, including parsing new formats, validating schemas, and applying changes, can consume CPU, memory, and I/O resources. If not optimized, a reload operation can introduce latency spikes or temporary service degradation, especially under heavy load. The goal is to make reloads as lightweight and non-disruptive as possible, which requires efficient parsing, minimal locking, and intelligent resource allocation.

In traditional software, we see these principles applied in various contexts. Web servers such as Nginx and Apache can reload their configuration files (e.g., nginx.conf) without stopping the main process: Nginx re-reads its configuration on SIGHUP, and Apache performs a graceful restart on SIGUSR1. This allows new virtual hosts, routing rules, or SSL certificates to be applied dynamically. Databases, while often requiring more complex procedures for schema changes, increasingly support dynamic parameter tuning or hot-swapping of certain components. Application servers, message queues, and even operating systems utilize various forms of reload mechanisms to maintain uptime and adapt to operational changes. Understanding these foundational principles is essential before we delve into the more specialized requirements of AI systems and their context management.
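A signal-driven reload of this kind can be sketched in a few lines. This is a minimal illustration, assuming a POSIX system and a single-threaded main loop; `on_sighup`, `install_handler`, and `maybe_reload` are names invented for the example, not part of any server's API.

```python
import signal

reload_requested = False


def on_sighup(signum, frame):
    """Signal handler: only record the request; do the real work elsewhere."""
    global reload_requested
    reload_requested = True


def install_handler():
    """Register the handler (POSIX only; must be called from the main thread)."""
    signal.signal(signal.SIGHUP, on_sighup)


def maybe_reload(load_config):
    """Call from the main loop; runs load_config once per pending request."""
    global reload_requested
    if not reload_requested:
        return None
    reload_requested = False
    return load_config()
```

Keeping the handler trivial and doing the actual parsing in the main loop avoids running complex code in signal context.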

Deconstructing the "Format Layer"

At the heart of any reload mechanism lies the "Format Layer." This layer is fundamentally concerned with how information—be it configuration data, state changes, or even model parameters—is structured, encoded, decoded, and validated. It's the bridge between a human-readable or machine-generated representation of change and the internal data structures that a running application understands and utilizes. Without an efficient and robust format layer, the most sophisticated reload logic would falter, unable to correctly interpret the desired updates. The format layer encompasses both serialization (converting internal data to a format for storage/transmission) and deserialization (converting the format back into internal data structures).
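To make the two directions concrete, the following sketch round-trips a small internal structure through JSON. The `CacheConfig` type and its fields are hypothetical and chosen only for illustration.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class CacheConfig:  # hypothetical internal structure
    ttl_seconds: int
    max_entries: int


def serialize(cfg: CacheConfig) -> str:
    """Internal structure -> wire/storage format."""
    return json.dumps(asdict(cfg))


def deserialize(raw: str) -> CacheConfig:
    """Wire/storage format -> internal structure."""
    data = json.loads(raw)
    return CacheConfig(ttl_seconds=int(data["ttl_seconds"]),
                       max_entries=int(data["max_entries"]))


original = CacheConfig(ttl_seconds=300, max_entries=1000)
restored = deserialize(serialize(original))
```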

The choice of data format is a critical design decision, influencing everything from performance and readability to schema evolution and tooling support. Developers have a plethora of options, each with its own set of advantages and disadvantages.

Common Data Formats:

- JSON (JavaScript Object Notation):
  - Description: A lightweight, human-readable, and widely adopted data interchange format. It's language-independent, using conventions familiar to programmers of C-family languages.
  - Advantages: Excellent human readability, simple structure, ubiquitous support in almost all programming languages and environments, good for web APIs and configuration files.
  - Disadvantages: Can be verbose for large datasets, lacks built-in schema definition (though external schemas like JSON Schema exist), less efficient for binary data, parsing can be slower than binary formats.
  - Typical Use Cases for Reloads: Configuration files, REST API payloads, small to medium-sized data updates.

- YAML (YAML Ain't Markup Language):
  - Description: A human-friendly data serialization standard for all programming languages. It is often used for configuration files and in applications where data is being stored or transmitted.
  - Advantages: Extremely human-readable (more so than JSON for complex structures), excellent for configuration files, supports comments, can represent hierarchical data effectively.
  - Disadvantages: Whitespace sensitivity can lead to parsing errors, less compact than JSON or binary formats, parsing can be complex.
  - Typical Use Cases for Reloads: Application configurations, deployment manifests (e.g., Kubernetes), complex rule sets.

- XML (Extensible Markup Language):
  - Description: A markup language that defines a set of rules for encoding documents in a format that is both human-readable and machine-readable. Predecessor to JSON in many web contexts.
  - Advantages: Robust schema definition (XSD), powerful query languages (XPath, XSLT), extensive tooling, very mature standard.
  - Disadvantages: Extremely verbose, parsing can be CPU-intensive, often considered cumbersome for modern web development compared to JSON.
  - Typical Use Cases for Reloads: Enterprise application configurations, SOAP web services, document-centric data.

- Protocol Buffers (Protobuf):
  - Description: A language-neutral, platform-neutral, extensible mechanism for serializing structured data. Developed by Google.
  - Advantages: Very compact binary format, extremely fast serialization/deserialization, strong schema definition (IDL), excellent for performance-critical inter-service communication.
  - Disadvantages: Not human-readable (requires schema to interpret), steeper learning curve, less flexible for ad-hoc data.
  - Typical Use Cases for Reloads: High-performance configuration updates in distributed systems, internal RPC communication, model parameter updates.

- Apache Avro:
  - Description: A data serialization system from the Hadoop ecosystem. It relies on schemas for both reading and writing data, making it well-suited for data warehousing and streaming.
  - Advantages: Compact binary format, strong schema evolution capabilities (backward and forward compatibility), good for long-term data storage and large data streams.
  - Disadvantages: Less human-readable, requires schema management, primarily used in big data ecosystems.
  - Typical Use Cases for Reloads: Schema-driven data updates, event streaming configurations.

- Custom Binary Formats:
  - Description: Hand-rolled, application-specific binary encodings optimized for very specific performance characteristics or data structures.
  - Advantages: Maximum compactness and performance potential, tailored precisely to application needs.
  - Disadvantages: Very high development and maintenance cost, no standard tooling, poor interoperability, extremely difficult for debugging and tracing.
  - Typical Use Cases for Reloads: Niche, hyper-optimized scenarios where every byte and microsecond counts, often in embedded systems or game development.

Here's a comparative table summarizing some key characteristics:

| Feature | JSON | YAML | XML | Protocol Buffers | Apache Avro | Custom Binary |
|---|---|---|---|---|---|---|
| Human Readability | High | Very High | Medium (verbose) | Low (binary) | Low (binary) | Very Low (binary) |
| Data Compactness | Medium | Medium | Low (verbose) | Very High | High | Very High |
| Parsing Performance | Good | Medium | Low | Excellent | Excellent | Excellent (if optimized) |
| Schema Support | External (JSON Schema) | External | Built-in (XSD, DTD) | Built-in (IDL) | Built-in (schemas written in JSON) | None (custom) |
| Schema Evolution | Manual/External | Manual/External | Robust | Excellent | Excellent | Manual/Complex |
| Language Support | Ubiquitous | Ubiquitous | Ubiquitous | Very High | High | Custom/Limited |
| Typical Use Cases | Web APIs, Configs | Configs, Deployment | Enterprise, Document | RPC, High-Perf Data | Big Data, Streaming | Niche, Embedded |

Criteria for Choosing a Format:

- Readability: How easily can humans understand and debug the format? (JSON, YAML excel here).

- Compactness: How much space does the serialized data occupy? (Protobuf, Avro, custom binary formats are superior).

- Performance: How fast can the data be serialized and deserialized? (Binary formats generally outperform text-based ones).

- Schema Evolution: How well does the format support changes to the data structure over time without breaking compatibility? (Protobuf, Avro, XML with XSD are strong).

- Tooling and Ecosystem: How mature is the support for parsing, validation, and manipulation in various programming languages and development tools? (JSON, XML have vast ecosystems).

- Complexity: How easy is it to implement and maintain? (JSON is generally simplest).
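The compactness criterion is easy to see with a toy record. The sketch below encodes the same three values as JSON text and as a fixed binary layout using Python's `struct` module; the record and its layout are assumptions for illustration and stand in for what a schema-driven binary format would do.

```python
import json
import struct

record = {"port": 8080, "timeout_ms": 2500, "retries": 3}

# Text encoding: field names travel with every record.
as_json = json.dumps(record).encode("utf-8")

# Hypothetical fixed layout, big-endian: unsigned 16-bit port,
# unsigned 32-bit timeout, unsigned 8-bit retries (7 bytes total).
as_binary = struct.pack(">HIB", record["port"], record["timeout_ms"], record["retries"])

print(len(as_json), len(as_binary))

# Decoding the binary form requires knowing the layout in advance.
port, timeout_ms, retries = struct.unpack(">HIB", as_binary)
```

The binary form is smaller precisely because the structure lives in the layout string rather than in the payload, which is also why it is unreadable without the schema.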

Role of Schema Definition and Validation:

Regardless of the chosen format, schema definition is a crucial aspect of the format layer. A schema formally defines the structure, data types, and constraints of the data. For instance, a configuration schema might specify that a port number must be an integer between 1 and 65535, or that a user ID must be a string matching a specific regular expression.

Schema validation is the process of checking whether incoming data conforms to its defined schema. This is an absolutely critical step in the reload process. Without robust validation, a malformed configuration file, an incorrect data update, or a malicious payload could lead to system instability, errors, or security vulnerabilities. Validation should occur early in the reload pipeline, ideally before any changes are applied to the running system. Tools like JSON Schema, XML Schema Definition (XSD), or Protocol Buffer's IDL are designed precisely for this purpose, providing a formal and enforceable contract for data integrity. The format layer, therefore, isn't just about encoding and decoding; it's about establishing and enforcing the structural integrity of the information that drives dynamic updates.
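The port and user ID constraints mentioned above can be checked with a small hand-written validator, shown here as a sketch using only the standard library. The exact user ID pattern is an assumption for the example; a real system would more likely express these rules in JSON Schema, XSD, or a Protobuf definition.

```python
import re

# Hypothetical rule: lowercase letter first, then 2 to 31 letters, digits, or underscores.
USER_ID_PATTERN = re.compile(r"[a-z][a-z0-9_]{2,31}")


def validate_config(cfg: dict) -> list[str]:
    """Return a list of human-readable errors; an empty list means valid."""
    errors = []
    port = cfg.get("port")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(port, int) or isinstance(port, bool):
        errors.append("port: expected integer")
    elif not 1 <= port <= 65535:
        errors.append("port: must be between 1 and 65535")
    user_id = cfg.get("user_id")
    if not isinstance(user_id, str) or not USER_ID_PATTERN.fullmatch(user_id):
        errors.append("user_id: does not match required pattern")
    return errors
```

Returning all errors at once, rather than raising on the first, gives the reload pipeline something useful to log before it rejects the update.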

The Intersection with AI and Large Language Models - Model Context Protocol (MCP)

The rise of artificial intelligence, particularly Large Language Models (LLMs) like GPT, Claude, and Llama, has introduced unprecedented challenges and complexities to system design. Unlike traditional applications that might primarily reload static configurations or structured data, AI models, especially those engaged in continuous interaction, require a dynamic and intelligent approach to managing their operational state and memory. This is where the concept of a "Model Context Protocol" (MCP) becomes not just relevant, but foundational.

AI Context Management: Why It's Crucial for LLMs

At its core, an LLM processes input (prompts) and generates output. However, for continuous, coherent, and personalized interactions—like a long-running conversation, a multi-step task, or an agent-based system—the model needs more than just the immediate input. It needs "context." This context is the accumulated knowledge, memory, persona, and constraints that guide the model's behavior over time.

Without effective context management, an LLM would suffer from:

- Amnesia: Forgetting previous turns in a conversation, leading to repetitive or illogical responses.

- Incoherence: Inability to maintain a consistent persona or follow ongoing instructions.

- Inefficiency: Redundant re-processing of information that should already be known.

- Limited Utility: Inability to perform complex, multi-turn tasks that require sequential reasoning.

Context, therefore, is the lifeblood of intelligent interaction for LLMs. It encompasses the entire conversational history, user preferences, system-level instructions (e.g., "act as a helpful assistant"), retrieved external knowledge (e.g., from a vector database), and even dynamic parameters related to the current task. Managing this context is a sophisticated challenge, often constrained by the LLM's finite "context window" (the maximum amount of input tokens it can process at once).

Explaining the Concept of Model Context Protocol (MCP)

A Model Context Protocol (MCP) is a standardized or well-defined framework that dictates how an AI model's operational context is structured, managed, stored, retrieved, and updated across interactions. It's an agreement between the application interacting with the AI and the AI service itself (or its surrounding infrastructure) on how to effectively communicate and maintain state. The goal of an MCP is to abstract away the underlying complexities of tokenization, context window management, and history compression, presenting a coherent and usable interface for context handling.

While there isn't one universal, formally ratified "Model Context Protocol" standard across all AI vendors (each typically has its own conventions), the underlying principles are broadly similar. They define:

1. Context Structure: How conversational turns, system instructions, and external data are organized (e.g., an array of message objects, each with a role and content).

2. Context Update Mechanisms: How new information is appended, modified, or retrieved.

3. Context Lifecycle: How context is initiated, maintained, and potentially purged.

4. Serialization/Deserialization: How the structured context is converted into a format consumable by the model and vice-versa.

Deep Dive into Claude MCP and Similar Strategies

While specific implementation details for proprietary models like Claude are often not fully public, we can infer and discuss the architectural principles that any sophisticated Claude MCP (or similar Model Context Protocol) would likely employ to manage its conversational context. Anthropic's Claude, known for its ability to handle long and complex conversations, relies heavily on robust context management.

A hypothetical Claude MCP would likely involve:

- Message Array Structure: The most common approach. Context is represented as an ordered list of messages, where each message has a `role` (e.g., 'user', 'assistant', 'system') and `content`. This mirrors the conversational turn-taking.

```json
[
  {"role": "system", "content": "You are a helpful AI assistant."},
  {"role": "user", "content": "Hi there, can you help me with a Python problem?"},
  {"role": "assistant", "content": "Of course! What's your problem?"},
  {"role": "user", "content": "I need to reverse a string efficiently."}
]
```

- System Prompts: A dedicated 'system' role often exists within the MCP to inject overarching instructions, persona definitions, or guardrails that apply throughout the interaction. These are typically the first messages in the context.

- Context Window Management: Crucial for managing the LLM's token limits. When the context approaches the maximum token limit, the MCP needs strategies to shorten it. This could involve:

- Truncation: Simply dropping the oldest messages.

- Summarization: Using an LLM or another mechanism to summarize older parts of the conversation.

- Retrieval-Augmented Generation (RAG): Storing full conversation history externally (e.g., in a vector database) and retrieving only the most relevant snippets based on the current query, then "reloading" these into the context.

- Recap Tokens: Some models might have special tokens or mechanisms to represent a compressed summary of past interactions, effectively reloading a condensed context.

- External Knowledge Injection: The Claude MCP would need a way to seamlessly integrate information from external databases, APIs, or tools. This might involve inserting "tool use" prompts, search results, or data snippets into the context as if they were part of the conversation or system instructions.

- Idempotent Updates: When parts of the context are updated (e.g., a system instruction is refined), the MCP should ideally handle these updates idempotently, ensuring that repeated application doesn't lead to inconsistencies.
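Of the context window strategies listed above, truncation is the simplest to sketch. The version below keeps system messages and then as many of the newest other messages as fit a token budget. The `estimate_tokens` helper is a crude stand-in (roughly four characters per token) and is an assumption of this example; a real implementation would use the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: roughly 4 characters per token."""
    return max(1, len(text) // 4)


def truncate_context(messages: list[dict], max_tokens: int) -> list[dict]:
    """Keep system messages, then the newest other messages that fit the budget."""
    system = [m for m in messages if m["role"] == "system"]
    others = [m for m in messages if m["role"] != "system"]
    budget = max_tokens - sum(estimate_tokens(m["content"]) for m in system)
    kept = []
    for msg in reversed(others):  # walk from newest to oldest
        cost = estimate_tokens(msg["content"])
        if cost > budget:
            break  # stop at the first message that no longer fits
        kept.append(msg)
        budget -= cost
    return system + list(reversed(kept))  # restore chronological order
```

Dropping from the oldest end preserves the most recent turns, at the cost of losing early details that summarization or retrieval would otherwise retain.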

How Context is "Reloaded" or Updated within an MCP Framework:

The concept of "reloading" in an MCP context is more nuanced than a typical system configuration reload. It refers to the dynamic process of constructing and updating the input payload (the "context") sent to the LLM for each inference call. This involves:

- Appending New Turns: The most common form of reload. After a user or assistant message, the new message is appended to the existing context history. This is a continuous reload, building the conversation incrementally.

- Retrieving Historical Interactions: When a conversation resumes after a break, or when a user explicitly asks about past events, the MCP might need to retrieve stored historical context from a persistent store (e.g., a database, cache) and "reload" it into the active session. This often involves fetching structured message arrays.

- Injecting External Knowledge: A significant form of context reload. If a user query requires up-to-date information not present in the model's training data, the application uses RAG to query external knowledge bases. The retrieved documents or snippets are then formatted according to the MCP and "reloaded" into the model's input context for the current turn. This is a dynamic, on-demand reload.

- Managing System Prompts and User-Defined Constraints: System-level instructions or user preferences can be modified during a session. For instance, changing the assistant's persona from "friendly" to "formal." The MCP needs to facilitate "reloading" these updated instructions into the beginning of the context, ensuring they take precedence in subsequent interactions. This might involve replacing specific elements in the context array.

- Dynamic Parameter Updates: Beyond conversational context, an MCP might also encompass the "reloading" of dynamic parameters that influence the model's behavior, such as temperature, top-p, or penalty settings, which could change based on the user's interaction mode (e.g., creative vs. factual).
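Three of these reload operations can be sketched as small pure functions over a message array. This is one possible convention rather than any vendor's actual protocol: the function names, the "Reference material" wording, and the choice to prefix retrieved snippets onto the latest user message are all assumptions for illustration.

```python
def append_turn(context: list[dict], role: str, content: str) -> list[dict]:
    """Appending a new turn: returns a new list, leaving the old context intact."""
    return context + [{"role": role, "content": content}]


def set_system_prompt(context: list[dict], prompt: str) -> list[dict]:
    """Replace any existing system message with the new one, placed first.

    Applying the same prompt twice yields the same context (idempotent).
    """
    rest = [m for m in context if m["role"] != "system"]
    return [{"role": "system", "content": prompt}] + rest


def inject_knowledge(context: list[dict], snippets: list[str]) -> list[dict]:
    """Prefix retrieved snippets onto the latest user message."""
    if not snippets or not context or context[-1]["role"] != "user":
        return context
    last = context[-1]
    enriched = ("Reference material:\n" + "\n".join(snippets)
                + "\n\nQuestion: " + last["content"])
    return context[:-1] + [{"role": "user", "content": enriched}]
```

Returning new lists instead of mutating in place makes each reload step easy to log and compare, which matters once these operations need to be traced.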

The "Format Layer" within MCP:

The Format Layer is absolutely integral to the Model Context Protocol. It defines precisely how the rich, structured context data (message arrays, metadata, external snippets) is serialized into a format that the LLM API expects and deserialized from it.

- Standardized API Payloads: Most LLM APIs (including those for Claude) expect context in a specific JSON or similar structured format. This format is the core of the MCP's format layer. For example, a common format might be a JSON array of objects, where each object represents a message with `role` and `content` keys.

- Tokenization: Beneath the surface of the human-readable format, the LLM further processes this into tokens. The format layer must implicitly account for how its chosen serialization maps to the model's tokenizer. Different structures or special characters can influence token count and, consequently, context window usage.

- Special Markers and Delimiters: Some MCPs might utilize specific markers or delimiters within the raw text string (before tokenization) to logically separate different parts of the context (e.g., system instructions from conversation history, or external knowledge from user query). This is a form of application-level formatting within the broader format layer.

- Binary Representations (Less Common for Public APIs): While less common for direct public API calls to LLMs, internal model pipelines or highly optimized inference engines might use more compact binary formats (like Protobuf) to represent context for faster transmission and processing between microservices within an AI infrastructure. This would be a deeper, internal part of the MCP's format layer.

In essence, the MCP formalizes the contract for context management, and its format layer provides the concrete syntax and semantics for how that context is represented and exchanged. This interplay is critical for building robust, scalable, and intelligent AI applications that can maintain long-running, coherent interactions with users.
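A minimal sketch of such a format layer for message arrays might validate the structure and then serialize it to compact JSON. The allowed roles and the strict two-key shape are assumptions taken from the generic example format discussed here, not from any specific vendor's API.

```python
import json

ALLOWED_ROLES = {"system", "user", "assistant"}  # assumed role set


def to_payload(messages: list[dict]) -> str:
    """Validate the message array and serialize it as compact JSON."""
    for i, msg in enumerate(messages):
        if set(msg) != {"role", "content"}:
            raise ValueError(f"message {i}: expected exactly 'role' and 'content' keys")
        if msg["role"] not in ALLOWED_ROLES:
            raise ValueError(f"message {i}: unknown role {msg['role']!r}")
        if not isinstance(msg["content"], str):
            raise ValueError(f"message {i}: content must be a string")
    return json.dumps(messages, separators=(",", ":"), ensure_ascii=False)


def from_payload(raw: str) -> list[dict]:
    """Deserialize a payload produced by to_payload."""
    return json.loads(raw)
```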

Tracing the Reload Format Layer - Why and How

The dynamism offered by reload mechanisms, particularly those managing the complex contexts of AI models, comes with an inherent need for robust observability. Without the ability to "trace" the operations of the Reload Format Layer, understanding system behavior, diagnosing issues, and optimizing performance would be an impossible task. Tracing provides the visibility needed to track the flow of information, from its initial format to its final application, ensuring that dynamic updates occur reliably and efficiently.

Why Trace the Reload Format Layer?

- Debugging and Troubleshooting: This is the most immediate and obvious benefit. When a system update or context reload fails, or behaves unexpectedly, tracing allows developers to pinpoint the exact stage where the error occurred. Was the format invalid? Was there a schema mismatch? Did the parsing fail? Was the data corrupted during transmission? Without tracing, debugging issues related to dynamic updates can be like searching for a needle in a haystack, especially in distributed systems or complex AI pipelines where context flows across multiple services. For an MCP, tracing helps identify why an LLM might be "hallucinating" or losing context – perhaps due to incorrect context construction, truncation errors, or malformed data being passed.

- Performance Optimization: The processes of deserialization, validation, and application of updates can be resource-intensive. Tracing allows engineers to identify performance bottlenecks within the format layer. Is a particular JSON parser too slow for large payloads? Is schema validation adding significant latency? Is the context retrieval mechanism for an MCP taking too long, delaying the LLM's response? By observing the time taken at each step of the reload, opportunities for optimization (e.g., using a faster parser, caching validated schemas, optimizing context compression) become apparent.

- Ensuring Data Integrity and Consistency: Tracing helps verify that the data being reloaded is indeed correct and that its application leads to a consistent state. By logging the incoming format, the parsed internal representation, and the final state after the reload, developers can build confidence in the integrity of their dynamic updates. In an MCP, this ensures that the conversational history or system instructions are accurately maintained and presented to the model.

- Security Auditing: Malformed data or injection attacks can sometimes exploit vulnerabilities in the format layer. Tracing can log attempts to pass invalid formats or data that violates schema constraints, providing an audit trail for security incidents and helping to identify potential attack vectors. Monitoring these traces can be crucial for detecting unauthorized attempts to manipulate an AI's context.

- Reliability and System Stability: By continuously tracing reload operations, systems can be monitored for anomalies. Frequent reload failures, performance degradation during reloads, or unusual patterns in the format layer processing can indicate underlying issues that need proactive attention before they lead to service outages. For complex Claude MCP implementations, this is vital for ensuring consistent model behavior and user experience.

- Observability and Understanding System Behavior: Beyond specific issues, tracing provides invaluable insights into how a dynamic system operates. It helps understand the frequency of reloads, the typical size and complexity of the formats processed, and the overall health of the update mechanism. This holistic view is crucial for system architects and operators.

How to Trace the Reload Format Layer?

Effective tracing requires a multi-faceted approach, combining various observability tools and practices:

- Structured Logging:
  - Placement: Insert log statements at critical junctures within the reload pipeline:
    - Upon receipt of the new format (log raw payload snippet, format type).
    - Before and after deserialization/parsing (log parse duration, success/failure).
    - Before and after schema validation (log validation result, specific errors).
    - Before and after applying changes to the system's internal state (log old vs. new state, application duration).
    - For an MCP, log the constructed context before sending to the LLM, and the raw response from the LLM.
  - Content: Logs should be structured (e.g., JSON format) to be easily parsable by log aggregators (e.g., ELK Stack, Splunk, Grafana Loki). Include relevant metadata like timestamp, service name, request ID, user ID (for MCP), and severity level.
  - Contextual Information: For complex reload operations, including correlation IDs or trace IDs that link related log entries across different components is crucial.

- Metrics and Monitoring:
  - Key Metrics:
    - `reload_total_count`: Total number of reload attempts.
    - `reload_success_count`, `reload_failure_count`: Breakdown of success/failure.
    - `reload_duration_seconds_bucket`: Histogram of total reload duration.
    - `format_parse_duration_seconds_bucket`: Latency of deserialization.
    - `schema_validation_duration_seconds_bucket`: Latency of schema validation.
    - `context_size_tokens_bucket`: For MCP, monitor the size of the input context in tokens to identify potential context window issues.
    - `context_retrieval_duration_seconds_bucket`: For RAG-based MCPs, track the time taken to retrieve external context.
  - Tools: Use monitoring systems like Prometheus, Grafana, Datadog to collect, visualize, and alert on these metrics. Trends in these metrics can quickly highlight performance regressions or increasing error rates.

- Distributed Tracing:
  - Concept: For microservices architectures, a single reload request or context update might span multiple services (e.g., a configuration service fetches a new format, passes it to a validation service, then to an application service). Distributed tracing (e.g., using OpenTelemetry, Jaeger, Zipkin) allows you to follow the entire lifecycle of a request across service boundaries.
  - Implementation: Instrument code to generate spans for key operations within the format layer (parsing, validation, application). Each span should have attributes detailing the operation, duration, and any relevant data (e.g., format type, validation result). This provides an end-to-end view of the reload process, identifying latency bottlenecks between services. For an MCP, this would trace the journey of the contextual payload from the user application, through an API gateway, to the LLM backend, and back.

- Profiling:
  - Purpose: When performance metrics indicate a bottleneck within the format layer, profiling tools (e.g., pprof for Go, JProfiler for Java, cProfile for Python) can identify the exact lines of code consuming the most CPU or memory during deserialization, validation, or context construction. This granular insight is critical for low-level optimization.

- Auditing and Versioning:
  - Audit Logs: Maintain a history of all applied changes, including who made them (if applicable), when, and the exact content of the new format. This provides a crucial paper trail for forensics and compliance.
  - Version Control for Formats: Store configuration files, schemas, and even sample context templates in a version control system (like Git). This allows for easy rollback and tracking of changes to the format itself.

By combining these tracing techniques, organizations can gain unparalleled visibility into their Reload Format Layer, transforming it from a potential black box into a transparent and controllable component of their dynamic software systems, especially vital for managing the sophisticated demands of Model Context Protocol implementations within AI.

Advanced Considerations and Best Practices

Building a truly robust and resilient Reload Format Layer, especially one capable of handling the demands of modern AI systems and protocols like MCP, requires moving beyond the basics. This section delves into advanced considerations and best practices that can significantly enhance the reliability, security, and performance of dynamic update mechanisms.

Schema Evolution: Handling Backward and Forward Compatibility

One of the most challenging aspects of a format layer is managing schema evolution. As applications grow and requirements change, the structure of data and configurations will inevitably evolve. A new version of the system might introduce new fields, remove old ones, or change data types. The format layer must handle these changes gracefully, ensuring that older versions of the system can still process newer formats (forward compatibility) and newer versions can still process older formats (backward compatibility), without breaking existing deployments.

- Backward Compatibility (Newer reads Older): The most common requirement. Newer versions of the software must be able to parse and utilize data created by older versions. This usually means:

- Adding new optional fields: New fields should always be optional. Older systems will simply ignore them. Newer systems can use default values if the field is missing from older data.

- Not removing mandatory fields: If a field is mandatory, it cannot be removed without breaking backward compatibility. If it must be removed, it should first be made optional and deprecated for a period.

- Not changing field semantics or types drastically: Minor type changes (e.g., int to long) might be manageable, but drastic changes (e.g., string to complex object) will likely break compatibility.

- Forward Compatibility (Older reads Newer): More difficult to achieve. Older versions of the software must be able to process data created by newer versions, even if they don't fully understand all the new fields. This is crucial for seamless rolling upgrades where both old and new versions might coexist. Techniques include:

- "Unknown field" handling: Parsers should be designed to ignore unknown fields rather than throwing errors. This allows older systems to process the parts of the data they understand.

- Graceful degradation: If a critical new field is introduced, older systems might not be able to fully function with the new data, but they should at least not crash and ideally provide a degraded but operational service.

- Versioned Schemas: Explicitly versioning schemas (e.g., /api/v1/config, /api/v2/config, or adding a schema_version field) allows systems to know which parser to use. This provides a clear contract and helps manage transitions. Tools like Protocol Buffers and Avro excel at built-in schema evolution support, but even with JSON, careful planning and tooling (like JSON Schema with oneOf or allOf) can help.
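The compatibility rules above can be sketched as a tolerant parser. In this example the `ReloadConfig` fields, the default timeout, and the convention that a missing `schema_version` means version 1 are assumptions chosen for illustration, not part of any particular protocol.

```python
import json
from dataclasses import dataclass, fields


@dataclass
class ReloadConfig:
    schema_version: int
    endpoint: str
    # Hypothetical field added in a later schema version: optional, with a default.
    timeout_seconds: float = 30.0


def parse_config(raw):
    """Parse a config document, tolerating both older and newer schemas."""
    data = json.loads(raw)
    known = {f.name for f in fields(ReloadConfig)}
    # Forward compatibility: ignore fields this version does not understand.
    unknown = sorted(set(data) - known)
    kept = {k: v for k, v in data.items() if k in known}
    # Backward compatibility: assume the oldest documents carried no version field.
    kept.setdefault("schema_version", 1)
    return ReloadConfig(**kept), unknown


old_doc = '{"endpoint": "https://example.invalid/v1"}'
new_doc = (
    '{"schema_version": 3, "endpoint": "https://example.invalid/v3", '
    '"timeout_seconds": 5, "retry_policy": "exponential"}'
)

old_config, _ = parse_config(old_doc)
new_config, ignored = parse_config(new_doc)
print(old_config, new_config, ignored)
```

Returning the list of ignored fields, rather than dropping them silently, gives the tracing layer something concrete to log when an older reader meets a newer format.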

Version Control: Managing Different Formats or Protocol Versions

Just as source code is version-controlled, so too should be configuration files, schemas, and even the definitions of Model Context Protocols.

- Centralized Repository: Store all format definitions (e.g., JSON Schema files, Protobuf .proto files, YAML templates) in a centralized version control system (Git is ideal). This provides a single source of truth and a complete history of changes.

- Branching and Merging: Use standard branching strategies to manage changes. Feature branches for new format versions, release branches for stable configurations, etc.

- Automated Generation: If schemas are derived from code (e.g., Go structs to JSON Schema), automate this generation process and commit the generated schemas.

- Rollback Capability: Version control enables easy rollback to previous, known-good configurations or schema definitions in case of issues with a new reload.

Error Handling and Rollbacks: Strategies for Failed Reloads

Despite best efforts, reloads can fail. A robust system must anticipate and handle these failures gracefully.

- Pre-flight Checks: Before attempting to apply a reload, perform as many checks as possible: schema validation, semantic validation (e.g., checking IP ranges, port availability), syntax validation. If any check fails, abort the reload immediately.

- Transactional Updates: Where possible, treat a reload as a transaction. All changes are either applied successfully, or none are. This might involve applying changes to a temporary staging area and then atomically switching to it, or using a two-phase commit strategy.

- Automated Rollbacks: If a reload fails at a critical stage (e.g., after partial application), the system should automatically attempt to revert to the previous known-good state. This requires keeping a copy of the old configuration/state.

- Manual Intervention and Alarms: For catastrophic failures, clear alarms should be raised, informing operations teams for manual intervention. The system should provide clear diagnostics and rollback instructions.

- Circuit Breakers and Rate Limiting: Implement circuit breakers to prevent continuous retries of failed reloads, which could exacerbate problems. Rate limit reload attempts to prevent overloading the system.
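A minimal sketch of the pre-flight check, staged swap, and automated rollback pattern follows. The `ConfigHolder` class, the port-range validation rule, and the `apply_hook` callback are hypothetical; real systems would also need locking if reloads can run concurrently.

```python
import copy


class ConfigHolder:
    """Holds the active configuration and the last known-good copy."""

    def __init__(self, initial):
        self._active = initial
        self._previous = None

    @property
    def active(self):
        return self._active

    def reload(self, candidate, validate, apply_hook):
        # Pre-flight: reject the candidate before touching live state.
        validate(candidate)
        staged = copy.deepcopy(candidate)
        # Swap in the staged copy while remembering the known-good state.
        self._previous, self._active = self._active, staged
        try:
            apply_hook(staged)
        except Exception:
            # Automated rollback to the last known-good configuration.
            self._active, self._previous = self._previous, None
            raise


def validate(cfg):
    if not 1 <= cfg.get("port", 0) <= 65535:
        raise ValueError("port out of range")


def failing_hook(cfg):
    raise RuntimeError("downstream rejected the new configuration")


holder = ConfigHolder({"port": 8080})
holder.reload({"port": 9090}, validate, lambda cfg: None)

outcomes = []
attempts = [({"port": 70000}, lambda cfg: None), ({"port": 9191}, failing_hook)]
for candidate, hook in attempts:
    try:
        holder.reload(candidate, validate, hook)
    except (ValueError, RuntimeError) as exc:
        outcomes.append(type(exc).__name__)

print(holder.active, outcomes)
```

The first failed attempt never reaches live state because validation aborts it; the second is applied and then reverted, which is the case where keeping a copy of the previous state matters.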

Security: Data Sanitization, Encryption for Sensitive Context

The format layer often handles sensitive information, especially in AI's Model Context Protocol (MCP) where user conversations or proprietary data might be present.

- Data Sanitization and Validation: Beyond schema validation, perform content validation and sanitization to prevent injection attacks (e.g., prompt injection in LLMs), cross-site scripting (XSS), or SQL injection. Ensure all inputs are properly escaped and validated against expected patterns.

- Encryption In-Transit and At-Rest: For sensitive reload data or AI context, ensure that data is encrypted both when it's being transmitted (e.g., using TLS/SSL for API calls) and when it's stored (e.g., encrypted configuration files, encrypted context history in databases).

- Access Control: Implement strict access control mechanisms to dictate who can initiate reloads, who can modify format definitions, and who can access sensitive context data. Role-based access control (RBAC) is essential.

- Auditing: Maintain detailed audit logs of all reload attempts, including the initiator, timestamp, and outcome, for accountability and forensic analysis.

Performance Tuning: Batching, Compression, Efficient Parsers, Caching

Optimizing the performance of the reload format layer is crucial for maintaining system responsiveness.

- Efficient Parsers: Choose and use the fastest available parsers for your chosen format. For example, in Python, orjson is faster than the standard json library for JSON parsing. For binary formats, native language bindings are typically optimized.

- Compression: For large payloads (e.g., complex AI model weights, extensive context histories), use compression algorithms (Gzip, Zstd) during transmission to reduce network overhead and potentially speed up overall transfer times.

- Batching: If multiple small updates need to be applied, consider batching them into a single, larger reload operation to reduce overhead associated with individual transactions.

- Caching: Cache parsed and validated schemas to avoid redundant processing. If context data is frequently retrieved, implement caching layers (e.g., Redis) to serve it quickly, reducing the load on primary data stores.

- Asynchronous Processing: For reloads that don't require immediate, synchronous application, offload them to a background worker or message queue to avoid blocking critical request paths.

Idempotency: Ensuring Reloads Can Be Safely Retried

An idempotent operation is one that can be applied multiple times without changing the result beyond the initial application. This is a powerful property for reload mechanisms.

- Atomic Updates: Design reload logic such that reapplying the same configuration or context update has no side effects. For example, if you're setting a value, setting it again with the same value should not cause an error or unexpected behavior.

- Conditional Updates: Implement checks to only apply changes if the target state is different from the desired state. For instance, "only update this configuration field if its current value is not already X."

- Version Numbers/Hashes: Use version numbers or content hashes (ETag) on configuration objects. A reload only proceeds if the incoming version is newer than the current one, or if the content hash differs. This prevents applying stale or identical updates.
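One possible reading of the version and hash checks is sketched below. The `IdempotentReloader` class and its exact policy (skip anything older than the current version, skip anything whose canonical content hash is unchanged) are assumptions for this example; other policies are equally valid.

```python
import hashlib
import json


def content_hash(config):
    # Canonical JSON so that key order does not change the hash.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class IdempotentReloader:
    def __init__(self):
        self.current_version = 0
        self.current_hash = None
        self.apply_count = 0

    def reload(self, version, config):
        """Return True if the update was applied, False if it was skipped."""
        digest = content_hash(config)
        if version < self.current_version:
            return False  # stale update
        if digest == self.current_hash:
            return False  # identical content; re-applying would be a no-op
        self.current_version, self.current_hash = version, digest
        self.apply_count += 1
        return True


reloader = IdempotentReloader()
results = [
    reloader.reload(1, {"a": 1, "b": 2}),  # first application
    reloader.reload(1, {"b": 2, "a": 1}),  # same content, different key order
    reloader.reload(2, {"a": 1, "b": 3}),  # newer version with new content
    reloader.reload(1, {"a": 0}),          # stale version
]
print(results, reloader.apply_count)
```

Because a retried delivery of the same payload is detected and skipped, callers can retry a reload after a timeout without worrying about applying it twice.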

Monitoring and Alerting: Setting Up Alerts for Critical Reload Failures or Performance Degradation

Proactive monitoring is non-negotiable for dynamic systems.

- Dashboards: Create comprehensive dashboards (using Grafana, Kibana, Datadog) that visualize key metrics: reload success rates, latency percentiles (p95, p99), context window usage for MCP, error rates per component.

- Alerting Thresholds: Set up alerts for critical conditions:

- Sustained reload failure rates above a certain threshold.

- Reload latency exceeding acceptable limits.

- Context window approaching its maximum for an LLM (indicating potential truncation or performance degradation).

- Frequent schema validation errors.

- Logging Aggregation: Ensure all logs related to the reload format layer are sent to a centralized logging system (ELK, Splunk, Loki) for easy searching and analysis.
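To make the "sustained failure rate" alert concrete, here is a small sliding-window sketch. The `FailureRateAlert` class, the window size, the threshold, and the minimum sample count are illustrative assumptions; in practice this rule would usually live in the alerting system rather than in application code.

```python
from collections import deque


class FailureRateAlert:
    """Fires when the failure rate over the last N reloads exceeds a threshold."""

    def __init__(self, window=20, threshold=0.25, min_samples=5):
        self.outcomes = deque(maxlen=window)  # True = success, False = failure
        self.threshold = threshold
        self.min_samples = min_samples

    def failure_rate(self):
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def should_alert(self):
        # Require a minimum number of samples so one early failure does not page anyone.
        enough = len(self.outcomes) >= self.min_samples
        return enough and self.failure_rate() > self.threshold

    def record(self, success):
        self.outcomes.append(bool(success))
        return self.should_alert()


alert = FailureRateAlert(window=10, threshold=0.3, min_samples=5)
fired = [alert.record(ok) for ok in [True, True, False, True, False, False]]
print(fired)
```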

Testing: Unit, Integration, and Load Testing for Reload Mechanisms

Testing dynamic updates is inherently more complex than testing static systems, but it's absolutely essential.

- Unit Tests: Test individual components of the format layer: parsers, validators, schema evolution logic, context constructors (for MCP).

- Integration Tests: Test the entire reload pipeline end-to-end, involving all services. Simulate various scenarios: valid updates, invalid formats, corrupted data, partial updates, high load.

- Load Testing: Subject the reload mechanism to high concurrency and large payloads to identify performance bottlenecks and resource contention. This is particularly important for Model Context Protocol implementations that might handle numerous concurrent context updates.

- Chaos Engineering: Periodically introduce failures (e.g., network partitions, service crashes) during reloads in a controlled environment to verify the system's resilience and rollback capabilities.

Managing the intricacies of various AI models, their specific context protocols, and the associated reload format layers can become a significant operational overhead. This is where robust API management platforms become indispensable. For instance, an open-source AI gateway and API management platform like APIPark can significantly streamline these processes. By offering a unified API format for AI invocation, it abstracts away the complexities of diverse underlying AI models and their respective context protocols, ensuring that prompt changes or model updates don't necessitate widespread application modifications. This unified approach can inherently simplify the "format layer" challenges across a heterogeneous AI landscape. Furthermore, features such as its detailed API call logging and powerful data analysis tools are invaluable for tracing reload format layer operations, identifying performance bottlenecks, and maintaining system stability across potentially hundreds of integrated AI models. APIPark’s ability to standardize, secure, and monitor API calls makes it an ideal complement to complex AI architectures, especially when dealing with the dynamic nature of Model Context Protocol interactions.

Case Study: Tracing a Reloaded Context in an AI Agent System

To solidify our understanding, let's consider a conceptual case study: an advanced AI agent system designed to assist users with complex multi-step tasks, such as travel planning or financial analysis. This system relies heavily on a sophisticated Model Context Protocol (MCP) to maintain a coherent, long-running dialogue, integrate external tool calls, and adapt its persona based on user feedback. The "reload format layer" here is crucial for how the agent's evolving understanding and memory are constructed and presented to the underlying LLM (let's imagine a Claude MCP-like implementation).

Scenario: A user is interacting with the AI agent to plan a complex international trip. The conversation has spanned several hours, involved multiple queries for flights, hotels, and local attractions, and even integrated real-time API calls to booking services.

The "Reload" Event: At a critical juncture, the user decides to change a fundamental constraint: "Actually, I want to travel sustainably, so please prioritize options with lower carbon footprints, even if they cost a bit more." This is a "reload" event for the agent's context. It's not a simple append; it's a modification to the underlying system prompt or a re-weighting of preferences that impacts all future recommendations. Simultaneously, the agent might automatically reload external knowledge by querying a sustainability database using an internal API call.

How the Reload Format Layer and Tracing Come into Play:

- Initial User Input & Context Construction (MCP):

- The user's new constraint ("prioritize options with lower carbon footprints") arrives.

- The MCP (e.g., a service responsible for building the LLM prompt) identifies this as a preference update rather than a simple conversational turn.

- It retrieves the existing context, which includes:

- The conversational history (array of user/assistant messages).

- The current system persona ("You are a helpful travel agent").

- Previous constraints ("budget under $5000").

- Results from prior API calls (flight availability, hotel prices).

- Tracing Point 1: Incoming Format Logging. The gateway (or the first service in the chain) logs the raw user input and any immediate metadata (e.g., request_id: ABC123, user_id: user-X).

- Context Modification & System Prompt Update (Format Layer):

- The MCP service decides to inject the new constraint by modifying the system prompt within the context, perhaps changing it to: "You are a helpful travel agent, prioritizing sustainable options." It also needs to append the user's latest message to the conversation history.

- Simultaneously, an internal mechanism triggers an external knowledge lookup for "sustainable travel options." The results from this lookup (e.g., a list of eco-friendly airlines, green hotel certifications) are formatted into a structured JSON object according to a predefined schema.

- Tracing Point 2: Context Pre-processing & Schema Validation. Logs are generated by the MCP service:

- event: context_modification_start, request_id: ABC123, action: update_system_prompt, new_prompt: "...".

- event: external_knowledge_fetch_start, request_id: ABC123, query: "sustainable travel options".

- Upon receiving the external knowledge JSON, event: external_knowledge_parse, request_id: ABC123, format_type: JSON, duration: 50ms.

- event: external_knowledge_schema_validation, request_id: ABC123, result: success.

- If validation failed, result: failure, error: "missing 'carbon_footprint_data' field". This would immediately alert to a problem with the external data source or its schema.

- Final Context Assembly & Tokenization (MCP's Format Layer):

- The MCP service assembles the complete context, which now includes the updated system prompt, the original conversation history, the newly injected external knowledge, and the latest user query. This entire structure is then serialized into the Claude MCP-compatible JSON format expected by the LLM API.

- This JSON payload is then tokenized to ensure it fits within Claude's context window. If it exceeds the limit, the MCP's context window management logic (e.g., summarization of older turns) kicks in.

- Tracing Point 3: Final Context Serialization & Tokenization. Logs:

- event: final_context_assembly, request_id: ABC123, payload_size_bytes: 8192, token_count: 3500.

- event: context_tokenization_duration, request_id: ABC123, duration: 20ms.

- If truncation occurs: event: context_truncation_applied, request_id: ABC123, strategy: summarize_oldest_3_turns, original_tokens: 4500, new_tokens: 3500.

- LLM Invocation & Response:

- The API gateway forwards the formatted and tokenized context to the Claude LLM.

- Tracing Point 4: LLM API Call. Distributed tracing would show a span for the API call, its duration, and the response status.

- event: llm_api_call, request_id: ABC123, llm_model: claude-3-opus, api_latency: 500ms.
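The context assembly and truncation step, together with its trace events, can be sketched as follows. Several things here are simplifications and assumptions: `count_tokens` splits on whitespace rather than using a real, model-specific tokenizer; the truncation strategy drops the oldest turns instead of summarizing them as the case study describes; and the function name, message shape, and token budget are hypothetical.

```python
import json


def count_tokens(text):
    # Crude stand-in: real tokenizers are model-specific.
    return len(text.split())


def assemble_context(system_prompt, history, max_tokens, request_id, log):
    """Build the final context, dropping the oldest turns if over budget."""
    messages = list(history)
    total = count_tokens(system_prompt) + sum(count_tokens(m["content"]) for m in messages)
    original = total
    # The system prompt and the latest message are never dropped.
    while total > max_tokens and len(messages) > 1:
        dropped = messages.pop(0)
        total -= count_tokens(dropped["content"])
    if total != original:
        log.append({
            "event": "context_truncation_applied",
            "request_id": request_id,
            "strategy": "drop_oldest_turns",
            "original_tokens": original,
            "new_tokens": total,
        })
    log.append({"event": "final_context_assembly", "request_id": request_id, "token_count": total})
    return {"system": system_prompt, "messages": messages}


events = []
history = [
    {"role": "user", "content": "Find flights to Lisbon in May"},
    {"role": "assistant", "content": "Here are three options under your budget"},
    {"role": "user", "content": "Prioritize options with lower carbon footprints"},
]
context = assemble_context(
    "You are a helpful travel agent, prioritizing sustainable options.",
    history,
    max_tokens=24,
    request_id="ABC123",
    log=events,
)
for event in events:
    print(json.dumps(event))
```

Logging both the original and the post-truncation token counts under the same request ID is what later lets an engineer tell whether the sustainability instruction survived the trimming.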

Debugging with Tracing:

Imagine the user complains: "The agent is still recommending gas-guzzling flights! It completely ignored my sustainable travel preference."

Without tracing, this would be a nightmare to debug. With tracing:

- Check Tracing Point 1: Did the user's input even arrive correctly?

- Check Tracing Point 2: Was the system prompt actually updated? Was the external knowledge retrieved successfully, and did its schema validate? If there was a validation error at this point, the external knowledge might not have been included, explaining the irrelevant recommendations.

- Check Tracing Point 3: Was the final constructed context correct before sending to the LLM? Did tokenization or truncation accidentally remove the critical sustainability instruction or the external knowledge? If the context_truncation_applied event showed a problematic strategy, that would be the culprit.

- Check Tracing Point 4: Did the LLM actually receive the full, correct payload, or was there an issue during transmission? What was its response?

By following the request_id: ABC123 across all these tracing points, an engineer can quickly pinpoint precisely where the "reload" of the user's preference and external knowledge failed within the complex Model Context Protocol pipeline, turning a vague bug report into an actionable diagnostic. This illustrates the profound value of tracing in maintaining the integrity and responsiveness of dynamic AI systems.

Conclusion

The Reload Format Layer, though often operating in the background, stands as a cornerstone of modern, dynamic software systems. Its ability to facilitate seamless updates, from simple configuration changes to the intricate context management required by advanced AI models like those employing a Model Context Protocol (MCP), is indispensable for achieving high availability, rapid iteration, and adaptive intelligence. We have explored the fundamental reasons for its existence, dissected the various data formats that comprise it, and delved into its critical role within the evolving landscape of AI, highlighting how Claude MCP and similar protocols structure and refresh the very "memory" of intelligent agents.

The journey through the complexities of schema evolution, robust error handling, stringent security, and meticulous performance tuning underscores that implementing a reliable Reload Format Layer is far from trivial. It demands careful design, diligent implementation, and an unwavering commitment to observability. Crucially, the ability to trace the operations of this layer is not a luxury but a necessity. Through structured logging, comprehensive metrics, and distributed tracing, engineers can gain the profound insights needed to debug elusive issues, optimize performance bottlenecks, and ensure the unwavering reliability and consistency of dynamic updates, especially those that sculpt the interactions of our increasingly intelligent AI systems.

As AI continues to advance, demanding ever-more sophisticated context management and dynamic adaptability, the significance of a well-engineered and thoroughly traced Reload Format Layer will only amplify. By embracing the best practices outlined in this article, developers and architects can build systems that not only respond to change gracefully but also inspire confidence in their ability to evolve intelligently and reliably.

Frequently Asked Questions (FAQs)

1. What is the "Reload Format Layer" and why is it important in modern software? The Reload Format Layer refers to the mechanism responsible for interpreting, validating, and applying dynamic updates (e.g., new configurations, data, or state changes) to a running software system without requiring a full restart. It's crucial because it enables high availability, continuous deployment, rapid iteration, and A/B testing, minimizing downtime and allowing applications to adapt quickly to changing requirements or data.

2. How does the Reload Format Layer relate to Artificial Intelligence and the Model Context Protocol (MCP)? In AI, particularly with Large Language Models (LLMs), the "context" (conversational history, system prompts, external knowledge) is dynamic and constantly updated. The Model Context Protocol (MCP) defines how this context is structured and managed. The Reload Format Layer within an MCP dictates how this evolving context data is serialized into a format consumable by the LLM (e.g., JSON message arrays) and how new information is "reloaded" into that context for each interaction. This ensures coherence, memory, and adaptability for AI agents.

3. Why is "tracing" the Reload Format Layer so critical, especially for AI systems? Tracing is vital because it provides visibility into the often-complex processes of dynamic updates. For AI systems using MCP, tracing allows developers to: debug why an LLM might be losing context or misbehaving, pinpoint performance bottlenecks in context construction or retrieval, ensure data integrity, and monitor for security issues. Without tracing, diagnosing problems in dynamic AI contexts—where subtle changes in context can lead to drastically different model outputs—becomes exceedingly difficult.

4. What are some common data formats used in the Reload Format Layer, and how do they differ? Common data formats include JSON, YAML, XML, Protocol Buffers (Protobuf), and Apache Avro. They differ in characteristics such as human readability (JSON, YAML are high; Protobuf is low), data compactness (Protobuf, Avro are high; XML is low), parsing performance (binary formats like Protobuf are fast), and schema support (Protobuf, Avro, XML have strong built-in schemas, while JSON often uses external JSON Schema). The choice depends on factors like readability needs, performance requirements, and complexity of schema evolution.

5. What are the best practices for ensuring a robust and secure Reload Format Layer? Key best practices include: implementing strong schema evolution strategies (backward and forward compatibility), maintaining version control for all format definitions, designing for comprehensive error handling and automated rollbacks, enforcing strict security measures (data sanitization, encryption, access control), optimizing for performance (efficient parsers, compression, caching), ensuring idempotency for safe retries, and setting up robust monitoring and alerting. Rigorous unit, integration, and load testing are also essential to validate the layer's reliability and resilience.
