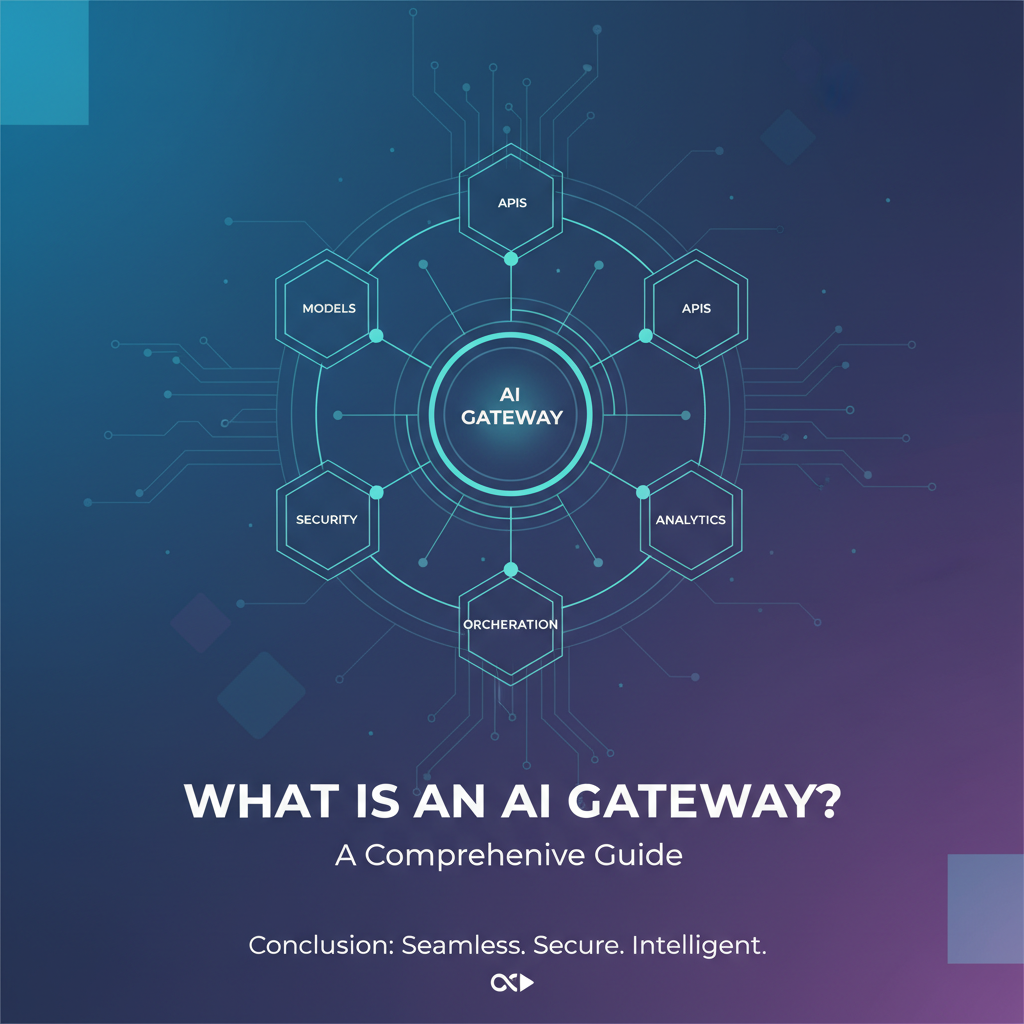

What is an AI Gateway? A Comprehensive Guide

The landscape of modern technology is being reshaped at an unprecedented pace by the advent and rapid evolution of Artificial Intelligence. From sophisticated recommendation engines that understand our preferences to intelligent chatbots that handle complex customer service queries, and from powerful predictive analytics tools to advanced creative assistants, AI has permeated nearly every facet of digital existence. At the heart of this transformative shift lies the crucial need for effective integration and management of these diverse AI capabilities. As organizations increasingly leverage a multitude of AI models, including the groundbreaking Large Language Models (LLMs), they face a burgeoning set of challenges: ensuring seamless connectivity, maintaining robust security, optimizing performance, and controlling costs across a disparate and ever-evolving ecosystem of AI services. This complex scenario has given rise to a critical piece of infrastructure, an indispensable orchestrator in the age of AI: the AI Gateway.

Traditional architectural patterns, while effective for conventional web services and microservices, often fall short when confronted with the unique demands of artificial intelligence. The nuanced requirements of AI—such as prompt versioning for LLMs, dynamic model selection, specialized data sanitization for sensitive inputs, and granular cost tracking based on token usage or computational resources—necessitate a more specialized and intelligent intermediary. An AI Gateway emerges as this sophisticated solution, extending the foundational principles of a conventional API Gateway with a deep understanding of AI-specific protocols, payloads, and operational nuances. It acts as a central control point, simplifying the consumption, governance, and scaling of AI services, thereby empowering developers and enterprises to harness the full potential of AI without being bogged down by its inherent complexities. This comprehensive guide will delve into the intricacies of what an AI Gateway truly is, explore its indispensable features, differentiate it from its traditional counterpart, unveil its profound benefits, and chart its promising future, ultimately underscoring its pivotal role in building the next generation of intelligent applications.

1. Understanding the Core Concepts: From API to AI

To truly grasp the essence and necessity of an AI Gateway, it's vital to first establish a solid understanding of its foundational predecessor: the API Gateway. The evolution from managing generic APIs to orchestrating intelligent AI services is a logical progression driven by technological advancements and the escalating demands of modern software architectures.

1.1 What is an API Gateway? The Foundation of Microservices

In the early days of web development, applications were often monolithic, meaning all functionalities were bundled into a single, tightly coupled unit. As applications grew in complexity and user bases expanded, these monoliths became difficult to develop, deploy, and scale. This challenge led to the rise of microservices architecture, where an application is broken down into a collection of small, independent services, each running in its own process and communicating with lightweight mechanisms, often HTTP APIs. While microservices offered unparalleled flexibility, scalability, and resilience, they also introduced a new layer of complexity: how do clients (web browsers, mobile apps, other services) interact with dozens or even hundreds of these small services? This is where the API Gateway stepped in, becoming an indispensable component in the modern software stack.

An API Gateway acts as a single entry point for a multitude of microservices. Instead of clients having to know the addresses and specific APIs of each individual microservice, they simply send all requests to the API Gateway. The Gateway then intelligently routes these requests to the appropriate backend service. But its role extends far beyond mere routing. It serves as a powerful abstraction layer, shielding clients from the intricate details of the microservices architecture. Crucially, an API Gateway provides a host of cross-cutting concerns that would otherwise need to be implemented within each microservice, leading to redundancy and increased development overhead. These critical functionalities include:

- Request Routing and Composition: Directing incoming requests to the correct service and often aggregating responses from multiple services before sending a unified response back to the client. This simplifies client-side logic significantly.

- Authentication and Authorization: Verifying the identity of the client and ensuring they have the necessary permissions to access specific resources or invoke certain operations. By centralizing security, it reduces the burden on individual services.

- Rate Limiting and Throttling: Protecting backend services from being overwhelmed by too many requests, helping mitigate denial-of-service attacks, and ensuring fair usage across different clients.

- Caching: Storing frequently accessed responses to reduce latency and load on backend services, improving overall application performance.

- Monitoring and Logging: Capturing detailed metrics and logs for all incoming and outgoing API calls, providing crucial insights into system health, performance, and usage patterns.

- Protocol Translation: Converting requests from one protocol to another, for example, handling REST requests and translating them to gRPC calls for backend services.

- Security Policies: Implementing various security measures such as IP whitelisting/blacklisting, WAF integration, and SSL termination.
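To make the routing and authentication duties above concrete, here is a minimal Python sketch of the request path inside a gateway. The backend handlers, the ROUTES table, and the API keys are hypothetical stand-ins. A real gateway would forward HTTP requests to separate services and consult an identity provider rather than call local functions and check a hard-coded set.

```python
from typing import Callable, Dict, Tuple


# Hypothetical backend handlers standing in for real microservices.
def users_service(path: str) -> str:
    return f"users-service handled {path}"


def orders_service(path: str) -> str:
    return f"orders-service handled {path}"


# Routing table: path prefix -> backend handler (illustrative only).
ROUTES: Dict[str, Callable[[str], str]] = {
    "/users": users_service,
    "/orders": orders_service,
}

# Illustrative API keys; a real gateway would validate against an identity provider.
VALID_KEYS = {"key-team-a", "key-team-b"}


def handle(path: str, api_key: str) -> Tuple[int, str]:
    """Authenticate once at the edge, then route by longest matching prefix."""
    if api_key not in VALID_KEYS:
        return 401, "unauthorized"
    # A prefix matches the exact path or a sub-path ("/users/42"), not "/usersx".
    matches = [p for p in ROUTES if path == p or path.startswith(p + "/")]
    if not matches:
        return 404, "no route"
    prefix = max(matches, key=len)
    return 200, ROUTES[prefix](path)
```

Because authentication runs once at the edge, the backend handlers never see unauthenticated traffic, and longest-prefix matching lets a more specific route (say, a future /users/admin) take precedence over /users.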

In essence, an API Gateway streamlines the consumption of microservices, enhances security, improves performance, and simplifies operations, making it a cornerstone for resilient and scalable distributed systems.

1.2 The Evolution to AI Gateway: Why We Need Something More

While a traditional API Gateway excels at managing the interaction with conventional REST or gRPC services, the emergence of Artificial Intelligence, particularly sophisticated machine learning models and Large Language Models (LLMs), introduced a new paradigm that stretched the capabilities of these established gateways to their limits. The fundamental nature of AI services—their diverse interfaces, specialized operational requirements, and unique consumption patterns—demanded a more intelligent and purpose-built intermediary. The limitations of a generic API Gateway when dealing with AI stemmed from several distinct characteristics of AI workloads:

- Diverse Model Interfaces and Protocols: Unlike uniform REST APIs, AI models, especially those from different providers (e.g., OpenAI, Google AI, Hugging Face, custom-trained models), often expose wildly different APIs, data formats, and authentication mechanisms. A generic API Gateway might struggle to abstract these variations effectively without extensive custom coding.

- Prompt Management and Versioning (Especially for LLMs): With LLMs, the "prompt" is paramount. It's not just a simple input; it's the core instruction, context, and often the business logic. Managing, versioning, A/B testing, and securely handling prompts—which can be lengthy and contain sensitive information—goes far beyond the scope of a standard API Gateway.

- Cost Tracking and Optimization: AI model usage, particularly for LLMs, is often billed per token, per inference, or per computational second, making traditional request-based rate limiting and cost tracking insufficient. Organizations need granular visibility into AI spending, often broken down by user, application, or specific model, to prevent budget overruns.

- Model Lifecycle Management: AI models are not static; they are continuously trained, updated, and deployed in various versions. An API Gateway doesn't natively understand how to manage model versions, route traffic to specific versions, or facilitate seamless A/B testing of different models or model configurations.

- Specialized Security Concerns: AI inputs and outputs can contain highly sensitive data (PII, confidential business information, intellectual property). Ensuring data privacy, implementing robust input/output sanitization, redacting sensitive information before it reaches the model or before it's logged, and protecting against prompt injection attacks are critical security considerations that generic API Gateways are not designed to handle out-of-the-box.

- Performance Optimization for AI: Caching AI responses can be complex due to the probabilistic nature of some models, but it is crucial for reducing latency and cost. Moreover, intelligent routing based on real-time model latency, availability, or cost is a capability specific to AI workloads.

- Observability Tailored for AI: While API Gateways provide general request/response logging, AI services require deeper insights, such as token usage, confidence scores, the sampling parameters (e.g., temperature) used for each call, and specific error codes from the AI inference engine.
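The caching difficulty mentioned above can be illustrated with a small sketch. It assumes, as a simple heuristic, that only requests sent with temperature 0 are safe to cache, and it builds the cache key from the model name, the prompt, and every generation parameter, so a change to any of them misses the cache. ResponseCache and get_or_call are hypothetical names, not part of any specific gateway product.

```python
import hashlib
import json
import time
from typing import Any, Callable, Dict, Tuple


class ResponseCache:
    """Exact-match cache for model responses (illustrative sketch).

    Assumption: only requests with temperature == 0 are cached, as a rough
    heuristic for outputs that are close to deterministic.
    """

    def __init__(self, ttl_seconds: float = 300.0) -> None:
        self.ttl = ttl_seconds
        # cache key -> (time stored, response text)
        self._store: Dict[str, Tuple[float, str]] = {}

    @staticmethod
    def key(model: str, prompt: str, params: Dict[str, Any]) -> str:
        # sort_keys makes the key independent of parameter ordering.
        raw = json.dumps({"model": model, "prompt": prompt, "params": params}, sort_keys=True)
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def get_or_call(
        self,
        model: str,
        prompt: str,
        params: Dict[str, Any],
        call_model: Callable[[], str],
    ) -> Tuple[str, bool]:
        """Return (response, served_from_cache)."""
        # Treat a missing temperature as non-zero (assumed provider default).
        if params.get("temperature", 1.0) != 0:
            return call_model(), False
        k = self.key(model, prompt, params)
        now = time.monotonic()
        hit = self._store.get(k)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1], True
        response = call_model()
        self._store[k] = (now, response)
        return response, False
```

Exact-match keys are the safest starting point; serving "sufficiently similar" prompts from cache requires semantic matching (for example, embedding similarity), which trades some accuracy for a higher hit rate.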

These challenges underscored the need for an architectural component that not only performs the traditional duties of an API Gateway but also possesses an inherent understanding and specialized capabilities tailored for the unique characteristics of AI services. This need culminated in the conceptualization and development of the AI Gateway.

1.3 Defining an AI Gateway

An AI Gateway, at its core, is a specialized type of API Gateway designed specifically to manage, secure, and optimize the consumption and deployment of Artificial Intelligence models and services. It acts as an intelligent intermediary between client applications and various AI backend services, providing a unified and consistent interface for interacting with a diverse range of AI models—be they machine learning models, deep learning models, or, most notably, Large Language Models (LLMs).

An AI Gateway extends the fundamental functionalities of a traditional API Gateway by incorporating AI-specific features and considerations. While it still handles crucial aspects like routing, authentication, rate limiting, and monitoring, it does so with an intelligence layer that understands the nuances of AI workloads. Key differentiators include:

- Model Abstraction and Unification: It abstracts away the complexities of disparate AI model APIs from different providers (e.g., OpenAI, Google AI, custom models), presenting a single, standardized API endpoint to developers. This means a developer can interact with a generic /predict or /generate endpoint, and the AI Gateway handles the translation to the specific requirements of the underlying AI service. This significantly reduces integration effort and shields applications from changes in individual AI models or providers.

- Intelligent Routing and Orchestration: Beyond simple path-based routing, an AI Gateway can dynamically route requests based on a multitude of AI-specific factors such as model cost, latency, current load, provider availability, specific model versions, or even the nature of the prompt itself. This enables sophisticated strategies like failover to a different model if one is unavailable, or cost-aware routing to the cheapest available model that meets performance criteria.

- AI-Specific Security and Data Governance: It implements advanced security measures tailored for AI, including PII (Personally Identifiable Information) redaction, input/output sanitization, prevention of prompt injection attacks (for LLMs), and robust access control policies for sensitive AI models and data.

- Prompt Engineering and Management: For LLMs, the AI Gateway can store, version, and manage prompts, allowing for dynamic injection of context, A/B testing of different prompts, and encapsulating complex prompt logic into simpler API calls.

- Granular Cost Tracking and Optimization: It provides detailed insights into AI consumption costs, often broken down by tokens, inference units, or specific model usage. This allows organizations to monitor budgets, allocate costs accurately, and implement policies to optimize spending.

- Enhanced Observability for AI Workloads: It captures and exposes AI-specific metrics and logs, such as token counts, inference times, model versions used, and confidence scores, providing deeper operational visibility into the performance and behavior of AI systems.

In essence, an AI Gateway acts as the central nervous system for an organization's AI initiatives, simplifying development, bolstering security, optimizing performance, and providing granular control over the entire AI lifecycle. It is not merely an API proxy; it is an intelligent, AI-aware traffic controller and policy enforcer for the modern AI-driven enterprise.

2. The Rise of LLM Gateways: Specializing for Conversational AI

Among the most revolutionary developments in Artificial Intelligence, Large Language Models (LLMs) stand out as game-changers. Their immense potential, however, comes with a unique set of challenges that further underscore the specialized role of an AI Gateway, often leading to the specific term LLM Gateway.

2.1 What are Large Language Models (LLMs)? A Brief Context

Large Language Models (LLMs) are a class of deep learning models, typically based on the transformer architecture, that are trained on vast amounts of text data. This extensive training enables them to understand, generate, and process human language with remarkable fluency and coherence. LLMs can perform a wide array of natural language processing (NLP) tasks, including:

- Text Generation: Creating articles, stories, code, and even poetry.

- Translation: Converting text from one language to another.

- Summarization: Condensing long documents into shorter, coherent summaries.

- Question Answering: Providing direct answers to complex queries based on given context.

- Sentiment Analysis: Determining the emotional tone of a piece of text.

- Chatbots and Conversational AI: Engaging in human-like dialogue, understanding context, and maintaining conversation flow.

Models like OpenAI's GPT series, Google's Bard/Gemini, Anthropic's Claude, and open-source alternatives like Llama have showcased unprecedented capabilities, sparking a global wave of innovation. They represent a significant leap forward because they are "general-purpose" rather than task-specific, meaning a single LLM can handle many different tasks simply by changing the input "prompt."

2.2 Specific Challenges of LLMs for Integration

While incredibly powerful, integrating LLMs into applications at scale presents several unique and demanding challenges that often go beyond the capabilities of a standard AI Gateway, highlighting the need for specialized LLM Gateway functionalities:

- Prompt Engineering Complexities: The performance and output quality of an LLM are heavily dependent on the "prompt"—the input text, instructions, examples, and context provided to the model. Crafting effective prompts (prompt engineering) is an art and a science. Managing, versioning, A/B testing, and securely storing these critical prompts across different applications and teams become a significant overhead. Small changes in a prompt can drastically alter the output, necessitating meticulous control.

- Model Variability and Proliferation: The LLM landscape is fragmented and rapidly evolving. There are numerous commercial providers (OpenAI, Google, Anthropic, Cohere) each offering multiple models (GPT-3.5, GPT-4, Claude 2, Gemini Pro, etc.), alongside a growing ecosystem of open-source models (Llama, Falcon, Mistral) that can be self-hosted. Each model has its own API, data format, authentication scheme, rate limits, and subtle behavioral quirks. Integrating directly with each one is a maintenance nightmare, and migrating from one to another is costly and time-consuming.

- Cost Management and Optimization per Token/Call: LLM usage is typically billed based on the number of "tokens" processed (input + output). This granular billing model makes traditional request-based cost tracking inadequate. Organizations need to understand token consumption per user, per application, per prompt, and per model to manage budgets effectively, especially as costs can accumulate rapidly with extensive usage. Routing to the cheapest model that meets performance criteria becomes a critical cost-saving strategy.

- Latency and Throughput Issues: While LLMs are powerful, inference can be computationally intensive, leading to varying latencies. Managing throughput across different models and providers, ensuring acceptable response times for end-users, and implementing strategies like caching for common queries are essential for a smooth user experience.

- Data Privacy and Security for Conversational Data: LLM inputs often contain sensitive user queries, PII, proprietary business data, or intellectual property. Sending this data to third-party LLM providers raises significant privacy and compliance concerns. An LLM Gateway must be capable of robust data redaction, anonymization, and ensuring that sensitive data does not persist in logs or training data of third-party providers without explicit consent. Prompt injection attacks, where malicious users try to manipulate the LLM's behavior through prompts, also represent a significant security vulnerability.

- Observability and Debugging: Debugging LLM outputs can be challenging. Understanding why a model generated a particular response, tracking token usage for specific interactions, and monitoring model performance (e.g., hallucination rates, accuracy) requires specialized logging and analytics that go beyond standard API monitoring.

- Retry Mechanisms and Fallbacks: Given the distributed nature of LLM services and potential for transient errors or rate limit excursions from providers, intelligent retry mechanisms and fallback strategies (e.g., trying a different model or provider if one fails) are crucial for maintaining application reliability.

These highly specialized challenges demand an intermediary that is not only "AI-aware" but specifically "LLM-aware," capable of understanding prompts, tokens, and the unique operational dynamics of large language models. This is precisely the gap an LLM Gateway fills.
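The per-token billing model described above can be made concrete with some arithmetic. The sketch below uses made-up prices for two hypothetical models (small-model and large-model); real prices differ by provider and change over time. It computes the cost of each call from its input and output token counts and attributes the spend to a user.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Price:
    # Hypothetical prices in USD per 1,000 tokens; real prices vary by provider.
    input_per_1k: float
    output_per_1k: float


PRICES: Dict[str, Price] = {
    "small-model": Price(input_per_1k=0.0005, output_per_1k=0.0015),
    "large-model": Price(input_per_1k=0.01, output_per_1k=0.03),
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Input and output tokens are usually priced separately."""
    p = PRICES[model]
    return input_tokens / 1000 * p.input_per_1k + output_tokens / 1000 * p.output_per_1k


class CostLedger:
    """Accumulates spend per (user, model) so costs can be attributed."""

    def __init__(self) -> None:
        self.spend: Dict[Tuple[str, str], float] = defaultdict(float)

    def record(self, user: str, model: str, input_tokens: int, output_tokens: int) -> float:
        cost = call_cost(model, input_tokens, output_tokens)
        self.spend[(user, model)] += cost
        return cost

    def total_for(self, user: str) -> float:
        return sum(v for (u, _), v in self.spend.items() if u == user)
```

With these illustrative prices, a call with 1,200 input tokens and 300 output tokens costs 1.2 × 0.01 + 0.3 × 0.03 = $0.021 on large-model but only $0.00105 on small-model, a 20x difference that explains why routing simple requests to cheaper models matters.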

2.3 How an LLM Gateway Addresses These Challenges

An LLM Gateway is a highly specialized form of an AI Gateway, fine-tuned to tackle the specific complexities introduced by Large Language Models. It provides a robust and intelligent layer between client applications and various LLM providers, abstracting away much of the underlying complexity and offering a suite of functionalities critical for successful LLM integration at scale.

Here's how an LLM Gateway addresses the challenges outlined above:

- Prompt Management and Versioning: The LLM Gateway becomes the single source of truth for prompts. It allows developers to define, store, version, and manage prompts centrally. This means different applications can reference the same prompt by an ID, ensuring consistency. It facilitates A/B testing of different prompt variations to optimize model performance, and enables quick rollbacks if a new prompt version causes issues. This centralized control prevents prompt sprawl and improves maintainability.

- Unified API for Multiple LLMs: Perhaps one of its most significant contributions is providing a single, standardized API interface for invoking a diverse array of LLMs. Regardless of whether an application needs to interact with OpenAI's GPT-4, Google's Gemini, or a self-hosted Llama model, the client always makes the same standardized request to the LLM Gateway. The Gateway then translates this request into the specific format required by the chosen backend LLM, handles unique authentication methods, and normalizes the response. This dramatically simplifies integration efforts, makes swapping LLM providers far less disruptive, and insulates applications from breaking changes in underlying model APIs. One open-source example of such a solution is APIPark, which offers the capability to integrate a variety of AI models with a unified management system for authentication and cost tracking, standardizing the request data format across all AI models.

- Cost Optimization and Tracking: An LLM Gateway provides granular visibility into token usage and associated costs. It can track token consumption per user, per application, per prompt, or per model call. This enables organizations to set budgets, implement usage quotas, and accurately attribute costs. Furthermore, it can employ intelligent routing strategies to direct requests to the most cost-effective LLM provider or model version that still meets performance and quality requirements, potentially saving significant operational expenses.

- Caching for Repetitive Prompts: For common or identical prompts that are likely to yield the same or very similar responses, an LLM Gateway can cache the model's output. When a subsequent request for the same prompt arrives, the Gateway can serve the cached response instantly, drastically reducing latency, offloading the burden from the LLM service, and, crucially, saving inference costs. This is particularly beneficial for frequently asked questions or boilerplate text generation.

- Security and Data Redaction: Addressing the paramount concerns of data privacy and security, an LLM Gateway can automatically scan incoming prompts and outgoing responses for Personally Identifiable Information (PII) or other sensitive data. It can then redact, anonymize, or mask this information before it is sent to the LLM or stored in logs. This helps organizations comply with regulations like GDPR or HIPAA. Additionally, it provides a crucial layer of defense against prompt injection attacks by implementing sanitization and validation rules on user inputs.

- Observability Tailored for LLMs: Beyond basic API logs, an LLM Gateway captures rich, AI-specific telemetry. This includes metrics like input and output token counts, inference duration, model temperature, specific model versions used, and even confidence scores or hallucination metrics if available from the model. This detailed logging and monitoring capability is invaluable for debugging, performance analysis, cost auditing, and understanding the real-world behavior of LLMs. APIPark, for instance, provides comprehensive logging capabilities, recording every detail of each API call, enabling businesses to quickly trace and troubleshoot issues. It also offers powerful data analysis to display long-term trends and performance changes, aiding in preventive maintenance.

- Dynamic Routing and Failover: An LLM Gateway can intelligently route requests based on real-time conditions. This might involve directing traffic to the lowest-latency model, the most cost-effective provider, or a specific model version for A/B testing. In cases of provider outages or rate limit errors, the Gateway can automatically fail over to an alternative LLM or provider, ensuring high availability and resilience for applications relying on LLMs.

- Prompt Encapsulation into REST API: One particularly powerful feature is the ability to combine an LLM with a predefined prompt and encapsulate this combination as a new, higher-level REST API. For example, a complex "sentiment analysis" prompt can be turned into a simple /sentiment endpoint, or a "summarization" prompt into a /summarize endpoint. This simplifies development for consumer applications and allows business logic embedded in prompts to be managed and exposed cleanly. This is a key capability offered by APIPark, allowing users to quickly combine AI models with custom prompts to create new, reusable APIs.
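Prompt versioning, rollback, and encapsulation, as described in the list above, can be sketched together in a few lines. PromptRegistry and encapsulate are hypothetical names used only for illustration, and call_llm stands in for whatever model invocation the gateway performs. In a real gateway the returned function would sit behind an HTTP route such as /sentiment.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class PromptRegistry:
    """Central store of versioned prompt templates (hypothetical design)."""

    _versions: Dict[str, List[str]] = field(default_factory=dict)
    _active: Dict[str, int] = field(default_factory=dict)

    def publish(self, prompt_id: str, template: str) -> int:
        versions = self._versions.setdefault(prompt_id, [])
        versions.append(template)
        self._active[prompt_id] = len(versions)  # new versions go live immediately
        return len(versions)

    def rollback(self, prompt_id: str, version: int) -> None:
        if not 1 <= version <= len(self._versions[prompt_id]):
            raise ValueError(f"unknown version {version} for {prompt_id}")
        self._active[prompt_id] = version

    def render(self, prompt_id: str, **values: str) -> str:
        template = self._versions[prompt_id][self._active[prompt_id] - 1]
        return template.format(**values)


def encapsulate(
    registry: PromptRegistry, prompt_id: str, call_llm: Callable[[str], str]
) -> Callable[[str], str]:
    """Bind a stored prompt to a model call, yielding a single-purpose endpoint."""

    def endpoint(text: str) -> str:
        # The active prompt version is looked up on every call, so a rollback
        # takes effect without redeploying the consuming application.
        return call_llm(registry.render(prompt_id, text=text))

    return endpoint
```

Clients of the resulting endpoint only send the text to analyze; which prompt wording, and which version of it, is used stays a gateway-side decision.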

By centralizing these critical functionalities, an LLM Gateway empowers organizations to leverage the transformative power of Large Language Models effectively, securely, and economically, turning what could be a chaotic integration landscape into a streamlined and robust operational environment.

3. Key Features and Capabilities of an AI Gateway

An AI Gateway is a sophisticated piece of infrastructure, distinguished by a rich set of features that go far beyond basic API management. These capabilities are specifically designed to address the unique demands of AI workloads, providing comprehensive control, optimization, and security across the entire AI service lifecycle.

3.1 Unified API Endpoint & Model Abstraction

One of the most foundational and transformative features of an AI Gateway is its ability to provide a unified API endpoint that abstracts away the complexities and diversities of underlying AI models. In the evolving landscape of AI, developers often face a bewildering array of models from various providers—each with its own proprietary API, authentication scheme, request/response formats, and sometimes even unique data types. For instance, invoking a sentiment analysis model from Vendor A might require a JSON payload structured one way, while a similar model from Vendor B demands a completely different format and authentication token. Integrating directly with each of these distinct interfaces rapidly becomes a maintenance nightmare, especially when an application needs to switch models or providers.

An AI Gateway solves this by acting as a universal translator. It presents a single, standardized API interface to client applications. When a client sends a request (e.g., to /ai/sentiment or /ai/generate), the AI Gateway intelligently intercepts it. It then applies the necessary transformations: it handles the specific authentication required by the chosen backend AI model, translates the standardized request payload into the model's native format, invokes the model, and finally, normalizes the model's potentially idiosyncratic response back into a consistent format before returning it to the client. This abstraction layer is invaluable. It drastically reduces development time by eliminating the need for clients to write model-specific integration code. Moreover, it gives organizations the flexibility to swap out underlying AI models or providers (for example, switching from GPT-3.5 to GPT-4, or even to a different vendor's LLM) without changes to the client application's codebase, provided the standardized interface covers the features the client relies on. This reduces vendor lock-in and allows for agile experimentation with new AI technologies.
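The translate-invoke-normalize cycle above can be sketched with one adapter pair per provider. Both vendor payload shapes below are hypothetical and only loosely inspired by common chat-style and prompt-style APIs; they are not the actual formats of any named provider. The send argument stands in for the HTTP call the gateway would make.

```python
from typing import Any, Callable, Dict, Tuple

Payload = Dict[str, Any]

# Standardized request the gateway exposes to clients (hypothetical schema):
# {"model": str, "input": str, "max_tokens": int}


def to_vendor_a(req: Payload) -> Payload:
    # Hypothetical Vendor A expects chat-style messages.
    return {
        "model": req["model"],
        "messages": [{"role": "user", "content": req["input"]}],
        "max_tokens": req["max_tokens"],
    }


def from_vendor_a(resp: Payload) -> Payload:
    return {"text": resp["choices"][0]["message"]["content"]}


def to_vendor_b(req: Payload) -> Payload:
    # Hypothetical Vendor B expects a bare prompt and differently named limits.
    return {"prompt": req["input"], "params": {"maxOutputTokens": req["max_tokens"]}}


def from_vendor_b(resp: Payload) -> Payload:
    return {"text": resp["output"]["text"]}


# provider name -> (request translator, response normalizer)
ADAPTERS: Dict[str, Tuple[Callable[[Payload], Payload], Callable[[Payload], Payload]]] = {
    "vendor-a": (to_vendor_a, from_vendor_a),
    "vendor-b": (to_vendor_b, from_vendor_b),
}


def invoke(provider: str, req: Payload, send: Callable[[str, Payload], Payload]) -> Payload:
    """Translate a standardized request, call the provider, normalize the reply."""
    to_native, from_native = ADAPTERS[provider]
    native_response = send(provider, to_native(req))
    return from_native(native_response)
```

Adding a new provider means registering one more adapter pair; client code that builds the standardized request and reads the "text" field does not change.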

3.2 Authentication, Authorization & Security

Security is paramount when dealing with AI services, especially as they often process sensitive user data or proprietary business information. An AI Gateway acts as the first line of defense, centralizing and enforcing robust security policies that would be cumbersome or impossible to implement across individual AI models.

- Centralized Authentication: It provides a single point for authenticating client applications and users accessing AI services. This can integrate with existing identity providers and standard mechanisms (e.g., OAuth 2.0, JWT, API keys, SAML), ensuring that only legitimate clients can invoke AI models. This avoids scattering authentication logic across multiple microservices or direct integrations with AI providers.

- Granular Authorization: Beyond mere authentication, an AI Gateway enables fine-grained authorization policies. Organizations can define who can access which AI model, under what conditions, and with what level of permissions. For example, specific teams might only be allowed to use certain models or have higher rate limits. Platforms such as APIPark support this with independent API and access permissions for each tenant, so that different departments or teams have access tailored to their requirements, and also offer subscription approval features to prevent unauthorized API calls.

- Data Encryption: It ensures that data transmitted between the client, the AI Gateway, and the backend AI model is encrypted both in transit (e.g., via TLS/SSL) and often at rest (e.g., in caches or logs), protecting against eavesdropping and data breaches.

- Input/Output Sanitization and Validation: Crucially for AI, the Gateway can sanitize and validate incoming requests to prevent malicious inputs (e.g., prompt injection attacks for LLMs) and ensure that inputs conform to expected formats, preventing errors or unintended model behavior. Similarly, it can filter or transform model outputs to remove potentially harmful or undesirable content before it reaches the end-user.

- PII Redaction and Anonymization: For models processing sensitive personal data (PII), the AI Gateway can implement automated redaction or anonymization rules. This means sensitive identifiers (names, addresses, credit card numbers) are automatically removed or masked from the input before being sent to the AI model, and also from the model's response or from logs, significantly enhancing data privacy and supporting compliance with regulations like GDPR, CCPA, or HIPAA. Applied consistently, this greatly reduces the risk that sensitive information is exposed to third-party AI services or stored unnecessarily.

- Threat Protection: Integration with Web Application Firewalls (WAFs) and other threat detection systems helps protect against common web vulnerabilities and AI-specific attacks.
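A minimal sketch of the PII redaction step from the list above, assuming a purely regex-based approach. The patterns are illustrative and catch only structured identifiers (email addresses, US-style Social Security numbers, 13 to 16 digit card numbers). Free-form identifiers such as names and street addresses generally need ML-based entity recognition, which this sketch does not attempt.

```python
import re
from typing import Dict, List, Pattern, Tuple

# Illustrative patterns only, applied in order; production systems typically
# combine rules like these with ML-based entity recognition.
PII_PATTERNS: List[Tuple[str, Pattern[str]]] = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    # 13 to 16 digits, optionally separated by single spaces or dashes.
    ("CARD", re.compile(r"\b(?:\d[ -]?){12,15}\d\b")),
]


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count what was removed."""
    counts: Dict[str, int] = {}
    for label, pattern in PII_PATTERNS:
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts
```

The gateway would run this on the prompt before forwarding it and again on the response before logging; the returned counts are useful as audit metadata without storing the sensitive values themselves.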

By centralizing these robust security measures, an AI Gateway establishes a secure perimeter around an organization's AI assets, mitigating risks and ensuring compliance.

3.3 Rate Limiting, Throttling & Quota Management

Controlling the flow of requests is critical for the stability, fairness, and cost-effectiveness of AI services. AI models, especially those hosted by third-party providers, often have strict rate limits, and exceeding these can lead to errors, account suspensions, or unexpected costs. An AI Gateway provides sophisticated mechanisms to manage request traffic:

- Rate Limiting: This feature restricts the number of requests a client can make within a specific time window (e.g., 100 requests per minute). This prevents individual clients from monopolizing resources or accidentally/maliciously overloading backend AI services.

- Throttling: Beyond hard limits, throttling can temporarily reduce the processing rate for a client if the system is under stress, allowing it to recover gracefully. This is essential for maintaining service availability under peak loads.

- Quota Management: This allows administrators to allocate specific amounts of AI resource usage (e.g., a certain number of API calls, a maximum number of tokens for LLMs, or a budget cap) to different users, teams, or applications over a longer period (e.g., per day or per month). This is invaluable for managing costs, preventing budget overruns, and ensuring fair resource distribution within an organization. For example, the R&D team might have a higher token quota than the internal tools team. The AI Gateway can enforce these quotas, blocking requests once a limit is reached or sending alerts to administrators.
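Rate limiting and quotas, as described above, can be sketched with two small classes. The rate limiter uses the token-bucket algorithm, one common choice that the text does not prescribe; note that its "tokens" are request permits, not LLM tokens, so the code calls them permits. The quota class enforces a hypothetical per-team LLM token budget. The clock parameter exists so the limiter can be tested deterministically.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Token-bucket limiter: refills `rate` permits per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.permits = capacity
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.permits = min(self.capacity, self.permits + (now - self.last) * self.rate)
        self.last = now
        if self.permits >= 1:
            self.permits -= 1
            return True
        return False  # a gateway would typically answer HTTP 429 here


class TokenQuota:
    """Per-team LLM token budget for a billing period (hypothetical policy)."""

    def __init__(self, limits: Dict[str, int]):
        self.limits = limits
        self.used: Dict[str, int] = {team: 0 for team in limits}

    def try_consume(self, team: str, tokens: int) -> bool:
        if self.used[team] + tokens > self.limits[team]:
            return False  # block the request; an administrator could also be alerted
        self.used[team] += tokens
        return True
```

The two controls work at different time scales: the bucket smooths bursts over seconds, while the quota caps total consumption over a day or month.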

These controls are indispensable for managing the operational costs and ensuring the reliability of AI services, particularly with variable usage patterns.

3.4 Routing, Load Balancing & Failover

Intelligent traffic management is a cornerstone of an AI Gateway, crucial for performance, cost optimization, and resilience.

- Dynamic Routing: The Gateway can direct incoming requests to the most appropriate backend AI model or provider based on a sophisticated set of criteria. This might include:

  - Cost-aware Routing: Directing requests to the cheapest available model that still meets performance requirements. For example, using a less expensive LLM for simple queries and a more powerful, costly one for complex tasks.

  - Latency-aware Routing: Sending requests to the model that currently offers the lowest latency, improving user experience.

  - Load-aware Routing: Steering traffic away from heavily loaded instances or providers toward those with spare capacity, so that no single endpoint becomes a bottleneck.

  - Feature-based Routing: Routing requests based on the capability they require. For example, image-generation requests go to an image model and text-generation requests go to an LLM.

  - Geographic Routing: Directing requests to models hosted in data centers geographically closer to the user to reduce network latency.

- Load Balancing: When multiple instances of the same AI model or similar models from different providers are available, the Gateway can distribute incoming requests across them to ensure optimal resource utilization and prevent overload on any single instance. This improves overall throughput and response times.

- Failover Mechanisms: A critical feature for ensuring high availability. If a primary AI model or provider becomes unresponsive, experiences an outage, or exceeds its rate limits, the AI Gateway can automatically detect the failure and reroute subsequent requests to a healthy alternative model or provider. This seamless failover prevents service interruptions for client applications, providing a robust and resilient AI infrastructure.

This intelligent routing capability allows organizations to build highly adaptable and resilient AI systems that can dynamically adjust to changing market conditions, model availability, and cost structures.
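Cost-aware routing with failover can be illustrated as follows. This sketch makes two assumptions. First, each backend has a known price and a coarse capability "tier", both hypothetical fields. Second, the backend call raises an exception on timeouts, 5xx responses, or upstream rate limiting. The router picks the cheapest healthy model that meets the requested tier and falls back to the next candidate in cost order. The health checks that would later restore a failed endpoint are omitted.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelEndpoint:
    name: str
    cost_per_1k_tokens: float  # hypothetical price in USD
    tier: int                  # hypothetical capability level; higher = more capable
    healthy: bool = True


class ProviderError(Exception):
    """Raised by a backend call on timeout, 5xx, or upstream rate limiting."""


def route_with_failover(
    endpoints: list[ModelEndpoint],
    min_tier: int,
    call: Callable[[ModelEndpoint], str],
) -> tuple[str, str]:
    """Return (model name, response) from the cheapest healthy model meeting min_tier."""
    candidates = sorted(
        (e for e in endpoints if e.healthy and e.tier >= min_tier),
        key=lambda e: e.cost_per_1k_tokens,
    )
    errors: list[str] = []
    for endpoint in candidates:
        try:
            return endpoint.name, call(endpoint)
        except ProviderError as exc:
            # Take the endpoint out of rotation; a separate health check
            # (not shown) would mark it healthy again once it recovers.
            endpoint.healthy = False
            errors.append(f"{endpoint.name}: {exc}")
    raise RuntimeError("no healthy model available: " + "; ".join(errors))


if __name__ == "__main__":
    fleet = [
        ModelEndpoint("small-model", 0.5, tier=1),
        ModelEndpoint("mid-model", 2.0, tier=2),
        ModelEndpoint("large-model", 10.0, tier=3),
    ]

    def fake_call(endpoint: ModelEndpoint) -> str:
        if endpoint.name == "mid-model":
            raise ProviderError("HTTP 503")  # simulate a provider outage
        return f"answer from {endpoint.name}"

    print(route_with_failover(fleet, min_tier=2, call=fake_call))
    # → ('large-model', 'answer from large-model')
    print(route_with_failover(fleet, min_tier=1, call=fake_call))
    # → ('small-model', 'answer from small-model')
```

Sorting purely by price is the simplest policy. A latency- or load-aware variant would replace the sort key with, for example, a moving average of observed latency or the number of in-flight requests per endpoint.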

3.5 Caching & Response Optimization

Optimizing the performance and cost of AI services often involves smart caching strategies, which an AI Gateway can implement with AI-specific intelligence.

- AI-Specific Caching: For AI models, especially those with deterministic or quasi-deterministic outputs (e.g., a specific prompt to an LLM might consistently generate similar responses, or a sentiment analysis model will likely give the same sentiment for the same input), caching can significantly reduce latency and operational costs. When a request comes in, the Gateway first checks its cache. If an identical or sufficiently similar request has been made recently and its response cached, the Gateway can serve the cached response immediately without invoking the backend AI model.

- Cache Invalidation Strategies: Implementing effective cache invalidation is crucial to ensure freshness of data. This could be time-based (e.g., expire after 5 minutes), event-driven (e.g., invalidate cache when a model version is updated), or based on specific request parameters.

- Response Compression: The Gateway can compress AI responses before sending them back to the client, reducing network bandwidth usage and improving perceived performance for clients with slower connections.

- Partial Response Caching: For long-running AI tasks or streaming responses, the Gateway might cache intermediate results or stream segments to improve responsiveness.

By intelligently caching AI responses, organizations can drastically reduce the number of calls to expensive AI models, lower operational costs, and provide a faster, more responsive user experience.
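An exact-match response cache with time-based expiry is the simplest form of the AI-specific caching described above. The sketch below assumes a response can be reused only when the model, version, parameters, and whitespace-normalized prompt are all identical. Because the model version is part of the cache key, a version update automatically stops old entries from being served. Matching "sufficiently similar" requests would require an embedding-based semantic cache, which is not shown. Caching is also usually limited to deterministic settings such as temperature 0.

```python
from __future__ import annotations

import hashlib
import json
import time
from typing import Callable, Optional


class ResponseCache:
    """Exact-match AI response cache with TTL expiry, keyed by model version."""

    def __init__(self, ttl_s: float = 300.0):
        self.ttl_s = ttl_s
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def make_key(model: str, version: str, prompt: str, params: dict) -> str:
        # Collapse runs of whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.split())
        payload = json.dumps(
            {"model": model, "version": version, "prompt": normalized, "params": params},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(
        self,
        model: str,
        version: str,
        prompt: str,
        params: dict,
        call: Callable[[str], str],
        now: Optional[float] = None,
    ) -> tuple[str, bool]:
        """Return (response, cache_hit); invoke the backend only on a miss or expiry."""
        now = time.monotonic() if now is None else now
        key = self.make_key(model, version, prompt, params)
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl_s:
            return entry[1], True
        response = call(prompt)
        self._store[key] = (now, response)
        return response, False


if __name__ == "__main__":
    backend_calls = []

    def sentiment_backend(prompt: str) -> str:
        backend_calls.append(prompt)
        return "positive"  # stand-in for a real model call

    cache = ResponseCache(ttl_s=300)
    params = {"temperature": 0}
    print(cache.get_or_call("sentiment", "v1", "I love it", params, sentiment_backend, now=0))
    # → ('positive', False)
    print(cache.get_or_call("sentiment", "v1", "I  love   it", params, sentiment_backend, now=10))
    # → ('positive', True)
    print(cache.get_or_call("sentiment", "v2", "I love it", params, sentiment_backend, now=20))
    # → ('positive', False)   new model version, so a new entry
    print(len(backend_calls))  # → 2
```

Expired entries are overwritten on the next miss. A production cache would also bound its size, for example with LRU eviction, and would usually live in a shared store so that all gateway instances benefit from it.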

3.6 Observability, Monitoring & Analytics

Understanding the health, performance, and usage patterns of AI services is vital for debugging, optimization, and strategic decision-making. An AI Gateway provides observability tailored specifically to AI workloads.

- Detailed API Call Logging: The Gateway captures comprehensive logs for every single API call made to an AI service. This includes not just standard HTTP request/response data but also AI-specific details such as the input prompt, the full model response, the model version used, input and output token counts (for LLMs), inference duration, and any associated error codes from the AI model itself. This granular logging is indispensable for troubleshooting issues, understanding model behavior, and auditing compliance. ApiPark, for example, is noted for its comprehensive logging capabilities, recording every detail of each API call, which allows businesses to quickly trace and troubleshoot issues in API calls, ensuring system stability and data security.

- Performance Metrics: It collects and exposes key performance indicators (KPIs) such as average latency, success rates, error rates, throughput (requests per second), and resource utilization (e.g., CPU/memory if self-hosting models). These metrics provide real-time insights into the operational health of the AI services.

- Cost Tracking Analytics: Beyond raw usage, the Gateway can aggregate and visualize cost data, breaking it down by model, user, application, or time period. This provides financial transparency and empowers organizations to identify cost drivers and optimize spending.

- Model-Specific Metrics: For LLMs, it can track metrics like hallucination rates (if detectable), sentiment distribution in responses, and specific model failure modes, offering deeper insights into the quality and reliability of AI outputs.

- Alerting and Notifications: Administrators can set up custom alerts based on predefined thresholds for any of the monitored metrics (e.g., alert if latency exceeds X milliseconds, or if error rate surpasses Y percent, or if token usage approaches budget limits). These proactive notifications enable rapid response to potential issues.

- Powerful Data Analysis: Leveraging the rich historical call data, the AI Gateway can perform sophisticated data analysis. This allows businesses to display long-term trends, identify patterns in usage or errors, predict potential issues before they occur, and gain strategic insights into how AI is being consumed and performing across the organization. ApiPark provides powerful data analysis features that analyze historical call data to display long-term trends and performance changes, helping businesses with preventive maintenance before issues occur.

This robust suite of observability features transforms opaque AI interactions into transparent, actionable insights, enabling proactive management and continuous improvement of AI-powered applications.
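The sketch below makes the metrics and alerting concrete. It summarizes a batch of per-call log records and checks the result against alert thresholds. The record fields mirror the AI-specific details listed above (latency, success, token counts). The field names, the nearest-rank percentile method, and the threshold values are assumptions chosen for illustration.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class CallRecord:
    """One logged AI call (hypothetical schema)."""
    model: str
    latency_ms: float
    ok: bool
    input_tokens: int
    output_tokens: int


def summarize(records: list[CallRecord]) -> dict:
    """Aggregate KPIs over a non-empty batch of call records."""
    n = len(records)
    latencies = sorted(r.latency_ms for r in records)
    p95_index = max(0, math.ceil(0.95 * n) - 1)  # nearest-rank 95th percentile
    return {
        "requests": n,
        "error_rate": sum(1 for r in records if not r.ok) / n,
        "avg_latency_ms": sum(latencies) / n,
        "p95_latency_ms": latencies[p95_index],
        "total_tokens": sum(r.input_tokens + r.output_tokens for r in records),
    }


def check_alerts(summary: dict, max_p95_ms: float, max_error_rate: float) -> list[str]:
    """Return human-readable alerts for any threshold the summary exceeds."""
    alerts = []
    if summary["p95_latency_ms"] > max_p95_ms:
        alerts.append(f"p95 latency {summary['p95_latency_ms']:.0f} ms > {max_p95_ms:.0f} ms")
    if summary["error_rate"] > max_error_rate:
        alerts.append(f"error rate {summary['error_rate']:.1%} > {max_error_rate:.1%}")
    return alerts


if __name__ == "__main__":
    batch = [
        CallRecord("llm-a", 120, True, 200, 150),
        CallRecord("llm-a", 180, True, 220, 90),
        CallRecord("llm-a", 300, False, 210, 0),
        CallRecord("llm-a", 1400, True, 500, 700),
    ]
    stats = summarize(batch)
    print(stats["error_rate"], stats["p95_latency_ms"])  # → 0.25 1400
    for alert in check_alerts(stats, max_p95_ms=1000, max_error_rate=0.05):
        print("ALERT:", alert)
```

In practice the same aggregation would be run per model, per team, or per time bucket by grouping records before calling summarize.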

3.7 Prompt Engineering & Management

For Large Language Models (LLMs), the "prompt" is the primary interface for instructing the model and shaping its output. Effective prompt engineering is critical for getting high-quality, relevant, and consistent responses. An AI Gateway dedicated to LLMs elevates prompt management to a strategic capability.

- Centralized Prompt Store: It provides a central repository for storing, organizing, and categorizing prompts. Instead of hardcoding prompts within applications, developers can reference them by ID or name through the Gateway. This ensures consistency and makes prompt updates trivial across all consuming applications.

- Prompt Versioning: Just like code, prompts evolve. The Gateway allows for version control of prompts, enabling organizations to track changes, revert to previous versions if a new one performs poorly, and maintain an audit trail of prompt modifications. This is crucial for debugging and ensuring reproducibility.

- Dynamic Variable Injection and Templating: Prompts often require dynamic elements (e.g., user input, specific context variables). The Gateway can act as a templating engine, allowing developers to define placeholders within prompts that are populated with data from the incoming API request before the prompt is sent to the LLM.

- Prompt A/B Testing: To optimize LLM performance and output quality, different prompt variations need to be tested. The Gateway can intelligently route a percentage of traffic to one prompt version (A) and another percentage to a different version (B), collecting metrics on their respective performance and allowing data-driven decisions on which prompt performs best.

- Prompt Encapsulation into REST API: A particularly powerful feature is the ability to combine a specific LLM with a pre-configured prompt (and potentially other model parameters) and expose this combination as a new, higher-level, specialized REST API. For example, a complex prompt designed for "executive summary generation" can be encapsulated into a simple /summarize-executive API endpoint. This simplifies integration for downstream applications, turning intricate LLM interactions into straightforward service calls. ApiPark explicitly offers this capability, allowing users to quickly combine AI models with custom prompts to create new APIs, such as sentiment analysis, translation, or data analysis APIs, effectively simplifying AI usage and reducing maintenance costs.

By providing comprehensive prompt engineering and management capabilities, an AI Gateway streamlines LLM development, fosters collaboration, and ensures optimal model performance and consistency across applications.
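The prompt-management ideas above can be sketched with Python's standard string.Template: named prompts, versioning with rollback, templating, and exposing a prompt as its own endpoint. The PromptStore class, the make_prompt_endpoint factory, and the stand-in LLM function are hypothetical. A production store would persist versions in a database rather than in memory.

```python
from __future__ import annotations

from string import Template
from typing import Callable, Optional


class PromptStore:
    """In-memory prompt registry with immutable, 1-based versions (hypothetical)."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}
        self._active: dict[str, int] = {}

    def publish(self, name: str, template: str) -> int:
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        self._active[name] = len(versions)  # the newest version becomes active
        return len(versions)

    def rollback(self, name: str, version: int) -> None:
        if not 1 <= version <= len(self._versions[name]):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self._active[name] = version

    def render(self, name: str, variables: dict, version: Optional[int] = None) -> str:
        v = self._active[name] if version is None else version
        # substitute() raises KeyError if any placeholder has no value.
        return Template(self._versions[name][v - 1]).substitute(variables)


def make_prompt_endpoint(
    store: PromptStore, prompt_name: str, model: str, call_llm: Callable[[str, str], str]
) -> Callable[[dict], dict]:
    """Wrap a stored prompt plus a model as a request handler (e.g. behind /summarize-executive)."""

    def handler(request_body: dict) -> dict:
        prompt = store.render(prompt_name, request_body)
        return {"model": model, "output": call_llm(model, prompt)}

    return handler


if __name__ == "__main__":
    store = PromptStore()
    store.publish("exec-summary", "Summarize for an executive in $max_words words:\n$text")
    store.publish("exec-summary", "In at most $max_words words, list the key decisions:\n$text")
    store.rollback("exec-summary", 1)  # suppose v2 underperformed, so revert

    def fake_llm(model: str, prompt: str) -> str:
        return f"[{model}] {prompt.splitlines()[0]}"  # stand-in for a real LLM call

    summarize_executive = make_prompt_endpoint(store, "exec-summary", "llm-large", fake_llm)
    print(summarize_executive({"text": "Q3 revenue rose 4%.", "max_words": 50}))
    # → {'model': 'llm-large', 'output': '[llm-large] Summarize for an executive in 50 words:'}
```

Because client applications only send variables to the handler, the prompt text and its active version can change without any client code being redeployed.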

3.8 Cost Optimization & Management

The consumption of AI services, particularly from third-party providers, can become a significant operational expense. An AI Gateway offers powerful tools to gain visibility into, control, and optimize these costs.

- Granular Cost Visibility: It provides detailed breakdowns of AI spending, allowing organizations to track costs by specific AI model, by provider, by individual user or team, by application, and over custom time periods. This visibility is crucial for budget allocation and identifying cost hotspots.

- Cost-Aware Routing: As mentioned in Section 3.4, the Gateway can be configured to intelligently route requests to the most cost-effective AI model or provider that still meets performance and quality criteria. For example, using a cheaper, smaller model for less critical tasks, and reserving expensive, high-performing models for premium features.

- Budget Alerts and Quotas: Administrators can set up budget thresholds and receive automated alerts when spending approaches or exceeds these limits. Coupled with quota management (Section 3.3), this provides proactive control over expenditures, preventing unexpected cost overruns.

- Caching for Cost Reduction: By caching responses for repetitive AI queries, the Gateway directly reduces the number of paid invocations to backend AI models, leading to substantial cost savings, especially for high-volume use cases.

- Provider Consolidation and Negotiation: With a centralized view of AI usage and costs across providers, organizations are in a better position to negotiate favorable terms with AI vendors or to consolidate usage to fewer, more cost-effective providers.

Effective cost management through an AI Gateway ensures that organizations can leverage the power of AI responsibly and sustainably without spiraling expenses.
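A cost ledger that converts token counts into spend and raises budget alerts might look like the following. The per-model prices are placeholders, not any provider's actual rates, since real prices differ by provider and change often. The 80% alert threshold is an assumed policy, and the team and model names are hypothetical.

```python
from __future__ import annotations

from collections import defaultdict

# Placeholder prices in USD per 1,000 tokens; NOT real provider rates.
PRICES = {
    "large-model": {"input": 0.01, "output": 0.03},
    "small-model": {"input": 0.0005, "output": 0.0015},
}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    price = PRICES[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1000


class CostLedger:
    """Accumulate spend per team and per model; alert when budgets are crossed."""

    def __init__(self, budgets: dict[str, float], alert_ratio: float = 0.8):
        self.budgets = budgets
        self.alert_ratio = alert_ratio
        self.spend_by_team: dict[str, float] = defaultdict(float)
        self.spend_by_model: dict[str, float] = defaultdict(float)

    def record(self, team: str, model: str, input_tokens: int, output_tokens: int) -> list[str]:
        cost = call_cost(model, input_tokens, output_tokens)
        before = self.spend_by_team[team]
        after = before + cost
        self.spend_by_team[team] = after
        self.spend_by_model[model] += cost

        budget = self.budgets[team]
        alerts = []
        # Each alert fires once, on the call that crosses its threshold.
        if before < self.alert_ratio * budget <= after:
            alerts.append(f"{team}: {after / budget:.0%} of budget used")
        if before < budget <= after:
            alerts.append(f"{team}: budget exceeded")
        return alerts


if __name__ == "__main__":
    ledger = CostLedger({"support": 0.06})
    for _ in range(3):
        print(ledger.record("support", "large-model", 1000, 500))
    # → []
    # → ['support: 83% of budget used']
    # → ['support: budget exceeded']
```

Because this ledger records spend after each call, it can only alert, not block. Preventing overruns also requires a pre-request quota check of the kind described in Section 3.3.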

3.9 Model Versioning & A/B Testing

AI models are constantly evolving. New versions are released, existing ones are fine-tuned, and performance can change. Managing this continuous iteration while maintaining application stability is a complex challenge that an AI Gateway addresses directly.

- Seamless Model Versioning: The Gateway allows organizations to manage different versions of the same AI model. When a new version is deployed, the Gateway can gradually shift traffic to it, allowing for real-world testing before full rollout. If issues arise, it can quickly revert traffic to the previous stable version. This enables continuous integration and continuous deployment (CI/CD) practices for AI models without disrupting end-user applications.

- A/B Testing Models: Beyond just versioning, the Gateway facilitates true A/B testing of different AI models or configurations. For example, an organization might want to compare the performance of a proprietary sentiment analysis model against a commercially available one, or test two different fine-tuned versions of an LLM. The Gateway can route a percentage of incoming requests (e.g., 90% to Model A, 10% to Model B) and collect metrics on their respective outputs (e.g., accuracy, latency, user satisfaction). This data-driven approach allows for informed decisions on which models to deploy at scale.

- Canary Deployments: A progressive rollout strategy, closely related to A/B testing, in which a new model version (the "canary") initially receives only a small subset of users or traffic. The Gateway monitors the canary's performance, and if all looks well, it gradually increases the traffic to the new version. If any issues are detected, the traffic can be immediately rerouted back to the old version with minimal impact.

These capabilities are crucial for agile AI development, allowing teams to iterate rapidly, experiment safely, and continuously improve their AI-powered features without risking production stability.
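Traffic splitting for model A/B tests and canary rollouts is often done by hashing a stable identifier, so that each user consistently sees the same variant. This sketch assumes a user ID is available on every request. The function name, experiment names, and split fractions are illustrative only.

```python
from __future__ import annotations

import hashlib


def assign_variant(user_id: str, experiment: str, splits: dict[str, float]) -> str:
    """Deterministically map a user to a variant; split fractions should sum to 1."""
    if not splits:
        raise ValueError("splits must not be empty")
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Keep 53 hash bits so the division is exact and the point lies in [0, 1).
    point = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    cumulative = 0.0
    for variant, share in splits.items():
        cumulative += share
        if point < cumulative:
            return variant
    return list(splits)[-1]  # absorb floating-point shortfall in the final bucket


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]

    # A/B test: 90% of users on model A, 10% on model B.
    ab = {"model-a": 0.9, "model-b": 0.1}
    share_b = sum(assign_variant(u, "sentiment-ab", ab) == "model-b" for u in users) / len(users)
    print(f"model-b share: {share_b:.3f}")  # close to 0.100

    # Canary ramp from 5% to 25% of users.
    at_5 = {u for u in users if assign_variant(u, "llm-v2", {"stable": 0.95, "canary": 0.05}) == "canary"}
    at_25 = {u for u in users if assign_variant(u, "llm-v2", {"stable": 0.75, "canary": 0.25}) == "canary"}
    print(at_5 <= at_25)  # → True
```

With two variants, raising the canary's share only moves additional users onto it, so users already on the canary stay there as the rollout widens. Rolling back is a matter of setting the canary's share to zero.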

3.10 Tenant & Team Management (Multi-tenancy)

For larger enterprises or SaaS providers offering AI capabilities to multiple customers, managing access and resources for diverse teams or tenants is a critical requirement. An AI Gateway with multi-tenancy support provides the necessary isolation and control.

- Independent API and Access Permissions for Each Tenant: A multi-tenant AI Gateway allows the creation of distinct "tenants" or "teams," each operating in its own logical space. Each tenant can have its own independent applications, API keys, user configurations, specific AI model access permissions, and even security policies. This ensures that the data and operations of one team are completely isolated from others, preventing data leakage and unauthorized access. ApiPark is designed with this in mind, enabling the creation of multiple teams (tenants), each with independent applications, data, user configurations, and security policies.

- Centralized Resource Sharing and Management: While tenants maintain logical isolation, they often share underlying applications and infrastructure resources (e.g., the same pool of AI models or compute resources). The Gateway efficiently manages this sharing, improving resource utilization and reducing operational costs for the platform provider.

- API Service Sharing within Teams: The platform allows for a centralized display of all available API services (including AI services) within an organization. This makes it incredibly easy for different departments, teams, or projects to discover, subscribe to, and utilize the required API services without needing direct knowledge of their backend implementation. This fosters internal collaboration and accelerates development by promoting API reusability. ApiPark explicitly highlights this feature, making it easy for different departments and teams to find and use the required API services.

- Customizable Dashboards and Reporting: Each tenant can typically access their own dashboards and reporting tools, showing their specific usage metrics, costs, and performance data, without seeing information from other tenants.

Multi-tenancy capabilities in an AI Gateway are essential for enterprises building internal AI platforms, or for SaaS companies offering AI features to their customer base, ensuring secure, scalable, and isolated access to AI resources.
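Tenant isolation at the gateway usually starts with two checks before any request is forwarded: resolve each API key to exactly one tenant, then confirm that tenant's model permissions. The sketch below assumes in-memory tenant definitions. The Tenant and TenantRegistry names, the keys, and the model names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Tenant:
    name: str
    allowed_models: set[str]
    api_keys: set[str] = field(default_factory=set)


class TenantRegistry:
    """Resolve API keys to tenants and enforce per-tenant model permissions."""

    def __init__(self, tenants: list[Tenant]):
        self._by_key: dict[str, Tenant] = {}
        for tenant in tenants:
            for key in tenant.api_keys:
                if key in self._by_key:
                    raise ValueError("an API key may belong to only one tenant")
                self._by_key[key] = tenant

    def authorize(self, api_key: str, model: str) -> Tenant:
        tenant = self._by_key.get(api_key)
        if tenant is None:
            raise PermissionError("unknown API key")
        if model not in tenant.allowed_models:
            raise PermissionError(f"tenant {tenant.name!r} may not call {model!r}")
        # Downstream, usage logs and quotas would be keyed by tenant.name so that
        # each tenant's dashboards only ever show its own data.
        return tenant


if __name__ == "__main__":
    registry = TenantRegistry([
        Tenant("finance", {"small-model"}, {"key-fin-1"}),
        Tenant("research", {"small-model", "large-model"}, {"key-res-1"}),
    ])
    print(registry.authorize("key-res-1", "large-model").name)  # → research
    try:
        registry.authorize("key-fin-1", "large-model")
    except PermissionError as err:
        print(err)  # → tenant 'finance' may not call 'large-model'
```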

4. Benefits of Implementing an AI Gateway

The adoption of an AI Gateway is not merely a technical choice; it's a strategic decision that delivers profound benefits across the entire organization, from development teams to operations and even business stakeholders.

4.1 Simplified AI Integration & Development

One of the most immediate and impactful benefits of an AI Gateway is the dramatic simplification of integrating diverse AI models into applications. Without a gateway, developers are forced to contend with a fragmented landscape of proprietary APIs, inconsistent data formats, and varied authentication mechanisms from multiple AI providers. This leads to substantial boilerplate code, increased development time, and a steep learning curve for each new AI service.

An AI Gateway acts as a powerful abstraction layer. By providing a single, unified API endpoint and normalizing requests and responses, it shields developers from the underlying complexities. This means a developer can interact with a standardized /ai/predict endpoint, and the gateway handles all the intricate translations to OpenAI, Google AI, or a custom model. This drastically reduces the time and effort required to integrate AI capabilities, accelerates feature development, and allows developers to focus on building core application logic rather than wrestling with AI provider specifics. Furthermore, it facilitates rapid prototyping and experimentation, enabling teams to quickly test and switch between different AI models or providers with minimal code changes, fostering a culture of innovation and agility.
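The translation step behind a unified endpoint amounts to one adapter per provider. The sketch below maps a single hypothetical gateway request shape onto two provider formats: a body shaped like OpenAI's Chat Completions API and one shaped like Google's Gemini generateContent API. It then pulls the generated text back out of their responses. The payloads are deliberately simplified (no system prompts, streaming, or safety settings), and the field names should be checked against each provider's current API reference.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class UnifiedRequest:
    """The one request shape clients send to the gateway (hypothetical)."""
    model: str
    prompt: str
    max_tokens: int = 256


def to_openai_chat(req: UnifiedRequest) -> dict:
    # Simplified body in the style of OpenAI's Chat Completions API.
    return {
        "model": req.model,
        "messages": [{"role": "user", "content": req.prompt}],
        "max_tokens": req.max_tokens,
    }


def to_gemini(req: UnifiedRequest) -> dict:
    # Simplified body in the style of Gemini's generateContent API; there the
    # model name is part of the request URL rather than the JSON body.
    return {
        "contents": [{"role": "user", "parts": [{"text": req.prompt}]}],
        "generationConfig": {"maxOutputTokens": req.max_tokens},
    }


REQUEST_ADAPTERS: dict[str, Callable[[UnifiedRequest], dict]] = {
    "openai": to_openai_chat,
    "google": to_gemini,
}

# Where each provider places the generated text in a non-streaming response.
RESPONSE_EXTRACTORS: dict[str, Callable[[dict], str]] = {
    "openai": lambda body: body["choices"][0]["message"]["content"],
    "google": lambda body: body["candidates"][0]["content"]["parts"][0]["text"],
}


def translate_request(provider: str, req: UnifiedRequest) -> dict:
    adapter = REQUEST_ADAPTERS.get(provider)
    if adapter is None:
        raise ValueError(f"no adapter registered for provider {provider!r}")
    return adapter(req)


def normalize_response(provider: str, body: dict) -> dict:
    """Return the gateway's uniform response shape regardless of provider."""
    return {"provider": provider, "text": RESPONSE_EXTRACTORS[provider](body)}


if __name__ == "__main__":
    req = UnifiedRequest(model="example-model", prompt="Classify: 'great service'", max_tokens=16)
    print(translate_request("google", req)["generationConfig"])  # → {'maxOutputTokens': 16}
    fake_openai_reply = {"choices": [{"message": {"role": "assistant", "content": "positive"}}]}
    print(normalize_response("openai", fake_openai_reply))
    # → {'provider': 'openai', 'text': 'positive'}
```

Supporting another provider then means registering one more request adapter and one more extractor, while client code keeps calling the same endpoint.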

4.2 Enhanced Security & Compliance

Security is a non-negotiable imperative, especially when dealing with AI models that often process sensitive personal or proprietary data. An AI Gateway significantly strengthens the security posture and simplifies compliance efforts. Because all AI traffic passes through a single point, robust security policies can be enforced consistently there, which would be difficult if those policies were scattered across individual microservices.

Key security benefits include: centralized authentication and authorization, ensuring only legitimate users and applications access AI resources; powerful data redaction and anonymization capabilities that automatically strip sensitive Personally Identifiable Information (PII) from inputs and outputs, crucial for GDPR, HIPAA, and other privacy regulations; and protection against AI-specific threats like prompt injection attacks. It acts as an intelligent firewall, validating inputs, sanitizing outputs, and encrypting data in transit. This comprehensive approach minimizes the risk of data breaches, ensures regulatory compliance, and instills confidence in the secure use of AI throughout the enterprise.
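Here is a minimal illustration of the redaction step. The gateway scans text against a list of patterns and replaces each match with a typed placeholder before the prompt leaves the organization; the same function can be applied to model output. The three regular expressions are simplified, US-centric assumptions. Real deployments typically combine validated patterns with ML-based entity recognition, because regexes alone will miss many forms of PII.

```python
from __future__ import annotations

import re

# Simplified, illustrative patterns (US-style SSN and phone formats assumed).
# Order matters: more specific patterns run before broader ones.
PII_PATTERNS: list[tuple[str, re.Pattern[str]]] = [
    ("EMAIL", re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("PHONE", re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b")),
]


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace PII matches with [LABEL] placeholders; also return counts for audit logs."""
    counts: dict[str, int] = {}
    for label, pattern in PII_PATTERNS:
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com or 555-123-4567; SSN 123-45-6789."
    print(redact(prompt))
    # → ('Contact [EMAIL] or [PHONE]; SSN [SSN].', {'EMAIL': 1, 'SSN': 1, 'PHONE': 1})
```

Logging only the counts, not the matched values, lets the audit trail record that redaction happened without storing the sensitive data itself.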

4.3 Improved Performance & Reliability

The performance and reliability of AI-powered applications directly impact user experience and business operations. An AI Gateway is engineered to optimize both. Its intelligent routing capabilities ensure that requests are always directed to the most appropriate and available AI model, considering factors like latency, load, and geographic proximity. This dynamic routing minimizes response times and maximizes throughput.

Features like caching for repetitive AI queries dramatically reduce latency and offload the burden from backend models, leading to faster responses and a more fluid user experience. Furthermore, robust load balancing mechanisms distribute traffic efficiently across multiple model instances or providers, preventing bottlenecks and ensuring optimal resource utilization. Crucially, the Gateway's built-in failover capabilities automatically detect unresponsive models or providers and seamlessly reroute traffic to healthy alternatives. This resilience ensures high availability, preventing service interruptions and maintaining continuous operation of AI-driven features, even in the face of underlying model failures or outages.

4.4 Significant Cost Savings & Optimization

AI services, especially those consumed from third-party providers (like large language models billed per token), can accrue substantial costs if not carefully managed. An AI Gateway provides dedicated tools for cost visibility, control, and optimization, which can lead to significant financial savings.

It offers granular cost tracking, breaking down expenses by model, user, application, or project, providing transparent insights into spending patterns. More importantly, it enables active cost optimization through intelligent routing strategies: directing requests to the cheapest available model that meets performance criteria, or utilizing less expensive models for less critical tasks. Caching repetitive queries directly reduces the number of paid invocations to backend AI services. Additionally, features like rate limiting and quota management prevent accidental or malicious overspending by enforcing usage limits. By centralizing control and providing actionable insights, an AI Gateway transforms AI consumption from a potential cost center into a strategically managed resource, ensuring optimal value for money.

4.5 Better Governance & Control

For enterprises adopting AI at scale, establishing clear governance and maintaining control over AI usage are paramount. An AI Gateway serves as the central command center for all AI interactions, providing comprehensive oversight and enabling consistent policy enforcement.

It offers a unified platform for managing model access, setting usage policies, and monitoring all AI traffic. This central control point ensures that AI models are used responsibly, ethically, and in alignment with organizational guidelines. Features like audit trails, detailed logging, and comprehensive analytics provide complete transparency into who is using which models, for what purpose, and with what results. This level of governance is crucial for risk management, ensuring accountability, and maintaining compliance with internal policies and external regulations. It also streamlines the management of different model versions and prompts, allowing for controlled rollouts and rapid experimentation within defined parameters.

4.6 Accelerated Innovation & Experimentation

In the rapidly evolving AI landscape, the ability to innovate quickly and experiment safely is a key competitive advantage. An AI Gateway empowers teams to do just that. By abstracting away the complexities of AI model integration, it frees developers to focus on designing innovative AI-powered features rather than on low-level API mechanics.

The Gateway's support for prompt versioning, A/B testing of different models or prompts, and canary deployments allows for rapid, low-risk experimentation. Developers can quickly test new models, iterate on prompts, and compare performance metrics in a controlled environment, making data-driven decisions about which AI capabilities to deploy. This agility not only accelerates the time-to-market for new AI features but also fosters a culture of continuous improvement and innovation, enabling organizations to stay at the forefront of AI adoption and leverage emerging technologies quickly and effectively.


5. AI Gateway vs. Traditional API Gateway: A Detailed Comparison

While an AI Gateway shares a common lineage with the traditional API Gateway, its specialization for AI workloads introduces significant distinctions. Understanding these differences is crucial for appreciating the unique value proposition of an AI Gateway.

Both gateways serve as intermediaries, managing and securing API traffic. However, the nature of the "API" and the "traffic" they handle fundamentally differs, leading to divergent feature sets and areas of focus. A traditional API Gateway is designed for generalized HTTP/REST or gRPC traffic, where the payload is typically structured data, and the primary concerns are routing, authentication, and rate limiting for deterministic services. An AI Gateway, on the other hand, deals with the unique characteristics of AI inference, where payloads can be diverse (text, images, audio), the "logic" is often encapsulated in prompts, responses can be probabilistic, and operational costs are highly granular (e.g., per token).

Let's break down the comparison in detail, followed by a summary table.

Traditional API Gateway Focus:

- Protocol Handling: Primarily HTTP/REST, gRPC.

- Data Transformation: Basic JSON/XML transformation, header manipulation.

- Routing: Path-based, host-based, simple load balancing.

- Security: Authentication (API keys, JWT, OAuth), authorization (ACLs), basic threat protection.

- Monitoring: Request/response logs, latency, error rates, throughput.

- Caching: HTTP response caching (based on status codes, headers).

- Rate Limiting: Request count per time unit.

- Core Abstraction: Microservices architecture, hiding service discovery, consolidating APIs.

- Lifecycle Management: General API lifecycle (design, publish, invoke, deprecate).

AI Gateway / LLM Gateway Focus (building upon API Gateway):

- Protocol Handling: Extends to AI-specific SDKs, specialized model APIs (e.g., gRPC for ML frameworks), and often abstracts these into a unified REST interface.

- Data Transformation: Advanced AI-specific payload transformation (e.g., converting generic text input into a specific LLM prompt format, handling base64 encoded images, normalizing varied model outputs), PII redaction.

- Routing: Dynamic and intelligent routing based on AI-specific criteria: model cost, latency, load, provider availability, model version, specific prompt characteristics, A/B testing splits.

- Security: AI-specific authentication/authorization for models, input sanitization for prompt injection, output filtering, PII/sensitive data redaction on both input and output.

- Monitoring: Enhanced metrics including token usage (for LLMs), inference duration, model version, confidence scores, hallucination rates, cost per inference/token.

- Caching: AI-specific content caching (e.g., caching LLM responses for identical prompts), sophisticated cache invalidation.

- Rate Limiting: Granular rate limiting based on requests, tokens, or compute units. Supports complex quota management per user/team for AI resources.

- Core Abstraction: Abstraction of specific AI models/providers, prompt management, model versioning, intelligent model orchestration.

- Lifecycle Management: End-to-end API lifecycle management for AI services, including prompt versioning, model A/B testing, and AI-specific deployment strategies. ApiPark assists with managing the entire lifecycle of APIs, including design, publication, invocation, and decommission, regulating API management processes, traffic forwarding, load balancing, and versioning of published APIs, extending this to AI services.

Here's a detailed comparison table to highlight the key differences:

| Feature/Aspect | Traditional API Gateway | AI Gateway (including LLM Gateway) |
|---|---|---|
| Primary Use Case | Managing microservices, REST APIs, general backend calls | Managing AI models, LLMs, ML inference services, enabling intelligent applications |
| Core Abstraction | Backend microservices, service discovery | Diverse AI models/providers, specific model APIs, prompt management, model versioning |
| Data Payloads | Generic structured data (JSON, XML) | Text, images, audio, video, structured data; often sensitive inputs/outputs |
| Request/Response Format | Standard HTTP methods, JSON/XML | Standardizes varied AI model APIs into a unified format; handles token-based communication for LLMs |
| Routing Logic | Path, host, header, simple load balancing | Intelligent, dynamic routing based on cost, latency, model performance, availability, A/B testing |
| Authentication | API Keys, JWT, OAuth2 | Extends to AI model-specific authentication tokens; granular access to specific models/prompts |
| Authorization | Role-based access control (RBAC), ACLs | RBAC, ACLs for AI models; specific permissions for prompt versions, tenant-specific access |
| Security Enhancements | Basic WAF, SSL termination, IP whitelisting | PII redaction, input sanitization (prompt injection defense), output filtering, data privacy compliance |
| Rate Limiting | Requests per second/minute | Requests, tokens per second/minute, compute units; granular quotas per user/team |
| Caching | HTTP response caching | AI-specific content caching (e.g., LLM outputs for common prompts), intelligent invalidation |
| Monitoring & Logging | Standard HTTP metrics, request/response logs | Enhanced with AI-specific metrics (token counts, inference time, model version, confidence scores, hallucination rates), cost metrics |
| Prompt Management | Not applicable | Centralized prompt storage, versioning, templating, A/B testing, encapsulation into REST APIs |
| Model Lifecycle | Not applicable (manages service endpoints) | Model versioning, canary deployments, A/B testing of different models/prompts |
| Cost Optimization | Basic usage tracking | Granular cost tracking by token/inference, cost-aware routing, budget alerts |
| AI-Specific Features | None | Model abstraction, prompt engineering, PII redaction, token-based metrics, dynamic model selection |

In conclusion, while an AI Gateway performs many of the foundational duties of an API Gateway, it distinguishes itself through its deep, native understanding of AI workloads. It is not just a proxy; it is an intelligent orchestrator specifically designed to streamline the management, security, and optimization of diverse AI models, making it an indispensable component in any AI-driven architecture. The evolution from a general-purpose API Gateway to a specialized AI Gateway reflects the growing maturity and unique demands of integrating artificial intelligence into enterprise applications.

6. Use Cases and Applications of an AI Gateway

The versatility and specialized capabilities of an AI Gateway make it invaluable across a wide spectrum of industries and application types. It addresses common pain points in AI integration, enabling organizations to deploy, manage, and scale AI effectively.

6.1 Enterprise AI Platforms

Large enterprises often have numerous departments and teams, each developing or consuming various AI models. Without an AI Gateway, this leads to a fragmented and chaotic AI landscape where each team reinvents the wheel for integration, security, and cost management. An AI Gateway provides a centralized, consistent, and governed platform for all internal AI services.

- Unified Access: It offers a single point of access for all enterprise AI models, regardless of whether they are proprietary models trained internally or third-party cloud AI services. This promotes discoverability and reuse of AI capabilities across departments.

- Standardized Governance: It enforces consistent security policies, access controls, rate limits, and data privacy rules across the entire enterprise AI ecosystem, simplifying compliance and risk management.

- Cost Allocation: With granular cost tracking, enterprises can accurately attribute AI usage and expenses to specific projects, departments, or cost centers, enabling better budget management and accountability.

- Accelerated Internal Development: Developers across the enterprise can rapidly integrate AI capabilities without needing to understand the intricacies of each model's API, speeding up the development of AI-powered internal tools and applications.

6.2 SaaS Applications Leveraging Multiple AI Services

SaaS providers are increasingly embedding AI features into their products (e.g., smart content generation, predictive analytics, intelligent chatbots). These applications often rely on a combination of different AI models (e.g., an LLM for text generation, a specialized NLP model for entity recognition, and a custom ML model for recommendations).

- Vendor Agnostic Integration: An AI Gateway allows SaaS providers to easily switch between different AI vendors (e.g., moving from OpenAI to Cohere, or vice-versa) based on performance, cost, or feature set, without requiring extensive changes to the core application. This prevents vendor lock-in.

- Optimal Model Selection: The Gateway can intelligently route requests to the most appropriate or cost-effective model in real-time. For example, a premium tier customer might get access to a high-performing, expensive LLM, while a basic tier uses a cheaper, slightly less powerful one.

- Consistent API for Developers: For developers building features within the SaaS product, the AI Gateway provides a stable and unified API for all AI functions, simplifying development and maintenance.

- Scalability and Resilience: As the SaaS platform scales, the AI Gateway handles load balancing and failover, ensuring that AI features remain highly available and responsive to millions of users.

6.3 AI-Powered Chatbots and Virtual Assistants

Chatbots and virtual assistants heavily rely on various AI services for natural language understanding (NLU), natural language generation (NLG), sentiment analysis, and knowledge retrieval. An LLM Gateway is particularly vital here.

- Prompt Orchestration: Manages complex conversational prompts, context handling, and prompt versioning for the underlying LLMs, ensuring consistent and relevant responses.

- Multi-Model Integration: Seamlessly switches between different LLMs or specialized NLU models based on the complexity or domain of the user's query (e.g., a simple FAQ answered by a small, fast model; complex troubleshooting handled by a powerful, larger LLM).

- Cost Management: Monitors token usage and routes conversational turns to the most cost-efficient LLM, significantly reducing the operational cost of running high-volume chatbots.

- Security for Sensitive Conversations: Redacts PII from user inputs and bot outputs, ensuring privacy in customer interactions and preventing sensitive data from being logged or processed by third-party LLMs.

- Performance Optimization: Caches common queries and responses, providing instant answers for frequently asked questions, improving the responsiveness and user experience of the chatbot.
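As a rough illustration of the PII-redaction step, the sketch below masks email addresses and North American style phone numbers before text is forwarded to a third-party LLM or written to logs. The two regular expressions are simplified assumptions; production PII detection needs much broader coverage (names, addresses, national IDs) and is usually handled by dedicated tooling.

```python
import re

# Illustrative patterns only; they will miss many real-world PII formats.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    # Optional country code, then 3-3-4 digits with optional separators/parentheses.
    ("PHONE", re.compile(r"(?:\+?\d{1,3}[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}\b")),
]


def redact(text: str) -> str:
    """Replace each PII match with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text
```

A gateway would apply the same function to user inputs on the way in and to model outputs before they are logged, so neither the provider nor the log store sees the raw values.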

6.4 Developer Platforms Offering AI Capabilities

Platforms that offer AI tools or services to external developers (e.g., cloud providers, specialized API companies) can leverage an AI Gateway to manage their offerings.

- API Productization: The Gateway allows internal AI models and prompts to be quickly encapsulated into well-defined, robust REST APIs, making it easy to expose them as products to a developer community. As highlighted earlier, ApiPark supports prompt encapsulation into a REST API, letting users combine AI models with custom prompts to create new, ready-to-use APIs.

- Tenant-Specific Customization: Each developer or customer can have their own isolated environment with specific access permissions, rate limits, and usage quotas, managed centrally by the Gateway.

- Monetization and Billing: Provides the infrastructure for metering API calls, token usage, and other AI-specific metrics, enabling accurate billing and monetization strategies for AI services.

- Developer Portal: The Gateway can integrate with a developer portal to provide documentation, SDKs, and a seamless onboarding experience for external developers. ApiPark is described as an all-in-one AI gateway and API developer portal, further emphasizing its utility in this context.
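Tenant-specific rate limits and usage metering, as described above, are often built on a token bucket per tenant. The sketch below assumes hypothetical quota values and a simple in-memory meter; a real gateway would persist usage and share limiter state across instances. The injectable `clock` exists only to make the behavior easy to test.

```python
import time
from collections import defaultdict
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity` requests, refilled at `rate` requests per second."""
    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill proportionally to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class TenantLimiter:
    """One bucket per tenant, plus a per-tenant token meter for billing."""
    def __init__(self, quotas: dict[str, tuple[float, float]],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.buckets = {t: TokenBucket(cap, rate, clock) for t, (cap, rate) in quotas.items()}
        self.usage: dict[str, int] = defaultdict(int)

    def allow(self, tenant: str) -> bool:
        bucket = self.buckets.get(tenant)
        return bucket is not None and bucket.allow()  # unknown tenants are rejected

    def record(self, tenant: str, tokens_used: int) -> None:
        # Called after the model responds, when the actual token count is known.
        self.usage[tenant] += tokens_used
```

Separating `allow` (before the call) from `record` (after the call) reflects the fact that LLM token counts are only known once the response arrives.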

6.5 MLOps Pipelines and Production Deployment

In machine learning operations (MLOps), deploying and managing models in production is a complex process. An AI Gateway acts as a critical component for productionizing AI models.

- Model Agnostic Deployment: Decouples the application from the specific ML framework or deployment environment of the model, enabling easier model updates and migrations.

- A/B Testing and Canary Releases: Facilitates the safe rollout of new model versions through A/B testing and canary deployments, minimizing risk and allowing for continuous improvement of production models.

- Monitoring and Feedback Loops: Provides detailed performance metrics and logs for production models, feeding into MLOps monitoring and retraining pipelines, ensuring models remain accurate and performant over time.

- Scalability for Inference: Handles high volumes of inference requests, distributing traffic across model instances as demand changes so that latency stays low.
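A common way to implement the canary releases mentioned above is a deterministic, hash-based traffic split. This sketch assumes the caller supplies a stable key such as a user ID; the version labels are hypothetical.

```python
import hashlib


def pick_model_version(request_key: str, stable: str, canary: str, canary_percent: float) -> str:
    """Route roughly `canary_percent`% of keys to the canary version.

    Hashing a stable key (e.g. a user ID) pins each user to one version,
    which keeps A/B comparisons between versions clean.
    """
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # uniform-ish value in 0..9999
    return canary if bucket < canary_percent * 100 else stable
```

Raising `canary_percent` step by step (for example 1, 10, 50, 100) while watching the gateway's metrics gives a gradual rollout; setting it back to 0 is an instant rollback.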

By integrating an AI Gateway into these diverse use cases, organizations can unlock the full potential of AI, transforming complex integrations into streamlined, secure, and cost-effective operations, ultimately driving innovation and business value.

7. Choosing the Right AI Gateway Solution

Selecting the appropriate AI Gateway solution is a strategic decision that can significantly impact an organization's AI initiatives. The market offers a range of options, from open-source projects to commercial offerings, each with its own strengths and considerations. A thoughtful evaluation based on specific organizational needs is crucial.

7.1 Open-source vs. Commercial Solutions

- Open-source AI Gateways: Solutions like ApiPark, which is open-sourced under the Apache 2.0 license, offer a high degree of flexibility, transparency, and community-driven development.

- Pros: Lower initial cost (no licensing fees), full control over the codebase, highly customizable, an active community that can speed up bug fixes and feature development, and no vendor lock-in.

- Cons: Requires in-house expertise for deployment, maintenance, and customization; responsibility for security patches and updates falls on the user; may lack enterprise-grade features out-of-the-box (e.g., advanced analytics, dedicated support SLAs).

- Ideal for: Startups, organizations with strong in-house engineering teams, those requiring deep customization, or those on a tight budget.

- Commercial AI Gateway Products: These are typically comprehensive, managed solutions offered by vendors.

- Pros: Out-of-the-box enterprise features, professional technical support, managed services reducing operational overhead, robust security and compliance certifications, faster time-to-value.

- Cons: Higher recurring costs (licensing, subscription fees), potential for vendor lock-in, less flexibility for deep customization, reliance on vendor's roadmap.

- Ideal for: Large enterprises, organizations with limited DevOps resources, those prioritizing compliance and guaranteed support, or those seeking a fully managed solution.

- It's worth noting that some open-source projects, like APIPark, offer a commercial version with advanced features and professional technical support for leading enterprises, providing a hybrid approach that scales with business needs.

7.2 Scalability and Performance

The chosen AI Gateway must be capable of handling the projected traffic volume for your AI services, both current and future.

- Throughput (TPS): Evaluate the gateway's ability to process a high number of requests per second (TPS). High-performance gateways are crucial for real-time AI applications. For example, ApiPark reports performance rivaling Nginx, with over 20,000 TPS on an 8-core CPU and 8GB of memory, and it supports cluster deployment for large-scale traffic.

- Latency: The gateway should introduce minimal latency to AI inference requests. Measure the overhead added by the gateway to ensure it doesn't negatively impact user experience.

- Elasticity: Can the gateway scale horizontally (adding more instances) to accommodate peak loads and scale down during off-peak times to optimize costs?

- Fault Tolerance: How does the gateway handle failures? Does it offer built-in redundancy, failover mechanisms, and resilience features to ensure continuous availability?
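One way to quantify the latency criterion above is to run the same workload directly against the model endpoint and then through the gateway, and compare tail percentiles. The sketch assumes you have already collected both sets of latencies in milliseconds; it uses the nearest-rank percentile definition.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile for p in (0, 100]."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(p / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def gateway_overhead(direct_ms: list[float], via_gateway_ms: list[float]) -> dict[str, float]:
    """Latency added by the gateway at p50/p95/p99 for the same workload."""
    return {
        f"p{p}": percentile(via_gateway_ms, p) - percentile(direct_ms, p)
        for p in (50, 95, 99)
    }
```

Comparing percentiles rather than averages matters because gateway overhead often shows up in the tail (p95/p99) under load, not in the median.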

7.3 Feature Set Alignment with Needs

Carefully map the gateway's features against your specific organizational requirements for AI management.

- Core AI Features: Does it support prompt management, model versioning, intelligent routing based on AI metrics, and cost optimization for LLMs?

- Security: Does it offer PII redaction, input sanitization, and robust access controls essential for your data sensitivity and compliance needs?

- Observability: Does it provide granular logging, monitoring, and analytics tailored for AI workloads, allowing you to debug, audit, and optimize effectively?

- Integration Ecosystem: Does it integrate well with your existing identity providers, monitoring tools, and CI/CD pipelines?

7.4 Ease of Deployment and Management

The operational overhead associated with deploying and managing the AI Gateway itself is a significant factor.

- Deployment Complexity: How easy is it to set up? Does it offer quick-start guides, Docker images, or Kubernetes manifests? ApiPark, for example, advertises deployment in about 5 minutes with a single command.

- Management Interface: Does it provide an intuitive UI for configuration, monitoring, and policy management? Or does it rely heavily on command-line tools and API calls?

- Documentation and Community: Is there comprehensive documentation available? Is there an active community (for open-source) or responsive vendor support (for commercial) to assist with issues and questions?

7.5 Security Posture

Beyond the features mentioned, assess the overall security posture of the gateway solution.

- Vulnerability Management: How are security vulnerabilities identified and patched? What is the update cycle?

- Compliance Certifications: Does the solution (especially commercial ones) hold relevant security and compliance certifications (e.g., SOC 2, ISO 27001)?

- Auditing Capabilities: Does it provide robust auditing logs to track all administrative actions and configuration changes?

By thoroughly evaluating these aspects, organizations can make an informed decision and select an AI Gateway that not only meets their current needs but also provides a scalable, secure, and future-proof foundation for their evolving AI strategy.

8. The Future of AI Gateways

The landscape of Artificial Intelligence is continuously evolving, and with it, the role and capabilities of the AI Gateway are poised for significant advancements. As AI models become more sophisticated, ubiquitous, and integrated into critical business processes, the gateway will adapt to handle new challenges and opportunities.

8.1 More Intelligent Routing and Dynamic Model Selection

The current intelligent routing capabilities of AI Gateways, while powerful, will become even more nuanced. Future gateways will likely incorporate advanced machine learning algorithms themselves to make real-time, dynamic decisions about which AI model to use for a given request. This could involve:

- Context-Aware Routing: Selecting models based on deeper semantic understanding of the input, user profile, or historical interaction patterns. For instance, routing a customer service query to a specialized model trained on specific product knowledge, or a general-purpose LLM for open-ended questions.

- Performance Prediction: Using predictive analytics to forecast model latency and cost based on current load, network conditions, and model characteristics, allowing for proactive routing decisions.

- Hybrid Model Orchestration: Seamlessly splitting a single request across multiple specialized AI models (e.g., one model for entity extraction, another for sentiment, and an LLM for summarization) and orchestrating their combined outputs for a holistic response.

- Reinforcement Learning for Optimization: Gateways could employ reinforcement learning to continuously optimize routing strategies based on real-world performance feedback, balancing cost, latency, and output quality.
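The reinforcement-learning idea can be illustrated with its simplest form, an epsilon-greedy multi-armed bandit that learns which model earns the best reward. This is a sketch only: the `reward` function and its weights are hypothetical ways of folding quality, cost and latency into one score, and a production system would need per-request context and non-stationary updates.

```python
import random
from typing import Optional


def reward(quality: float, cost_usd: float, latency_s: float,
           cost_weight: float = 10.0, latency_weight: float = 0.5) -> float:
    """Hypothetical scalar reward: higher quality is good, cost and latency are penalized."""
    return quality - cost_weight * cost_usd - latency_weight * latency_s


class EpsilonGreedyRouter:
    """Epsilon-greedy bandit over candidate models."""
    def __init__(self, models: list[str], epsilon: float = 0.1, seed: Optional[int] = None) -> None:
        self.models = models
        self.epsilon = epsilon
        self.rng = random.Random(seed)
        self.counts = {m: 0 for m in models}
        self.values = {m: 0.0 for m in models}  # running mean reward per model

    def choose(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.models)  # explore
        return max(self.models, key=lambda m: self.values[m])  # exploit

    def update(self, model: str, r: float) -> None:
        self.counts[model] += 1
        self.values[model] += (r - self.values[model]) / self.counts[model]  # incremental mean
```

The `epsilon` parameter controls the trade-off the text describes: a small fraction of traffic keeps exploring alternatives so that the router notices when a cheaper or faster model starts performing better.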

8.2 Enhanced Security for AI and Countering Adversarial Attacks

As AI systems become more critical, they also become more attractive targets for malicious actors. Future AI Gateways will fortify their security capabilities to address emerging AI-specific threats:

- Advanced Prompt Injection Defense: Moving beyond basic sanitization to more sophisticated AI-powered detection of malicious prompts or attempts to jailbreak LLMs.

- Data Poisoning Prevention: Implementing mechanisms to detect and block poisoned data before it reaches AI models, helping protect the integrity of training and fine-tuning datasets.

- Adversarial Attack Detection: Identifying and mitigating inputs designed to trick or mislead AI models (e.g., subtle perturbations in images or text that cause misclassification).

- Explainable AI (XAI) for Security: Integrating XAI techniques to help trace and understand why an AI model generated a particular (potentially malicious) response, aiding in incident response.

- Confidential Computing Integration: Leveraging confidential computing environments so that sensitive AI inputs and model inference are processed in protected memory enclaves that are isolated even from the cloud provider.
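For contrast with the AI-powered defenses described above, here is the kind of basic, pattern-based prompt screening that current gateways often start from. It is deliberately simple and easy to bypass (paraphrases, other languages, encodings); the phrase list is a hypothetical example, not a vetted rule set.

```python
import re
import unicodedata

# Hypothetical baseline patterns; trivially evaded by rephrasing.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"(reveal|print|show) (your|the) (system prompt|hidden instructions)", re.IGNORECASE),
    re.compile(r"\byou are now\b", re.IGNORECASE),
]


def screen_prompt(text: str) -> list[str]:
    """Return the patterns that matched (an empty list means no hit)."""
    normalized = unicodedata.normalize("NFKC", text)  # fold look-alike Unicode forms
    normalized = " ".join(normalized.split())         # collapse whitespace padding tricks
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(normalized)]
```

The weakness of this approach is exactly why the section anticipates model-based detection: a fixed pattern list only catches phrasings someone has already thought of.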

8.3 Integration with MLOps and AIOps Ecosystems

The boundary between the AI Gateway and broader MLOps (Machine Learning Operations) and AIOps (Artificial Intelligence for IT Operations) platforms will blur further.

- Closer MLOps Integration: The Gateway will become an even more integral part of MLOps pipelines, seamlessly feeding real-time inference data back into model retraining loops, facilitating continuous improvement and automatic model deployment.

- AIOps for Gateway Management: AIOps principles will be applied to the gateway itself, using AI to monitor, predict, and proactively resolve issues within the AI Gateway infrastructure, ensuring its own optimal performance and reliability.

- Automated Model Discovery and Cataloging: Future gateways might autonomously discover new AI models deployed within an organization's infrastructure and automatically add them to a centralized catalog, complete with metadata and version information.

8.4 Standardization Efforts and Interoperability

As AI adoption grows, the demand for greater standardization and interoperability across different AI models and platforms will increase.

- Open Standards Adoption: AI Gateways will play a key role in implementing and promoting open standards for AI model invocation, metadata, and lifecycle management, reducing fragmentation and fostering a more unified AI ecosystem.

- Portable Prompts and Configurations: The ability to easily port prompts and model configurations between different AI Gateways and providers will become increasingly important.
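Portability is easier when a prompt and its model parameters live in a plain, serializable definition rather than inside gateway-specific code. The `PromptSpec` format below is a hypothetical example of such a definition, not an existing standard; it uses Python's `string.Template` placeholder syntax.

```python
import json
from dataclasses import asdict, dataclass, field
from string import Template


@dataclass
class PromptSpec:
    """Hypothetical vendor-neutral prompt definition that round-trips through JSON."""
    name: str
    version: str
    template: str                                      # $placeholders, string.Template syntax
    model_params: dict = field(default_factory=dict)   # e.g. temperature, max_tokens

    def render(self, **values: str) -> str:
        return Template(self.template).substitute(values)

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "PromptSpec":
        return cls(**json.loads(raw))
```

Exporting to JSON lets the same versioned prompt be loaded by a different gateway or provider adapter, which only needs to understand the shared fields.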

8.5 Edge AI Gateway Considerations

With the rise of edge computing, where AI inference happens closer to the data source (e.g., on IoT devices, smart cameras), a new class of "Edge AI Gateways" will emerge.

- Resource-Constrained Optimization: These gateways will be highly optimized for low-power, low-latency environments, performing lightweight inference management, local caching, and secure communication with centralized cloud AI services.

- Offline Capabilities: Providing partial or full AI inference capabilities even without a constant connection to the cloud.

- Distributed AI Orchestration: Managing a mesh of AI models deployed across various edge devices and central cloud resources.
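The offline capability described above can be sketched as a fallback chain on the edge device: try the cloud model, reuse a cached answer if the network is down, and otherwise run a local model. Both model callables are assumptions supplied by the caller, and the unbounded dictionary cache is a simplification; a real edge gateway would bound it by memory.

```python
from typing import Callable


class EdgeGateway:
    """Prefer the cloud model; fall back to cache, then a local model, when offline."""
    def __init__(self, cloud: Callable[[str], str], local: Callable[[str], str]) -> None:
        self.cloud = cloud
        self.local = local
        self.cache: dict[str, str] = {}  # unbounded for simplicity

    def infer(self, prompt: str) -> tuple[str, str]:
        """Return (result, source) where source is 'cloud', 'cache' or 'local'."""
        try:
            result = self.cloud(prompt)
            self.cache[prompt] = result
            return result, "cloud"
        except (ConnectionError, TimeoutError):
            if prompt in self.cache:
                return self.cache[prompt], "cache"
            return self.local(prompt), "local"
```

Returning the source alongside the result lets downstream code (or the user) know when an answer came from the smaller on-device model rather than the cloud.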