What is an API Waterfall? A Simple Explanation

In the intricate world of modern software development, where applications are no longer monolithic giants but finely woven tapestries of interconnected services, the concept of an API is paramount. An API, or Application Programming Interface, serves as a digital contract, enabling different software components to communicate and exchange data seamlessly. From the simplest mobile app fetching weather data to the most complex enterprise system orchestrating microservices across continents, APIs are the invisible threads that hold our digital infrastructure together. However, as systems grow in complexity and functionality, these threads can sometimes form intricate chains, leading to a phenomenon we might metaphorically describe as an API Waterfall.

This extensive exploration delves into the essence of what an API waterfall truly means, examining its underlying mechanics, the challenges it presents, and the sophisticated solutions available to manage its complexities, with a particular focus on the indispensable role of an API gateway. We will traverse the landscape of distributed systems, dissecting how sequential API calls can impact performance, reliability, and security, and ultimately, how strategic design and robust tooling can transform potential pitfalls into powerful, scalable architectures.

The Foundation: Understanding APIs in the Modern Landscape

Before we plunge into the intricacies of an API waterfall, it's crucial to lay a solid foundation by understanding what an API is and why it has become the bedrock of contemporary software development. At its core, an API is a set of defined rules that dictate how two distinct software components can interact. It abstracts away the complexity of the underlying system, exposing only the necessary functionalities and data in a standardized, accessible manner. Think of it as a restaurant menu: you don't need to know how the chef prepares the meal (the internal logic); you just need to know what to order (the API endpoint) and what ingredients it contains (the expected data).

The rise of the internet, cloud computing, and microservices architectures has greatly amplified the significance of APIs. They facilitate integration between disparate systems, foster innovation by allowing developers to build upon existing services, and enable the creation of highly modular, scalable applications. Whether it's a mobile banking app querying your account balance, a smart home device controlling your lights, or a business intelligence tool aggregating data from various sources, an API is the silent, tireless worker behind the scenes, ensuring that information flows freely and functions execute correctly.

There are various types of APIs, each with its own conventions and use cases:

- REST (Representational State Transfer) APIs: The most prevalent type, REST APIs leverage standard HTTP methods (GET, POST, PUT, DELETE) to interact with resources. They are stateless, making them highly scalable and easy to consume.

- SOAP (Simple Object Access Protocol) APIs: Older and more rigid, SOAP APIs use XML for message formatting and rely on standard protocols like HTTP, SMTP, or TCP. They offer robust security and transaction management but can be more complex to implement.

- GraphQL APIs: A newer query language for APIs, GraphQL allows clients to request exactly the data they need, no more, no less, reducing over-fetching and under-fetching issues common with REST.

- gRPC APIs: Developed by Google, gRPC is a high-performance, open-source universal RPC framework that uses Protocol Buffers for data serialization. It's often favored for microservices communication due to its efficiency.

Regardless of their specific protocol or structure, all APIs share the common goal of facilitating communication and interaction between software components. This fundamental principle sets the stage for understanding how multiple APIs can intertwine, forming what we describe as an API waterfall.

Unpacking the "API Waterfall" Concept: Sequential Dependencies in Action

The term "API Waterfall" is not a formally recognized architectural pattern with a rigid definition in the same vein as "microservices" or "event-driven architecture." Instead, it serves as a powerful metaphor to describe a common, yet often challenging, phenomenon in distributed systems: a sequence of dependent API calls where the output or successful completion of one API invocation directly gates or feeds into the input or execution of subsequent API invocations. Imagine a cascade of water, where each drop flows into the next, creating a continuous, sequential movement. In the digital realm, this cascade represents data and control flow across multiple services.

An API waterfall emerges when a single user request or system process necessitates interactions with several different APIs, often belonging to different services or even external providers, in a specific order. The chain of dependencies means that if an upstream API fails or is delayed, it can directly impact all subsequent downstream APIs, potentially bringing an entire user journey or business process to a halt.

Let's illustrate with concrete scenarios:

- E-commerce Checkout Process:

- User clicks "Checkout."

- API Call 1 (Cart Service API): Validate items in the shopping cart and calculate total cost.

- API Call 2 (User Service API): Authenticate the user and retrieve their shipping address and payment methods. (Dependent on Call 1's success and validation).

- API Call 3 (Payment Gateway API): Process the payment using the chosen method. (Dependent on Call 2's retrieved payment method).

- API Call 4 (Inventory Service API): Deduct purchased items from stock. (Dependent on Call 3's successful payment).

- API Call 5 (Order Service API): Create a new order record in the database. (Dependent on Call 4's inventory update).

- API Call 6 (Notification Service API): Send a confirmation email or SMS to the user. (Dependent on Call 5's order creation).

In this example, a single "checkout" action triggers a waterfall of six distinct API calls, each relying on the successful completion and often the output of the preceding one. Any delay or failure at any point in this chain can prevent the user from completing their purchase, leading to frustration and lost revenue.
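The checkout chain above can be sketched as a list of steps run strictly in order, each receiving what the previous steps produced. This is only an illustration under stated assumptions: `run_waterfall` and the three step functions are hypothetical stand-ins for real service calls, and only the first three steps are modeled.

```python
from typing import Any, Callable

Step = tuple[str, Callable[[dict[str, Any]], dict[str, Any]]]


class StepFailed(Exception):
    """Raised when one step in the chain fails; later steps never run."""


def run_waterfall(steps: list[Step], context: dict[str, Any]) -> dict[str, Any]:
    """Run each step in order, feeding it the context built so far."""
    for name, step in steps:
        try:
            context = step(context)
        except Exception as exc:
            raise StepFailed(f"{name} failed; remaining steps skipped") from exc
    return context


# Hypothetical stand-ins for the Cart, User, and Payment calls.
def validate_cart(ctx):
    return {**ctx, "total": sum(ctx["prices"])}


def load_user(ctx):
    return {**ctx, "payment_method": "card-on-file"}


def charge(ctx):
    if ctx["total"] <= 0:
        raise ValueError("nothing to charge")
    return {**ctx, "paid": True}


CHECKOUT = [("cart", validate_cart), ("user", load_user), ("payment", charge)]

result = run_waterfall(CHECKOUT, {"prices": [20, 5]})
```

Because every step waits for the one before it, a single exception stops the whole chain, which is exactly the fragility discussed in the sections that follow.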

- Complex Data Aggregation: Imagine a dashboard that displays a customer's holistic view, pulling data from various internal and external sources:

- API Call 1 (CRM API): Fetch basic customer profile (name, ID).

- API Call 2 (Support Ticket API): Retrieve recent support interactions for the customer ID. (Dependent on Call 1).

- API Call 3 (Billing API): Get subscription details and payment history for the customer ID. (Dependent on Call 1).

- API Call 4 (Usage Data API): Obtain product usage statistics. (Dependent on Call 1).

- API Call 5 (External Partner API): Fetch related third-party data (e.g., social media mentions). (Dependent on Call 1).

- Aggregation Service: Combine data from Calls 2-5 and format it for display.

Here, the "waterfall" is less strictly linear but still deeply dependent on the initial customer ID. If the CRM API is slow, the entire dashboard rendering will suffer. If the external partner API fails, that specific piece of information might be missing, but the rest of the dashboard could still load, assuming proper error handling.

- AI-Powered Workflows: Consider an application that processes user-uploaded images through multiple AI models:

- API Call 1 (Image Preprocessing API): Resize and normalize the image.

- API Call 2 (Object Detection AI API): Identify objects in the preprocessed image. (Dependent on Call 1).

- API Call 3 (Sentiment Analysis AI API): Analyze text associated with identified objects (if any). (Dependent on Call 2's output).

- API Call 4 (Recommendation AI API): Generate product recommendations based on detected objects and sentiment. (Dependent on Call 2 & 3).

In such scenarios, the sequential nature is even more pronounced, as the output of one AI model directly informs the input of the next, creating a sophisticated computational cascade. Managing these cascades efficiently, especially when dealing with diverse AI models, is where advanced gateway solutions can shine. For instance, platforms like ApiPark, an open-source AI gateway and API management platform, offer robust solutions to unify API formats for AI invocation and encapsulate prompts into REST APIs, thereby simplifying the management of these complex, multi-stage AI workflows. This significantly streamlines the development and deployment of applications leveraging chained AI capabilities.

The visualization of an API waterfall can be quite literal in performance monitoring tools, often resembling network waterfall charts where each bar represents an API call, stacked chronologically, illustrating the cumulative time taken. Understanding this sequential dependency is the first step towards recognizing the challenges it poses and formulating effective strategies to mitigate them.

Why API Waterfalls Are Important (and Challenging)

While indispensable for building complex functionalities, API waterfalls introduce a host of challenges that developers, operations teams, and business stakeholders must meticulously address. Their importance stems from the critical business processes they often underpin, but their inherent complexities demand careful consideration.

1. Performance Implications: The Accumulation of Latency

Perhaps the most immediate and impactful challenge of an API waterfall is its effect on performance. Each API call in the sequence introduces its own latency, which is the sum of:

- Network Latency: The time taken for the request to travel from the client to the server and the response to return. This can be significant, especially across geographical distances or over unstable networks.

- Server Processing Time: The time the backend service spends executing its logic, querying databases, performing computations, or interacting with other internal components.

- Serialization/Deserialization Overhead: The time taken to convert data between its in-memory representation and a transferable format (like JSON or XML) for network transmission.

- Queueing Delays: If services are under heavy load, requests might wait in a queue before being processed.

In an API waterfall, these individual latencies accumulate. If Service A takes 100ms, and Service B (dependent on A) takes another 150ms, and Service C (dependent on B) takes 200ms, the total minimum time for the entire operation will be at least 450ms, excluding any network hops between services and the client. This linear accumulation can quickly lead to unacceptably slow response times for end-users, resulting in poor user experience, increased bounce rates, and potentially lost conversions for business-critical applications.

Furthermore, a slow API in the middle of a waterfall acts as a bottleneck, delaying all subsequent operations. Optimizing individual API calls becomes crucial, but even highly optimized individual calls can still result in a slow overall process if there are too many steps in the waterfall.
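The arithmetic behind the 100ms / 150ms / 200ms example can be written out directly. The sketch below is a simplified model that ignores network hops; the function names are made up for illustration, and the parallel figure only applies if the calls did not depend on each other.

```python
def sequential_latency_ms(latencies_ms):
    """Dependent calls run one after another, so their latencies add up."""
    return sum(latencies_ms)


def parallel_latency_ms(latencies_ms):
    """Independent calls can overlap, so the slowest one sets the total."""
    return max(latencies_ms, default=0)


chain = [100, 150, 200]  # Service A, B, C from the example above

waterfall_total = sequential_latency_ms(chain)   # 450
if_independent = parallel_latency_ms(chain)      # 200
```

The gap between the two numbers is the budget that patterns such as aggregation and parallel fan-out try to recover wherever the dependencies allow it.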

2. Reliability & Resilience: The Fragility of the Chain

An API waterfall is inherently as strong as its weakest link. A failure at any point in the sequence can have catastrophic cascading effects:

- Cascading Failures: If an upstream API fails (e.g., due to an error, timeout, or service unavailability), all dependent downstream APIs will also fail or receive invalid input, leading to a complete breakdown of the entire process. This is particularly dangerous as a localized issue can quickly propagate across multiple services.

- Single Points of Failure: Each API in the chain, especially those early in the sequence, can become a single point of failure. If such an API goes down, the entire waterfall halts.

- Retries and Idempotency: When an API in the waterfall fails, the system might attempt to retry the call. However, not all API calls are idempotent (meaning they produce the same result whether called once or multiple times). For example, a payment API call that fails midway and is retried without proper handling could lead to double charges for a customer. Careful design is required to ensure atomicity and idempotency across the entire waterfall.

Ensuring the reliability and resilience of an API waterfall demands robust error handling, retry mechanisms, and fault tolerance strategies at every level of the architecture. Without these, the entire system remains vulnerable to even minor disruptions.
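One common way to make a retried payment call safe is an idempotency key: the caller sends the same key on every retry, and the service returns the stored result instead of charging again. The sketch below is a hypothetical in-memory service, not any real payment provider's API; `PaymentService`, `charge`, and the key format are assumptions for illustration.

```python
class PaymentService:
    """Hypothetical payment service that deduplicates by idempotency key."""

    def __init__(self):
        self._results = {}      # idempotency key -> stored result
        self.charges_made = 0   # how many real charges were performed

    def charge(self, idempotency_key: str, amount_cents: int) -> dict:
        # A repeated key means this is a retry: return the earlier result.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.charges_made += 1
        result = {"charge_id": self.charges_made, "amount_cents": amount_cents}
        self._results[idempotency_key] = result
        return result


service = PaymentService()
first = service.charge("order-42", 1999)
retry = service.charge("order-42", 1999)  # e.g. the client timed out and retried
```

In this sketch the retry returns the original charge and the customer is billed once. A production version would need durable storage for the keys and a policy for expiring them.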

3. Complexity & Maintainability: The Web of Interdependencies

As the number of APIs in a waterfall increases, so does the overall system complexity. This complexity manifests in several ways:

- Debugging Challenges: Pinpointing the exact source of an error in a long chain of API calls can be incredibly difficult. Logs need to be correlated across multiple services, and tracing tools are essential to follow a request's journey through the waterfall.

- Version Management: When an upstream API changes its contract (e.g., modifying its input parameters or output structure), it can break all dependent downstream APIs. Managing these interdependencies and ensuring backward compatibility or coordinating simultaneous updates becomes a significant operational burden.

- Testing Complexity: Thoroughly testing an API waterfall requires simulating various failure conditions and data permutations across all interconnected services. Unit tests and integration tests for individual services are insufficient; end-to-end testing becomes paramount.

- Scalability Challenges: Scaling individual services within a waterfall needs careful consideration. If one service is a bottleneck, scaling others won't necessarily improve overall performance. Resource allocation and load balancing must account for the dependencies.

The sheer volume of potential interactions and the intricate web of data flows make API waterfalls a significant architectural challenge, requiring meticulous documentation, disciplined development practices, and sophisticated tooling for effective management.

4. Security Considerations: Expanded Attack Surface

Each additional API call in a waterfall represents another potential entry point for security vulnerabilities or data exposure:

- Authorization Flow: Ensuring proper authorization at each step of the waterfall is critical. A user might be authorized to call the initial API but not subsequent ones. Token propagation, granular access controls, and secure session management across services become complex.

- Data Exposure: Sensitive data might be passed through multiple services in the waterfall. Each service must handle this data securely, encrypting it in transit and at rest, and only exposing what's strictly necessary to the next service. The risk of data breaches increases with the number of touchpoints.

- Injection Attacks: If any API in the chain is vulnerable to injection attacks (e.g., SQL injection, XSS), an attacker could compromise the entire waterfall or even gain unauthorized access to underlying systems.

- Denial-of-Service (DoS) Attacks: A DoS attack on an upstream API can effectively halt the entire dependent chain, leading to a service outage for end-users.

Securing an API waterfall requires a layered approach, with robust authentication, authorization, input validation, encryption, and continuous monitoring implemented across all services and their interconnections. This necessitates a holistic security strategy that considers the entire flow, not just individual endpoints.

The cumulative effect of these challenges makes managing API waterfalls a critical aspect of building reliable, performant, and secure distributed systems. While they are an unavoidable consequence of modular architectures and rich functionalities, proactive design, strategic tooling, and vigilant operational practices are essential to harness their power without succumbing to their inherent fragility.

Mitigating "API Waterfall" Challenges with Strategic Design Patterns

Successfully navigating the complexities of an API waterfall requires more than just identifying the problems; it demands the application of proven architectural patterns and best practices. These strategies aim to reduce latency, enhance resilience, improve maintainability, and bolster security across the entire chain of API calls.

1. Asynchronous Processing: Decoupling the Flow

One of the most powerful techniques to break the synchronous, blocking nature of an API waterfall is to introduce asynchronous processing. Instead of waiting for each API call to complete before proceeding to the next, services can publish events or messages to a queue, and other services can consume these messages independently.

- Message Queues (e.g., Kafka, RabbitMQ, SQS): When an upstream API completes its task, it doesn't directly call the next API. Instead, it places a message on a queue. Downstream services monitor this queue and pick up messages when they are ready. This decouples the services, allowing them to operate at their own pace and preventing a slow service from holding up the entire chain.

- Benefit: Improves responsiveness for the initial API call (the client gets a faster response indicating the request has been accepted), enhances fault tolerance (messages persist in the queue even if a consumer is down), and facilitates scalability (multiple consumers can process messages in parallel).

- Example: In our e-commerce checkout, after the payment is successfully processed (API Call 3), the payment service could publish a "PaymentSuccessful" event to a message queue. The Inventory Service and Order Service would then asynchronously consume this event and proceed with their respective tasks, rather than being called directly by the payment service. The user receives an immediate "Order Received" confirmation, even if the inventory update or notification sending takes a few more moments.

- Event Streams: Similar to message queues but often providing persistent, ordered logs of events, event streams allow services to react to changes in the system in real-time. This forms the backbone of event-driven architectures.

- Benefit: Enables loose coupling, supports real-time data processing, and provides a durable record of all system events for auditing and replay.
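The "PaymentSuccessful" example can be sketched with an in-process queue. This is a toy model under stated assumptions: `asyncio.Queue` stands in for a real broker such as Kafka or RabbitMQ (it is neither durable nor distributed), and the function names and event shape are invented for illustration.

```python
import asyncio


async def payment_service(events: asyncio.Queue, order_id: str) -> str:
    # Publish the event and reply at once, without calling downstream services.
    await events.put({"type": "PaymentSuccessful", "order_id": order_id})
    return "Order Received"


async def inventory_consumer(events: asyncio.Queue, processed: list) -> None:
    # Consume events at the consumer's own pace.
    while True:
        event = await events.get()
        if event["type"] == "PaymentSuccessful":
            processed.append(event["order_id"])
        events.task_done()


async def main():
    events: asyncio.Queue = asyncio.Queue()
    processed: list = []
    consumer = asyncio.create_task(inventory_consumer(events, processed))

    reply = await payment_service(events, "order-42")

    await events.join()   # wait until the consumer has handled the event
    consumer.cancel()
    await asyncio.gather(consumer, return_exceptions=True)
    return reply, processed


reply, processed = asyncio.run(main())
```

The producer's reply does not depend on how long the consumer takes, which is the decoupling the pattern is meant to provide.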

2. Batching & Aggregation: Reducing Round Trips

The cumulative network latency is a major contributor to API waterfall delays. Batching and aggregation patterns aim to minimize the number of network round trips by consolidating multiple individual requests into a single, larger request.

- Batching: If a client needs to perform several similar operations (e.g., update 10 different user profiles), instead of making 10 separate API calls, it can make a single batch API call that includes all 10 updates. The backend service then processes these operations in bulk.

- Benefit: Reduces network overhead and client-side processing, improving overall efficiency.

- Aggregation: Often implemented at an API gateway or a dedicated aggregation service, this pattern involves taking a single client request and fanning it out to multiple backend APIs, collecting their responses, and then combining or transforming them into a single, cohesive response for the client.

- Benefit: Shields the client from the complexity of interacting with multiple backend services, reduces client-side waterfall dependencies, and improves perceived performance by presenting a unified interface.

- Example: For our customer dashboard, instead of the client making five separate API calls, an aggregation service (or the API gateway) would receive one request, internally call the CRM, Support, Billing, Usage, and Partner APIs in parallel, and then combine all the data into a single JSON response before sending it back to the client. This transforms a client-side waterfall into a server-side, potentially parallelized, operation.
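A server-side aggregator for the dashboard might look roughly like the following. It is a sketch with simulated backends: `fetch` is a placeholder for a real HTTP call, the source names are illustrative, and the CRM lookup runs first here because the other calls were described earlier as depending on the customer ID it returns.

```python
import asyncio


async def fetch(source: str, customer_id: str, delay_s: float) -> dict:
    """Placeholder for a backend call; the sleep simulates latency."""
    await asyncio.sleep(delay_s)
    return {"source": source, "customer_id": customer_id}


async def dashboard(customer_id: str) -> dict:
    # Step 1: the profile lookup that the other calls depend on.
    profile = await fetch("crm", customer_id, 0.01)

    # Step 2: fan out to the independent backends concurrently.
    sources = ["support", "billing", "usage", "partner"]
    results = await asyncio.gather(
        *(fetch(s, profile["customer_id"], 0.01) for s in sources),
        return_exceptions=True,  # one failing backend should not sink the rest
    )

    # Step 3: merge into one response, leaving failed sections empty.
    merged = {"profile": profile}
    for source, result in zip(sources, results):
        merged[source] = None if isinstance(result, Exception) else result
    return merged


view = asyncio.run(dashboard("c-1"))
```

With `return_exceptions=True`, a failed partner call shows up as a missing section rather than an error for the whole dashboard, matching the partial-failure behavior described for this scenario.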

3. Caching Strategies: Storing and Reusing Data

Caching is a fundamental optimization technique for reducing repeated calls to APIs for data that doesn't change frequently or has a predictable expiry.

- Client-Side Caching: The client application stores data received from an API call and reuses it for subsequent requests within a certain timeframe, avoiding repeated API calls.

- Server-Side Caching (e.g., Redis, Memcached): APIs can cache frequently requested data in an in-memory store before hitting the actual backend database or another service. This drastically reduces the response time for popular data.

- Gateway-Level Caching: An API gateway can cache responses from backend services, serving them directly to clients for subsequent identical requests, further offloading backend services and reducing latency for the entire waterfall.

- Benefit: Significantly reduces load on backend services, improves response times, and enhances scalability. Careful consideration must be given to cache invalidation strategies to ensure data freshness.
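A time-to-live (TTL) cache is one simple way to apply the idea at any of these layers. The class below is a minimal in-memory sketch, not a substitute for Redis or Memcached; `TTLCache`, `get_or_fetch`, and the injectable `clock` parameter are names chosen for this example.

```python
import time


class TTLCache:
    """Cache values for a fixed number of seconds, then fetch them again."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_fetch(self, key, fetch):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]              # still fresh: skip the backend call
        value = fetch(key)               # missing or expired: call the backend
        self._entries[key] = (now + self._ttl, value)
        return value


# Usage with a fake clock so expiry can be shown without sleeping.
backend_calls = []


def fetch_profile(customer_id):
    backend_calls.append(customer_id)
    return {"id": customer_id}


now = [0.0]
cache = TTLCache(ttl_seconds=30, clock=lambda: now[0])
cache.get_or_fetch("c-1", fetch_profile)   # backend call
cache.get_or_fetch("c-1", fetch_profile)   # served from cache
now[0] = 31.0
cache.get_or_fetch("c-1", fetch_profile)   # expired, backend call again
```

Expiry by TTL is the simplest invalidation strategy; data that must never be stale needs explicit invalidation when the source changes.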

4. Circuit Breakers & Timeouts: Preventing Cascading Failures

These patterns are crucial for improving the resilience of API waterfalls, preventing a failing service from bringing down the entire system.

- Timeouts: Every API call in a waterfall should have a defined timeout. If a service doesn't respond within this period, the calling service should stop waiting and handle the timeout as an error. This prevents threads or resources from being perpetually blocked, waiting for an unresponsive service.

- Circuit Breakers: Inspired by electrical circuits, a circuit breaker wraps calls to external services. If the calls consistently fail (e.g., exceed a failure threshold), the circuit "trips" open, preventing further calls to the failing service. Instead, it immediately returns an error or a fallback response without even attempting the call. After a configurable period, the circuit moves to a "half-open" state, allowing a few test requests to pass through. If these succeed, the circuit closes; otherwise, it opens again.

- Benefit: Prevents cascading failures by isolating failing services, allows services to recover without being overwhelmed by a flood of retries, and improves the overall stability of the system.

- Example: In our e-commerce checkout, if the Payment Gateway API starts consistently timing out, a circuit breaker would trip, and the calling service would immediately return an error to the user ("Payment service unavailable, please try again later") rather than hanging indefinitely or making repeated, futile attempts.
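The closed, open, and half-open states described above can be captured in a small class. This is a minimal single-threaded sketch rather than a production library: `CircuitBreaker`, `CircuitOpenError`, and the default thresholds are assumptions for illustration, and a timeout is treated as just another exception raised by the wrapped call.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    @property
    def state(self) -> str:
        if self._opened_at is None:
            return "closed"
        if self._clock() - self._opened_at >= self.reset_after_s:
            return "half-open"
        return "open"

    def call(self, func, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("call skipped: circuit is open")
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            # A failed trial call, or too many failures, (re)opens the circuit.
            if self.state == "half-open" or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        # Any success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would wrap the payment request as `breaker.call(charge_card, order)` (with `charge_card` being whatever function performs the request) and translate `CircuitOpenError` into the "please try again later" message.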

5. Rate Limiting: Protecting Downstream Services

Rate limiting controls the number of requests a client or service can make to another service within a given timeframe.

- Benefit: Prevents abuse, protects downstream services from being overwhelmed by traffic spikes (which could lead to failures and trigger circuit breakers), and ensures fair usage among different consumers.

- Implementation: Often implemented at the API gateway level, it can enforce quotas per client, per API, or globally.
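Several algorithms can enforce such limits; the token bucket is one widely used choice and is shown here purely as an illustration, since the text does not prescribe a specific one. The class name, parameters, and injectable clock are assumptions of this sketch.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Add the tokens earned since the last check, capped at capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False  # over the limit: the caller should reject the request
```

A gateway would typically keep one bucket per client or API key and answer with HTTP 429 (Too Many Requests) when `allow()` returns `False`.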

6. Service Mesh: Observability and Traffic Control

For highly complex microservices architectures with numerous interdependent APIs, a service mesh (e.g., Istio, Linkerd) provides an infrastructure layer for managing service-to-service communication.

- Benefit: Offers out-of-the-box capabilities for traffic management (routing, load balancing), observability (metrics, logs, traces), security (mTLS), and reliability (circuit breaking, retries, timeouts) without requiring changes to application code. This provides a holistic view and control over API waterfalls.

By strategically combining these design patterns, developers can build more resilient, performant, and maintainable systems that effectively manage the inherent challenges of API waterfalls, transforming them into reliable operational flows.


The Crucial Role of an API Gateway

In the context of managing API waterfalls, the API gateway emerges not just as a useful tool but as a virtually indispensable component. It acts as the single entry point for all client requests, effectively centralizing control and providing a powerful choke point for implementing many of the mitigation strategies discussed earlier. Without an API gateway, clients would have to interact directly with numerous backend services, exacerbating the complexities of API waterfalls and making consistent management nearly impossible.

What is an API Gateway?

An API gateway is a server that sits at the edge of your microservices architecture (or any distributed system) and acts as an entry point for all client applications. It receives API calls, routes them to the appropriate backend services, aggregates responses, and applies various policies and transformations before sending the response back to the client. It essentially abstracts the complexity of the backend services from the client.

How an API Gateway Centralizes and Simplifies API Management

The true power of an API gateway lies in its ability to centralize common functionalities that would otherwise have to be implemented redundantly in each backend service or managed by individual clients. This centralization is particularly beneficial for complex API waterfalls.

Here's how an API gateway addresses specific challenges related to API waterfalls:

1. Request Routing and Composition (Aggregation)

- Smart Routing: The gateway can intelligently route incoming requests to the correct backend service based on the URL, headers, or other request parameters. This allows for clear separation of concerns in the backend while presenting a unified API to clients.

- API Composition/Aggregation: As mentioned earlier, the API gateway can take a single client request and internally fan it out to multiple backend services in parallel or sequence, aggregate their responses, and then return a single, coherent response to the client. This hides the internal API waterfall from the client, making the client's interaction simpler and more performant by reducing client-side round trips.

- Example: Instead of a mobile app making five different calls to fetch customer details, support tickets, billing info, usage data, and partner data, the app makes one call to the API gateway, which then orchestrates the internal calls, aggregates the data, and sends back a single JSON object.

2. Authentication and Authorization

- Centralized Security: Instead of each backend service independently handling authentication and authorization, the API gateway can manage these critical security aspects at the perimeter. It can validate API keys, OAuth tokens, or JWTs once, reducing redundant security logic in backend services.

- Policy Enforcement: The gateway can enforce granular access control policies, ensuring that only authorized clients and users can access specific APIs or functionalities. This is crucial for protecting sensitive data flowing through API waterfalls.

3. Traffic Management

- Rate Limiting: The gateway is the ideal place to implement rate limiting. It can protect backend services from being overwhelmed by enforcing limits on the number of requests per client, IP address, or API within a specified time frame. This prevents a single client from monopolizing resources or causing a DoS attack on upstream services in a waterfall.

- Throttling: Similar to rate limiting, throttling allows for more dynamic control over traffic, potentially adjusting limits based on backend service health or current load.

- Load Balancing: The gateway can distribute incoming traffic across multiple instances of backend services, ensuring even load distribution and high availability, which is vital when a service is part of a critical API waterfall.

- Circuit Breaker Integration: While circuit breakers can be implemented at the service level, a gateway can also act as a central point to monitor the health of downstream services and implement circuit-breaking logic, preventing calls to failing services even before they hit the specific service's circuit breaker.

4. Monitoring and Logging

- Unified Observability: The API gateway serves as a central point for collecting metrics, logs, and trace data for all API requests. This provides a holistic view of API traffic, performance, and errors, which is invaluable for debugging and understanding the health of complex API waterfalls.

- Request Tracing: By adding unique correlation IDs to requests as they enter the gateway and propagating them through the entire waterfall, distributed tracing tools can reconstruct the full journey of a request across multiple services, making it easier to pinpoint performance bottlenecks or error sources.
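Correlation ID propagation can be sketched in a few lines. The header name `X-Correlation-ID` is a common convention rather than a standard, and both helper functions are hypothetical; real tracing systems carry more context than a single ID.

```python
import uuid

CORRELATION_HEADER = "X-Correlation-ID"  # conventional name, assumed here


def ensure_correlation_id(headers: dict) -> dict:
    """Return a copy of the headers that carries a correlation ID.

    An existing ID is reused so every hop logs the same value. For brevity
    this treats header names as case-sensitive, unlike real HTTP.
    """
    outgoing = dict(headers)
    outgoing.setdefault(CORRELATION_HEADER, str(uuid.uuid4()))
    return outgoing


def log_line(service: str, headers: dict, message: str) -> str:
    """Prefix a log message with the request's correlation ID."""
    return f"[{headers[CORRELATION_HEADER]}] {service}: {message}"


# The gateway assigns the ID once; downstream services pass it along.
at_gateway = ensure_correlation_id({"Accept": "application/json"})
at_cart_service = ensure_correlation_id(at_gateway)
at_payment_service = ensure_correlation_id(at_cart_service)
```

Searching the combined logs for one ID then yields every line produced by that request, in whichever service it was written.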

5. Transformation and Protocol Translation

- Data Transformation: The gateway can transform data formats between the client and backend services (e.g., converting XML to JSON, or restructuring JSON payloads). This allows clients to use a preferred data format while backend services can maintain their native formats.

- Protocol Translation: It can enable communication between clients and services that use different protocols (e.g., expose a REST API to clients while communicating with a gRPC backend). This is particularly useful for integrating legacy systems into modern architectures.

6. Versioning

- The gateway can manage multiple versions of an API, routing requests to the appropriate backend service version based on the client's request (e.g., /v1/users vs. /v2/users). This simplifies API evolution and allows for graceful deprecation of older versions without breaking existing clients.
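Path-based version routing reduces to a lookup on the first path segment. In the sketch below the backend URLs and the `resolve_backend` function are hypothetical; real gateways usually express this as declarative route configuration instead of code.

```python
# Hypothetical mapping from version prefix to backend base URL.
ROUTES = {
    "v1": "http://users-v1.internal",
    "v2": "http://users-v2.internal",
}


def resolve_backend(path: str) -> str:
    """Map a versioned public path to the matching backend URL."""
    version, _, rest = path.lstrip("/").partition("/")
    try:
        base = ROUTES[version]
    except KeyError:
        raise LookupError(f"unsupported API version: {version!r}") from None
    return f"{base}/{rest}"


v1_target = resolve_backend("/v1/users")
v2_target = resolve_backend("/v2/users/7")
```

Retiring a version then means removing its entry from the mapping, after which requests for it fail at the gateway instead of reaching a backend.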

APIPark: An Advanced API Gateway for AI and Beyond

When discussing the sophisticated management of complex API ecosystems, especially those involving sequential dependencies and AI models, it's pertinent to consider platforms specifically designed for these challenges. ApiPark stands out as an open-source AI gateway and API management platform that embodies many of the principles and functionalities required to efficiently manage API waterfalls.

APIPark is particularly adept at handling the unique demands of AI-driven workflows, which often involve chained invocations of different AI models (a common form of API waterfall). Its features directly address common API waterfall issues:

- Quick Integration of 100+ AI Models & Unified API Format: APIPark allows for the integration of numerous AI models under a unified management system. Critically, it standardizes the request data format across all AI models. This means that an application calling a sequence of AI models doesn't need to adapt its invocation logic if an underlying AI model is swapped or updated. This dramatically simplifies the maintenance and robustness of AI API waterfalls, preventing cascading failures due to interface changes.

- Prompt Encapsulation into REST API: Users can combine AI models with custom prompts to create new, specialized APIs (e.g., a sentiment analysis API). This facilitates the creation of modular AI services that can be chained together in an API waterfall, each performing a specific task, while being easily callable via a standard REST interface.

- End-to-End API Lifecycle Management: Beyond just routing, APIPark assists with the entire API lifecycle, from design and publication to invocation and decommission. It helps regulate API management processes, traffic forwarding, load balancing, and versioning – all crucial aspects for stable API waterfalls.

- Performance Rivaling Nginx: With its high-performance capabilities (over 20,000 TPS on modest hardware), APIPark can handle large-scale traffic, ensuring that the gateway itself doesn't become a bottleneck in an API waterfall. Its cluster deployment support further enhances its resilience and scalability.

- Detailed API Call Logging & Powerful Data Analysis: APIPark provides comprehensive logging for every API call and analyzes historical data. This is invaluable for troubleshooting API waterfalls, quickly tracing issues across chained calls, understanding performance trends, and performing preventive maintenance. This centralized observability dramatically simplifies debugging compared to sifting through logs from disparate services.

By centralizing these critical functions, APIPark, like other robust API gateway solutions, significantly reduces the burden on individual backend services, enhances security, improves performance, and provides a unified point of control for managing the complexities of API waterfalls, particularly in the burgeoning field of AI.

Implementing API Gateways Effectively

While the benefits of an API gateway are clear, its effective implementation requires careful planning and adherence to best practices. A poorly configured gateway can itself become a bottleneck or a single point of failure, negating its intended advantages.

1. Choosing the Right Gateway

The market offers a variety of API gateway solutions, each with its strengths and weaknesses:

- Cloud Provider Gateways: Services like AWS API Gateway, Azure API Management, and Google Cloud Apigee are fully managed solutions, offering deep integration with their respective cloud ecosystems. They are excellent for organizations already heavily invested in a particular cloud provider.

- Open-Source Gateways: Solutions like Kong, Tyk, Envoy, and APIPark offer flexibility, customization, and often stronger community support. They are suitable for organizations that prefer more control, self-hosting, or have specific technical requirements that commercial solutions might not easily meet. APIPark, for instance, being open-source and specifically designed as an AI gateway, offers a powerful blend of flexibility and specialized features for AI-centric architectures.

- Self-built Gateways: For highly unique requirements, some organizations opt to build their own custom gateway. However, this approach comes with significant overhead in terms of development, maintenance, and security, and is generally not recommended unless there's a compelling reason.

When choosing, consider factors like:

- Feature Set: Does it support all necessary routing, security, traffic management, and transformation capabilities?

- Scalability & Performance: Can it handle your expected traffic volume without becoming a bottleneck?

- Ease of Use & Management: How easy is it to configure, deploy, and monitor?

- Integration: How well does it integrate with your existing infrastructure, CI/CD pipelines, and observability tools?

- Cost: Licensing, operational, and maintenance costs.

- Community/Vendor Support: The availability of documentation, community forums, or professional support.

2. Deployment Considerations

The deployment model of your API gateway is critical for its performance, availability, and scalability.

- High Availability: Deploy the gateway in a highly available configuration (e.g., across multiple availability zones) so that the gateway does not itself become a single point of failure that brings down your entire API landscape.

- Scalability: Implement auto-scaling mechanisms to ensure the gateway can dynamically adjust its capacity to handle fluctuating traffic loads.

- Network Proximity: Position the gateway as close as possible to both your clients (for minimal latency) and your backend services (for efficient routing). This might involve deploying it in multiple regions if you have a globally distributed user base.

- Security Zoning: Deploy the gateway in a carefully secured network zone (e.g., a DMZ) with robust firewall rules, isolating it from your internal backend services and external clients.

3. Best Practices for Gateway Configuration

Effective configuration is the linchpin of a successful API gateway implementation:

- Minimal Logic in Gateway: While the gateway is powerful, avoid putting excessive business logic within it. The primary role of the gateway is routing, security, and traffic management, not complex application-specific processing. Backend services should remain the source of truth for business logic. This keeps the gateway lightweight, performant, and easier to maintain.

- Comprehensive Logging and Monitoring: Configure detailed logging for all requests passing through the gateway. Integrate it with your centralized logging system and monitoring tools. This is crucial for debugging issues in API waterfalls and understanding system health.

- Consistent Security Policies: Apply consistent authentication, authorization, and rate-limiting policies across all APIs managed by the gateway. This reduces the attack surface and ensures uniform security posture.

- Sensible Timeouts: Configure appropriate timeouts for both inbound requests (from clients to the gateway) and outbound requests (from the gateway to backend services). This prevents long-running or unresponsive backend services from consuming gateway resources indefinitely.

- Versioning Strategy: Clearly define and implement an API versioning strategy at the gateway level to manage changes without breaking existing clients. This could involve URL-based versioning (e.g., `/v1/users`), header-based versioning (e.g., `Accept: application/vnd.myapi.v1+json`), or query parameter versioning.

- Error Handling and Fallbacks: Design the gateway to handle errors gracefully. Implement custom error messages that are informative for clients but don't expose sensitive internal details. Where possible, define fallback mechanisms (e.g., serving cached data, returning a default response) when backend services are unavailable.

- Automated Deployment and Configuration: Treat API gateway configurations as code. Use infrastructure-as-code (IaC) tools and integrate gateway deployment and configuration into your CI/CD pipelines. This ensures consistency, repeatability, and reduces manual errors.

- Performance Testing: Regularly stress test your API gateway to ensure it can handle peak loads and identify potential bottlenecks before they impact production.
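The timeout and fallback practices above can be sketched in a few lines of Python. This is only an illustration of the idea, not the configuration syntax of any particular gateway: `call_with_timeout`, `slow_recommendations`, `fast_recommendations`, and `DEFAULT_RESPONSE` are hypothetical names, and the backend is simulated with `asyncio.sleep`.

```python
import asyncio


async def call_with_timeout(backend_call, timeout_s, fallback):
    """Await backend_call(); return `fallback` if it fails or exceeds timeout_s."""
    try:
        return await asyncio.wait_for(backend_call(), timeout=timeout_s)
    except (asyncio.TimeoutError, ConnectionError):
        # Do not leak internal error details to the client; serve a safe default.
        return fallback


async def slow_recommendations():
    # Hypothetical backend that takes longer than the gateway allows.
    await asyncio.sleep(0.2)
    return {"items": ["personalized"], "source": "backend"}


async def fast_recommendations():
    # Hypothetical backend that answers immediately.
    return {"items": ["personalized"], "source": "backend"}


DEFAULT_RESPONSE = {"items": ["popular"], "source": "fallback"}

slow_result = asyncio.run(
    call_with_timeout(slow_recommendations, 0.05, DEFAULT_RESPONSE)
)
fast_result = asyncio.run(
    call_with_timeout(fast_recommendations, 0.05, DEFAULT_RESPONSE)
)
print(slow_result["source"], fast_result["source"])
```

The slow call is cut off at the timeout and answered with the default response, while the fast call passes through unchanged. In a real gateway the same behavior would normally be expressed as per-route configuration rather than application code.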

By diligently applying these practices, organizations can fully leverage the power of an API gateway to tame the complexities of API waterfalls, building robust, scalable, and secure distributed systems that deliver superior performance and user experience. The gateway becomes the orchestrator, guiding the flow of data through the waterfall with precision and resilience.

Advanced Concepts and Future Trends

The landscape of API management is constantly evolving, with new technologies and paradigms emerging to tackle ever-increasing complexity. Beyond the foundational patterns and the robust capabilities of an API gateway, several advanced concepts are shaping the future of managing API waterfalls.

1. Serverless Functions and API Waterfalls

Serverless computing, exemplified by AWS Lambda, Azure Functions, or Google Cloud Functions, has become a popular model for deploying fine-grained pieces of logic. When combined with an API gateway, serverless functions can radically simplify the implementation of API waterfalls, particularly the aggregation and orchestration logic.

- Function-as-a-Service (FaaS) for Orchestration: Instead of a traditional microservice, a serverless function can act as an orchestrator for an API waterfall. An incoming request to the API gateway triggers this function, which then makes calls to multiple backend APIs (which themselves might be other serverless functions or traditional services), aggregates their results, and returns a single response.

- Event-Driven Serverless: Serverless functions naturally lend themselves to event-driven architectures. A function completing its task can emit an event, which triggers another function, forming an asynchronous API waterfall.

- Benefit: Reduces operational overhead (no servers to manage), allows for highly granular scaling (functions scale independently), and can be very cost-effective for intermittent workloads. However, managing state across functions and debugging distributed traces in serverless environments can introduce new complexities.
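As a rough sketch of the orchestration idea, the function below fans out to two backends concurrently and returns one aggregated response. It assumes a generic "event in, dictionary out" handler shape; `handler`, `orchestrate`, `fetch_profile`, and `fetch_orders` are hypothetical names, and the backend calls are simulated rather than real HTTP requests.

```python
import asyncio


async def fetch_profile(user_id):
    # Hypothetical stand-in for a call to a profile backend.
    await asyncio.sleep(0.01)
    return {"user_id": user_id, "name": "Ada"}


async def fetch_orders(user_id):
    # Hypothetical stand-in for a call to an orders backend.
    await asyncio.sleep(0.01)
    return [{"order_id": 1, "total": 42.0}]


async def orchestrate(user_id):
    # The two calls do not depend on each other, so they run concurrently
    # instead of forming a sequential chain.
    profile, orders = await asyncio.gather(
        fetch_profile(user_id), fetch_orders(user_id)
    )
    return {"profile": profile, "orders": orders, "order_count": len(orders)}


def handler(event, context=None):
    """Entry point: receives the gateway event and returns a single response."""
    body = asyncio.run(orchestrate(event["user_id"]))
    return {"statusCode": 200, "body": body}


response = handler({"user_id": "u-1"})
print(response["body"]["order_count"])
```

The client makes one request and receives one response; the waterfall is handled entirely inside the function.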

2. Event-Driven Architectures (EDA)

While we touched upon asynchronous processing, full-fledged event-driven architectures take this concept further. In an EDA, services communicate primarily by emitting and reacting to events, rather than making direct synchronous API calls.

- Breaking the Synchronous Chain: EDAs fundamentally transform API waterfalls. Instead of `Service A calls Service B calls Service C`, the pattern becomes `Service A emits Event A -> Service B reacts to Event A and emits Event B -> Service C reacts to Event B`. This decouples services entirely, enhancing resilience and scalability.

- Stream Processing: Technologies like Apache Kafka enable real-time stream processing, allowing services to consume and transform events in a continuous flow.

- Benefit: Extreme loose coupling, improved responsiveness, high fault tolerance, and greater flexibility for evolving systems. It shifts the paradigm from sequential "waterfall" execution to a more parallel and reactive "stream" of activity. However, designing and debugging EDAs can be complex due to the distributed nature of events and the lack of a single, linear flow.
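The emit-and-react chain can be illustrated with a tiny in-memory event bus. This is a teaching sketch only: `EventBus` is a hypothetical stand-in for a real broker such as Kafka, and the event names and services are invented for the example.

```python
from collections import defaultdict, deque


class EventBus:
    """In-memory stand-in for a message broker (illustration only)."""

    def __init__(self):
        self._handlers = defaultdict(list)
        self._queue = deque()

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def emit(self, event_type, payload):
        # Emitting only enqueues; the emitter never calls a consumer directly.
        self._queue.append((event_type, payload))

    def run(self):
        # Deliver queued events until no handler emits anything new.
        while self._queue:
            event_type, payload = self._queue.popleft()
            for handler in self._handlers[event_type]:
                handler(payload)


bus = EventBus()
log = []


def service_b(order):
    log.append(f"B reserved stock for {order['id']}")
    bus.emit("stock_reserved", order)


def service_c(order):
    log.append(f"C scheduled shipping for {order['id']}")


bus.subscribe("order_placed", service_b)
bus.subscribe("stock_reserved", service_c)

# Service A only emits an event; it has no knowledge of B or C.
bus.emit("order_placed", {"id": "o-1"})
bus.run()
print(log)
```

Service A never references B or C, so either consumer can be replaced, scaled, or temporarily offline without changing the producer.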

3. AI/ML in API Management and Optimization

Artificial intelligence and machine learning are increasingly being applied to optimize and manage API ecosystems, including the detection and mitigation of API waterfall issues.

- Predictive Performance: AI models can analyze historical API call data (latency, error rates, traffic patterns) to predict potential bottlenecks in API waterfalls before they occur. This allows for proactive scaling or resource allocation.

- Automated Anomaly Detection: ML algorithms can identify unusual patterns in API traffic or performance that might indicate a failing service within a waterfall or an emerging DoS attack.

- Intelligent Routing: AI-driven API gateways could dynamically route requests based on real-time service health, latency, or even predicted load, optimizing the path through an API waterfall for the best performance.

- Security Threat Detection: ML can analyze API request patterns to detect malicious activities, such as injection attempts or sophisticated DoS attacks, providing enhanced security for all APIs in a waterfall.
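To make the anomaly detection idea concrete, here is a deliberately simple statistical stand-in rather than a trained ML model: it flags a latency sample when it lies far above the mean of the preceding window. The function name, window size, threshold, and sample data are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_latency_anomalies(latencies_ms, window=5, threshold=3.0):
    """Return indices of samples more than `threshold` standard deviations
    above the mean of the preceding `window` samples."""
    anomalies = []
    for i in range(window, len(latencies_ms)):
        history = latencies_ms[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma > 0 and (latencies_ms[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical latency samples (ms) for one service in a waterfall.
samples = [100, 102, 98, 101, 99, 100, 350, 101, 99]
anomalies = find_latency_anomalies(samples)
print(anomalies)
```

Production systems would typically use more robust techniques (seasonality-aware baselines, learned models), but the principle of comparing current behavior against recent history is the same.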

Platforms like APIPark, which focus on being an "AI Gateway," are at the forefront of this trend, not just integrating AI models but also leveraging AI principles to manage and optimize the gateway's own operations and the APIs it serves.

4. Observability and Distributed Tracing

As API waterfalls grow in length and complexity, having deep visibility into the entire request flow becomes paramount.

- Distributed Tracing: Tools like Jaeger or OpenTelemetry allow developers to trace a single request as it propagates through multiple services and APIs in a waterfall. Each segment of the trace (span) captures details like service name, operation, duration, and metadata, enabling precise identification of bottlenecks and error sources.

- Centralized Logging and Metrics: Consolidating logs from all services and collecting comprehensive metrics (latency, error rates, resource utilization) provides a holistic view of the system's health. Correlating these data points across services is crucial for understanding the behavior of API waterfalls.

- Service Maps: Visualizing the dependencies between services and APIs helps in understanding the structure of API waterfalls and identifying potential points of failure.
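The following hand-rolled sketch shows what a trace and its spans capture. It intentionally does not use the OpenTelemetry or Jaeger APIs; `span`, `spans`, and the service names are hypothetical, and the downstream calls are simulated with `time.sleep`.

```python
import time
import uuid
from contextlib import contextmanager

spans = []  # collected spans; a real tracer would export these to a backend


@contextmanager
def span(name, trace_id, parent_id=None):
    """Record the name, parent, and duration of one unit of work."""
    span_id = uuid.uuid4().hex[:8]
    start = time.perf_counter()
    try:
        yield span_id
    finally:
        spans.append({
            "trace_id": trace_id,
            "span_id": span_id,
            "parent_id": parent_id,
            "name": name,
            "duration_ms": (time.perf_counter() - start) * 1000,
        })


def handle_request():
    # One trace ID is shared by every span created for this request.
    trace_id = uuid.uuid4().hex
    with span("gateway", trace_id) as root:
        with span("auth-service", trace_id, parent_id=root):
            time.sleep(0.01)  # simulated downstream call
        with span("catalog-service", trace_id, parent_id=root):
            time.sleep(0.03)  # simulated slower downstream call
    return trace_id


trace_id = handle_request()
child_spans = [s for s in spans if s["parent_id"] is not None]
slowest = max(child_spans, key=lambda s: s["duration_ms"])
print(slowest["name"])
```

Because every span carries the same trace ID and a parent reference, the slowest step in the waterfall can be found by inspecting one trace instead of searching logs service by service.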

These advanced concepts are not mutually exclusive; rather, they often complement each other, offering increasingly sophisticated ways to build, manage, and optimize the complex interdependencies that characterize API waterfalls in modern distributed systems. The ongoing evolution in these areas promises to make even the most intricate API flows more manageable, resilient, and performant.

Case Studies and Examples

To truly solidify our understanding of API waterfalls and their management, let's consider how real-world systems tackle these challenges. While the term "API Waterfall" itself is metaphorical, the underlying problem of chained API calls and their associated issues is universal in complex architectures.

1. Netflix: The Master of Microservices and Resilience

Netflix is a quintessential example of a company that operates on a massive scale, relying heavily on microservices and complex API interactions. When you interact with Netflix, a single request to stream a movie triggers a cascade of internal API calls:

- Authentication and Authorization: Verify user identity and content access rights.

- User Profile Service: Retrieve user preferences, viewing history, and parental controls.

- Recommendation Service: Generate personalized content suggestions.

- Content Catalog Service: Fetch metadata for movies/shows.

- Licensing Service: Check regional content availability.

- Payment/Subscription Service: Verify active subscription.

- Video Encoding/Streaming Service: Select appropriate video quality and deliver the stream.

- Activity Tracking Service: Log user interaction for future recommendations and analytics.

How Netflix manages the "Waterfall":

- API Gateway (Zuul): Netflix famously developed Zuul (now open-source) as its edge gateway to handle all inbound requests. It provides dynamic routing, monitoring, security, and traffic management, effectively shielding clients from the complexity of the backend API waterfall.

- Hystrix (Circuit Breaker): To prevent cascading failures, Netflix created Hystrix, a latency and fault tolerance library that implements the circuit breaker pattern. If an internal service in a waterfall becomes unresponsive, Hystrix quickly fails the request without waiting for a timeout, protecting the downstream services and allowing the system to degrade gracefully (e.g., showing generic recommendations instead of failing the entire page load).

- Asynchronous Communication: While some calls are synchronous, many internal processes are asynchronous, leveraging message queues for decoupling.

- Comprehensive Observability: Netflix heavily invests in distributed tracing and monitoring tools (like their custom "Spectator" metrics system) to gain deep insights into the performance and health of their vast API ecosystem. This allows them to quickly identify and resolve bottlenecks within their API waterfalls.
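The circuit breaker pattern described above can be sketched as follows. This is a simplified illustration of the pattern, not Hystrix's actual implementation or API; `CircuitBreaker`, its parameters, and the two recommendation functions are hypothetical.

```python
import time


class CircuitBreaker:
    """Fail fast after repeated failures, then retry after a cool-down."""

    def __init__(self, failure_threshold=3, reset_after_s=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                return fallback()  # open: skip the failing service entirely
            # Cool-down elapsed (half-open): fall through and allow one trial.
        try:
            result = func()
        except Exception:
            self.failures += 1
            if (self.opened_at is not None
                    or self.failures >= self.failure_threshold):
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            return fallback()
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result


calls = {"count": 0}


def flaky_recommendations():
    calls["count"] += 1
    raise ConnectionError("recommendation service unavailable")


def generic_recommendations():
    return ["popular-title-1", "popular-title-2"]


breaker = CircuitBreaker(failure_threshold=3, reset_after_s=30.0)
results = [
    breaker.call(flaky_recommendations, generic_recommendations)
    for _ in range(5)
]
print(calls["count"], results[-1])
```

After three failures the breaker opens, so the fourth and fifth requests return generic recommendations without touching the failing service at all.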

2. Modern Financial Services: Transaction Processing

Financial institutions deal with incredibly complex and sensitive transactions that often involve multiple systems and external partners, forming critical API waterfalls. Consider a cross-border payment:

- Client API Call: User initiates a payment request.

- Fraud Detection API: Assess the risk of the transaction.

- Sanctions Screening API: Check for compliance with international sanctions lists.

- Currency Exchange Rate API: Get real-time exchange rates (if applicable).

- Internal Ledger API: Debit the sender's account.

- External Banking Partner API: Initiate payment to the recipient's bank.

- Notification API: Send confirmation to the user.

Challenges and Solutions:

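- High Reliability & Atomicity: Failures in this waterfall are unacceptable. Financial systems often employ two-phase commit (2PC) or saga patterns to keep distributed transactions consistent. With 2PC, either all participants commit or all roll back. With a saga, each step commits locally, and if a later step fails, the completed steps are undone by compensating actions (for example, refunding a debit).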

- Strict Security: Every API in the chain is heavily secured with multi-factor authentication, strong encryption, and rigorous access controls. An API gateway is critical for centralizing these security policies.

- Performance: While atomicity is key, performance is also vital. Batch processing for non-real-time updates and highly optimized, low-latency APIs for critical steps are common.

- Auditing and Traceability: Every step in the waterfall is meticulously logged and traceable for regulatory compliance and dispute resolution. Distributed tracing and comprehensive logging (as offered by solutions like APIPark) are essential.
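A saga can be sketched as a list of steps, each paired with a compensating action. The example below is a toy model of the payment flow, assuming an in-memory ledger; `run_saga` and all step functions are hypothetical, and real systems would also persist saga state so that compensation survives a crash.

```python
def run_saga(steps):
    """Run (name, action, compensation) steps in order. If a step fails,
    run the compensations of the completed steps in reverse order."""
    completed = []
    for name, action, compensate in steps:
        try:
            action()
        except Exception as exc:
            for _, undo in reversed(completed):
                undo()
            return {"status": "compensated", "failed_step": name,
                    "error": str(exc)}
        completed.append((name, compensate))
    return {"status": "completed", "failed_step": None, "error": None}


ledger = {"sender_balance": 100}
audit = []


def check_fraud():
    audit.append("fraud check passed")


def debit_sender():
    ledger["sender_balance"] -= 40
    audit.append("debited 40")


def refund_sender():
    ledger["sender_balance"] += 40
    audit.append("refunded 40")


def send_to_partner_bank():
    # Simulated failure of the external banking partner.
    raise TimeoutError("partner bank did not respond")


def noop():
    pass


outcome = run_saga([
    ("fraud_check", check_fraud, noop),
    ("debit_sender", debit_sender, refund_sender),
    ("partner_transfer", send_to_partner_bank, noop),
])
print(outcome["status"], ledger["sender_balance"])
```

The debit is not rolled back in the database sense; it is reversed by a separate refund, and both actions remain visible in the audit trail.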

3. Smart City Platforms: Data Aggregation and Action

Smart city initiatives often involve integrating data from myriad sensors, municipal services, and public interfaces to provide unified dashboards and trigger automated actions.

- Dashboard Request: A city official requests a dashboard showing real-time traffic, air quality, and public transport status.

- Traffic Management System API: Fetch sensor data, traffic flow.

- Environmental Monitoring API: Get air quality readings.

- Public Transport API: Retrieve bus/train locations, schedules.

- Weather Service API: Local weather conditions.

- Data Aggregation Service: Combine and process all these inputs.

- Visualization API: Render data for the dashboard.

Managing the Diverse Waterfall:

- API Gateway for Central Access: A robust API gateway is essential to provide a unified access point for all these disparate services, many of which might be external or legacy systems. It handles routing, authentication, and potentially protocol translation.

- Asynchronous Data Ingestion: For continuous sensor data, an event-driven architecture with message queues (e.g., Kafka) is often used to ingest data asynchronously, preventing individual sensor failures from halting the entire system.

- Caching for Performance: Static or slowly changing data (e.g., bus route maps, historical air quality averages) is heavily cached to reduce repeated calls to backend systems and improve dashboard responsiveness.

- Microservices for Modularity: Each service (Traffic, Environment, Transport) is often a separate microservice, allowing independent development, scaling, and fault isolation.
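The caching point can be illustrated with a small time-to-live (TTL) cache. This is a minimal sketch assuming a single process and an injectable clock for demonstration; `TTLCache`, `load_bus_routes`, and the 60-second TTL are hypothetical choices.

```python
import time


class TTLCache:
    """Cache values for ttl_s seconds before reloading them from the backend."""

    def __init__(self, ttl_s, clock=time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self._entries = {}  # key -> (stored_at, value)

    def get_or_load(self, key, loader):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self.ttl_s:
            return entry[1]  # still fresh: no backend call
        value = loader()
        self._entries[key] = (now, value)
        return value


backend_calls = {"count": 0}


def load_bus_routes():
    # Hypothetical call to a slow public transport backend.
    backend_calls["count"] += 1
    return ["route-10", "route-22"]


fake_now = [0.0]  # controllable clock so the example needs no real waiting
cache = TTLCache(ttl_s=60, clock=lambda: fake_now[0])

first = cache.get_or_load("bus_routes", load_bus_routes)
second = cache.get_or_load("bus_routes", load_bus_routes)  # served from cache
fake_now[0] = 61.0
third = cache.get_or_load("bus_routes", load_bus_routes)   # expired, reloaded
print(backend_calls["count"])
```

Three dashboard reads result in only two backend calls; with a longer TTL or more frequent reads the savings grow accordingly.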

These examples underscore that while "API Waterfall" is a conceptual term, the underlying challenges are intensely real in high-stakes, high-volume environments. The effective management of these sequential API dependencies through strategic design patterns, robust tooling like API gateways, and a commitment to observability is a cornerstone of modern software engineering.

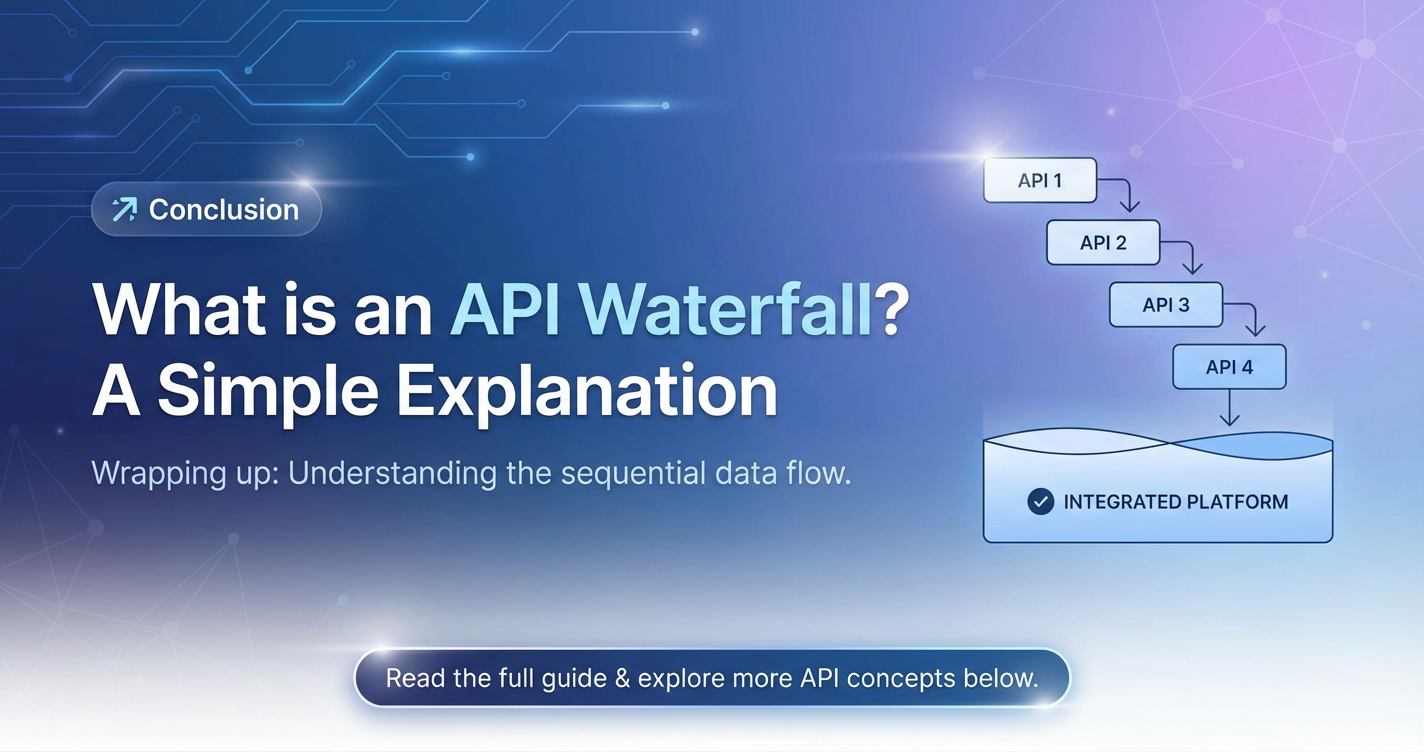

Conclusion: Taming the Digital Cascade

In the vibrant and interconnected ecosystem of modern software, APIs are the very lifeblood, facilitating communication, integration, and innovation across a myriad of services. As applications grow in complexity, integrating diverse functionalities and often leveraging cutting-edge technologies like AI, the pattern of sequential, dependent API calls—what we've termed the "API Waterfall"—becomes an inevitable and often critical aspect of system design.

While these digital cascades are essential for constructing rich, multi-faceted functionalities, they introduce a host of formidable challenges. The cumulative impact on performance, the fragility inherent in cascading failures, the burgeoning complexity for maintenance, and the expanded surface area for security vulnerabilities demand meticulous attention and sophisticated solutions. Ignoring these challenges can lead to sluggish user experiences, unreliable systems, and ultimately, significant business repercussions.

However, the journey through the API waterfall is not one fraught with insurmountable obstacles. By embracing strategic design patterns, developers can transform these potential pitfalls into robust, resilient, and highly performant architectures. Asynchronous processing decouples services, preventing a single slow point from holding back the entire flow. Batching and aggregation patterns minimize network round trips, significantly reducing latency. Caching strategies ensure that frequently requested data is served with lightning speed, offloading backend services. Crucially, the implementation of circuit breakers, timeouts, and rate limiting fortifies the system against the inevitable failures of individual components, preventing localized issues from spiraling into system-wide outages.

At the heart of managing these intricate API waterfalls, the API gateway emerges as the indispensable orchestrator. Serving as the single entry point for all client requests, it centralizes critical functionalities: intelligent request routing, robust authentication and authorization, efficient traffic management, comprehensive monitoring, and flexible data transformation. By abstracting the complexity of the backend from the client, the API gateway provides a unified, secure, and performant interface, making complex inter-service dependencies manageable and observable. Platforms like APIPark, as an open-source AI gateway and API management solution, exemplify how specialized gateway capabilities can specifically streamline the integration and orchestration of AI models, a common source of complex API waterfalls, offering unified formats, lifecycle management, and detailed observability.

Looking ahead, the evolution of serverless computing, the pervasive adoption of event-driven architectures, the intelligent application of AI/ML in API management, and the increasing sophistication of observability tools promise even more powerful ways to navigate and optimize API waterfalls. These advancements will continue to empower developers to build systems that are not only more efficient and secure but also more adaptive and resilient in the face of constant change.

In essence, understanding the API waterfall is about acknowledging the intricate dance of modern software components. Taming it is about applying architectural wisdom, leveraging powerful tools, and committing to continuous monitoring and refinement. By doing so, we ensure that the digital cascade flows smoothly rather than becoming a torrent of challenges, carrying data and functionality with precision and reliability and powering the next generation of digital experiences.

Frequently Asked Questions (FAQ)

1. What exactly is meant by "API Waterfall"?

The term "API Waterfall" is a metaphor used to describe a sequence of dependent API calls, where the successful completion and often the output of one API invocation are required before the next API in the chain can execute. It's not a formal architectural pattern but a descriptive term for a common scenario in distributed systems where a single user action or business process triggers a series of interconnected API interactions, each relying on the preceding one.

2. Why is an API Waterfall a challenge, and what are its main drawbacks?

API waterfalls present several key challenges:

- Performance Latency: Each API call adds its own latency, which accumulates across the chain, potentially leading to slow overall response times.

- Reliability & Resilience: A failure or slowdown in any single API within the waterfall can cause a cascading failure, disrupting the entire process.

- Complexity & Maintainability: Debugging, versioning, and testing become significantly more complex due to the intricate interdependencies.

- Security Risks: Each additional API call represents another potential point of vulnerability or data exposure in the flow.
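A quick back-of-the-envelope calculation shows how latency and reliability compound. The numbers below are hypothetical, and the availability figure assumes the services fail independently.

```python
import math


def sequential_latency(latencies_ms):
    # In a strict chain, each call waits for the previous one: latencies add up.
    return sum(latencies_ms)


def parallel_latency(latencies_ms):
    # If the calls were independent and issued together, the slowest dominates.
    return max(latencies_ms)


def chain_availability(availabilities):
    # Every call in the chain must succeed, so the probabilities multiply.
    return math.prod(availabilities)


chain_ms = [40, 120, 60, 80]  # hypothetical per-call latencies
print(sequential_latency(chain_ms))  # 300
print(parallel_latency(chain_ms))    # 120
print(round(chain_availability([0.999] * 4), 4))
```

Four calls that each look fast and reliable on their own still add up to a 300 ms response, and the chain as a whole is less available than any single service in it.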

3. How does an API Gateway help manage API Waterfalls?

An API gateway acts as a central entry point for all client requests, providing a single point of control for managing API waterfalls. It helps by:

- Aggregating Calls: It can receive a single client request and internally fan it out to multiple backend services, combining their responses before sending a single response back to the client, reducing client-side waterfalls.

- Centralizing Security: Handles authentication, authorization, and security policy enforcement at the edge.

- Traffic Management: Implements rate limiting, throttling, and load balancing to protect backend services from overload and ensure fair usage.

- Observability: Provides centralized logging, monitoring, and distributed tracing capabilities to gain insights into the entire API waterfall.

- Caching: Can cache responses to reduce repeated calls to backend services.

4. What are some architectural patterns that mitigate API Waterfall issues?

Several design patterns can help mitigate the challenges of API waterfalls:

- Asynchronous Processing: Using message queues or event streams to decouple services and allow them to operate independently, preventing a single slow service from blocking the entire chain.

- Batching & Aggregation: Consolidating multiple individual requests into a single API call to reduce network round trips.

- Caching Strategies: Storing and reusing frequently accessed data at client, server, or gateway levels to reduce redundant API calls.

- Circuit Breakers & Timeouts: Implementing mechanisms to quickly fail requests to unresponsive services, preventing cascading failures and resource exhaustion.

- Rate Limiting: Controlling the number of requests to protect services from being overwhelmed.
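Of these patterns, rate limiting is perhaps the easiest to show in code. The token bucket below is one common way to implement it, sketched under the assumption of a single process; `TokenBucket` and its parameters are hypothetical, and the clock is injectable only so the example runs without real waiting.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_s` tokens/second."""

    def __init__(self, rate_per_s, capacity, clock=time.monotonic):
        self.rate_per_s = rate_per_s
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated_at = clock()

    def allow(self):
        now = self.clock()
        elapsed = now - self.updated_at
        # Refill for the elapsed time, never exceeding the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_s)
        self.updated_at = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


fake_now = [0.0]  # controllable clock for the demonstration
bucket = TokenBucket(rate_per_s=1, capacity=2, clock=lambda: fake_now[0])

burst = [bucket.allow() for _ in range(3)]  # third request exceeds the burst
fake_now[0] = 1.0                           # one second later: one token refilled
after_refill = bucket.allow()
print(burst, after_refill)
```

A gateway would usually keep one bucket per client or API key, often in a shared store so that limits hold across gateway instances.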

5. Can APIPark help with managing complex API waterfalls, especially with AI models?

Yes, APIPark is specifically designed as an open-source AI gateway and API management platform that is well-suited for managing complex API waterfalls, particularly those involving AI models. It helps by:

- Unified AI API Format: Standardizes request formats across diverse AI models, simplifying chained invocations and reducing maintenance.

- Prompt Encapsulation: Allows custom AI workflows to be exposed as standard REST APIs, making them easier to integrate into waterfalls.

- Lifecycle Management: Provides tools for managing the entire API lifecycle, including traffic management, load balancing, and versioning, which are critical for stable waterfalls.

- Performance & Observability: Offers high performance to avoid bottlenecks at the gateway level and provides detailed logging and data analysis for troubleshooting and optimizing API waterfalls.

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.