API Gateway Main Concepts: Explained for Beginners

In the vast and interconnected landscape of modern software development, where applications are no longer monolithic behemoths but intricate ecosystems of independent services, a central orchestrator becomes not just useful but essential. This orchestrator is what we refer to as an API Gateway. For anyone venturing into the world of microservices, cloud computing, or the broader API economy, understanding the fundamental principles and functions of an API Gateway is paramount. It acts as the digital doorman, the intelligent traffic controller, and the vigilant security guard for your entire application infrastructure. Without it, managing the complexity, ensuring the security, and maintaining the performance of numerous interacting services would quickly descend into unmanageable chaos.

This comprehensive guide is designed to demystify the API Gateway for beginners. We will embark on a journey starting from the very basics of what an API is, understand the critical need that gave rise to the API Gateway, delve deep into its core concepts and essential functions, explore various architectural patterns, discuss crucial implementation considerations, and even glimpse into its evolving future. Our aim is to provide a detailed, accessible, and practical understanding, equipping you with the knowledge to confidently navigate and leverage this powerful component in your own projects and professional endeavors. By the end of this article, you will not only grasp what an API Gateway does but also appreciate its indispensable role in building robust, scalable, and secure distributed systems.

Chapter 1: Understanding the Foundation - What is an API?

Before we can fully appreciate the role of an API Gateway, it’s crucial to have a solid understanding of what an API itself is. The acronym API stands for Application Programming Interface. In its simplest form, an API is a set of defined rules and protocols that allows different software applications to communicate with each other. Think of it as a contract: one application offers a service, and another application uses the API to request that service, adhering to the contract's terms. It’s the digital bridge that enables disparate software components to interact, share data, and invoke functionalities in a controlled and predictable manner.

To use a common analogy, imagine you’re in a restaurant. You, the customer, want to order food. You don’t go into the kitchen yourself to tell the chef what you want or how to cook it. Instead, you interact with a waiter. You tell the waiter your order, the waiter takes it to the kitchen, the kitchen prepares the food, and the waiter brings it back to your table. In this scenario, the waiter is the API. You (your application) make a request to the waiter (the API), who then communicates with the kitchen (another application or service) to fulfill your request, and finally delivers the response (the food) back to you. The waiter abstracts away the complexity of the kitchen, providing a simple, consistent interface for your interaction.

Historically, APIs have existed in various forms, from library calls within a single program to remote procedure calls (RPC) across networks. However, the most prevalent form in today's internet-driven world is the Web API. Web APIs use standard web protocols, primarily HTTP, to facilitate communication between web servers and web clients (like browsers, mobile apps, or other servers). The dominant architectural style for Web APIs is REST (Representational State Transfer), which leverages standard HTTP methods (GET, POST, PUT, DELETE) to perform operations on resources identified by URLs. Other styles, such as SOAP (Simple Object Access Protocol) and, more recently, GraphQL, serve similar purposes, each with its own characteristics and use cases.

The importance of APIs in modern software development cannot be overstated. They are the fundamental building blocks of interconnectivity. Without APIs, every application would largely operate in isolation, incapable of sharing data or functionalities with others. Consider your daily digital life: when you use a weather app, it likely fetches data from a weather service's API. When you log into an application using your Google or Facebook account, that application is interacting with Google's or Facebook's authentication API. Payment gateways, mapping services, social media integrations – almost every rich digital experience relies heavily on a multitude of API interactions happening behind the scenes. In a world increasingly dominated by microservices architectures, where a large application is broken down into small, independent services, APIs are the glue that holds these services together, enabling them to communicate and collaborate to deliver a unified user experience. They fuel innovation by allowing developers to build new applications by combining existing services, rather than reinventing the wheel, fostering a thriving API economy.

Chapter 2: The Need for a Gateway - Why Not Direct Access?

With a clear understanding of what an API is, the next logical question might be: why do we need an API Gateway at all? Why can't clients simply communicate directly with the backend services that expose these APIs? While direct client-to-service communication might seem simpler at first glance, especially for small, monolithic applications, this approach quickly becomes problematic and unsustainable as systems grow in complexity, particularly with the widespread adoption of microservices architectures. The sheer volume of issues that arise from direct access underscores the critical need for a centralized intermediary – a gateway – that can manage and orchestrate these interactions.

Let's delve into the specific challenges and problems that direct client-to-service communication introduces, which an API Gateway is designed to solve:

1. Managing Numerous Endpoints and Increased Client Complexity: In a microservices architecture, an application is composed of dozens, sometimes hundreds, of small, independently deployable services. Each of these services exposes its own set of APIs, residing at unique network addresses. If a client application (e.g., a mobile app or a web frontend) needs to interact with multiple services to fulfill a single user request, it would have to know the specific endpoint for each service. This means the client application becomes responsible for managing a large number of service URLs, handling service discovery, and potentially dealing with different communication protocols for each service. This significantly increases the complexity of the client-side code and makes it brittle, as any change in a backend service's location or interface directly impacts the client.

2. Security Concerns and Decentralized Enforcement: Security is paramount for any application, and in a direct access model, implementing it becomes a distributed nightmare. Each individual microservice would be responsible for its own authentication (verifying the client's identity) and authorization (determining what the client is allowed to do). This leads to:

- Redundant Security Logic: Every service needs to implement the same authentication and authorization logic, leading to duplicated effort, increased development time, and a higher risk of security vulnerabilities due to inconsistent implementations.

- Inconsistent Security Policies: Without a central enforcement point, maintaining a consistent security posture across all services becomes incredibly difficult. A single oversight in one service could compromise the entire system.

- Exposing Internal Architecture: Direct access means exposing the internal network topology and individual service endpoints to the outside world, increasing the attack surface and making it easier for malicious actors to probe and exploit vulnerabilities.

3. Cross-Cutting Concerns and Duplicated Logic: Beyond security, many other functionalities are common to almost all services in a distributed system. These are known as cross-cutting concerns:

- Logging and Monitoring: Collecting comprehensive logs for every request and monitoring the performance and health of each service.

- Rate Limiting/Throttling: Protecting services from being overwhelmed by too many requests, either accidental or malicious (e.g., DDoS attacks).

- Caching: Storing frequently accessed data to reduce latency and load on backend services.

- Request/Response Transformation: Modifying the data format or content between the client and the service.

- Auditing: Recording specific events for compliance or analytical purposes.

If each microservice has to implement these concerns independently, it leads to significant code duplication, increased complexity within the services themselves, and makes it challenging to apply system-wide policies consistently.

4. Routing Complexity and Service Discovery: Services in a microservices architecture are often ephemeral; they can scale up or down, be deployed and redeployed, and move between different hosts. Clients need a way to discover the current location of a service. While service discovery mechanisms exist (e.g., Consul, Eureka), forcing every client to implement service discovery adds significant overhead. Moreover, routing requests to the correct service instance, especially when multiple instances are running for load balancing, becomes a complex task for the client.

5. Version Management and Backward Compatibility: As services evolve, their APIs might change. When an API needs to be updated, maintaining backward compatibility for existing clients is a significant challenge. If clients are directly coupled to individual services, evolving an API can break older client versions, forcing all clients to update simultaneously. This creates a tight coupling that hinders independent evolution of services.

6. Resilience and Fault Tolerance: In a distributed system, individual services can fail. If a client is directly calling a service that becomes unavailable, the client needs to handle that failure gracefully, perhaps by retrying the request or falling back to a different service. Implementing robust fault tolerance mechanisms (like circuit breakers or retries) in every client for every service interaction is a daunting task, leading to inconsistent error handling and a fragile system overall.

These challenges collectively highlight why direct client-to-service communication is not a viable long-term strategy for complex, distributed applications. The sheer burden placed on client applications, coupled with the security risks and operational complexities, necessitates an architectural component that can abstract away these intricacies, providing a single, coherent, and secure entry point for all client interactions. This is precisely the role of the API Gateway. It acts as the intelligent gateway through which all external traffic must pass, centralizing control, enforcing policies, and simplifying the client's perspective of the backend system.

Chapter 3: Introducing the API Gateway - The Digital Doorman

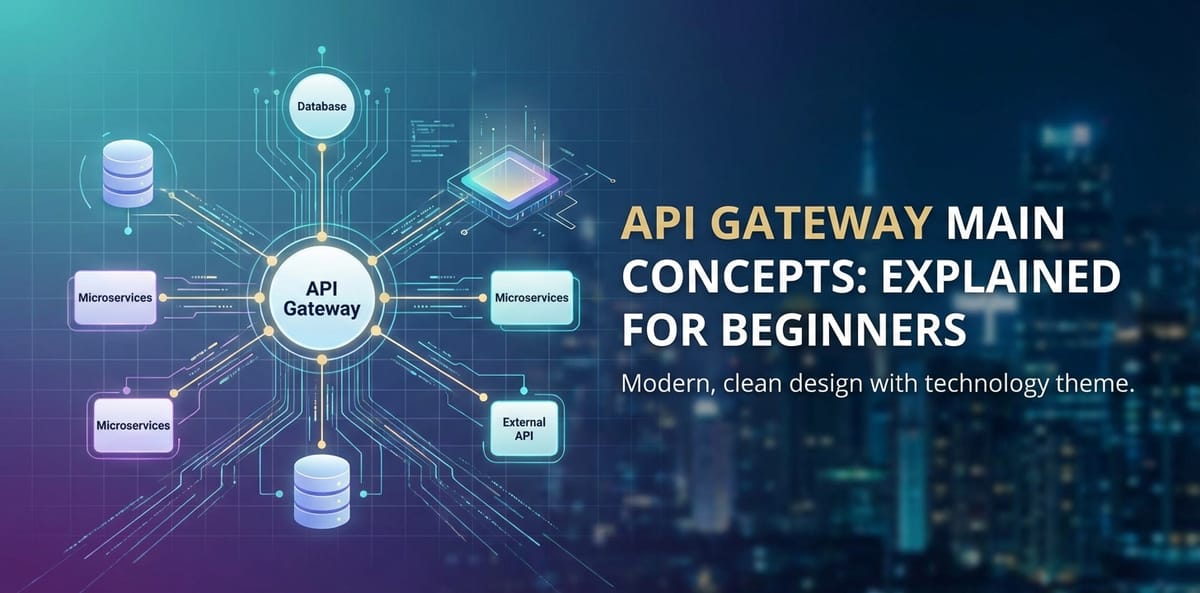

Having established the multitude of problems inherent in direct client-to-service communication, we can now properly introduce the elegant solution: the API Gateway. An API Gateway is essentially a single entry point for all client requests in a distributed system. Instead of clients interacting directly with individual backend services, they communicate with the API Gateway, which then intelligently routes the requests to the appropriate services, performs various cross-cutting concerns, and returns the aggregated or processed responses back to the client. It stands as the vigilant digital doorman, meticulously managing who enters, where they go, and what services they can access within your application infrastructure.

To expand on our previous analogy, if an individual API is like a waiter in a restaurant, an API Gateway is akin to a sophisticated hotel concierge or an airport control tower. When you arrive at a large hotel, you don't typically try to find your room directly or wander into the kitchen to order food. Instead, you go to the concierge desk. The concierge (the API Gateway) knows about all the services within the hotel (rooms, restaurants, spa, gym, etc.). You tell the concierge what you need, and they direct you to the right place, handle your requests, maybe even make reservations for you, and ensure you have a smooth experience, all while ensuring only authorized guests access certain areas. Similarly, an airport control tower manages all incoming and outgoing flights, ensuring safe and efficient routing, preventing collisions, and coordinating with various ground services, abstracting the complexity of air traffic from individual pilots.

The primary role of an API Gateway is to abstract the internal architecture of your backend services from the clients. Clients interact with a single, well-defined API exposed by the gateway, completely unaware of the number of services, their network locations, or the specific communication protocols they might be using internally. This abstraction provides immense flexibility and simplifies client development. For instance, if you decide to refactor a backend service, split it into two, or even change its internal API contract, the API Gateway can be configured to absorb these changes and present a consistent API to the client. This loose coupling is a cornerstone of agile development and microservices architectures.

It's important to differentiate an API Gateway from simpler network components like a reverse proxy or a load balancer, although an API Gateway often incorporates their functionality.

- Reverse Proxy: A reverse proxy sits in front of one or more web servers and forwards client requests to them. It can provide load balancing, caching, and basic security (like SSL/TLS termination). However, it typically forwards requests based on simple rules (e.g., host, path) without understanding the business semantics of the APIs behind it, and it doesn't usually perform complex transformations.

- Load Balancer: A load balancer distributes incoming network traffic across multiple servers to ensure optimal resource utilization, maximize throughput, minimize response time, and avoid overloading any single server. While an API Gateway definitely performs load balancing, its scope is much broader, extending into API-level concerns such as authentication, rate limiting, and transformation.

An API Gateway operates at a higher level of abstraction, often at the application layer (Layer 7 of the OSI model). It understands the semantics of HTTP requests and responses, allowing it to perform much more sophisticated operations beyond simple traffic forwarding. It can inspect request headers and bodies, transform payloads, apply complex routing logic based on user roles or API versions, and enforce fine-grained security policies.

The benefits of implementing an API Gateway are profound and directly address the challenges outlined in the previous chapter:

- Centralized Security and Policy Enforcement: Authentication, authorization, and other security policies can be enforced at a single choke point, preventing unauthorized access before requests even reach backend services. This ensures consistency and simplifies security management.

- Simplified Client Development: Clients only need to know about one endpoint – the API Gateway. This significantly reduces client complexity, especially for mobile and web applications that might need to consume data from many different services.

- Improved Performance and Reduced Latency: Caching, request aggregation, and intelligent routing can drastically improve response times for clients by reducing the number of round trips and offloading work from backend services. For instance, a single client request might require data from three different microservices. Without an API Gateway, the client makes three separate requests. With an API Gateway, the client makes one request, and the gateway internally orchestrates the three backend calls, aggregates the results, and sends a single response.

- Service Decoupling and Encapsulation: The API Gateway shields clients from changes in the internal architecture, allowing services to evolve independently without impacting client applications. This fosters greater agility and reduces the risk of breaking existing integrations.

- Resilience and Fault Tolerance: The gateway can implement patterns like circuit breakers, retries, and fallbacks, protecting clients from service failures and preventing cascading failures across the system. If a service is down, the gateway can return a cached response or an appropriate error without forcing the client to deal with the intricacies of service health.

- Enhanced Observability: By centralizing all incoming and outgoing traffic, the API Gateway becomes an ideal point for comprehensive logging, monitoring, and tracing, providing a holistic view of system behavior and performance. This centralized visibility is crucial for troubleshooting and performance analysis.

- Optimized Resource Utilization: Features like rate limiting protect backend services from being overwhelmed by traffic, ensuring they can serve legitimate requests effectively and preventing costly resource spikes.

In essence, an API Gateway serves as the intelligent intermediary that sits between clients and a collection of backend services. It streamlines communication, enhances security, boosts performance, and simplifies the overall architecture, making it an indispensable component for building scalable, resilient, and manageable modern applications.

Chapter 4: Core Concepts and Functions of an API Gateway

The true power of an API Gateway lies in its rich array of functionalities, each designed to address specific challenges in distributed systems. These capabilities transform it from a mere proxy into a highly intelligent and versatile traffic manager and policy enforcer. Understanding these core concepts is fundamental to appreciating how an API Gateway contributes to a robust and efficient architecture.

4.1 Request Routing and Load Balancing

At its heart, an API Gateway is a sophisticated router. Its primary task is to receive an incoming client request and intelligently direct it to the correct backend service instance that can fulfill that request. This involves several layers of decision-making:

- Path-Based Routing: The most common form, where the gateway routes requests based on the URL path. For example, requests to /users might go to the User Service, while requests to /products go to the Product Catalog Service.

- Host-Based Routing: Used in multi-tenant environments or when different services are exposed via different domain names.

- Header-Based Routing: More advanced routing rules can inspect request headers (e.g., X-API-Version) to direct requests to specific versions of a service or to A/B testing instances.

- Query Parameter-Based Routing: Similar to header-based routing, except that decisions are based on query parameters present in the URL.
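To make these rules concrete, here is a minimal routing-table sketch in Python. The service names, hostnames, and the `Route`/`select_route` helpers are hypothetical, and the "most specific rule wins" tie-breaking (longest path prefix first, then the rule with more conditions) is one reasonable policy among several; real gateways each define their own precedence. Incoming header names are lowercased before matching, since HTTP header names are case-insensitive.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Route:
    service: str                    # hypothetical backend service name
    path_prefix: str = "/"          # path-based: match this prefix
    host: Optional[str] = None      # host-based: exact host, None = any host
    headers: Dict[str, str] = field(default_factory=dict)  # header-based conditions (lowercase names)
    query: Dict[str, str] = field(default_factory=dict)    # query-parameter conditions

    def matches(self, host, path, headers, query):
        if self.host is not None and self.host != host:
            return False
        # Match whole path segments so "/users" does not capture "/usersettings".
        prefix = self.path_prefix.rstrip("/")
        if prefix and path != prefix and not path.startswith(prefix + "/"):
            return False
        if any(headers.get(k) != v for k, v in self.headers.items()):
            return False
        return all(query.get(k) == v for k, v in self.query.items())

    def specificity(self):
        # Longer prefixes win; among equal prefixes, more conditions win.
        return (len(self.path_prefix),
                len(self.headers) + len(self.query) + (self.host is not None))


def select_route(routes: List[Route], host, path, headers=None, query=None) -> Optional[Route]:
    # Normalize header names, since HTTP header names are case-insensitive.
    headers = {k.lower(): v for k, v in (headers or {}).items()}
    query = query or {}
    candidates = [r for r in routes if r.matches(host, path, headers, query)]
    return max(candidates, key=Route.specificity, default=None)


# Hypothetical routing table covering all four rule types.
ROUTES = [
    Route("user-service", "/users"),
    Route("user-service-v2", "/users", headers={"x-api-version": "2"}),
    Route("product-catalog", "/products"),
    Route("catalog-beta", "/products", query={"beta": "1"}),
    Route("tenant-a-portal", "/", host="tenant-a.example.com"),
]
```

For example, `select_route(ROUTES, "api.example.com", "/users/42", headers={"X-API-Version": "2"})` selects `user-service-v2`, while the same request without the header falls back to `user-service`.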

Beyond just identifying the correct service, the API Gateway is also crucial for Load Balancing. In a scalable architecture, there are typically multiple instances of each service running to handle traffic spikes and provide high availability. The gateway distributes incoming requests across these available instances, ensuring no single service instance becomes overwhelmed while others remain idle. Common load balancing algorithms include:

- Round Robin: Requests are distributed sequentially to each server in the pool.

- Least Connections: Requests are sent to the server with the fewest active connections.

- Weighted Round Robin/Least Connections: Servers can be assigned weights based on their capacity, directing more traffic to more powerful instances.

- IP Hash: Requests from a particular client IP address are consistently sent to the same server (as long as the set of servers doesn't change), which can be useful for session stickiness.
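Each of these algorithms fits in a few lines. The following is an illustrative, single-threaded Python sketch with hypothetical server names; a real gateway would also track instance health and handle concurrent access. For weighted round robin it uses the "smooth" variant popularized by NGINX, which interleaves picks rather than sending a heavy server a long run of consecutive requests.

```python
import hashlib
import itertools


class RoundRobin:
    """Hand out servers in a fixed cyclic order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class WeightedRoundRobin:
    """Smooth weighted round robin: each server receives picks in proportion to its weight."""

    def __init__(self, weights):  # e.g. {"a": 5, "b": 1, "c": 1}
        self.weights = dict(weights)
        self.current = {s: 0 for s in self.weights}
        self.total = sum(self.weights.values())

    def pick(self):
        # Every server gains its weight; the leader is chosen and pays back the total.
        for server, weight in self.weights.items():
            self.current[server] += weight
        best = max(self.current, key=self.current.get)
        self.current[best] -= self.total
        return best


class LeastConnections:
    """Send each new request to the server with the fewest in-flight requests."""

    def __init__(self, servers):
        self.active = {s: 0 for s in servers}

    def acquire(self):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call when the request to `server` completes.
        self.active[server] -= 1


def ip_hash(client_ip, servers):
    """Map a client IP deterministically onto one server (modulo hashing)."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]
```

With weights of 5, 1, and 1, seven consecutive picks from a fresh `WeightedRoundRobin` yield a, a, b, a, c, a, a. Note that plain modulo hashing remaps most clients whenever the pool changes size; consistent hashing is the usual remedy when stickiness must survive scaling.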

Effective request routing and load balancing are critical for distributing workload efficiently, maximizing resource utilization, and maintaining consistent performance and availability of backend services.

4.2 Authentication and Authorization

One of the most significant benefits of an API Gateway is its ability to centralize security enforcement. Instead of each microservice implementing its own authentication and authorization logic, the gateway can handle these concerns at the perimeter, acting as a single point of truth for access control.

- Authentication: This is the process of verifying the identity of the client making the request. The API Gateway can support various authentication mechanisms:

- API Keys: Simple tokens passed with each request. The gateway validates the key against an internal store or an external identity provider.

- JWT (JSON Web Tokens): A self-contained token that can be verified cryptographically by the gateway without needing to call an identity provider for every request, improving performance.

- OAuth 2.0/OpenID Connect: Industry-standard protocols: OAuth 2.0 handles delegated authorization, allowing clients to access protected resources on behalf of a user, and OpenID Connect adds an identity (authentication) layer on top of it. The gateway can validate access tokens issued by an identity provider.

- Mutual TLS (mTLS): For highly secure environments, the gateway can enforce mutual authentication, where both the client and the server verify each other's certificates.

- Authorization: Once authenticated, authorization determines what the client is allowed to do. The API Gateway can apply fine-grained authorization policies based on:

- User Roles: Granting access to specific APIs or operations based on the user's role (e.g., admin, guest, premium).

- Scopes/Permissions: Validating that the client's token contains the necessary permissions (scopes) to access the requested resource.

- Attribute-Based Access Control (ABAC): More complex policies that evaluate attributes of the user, resource, and environment to make authorization decisions.


By centralizing these security functions, the API Gateway offloads a significant burden from individual microservices, ensuring consistent security policies across the entire system and reducing the attack surface.
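To illustrate what "verified cryptographically" means in practice, here is a sketch of a gateway-side check for an HS256-signed JWT followed by a scope check, using only the Python standard library. It assumes a shared HMAC secret and a space-separated `scope` claim (the convention OAuth 2.0 uses for scopes), and it checks only the signature and `exp`, not claims such as `iss` or `aud`. Production gateways more often verify RS256 or ES256 tokens against an identity provider's published public keys and should rely on a vetted JWT library rather than hand-rolled parsing. The `sign_hs256` helper exists only to make the example self-contained.

```python
import base64
import hashlib
import hmac
import json
import time


class AuthError(Exception):
    """Raised when a token fails validation; the gateway would answer 401."""


def b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    # JWT segments are unpadded base64url; restore the padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    # Demo-only helper: in practice the identity provider issues tokens.
    header = b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = b64url_encode(json.dumps(claims).encode())
    signature = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{b64url_encode(signature)}"


def verify_hs256(token: str, secret: bytes, now=None) -> dict:
    parts = token.split(".")
    if len(parts) != 3:
        raise AuthError("malformed token")
    header_b64, payload_b64, sig_b64 = parts
    header = json.loads(b64url_decode(header_b64))
    # Pin the algorithm: never let the token itself choose "none" or another alg.
    if header.get("alg") != "HS256":
        raise AuthError("unexpected signing algorithm")
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, b64url_decode(sig_b64)):
        raise AuthError("invalid signature")
    claims = json.loads(b64url_decode(payload_b64))
    current = time.time() if now is None else now
    if "exp" in claims and current >= claims["exp"]:
        raise AuthError("token expired")
    return claims


def has_scope(claims: dict, required: str) -> bool:
    # OAuth 2.0 represents granted scopes as a space-separated string.
    return required in claims.get("scope", "").split()
```

A gateway would run `verify_hs256` once per request (authentication, 401 on failure) and then `has_scope` against the scope its route configuration requires (authorization, 403 on failure), before forwarding anything to a backend.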

4.3 Rate Limiting and Throttling

To protect backend services from being overwhelmed by excessive traffic, whether malicious (like a DDoS attack) or accidental (like a runaway client application), API Gateways implement rate limiting and throttling.

- Rate Limiting: Restricts the number of requests a client can make to an API within a specific time window. If the limit is exceeded, subsequent requests are rejected, often with an HTTP 429 "Too Many Requests" status code. This prevents abuse and ensures fair usage.

- Throttling: Similar to rate limiting, but often involves queueing requests rather than immediately rejecting them, or applying a gradual reduction in the rate of service to a particular client. Throttling is typically used for resource management, ensuring sustained performance for all users.

The API Gateway can enforce these limits based on various criteria:

- Per API: Different APIs might have different usage quotas.

- Per Client/User: Each authenticated client or user might have a unique rate limit.

- Per IP Address: To protect against unauthenticated abuse.

- Time Windows: Limits can be configured for per second, per minute, per hour, or per day.

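Common strategies for implementing rate limits include:

- Fixed Window: A simple counter that resets at the start of each fixed time period. It is cheap, but a client can squeeze up to twice the limit into a short burst that straddles a window boundary.

- Sliding Window Log: Tracks the timestamp of each request to maintain a more accurate sliding window, at the cost of more memory per client.

- Token Bucket: Tokens refill at a steady rate up to a maximum capacity, and each request spends one. This enforces an average rate while still permitting brief bursts up to the bucket's capacity.

- Leaky Bucket: Requests drain from a queue at a constant rate, smoothing bursty traffic into a steady flow.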
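A token bucket is compact enough to sketch in full. The following Python sketch keeps one bucket per client key (an API key, user ID, or IP address, matching the criteria listed earlier) and reports whether a request may be forwarded; when it may not, the gateway would answer with HTTP 429. The class names are hypothetical, the clock is injectable so the behavior can be tested deterministically, and a gateway running on several nodes would keep bucket state in a shared store rather than in process memory.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity      # start full so a new client can burst immediately
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class RateLimiter:
    """One token bucket per client key (API key, user ID, or IP address)."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets = {}

    def allow(self, client_key: str) -> bool:
        # True: forward the request. False: reject with 429 Too Many Requests.
        if client_key not in self.buckets:
            self.buckets[client_key] = TokenBucket(self.rate, self.capacity, self.clock)
        return self.buckets[client_key].allow()
```

With `rate=1.0` and `capacity=3`, a client can send three requests at once, is then refused until tokens refill, and thereafter settles at about one request per second.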

Effective rate limiting is essential for maintaining the stability, availability, and cost-effectiveness of your backend infrastructure.

4.4 Caching

Caching is a fundamental optimization technique, and an API Gateway is an excellent place to implement it. By storing responses to frequently requested data, the gateway can serve subsequent identical requests directly from its cache, without needing to forward them to the backend service.

Benefits of caching:

- Reduced Latency: Clients receive responses much faster as the request doesn't have to traverse the entire network path to the backend service.

- Reduced Backend Load: Less traffic reaches the backend services, freeing up their resources to handle more complex or dynamic requests.

- Improved Scalability: Backend services can handle more unique requests without scaling up their infrastructure.

API Gateways can implement various caching strategies:

- Time-To-Live (TTL): Responses are cached for a specific duration, after which they are considered stale and re-fetched from the backend.

- Cache Invalidation: Mechanisms to explicitly remove or update cached items when the underlying data changes, shrinking the window in which clients might see stale data.

- Conditional Caching (ETag, Last-Modified): When a cached entry goes stale, the gateway can revalidate it by sending the backend a conditional request (If-None-Match or If-Modified-Since). If the data hasn't changed, the backend replies with 304 Not Modified and no body, and the gateway keeps serving its cached copy, saving bandwidth and processing power.
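The TTL and conditional-revalidation strategies combine naturally. This Python sketch assumes a hypothetical `fetch(path, if_none_match)` function standing in for the backend call, returning a status code, a body, and an ETag. It caches only 200 responses to GET-style requests and ignores `Cache-Control` headers, which a real gateway cache must respect; `GatewayCache` is likewise a hypothetical name.

```python
import time
from dataclasses import dataclass


@dataclass
class CachedResponse:
    body: bytes
    etag: str
    stored_at: float


class GatewayCache:
    def __init__(self, fetch, ttl_seconds: float, clock=time.monotonic):
        # fetch(path, if_none_match) -> (status, body, etag); stands in for the backend call.
        self.fetch = fetch
        self.ttl = ttl_seconds
        self.clock = clock
        self.entries = {}

    def get(self, path: str) -> bytes:
        now = self.clock()
        entry = self.entries.get(path)
        if entry is not None and now - entry.stored_at < self.ttl:
            return entry.body  # fresh hit: the backend is not contacted at all
        # Stale or missing: ask the backend, revalidating with the ETag if we have one.
        status, body, etag = self.fetch(path, entry.etag if entry else None)
        if status == 304 and entry is not None:
            entry.stored_at = now  # unchanged: reuse the cached body, restart the TTL
            return entry.body
        if status == 200:
            self.entries[path] = CachedResponse(body, etag, now)
        return body

    def invalidate(self, path: str) -> None:
        # Explicit invalidation, e.g. triggered when the underlying data changes.
        self.entries.pop(path, None)
```

Within the TTL the backend sees no traffic at all; after it, a cheap 304 exchange is enough to keep serving an unchanged response.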

4.5 API Transformation and Protocol Translation

One of the more powerful and flexible features of an API Gateway is its ability to transform requests and responses. This allows it to decouple the external API contract presented to clients from the internal API contracts of backend services.

- Request Transformation: The gateway can modify incoming requests before forwarding them to the backend. This might include:

- Header Manipulation: Adding, removing, or modifying HTTP headers.

- Body Transformation: Changing the format (e.g., from XML to JSON, or even structuring a client-friendly request into a format expected by a specific AI model), adding default values, or filtering sensitive data.

- Query Parameter Modification: Renaming, adding, or removing query parameters.

- Request Aggregation: Combining multiple client requests into a single backend request if a service requires it, or conversely, breaking down a single client request into multiple calls to different backend services to gather necessary data.

- Response Transformation: Similarly, the gateway can modify backend responses before sending them back to the client:

- Body Transformation: Restructuring JSON or XML responses to a format more convenient for the client, filtering out internal-only data, or enriching the response with additional information.

- Header Manipulation: Adding security headers, removing internal headers, or setting cache-control directives.

- Response Aggregation: A common and immensely useful feature is combining responses from multiple backend services into a single, unified response for the client. This is particularly valuable for clients (like mobile apps) that might need data from several services to render a single screen, reducing the number of round trips.
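Response aggregation and response filtering are easy to see together in an `asyncio` sketch. The three backend functions are hypothetical stand-ins that simulate network calls with `asyncio.sleep`; the gateway fans out to them concurrently, strips an internal-only field, and degrades gracefully when an optional service fails. Which sections are required and which may be left empty is a product decision, assumed here for illustration.

```python
import asyncio


# Hypothetical backend calls; a real gateway would make HTTP or gRPC requests here.
async def fetch_user(user_id):
    await asyncio.sleep(0.01)
    return {"id": user_id, "name": "Ada", "internal_shard": 7}


async def fetch_orders(user_id):
    await asyncio.sleep(0.01)
    return [{"order_id": 1, "total": 25.0}]


async def fetch_recommendations(user_id):
    await asyncio.sleep(0.01)
    raise TimeoutError("recommendation service timed out")


INTERNAL_FIELDS = {"internal_shard"}  # fields that must never reach clients


def strip_internal(record):
    return {k: v for k, v in record.items() if k not in INTERNAL_FIELDS}


async def profile_screen(user_id):
    # One client request fans out to three backend calls running concurrently.
    user, orders, recs = await asyncio.gather(
        fetch_user(user_id),
        fetch_orders(user_id),
        fetch_recommendations(user_id),
        return_exceptions=True,  # collect failures instead of aborting the whole gather
    )
    if isinstance(user, Exception):
        raise user  # the screen cannot render without the core user record
    return {
        "user": strip_internal(user),
        "orders": [] if isinstance(orders, Exception) else orders,
        # Optional section degrades to empty instead of failing the whole response.
        "recommendations": [] if isinstance(recs, Exception) else recs,
    }
```

Running `asyncio.run(profile_screen(42))` returns one merged document, so the mobile client makes a single round trip instead of three.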

Protocol Translation is a specific type of transformation where the API Gateway can translate requests between different communication protocols. For instance, a client might send a standard RESTful HTTP request, but the backend service might communicate using gRPC or a proprietary messaging queue. The gateway bridges this gap, allowing clients to use a familiar protocol while backend services can leverage protocols optimized for their specific needs. This capability is especially critical when integrating with diverse services, including specialized AI models. For example, a platform like APIPark offers a "Unified API Format for AI Invocation" and "Prompt Encapsulation into REST API." This means clients can interact with a wide array of AI models using a consistent REST API, and the API Gateway handles the internal transformation of these requests into the specific invocation formats and prompts required by each underlying AI model. This significantly simplifies AI integration for developers, abstracting away the complexities of various AI service APIs.

4.6 Logging and Monitoring

A robust API Gateway acts as a central point for collecting vital operational data, giving you a single vantage point from which to observe all of your API traffic.

- Detailed Logging: Every request and response passing through the gateway can be logged, capturing critical information such as:

- Timestamp of the request.

- Client IP address.

- Request method and URL.

- HTTP status code of the response.

- Response size.

- Latency (time taken for the request to be processed).

- User ID or API Key used.

- Backend service instance targeted.

This comprehensive logging is invaluable for debugging issues, auditing access, and understanding API usage patterns. For instance, APIPark provides "Detailed API Call Logging," ensuring that every nuance of an API call is recorded, which is crucial for quick troubleshooting and maintaining system stability.
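A common way to record the fields above is one JSON object per request, which log pipelines can parse without custom regular expressions. The function and field names below are illustrative assumptions rather than any particular gateway's log schema. The sketch records an identifier for the API key rather than the key itself, so credentials don't leak into logs.

```python
import json
import time


def access_log_entry(*, timestamp, latency_seconds, client_ip, method, url,
                     status, response_bytes, api_key_id, upstream):
    """Serialize one request as a single JSON log line (hypothetical schema)."""
    record = {
        # ISO 8601 UTC timestamp of when the request arrived (epoch seconds in).
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime(timestamp)),
        "client_ip": client_ip,
        "method": method,
        "url": url,
        "status": status,
        "response_bytes": response_bytes,
        "latency_ms": round(latency_seconds * 1000, 1),
        # Log an identifier for the credential, never the raw API key itself.
        "api_key_id": api_key_id,
        "upstream": upstream,  # which backend instance served the request
    }
    return json.dumps(record, sort_keys=True)
```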

- Monitoring and Metrics: The API Gateway can generate and export a wide range of metrics, which are essential for real-time monitoring and long-term performance analysis. These metrics include:

- Total number of requests per second/minute.

- Error rates (e.g., 4xx and 5xx responses).

- Average and percentile latency.

- Cache hit/miss ratios.

- Resource utilization (CPU, memory) of the gateway itself.

These metrics can be fed into monitoring and visualization tools (such as Prometheus, Grafana, or Datadog) to drive real-time alerts and dashboards of system health. Furthermore, by analyzing historical call data, as offered by features like APIPark's "Powerful Data Analysis," businesses can identify long-term trends and predict potential performance issues, enabling proactive maintenance and capacity planning.
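To show how these headline metrics derive from raw request data, this Python sketch summarizes a window of request samples. It uses the nearest-rank definition of a percentile, one of several common definitions; monitoring systems often estimate percentiles from histogram buckets instead, so their numbers can differ slightly. The `Sample` type and `summarize` function are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Sample:
    status: int
    latency_ms: float
    cache_hit: bool


def percentile(sorted_values, p):
    # Nearest-rank: the smallest value with at least p% of samples at or below it.
    if not sorted_values:
        raise ValueError("no samples")
    rank = max(1, math.ceil(p * len(sorted_values) / 100))
    return sorted_values[rank - 1]


def summarize(samples, window_seconds):
    """Compute gateway metrics for the samples observed in one time window."""
    n = len(samples)
    latencies = sorted(s.latency_ms for s in samples)
    return {
        "requests_per_second": n / window_seconds,
        "error_rate_4xx": sum(400 <= s.status < 500 for s in samples) / n,
        "error_rate_5xx": sum(s.status >= 500 for s in samples) / n,
        "latency_avg_ms": sum(latencies) / n,
        "latency_p50_ms": percentile(latencies, 50),
        "latency_p95_ms": percentile(latencies, 95),
        "latency_p99_ms": percentile(latencies, 99),
        "cache_hit_ratio": sum(s.cache_hit for s in samples) / n,
    }
```

Percentiles matter because averages hide tail latency: a handful of very slow requests barely moves the mean but shows up clearly at p99.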

4.7 Versioning

As applications evolve, so do their APIs. Managing different versions of an API concurrently is a common challenge, especially to maintain backward compatibility for existing clients while introducing new features. An API Gateway provides robust mechanisms for API versioning:

- URL Path Versioning: Embedding the version number directly in the URL (e.g., /v1/users, /v2/users). The gateway routes requests based on this path segment.

- Query Parameter Versioning: Passing the version as a query parameter (e.g., /users?api-version=1.0).

- Header Versioning: Using a custom HTTP header (e.g., X-API-Version: 1.0) to indicate the desired API version.

The API Gateway can route requests to different backend service versions based on these indicators. This allows developers to deploy new API versions without immediately breaking older clients, giving them time to migrate. It also facilitates A/B testing of new API features.
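The three versioning styles can coexist behind one gateway. This Python sketch resolves the requested version with an assumed precedence (path first, then the `X-API-Version` header, then the `api-version` query parameter, then a default) and maps it to a backend. The backend hostnames are hypothetical, and only the major version is used for routing.

```python
import re
from urllib.parse import parse_qs, urlsplit

# Hypothetical mapping from major API version to backend deployment.
BACKENDS = {"1": "users-v1.internal:8080", "2": "users-v2.internal:8080"}
DEFAULT_VERSION = "1"
PATH_VERSION = re.compile(r"^/v(\d+)(/.*)?$")  # matches "/v2" and "/v2/...", not "/videos"


def resolve_version(parts, headers):
    """Return (major_version, path_to_forward) using path > header > query > default."""
    m = PATH_VERSION.match(parts.path)
    if m:
        return m.group(1), m.group(2) or "/"  # strip the version segment before forwarding
    header = headers.get("x-api-version")
    if header:
        return header.split(".")[0], parts.path
    query = parse_qs(parts.query).get("api-version")
    if query:
        return query[0].split(".")[0], parts.path
    return DEFAULT_VERSION, parts.path


def route(url, headers=None):
    parts = urlsplit(url)
    version, path = resolve_version(parts, headers or {})
    backend = BACKENDS.get(version)
    if backend is None:
        return 400, f"unsupported API version: {version}"
    query = f"?{parts.query}" if parts.query else ""
    return 200, f"http://{backend}{path}{query}"
```

Because unknown versions are rejected with 400 rather than silently routed to the default, clients learn quickly when they ask for a version that has been retired.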

4.8 Circuit Breaker and Retries

In a distributed system, individual services can and will fail. A single failing service can, if not managed properly, cause a cascading failure across the entire system. The API Gateway can implement resilience patterns to mitigate this:

- Circuit Breaker: Inspired by electrical circuit breakers, this pattern prevents a client from repeatedly trying to invoke a service that is known to be failing. When a service experiences a high rate of failures, the circuit breaker "trips" (opens), and subsequent requests to that service are immediately rejected by the gateway without even attempting to call the backend. After a configurable timeout, the circuit enters a "half-open" state, allowing a limited number of test requests to pass through. If these succeed, the circuit "closes" and normal operation resumes; if they fail, the circuit opens again and the timeout restarts. This prevents overwhelming a failing service and gives it time to recover.

- Retries: For transient failures (e.g., network glitches, temporary service unavailability), the API Gateway can automatically retry a request to a backend service. This can significantly improve the perceived reliability of the system, but it must be implemented carefully to avoid overwhelming services or causing unintended side effects (especially for non-idempotent operations).
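The circuit breaker's closed, open, and half-open states map onto a small state machine. This single-threaded Python sketch trips after a number of consecutive failures, which is a simplification: production implementations often trip on a failure rate over a sliding window and guard the half-open state against concurrent trial requests. It lets one trial request through once the timeout has elapsed. `CircuitBreaker` and its parameters are hypothetical names, and the clock is injectable for testing.

```python
import time


class CircuitOpenError(Exception):
    """Raised instead of calling the backend while the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=5, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold  # consecutive failures before tripping
        self.reset_timeout = reset_timeout          # seconds to stay open before a trial
        self.clock = clock
        self.state = "closed"
        self.failures = 0
        self.opened_at = 0.0

    def call(self, fn, *args, **kwargs):
        if self.state == "open":
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open: failing fast without calling the backend")
            self.state = "half_open"  # timeout elapsed: let a trial request through
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._record_failure()
            raise
        # Any success (including a half-open trial) closes the circuit.
        self.state = "closed"
        self.failures = 0
        return result

    def _record_failure(self):
        self.failures += 1
        # A failed trial, or too many consecutive failures, (re)opens the circuit.
        if self.state == "half_open" or self.failures >= self.failure_threshold:
            self.state = "open"
            self.opened_at = self.clock()
```

While the circuit is open, the gateway can translate `CircuitOpenError` into a fast 503 or a cached fallback response instead of making clients wait on a backend that is known to be down.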

These patterns enhance the overall fault tolerance and resilience of the system, making it more robust against inevitable service failures.
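The caution about non-idempotent operations can be built directly into a retry helper. The sketch below, with hypothetical names, retries only HTTP methods that RFC 9110 defines as idempotent and waits between attempts using exponential backoff with random jitter, so that many gateway instances do not hammer a recovering service in lockstep. TransientError is a stand-in for whatever the gateway classifies as retryable (timeouts, 503 responses, and so on).

```python
import random
import time

# Methods RFC 9110 defines as idempotent; POST and PATCH are deliberately absent.
IDEMPOTENT_METHODS = {"GET", "HEAD", "OPTIONS", "PUT", "DELETE", "TRACE"}


class TransientError(Exception):
    """Stand-in for a retryable failure such as a timeout or a 503."""


def call_with_retries(method, send, max_attempts=3, base_delay=0.1, sleep=time.sleep):
    """Call send(), retrying transient failures only for idempotent methods."""
    attempts = max_attempts if method.upper() in IDEMPOTENT_METHODS else 1
    for attempt in range(1, attempts + 1):
        try:
            return send()
        except TransientError:
            if attempt == attempts:
                raise  # out of attempts: surface the failure to the caller
            # Exponential backoff with "full jitter": wait up to base * 2^(attempt-1).
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
```

A POST that fails is passed straight back to the client rather than risking, for example, a duplicate payment; retrying it safely would require something like an idempotency key agreed with the backend.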

4.9 API Service Sharing and Lifecycle Management

An API Gateway often integrates with or provides features for comprehensive API lifecycle management, from design and publication to deprecation and decommissioning. It also facilitates the sharing and discovery of APIs within and across organizations.

- Developer Portal: Many API Gateway solutions include a developer portal (or can integrate with one) where consumers can discover available APIs, read documentation, subscribe to APIs, and manage their API keys. This self-service capability greatly accelerates API adoption and reduces support overhead.

- Publication and Versioning: The gateway acts as the publication point for APIs, making them discoverable and usable. It helps manage different versions of published APIs, ensuring a smooth transition for consumers.

- Subscription and Approval Workflows: To control access to sensitive or premium APIs, the gateway can enforce subscription mechanisms, where clients must subscribe to an API and potentially await administrator approval before gaining access. This adds an extra layer of governance and security, ensuring that only authorized callers can invoke specific APIs. APIPark explicitly offers an "API Resource Access Requires Approval" feature, which is vital for preventing unauthorized API calls and potential data breaches by enforcing a mandatory subscription and approval process.

- Service Sharing within Teams: In large organizations, different departments or teams may produce APIs that could be beneficial to others. The API Gateway (or its associated developer portal) can serve as a centralized catalog, making it easy for internal teams to find, understand, and reuse existing API services, fostering collaboration and reducing redundant development efforts. APIPark champions this with its "API Service Sharing within Teams" feature, providing a centralized display for all API services, thereby simplifying discovery and consumption across various departments.

- End-to-End API Lifecycle Management: Beyond just publishing, a sophisticated API Gateway helps govern the entire lifecycle of an API. This includes initial design (sometimes with schema validation), publication, monitoring its usage and performance, evolving it through versioning, and eventually deprecating and decommissioning it. This holistic approach ensures APIs remain relevant, secure, and performant throughout their lifespan. APIPark explicitly supports "End-to-End API Lifecycle Management," aiding in regulating API management processes, traffic forwarding, load balancing, and versioning, ensuring comprehensive governance.
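At request time, the subscription-and-approval workflow described above comes down to a simple lookup. The following Python sketch models it with an in-memory registry; the class names, the status values, and the choice of 403 for unapproved callers are assumptions for illustration and do not reflect APIPark's or any other product's actual interface.

```python
from dataclasses import dataclass, field
from enum import Enum


class SubscriptionStatus(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class SubscriptionRegistry:
    # (consumer_id, api_id) -> status; a real developer portal would persist this.
    subscriptions: dict = field(default_factory=dict)

    def subscribe(self, consumer_id: str, api_id: str) -> None:
        # A new subscription starts out pending until an administrator acts on it.
        self.subscriptions[(consumer_id, api_id)] = SubscriptionStatus.PENDING

    def approve(self, consumer_id: str, api_id: str) -> None:
        if (consumer_id, api_id) not in self.subscriptions:
            raise KeyError("no subscription request to approve")
        self.subscriptions[(consumer_id, api_id)] = SubscriptionStatus.APPROVED

    def check_access(self, consumer_id: str, api_id: str) -> int:
        """Return the HTTP status the gateway would answer with."""
        status = self.subscriptions.get((consumer_id, api_id))
        if status is SubscriptionStatus.APPROVED:
            return 200  # forward the request to the backend
        return 403  # not subscribed, still pending, or rejected
```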

4.10 Multi-tenancy and Isolation

For larger organizations or API providers, supporting multiple independent teams, departments, or even external customers (tenants) on a shared API Gateway infrastructure is a crucial requirement.

- Tenant Isolation: An API Gateway can provide strong isolation between tenants, ensuring that each tenant has its own independent applications, data, user configurations, and security policies. This means one tenant's activities or misconfigurations do not impact others.

- Shared Infrastructure: Despite the isolation, the underlying API Gateway infrastructure can be shared, leading to significant cost savings and improved resource utilization compared to deploying a separate gateway for each tenant.

- Independent Access Permissions: Each tenant can define its own access permissions for its APIs, managing who within their team can publish, subscribe, or administer their specific APIs.

- Resource Allocation: The gateway can enforce resource quotas (e.g., rate limits, concurrent connections) per tenant, preventing one tenant from monopolizing shared resources.

APIPark excels in this area with its "Independent API and Access Permissions for Each Tenant" feature. It allows the creation of multiple teams (tenants), each operating with independent applications, data, user configurations, and security policies, all while efficiently sharing the underlying infrastructure to maximize resource utilization and minimize operational expenditures. This robust multi-tenancy support makes it ideal for enterprise-level deployments and API providers.
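Per-tenant resource allocation is often implemented by giving each tenant its own rate limiter. The sketch below assumes a token-bucket algorithm (one common choice among several) and hypothetical tenant IDs and quotas; it keeps state in memory, whereas a clustered gateway would typically share these counters through an external store.

```python
import time


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate_per_sec` tokens per second."""

    def __init__(self, rate_per_sec: float, capacity: int, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class TenantLimiter:
    """One independent bucket per tenant, so a noisy tenant cannot starve the others."""

    def __init__(self, quotas: dict, clock=time.monotonic):
        # quotas: tenant_id -> (requests per second, burst capacity)
        self.buckets = {t: TokenBucket(r, c, clock) for t, (r, c) in quotas.items()}

    def allow(self, tenant_id: str) -> bool:
        bucket = self.buckets.get(tenant_id)
        # Unknown tenants are rejected outright.
        return bucket is not None and bucket.allow()
```

Because the buckets are independent, exhausting team-a's quota has no effect on requests from team-b, which is exactly the isolation property described above.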

To summarize the vast functionalities an API Gateway offers, let's look at a comparison table highlighting its capabilities versus a simple reverse proxy:

| Feature/Functionality | Simple Reverse Proxy | API Gateway (Advanced) |
|---|---|---|
| Primary Focus | Network traffic forwarding, basic load balancing, SSL termination | Comprehensive API management, intelligent routing, security, policy enforcement, transformation, aggregation |
| Layer of Operation | Primarily Layer 4 (Transport), some Layer 7 (Application) for basic HTTP | Primarily Layer 7 (Application) |
| Request/Response Inspection | Limited (e.g., host, path) | Deep inspection of headers, body, query parameters, full API payload |
| Authentication/Authorization | Minimal (e.g., IP whitelisting, client certs) | Centralized, supports API Keys, JWT, OAuth2, granular policies, user/role-based access |
| Rate Limiting/Throttling | Basic (e.g., connection limits) | Advanced, per API/user/IP, various algorithms, sophisticated quotas |
| Caching | Basic HTTP caching | Intelligent caching, cache invalidation, conditional caching |
| API Transformation | None | Extensive payload transformation, protocol translation, request/response aggregation |
| Logging & Monitoring | Basic access logs | Detailed API call logs, metrics generation, integration with observability tools |
| Versioning | Limited (e.g., URL paths) | Advanced version management (path, header, query parameter-based) |
| Resilience Patterns | None | Circuit Breakers, Retries, Fallbacks |
| Developer Portal/Lifecycle | None | Full API lifecycle management, developer portal, subscription, governance |
| Multi-Tenancy | None | Full support for tenant isolation, independent permissions, shared infrastructure |
| Business Logic Awareness | Low | High, understands API contracts and business operations |

This comprehensive overview of the core concepts and functions clearly illustrates that an API Gateway is far more than just a proxy; it is a critical component that enhances security, scalability, performance, and manageability of modern distributed applications.


Chapter 5: Architectural Patterns with API Gateways

The versatility of an API Gateway allows it to be integrated into various architectural patterns, each addressing specific needs and challenges. Its role can adapt depending on the complexity of the system and the types of clients interacting with the backend.

5.1 API Gateway for Microservices

This is the most common and arguably the quintessential use case for an API Gateway. In a microservices architecture, a single large application is decomposed into numerous small, independent services, each responsible for a specific business capability. While this approach offers significant benefits in terms of agility, scalability, and independent deployment, it also introduces complexity for client applications.

- Simplifying Client Interactions: Without an API Gateway, a client application (e.g., a mobile app) might need to make calls to five, ten, or even more different microservices to fetch all the data required for a single screen or feature. Each call would involve a separate network request, potentially to a different IP address or domain, and require the client to aggregate and compose the responses. This leads to increased network latency, significant client-side complexity, and a fragile client that is tightly coupled to the internal service landscape.

- The Gateway as an Aggregator: The API Gateway solves this by acting as an aggregation layer. A single client request to the gateway can trigger multiple internal calls to various microservices. The gateway then collects these responses, potentially transforms them, and composes a single, tailored response that is optimal for the client. This dramatically reduces round trips, simplifies client code, and improves the overall user experience.

- Backend for Frontends (BFF) Pattern: A specialized variation of the API Gateway pattern in microservices is the Backend for Frontends (BFF). In this pattern, instead of having a single generic API Gateway for all clients, you create a separate API Gateway (or a dedicated set of endpoints within a larger gateway) specifically tailored for a particular type of client (e.g., one BFF for web applications, another for mobile apps, and yet another for IoT devices).

  - Client-Specific Optimization: Each BFF can expose an API contract that is optimized for its corresponding frontend, providing exactly the data and format that client needs, without over-fetching or under-fetching. For example, a mobile app might need a compact JSON response, while a web application might require more extensive data for rendering complex UI components.

  - Independent Evolution: The BFF pattern further decouples frontend development from backend service development. Changes in the backend services only require adjustments to the relevant BFF, leaving other BFFs and their associated clients unaffected. This is particularly useful when different frontends have distinct requirements and release cycles.
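The aggregation and BFF ideas can be combined in a short asyncio sketch: one gateway handler fans out to three backend calls concurrently, then shapes the combined result differently for mobile and web clients. The three fetch functions are hypothetical stand-ins that return canned data instead of making real HTTP requests.

```python
import asyncio


# Hypothetical downstream services; a real gateway would make HTTP calls here.
async def fetch_profile(user_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"id": user_id, "name": "Ada", "bio": "Long biography text..."}


async def fetch_orders(user_id: str) -> list:
    await asyncio.sleep(0.01)
    return [{"order_id": 1, "total": 42.0, "items": ["book", "pen"]}]


async def fetch_recommendations(user_id: str) -> list:
    await asyncio.sleep(0.01)
    return ["notebook", "ink", "desk lamp"]


async def home_screen(user_id: str, client: str) -> dict:
    # Fan out to all three services concurrently instead of one after another.
    profile, orders, recs = await asyncio.gather(
        fetch_profile(user_id), fetch_orders(user_id), fetch_recommendations(user_id)
    )
    if client == "mobile":
        # Mobile BFF: a compact payload with only what the small screen renders.
        return {
            "name": profile["name"],
            "order_count": len(orders),
            "top_pick": recs[0] if recs else None,
        }
    # Web BFF: a richer payload for a larger UI.
    return {"profile": profile, "orders": orders, "recommendations": recs}
```

The client makes a single round trip, for example asyncio.run(home_screen("u1", "mobile")), and the total latency is roughly that of the slowest backend call rather than the sum of all three.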

5.2 API Gateway for Legacy Systems

While often associated with modern microservices, API Gateways are also incredibly valuable when dealing with legacy systems. Many older applications expose their functionalities through outdated or complex protocols like SOAP, CORBA, or proprietary RPC mechanisms. Modern applications and clients, however, typically expect RESTful APIs.

- Modernizing Access: An API Gateway can act as a modernization layer for legacy systems. It can expose a clean, modern RESTful API to external clients, while internally translating these requests into the legacy protocols and data formats expected by the older systems.

- Encapsulating Complexity: This approach encapsulates the complexity of the legacy system behind the gateway, shielding modern clients from its intricacies. It allows organizations to leverage existing, well-proven business logic within their legacy systems without incurring the massive cost and risk of a full rewrite.

- Phased Migration: API Gateways facilitate a phased migration strategy. Organizations can gradually replace parts of their legacy system with new microservices, and the gateway can seamlessly route requests to either the old or new components, presenting a unified API to clients throughout the transition.
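As an illustration of protocol translation, the following Python sketch turns a RESTful lookup such as GET /customers/42 into a SOAP 1.1 request envelope and maps the SOAP response back into JSON-friendly fields. It uses only the standard library's xml.etree.ElementTree; the legacy namespace, the GetCustomer operation, and the response element names are hypothetical, since a real service's WSDL would define them.

```python
import xml.etree.ElementTree as ET

SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"
# Hypothetical namespace for a legacy customer service.
LEGACY_NS = "urn:example:legacy-customers"


def rest_to_soap(customer_id: str) -> bytes:
    """Translate GET /customers/{id} into a SOAP 1.1 GetCustomer request body."""
    ET.register_namespace("soap", SOAP_ENV)
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    op = ET.SubElement(body, f"{{{LEGACY_NS}}}GetCustomer")
    ET.SubElement(op, f"{{{LEGACY_NS}}}CustomerId").text = customer_id
    return ET.tostring(envelope, encoding="utf-8", xml_declaration=True)


def soap_to_json(xml_bytes: bytes) -> dict:
    """Extract the fields a REST client expects from a SOAP response."""
    root = ET.fromstring(xml_bytes)
    customer = root.find(f".//{{{LEGACY_NS}}}Customer")
    if customer is None:
        raise ValueError("unexpected SOAP response")
    return {
        "id": customer.findtext(f"{{{LEGACY_NS}}}Id"),
        "name": customer.findtext(f"{{{LEGACY_NS}}}Name"),
    }
```

The gateway would POST the bytes from rest_to_soap to the legacy endpoint, then return soap_to_json's result to the client as an ordinary JSON body, so the client never sees XML.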

5.3 API Gateway in Hybrid and Multi-Cloud Environments

As enterprises increasingly adopt hybrid cloud strategies (combining on-premise infrastructure with public cloud services) or multi-cloud approaches (using multiple public cloud providers), managing API access across these disparate environments becomes a significant challenge.

- Consistent API Access: An API Gateway can provide a consistent and unified API endpoint that spans across different clouds and on-premise data centers. Clients interact with a single gateway endpoint, regardless of where the actual backend services are physically located. The gateway handles the complex routing and secure communication across network boundaries.

- Centralized Policy Enforcement: Security policies, rate limits, and monitoring can be consistently applied across all services, regardless of their deployment location. This simplifies governance and ensures compliance in distributed environments.

- Traffic Management: The gateway can intelligently route traffic to services deployed in different clouds, potentially based on latency, cost, or regulatory requirements. This enables efficient disaster recovery strategies and optimizes resource utilization across heterogeneous infrastructure.

- Network Abstraction: It abstracts away the underlying network topology, making it easier to manage and scale services that are distributed across different geographical regions or cloud providers.

By acting as a universal access layer, the API Gateway simplifies the management and consumption of services in highly distributed and complex cloud environments, making it a cornerstone for modern enterprise architectures.
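A simplified version of the traffic-management decision described above is sketched below: discard unhealthy backends and any deployed outside the regions a request may reach (for example, because of data-residency rules), then choose the one with the lowest recently measured latency. The Backend fields, region names, and selection policy are assumptions; production gateways typically also weigh cost, capacity, and configured priorities.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Backend:
    name: str
    region: str        # e.g. "eu-west" or "on-prem-eu" (hypothetical labels)
    healthy: bool      # result of the gateway's health checks
    latency_ms: float  # recent latency measured from the gateway


def pick_backend(backends: list, allowed_regions: Optional[set] = None) -> Backend:
    """Choose the lowest-latency healthy backend within the allowed regions."""
    candidates = [
        b for b in backends
        if b.healthy and (allowed_regions is None or b.region in allowed_regions)
    ]
    if not candidates:
        raise RuntimeError("no compliant healthy backend available")
    return min(candidates, key=lambda b: b.latency_ms)
```

If the on-premise instance fails its health checks, traffic for EU-restricted requests shifts to the EU cloud deployment automatically, which is the kind of failover the disaster-recovery point above refers to.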

Chapter 6: Key Considerations When Choosing and Implementing an API Gateway

Selecting and implementing an API Gateway is a critical decision that can profoundly impact the performance, security, and maintainability of your application landscape. It's not a one-size-fits-all solution, and various factors must be carefully evaluated to ensure the chosen gateway aligns with your specific organizational needs and technical requirements.

6.1 Performance and Scalability

The API Gateway sits in the critical path of all client requests. As such, its own performance and scalability are paramount. A bottleneck at the gateway level can cripple your entire system, regardless of how performant your backend services are.

- Low Latency: The gateway should introduce minimal latency to each request. Every millisecond added by the gateway accumulates, potentially impacting user experience. Efficient request processing, optimized network stack, and asynchronous operations are key.

- High Throughput: It must be capable of handling a very high volume of requests per second without degradation in performance. This is particularly crucial for public APIs or high-traffic applications. Some API Gateway solutions, like APIPark, are specifically engineered for high performance; APIPark reports achieving over 20,000 TPS (transactions per second) on modest hardware (e.g., an 8-core CPU and 8GB of memory), which it presents as rivaling traditional high-performance proxies like Nginx.

- Horizontal Scalability: The API Gateway itself must be horizontally scalable, meaning you can easily add more instances of the gateway to handle increased load. It should support cluster deployment to distribute traffic and provide high availability.

- Resource Footprint: Consider the CPU and memory footprint of the gateway. An efficient gateway will minimize resource consumption, reducing operational costs.

6.2 Security Features

Given its position as the first line of defense, the security capabilities of an API Gateway are non-negotiable.

- Comprehensive Authentication/Authorization: As discussed in Chapter 4, robust support for various authentication schemes (API Keys, JWT, OAuth 2.0, mTLS) and granular authorization policies is essential.

- Threat Protection: The gateway should offer features like Web Application Firewall (WAF) capabilities to detect and block common web attacks (e.g., SQL injection, XSS), DDoS protection, and schema validation to prevent malformed requests.

- SSL/TLS Management: Secure communication is fundamental. The gateway should handle SSL/TLS termination, certificate management, and ensure strong encryption protocols.

- Auditing and Compliance: The ability to log security-relevant events and integrate with security information and event management (SIEM) systems is vital for auditing and meeting compliance requirements.
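Schema validation at the gateway can be as simple as rejecting bodies that are oversized, are not valid JSON, or do not match the expected fields. The sketch below hand-rolls a tiny type check for a hypothetical POST /orders contract; in practice a gateway would more likely validate against an OpenAPI or JSON Schema definition, and this kind of check does not replace WAF rules for attacks such as SQL injection.

```python
import json

# Hypothetical contract for POST /orders: field name -> required Python type.
ORDER_SCHEMA = {"customer_id": str, "quantity": int, "sku": str}
MAX_BODY_BYTES = 16 * 1024


def validate_request(raw_body: bytes, schema=ORDER_SCHEMA) -> list[str]:
    """Return a list of problems; an empty list means the request may pass."""
    if len(raw_body) > MAX_BODY_BYTES:
        return ["body too large"]
    try:
        payload = json.loads(raw_body)
    except (json.JSONDecodeError, UnicodeDecodeError):
        return ["body is not valid JSON"]
    if not isinstance(payload, dict):
        return ["body must be a JSON object"]
    errors = []
    for name, expected in schema.items():
        if name not in payload:
            errors.append(f"missing field: {name}")
        elif type(payload[name]) is not expected:  # exact match, so true is not an int
            errors.append(f"{name} must be {expected.__name__}")
    # Reject fields the contract does not mention (e.g. an injected "admin" flag).
    for name in sorted(payload.keys() - schema.keys()):
        errors.append(f"unexpected field: {name}")
    return errors
```

A gateway using this check would answer with 400 Bad Request whenever the list is non-empty, so malformed requests never reach the backend.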

6.3 Ease of Configuration and Management

A powerful API Gateway that is difficult to configure or manage will negate many of its benefits.

- User Interface (UI): A well-designed, intuitive UI can significantly simplify the process of defining routes, policies, and managing APIs, especially for non-technical users or small teams.

- Declarative Configuration: For larger, more complex deployments and DevOps environments, the gateway should support declarative configuration (e.g., YAML, JSON files). This allows configurations to be version-controlled, tested, and deployed as code through CI/CD pipelines.

- Command Line Interface (CLI): A powerful CLI can automate common tasks and enable scripting for advanced use cases.

- API for Management: The gateway itself should expose an API for programmatic management, allowing integration with other internal tools and automation systems.

- Integration with Existing Systems: Consider how easily the gateway integrates with your existing identity providers, monitoring tools, and CI/CD pipelines.
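To show what declarative configuration looks like in practice, the sketch below keeps routes in a JSON document, loads them into typed records, rejects duplicates, and matches incoming paths by longest prefix. The field names are hypothetical and do not follow any particular product's schema. Because the configuration is plain text, it can be reviewed, versioned, and deployed through CI/CD like any other code.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Hypothetical declarative route file, normally kept in version control.
CONFIG = """
{
  "routes": [
    {"path_prefix": "/v1/users", "upstream": "http://users:8080", "rate_limit_per_min": 600},
    {"path_prefix": "/v1/orders", "upstream": "http://orders:8080", "rate_limit_per_min": 120}
  ]
}
"""


@dataclass(frozen=True)
class Route:
    path_prefix: str
    upstream: str
    rate_limit_per_min: int


def load_routes(text: str) -> list[Route]:
    # Route(**r) raises TypeError on unknown or missing keys, catching typos early.
    routes = [Route(**r) for r in json.loads(text)["routes"]]
    prefixes = [r.path_prefix for r in routes]
    if len(prefixes) != len(set(prefixes)):
        raise ValueError("duplicate path_prefix in config")
    # Longest prefix first so the most specific route wins during matching.
    return sorted(routes, key=lambda r: len(r.path_prefix), reverse=True)


def match(routes: list[Route], path: str) -> Optional[Route]:
    # Plain string-prefix matching; a real router would match whole path segments.
    return next((r for r in routes if path.startswith(r.path_prefix)), None)
```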

6.4 Observability and Analytics

Understanding how your APIs are being used and how your system is performing is crucial for operations and business insights.

- Rich Logging and Metrics: The gateway should provide detailed, configurable logging and a comprehensive set of metrics (latency, error rates, throughput) that can be easily exported to your existing monitoring and logging infrastructure (e.g., Prometheus, ELK stack, Splunk).

- Distributed Tracing Support: Integration with distributed tracing systems (e.g., Jaeger, Zipkin, OpenTelemetry) allows you to trace a single request across multiple services, which is invaluable for debugging and performance profiling in microservices architectures.

- Analytics Capabilities: Beyond raw data, some gateways offer built-in analytics dashboards or integrations that can help visualize trends, identify bottlenecks, and provide business insights into API consumption. As noted earlier, APIPark's "Powerful Data Analysis" feature analyzes historical call data to display long-term trends and performance changes, which can be invaluable for predictive maintenance.
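The following sketch shows two of these ideas at the gateway: forwarding (or starting) a W3C Trace Context traceparent header so backends can join the same trace, and recording per-route request counts, 5xx errors, and latency. The in-memory counters and the observe helper are hypothetical; a real deployment would export such metrics to a system like Prometheus and would typically use an OpenTelemetry SDK rather than building the header by hand.

```python
import secrets
import time
from collections import defaultdict

# Per-route counters kept in memory for illustration only.
request_count = defaultdict(int)
error_count = defaultdict(int)
latencies_ms = defaultdict(list)


def ensure_traceparent(headers: dict) -> dict:
    """Continue the caller's trace, or start a new one if the header is absent.

    traceparent format: version-traceid-parentid-flags, e.g. 00-<32 hex>-<16 hex>-01.
    Header keys are assumed to be lowercase already.
    """
    out = dict(headers)
    parts = headers.get("traceparent", "").split("-")
    if len(parts) == 4 and len(parts[1]) == 32 and len(parts[2]) == 16:
        version, trace_id, _parent_id, flags = parts
    else:
        # Missing or malformed header: start a new, sampled trace.
        version, trace_id, flags = "00", secrets.token_hex(16), "01"
    # The gateway's own span ID becomes the parent the backend sees.
    out["traceparent"] = f"{version}-{trace_id}-{secrets.token_hex(8)}-{flags}"
    return out


def observe(route: str, handler, headers: dict) -> int:
    """Forward a request via handler() while recording basic metrics."""
    start = time.perf_counter()
    request_count[route] += 1
    try:
        status = handler(ensure_traceparent(headers))
    except Exception:
        status = 502  # treat an unhandled upstream failure as Bad Gateway
    if status >= 500:
        error_count[route] += 1
    latencies_ms[route].append((time.perf_counter() - start) * 1000)
    return status
```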

6.5 Extensibility

As your needs evolve, you may require custom logic or integrations that are not part of the out-of-the-box gateway features.

- Plugin Architecture: A flexible plugin architecture allows you to extend the gateway's functionality with custom code or pre-built plugins (e.g., for custom authentication, data transformation, or integration with specific third-party services).

- Scripting Capabilities: The ability to embed custom scripts (e.g., Lua, JavaScript) for complex routing or transformation logic can be very powerful.
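A plugin architecture usually means the gateway passes each request through an ordered chain of small handlers, any of which can modify the request or reject it. The sketch below models that idea in Python; the plugin signature, the x-api-key header, and the helper names are hypothetical and differ from the actual plugin interfaces of gateways such as Kong or Apache APISIX.

```python
from typing import Callable, Optional

Request = dict
# A plugin may modify the request; returning a status code short-circuits the chain.
Plugin = Callable[[Request], Optional[int]]


def require_api_key(valid_keys: set) -> Plugin:
    def plugin(req: Request) -> Optional[int]:
        return None if req["headers"].get("x-api-key") in valid_keys else 401
    return plugin


def add_header(name: str, value: str) -> Plugin:
    def plugin(req: Request) -> Optional[int]:
        req["headers"][name] = value  # e.g. tag requests for the backend
        return None
    return plugin


def run_pipeline(plugins: list, req: Request, upstream: Callable[[Request], int]) -> int:
    for plugin in plugins:
        status = plugin(req)
        if status is not None:
            return status  # a plugin rejected the request; the backend is never called
    return upstream(req)
```

Adding a capability, such as a custom authentication scheme, then means writing one more function and inserting it into the list, without touching the gateway's core routing code.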

6.6 Cost and Licensing

The financial implications of adopting an API Gateway solution can vary widely.

- Open-Source vs. Commercial: Open-source API Gateways (like Kong, Apache APISIX, or APIPark) offer a cost-effective starting point, allowing you to deploy and use the core functionalities without licensing fees. However, they may require more internal expertise for setup, maintenance, and advanced features. Commercial API Gateways (from vendors like Google Apigee, AWS API Gateway, Azure API Management, or enterprise versions of open-source solutions) often come with enterprise-grade features, professional support, and managed services, but at a significant cost.

- Deployment Model: Consider whether you prefer a self-hosted gateway (on-premise or on your own cloud instances) or a fully managed cloud-native service. Managed services abstract away operational complexities but come with recurring costs.

- Pricing Structure: Understand the pricing model – is it per request, per deployed instance, per developer, or a fixed subscription? For large-scale deployments, these costs can add up quickly.

APIPark offers a compelling proposition here. As an open-source AI gateway and API management platform under the Apache 2.0 license, it caters to basic API resource needs for startups, offering a cost-effective entry. For leading enterprises requiring more advanced features and dedicated professional technical support, APIPark also provides a commercial version, illustrating a flexible model for different organizational scales and requirements.

6.7 Vendor Lock-in

Choosing an API Gateway can lead to a degree of vendor lock-in, especially with proprietary cloud-managed services.

- Portability: Evaluate how easy it would be to migrate your API configurations and policies if you decide to switch gateway providers in the future.

- Standardization: Favor gateways that adhere to open standards where possible, as this generally reduces lock-in.

By carefully weighing these considerations against your organization's specific context, budget, and strategic goals, you can make an informed decision and select an API Gateway solution that effectively serves as the intelligent backbone for your modern application architecture.

Chapter 7: Real-World Examples and Use Cases

The theoretical understanding of an API Gateway gains much more resonance when viewed through the lens of real-world applications and how major players in the digital economy leverage them. From streaming giants to e-commerce behemoths, API Gateways are the unsung heroes facilitating seamless digital experiences and driving the global API economy.

Netflix: Pioneering Microservices and API Gateways

Perhaps one of the most famous examples of an API Gateway in action is at Netflix. When Netflix transitioned from a monolithic application to a highly distributed microservices architecture, they faced the very challenges we discussed earlier: managing thousands of service endpoints, ensuring security, and dealing with client-specific data aggregation. Their solution was to develop their own API Gateway, Zuul (later open-sourced), which handles all incoming requests from millions of diverse clients (web browsers, smart TVs, mobile phones, gaming consoles).

- Client Abstraction: Zuul provides a single, unified API endpoint for all clients, abstracting away the hundreds of microservices that power Netflix's vast content catalog, recommendation engine, user profiles, and billing systems.

- Request Routing: It intelligently routes requests to the correct backend services, often dynamically based on real-time service discovery and load.

- Security and Resilience: The gateway enforces security policies, handles authentication, and implements robust resilience patterns like circuit breakers to protect against service failures, ensuring that even if one microservice encounters issues, the entire Netflix experience doesn't collapse.

- Client-Specific API Aggregation: Netflix heavily utilizes the concept of "Backend for Frontends" (BFF), where the gateway or specific proxy layers within it tailor API responses to the unique needs of different device types, optimizing data payloads and reducing client-side processing.

Amazon: The Power of the API Economy

Amazon, both as an e-commerce platform and as a cloud provider (AWS), is a prime example of the API economy in action. Amazon's internal architecture relies heavily on APIs, where virtually every service communicates via well-defined APIs. With AWS, they have democratized access to these powerful building blocks, offering services like AWS API Gateway.

- Monetization and Management: AWS API Gateway allows developers to create, publish, maintain, monitor, and secure APIs at any scale. It's a fully managed service, meaning developers don't have to worry about provisioning or scaling servers for their gateway.

- Integration with Serverless: It tightly integrates with serverless computing (AWS Lambda), allowing developers to build powerful, scalable APIs without managing any servers for their backend logic.

- Developer Ecosystem: By providing a robust API Gateway service, Amazon empowers countless businesses and developers to build their own API-driven applications, fostering innovation and creating new business models that rely on programmatic access to data and functionality.

Financial Services and Open Banking: The financial sector is rapidly transforming through "Open Banking" initiatives, driven by regulations that mandate banks to open up their customer data and services (with customer consent) to third-party providers via APIs.

- Secure Data Exchange: API Gateways are fundamental in this context. They provide the secure, standardized channels through which banks can expose their APIs to FinTech companies, ensuring that sensitive financial data is exchanged securely, with proper authentication, authorization, and audit trails.

- Regulatory Compliance: The gateway helps enforce regulatory compliance by ensuring that only authorized and compliant applications can access specific financial APIs, and all interactions are logged for auditing purposes.

- Innovation: This fosters a new wave of financial innovation, allowing developers to build novel financial products and services by integrating with banking APIs, such as personalized budgeting tools, faster payment systems, and aggregated financial dashboards.

IoT (Internet of Things) Platforms: In the world of IoT, billions of devices generate vast amounts of data and require remote control. API Gateways are crucial for managing this scale and diversity.

- Device Connectivity: An API Gateway can provide a scalable and secure ingestion point for data coming from countless IoT devices, often translating various device protocols into a unified API format.

- Command and Control: It enables secure command and control of devices, ensuring that only authorized users or applications can send commands to specific devices.

- Edge Computing Integration: API Gateways can be deployed at the edge (closer to the devices) to perform initial processing, filtering, and aggregation of data before forwarding it to central cloud services, reducing latency and bandwidth usage.

These examples underscore that API Gateways are not merely theoretical constructs but vital, high-performance components powering the digital infrastructure of today's most demanding applications and the broader API economy. They are the essential facilitators for connecting disparate services, enabling secure data exchange, and fostering innovation across industries.

Chapter 8: Looking Ahead - The Future of API Gateways

The landscape of software architecture is in a constant state of evolution, and API Gateways, as a central piece of this puzzle, are evolving alongside it. As systems become even more distributed, dynamic, and intelligent, the capabilities and integration patterns of API Gateways are expanding significantly. The future promises gateways that are not only more efficient and secure but also more intelligent and deeply integrated into the entire application ecosystem.

8.1 Integration with Service Meshes

One of the most significant trends impacting API Gateways is the rise of Service Meshes. A service mesh (like Istio, Linkerd, or Consul Connect) is a dedicated infrastructure layer that handles service-to-service communication within a microservices application. While an API Gateway manages north-south traffic (external client to internal services), a service mesh primarily governs east-west traffic (internal service to internal service).

The future sees a strong convergence and integration between these two components:

- Complementary Roles: The API Gateway will continue to be the primary entry point for external traffic, focusing on client-facing concerns like API key validation, external rate limiting, and public API exposure. The service mesh will take over internal routing, retries, circuit breaking, and traffic management between microservices.

- Unified Control Plane: We are moving towards unified control planes that can manage both the API Gateway and the service mesh. This allows for consistent policy enforcement and observability across the entire communication path, from the edge to the deepest internal service.

- Reduced Overlap, Increased Efficiency: By delegating internal resilience and traffic management to the service mesh, API Gateways can become leaner and more focused on their core edge responsibilities, leading to more efficient resource utilization and clearer separation of concerns.

8.2 AI-Powered Gateway Features

The rapid advancements in Artificial Intelligence and Machine Learning are beginning to permeate every layer of the software stack, and API Gateways are no exception. AI will enhance gateways in several key areas:

- Intelligent Routing and Traffic Management: AI algorithms can analyze real-time traffic patterns, backend service health, and historical performance data to make more intelligent routing decisions, optimizing for latency, cost, or resource utilization. This could include predictive scaling or dynamic load balancing based on anticipated load.

- Anomaly Detection and Security: AI can continuously monitor API traffic for unusual patterns that might indicate security threats (e.g., bot attacks, unauthorized access attempts, data exfiltration). Machine learning models can identify zero-day attacks or sophisticated fraud attempts that traditional rule-based security systems might miss.

- Proactive Issue Prediction: By analyzing vast amounts of log and metric data, AI can predict potential performance bottlenecks or service failures before they occur, allowing operators to take proactive measures.

- Automated API Management: AI could assist in automating aspects of API lifecycle management, such as generating API documentation, suggesting optimal rate limits, or even identifying opportunities for API consolidation or deprecation based on usage patterns.

- Unified AI Gateway Capabilities: Specialized AI Gateways, like APIPark, are already emerging as crucial components. These gateways are designed not just to manage traditional REST APIs, but specifically to streamline the integration and management of diverse AI models. Features like "Quick Integration of 100+ AI Models" and "Unified API Format for AI Invocation" enable developers to consume a wide range of AI services through a single, consistent API endpoint, abstracting away the complexities of different AI model providers and their specific invocation protocols. This dramatically lowers the barrier to entry for incorporating AI into applications.

8.3 Serverless Gateway Functions

The rise of serverless computing (e.g., AWS Lambda, Azure Functions, Google Cloud Functions) is also influencing API Gateway deployments.

- Event-Driven Architectures: API Gateways are increasingly integrating seamlessly with serverless functions, acting as the front door for event-driven architectures. A request to the gateway can directly trigger a serverless function, allowing developers to build highly scalable and cost-effective APIs without managing any servers.

- Gateway as a Function: In some contexts, the API Gateway itself might be implemented using serverless functions for specific, lightweight routing or transformation logic, leading to even greater operational simplicity and scalability for certain use cases.

- Reduced Operational Overhead: For many organizations, the desire to minimize operational overhead will push towards fully managed API Gateway services provided by cloud vendors or serverless-first gateway solutions.
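For example, with AWS API Gateway's Lambda proxy integration for REST APIs, the gateway passes the HTTP request to the function as an event dictionary and expects a dictionary containing statusCode, headers, and a string body in return. The minimal Python handler below assumes that payload format; HTTP APIs using payload format version 2.0 name some fields differently (for instance, rawPath instead of path).

```python
import json


def lambda_handler(event, context):
    """Handle a request forwarded by API Gateway's Lambda proxy integration."""
    # queryStringParameters is null (None) when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        # The body must be a string, so the JSON payload is serialized here.
        "body": json.dumps({"message": f"hello, {name}", "path": event.get("path")}),
    }
```

Here the gateway handles TLS, authentication, and throttling, while the function contains only business logic; no servers need to be provisioned for either layer.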

8.4 GraphQL Gateways and Federation

While REST remains dominant, GraphQL is gaining traction for its efficiency in data fetching. The future API Gateway landscape will increasingly accommodate and specialize in GraphQL:

- GraphQL Gateway: Instead of exposing multiple REST endpoints, a GraphQL gateway exposes a single GraphQL schema that aggregates data from various backend services (REST, gRPC, databases).

- GraphQL Federation: More advanced patterns, like GraphQL Federation, allow for composing a single GraphQL schema from multiple independent GraphQL services, managed and exposed through a smart gateway. This allows for highly flexible and efficient data access for clients while maintaining the autonomy of backend services.

In conclusion, the API Gateway is not a static technology but a dynamic and evolving component at the forefront of distributed systems architecture. Its future is characterized by deeper integration with other infrastructure layers like service meshes, enhanced intelligence powered by AI, tighter coupling with serverless paradigms, and expanded support for emerging API styles. These advancements will make API Gateways even more indispensable for building the next generation of scalable, secure, and intelligent applications.

Conclusion

The journey through the core concepts of an API Gateway reveals its pivotal role in the modern software landscape. From understanding the fundamental principles of an API as the building blocks of communication to recognizing the inherent complexities of direct service interactions, we have seen how the API Gateway emerged as an indispensable solution. It stands as the vigilant digital doorman, orchestrating traffic, enforcing security, and streamlining operations for distributed systems.

We delved into the multifaceted functionalities that empower an API Gateway: intelligent request routing and load balancing to optimize resource utilization, centralized authentication and authorization to bolster security, robust rate limiting to ensure stability, strategic caching for enhanced performance, and powerful API transformation capabilities—including protocol translation—to decouple clients from backend complexities, as exemplified by platforms like APIPark with its unified AI API formats. We also explored its critical roles in logging, monitoring, versioning, resilience patterns, comprehensive API lifecycle management, team collaboration, and robust multi-tenancy.

Beyond its core functions, we examined how API Gateways are integrated into various architectural patterns, from simplifying microservices interactions and enabling the "Backend for Frontends" approach to modernizing access to legacy systems and providing consistent API exposure in complex hybrid and multi-cloud environments. We also discussed crucial considerations for selecting and implementing an API Gateway, emphasizing the importance of performance, scalability, security, ease of management, observability, extensibility, cost, and the potential for vendor lock-in.

Finally, we looked towards the horizon, anticipating a future where API Gateways integrate more deeply with service meshes, become imbued with AI-powered intelligence for smarter operations and enhanced security, adapt to serverless paradigms, and evolve to support advanced API patterns like GraphQL federation. The continuous innovation in this space underscores its enduring relevance.

In essence, the API Gateway is far more than a simple proxy; it is a strategic control point, a critical layer of abstraction, and a powerful engine for governance in the API economy. For beginners stepping into the world of distributed systems, grasping these main concepts is not just theoretical knowledge but a practical necessity. By leveraging an API Gateway effectively, developers and organizations can build applications that are not only more resilient, secure, and performant but also inherently more manageable and adaptable to the ever-changing demands of the digital world. It truly serves as the foundational backbone for robust digital transformation, enabling seamless connectivity and unlocking new possibilities for innovation.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between an API Gateway and a Load Balancer or Reverse Proxy? An API Gateway often includes the functions of a reverse proxy and a load balancer, but its scope is broader: it understands the APIs it fronts, not just the traffic passing through it. A reverse proxy primarily forwards requests based on simple rules such as host or path, and a load balancer distributes traffic across server instances (at the transport layer, Layer 4, or the application layer, Layer 7). An API Gateway adds API-specific logic on top: authentication, authorization, rate limiting, request/response transformation, aggregation of multiple backend service calls, caching, and version management. It therefore serves as a central management layer for all external API interactions rather than a mechanism for basic traffic routing.
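The distinction can be sketched in a few lines. In the example below, `pick_upstream` is essentially all a basic reverse proxy does (choose a backend by path prefix), while `gateway` first enforces an API-level policy, here an API-key check, before making the same routing decision. The route table, hostnames, `x-api-key` header name, and key store are hypothetical, and the sketch stops short of actually forwarding the request.

```python
from typing import Dict, Optional, Tuple

Request = Dict[str, str]
Response = Tuple[int, str]

# Hypothetical route table: public path prefix -> internal upstream.
ROUTES: Dict[str, str] = {
    "/orders": "http://orders.internal:8080",
    "/users": "http://users.internal:8080",
}

# Hypothetical key store; a real gateway would query a database or identity service.
VALID_API_KEYS = {"demo-key"}


def pick_upstream(path: str) -> Optional[str]:
    """Reverse-proxy behaviour: choose an upstream by the longest matching path prefix."""
    matches = [p for p in ROUTES if path == p or path.startswith(p + "/")]
    return ROUTES[max(matches, key=len)] if matches else None


def gateway(request: Request) -> Response:
    """Gateway behaviour: enforce API policy first, then route like a proxy."""
    if request.get("x-api-key") not in VALID_API_KEYS:
        return 401, "missing or invalid API key"
    upstream = pick_upstream(request["path"])
    if upstream is None:
        return 404, "no route for this path"
    # Forwarding is omitted; report the routing decision instead.
    return 200, f"forward to {upstream}{request['path']}"


print(gateway({"path": "/orders/42", "x-api-key": "demo-key"}))
print(gateway({"path": "/orders/42"}))
```

Real gateways stack more policies (rate limits, transformations, caching) into the same pre-routing stage, which is exactly the layer a plain reverse proxy lacks.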

2. Why is an API Gateway particularly important in a microservices architecture? In a microservices architecture, an application is composed of many small, independent services. Without an API Gateway, client applications would need to know and manage the endpoints for dozens or hundreds of individual services, leading to increased complexity, security vulnerabilities, and duplicated logic (e.g., authentication, rate limiting) across clients and services. The API Gateway simplifies this by providing a single, unified entry point for all client requests, abstracting the internal service landscape, centralizing cross-cutting concerns, and enabling client-specific API aggregation (like the Backend for Frontends pattern).

3. What are the key security benefits of using an API Gateway? An API Gateway significantly enhances security by centralizing critical security functions at the edge of your network. It can enforce comprehensive authentication mechanisms (API Keys, JWT, OAuth 2.0) and fine-grained authorization policies (based on user roles or permissions) before requests even reach your backend services. Additionally, it can provide threat protection (e.g., Web Application Firewall features, DDoS protection), handle SSL/TLS termination, and generate detailed audit logs, effectively creating a strong perimeter defense and ensuring consistent security across your entire API landscape.
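As an illustration of authentication at the edge, the sketch below verifies an HS256-signed JSON Web Token using only the Python standard library: it rejects unexpected algorithms, checks the HMAC-SHA256 signature with a constant-time comparison, and enforces the `exp` claim. `sign_hs256` exists only to produce test tokens. The function names and the shared-secret setup are assumptions for illustration; real gateways typically rely on a vetted JWT library and often on asymmetric keys (for example RS256 or ES256) published by an identity provider.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Any, Dict, Optional


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are unpadded base64url; restore the padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: Dict[str, Any], secret: bytes) -> str:
    """Create an HS256 token; used here only to produce test input."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    signature = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(signature)}"


def verify_hs256(token: str, secret: bytes, now: Optional[float] = None) -> Optional[Dict[str, Any]]:
    """Return the token's claims if it is authentic and unexpired, otherwise None."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        # Accept only the algorithm we expect; never trust "alg": "none".
        if not isinstance(header, dict) or header.get("alg") != "HS256":
            return None
        expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
        # Constant-time comparison avoids leaking signature bytes through timing.
        if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
            return None
        claims = json.loads(_b64url_decode(payload_b64))
    except ValueError:  # wrong segment count, bad base64, bad UTF-8, or bad JSON
        return None
    if not isinstance(claims, dict):
        return None
    exp = claims.get("exp")
    current = time.time() if now is None else now
    if exp is not None and (not isinstance(exp, (int, float)) or current >= exp):
        return None  # malformed or expired
    return claims
```

A gateway would run a check like this once per request and pass only the verified claims (for example a user ID or roles) to backend services, which then no longer need to validate tokens themselves.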

4. How does an API Gateway improve performance and scalability? An API Gateway contributes to performance and scalability through several mechanisms:

- Request Aggregation: It can combine multiple internal service calls into a single client request, reducing network round trips and latency.

- Caching: By caching frequently requested responses, it reduces the load on backend services and speeds up response times for clients.

- Rate Limiting: It protects backend services from being overwhelmed by traffic, preserving their stability and availability.

- Load Balancing: It distributes incoming traffic across multiple service instances, optimizing resource utilization.

- Circuit Breakers and Retries: They improve resilience by preventing cascading failures and handling transient errors gracefully, helping keep the service available.
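Rate limiting is one of the easiest of these mechanisms to sketch. Below is a minimal token-bucket limiter, one common algorithm for the job (fixed and sliding windows are others). Each client gets a bucket holding up to `capacity` tokens that refills at `rate` tokens per second; each request spends one token, and an empty bucket means the gateway should reject the request, typically with HTTP 429 Too Many Requests. The class, the per-client dictionary, and the limit values are illustrative assumptions, and the sketch is single-process and not thread-safe.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows bursts of up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock          # injectable so tests can control time
        self.tokens = capacity      # start full so an initial burst is allowed
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per client (e.g. per API key); the limits are illustrative.
_buckets: Dict[str, TokenBucket] = {}


def allow_request(client_id: str) -> bool:
    """False means the gateway should reject the call, typically with HTTP 429."""
    if client_id not in _buckets:
        _buckets[client_id] = TokenBucket(capacity=5, rate=1.0)
    return _buckets[client_id].allow()
```

The `capacity` sets how large a burst a client may send at once, while `rate` sets the sustained throughput; tuning them separately is the main reason token buckets are popular for APIs. A gateway running several instances would keep the counters in shared storage rather than in a local dictionary.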

5. What is the difference between an API Gateway and a Service Mesh, and how do they interact? An API Gateway and a Service Mesh both manage network traffic, but they operate at different architectural layers and for different purposes:

- API Gateway: Focuses on "north-south" traffic (external client to internal services). It handles client-facing concerns like public API exposure, authentication, rate limiting, and request transformation.

- Service Mesh: Focuses on "east-west" traffic (internal service-to-service communication). It handles internal routing, retries, circuit breaking, observability, and traffic management between microservices.

In modern architectures, the two are often used together in a complementary fashion. The API Gateway acts as the entry point for external traffic; once a request passes through the gateway, it enters the service mesh for internal routing and management, giving comprehensive traffic control from the edge down to the deepest internal services.
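Circuit breaking, which appears on both sides of this boundary, can be sketched in a few lines. The minimal breaker below counts consecutive failures of an upstream call; after `max_failures` it "opens" and fails fast for `reset_timeout` seconds, then lets a trial call through and closes again if that call succeeds (or re-opens if it fails). The class names and thresholds are illustrative, and the sketch is single-threaded: implementations in real gateways and sidecar proxies add concurrency control, limits on half-open trial calls, and per-route configuration.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised instead of calling the upstream while the circuit is open."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0                       # consecutive failures seen
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_timeout:
            # Fail fast instead of piling more load onto a struggling upstream.
            raise CircuitOpenError("upstream unavailable, failing fast")
        # Closed, or open with the timeout elapsed (half-open): try the upstream.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result
```

Failing fast while the circuit is open gives the struggling service time to recover and lets the gateway return an immediate error (or a cached fallback) instead of holding client connections open until they time out.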
