Best Open Source Webhook Management Tools & Practices

In the rapidly evolving landscape of modern software development, the ability of disparate systems to communicate and react to events in real-time has become not just a luxury, but a fundamental requirement. From notifying users about new orders to triggering continuous integration pipelines, webhooks stand as a cornerstone of this event-driven paradigm. They represent a lightweight, HTTP-based mechanism for services to push information to other services, acting as "user-defined HTTP callbacks" that are invoked when a specific event occurs. Unlike traditional polling, where a client constantly asks a server for updates, webhooks offer an immediate, efficient, and resource-friendly alternative, significantly reducing latency and server load.

However, while the concept of webhooks is elegantly simple, their effective management in production environments is fraught with complexities. Ensuring reliable delivery, robust security, scalable infrastructure, and comprehensive observability for webhooks requires careful planning, architectural foresight, and the right set of tools and practices. As systems grow in complexity and event volumes surge, the need for sophisticated webhook management becomes paramount. Without it, developers face a myriad of challenges ranging from missed events and data inconsistencies to security vulnerabilities and debugging nightmares.

This comprehensive guide delves into the world of open-source webhook management, exploring the best practices, architectural considerations, and the suite of open-source tools that empower developers and enterprises to build resilient and scalable event-driven applications. We will not only dissect the technical intricacies of managing webhooks but also contextualize them within the broader ecosystem of API governance, highlighting how an overarching API gateway strategy and the philosophy of an Open Platform contribute significantly to a successful webhook implementation.

The Unseen Backbone: Understanding the Anatomy and Importance of Webhooks

To truly appreciate the nuances of webhook management, it's essential to first grasp their fundamental mechanics and their pivotal role in modern distributed systems. At its heart, a webhook is a simple POST request containing a payload of data sent from a source application (the "publisher") to a designated URL (the "consumer's endpoint") when a specific event happens. This push-based model contrasts sharply with the traditional pull-based API paradigm, where a consumer would repeatedly query an API endpoint to check for new data.

Consider a scenario in an e-commerce platform: when a customer places an order, the order processing system (publisher) can instantly send a webhook to a shipping logistics system (consumer). This immediate notification eliminates the need for the shipping system to poll the order system every few seconds, saving resources for both parties and enabling real-time fulfillment. Other common use cases include:

- Payment Gateways: Notifying merchants of successful or failed transactions.

- SaaS Applications: Alerting integrated services about user sign-ups, data updates, or triggered workflows.

- Version Control Systems: Triggering CI/CD pipelines upon code commits or pull requests (e.g., GitHub webhooks).

- Chat Platforms: Delivering messages or alerts to external services.

- Monitoring and Alerting: Sending notifications to incident management tools when system anomalies are detected.

Each webhook typically consists of several key components:

- Event: The specific action or state change that triggers the webhook (e.g., order.created, user.updated).

- Payload: The data associated with the event, usually formatted as JSON or XML, describing what happened. This payload can vary significantly in structure and content depending on the event and the publisher.

- Target URL: The unique HTTP endpoint provided by the consumer, where the webhook publisher sends the POST request. This URL acts as the recipient's address for event notifications.

- Headers: HTTP headers often contain additional metadata, such as content type, authentication tokens, or unique identifiers (e.g., X-GitHub-Event, Stripe-Signature). These headers are crucial for security and processing.
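To make these components concrete, here is a minimal sketch that assembles (but does not send) a webhook request using only Python's standard library. The target URL, the X-Event-Type header name, and the payload fields are hypothetical values chosen for illustration; real publishers define their own.

```python
import json
import urllib.request

# Hypothetical consumer endpoint, for illustration only.
TARGET_URL = "https://consumer.example.com/webhooks/orders"


def build_webhook_request(event_type: str, data: dict) -> urllib.request.Request:
    """Assemble (but do not send) a webhook POST for the given event."""
    payload = {"event": event_type, "data": data}
    body = json.dumps(payload).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        # Illustrative metadata header; publishers choose their own names.
        "X-Event-Type": event_type,
    }
    return urllib.request.Request(TARGET_URL, data=body, headers=headers, method="POST")


request = build_webhook_request("order.created", {"order_id": "ord_123"})
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Passing the request to urllib.request.urlopen would actually deliver it; the sketch stops short of that so it can be run without a live endpoint.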

The elegance of webhooks lies in their simplicity and flexibility, allowing for loosely coupled architectures where services can react to events without direct knowledge of each other's internal workings. This fosters greater agility, resilience, and scalability in microservices environments, making them an indispensable tool in the modern developer's toolkit. However, this very simplicity can mask significant challenges when attempting to manage them at scale, especially in high-traffic or mission-critical applications.

The Gauntlet of Webhook Management: Identifying Key Challenges

While webhooks are powerful, their implementation and ongoing management introduce a distinct set of challenges that, if not addressed proactively, can undermine the reliability and security of an entire system. These challenges are multifaceted, encompassing aspects of delivery, security, scalability, and observability.

1. Ensuring Reliable Delivery: The Unpredictable Network

The internet is an inherently unreliable medium. Network glitches, server outages, and transient errors are commonplace. For webhooks, this means a simple HTTP POST request is not guaranteed to succeed on the first attempt.

- Transient Failures: The consumer's server might be temporarily down, overloaded, or experiencing a brief network interruption. Without a retry mechanism, such events would be permanently lost.

- Idempotency: What if a webhook is successfully delivered but the publisher doesn't receive the acknowledgment, leading to a duplicate send? Consumers must be able to process the same webhook multiple times without undesirable side effects.

- Delivery Guarantees: For critical events, "at-least-once" delivery is often required, meaning the webhook must be delivered, even if it means delivering it more than once. Achieving this reliably across distributed systems is complex.

- Ordering: In some scenarios, the order of events is critical (e.g., user.created before user.updated). Webhook systems must consider how to preserve or handle out-of-order delivery.

- Throttling & Backpressure: Consumers might have rate limits or become overwhelmed. Publishers need mechanisms to detect this and back off, preventing service degradation.
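The idempotency concern in particular is easy to picture in code. The sketch below assumes each event carries a unique event_id field (an assumed name) and keeps processed IDs in an in-memory set; a production consumer would more likely use a durable store such as a database table with a unique constraint.

```python
class IdempotentProcessor:
    """Applies each event at most once, keyed by its unique event ID."""

    def __init__(self, handler):
        self._handler = handler
        # In-memory set for illustration only; it is lost on restart.
        self._seen_ids = set()

    def process(self, event: dict) -> bool:
        """Return True if the event was handled, False if it was a duplicate."""
        event_id = event["event_id"]
        if event_id in self._seen_ids:
            return False
        self._handler(event)
        # Record the ID only after the handler succeeds, so a failed attempt
        # can still be retried by the publisher.
        self._seen_ids.add(event_id)
        return True


shipped_orders = []
processor = IdempotentProcessor(lambda e: shipped_orders.append(e["data"]["order_id"]))

event = {"event_id": "evt_1", "event_type": "order.created", "data": {"order_id": "ord_123"}}
first = processor.process(event)
second = processor.process(event)  # simulated duplicate delivery
```

Here the second call is a no-op, so a duplicate delivery does not ship the order twice.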

2. Fortifying Security: Trusting the Source and Protecting the Data

Webhooks, by their nature, involve sending sensitive data to external endpoints. This opens up several security vectors that must be meticulously addressed.

- Authenticity Verification: How does a consumer verify that an incoming webhook genuinely originated from the claimed publisher and not from an impostor? This is crucial to prevent spoofing and malicious injections.

- Data Integrity: How can a consumer be sure that the webhook payload hasn't been tampered with in transit?

- Authorization: Even if authentic, is the recipient authorized to receive this specific type of event or access this data?

- Endpoint Exposure: Webhook endpoints are publicly accessible URLs. They become potential targets for DDoS attacks or brute-force attempts if not adequately protected.

- Secrets Management: Storing and transmitting shared secrets (for signing or authentication) securely is a common challenge.

3. Scaling for Volume: Handling the Deluge of Events

As applications grow and user activity increases, the volume of webhooks can skyrocket, posing significant scalability challenges for both publishers and consumers.

- Publisher Side: A sudden surge in events can overwhelm the publisher's system if it attempts to send webhooks synchronously, blocking critical application threads. Asynchronous processing is vital.

- Consumer Side: High volumes of incoming webhooks can inundate a consumer's endpoint, leading to timeouts, queue overflows, and potential denial of service if the processing logic is not designed for scale.

- Resource Management: Each webhook involves HTTP connections, CPU cycles, and network bandwidth. At scale, these resources must be efficiently managed to avoid bottlenecks.

4. Achieving Observability: Knowing What's Happening

When something goes wrong, diagnosing issues in an event-driven system reliant on webhooks can be notoriously difficult without proper observability.

- Logging: Comprehensive logs for every sent and received webhook, including payloads, headers, response codes, and timestamps, are indispensable for debugging.

- Monitoring: Real-time metrics on webhook delivery rates, success/failure rates, latency, and retry counts are crucial for proactive problem detection.

- Alerting: Automated alerts for persistent failures, unusual spikes in errors, or significant delays in delivery ensure that operational teams are informed immediately.

- Tracing: Understanding the full lifecycle of an event, from its origin to its ultimate processing via a webhook, requires distributed tracing capabilities.

5. Developer Experience and Tooling: Simplifying Complexity

The complexity of webhook management can significantly impact developer productivity. Without adequate tools and a streamlined experience, developers spend valuable time debugging, replaying events, and manually managing subscriptions.

- Testing and Debugging: Simulating webhook events, inspecting payloads, and replaying failed deliveries are essential developer workflows that often lack robust tooling.

- Subscription Management: Managing which consumers receive which events, and allowing them to configure their target URLs and event filters, can be cumbersome.

- Documentation: Clear, up-to-date documentation for webhook formats, security mechanisms, and retry policies is vital for successful integration.

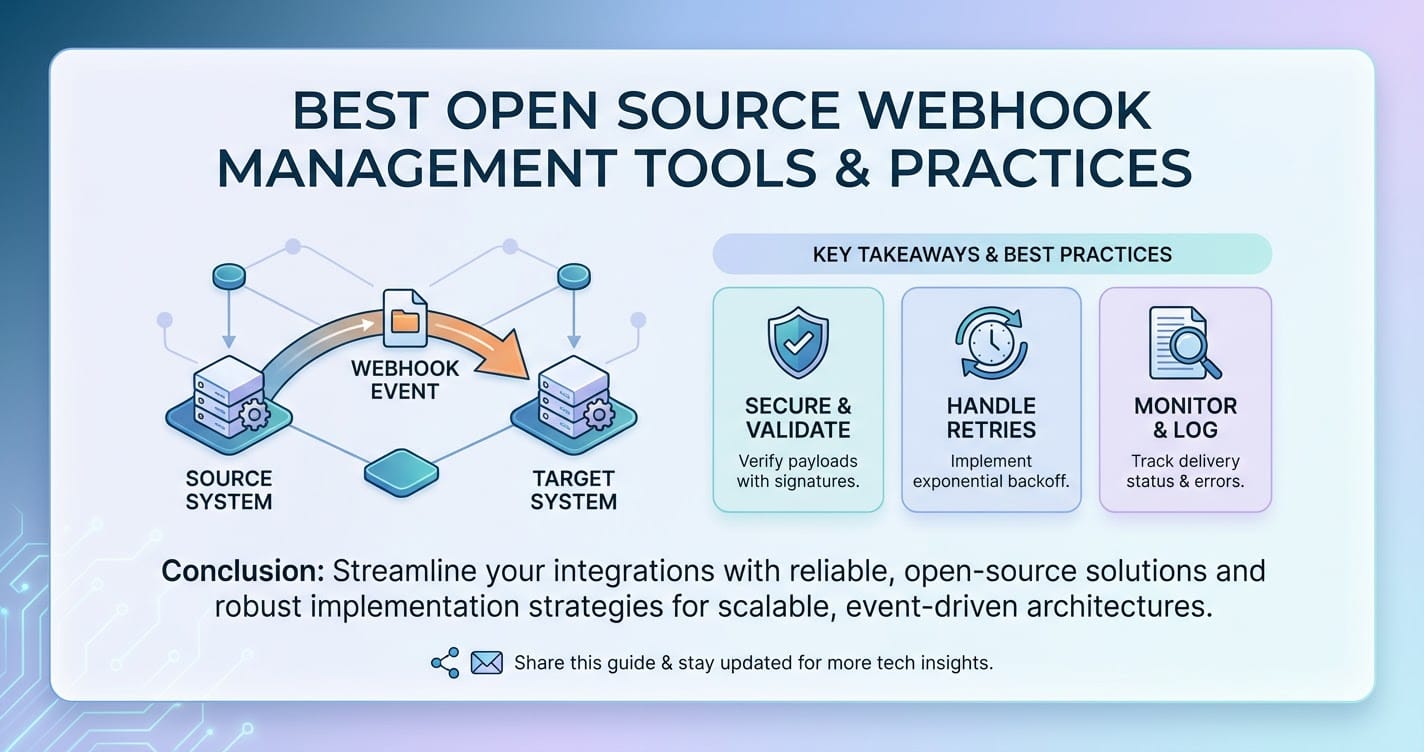

Addressing these challenges requires a layered approach, combining architectural best practices with the intelligent use of open-source tools and platforms. The goal is to build a robust, resilient, and observable webhook infrastructure that can reliably deliver critical events without becoming an operational burden.

Core Principles of Effective Webhook Management: Building Resilience

Successful webhook management hinges on adhering to several core principles that guide the design and implementation of both the publishing and consuming sides of the event flow. These principles are universal, regardless of the specific tools or technologies employed.

1. Prioritizing Reliable Delivery and Robust Retry Mechanisms

Reliability is paramount. Events must be delivered, even in the face of transient failures.

- Asynchronous Processing: Publishers should never send webhooks synchronously within the main application thread. Instead, events should be immediately queued for asynchronous processing by a dedicated worker. This prevents webhook delivery issues from blocking user-facing operations and allows for retries without impacting system responsiveness.

- Persistent Queues: Utilize message queues (e.g., Apache Kafka, RabbitMQ, Redis Streams) to store webhook events before delivery. This ensures events are not lost if the webhook sender process crashes and provides a buffer against consumer slowdowns.

- Exponential Backoff with Jitter: When a webhook delivery fails, retries should not be immediate or at fixed intervals. Exponential backoff increases the delay between retries after successive failures, giving the consumer time to recover. Adding "jitter" (a random component to the delay) helps prevent all retries from hitting the consumer simultaneously, which could exacerbate an outage.

- Dead-Letter Queues (DLQs): Events that persistently fail after multiple retries should be moved to a DLQ. This prevents them from continuously retrying and consuming resources, while allowing operators to inspect, manually reprocess, or discard them.

- Circuit Breakers: Implement circuit breakers to detect when a consumer's endpoint is consistently failing. Instead of continuously retrying, the circuit breaker "opens," preventing further attempts for a defined period, thus protecting both the publisher and the overloaded consumer. Once the period elapses, a single "probe" request is sent; if successful, the circuit "closes" and normal delivery resumes.

- Idempotent Consumers: Consumers must be designed to safely process the same webhook event multiple times without causing duplicate side effects. This is typically achieved by including a unique identifier (e.g., a UUID) in the webhook payload and storing a record of processed IDs, allowing subsequent identical requests to be ignored or reprocessed appropriately.
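Of the mechanisms above, the circuit breaker is the one most often described and least often shown. The following is a minimal, single-threaded sketch of the closed, open, and half-open states; the class name, thresholds, and injectable clock are assumptions for illustration, and a real dispatcher would typically keep one breaker per consumer endpoint and add locking.

```python
import time


class CircuitBreaker:
    """Per-endpoint circuit breaker: closed -> open -> half-open -> closed."""

    def __init__(self, failure_threshold=5, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed
        self._probe_in_flight = False

    def allow_request(self) -> bool:
        if self._opened_at is None:
            return True  # closed: deliveries flow normally
        if self._clock() - self._opened_at < self.reset_timeout:
            return False  # open: skip delivery attempts for now
        if self._probe_in_flight:
            return False  # half-open: only one probe at a time
        self._probe_in_flight = True
        return True

    def record_success(self) -> None:
        # A successful delivery (or probe) closes the circuit.
        self._failures = 0
        self._opened_at = None
        self._probe_in_flight = False

    def record_failure(self) -> None:
        self._probe_in_flight = False
        self._failures += 1
        if self._opened_at is not None or self._failures >= self.failure_threshold:
            # Trip (or re-trip after a failed probe) and restart the timer.
            self._opened_at = self._clock()
```

A delivery worker would call allow_request() before each attempt and report the outcome with record_success() or record_failure(); events skipped while the circuit is open stay in the queue for later.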

2. Implementing Robust Security Practices

Security should be a non-negotiable aspect of any webhook implementation, protecting data in transit and ensuring authenticity.

- HTTPS Only: Always use HTTPS for webhook delivery. This encrypts the data in transit, protecting against eavesdropping and man-in-the-middle attacks.

- Signature Verification: Publishers should sign webhook payloads using a shared secret and a cryptographic hash function (e.g., HMAC-SHA256). The signature is included in a special HTTP header (e.g., X-Signature). Consumers then use their copy of the shared secret to re-calculate the signature and compare it with the incoming one. Mismatched signatures indicate tampering or an unauthorized source.

- Unique Secret per Consumer: Ideally, each consumer should have a unique secret. This limits the blast radius if a secret is compromised.

- Timestamp Verification & Replay Protection: Include a timestamp in the webhook payload or headers. Consumers can verify that the timestamp is recent (e.g., within 5 minutes) to prevent replay attacks where old, legitimate webhooks are intercepted and resent by an attacker.

- Dedicated, Hardened Endpoints: Webhook consumer endpoints should be specifically designed to handle webhooks and nothing else. They should be minimally exposed, ideally behind an API gateway that provides additional layers of security like WAF (Web Application Firewall) capabilities, DDoS protection, and rate limiting.

- Least Privilege: Ensure that the system sending webhooks only has access to the data it absolutely needs to send, and the system receiving it only processes what's necessary.
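On the consumer side, signature and timestamp verification might look roughly like the sketch below. It assumes the publisher signs the string "<timestamp>.<raw body>" with HMAC-SHA256 and sends the hex digest; that layout is an assumption for this example, since every publisher documents its own scheme.

```python
import hashlib
import hmac
import time

MAX_AGE_SECONDS = 300  # the 5-minute window mentioned above


def compute_signature(secret: bytes, timestamp: int, body: bytes) -> str:
    """HMAC-SHA256 over "<timestamp>.<body>", hex-encoded (assumed scheme)."""
    signed = str(timestamp).encode("ascii") + b"." + body
    return hmac.new(secret, signed, hashlib.sha256).hexdigest()


def verify_webhook(secret, body, signature, timestamp, now=None) -> bool:
    """Return True only if the signature matches and the timestamp is fresh."""
    if not isinstance(signature, str):
        return False  # header missing
    try:
        sent_at = int(timestamp)
    except (TypeError, ValueError):
        return False  # header missing or not a Unix timestamp
    current = time.time() if now is None else now
    if abs(current - sent_at) > MAX_AGE_SECONDS:
        return False  # too old (or too far in the future): possible replay
    expected = compute_signature(secret, sent_at, body)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected.encode("utf-8"), signature.encode("utf-8"))
```

Note that verification must run against the raw request bytes. Parsing the JSON and re-serializing it first can change whitespace or key order and break an otherwise valid signature.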

3. Architecting for Scalability and Performance

Webhooks should scale horizontally to accommodate fluctuating event volumes without degrading performance.

- Stateless Processing: Design webhook processing logic to be stateless wherever possible. This allows any worker instance to handle any incoming webhook, simplifying scaling.

- Horizontal Scaling: Both the webhook sending and receiving infrastructure should be designed for horizontal scaling. Publishers can add more worker processes to send webhooks, and consumers can add more instances of their webhook endpoint application to handle incoming traffic.

- Efficient Payload Design: Keep webhook payloads as lean as possible. Only include the necessary data to inform the consumer about the event. Large payloads increase network overhead and processing time.

- Rate Limiting (Publisher Side): Publishers should offer configurable rate limits per consumer to prevent overwhelming specific endpoints.

- Admission Control (Consumer Side): Consumers can implement their own admission control or rate limiting to protect their internal systems from excessive load, gracefully rejecting requests above a certain threshold (e.g., returning HTTP 429 Too Many Requests).
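Consumer-side admission control is commonly built on a token bucket. The sketch below is one simple, non-thread-safe way to do it; the class and function names, and the choice of 202 as the "admitted" status, are assumptions for illustration.

```python
import time


class TokenBucket:
    """Token-bucket limiter: `rate` tokens refill per second up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self._clock = clock
        self._tokens = float(capacity)
        self._updated = clock()

    def try_acquire(self) -> bool:
        # Refill based on elapsed time, then spend one token if available.
        now = self._clock()
        elapsed = now - self._updated
        self._updated = now
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False


def admit(bucket: TokenBucket) -> int:
    """Return the HTTP status an endpoint might send: 202 if admitted, else 429."""
    return 202 if bucket.try_acquire() else 429
```

The capacity sets how large a burst is tolerated, while the rate sets the sustained throughput. A well-behaved publisher treats the 429 as a signal to back off and retry later.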

4. Implementing Comprehensive Monitoring and Observability

Visibility into the webhook lifecycle is non-negotiable for operational excellence.

- Detailed Logging: Log every incoming and outgoing webhook, including the full payload (with sensitive data masked), headers, response codes, duration, and any errors. Use structured logging (e.g., JSON) to facilitate analysis.

- Key Metrics: Collect and monitor critical metrics such as:

- Total webhooks sent/received

- Successful vs. failed deliveries

- Retry counts

- Average delivery latency

- Queue depth (for asynchronous systems)

- Error rates (e.g., HTTP 4xx, 5xx responses)

- Alerting: Set up alerts for deviations from normal behavior, such as a sudden drop in successful deliveries, an increase in retry attempts, or excessive queue growth.

- Distributed Tracing: Integrate with distributed tracing systems (e.g., OpenTelemetry) to track the journey of an event across multiple services, from its origin to its eventual processing by a webhook consumer.

- Dashboarding: Create intuitive dashboards using tools like Grafana to visualize webhook metrics, providing real-time operational insights.
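As a small illustration of the logging advice, the sketch below builds one structured JSON log line per delivery attempt and masks sensitive fields before they are written. The field names and the list of sensitive keys are assumptions; adapt them to your own payloads.

```python
import json
import logging

SENSITIVE_KEYS = {"email", "card_number", "token"}  # illustrative list

logger = logging.getLogger("webhooks")


def mask_sensitive(value):
    """Recursively replace values of sensitive keys with a placeholder."""
    if isinstance(value, dict):
        return {
            key: "***" if key in SENSITIVE_KEYS else mask_sensitive(item)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [mask_sensitive(item) for item in value]
    return value


def delivery_log_record(event_id, url, status_code, duration_ms, attempt, payload) -> str:
    """Build one JSON log line describing a single delivery attempt."""
    return json.dumps({
        "event_id": event_id,
        "target_url": url,
        "status_code": status_code,
        "duration_ms": duration_ms,
        "attempt": attempt,
        "success": 200 <= status_code < 300,
        "payload": mask_sensitive(payload),
    }, sort_keys=True)


line = delivery_log_record(
    "evt_1", "https://consumer.example.com/hooks", 503, 120, 2,
    {"user": {"id": 7, "email": "a@example.com"}},
)
logger.warning(line)
```

Because every line is a flat JSON object, a log pipeline can index fields such as status_code and attempt directly, which makes queries like "all failed deliveries for this event" straightforward.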

5. Fostering a Positive Developer Experience and Robust Tooling

A well-managed webhook system is also one that is easy for developers to integrate with and troubleshoot.

- Clear Documentation: Provide comprehensive and up-to-date documentation that covers:

- Available events and their payload schemas.

- Security mechanisms (signature calculation, secret management).

- Retry policies and expected behavior.

- Endpoint requirements (e.g., expected response codes, timeout limits).

- Testing and debugging guidelines.

- Developer-Friendly Event Schemas: Use widely accepted data formats (JSON) and clear, self-describing field names. Version your webhook schemas to allow for graceful evolution.

- Testing Tools: Offer tools or environments that allow developers to easily test their webhook endpoints, simulate events, and inspect payloads.

- Event Replay: Provide a mechanism for developers or support teams to replay past events, especially for debugging or recovering from outages.

- Subscription Management UI: If possible, offer a user interface for consumers to easily manage their webhook subscriptions, including adding/removing endpoints and filtering events.

By diligently applying these principles, organizations can transform webhooks from a potential point of failure into a powerful, reliable, and secure mechanism for real-time inter-service communication.

Open Source Solutions for Webhook Management: Building Blocks for Resilience

While there aren't many "all-in-one" open-source webhook management platforms that rival commercial SaaS offerings like Svix or Hookdeck directly, the open-source ecosystem provides a rich array of building blocks that, when combined, can form a highly robust, scalable, and customizable webhook management system. The power of an Open Platform lies in this flexibility, allowing developers to tailor solutions precisely to their needs without vendor lock-in.

Here, we explore key open-source components and patterns that underpin effective webhook management.

1. Message Queues and Event Streaming Platforms (The Backbone of Reliability)

These are fundamental for ensuring reliable, asynchronous delivery and managing retry logic.

- Apache Kafka:

- Description: A distributed streaming platform capable of handling trillions of events daily. Kafka provides high-throughput, fault-tolerant, and durable storage for event streams. It's designed for publish-subscribe models and can serve as a central nervous system for event-driven architectures.

- Role in Webhook Management:

- Event Buffering: Publishers can immediately write webhook events to a Kafka topic instead of directly sending them. This decouples the event generation from the delivery mechanism, protecting the main application from network delays or consumer failures.

- Reliable Storage: Kafka's durable logs ensure events are not lost, even if webhook sender services crash.

- Scalable Consumers: Multiple worker processes can consume from Kafka topics in parallel to send webhooks, allowing for horizontal scaling of the delivery mechanism.

- Retry Management: Failed webhooks can be re-queued to a retry topic (possibly with a delay via Kafka Streams or external schedulers), or moved to a Dead-Letter Queue (DLQ) topic for manual inspection.

- Advantages: Extremely high performance, fault tolerance, robust ecosystem, strong community support.

- Considerations: Can be complex to set up and manage at scale. Older versions require a separate ZooKeeper ensemble; newer versions replace it with the built-in KRaft mode.

- RabbitMQ:

- Description: A widely deployed open-source message broker that implements the Advanced Message Queuing Protocol (AMQP). It's known for its flexibility in routing messages and supporting various messaging patterns.

- Role in Webhook Management:

- Task Queues: Publishers enqueue webhook delivery tasks to RabbitMQ. Workers consume these tasks and attempt delivery.

- Flexible Routing: RabbitMQ's exchange and queue model allows for sophisticated routing, including sending events to multiple different webhook sender workers or specific queues for different types of webhooks.

- Retries and DLQs: Features like message acknowledgments, time-to-live (TTL) on messages or queues, and dead-letter exchanges allow for implementing retry logic and DLQs directly within RabbitMQ.

- Advantages: Mature, flexible routing, good for smaller to medium-scale event volumes, easier to operate than Kafka for some use cases.

- Considerations: Less performant than Kafka at very high throughputs, can suffer from single-node bottlenecks if not clustered correctly.

- Redis Streams / Redis List:

- Description: Redis, primarily an in-memory data store, offers features like Lists and Streams that can be used for simple queuing. Redis Streams (introduced in Redis 5.0) offer more sophisticated message queuing capabilities with consumer groups and persistent storage.

- Role in Webhook Management:

- Lightweight Queuing: For simpler scenarios or systems already using Redis, Streams or Lists can serve as a fast, in-memory queue for webhook delivery tasks.

- Retry Mechanisms: Simple retry logic can be implemented by pushing failed messages back to the end of a List or Stream, possibly with a delay.

- Advantages: Extremely fast, simple to integrate if Redis is already in the stack, low operational overhead for basic queuing.

- Considerations: Less robust for high-durability and large-scale event streaming compared to Kafka, more manual effort required for advanced features like complex retry policies or DLQs.

2. Web Servers and Reverse Proxies (The Front Door)

These components are essential for receiving incoming webhook requests and providing an initial layer of security and load balancing.

- Nginx / Caddy:

- Description: High-performance open-source web servers and reverse proxies. Nginx is renowned for its stability and extensive feature set, while Caddy is known for its simplicity and automatic HTTPS.

- Role in Webhook Management:

- Endpoint Protection: Act as a reverse proxy for consumer webhook endpoints, providing SSL termination, basic access control, and rate limiting to protect the backend application from direct exposure.

- Load Balancing: Distribute incoming webhook traffic across multiple instances of a consumer's webhook handler, ensuring scalability and high availability.

- Request Filtering: Can be configured to filter out unwanted requests based on IP address, headers, or request methods before they reach the application.

- Advantages: Battle-tested, high performance, flexible configuration, widely used.

- Considerations: Configuration can become complex for advanced scenarios.

3. Application Frameworks and Libraries (The Processing Logic)

For custom webhook sending and receiving logic, developers rely on open-source frameworks and libraries in their preferred programming languages.

- Python (Flask/Django), Node.js (Express), Go (net/http), Java (Spring Boot):

- Description: These are popular open-source frameworks for building web applications and microservices.

- Role in Webhook Management:

- Webhook Sender: Applications use these frameworks to integrate with message queues, construct webhook payloads, calculate signatures, and send HTTP requests to consumer endpoints.

- Webhook Receiver: Applications use these frameworks to define HTTP endpoints that listen for incoming webhooks, parse payloads, verify signatures, and enqueue messages for asynchronous processing.

- Advantages: Ecosystem richness, developer productivity, vast library support for HTTP clients, JSON parsing, cryptography.

- Considerations: Requires custom development for all management features (retries, DLQs, monitoring).
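To give a feel for the receiver role without committing to any one framework, here is a minimal sketch using only Python's standard library. It parses the body, enqueues the event, and acknowledges immediately. Signature verification is deliberately omitted for brevity, and the in-process queue stands in for a durable broker; the function and class names are hypothetical.

```python
import json
import queue
from http.server import BaseHTTPRequestHandler, HTTPServer

# In-process queue for illustration; a durable broker (Kafka, RabbitMQ,
# Redis Streams) would take its place in a real deployment.
events = queue.Queue()


def accept_webhook(raw_body: bytes, inbox: queue.Queue) -> int:
    """Parse the body, enqueue it, and return the HTTP status to send."""
    try:
        event = json.loads(raw_body)
    except ValueError:
        return 400  # malformed body: retrying will not help
    if not isinstance(event, dict):
        return 400
    inbox.put(event)
    return 202  # accepted for asynchronous processing


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        status = accept_webhook(self.rfile.read(length), events)
        self.send_response(status)
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Blocking helper; call it explicitly to start listening."""
    HTTPServer(("127.0.0.1", port), WebhookHandler).serve_forever()
```

Keeping the parsing and enqueueing in a plain function makes the logic testable without a running server, and a separate worker can drain the queue at its own pace.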

4. Monitoring and Logging Stacks (The Eyes and Ears)

Observability is critical. These open-source tools provide the necessary insights.

- ELK Stack (Elasticsearch, Logstash, Kibana):

- Description: A powerful suite for centralized logging. Logstash collects logs, Elasticsearch stores and indexes them, and Kibana provides a rich interface for searching, analyzing, and visualizing log data.

- Role in Webhook Management: Aggregate logs from all webhook publishers and consumers, allowing for easy search, filtering, and analysis of webhook events, errors, and delivery statuses. Crucial for debugging.

- Advantages: Comprehensive, scalable, powerful search capabilities, rich visualization.

- Considerations: Resource-intensive, can be complex to set up and maintain.

- Prometheus & Grafana:

- Description: Prometheus is an open-source monitoring system with a time-series database. Grafana is an open-source analytics and interactive visualization web application.

- Role in Webhook Management:

- Metrics Collection: Applications can expose Prometheus-compatible metrics (e.g., webhook send counts, failure rates, latency, queue depths). Prometheus scrapes these metrics.

- Alerting: Prometheus's Alertmanager can trigger alerts based on defined thresholds (e.g., if webhook error rates exceed 5%).

- Dashboarding: Grafana connects to Prometheus to visualize key webhook metrics in real-time dashboards, providing operational insights at a glance.

- Advantages: Powerful time-series data handling, flexible alerting, highly customizable dashboards, cloud-native.

- Considerations: Requires applications to expose metrics in a Prometheus-compatible format.

5. Dedicated Open Source Webhook Libraries/Components

While full platforms are rare, some open-source libraries offer specific webhook-related functionalities.

- webhookd (Go): A simple daemon for handling GitHub webhooks and executing commands. More of a utility than a full management system but illustrates basic principles.

- Custom Microservices: Often, organizations build custom microservices in their preferred language (using the frameworks above) to act as dedicated webhook dispatchers/receivers, integrating with message queues for reliability. These become their internal open-source solutions.

The true "open-source webhook management tool" often emerges from the thoughtful combination of these powerful, modular components. It's a testament to the Open Platform philosophy, where individual, best-of-breed open-source projects can be integrated to form a tailored, robust system that precisely meets an organization's unique requirements, rather than relying on a monolithic, proprietary solution. This approach offers unparalleled flexibility, cost efficiency, and community support.

Table: Comparison of Open Source Components for Webhook Management

To illustrate how various open-source technologies contribute to a comprehensive webhook management system, consider the following comparison of their primary roles and characteristics:

| Category | Component | Primary Role in Webhook Management | Key Features | Best Use Case | Considerations |
|---|---|---|---|---|---|
| Message Queues/Event Streams | Apache Kafka | Asynchronous event buffering, reliable delivery, durable storage, retry basis | High-throughput, distributed, fault-tolerant, consumer groups, persistent logs | Large-scale, high-volume event streams, complex event processing | High operational complexity, requires Zookeeper/KRaft, more demanding resource-wise |
| Message Queues/Event Streams | RabbitMQ | Asynchronous task queuing, flexible routing, built-in retry mechanisms | AMQP, message acknowledgments, dead-letter exchanges, various routing options | Medium-scale event queues, complex routing logic, task distribution | Less performant than Kafka for extreme throughput, can be slower for very large messages |
| Message Queues/Event Streams | Redis Streams | Lightweight, fast queuing, simple consumer groups | In-memory (can be persisted), consumer groups, non-blocking operations | Low-latency event queues, smaller scale, existing Redis users | Less durable and feature-rich than Kafka/RabbitMQ for enterprise needs, more manual retry logic |
| Web Servers/Proxies | Nginx | Secure webhook endpoints, load balancing, rate limiting | Reverse proxy, SSL termination, caching, access control, traffic shaping | Exposing external webhook endpoints, protecting backend services | Configuration can be complex for advanced scenarios, no built-in webhook-specific logic |
| Web Servers/Proxies | Caddy | Simpler setup, automatic HTTPS, reverse proxy | Automatic HTTPS, easy configuration, modern HTTP/3 support | Quick deployment of secure webhook endpoints, smaller teams | Less feature-rich than Nginx for very advanced traffic management |
| Observability Stacks | ELK Stack | Centralized logging, full-text search, data visualization | Log ingestion, storage (Elasticsearch), visualization (Kibana), alerting | Comprehensive log analysis, debugging, auditing, security monitoring | Resource-intensive, steep learning curve, requires careful indexing planning |
| Observability Stacks | Prometheus/Grafana | Metrics collection, time-series analysis, real-time dashboards, alerting | Pull-based metrics, PromQL, Alertmanager, flexible dashboards | Real-time operational monitoring, performance tracking, proactive alerting | Requires instrumenting applications for metrics, managing Alertmanager rules |
| Application Frameworks | Python (Flask/Django) | Custom webhook sender/receiver logic, signature verification, retry orchestration | RESTful API development, HTTP clients, cryptography libraries, ORMs | Building custom webhook dispatchers/processors, rapid prototyping | Requires significant custom development for all management features, no out-of-the-box solution |
| Application Frameworks | Go (net/http) | High-performance custom webhook services | Concurrency, low-level HTTP control, strong typing, efficient | Building highly performant, custom webhook microservices | More verbose than Python/Node.js, requires more boilerplate code |

This table highlights that a robust open-source webhook management system is typically an architectural composition rather than a single off-the-shelf product. This compositional approach allows for maximum control, scalability, and adherence to specific security and performance requirements, embodying the flexibility inherent in an Open Platform.


Best Practices for Webhook Publishers: Being a Good Event Citizen

The reliability and usability of a webhook system start with the publisher. Adhering to best practices ensures that the webhooks you send are easy to consume, reliable, and secure.

1. Provide Comprehensive and Clear Documentation

This is arguably the most critical aspect. Without good documentation, consumers cannot effectively integrate.

- Event Catalog: Clearly list all available webhook events, their triggers, and what information they convey.

- Payload Schema: Document the exact structure and data types of the payload for each event. Use tools like JSON Schema for validation and clarity.

- Security Details: Explain how to verify webhook authenticity (e.g., HMAC signature calculation steps, header names).

- Retry Policy: Inform consumers about your retry logic (e.g., exponential backoff, maximum retries, timeout periods).

- Endpoint Requirements: Specify expected HTTP response codes from consumers (e.g., 200 OK for success, 4xx/5xx for failures), and timeout limits.

- Version Management: Clearly state how webhook versions are handled (e.g., via X-Webhook-Version header or URL path).

2. Design Consistent and Informative Payloads

The data you send should be easy to parse and understand.

* Minimal Payload: Only include essential data. If consumers need more information, provide an id or URL in the payload that they can use to fetch additional details via your primary API.
* Consistent Structure: Maintain a consistent JSON structure across all events. Common fields like event_id, event_type, timestamp, resource_id, data (for event-specific details) are highly recommended.
* Event ID: Include a unique event_id in every payload to facilitate idempotency on the consumer side and for debugging.
* Human-Readable Event Names: Use clear, descriptive event names (e.g., invoice.created, user.deleted).
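
As a rough illustration of the consistent structure described above, the following Python sketch wraps event-specific data in a common envelope. The function name `build_event` and the sample values are hypothetical; only the field names come from the list above.

```python
import json
import uuid
from datetime import datetime, timezone


def build_event(event_type: str, resource_id: str, data: dict) -> dict:
    """Wrap event-specific data in a consistent envelope (illustrative only)."""
    return {
        "event_id": str(uuid.uuid4()),  # unique per event, used for idempotency
        "event_type": event_type,       # e.g. "invoice.created"
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "resource_id": resource_id,     # lets consumers fetch details via the API
        "data": data,                   # keep this minimal
    }


event = build_event("invoice.created", "inv_123", {"amount_cents": 4200})
# Serialize once; the same bytes should later be signed and sent.
body = json.dumps(event, separators=(",", ":"))
```

Serializing the payload exactly once matters because a signature is computed over the raw bytes, not over the parsed object.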

3. Implement Robust Security Measures (Signatures and HTTPS)

Never compromise on security.

* Always Use HTTPS: Ensure all webhook deliveries are over HTTPS to encrypt data in transit.
* Cryptographic Signatures: Sign every webhook payload. Calculate a keyed hash (e.g., HMAC-SHA256) of the raw payload using a shared secret and include it in an HTTP header (e.g., X-Signature). Consumers use this to verify authenticity and integrity.
* Unique Secrets: Allow consumers to generate and manage their own unique secrets for signature verification.
* Timestamp Headers: Include an X-Webhook-Timestamp header and cover the timestamp with the signature. A timestamp that is not signed can be altered by an attacker, so only a signed one lets consumers reject stale or replayed deliveries.
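
A minimal publisher-side sketch of the signing step, using only the Python standard library. The header names are the examples used above; the signed-content layout (`<timestamp>.<raw body>`) and the helper names are assumptions for illustration, and whatever layout you choose must be documented for consumers.

```python
import hashlib
import hmac
import time


def sign_payload(secret: bytes, raw_body: bytes, timestamp: int) -> str:
    """Return a hex HMAC-SHA256 over '<timestamp>.<raw body>' (assumed layout)."""
    signed_content = str(timestamp).encode() + b"." + raw_body
    return hmac.new(secret, signed_content, hashlib.sha256).hexdigest()


def build_headers(secret: bytes, raw_body: bytes) -> dict:
    """Build the delivery headers for one webhook request."""
    timestamp = int(time.time())
    return {
        "Content-Type": "application/json",
        "X-Webhook-Timestamp": str(timestamp),
        "X-Signature": sign_payload(secret, raw_body, timestamp),
    }
```

Because the timestamp is part of the signed content, changing either the body or the timestamp invalidates the signature.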

4. Implement a Comprehensive Retry Strategy

Assume failures will happen and build resilience.

* Exponential Backoff with Jitter: Use this for all failed deliveries to gracefully handle temporary consumer outages without overwhelming them.
* Maximum Retries and Timeout: Define a reasonable maximum number of retries and a global timeout for each webhook event before it's moved to a Dead-Letter Queue.
* Monitoring and Alerting: Actively monitor webhook delivery status. Set up alerts for persistent failures or high error rates.
* Dead-Letter Queue: Automatically move persistently failing webhooks to a DLQ for manual inspection or reprocessing.
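
One common way to compute the retry schedule is "full jitter": pick a random delay between zero and an exponentially growing ceiling. The sketch below assumes a 1-second base, a 1-hour cap, and a limit of 8 retries; these numbers and the function names are illustrative, not recommendations from any particular tool.

```python
import random

MAX_RETRIES = 8  # assumed limit; beyond this the event is dead-lettered


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 3600.0) -> float:
    """Full-jitter delay in seconds for a 1-based retry attempt."""
    ceiling = min(cap, base * (2 ** (attempt - 1)))
    return random.uniform(0, ceiling)


def next_action(attempt: int) -> tuple[str, float]:
    """Decide whether to retry (and after how long) or dead-letter the event."""
    if attempt > MAX_RETRIES:
        return ("dead_letter", 0.0)
    return ("retry", backoff_delay(attempt))
```

The randomness spreads retries from many failed deliveries over time, so a recovering consumer is not hit by a synchronized burst.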

5. Allow for Flexible Subscription Management

Give consumers control over what they receive.

* Event Filtering: Allow consumers to subscribe only to specific event types they care about, reducing unnecessary traffic.
* Endpoint Management: Provide a clear way for consumers to add, update, and remove their webhook endpoints.
* Secret Rotation: Offer a mechanism for consumers to rotate their webhook secrets regularly.
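
Event filtering can be as simple as matching the event name against patterns stored with each subscription. The following sketch assumes shell-style wildcards (such as `invoice.*`) and a hypothetical `Subscription` record; real systems would persist these and expose them through a management API.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class Subscription:
    url: str
    # Patterns such as "invoice.*"; the default subscribes to everything.
    event_patterns: list[str] = field(default_factory=lambda: ["*"])

    def wants(self, event_type: str) -> bool:
        return any(fnmatchcase(event_type, p) for p in self.event_patterns)


def targets(subscriptions: list[Subscription], event_type: str) -> list[str]:
    """Return the endpoint URLs that should receive this event type."""
    return [s.url for s in subscriptions if s.wants(event_type)]
```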

6. Consider Idempotency (For Consumers)

While primarily a consumer responsibility, publishers can aid this.

* Unique Event IDs: Always include a unique event_id in the payload. This is the consumer's primary tool for idempotency.
* Idempotency Key (Optional): For specific scenarios where the consumer needs to handle duplicate requests in a complex way, a publisher might provide an Idempotency-Key header, though this is less common for general webhooks and more for direct API requests.

By adhering to these best practices, webhook publishers create a reliable, secure, and developer-friendly experience, fostering trust and enabling seamless integration within the broader API ecosystem.

Best Practices for Webhook Consumers: Robustly Receiving and Processing Events

As a webhook consumer, your responsibility is to build an endpoint that is secure, resilient, and capable of processing events efficiently, even under duress.

1. Secure Your Endpoint

Your webhook endpoint is a publicly accessible door into your system; secure it meticulously.

* HTTPS Always: Only expose webhook endpoints over HTTPS.
* Validate Signatures: This is non-negotiable. For every incoming webhook, verify the cryptographic signature using the shared secret provided by the publisher. Reject any request with an invalid or missing signature immediately with an HTTP 401 (Unauthorized) or 403 (Forbidden) status.
* Validate Timestamps (Replay Protection): Check the timestamp header (X-Webhook-Timestamp or similar). If the timestamp is too old (e.g., more than 5 minutes), reject the request. This prevents attackers from replaying old, legitimate webhooks.
* Dedicated Endpoint: Use a dedicated, unique URL for each webhook integration. Avoid using generic endpoints that handle other types of requests.
* IP Whitelisting (Optional but Recommended): If the publisher provides a static list of IP addresses from which they send webhooks, configure your firewall or API gateway to only accept requests from those IPs.
* Rate Limiting: Protect your endpoint from abuse or accidental flooding by implementing rate limiting at your API gateway or application layer.
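
The signature and timestamp checks above could look roughly like this on the consumer side. The sketch assumes the publisher signs `<timestamp>.<raw body>` with HMAC-SHA256 and sends a hex digest; that layout, the header names, and the function name are assumptions, so follow your publisher's documentation for the real scheme.

```python
import hashlib
import hmac
import time
from typing import Optional

TOLERANCE_SECONDS = 300  # the 5-minute window mentioned above


def verify_webhook(secret: bytes, raw_body: bytes, headers: dict,
                   now: Optional[float] = None) -> bool:
    """Return True only if the timestamp is fresh and the signature matches."""
    timestamp = headers.get("X-Webhook-Timestamp", "")
    signature = headers.get("X-Signature", "")
    if not (timestamp.isascii() and timestamp.isdigit()) or not signature:
        return False
    now = time.time() if now is None else now
    if abs(now - int(timestamp)) > TOLERANCE_SECONDS:
        return False  # too old (or too far in the future): possible replay
    expected = hmac.new(
        secret, timestamp.encode() + b"." + raw_body, hashlib.sha256
    ).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), signature.encode())
```

Verification must run against the raw request bytes; re-serializing parsed JSON can change whitespace or key order and break the comparison.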

2. Process Webhooks Asynchronously

Never block the incoming request thread with heavy processing.

* Respond Immediately: The webhook endpoint should do minimal work: parse the request, verify the signature, and then immediately enqueue the event into a reliable message queue (e.g., RabbitMQ, Kafka).
* Return 2xx Status: Respond with an HTTP 200 OK or 202 Accepted status code as quickly as possible, typically within a few hundred milliseconds, after enqueuing the event. Any other 2xx status is also acceptable. This signals to the publisher that the webhook was successfully received and acknowledged.
* Dedicated Workers: Use separate, asynchronous worker processes to consume events from your message queue and perform the actual business logic. This decouples receiving from processing.
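
The receive-then-process split can be sketched with the standard library alone. Here an in-memory `queue.Queue` and a thread stand in for a durable broker such as RabbitMQ or Kafka and a separate worker process; the function names are hypothetical, and an in-memory queue would lose events on restart, so treat this purely as an illustration of the shape.

```python
import json
import queue
import threading

events: "queue.Queue[dict]" = queue.Queue()  # stand-in for a durable broker
processed: list[str] = []


def handle_request(raw_body: bytes, signature_ok: bool) -> int:
    """Do the minimum in the request path and return an HTTP status code."""
    if not signature_ok:
        return 401
    try:
        event = json.loads(raw_body)
    except ValueError:  # covers invalid JSON and invalid UTF-8
        return 400
    if not isinstance(event, dict) or "event_id" not in event:
        return 400
    events.put(event)  # enqueue, then acknowledge immediately
    return 202


def worker() -> None:
    """Runs outside the request path and performs the slow business logic."""
    while True:
        event = events.get()
        processed.append(event["event_id"])  # placeholder for real work
        events.task_done()


threading.Thread(target=worker, daemon=True).start()
```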

3. Ensure Idempotency in Your Processing Logic

This is critical to handle duplicate deliveries gracefully.

* Unique Event ID: Leverage the unique event_id provided in the webhook payload. Store a record of processed event IDs.
* Atomic Operations: Design your processing logic to be idempotent. Before performing any action (e.g., creating a user, updating a record), check if that specific event_id has already been processed. If it has, simply acknowledge success without re-executing the logic. Database transactions can help here.
* Consistent State: Ensure that repeated processing of the same event yields the same result without unintended side effects.
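
One way to make the "check, then act" step atomic is to record the event_id and apply the side effect in the same database transaction, relying on a uniqueness constraint to reject duplicates. The sketch below uses an in-memory SQLite database; the table names and the `process_once` helper are made up for illustration.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE processed_events (event_id TEXT PRIMARY KEY)")
db.execute("CREATE TABLE users (email TEXT)")


def process_once(event: dict) -> bool:
    """Apply the event's side effect at most once; return False for duplicates."""
    try:
        with db:  # one transaction: commit on success, roll back on error
            db.execute(
                "INSERT INTO processed_events (event_id) VALUES (?)",
                (event["event_id"],),
            )
            db.execute(
                "INSERT INTO users (email) VALUES (?)",
                (event["data"]["email"],),
            )
        return True
    except sqlite3.IntegrityError:
        return False  # event_id already recorded, so skip the business logic
```

Inserting the event_id first means two concurrent deliveries of the same event cannot both pass the check, which a separate "SELECT then INSERT" would allow.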

4. Be Resilient to Publisher Retries and Failures

Expect the publisher to retry, and be prepared for potential failures on your end.

* Handle Timeouts Gracefully: Understand that publishers have timeouts. If your endpoint takes too long to respond, they will retry. This reinforces the need for asynchronous processing.
* Error Reporting: If your endpoint cannot process a webhook (e.g., invalid payload, unauthorized access), return appropriate HTTP 4xx or 5xx status codes to guide the publisher's retry logic:
    * 400 Bad Request: Malformed payload, invalid data.
    * 401 Unauthorized/403 Forbidden: Signature verification failed, IP not whitelisted.
    * 429 Too Many Requests: Rate limit exceeded.
    * 500 Internal Server Error: Your processing logic failed.
    * 503 Service Unavailable: Your service is temporarily down or overloaded.
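
Keeping the mapping from failure type to status code in one place makes the endpoint's behavior predictable. The exception classes below are hypothetical names for the failure cases listed above; anything unrecognized falls back to 500.

```python
from typing import Optional


class MalformedPayload(Exception): ...
class BadSignature(Exception): ...
class RateLimited(Exception): ...
class DependencyDown(Exception): ...


STATUS_BY_ERROR = {
    MalformedPayload: 400,  # retrying the same payload will not help
    BadSignature: 401,
    RateLimited: 429,       # publisher should slow down and retry later
    DependencyDown: 503,    # temporary; a retry is likely to succeed
}


def status_for(error: Optional[Exception]) -> int:
    """Map a processing outcome to the HTTP status returned to the publisher."""
    if error is None:
        return 202
    return STATUS_BY_ERROR.get(type(error), 500)
```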

5. Implement Comprehensive Monitoring and Logging

Visibility is key for debugging and operational health.

* Log Incoming Webhooks: Log all incoming webhook details (headers, masked payload, timestamp, signature status) before enqueuing for processing. This is crucial for debugging.
* Log Processing Outcomes: Log the success or failure of your asynchronous processing workers, including any errors.
* Metrics: Track metrics like the number of incoming webhooks, processing latency, success rates, and error rates using tools like Prometheus and Grafana.
* Alerting: Set up alerts for high error rates, processing delays, or queue backlogs.
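
A small sketch of logging an incoming webhook with a masked payload, using Python's standard `logging` module. The set of sensitive keys and the log line layout are assumptions; adapt both to your own data and log pipeline.

```python
import json
import logging

logger = logging.getLogger("webhooks")
SENSITIVE_KEYS = {"email", "token", "card_number"}  # assumed; adjust to your data


def mask(value):
    """Recursively replace values of sensitive keys with a placeholder."""
    if isinstance(value, dict):
        return {k: "***" if k in SENSITIVE_KEYS else mask(v) for k, v in value.items()}
    if isinstance(value, list):
        return [mask(v) for v in value]
    return value


def log_incoming(event: dict, signature_ok: bool) -> str:
    """Emit one structured log line per received webhook and return it."""
    line = json.dumps(
        {
            "event_id": event.get("event_id"),
            "event_type": event.get("event_type"),
            "signature_ok": signature_ok,
            "payload": mask(event.get("data", {})),
        },
        sort_keys=True,
    )
    logger.info(line)
    return line
```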

6. Graceful Degradation and Circuit Breaking

Protect your own systems from cascading failures.

* Internal Circuit Breakers: If your webhook processing logic relies on other internal or external services, implement circuit breakers to prevent retries from hammering an already failing downstream service.
* Batching (if applicable): For high-volume, non-time-critical events, consider batching updates to your database or downstream services to reduce load.
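
A circuit breaker can be sketched in a few lines: after a number of consecutive failures it "opens" and rejects calls until a cool-down has passed, then lets a trial call through. The thresholds and the class shape below are illustrative assumptions, not the API of any specific library.

```python
import time


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, reset_after: float = 30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # cool-down in seconds
        self.clock = clock              # injectable for testing
        self.failures = 0
        self.opened_at = None

    def allow(self) -> bool:
        """Return True if a call to the downstream service may proceed."""
        if self.opened_at is None:
            return True
        # After the cool-down, let a trial call through (half-open state).
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # open, or re-open after a failed trial
```

A worker would call `allow()` before contacting the downstream service and requeue the event for later when it returns False.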

By rigorously applying these best practices, webhook consumers can build robust, secure, and highly available systems that can reliably ingest and process event data, forming a crucial part of a resilient event-driven architecture. The adoption of an Open Platform approach further empowers consumers with the flexibility to integrate these best practices using a wide array of battle-tested open-source components.

The Broader Context: API Gateways and Open Platforms in Webhook Management

While webhooks operate on a peer-to-peer event notification model, they exist within a larger ecosystem of distributed systems. The principles of API management, driven by powerful API gateway solutions and the philosophy of an Open Platform, are highly relevant to creating a robust and maintainable webhook infrastructure.

An API Gateway acts as a single entry point for all client requests, routing them to the appropriate backend services. It provides a centralized location for handling cross-cutting concerns such as authentication, authorization, rate limiting, logging, and monitoring. While webhooks are typically sent from a service to another service's endpoint, an API Gateway can play several crucial roles:

1. Securing and Managing Webhook Publishing Services

If your services are emitting webhooks, an API gateway can secure the internal APIs that generate these events. It ensures that only authorized callers can trigger actions that lead to webhook generation. For instance, if an internal microservice has an API to update a user profile, and this update triggers a user.updated webhook, the API gateway can enforce access control and validate input for this internal API.

2. Protecting Webhook Consumer Endpoints

For services receiving webhooks, an API gateway can act as the first line of defense.

* SSL Termination: Handle HTTPS termination, offloading this compute-intensive task from your backend services.
* Rate Limiting: Protect your webhook endpoint from being overwhelmed by too many requests, even from legitimate publishers.
* IP Whitelisting/Blacklisting: Filter traffic based on source IP addresses, allowing only trusted publishers to reach your endpoint.
* DDoS Protection: Provide an additional layer of defense against distributed denial-of-service attacks targeting your public webhook URLs.
* Centralized Logging and Monitoring: Aggregate logs and metrics related to incoming webhook requests before they even hit your application logic, providing a holistic view of traffic.
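
Gateways usually provide rate limiting as configuration, but the underlying idea is often a token bucket. The sketch below shows that algorithm in plain Python so the behavior is concrete; the class and its parameters are illustrative and do not correspond to any particular gateway's settings.

```python
import time


class TokenBucket:
    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate          # tokens added per second
        self.capacity = capacity  # maximum burst size
        self.clock = clock        # injectable for testing
        self.tokens = capacity
        self.updated = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise signal a rejection."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # the caller would answer 429 Too Many Requests
```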

3. Unified API Management and Observability

In an event-driven architecture, webhooks often complement traditional RESTful APIs. An API gateway offers a unified view and management plane for both. For example, a client might call a REST API to create a resource, and then subscribe to a webhook to be notified when that resource changes. The API gateway can manage the authentication for the initial REST call and log the full lifecycle of the related events.

The concept of an Open Platform further enhances this. An Open Platform, by its very definition, promotes interoperability, extensibility, and transparency, often leveraging open-source components. For webhook management, this means:

- Flexibility and Customization: An Open Platform provides the building blocks and interfaces to integrate various open-source tools (queues, logging, monitoring) to create a bespoke webhook management system tailored to specific needs. This avoids vendor lock-in and allows for greater control over the infrastructure.

- Community-Driven Innovation: Open-source projects benefit from a global community of developers contributing fixes, features, and best practices. This ensures tools are continuously improved and kept up-to-date with emerging challenges.

- Transparency and Security Audits: The open nature of the codebase allows for thorough security audits and transparency in how data is handled, which is crucial for sensitive webhook events.

- Cost-Effectiveness: Leveraging open-source components significantly reduces licensing costs, allowing resources to be allocated to development and operational expertise.

APIPark: An Open Platform for Comprehensive API Management

This is where a product like APIPark comes into play. APIPark is an open-source AI gateway and API management platform that embodies the principles of an Open Platform. While its primary focus is on AI API integration and REST service management, its robust capabilities as an API gateway and developer portal are highly relevant to the broader context of a system that includes webhooks.

As an Open Platform, APIPark offers:

- End-to-End API Lifecycle Management: For the APIs that generate webhooks, APIPark can help manage their entire lifecycle – from design and publication to invocation and decommission. This ensures that the source of your webhook events is well-governed and reliable.

- Performance Rivaling Nginx: Its high-performance capabilities (over 20,000 TPS on modest hardware) mean it can efficiently front services that emit a high volume of events, ensuring they remain responsive. It can also securely protect your internal services that need to receive webhook events, acting as a crucial reverse proxy.

- Detailed API Call Logging and Data Analysis: APIPark provides comprehensive logging for every API call it handles. This capability is invaluable for debugging issues related to webhook events, especially when webhooks are triggered by upstream API calls. It records every detail, allowing businesses to trace and troubleshoot issues quickly. Its powerful data analysis features can show trends and performance changes, which can indirectly highlight issues in the event-driven chain.

- API Service Sharing and Team Collaboration: Within a larger enterprise, different teams might publish or consume webhooks. APIPark's ability to centrally display API services and manage access permissions for each tenant (team) facilitates collaboration and governance across an organization's entire API and event landscape.

- Unified API Format and Security Policies: Although primarily for AI models, the principle of standardizing API formats and applying consistent security policies (like subscription approval) aligns perfectly with the need for strong governance over any API or event source, including those that emit webhooks. For instance, APIs managed by APIPark that serve as internal event triggers can benefit from its robust authentication and authorization features, ensuring only legitimate actions lead to webhook generation.

In essence, while APIPark is an API gateway and not a dedicated webhook delivery platform, it provides the overarching infrastructure and management capabilities that enhance the reliability, security, and observability of the services that interact with webhooks. By managing the APIs that are part of the event-driven flow, APIPark contributes to a more stable and observable ecosystem where webhooks can thrive. Its open-source nature ensures that enterprises benefit from a flexible, community-driven solution, reinforcing the value proposition of an Open Platform in modern software development.

Future Trends in Webhook Management

The landscape of event-driven architectures is continuously evolving, and with it, the best practices and tools for webhook management. Several trends are shaping the future of how we handle these crucial event notifications:

1. Standardization and Evolution of Event Formats

While JSON remains the de facto standard for webhook payloads, there's a growing push for more formal standardization to improve interoperability and reduce integration friction.

* CloudEvents: This CNCF project defines a vendor-neutral specification for describing event data in a common way. Adopting CloudEvents could simplify integration across disparate systems and tools, as publishers and consumers would share a common understanding of event structure and metadata. It aims to make it easier for services and tools to interoperate, enabling more sophisticated event routing and processing.
* GraphQL Subscriptions: While not strictly webhooks, GraphQL subscriptions offer a real-time, push-based alternative for clients to receive updates, often over WebSockets. As GraphQL adoption grows, it might influence how some real-time data push mechanisms are implemented, especially for client-facing applications where a persistent connection is feasible.
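
For a sense of what a CloudEvents payload looks like, the sketch below builds a structured-mode JSON event. CloudEvents 1.0 requires the `specversion`, `id`, `source`, and `type` attributes; the remaining attributes shown are optional, and the event type, source, and data values are invented examples.

```python
import json
import uuid
from datetime import datetime, timezone


def to_cloudevent(event_type: str, source: str, data: dict) -> dict:
    """Build a CloudEvents 1.0 envelope as a plain dict."""
    return {
        "specversion": "1.0",                  # required
        "id": str(uuid.uuid4()),               # required, unique per source
        "source": source,                      # required, a URI reference
        "type": event_type,                    # required
        "time": datetime.now(timezone.utc).isoformat(),  # optional
        "datacontenttype": "application/json",           # optional
        "data": data,
    }


ce = to_cloudevent("com.example.invoice.created", "/billing", {"invoice_id": "inv_123"})
body = json.dumps(ce)
```

When sent in structured mode over HTTP, such a body is typically delivered with the `application/cloudevents+json` content type.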

2. Deeper Integration with Serverless Architectures

Serverless functions (like AWS Lambda, Google Cloud Functions, Azure Functions) are a natural fit for consuming webhooks due to their event-driven nature and auto-scaling capabilities.

* Event-Driven Function Triggers: Webhook events can directly trigger serverless functions, which scale on demand to handle fluctuating traffic without requiring server provisioning.
* Simplified Operations: The operational burden of managing servers and scaling is offloaded to the cloud provider, allowing developers to focus solely on the webhook processing logic.
* Cost-Effectiveness: Pay-per-execution models make serverless functions highly cost-effective for event processing, especially for bursty or infrequent webhook traffic.

The challenge here lies in ensuring reliable delivery before the function is invoked and handling cold starts for low-frequency events.

3. Advanced Retry and Observability Features

As webhook systems become more critical, the demand for sophisticated retry logic and granular observability will increase.

* Smarter Retries: Beyond exponential backoff, future systems might incorporate AI/ML to predict optimal retry intervals based on historical failure patterns or even dynamically adjust based on real-time consumer health indicators.
* End-to-End Event Tracing: Enhanced distributed tracing that provides a complete, visual journey of a single event from its inception, through various processing steps, and across multiple webhook deliveries, will become standard. This will greatly simplify debugging in complex microservices environments.
* Developer Portals with Webhook Management: As seen in commercial offerings, API gateway and developer portals will likely integrate more comprehensive webhook management features, allowing consumers to self-serve, view delivery logs, replay events, and manage secrets directly through a user-friendly interface. This aligns perfectly with the Open Platform philosophy of empowering developers.

4. Enhanced Security and Trust Models

The security landscape is constantly evolving, and webhooks are no exception.

* Decentralized Identity for Webhooks: Moving beyond shared secrets, future systems might leverage decentralized identity mechanisms or mTLS (mutual TLS) for stronger, more granular authentication and authorization between webhook publishers and consumers.
* Token-Based Authentication: Implementing short-lived, rotating tokens for webhook requests, similar to OAuth, could provide a more robust security posture than long-lived shared secrets.
* Fine-Grained Authorization: The ability to define and enforce highly granular permissions for what events a particular webhook endpoint is authorized to receive.

5. Event Mesh Architectures

For highly complex, enterprise-scale environments, the concept of an "event mesh" is gaining traction. This involves a dynamic infrastructure layer for routing events across various applications and cloud environments.

* Broker-Agnostic Event Routing: An event mesh can abstract away the underlying message brokers (Kafka, RabbitMQ, etc.) and provide a unified way to publish, subscribe to, and route events, including those related to webhooks, across a heterogeneous landscape.
* Dynamic Event Discovery: Services can dynamically discover and subscribe to events, rather than requiring static, pre-configured webhook endpoints, enhancing agility.

These trends underscore a continuous drive towards greater automation, resilience, security, and developer-friendliness in event-driven systems. As organizations increasingly rely on real-time data flows, the tools and practices for managing webhooks will continue to evolve, offering even more sophisticated solutions for connecting the digital world. The open-source community, with its commitment to innovation and collaborative development, will undoubtedly play a pivotal role in shaping this future.

Conclusion: Mastering the Art of Webhook Management

Webhooks are indispensable in today's interconnected digital landscape, serving as the conduits for real-time information exchange between applications. They power the instantaneous notifications, automated workflows, and reactive services that define modern, agile software architectures. However, the apparent simplicity of a webhook belies the significant challenges inherent in their reliable, secure, and scalable management.

This guide has traversed the critical aspects of webhook management, from understanding their fundamental anatomy and identifying common pitfalls, to establishing core principles for building resilient systems. We've explored how a robust webhook infrastructure is not a monolithic entity but rather a meticulously assembled mosaic of open-source components—message queues, web servers, monitoring stacks, and application frameworks—each playing a vital role. The philosophy of an Open Platform empowers developers with the flexibility and control to architect these solutions precisely to their unique needs, fostering innovation and avoiding vendor lock-in.

Furthermore, we've contextualized webhooks within the broader API ecosystem, highlighting the crucial role of an API gateway in securing, managing, and observing the services that interact with event streams. Products like APIPark, an open-source AI gateway and API management platform, exemplify how a comprehensive API gateway solution, built on an Open Platform ethos, can provide the overarching governance, performance, and observability necessary for a harmonious event-driven architecture that includes webhooks. Its robust logging, performance, and lifecycle management capabilities, though aimed at APIs, directly contribute to the stability of the services generating or consuming webhook events.

Ultimately, mastering webhook management is an ongoing journey that demands a continuous commitment to best practices: ensuring reliable delivery through asynchronous processing and robust retries, fortifying security with signatures and HTTPS, building for scalability, maintaining comprehensive observability, and fostering a developer-friendly experience. By embracing these principles and strategically leveraging the power of open-source tools and an Open Platform approach, organizations can harness the full potential of webhooks, transforming them from potential points of failure into powerful engines of real-time innovation. The future of software development is event-driven, and effective webhook management is the key to unlocking its boundless possibilities.

5 Frequently Asked Questions (FAQs)

Q1: What is the main difference between an API and a Webhook?

A1: An API (Application Programming Interface) typically uses a "pull" model, where a client makes a request to a server for data or to perform an action, and the server responds. The client initiates the communication. A webhook, on the other hand, uses a "push" model. It's a user-defined HTTP callback that a server sends to a client when a specific event occurs, meaning the server initiates the communication when something happens. While webhooks are a type of API interaction (specifically, an event-driven one), the key distinction lies in the direction and initiation of the communication: client-initiated request and response (often repeated as polling) for APIs versus server-initiated event notification for webhooks.

Q2: Why is signature verification so important for webhooks?

A2: Signature verification is paramount for webhook security because it addresses two critical concerns: authenticity and data integrity. By verifying the signature, the consumer can be sure that the webhook truly originated from the expected publisher and not from an impostor (preventing spoofing). Additionally, it confirms that the webhook payload has not been tampered with during transit. Without signature verification, malicious actors could send fake webhooks or alter legitimate ones, leading to unauthorized actions or data corruption within the consuming system.

Q3: How do I ensure reliable delivery of webhooks if the consumer's endpoint is down?

A3: Reliable delivery of webhooks, especially when the consumer's endpoint is unavailable, is achieved through a combination of best practices:

1. Asynchronous Processing: Publishers should queue webhook events in a persistent message queue (e.g., Apache Kafka, RabbitMQ) immediately after an event occurs, rather than attempting direct synchronous delivery.
2. Retry Mechanisms: Implement an intelligent retry strategy, typically using exponential backoff with jitter, for failed deliveries. This gives the consumer's endpoint time to recover without overwhelming it.
3. Dead-Letter Queues (DLQs): For events that consistently fail after multiple retries, move them to a DLQ for manual inspection or re-processing, preventing them from being lost or endlessly retrying.
4. Circuit Breakers: Employ circuit breakers to temporarily stop sending webhooks to consistently failing endpoints, protecting both the publisher and the overloaded consumer.
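
The retry and dead-letter steps above can be combined into a single delivery loop. In this sketch `send` is a caller-supplied function that performs the HTTP request and returns the status code, and `dead_letters` is any list-like store; both are hypothetical, as are the default attempt count and delays. A production publisher would schedule retries through its queue rather than sleeping in-process.

```python
import random
import time


def deliver(event, send, dead_letters, max_attempts=5, base_delay=1.0,
            sleep=time.sleep) -> bool:
    """Try to deliver an event; dead-letter it after max_attempts failures."""
    for attempt in range(1, max_attempts + 1):
        try:
            if 200 <= send(event) < 300:
                return True  # consumer acknowledged the event
        except OSError:
            pass  # connection errors count as a failed attempt
        if attempt < max_attempts:
            # Exponential backoff with full jitter before the next attempt.
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
    dead_letters.append(event)  # keep the event for inspection or replay
    return False
```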

Q4: Can an API Gateway help with webhook management?

A4: Yes, an API Gateway plays a significant role in the broader context of webhook management, particularly in enhancing security, scalability, and observability. For services receiving webhooks, an API Gateway can act as a secure front door, providing SSL termination, rate limiting, IP whitelisting, and DDoS protection. For services emitting webhooks, it can secure the underlying APIs that trigger event generation. Furthermore, an API Gateway, especially one functioning as an Open Platform like APIPark, can offer centralized logging, monitoring, and overall API lifecycle management across all services, including those that interact with webhooks, contributing to a more robust and observable event-driven architecture.

Q5: What is idempotency in the context of webhooks and why is it important?

A5: Idempotency refers to the property of an operation that produces the same result regardless of how many times it is executed with the same input. In webhook management, it means a consumer's system should be able to safely process the same webhook event multiple times without causing duplicate side effects (e.g., creating the same user twice, deducting money multiple times). This is crucial because webhook publishers often implement retry mechanisms, meaning a consumer might receive the same webhook multiple times due to network issues or acknowledgement failures. Idempotency is typically achieved by including a unique event_id in the webhook payload, allowing the consumer to check if an event has already been processed before executing its business logic.
