Cluster-Graph Hybrid: The Future of Scalable Computing

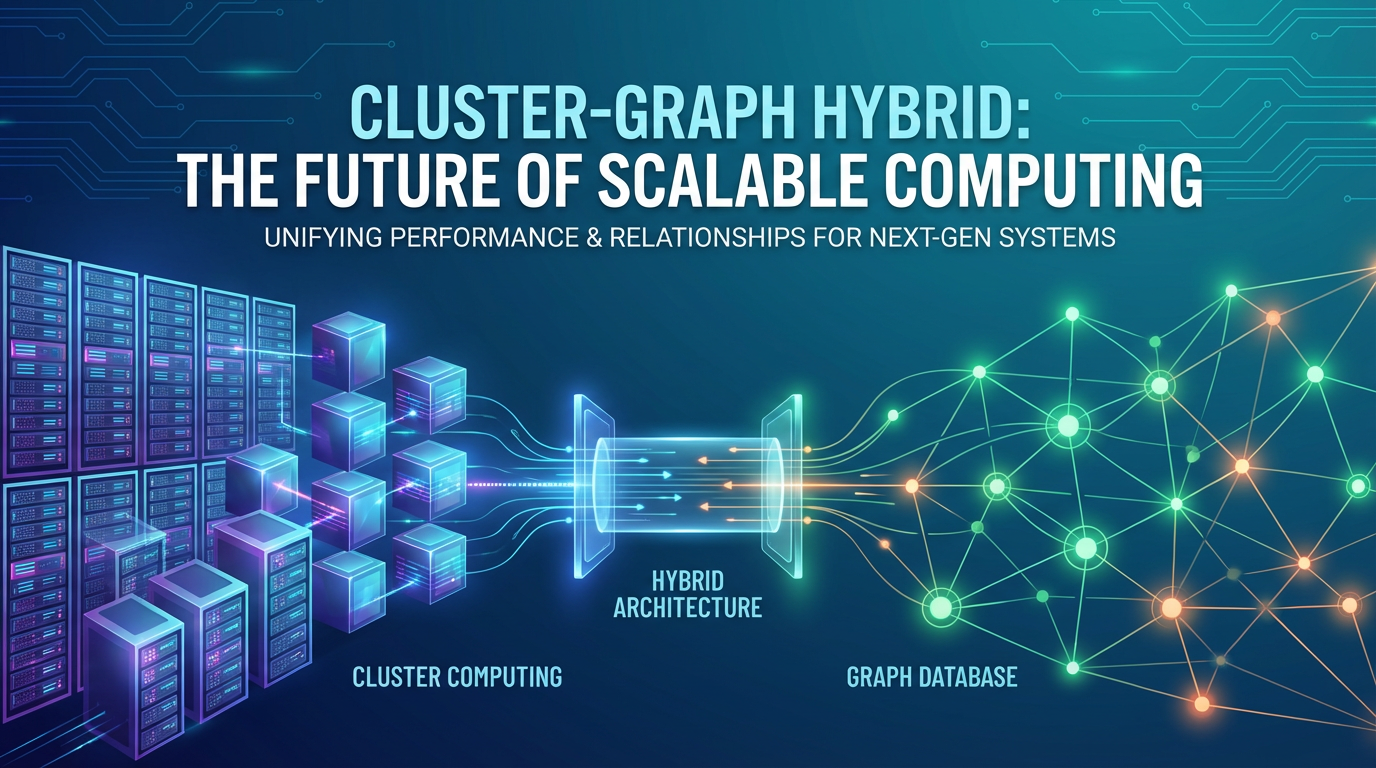

The relentless march of digital transformation has ushered in an era where data is not merely abundant, but intricately interconnected. From social networks mapping human relationships to vast enterprise systems charting supply chain dependencies, and from complex biological pathways to the intricate web of global financial transactions, the sheer volume and inherent interconnectedness of information demand a new paradigm in computing. For decades, the industry has relied on robust cluster computing to handle massive data volumes and intense computational workloads, distributing tasks across networks of machines. Concurrently, graph computing has emerged as an indispensable tool for uncovering insights from relationship-rich datasets, allowing us to model and analyze complex networks with unparalleled clarity. However, as organizations grapple with petabytes of data where every data point might be tied to hundreds of others, the limitations of these isolated approaches become increasingly apparent. The future of scalable computing, poised to meet the demands of truly intelligent and context-aware applications, lies not in one or the other, but in their seamless integration: the Cluster-Graph Hybrid.

This article delves into the profound synergy between cluster and graph computing, exploring how their combined strengths forge a resilient, highly scalable, and exceptionally intelligent foundation for the next generation of applications. We will dissect the individual strengths and weaknesses of each paradigm, illuminate the critical drivers for their convergence, and chart the architectural pathways that enable this powerful fusion. Furthermore, we will examine the crucial role of advanced API management, including the indispensable functions of an api gateway, an AI Gateway, and a specialized LLM Gateway, in orchestrating these complex hybrid systems. By understanding the intricate interplay of these technologies, we can unlock unprecedented capabilities in areas ranging from real-time personalized recommendations and sophisticated fraud detection to the next wave of AI-driven knowledge discovery, ultimately redefining the very landscape of scalable computing.

Part 1: The Foundations – Understanding Cluster Computing

At its core, cluster computing is an architectural approach that involves linking multiple computers (nodes) together to work as a single, cohesive system. The primary motivation behind this distributed design is to pool resources – processing power, memory, and storage – to tackle problems that a single machine would find insurmountable or inefficient. Its evolution can be traced from early parallel processing systems to the modern, cloud-native infrastructures that underpin most of today's digital economy. The paradigm fundamentally addresses the challenge of "big data" by enabling horizontal scalability, allowing systems to grow by simply adding more commodity hardware rather than upgrading expensive, high-end single machines.

The fundamental principles governing cluster computing revolve around parallelism, distribution, and fault tolerance. Parallelism dictates that computational tasks are broken down into smaller sub-tasks that can be executed concurrently across multiple nodes. This dramatically reduces processing times for large datasets or complex calculations. Distribution, on the other hand, refers to the physical spread of data and processing logic across the cluster, ensuring that no single point becomes a bottleneck. Data shards can reside on different machines, and processing engines can fetch and process data locally. Crucially, fault tolerance is a cornerstone of robust cluster design. In a system comprising hundreds or thousands of nodes, hardware failures are inevitable. Cluster computing architectures are engineered to detect node failures, redistribute affected workloads, and recover lost data or computations seamlessly, often without human intervention, thereby maintaining continuous service availability. This resilience is typically achieved through techniques like data replication (e.g., Hadoop's HDFS) and checkpointing of intermediate computations.

Key technologies have driven the widespread adoption and sophistication of cluster computing. Hadoop, with its Distributed File System (HDFS) for data storage and MapReduce for batch processing, revolutionized big data analytics in the early 21st century, making it feasible to process petabytes of information using commodity hardware. Apache Spark emerged as a powerful successor and complement, offering in-memory processing capabilities that dramatically accelerated iterative algorithms and introduced support for streaming data, machine learning, and graph processing. More recently, Kubernetes has become the de facto standard for orchestrating containerized applications in clusters. It automates the deployment, scaling, and management of applications, providing a robust platform for microservices architectures that are inherently distributed. These technologies have collectively enabled enterprises to build highly scalable data lakes, run complex analytics, and deploy elastic web services capable of handling millions of concurrent users.

The strengths of cluster computing are manifold and have shaped the modern digital landscape. Its ability to provide immense raw processing power and store vast quantities of diverse data (structured, semi-structured, unstructured) is unparalleled. This makes it ideal for batch processing, large-scale ETL (Extract, Transform, Load) operations, and general-purpose distributed computations. The horizontal scalability inherent in these systems means that as data volumes or computational demands grow, organizations can simply add more nodes to the cluster, linearly increasing capacity and performance without requiring complex redesigns. Furthermore, the fault tolerance mechanisms ensure high availability and data durability, which are critical for business continuity and regulatory compliance.

However, cluster computing, in its traditional form, exhibits certain limitations, particularly when confronted with highly interconnected datasets. While excellent at processing large volumes of discrete data points, it often struggles when the focus shifts to the relationships between those points. Data locality, a boon for batch processing, can become a challenge for iterative graph algorithms that require frequent access to interconnected data spread across many nodes. The overhead of shuffling vast amounts of data across the network to reconstruct graph structures or perform complex join operations for relationship analysis can severely impact performance. Moreover, the conceptual model of many cluster computing frameworks is inherently tabular or document-oriented, making it awkward to naturally represent and efficiently query complex, multi-faceted relationships found in real-world networks. This often necessitates complex data transformations or multiple join operations, leading to less intuitive queries and reduced analytical efficiency when the core problem is intrinsically relational.

Part 2: The Foundations – Understanding Graph Computing

In stark contrast to the often tabular or document-oriented view of traditional cluster computing, graph computing offers a fundamentally different lens through which to perceive and process data: one centered on relationships. At its essence, a graph database models data as nodes (entities) and edges (relationships) connecting these nodes, with both nodes and edges capable of holding properties (attributes). This highly intuitive model mirrors the way humans naturally understand interconnected systems, making it exceptionally powerful for representing complex real-world relationships. Instead of inferring connections through join operations across multiple tables, relationships are explicit and directly traversable, leading to orders of magnitude improvement in performance for connection-oriented queries.

The applications of graph computing are vast and growing, spanning nearly every industry sector. In social networks, graphs naturally represent users as nodes and their friendships, follows, or interactions as edges, enabling features like friend recommendations and community detection. E-commerce platforms leverage graphs for recommendation engines, connecting users to products, products to categories, and products to other related products, leading to highly personalized shopping experiences. Fraud detection systems use graphs to uncover suspicious patterns by linking individuals, accounts, devices, and transactions, identifying rings of fraudsters that would be invisible to traditional relational queries. Knowledge graphs organize information in a semantic network, linking concepts, entities, and events to provide context-rich data for AI systems and intelligent search. Furthermore, graphs are invaluable in fields like biology (protein-protein interaction networks), cybersecurity (network intrusion detection), and logistics (supply chain optimization), where understanding connectivity is paramount.

To extract insights from these intricate networks, a variety of key graph algorithms have been developed. PageRank, famously used by Google, measures the importance of nodes within a graph. Shortest Path algorithms (like Dijkstra's or A*) find the most efficient route between two nodes, critical for navigation and network optimization. Community Detection algorithms (e.g., Louvain method, Girvan-Newman) identify groups of densely connected nodes, useful for understanding social structures or customer segments. Centrality algorithms identify the most influential nodes in a network, while graph embeddings transform nodes and edges into low-dimensional vector representations, enabling machine learning models to operate on graph data. These algorithms allow analysts and machines to uncover hidden patterns, predict behaviors, and make informed decisions based on the structural properties of the data.

The technological landscape for graph computing is diverse and rapidly evolving. Dedicated graph databases like Neo4j (a native graph database that stores data in its graph structure), ArangoDB (a multi-model database supporting graphs), and Amazon Neptune (a fully managed graph database service) provide highly optimized storage and query engines for graph data. Graph processing frameworks, such as Apache Spark's GraphX library, enable large-scale graph analytics by leveraging existing cluster infrastructure. Furthermore, the advent of Graph Neural Networks (GNNs) has revolutionized machine learning on graphs, allowing deep learning models to directly learn from and generate predictions based on graph structures, opening new frontiers for AI applications.

The strengths of graph computing are precisely where traditional cluster computing often falters. Its primary advantage lies in its ability to model and query complex, multi-hop relationships with exceptional efficiency. By making relationships first-class citizens, graph databases excel at discovering hidden patterns, inferring connections, and performing contextual analysis across diverse data points. This leads to more intuitive data models for connected data, simpler queries (often expressed in declarative graph query languages like Cypher or Gremlin), and significantly faster query execution times for highly connected data compared to complex SQL joins. For scenarios where the relationships themselves are as important as the entities, graph computing provides unparalleled analytical depth.

Despite its powerful capabilities, graph computing also presents its own set of limitations, particularly concerning scalability for extremely dense graphs or when integrating with vast, disparate datasets that are not inherently graph-structured. While native graph databases perform exceptionally well for connected traversals, scaling them horizontally for truly massive graphs (billions of nodes and trillions of edges) can introduce complexity in data partitioning and distributed query processing. Memory footprint can also be a concern for very large, in-memory graph processing engines. Furthermore, integrating graph data with the broader enterprise data landscape, which often resides in relational databases, data lakes, or document stores, requires careful ETL processes to transform and load data into a graph format. While frameworks like Spark GraphX address some of these scalability challenges by running on clusters, they still face overheads in data serialization and network communication for highly iterative graph algorithms.

Part 3: The Convergence – Why a Cluster-Graph Hybrid?

The preceding sections have illuminated the distinct powerhouses of cluster computing and graph computing. Cluster computing excels at processing and storing vast quantities of data, offering unparalleled horizontal scalability and fault tolerance for bulk operations and general-purpose distributed workloads. Graph computing, on the other hand, is uniquely adept at modeling, querying, and analyzing the intricate relationships embedded within data, uncovering insights that are often opaque to traditional methods. The critical observation here is not that one paradigm is superior to the other, but that they are complementary. The "gap" that a Cluster-Graph Hybrid architecture addresses is precisely where the sheer volume of data meets an equally immense complexity of relationships. When an organization needs to perform real-time, context-rich analysis on petabytes of interconnected information, neither a standalone cluster nor a standalone graph system can optimally deliver the required performance, scalability, and analytical depth.

The synergistic benefits of combining cluster and graph computing are profound, creating an architecture far more powerful than the sum of its parts. Firstly, the cluster provides the robust, scalable substrate that graph engines desperately need for massive data volumes. This means the underlying storage (e.g., HDFS, S3-compatible object storage) and compute resources (e.g., Kubernetes clusters, Spark clusters) of a distributed system can host and power graph databases and graph processing frameworks. This addresses the traditional scalability challenges of graph systems, allowing them to operate on datasets previously considered too large or too complex. Secondly, the graph component injects intelligence and context into the raw, often flat, data stored within the cluster. Instead of merely processing data, the graph structure enriches it, revealing latent connections, hierarchies, and emergent properties. This contextualization transforms raw data into actionable knowledge, enabling more sophisticated analytics and more informed decision-making.

Architecturally, the Cluster-Graph Hybrid can manifest in several powerful patterns. One common approach involves deploying graph databases on distributed file systems or object storage. For instance, a native graph database might store its underlying data structures (nodes, edges, properties) on HDFS or S3, leveraging the cluster's distributed storage capabilities for scalability and durability. Another prevalent pattern is the use of graph processing engines that run natively on cluster compute frameworks. Apache Spark's GraphX is a prime example, allowing users to perform large-scale graph analytics by representing graphs as Resilient Distributed Datasets (RDDs) and leveraging Spark's in-memory processing power and distributed execution model. This enables the integration of graph processing directly into existing big data pipelines. A third, increasingly popular pattern involves a microservices architecture where specialized graph services (e.g., for specific graph queries or algorithms) are containerized and deployed within a Kubernetes cluster. These services can interact with a centralized graph database or even embed smaller, in-memory graph structures, communicating with other microservices and data sources across the distributed environment.

The real-world implications of this hybrid approach are transformative, enabling use cases that were previously intractable. Consider personalized recommendation systems operating at the scale of millions or billions of users and products. A Cluster-Graph Hybrid can store vast user interaction data, product catalogs, and implicit preferences in a distributed data lake (cluster component). Simultaneously, a graph layer can model user-product relationships, product-product similarities, and social connections. When a user interacts with a product, the cluster processes the event, and the graph quickly traverses relationships to provide real-time, context-aware recommendations that go beyond simple collaborative filtering. Another compelling example is real-time fraud detection. Transactional data, device fingerprints, and user behavior logs are ingested and stored in a cluster. A graph layer links accounts, individuals, devices, and transactions, allowing for rapid traversal to identify suspicious patterns (e.g., a new account suddenly linked to a known fraudulent entity through an unusual device). This hybrid allows for both historical analysis on the cluster and immediate, relationship-based pattern matching on the graph. Furthermore, intelligent supply chain optimization can leverage a graph to map dependencies between suppliers, factories, distribution centers, and customers, while the cluster handles inventory data, shipping logistics, and demand forecasts, enabling dynamic re-routing and proactive problem resolution in the face of disruptions. Finally, for complex network analysis, whether in telecommunications, cybersecurity, or biological research, the hybrid model facilitates comprehensive analysis of both network topology and the immense data attributes associated with each node and edge, leading to deeper insights into system behavior and vulnerabilities.

APIPark is a high-performance AI gateway that allows you to securely access the most comprehensive LLM APIs globally on the APIPark platform, including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more.Try APIPark now! 👇👇👇

Part 4: Architectural Considerations and Implementation Challenges

Building a robust Cluster-Graph Hybrid system is not without its complexities, demanding careful architectural planning and a deep understanding of distributed systems principles. The seamless integration of these two powerful paradigms requires addressing several critical considerations, from data flow and resource management to security and API exposure. Overcoming these challenges is paramount to harnessing the full potential of the hybrid model.

One of the foremost challenges lies in data integration. Real-world data is diverse, existing in structured databases, unstructured text files, semi-structured JSON documents, and streaming event logs. Transforming and loading this disparate data into a coherent graph structure, while maintaining consistency with its representation in the broader cluster, is a complex ETL (Extract, Transform, Load) task. This often involves parsing large datasets, identifying entities and relationships, deduping information, and then loading it into a graph database or a graph processing framework. Ensuring that the graph data remains synchronized with changes in the underlying source systems, potentially in real-time for streaming data, adds another layer of complexity. Techniques like change data capture (CDC) and event-driven architectures are crucial for keeping the graph layer fresh and consistent.

Next, orchestration and resource management become critical. A hybrid system often deals with heterogeneous workloads: long-running batch jobs on the cluster, real-time streaming analytics, computationally intensive graph traversals, and potentially machine learning model training and inference. Managing these diverse demands on a unified cluster infrastructure requires sophisticated tools. Kubernetes has emerged as a dominant solution, providing a powerful platform for containerizing both traditional data processing components and specialized graph services. It automates the deployment, scaling, and management of these workloads, ensuring efficient resource allocation and high availability. However, configuring Kubernetes for optimal performance across such a varied workload profile – ensuring enough compute for graph algorithms while also reserving resources for real-time data ingestion – requires expert tuning and monitoring.

Performance optimization is another key area. For graph queries on massive datasets, efficient data partitioning strategies are essential. Distributing graph data across multiple nodes in a way that minimizes cross-node communication for common traversals (e.g., keeping densely connected subgraphs together) can dramatically improve query latency. Query optimization, both at the graph database level and for distributed queries involving multiple data sources, is also vital. Furthermore, intelligent indexing strategies for both nodes and edges, along with techniques like graph caching and materialized graph views, can significantly accelerate query performance in highly active hybrid systems. The interplay between the storage layer (e.g., HDFS, S3) and the graph processing engine's memory utilization must be carefully managed to prevent I/O bottlenecks.

Finally, security and access control in a distributed, hybrid environment are inherently more complex than in monolithic systems. Protecting sensitive graph data, which often reveals intricate relationships between entities, requires granular access controls. This means not just securing access to the cluster's resources but also implementing fine-grained permissions at the graph database level, defining who can view specific nodes, edges, or properties. Authentication and authorization mechanisms must be robust and seamlessly integrated across different components of the hybrid system. Data encryption at rest and in transit is non-negotiable, especially when dealing with personal or proprietary information. Auditing capabilities are also essential to track data access and modifications across the distributed landscape.

The Indispensable Role of API Management: API Gateway, AI Gateway, and LLM Gateway

In such a complex, distributed Cluster-Graph Hybrid architecture, effective API management is not merely a convenience but a fundamental necessity for seamless operation, security, and scalability. This is where the specialized functions of an api gateway, an AI Gateway, and an LLM Gateway become absolutely indispensable.

An api gateway serves as the single entry point for all client requests, abstracting away the underlying complexity of the distributed microservices and graph services within the hybrid system. It acts as a traffic cop, routing requests to the appropriate backend service, whether it's a traditional data store, a graph query engine, or an analytics service. Beyond simple routing, a robust api gateway provides critical functionalities: * Authentication and Authorization: Securing access to services, validating API keys, tokens, and enforcing user permissions. * Rate Limiting and Throttling: Protecting backend services from overload by controlling the number of requests clients can make. * Load Balancing: Distributing incoming traffic across multiple instances of a service to ensure high availability and optimal performance. * Caching: Storing responses to frequently requested data, reducing latency and backend load. * Logging and Monitoring: Providing a centralized point for collecting metrics and logs, crucial for observability and troubleshooting in a distributed environment. * API Versioning: Managing different versions of APIs, allowing for smooth transitions and backward compatibility.

When the Cluster-Graph Hybrid system begins to incorporate sophisticated artificial intelligence models, particularly those leveraging the contextual insights from graph data, an AI Gateway becomes a vital layer. Traditional api gateways are excellent for general-purpose REST APIs, but AI services often have unique requirements. An AI Gateway is specifically designed to manage access to a multitude of AI models (e.g., machine learning prediction endpoints, embedding models, natural language processing services) deployed across the cluster. Its functions include: * Unified Model Invocation: Standardizing the request and response formats across different AI models, abstracting away their underlying frameworks or deployment specifics. * Model Versioning and Routing: Directing requests to specific versions of AI models, enabling A/B testing or gradual rollouts. * Cost Management and Tracking: Monitoring usage and associated costs for different AI services, especially crucial when consuming third-party AI APIs. * Input/Output Transformation: Handling data transformations specific to AI models, such as encoding inputs or decoding outputs. * Security for AI Endpoints: Applying fine-grained access policies to different AI models, ensuring only authorized applications or users can invoke specific intelligent services.

In a world increasingly driven by conversational AI and advanced natural language processing, where Large Language Models (LLMs) play a pivotal role, a specialized LLM Gateway is becoming an essential component within a sophisticated AI Gateway strategy. Imagine a hybrid system where graph data provides factual grounding for LLMs, or where LLMs help construct knowledge graphs from unstructured text. An LLM Gateway specifically addresses the unique challenges of interacting with these powerful models: * Prompt Management and Optimization: Centralizing prompt templates, enabling dynamic prompt engineering, and optimizing prompts for different LLM providers or internally deployed models. * Model Abstraction: Providing a unified interface for interacting with various LLMs (e.g., OpenAI, Anthropic, open-source models), abstracting away their specific API differences. * Token Usage Tracking and Cost Control: Meticulously monitoring token usage to manage costs, which can be a significant factor with LLMs. * Content Moderation and Safety: Implementing filters and safeguards for inputs and outputs to ensure responsible AI usage. * Latency Optimization and Fallbacks: Managing parallel calls to multiple LLMs for performance or failover, and providing intelligent caching for common LLM queries. * Integration with Contextual Data: Seamlessly feeding contextual information from the graph component into LLM prompts to enhance relevance and accuracy.

For organizations building such advanced Cluster-Graph Hybrid architectures, an open-source solution like APIPark offers a compelling foundation. As an open-source AI Gateway and API Management Platform, APIPark is perfectly positioned to manage the diverse range of APIs in this environment. It can unify the management of 100+ AI models, offering a standardized API format for AI invocation, which simplifies maintenance when interacting with different LLM or other AI services that might be deployed across the cluster or leveraged externally. APIPark's ability to encapsulate prompts into REST APIs allows developers to quickly create specialized graph-enhanced AI services. Furthermore, its end-to-end API lifecycle management, performance rivaling Nginx, and detailed logging capabilities ensure that all API calls within the complex Cluster-Graph Hybrid are managed efficiently, securely, and with full observability, allowing teams to focus on building innovative applications rather than wrestling with API infrastructure. It provides independent API and access permissions for each tenant, which is crucial for multi-team environments leveraging a shared hybrid infrastructure. The platform can manage traffic forwarding, load balancing, and versioning of published APIs, all critical for the dynamic nature of hybrid graph and AI services.

| Feature / Component | Cluster Computing (Traditional) | Graph Computing (Native DB) | API Gateway | AI Gateway | LLM Gateway |

|---|---|---|---|---|---|

| Primary Focus | Scalable data processing, storage | Relationship modeling, traversal | API exposure, security, routing | AI model access, standardization | LLM access, prompt management |

| Core Strength | Volume, Velocity, Variety | Connectedness, Context | Centralized access, control | Unified AI interaction | Optimized LLM interaction |

| Key Use Cases | Batch analytics, Big Data Lakes | Fraud detection, Recommenders | Microservices, external APIs | AI-driven applications | Generative AI, chatbots |

| Scalability | Horizontal (data, compute) | Horizontal (nodes, edges) | High (traffic, requests) | High (models, users) | High (prompts, tokens) |

| Complexity Handled | Data Volume | Data Relationships | Distributed systems | Diverse AI models | Specific LLM intricacies |

| Integration w/ Hybrid | Forms the foundational substrate | Provides relationship intelligence | Manages all API traffic | Specializes in AI services | Focuses on LLM interactions |

| Example Technology | Hadoop, Spark, Kubernetes | Neo4j, ArangoDB | Nginx, Kong, APIPark | APIPark | APIPark |

This table underscores how each component plays a distinct yet interconnected role in forming a cohesive and powerful Cluster-Graph Hybrid architecture, with API Gateways acting as critical enablers for accessing its intelligent services.

Part 5: The Future Landscape – AI, LLMs, and Beyond

The convergence of cluster and graph computing sets the stage for a transformative future, particularly as artificial intelligence and Large Language Models (LLMs) continue their exponential evolution. The Cluster-Graph Hybrid is not merely an incremental improvement; it is a foundational architecture that unlocks unprecedented capabilities for truly intelligent, context-aware, and highly scalable applications.

One of the most exciting frontiers is Graph-Enhanced AI. While traditional machine learning models often struggle with relational data, treating each data point in isolation or requiring complex feature engineering, graph structures provide a rich, explicit context that dramatically enhances AI performance. Graph Neural Networks (GNNs), operating on large-scale graphs distributed across clusters, are at the forefront of this revolution. GNNs can learn representations (embeddings) of nodes and edges that capture their structural and semantic context, leading to more accurate predictions in areas like drug discovery (predicting molecular properties), fraud detection (identifying complex criminal networks), and recommendation systems (understanding nuanced user preferences). The hybrid architecture allows for the massive computational power of the cluster to train and deploy these complex GNNs on enormous, real-world graphs, fostering more explainable and robust AI systems that understand the "why" behind their predictions, not just the "what."

Furthermore, the synergy between LLMs and Knowledge Graphs is rapidly reshaping the landscape of information retrieval and reasoning. LLMs, while powerful in generating human-like text, often suffer from "hallucinations" and a lack of grounding in factual, verifiable information. Knowledge graphs, by explicitly representing entities and their relationships in a structured format, offer a powerful antidote. The hybrid model allows LLMs to query and construct knowledge graphs residing on the cluster. LLMs can be used to extract entities and relationships from unstructured text (e.g., documents, web pages) and populate a knowledge graph. Conversely, knowledge graphs can provide factual grounding for LLM responses, ensuring accuracy and reducing hallucination. Imagine an LLM answering a complex financial query, where its response is directly validated against a real-time knowledge graph of market data and company relationships, all managed within the scalable hybrid system. An LLM Gateway becomes crucial here, managing the interaction between LLMs and the rich, graph-based context, ensuring that prompts are correctly formatted and responses are accurately interpreted and grounded.

The demand for real-time hybrid systems will only intensify. As businesses strive for instantaneous insights and proactive decision-making, the ability to perform low-latency graph queries integrated with high-throughput streaming data processing on clusters becomes critical. This means processing incoming events (e.g., a new transaction, a sensor reading, a social media post) in real-time, updating the graph structure and properties, and immediately triggering graph-based algorithms to detect anomalies, make recommendations, or generate alerts. For example, in a smart city context, sensor data streams into a cluster, which updates a real-time graph of traffic flow and infrastructure status. Graph algorithms instantly identify bottlenecks or potential failures, allowing for immediate intervention. This requires sophisticated event-driven architectures and highly optimized graph processing engines capable of millisecond response times.

Looking even further ahead, the concept of Edge Computing and Hybrid Graphs extends the reach of this paradigm. As more data is generated at the edge (IoT devices, autonomous vehicles, smart factories), there's a growing need to perform local processing and analysis without constant reliance on a central cloud. Edge devices or local micro-clusters can maintain smaller, context-specific graphs (e.g., a factory's machine dependency graph, a vehicle's local environment map) for real-time decision-making. These edge graphs can then periodically synchronize or communicate with a larger, global Cluster-Graph Hybrid in the cloud, leveraging its immense processing power and comprehensive data for broader insights, long-term trend analysis, and model training. This creates a federated graph intelligence network, blurring the lines between local and global processing.

However, as these systems grow in complexity and influence, ethical considerations and data governance become paramount. Graph data, by its very nature, can reveal highly sensitive relationships, potentially exposing privacy concerns or enabling discriminatory practices if misused. Organizations must implement robust data governance frameworks to ensure responsible data collection, storage, and processing within the hybrid system. This includes adhering to data residency laws, implementing anonymization techniques for sensitive graph data, and transparently documenting the algorithms and AI models that operate on these graphs. The inherent power of a Cluster-Graph Hybrid, while immensely beneficial, also carries a profound responsibility to ensure its development and deployment serve humanity ethically and equitably.

Conclusion

The digital universe is expanding at an unprecedented rate, characterized not just by its sheer volume, but by an intricate web of relationships that define its very essence. For too long, computing paradigms have been forced into a binary choice: brute-force processing for massive datasets (cluster computing) or intricate relationship analysis for connected data (graph computing). While individually powerful, neither can fully address the demands of modern applications that require both immense scale and profound intelligence.

This article has charted the evolution of these two distinct domains, revealing their individual strengths in handling data volume and data relationships, respectively. We have demonstrated how their intelligent amalgamation into a Cluster-Graph Hybrid architecture represents a pivotal advancement in scalable computing. This fusion provides the robust, horizontally scalable substrate of cluster computing to host and power graph engines, while simultaneously infusing the raw data with the contextual intelligence derived from explicit relationships. From personalized recommendations that truly understand user nuances to real-time fraud detection systems that uncover hidden criminal networks, the hybrid model empowers solutions previously deemed impossible.

We have also navigated the intricate architectural considerations and implementation challenges, from seamless data integration and sophisticated resource orchestration to stringent security and performance optimization. Crucially, we highlighted the indispensable role of advanced API management, where an api gateway, an AI Gateway, and specifically an LLM Gateway (such as the capabilities offered by APIPark) act as the crucial conduits, securing, standardizing, and streamlining access to the myriad services within this complex ecosystem.

Looking ahead, the Cluster-Graph Hybrid is not just an architectural pattern but a foundational platform for the next wave of innovation. It is where graph-enhanced AI will yield more explainable and powerful insights, where Large Language Models will be grounded in verifiable knowledge, and where real-time, context-aware decisions will redefine operational efficiency across industries. As we venture further into an era of pervasive AI and hyper-connected data, the intelligent synergy of cluster and graph computing offers a robust, scalable, and inherently intelligent pathway forward, promising to unlock unprecedented value and shape the future of how we compute, understand, and interact with the world around us.

Frequently Asked Questions (FAQ)

1. What exactly is a Cluster-Graph Hybrid architecture and why is it necessary? A Cluster-Graph Hybrid architecture combines the strengths of traditional cluster computing (for handling massive data volumes and parallel processing) with graph computing (for modeling and analyzing complex relationships). It's necessary because modern applications often deal with both "big data" and "connected data" simultaneously. While clusters excel at raw processing, they struggle with relationship-centric queries. Graph databases excel at relationships but can face scalability challenges with truly vast datasets. The hybrid approach allows organizations to leverage the scalable storage and compute power of a cluster to host and power large-scale graph databases and processing engines, enabling highly scalable, context-rich analysis that neither paradigm can achieve alone.

2. What are the main challenges when implementing a Cluster-Graph Hybrid system? Implementing such a system involves several challenges. These include complex data integration, as data from various sources (structured, unstructured, streaming) needs to be transformed into a coherent graph structure while maintaining consistency with the cluster's data lake. Orchestration and resource management are also difficult, as the system must handle diverse workloads (batch, real-time, graph algorithms, AI inference) efficiently on a unified cluster, often using tools like Kubernetes. Furthermore, performance optimization (data partitioning, query optimization) and robust security with granular access controls across distributed graph data and cluster resources are critical and complex areas.

3. How do an API Gateway, AI Gateway, and LLM Gateway fit into this hybrid architecture? These gateways are critical for managing access and interaction with the complex services within a Cluster-Graph Hybrid. An API Gateway acts as a central entry point for all requests, handling routing, authentication, rate limiting, and load balancing for all microservices and graph-query endpoints. An AI Gateway specializes in managing access to various AI models (e.g., prediction services, embedding models) deployed across the cluster, standardizing invocations and managing costs. Specifically, an LLM Gateway further refines this by providing tailored features for Large Language Models, such as prompt management, token usage tracking, content moderation, and integrating LLMs with the rich, contextual graph data to reduce hallucinations and improve relevance. They abstract away complexity, enhance security, and ensure consistent access to the intelligent capabilities of the hybrid system.

4. Can you provide a practical example of a Cluster-Graph Hybrid in action? Consider a large e-commerce platform. The cluster computing component (e.g., a Spark cluster on Hadoop/S3) would store petabytes of transactional data, user clickstreams, product catalogs, and inventory levels. A graph computing layer, possibly running on that same cluster, would model user-product interactions, product-to-product similarities, user-to-user social connections, and supplier relationships. When a user views a product, the hybrid system can combine the raw data (from the cluster) with relationship analysis (from the graph) to provide highly personalized, real-time recommendations (e.g., "users who bought this also bought X," or "your friend Y likes this brand"), identify potential fraud patterns based on connected accounts, or even dynamically adjust inventory based on supplier network health. An API Gateway would manage access for front-end applications, while an AI Gateway (and potentially an LLM Gateway for natural language product searches) would manage calls to AI models that predict user behavior or generate product descriptions based on graph-enhanced insights.

5. What is the future outlook for Cluster-Graph Hybrid architectures, especially with AI and LLMs? The future outlook is highly promising. The Cluster-Graph Hybrid is becoming a foundational architecture for next-generation intelligent applications. It will enable more powerful and explainable AI through Graph-Enhanced AI, where Graph Neural Networks (GNNs) leverage the contextual information from graphs to make more accurate predictions. It will also be critical for integrating LLMs with Knowledge Graphs, allowing LLMs to extract information for graph construction and providing factual grounding for LLM responses, mitigating hallucinations. Furthermore, we will see a surge in real-time hybrid systems for instantaneous insights and proactive decision-making, and the extension of this paradigm to edge computing, with local graphs at the edge interacting with central hybrid clusters for federated intelligence. The combination promises truly scalable, intelligent, and context-aware computing.

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.