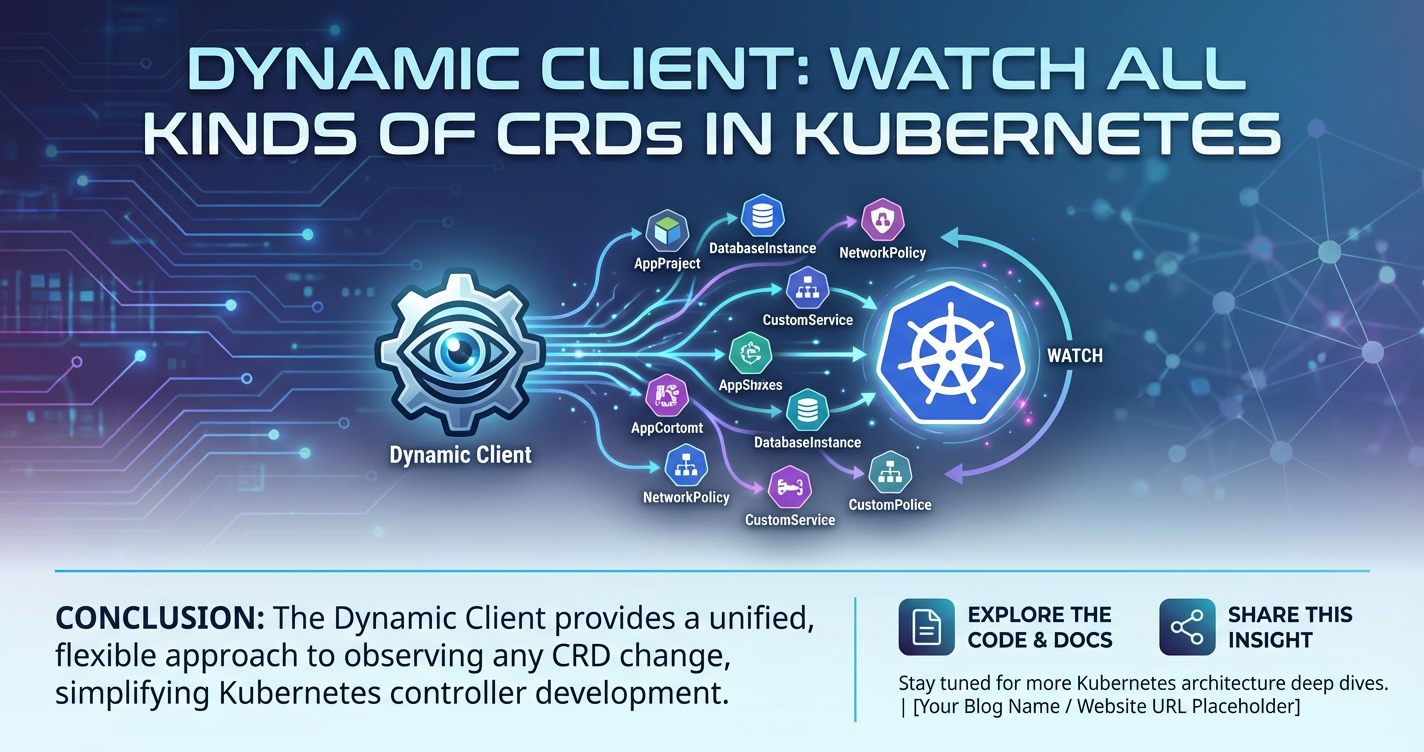

Dynamic Client: Watch All Kinds of CRDs in Kubernetes

The Kubernetes ecosystem, a rapidly evolving landscape, has fundamentally changed the way organizations deploy and manage applications. At its core, Kubernetes offers powerful primitives like Pods, Deployments, and Services, providing a robust foundation for container orchestration. However, the true strength and adaptability of Kubernetes lie in its extensibility – the ability for users to define and manage their own custom resources, tailored precisely to their unique operational needs and application domains. This extensibility is primarily manifested through Custom Resource Definitions (CRDs), which allow developers to introduce new types of objects into the Kubernetes API, making them first-class citizens of the cluster.

While CRDs empower users to extend Kubernetes, interacting with these custom resources programmatically presents a unique challenge, especially when their structure is not known at compile time. The typed clients in client-go, the standard library for Go applications interacting with Kubernetes, rely on Go type definitions and clientsets generated for each resource. This approach works exceptionally well for well-defined, stable CRDs. It falls short, however, when you face a diverse array of custom resources whose types may not be known in advance, or when you build generic tools that need to observe and react to any CRD that might be present in a cluster.

This is where the Kubernetes Dynamic Client steps in, offering a profoundly powerful and flexible mechanism to interact with any Kubernetes resource, including all kinds of CRDs, without prior knowledge of their Go type. It provides an unstructured view of resources, allowing generic applications to list, get, create, update, delete, and, most importantly for many operational use cases, watch these resources in real-time. The ability to watch CRDs dynamically unlocks immense potential for building sophisticated operators, generic monitoring tools, auditing systems, and advanced automation that can adapt to the ever-changing landscape of a Kubernetes cluster.

This comprehensive guide will delve deep into the world of the Kubernetes Dynamic Client, exploring its architecture, capabilities, and practical applications for watching all kinds of CRDs. We will navigate the complexities of identifying and interacting with custom resources using GroupVersionResource (GVR), understand the nuances of the unstructured.Unstructured type, and master the art of establishing reliable watch mechanisms. Furthermore, we will discuss advanced use cases, best practices for building production-ready generic controllers, and integrate this powerful tool into the broader Kubernetes and API management ecosystem, demonstrating how solutions like APIPark complement internal Kubernetes operations by providing robust external API management for services potentially orchestrated by these dynamic CRD watches. By the end of this journey, you will possess a profound understanding of how to leverage the Dynamic Client to unlock the full extensibility of Kubernetes, creating more adaptable and resilient cloud-native solutions.

Kubernetes and the Extensibility Paradigm

Kubernetes, at its core, is a platform for automating the deployment, scaling, and management of containerized applications. It abstracts away the underlying infrastructure, allowing developers to focus on application logic rather than operational minutiae. The fundamental building blocks of Kubernetes (Pods, Deployments, Services, and Namespaces) are powerful and broadly applicable, and they form the bedrock on which most applications are built. These native resources are defined by the Kubernetes API and reconciled by controllers in the control plane. For instance, the Deployment controller (working through ReplicaSets) ensures that a specified number of Pod replicas are running. Likewise, the EndpointSlice controller tracks which Pods back each Service, so that traffic sent to the Service reaches them.

However, the real power of Kubernetes, and a significant reason for its widespread adoption, lies in its extensibility. No single set of built-in resources could satisfy the diverse requirements of all users and applications, so the Kubernetes architects designed the system to be extensible. Users aren't limited to the standard API objects; they can introduce their own custom types, making them first-class citizens within the Kubernetes control plane. This extensibility is primarily facilitated through Custom Resource Definitions (CRDs).

A CRD allows users to define a new kind of resource, specifying its schema, scope (namespaced or cluster-scoped), and other metadata. Once a CRD is created in a cluster, the Kubernetes API server immediately begins serving the new resource type. You can then interact with your custom resource through standard Kubernetes tools like kubectl, or through client libraries, exactly as you would with native resources. For example, if you define a CRD for "Database," you could create a "MySQL" Database object. A custom controller (often called an operator) would then observe these "Database" objects and take actions to provision and manage actual MySQL instances. This pattern, where custom logic manages custom resources, is the foundation of the Operator pattern. It is a highly effective way to encapsulate operational knowledge and automate complex application lifecycle management within Kubernetes. The API server acts as the central hub: it processes all requests for both native and custom resources and enforces authentication, authorization, and validation rules.

The Challenge of Interacting with Unknown CRDs

When building applications that interact with Kubernetes, particularly controllers or operators, the standard and most idiomatic approach in Go is to use client-go. This library provides strongly typed clients for all native Kubernetes resources, and typed clients for custom resources can be produced by code generation. You start from hand-written Go types that describe the resource (type Foo struct { ... }). Tools like k8s.io/code-generator then generate a typed clientset with methods such as Clientset.ExampleV1().Foos(namespace).List(...), along with listers and informers tailored to that resource. Tools like controller-gen can in turn derive the CRD's OpenAPI schema from the same Go types. This approach offers several significant advantages:

- Type Safety: You work with Go structs that directly mirror your Kubernetes resources, so the compiler catches many mistakes that would otherwise surface as runtime errors.

- IDE Support: Autocompletion, type checking, and refactoring tools work seamlessly, enhancing developer productivity.

- Readability: Code is generally easier to understand and maintain because it operates on well-defined types.

However, this static typing approach introduces a critical limitation: it requires compile-time knowledge of the CRD's Go type. If you're building a generic tool – say, a universal auditor that logs changes to any resource in the cluster, or a dashboard that displays all custom resources regardless of their type – you cannot possibly generate code for every conceivable CRD that might be present. The universe of CRDs is potentially infinite and ever-changing across different clusters and organizations. Deploying a new CRD would necessitate recompiling and redeploying your tool, which is cumbersome and defeats the purpose of a generic solution.

Furthermore, consider a gateway service that must adapt dynamically to new kinds of resources being exposed, or a system that integrates various Kubernetes deployments with external APIs. In both cases the static approach becomes a bottleneck. How do you write code that can fetch, inspect, and react to a Foo CRD and a Bar CRD, both defined and deployed after your application was compiled, without having their specific Go types? This "dynamic" problem, the need to interact with Kubernetes objects whose precise structure is unknown at compile time, is precisely the challenge the Kubernetes Dynamic Client was designed to solve. It bridges the gap between the typed clients of client-go and the fluid, ever-expanding world of Kubernetes custom resources.

Introducing the Kubernetes Dynamic Client

The Kubernetes Dynamic Client, found within the k8s.io/client-go/dynamic package, is a powerful abstraction designed to interact with Kubernetes resources in a schema-agnostic manner. Instead of operating on statically typed Go structs, it primarily uses unstructured.Unstructured objects, which represent any Kubernetes object as a generic map[string]interface{}. This fundamental difference is what gives the Dynamic Client its flexibility, allowing it to work with any resource—native or custom—without requiring compile-time code generation for specific types.

The core interface of the Dynamic Client is dynamic.Interface. The typed kubernetes.Interface (implemented by the *Clientset that kubernetes.NewForConfig returns) provides methods like CoreV1() and AppsV1(), each returning a typed client for a specific API group and version. dynamic.Interface instead offers a single, universal entry point: Resource(gvr schema.GroupVersionResource) NamespaceableResourceInterface.

Let's break down its purpose and design philosophy:

- Purpose: To provide a programmatic way to interact with Kubernetes resources (both built-in and custom) when their Go type is unknown at compile time. This is invaluable for generic tools, operators, and controllers that need to be adaptable to evolving cluster configurations.

- Design Philosophy: To treat all Kubernetes objects as generic key-value pairs (map[string]interface{}), allowing runtime inspection and manipulation of their spec, status, and metadata fields. This unstructured approach trades compile-time type safety for immense runtime flexibility.

Key Methods of dynamic.Interface and ResourceInterface:

- dynamic.NewForConfig(config *rest.Config): The entry point for creating a dynamic client. Like the typed clientset constructors in client-go, it takes a *rest.Config holding the connection details (API server address, authentication credentials, TLS settings). It returns a client that satisfies dynamic.Interface, plus an error.
- Resource(gvr schema.GroupVersionResource) NamespaceableResourceInterface: The most important method of dynamic.Interface. It takes a GroupVersionResource (GVR), which uniquely identifies a kind of resource in the Kubernetes API:
  - Group: The API group (e.g., apps, batch, example.com). For core resources such as Pods and Services, the group is the empty string.
  - Version: The API version (e.g., v1, v1beta1).
  - Resource: The plural, lowercase resource name (e.g., deployments, pods, foos).
  - For example, a Deployment has the GVR apps/v1/deployments, while a custom Foo resource might have example.com/v1/foos.
- NamespaceableResourceInterface and ResourceInterface: Once you have an interface for a specific GVR, you can perform standard CRUD operations on resources of that type. Its methods include:
  - Namespace(namespace string) ResourceInterface: Scopes the client to one namespace. Call it only for namespaced resources; for cluster-scoped resources, use the NamespaceableResourceInterface directly. Without Namespace, List and Watch on a namespaced resource span all namespaces.
  - Create(ctx context.Context, obj *unstructured.Unstructured, opts metav1.CreateOptions, subresources ...string) (*unstructured.Unstructured, error): Creates a new resource.
  - Update(ctx context.Context, obj *unstructured.Unstructured, opts metav1.UpdateOptions, subresources ...string) (*unstructured.Unstructured, error): Updates an existing resource.
  - UpdateStatus(ctx context.Context, obj *unstructured.Unstructured, opts metav1.UpdateOptions) (*unstructured.Unstructured, error): Updates only the status subresource of an object.
  - Delete(ctx context.Context, name string, opts metav1.DeleteOptions, subresources ...string) error: Deletes a resource by name.
  - DeleteCollection(ctx context.Context, opts metav1.DeleteOptions, listOpts metav1.ListOptions) error: Deletes all resources matching the given list options.
  - Get(ctx context.Context, name string, opts metav1.GetOptions, subresources ...string) (*unstructured.Unstructured, error): Retrieves a resource by name.
  - List(ctx context.Context, opts metav1.ListOptions) (*unstructured.UnstructuredList, error): Lists all resources of the GVR, optionally filtered by ListOptions.
  - Watch(ctx context.Context, opts metav1.ListOptions) (watch.Interface, error): The method this guide focuses on. It opens a continuous stream of events for changes to resources of the GVR.

The unstructured.Unstructured Type:

This type is the cornerstone of Dynamic Client operations. Instead of a concrete Go struct like *appsv1.Deployment, the Dynamic Client returns and accepts *unstructured.Unstructured objects. This struct essentially wraps a map[string]interface{}, allowing it to represent any Kubernetes object's JSON/YAML structure without compile-time knowledge of its fields.

```go
// Simplified excerpt from k8s.io/apimachinery/pkg/apis/meta/v1/unstructured
// (doc comments shortened, methods omitted).
package unstructured

// Unstructured holds an arbitrary Kubernetes object as a JSON-compatible map
// whose values are strings, int64, float64, bool, []interface{}, or nested maps.
type Unstructured struct {
	Object map[string]interface{}
}

// UnstructuredList keeps list-level fields (apiVersion, kind, metadata)
// in Object and the list members in Items.
type UnstructuredList struct {
	Object map[string]interface{}

	// Items is a list of unstructured objects.
	Items []Unstructured `json:"items"`
}
```

The unstructured.Unstructured type provides helper methods for common Kubernetes fields, such as GetName(), GetNamespace(), GetAPIVersion(), GetKind(), and GetResourceVersion(). It also provides setters like SetLabels(), SetAnnotations(), and SetManagedFields(). For fields inside spec or status, you work with the underlying map (obj.Object, which UnstructuredContent() also returns) through package-level helpers such as unstructured.NestedMap, NestedString, and NestedInt64. These helpers walk a path of keys and report whether the field was found. For example, to read spec.replicas:

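```go
// NestedInt64 walks obj.Object["spec"]["replicas"]. found is false if any key
// is missing; err is non-nil if the value exists but is not an int64.
replicas, found, err := unstructured.NestedInt64(obj.Object, "spec", "replicas")
if err != nil {
	// handle a type mismatch
} else if found {
	fmt.Printf("spec.replicas = %d\n", replicas)
}
```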

This dynamic approach requires more careful runtime checks and explicit type assertions. In return, it provides the flexibility needed to build generic tools that watch all kinds of CRDs in Kubernetes and adapt to an ever-evolving API surface without recompilation. It embodies the essence of Kubernetes extensibility, allowing developers to build systems that are resilient to changes in resource definitions.

Setting Up Your Environment for Dynamic Client

Before diving into the mechanics of watching CRDs, it's essential to properly set up your Go development environment and establish a connection to your Kubernetes cluster using the Dynamic Client. The process largely mirrors that of client-go, focusing on rest.Config for connection details and dynamic.NewForConfig for client instantiation.

First, ensure you have the necessary client-go modules in your go.mod file. You'll typically need at least k8s.io/client-go, k8s.io/apimachinery, and k8s.io/api.

```shell
go get k8s.io/client-go@latest
go get k8s.io/apimachinery@latest
go get k8s.io/api@latest
```

Next, let's consider how to obtain a rest.Config. This configuration object contains everything a client needs to communicate with the Kubernetes API server, including the host, authentication credentials, and TLS configuration. There are two primary scenarios for obtaining it:

- In-Cluster Configuration: When your application runs inside a Kubernetes Pod, it can authenticate with the API server using the service account token mounted at /var/run/secrets/kubernetes.io/serviceaccount. The rest.InClusterConfig() function handles this automatically. This is the recommended approach for operators and controllers running within the cluster.

```go
import (
	"fmt"

	"k8s.io/client-go/rest"
)

// getInClusterConfig uses the Pod's mounted service account token and CA bundle
// together with the KUBERNETES_SERVICE_HOST/PORT environment variables.
func getInClusterConfig() (*rest.Config, error) {
	config, err := rest.InClusterConfig()
	if err != nil {
		return nil, fmt.Errorf("failed to get in-cluster config: %w", err)
	}
	return config, nil
}
```

- Out-of-Cluster Configuration (Kubeconfig): When your application runs outside the cluster (e.g., during local development, testing, or as a standalone tool), it typically uses your kubeconfig file through clientcmd.BuildConfigFromFlags. Pass it the path to your kubeconfig file (often ~/.kube/config). If both the master URL and the kubeconfig path are empty, it falls back to the in-cluster configuration. BuildConfigFromFlags always uses the kubeconfig's current context. To select a different context, build the config with clientcmd.NewNonInteractiveDeferredLoadingClientConfig and a clientcmd.ConfigOverrides value instead.

```go
import (
	"flag"
	"fmt"
	"path/filepath"

	"k8s.io/client-go/rest"
	"k8s.io/client-go/tools/clientcmd"
	"k8s.io/client-go/util/homedir"
)

// getKubeConfig builds a *rest.Config from a kubeconfig file (default ~/.kube/config).
// flag.String registers a global flag, so call this only once per process.
func getKubeConfig() (*rest.Config, error) {
	var kubeconfig *string
	if home := homedir.HomeDir(); home != "" {
		kubeconfig = flag.String("kubeconfig", filepath.Join(home, ".kube", "config"), "(optional) absolute path to the kubeconfig file")
	} else {
		kubeconfig = flag.String("kubeconfig", "", "absolute path to the kubeconfig file")
	}
	flag.Parse()

	config, err := clientcmd.BuildConfigFromFlags("", *kubeconfig)
	if err != nil {
		return nil, fmt.Errorf("failed to build kubeconfig: %w", err)
	}
	return config, nil
}
```

Once you have a rest.Config, you can initialize the Dynamic Client:

```go
import (
	"fmt"

	"k8s.io/client-go/dynamic"
)

// initializeDynamicClient builds a dynamic client from whichever config source fits your deployment.
func initializeDynamicClient() (dynamic.Interface, error) {
	// Choose your config retrieval method.
	config, err := getInClusterConfig() // or getKubeConfig()
	if err != nil {
		return nil, fmt.Errorf("failed to get Kubernetes config: %w", err)
	}

	dynamicClient, err := dynamic.NewForConfig(config)
	if err != nil {
		return nil, fmt.Errorf("failed to create dynamic client: %w", err)
	}
	return dynamicClient, nil
}
```

Authentication and Authorization (RBAC):

It's crucial to understand that the Dynamic Client, like any other Kubernetes client, operates within the framework of Role-Based Access Control (RBAC). The identity used to establish the connection (whether a service account in-cluster or a user associated with the kubeconfig) must have the necessary permissions to perform the requested operations on the target resources. For watching CRDs, the service account or user needs watch, list, and potentially get permissions on the customresourcedefinitions resource itself (to discover CRDs) and on the specific custom resources you intend to watch.

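For example, to watch all Foo resources in the example.com group across all namespaces, you need a ClusterRole with rules like the following. A namespaced Role is sufficient only if you watch Foos in a single namespace, and cluster-scoped resources always require a ClusterRole: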

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: crd-watcher-role
rules:
  - apiGroups: ["example.com"]
    resources: ["foos"]
    verbs: ["get", "list", "watch"]
  - apiGroups: ["apiextensions.k8s.io"]
    resources: ["customresourcedefinitions"] # Needed if you want to discover CRDs
    verbs: ["get", "list", "watch"]
```

This ClusterRole would then be bound to your service account using a ClusterRoleBinding. Proper RBAC configuration is paramount for security and operational integrity, ensuring that your dynamic client application has only the minimal necessary privileges. For more complex API governance scenarios, especially when exposing internal services externally or integrating with third-party APIs, an API gateway like APIPark provides an additional layer of access control and management, complementing Kubernetes' internal RBAC.

With the client initialized and RBAC correctly configured, your environment is ready to leverage the full power of the Dynamic Client for watching CRDs.

Deep Dive into Watching CRDs with Dynamic Client

Watching resources in Kubernetes is a fundamental mechanism for building reactive systems like controllers and operators. Continuously polling the API server is inefficient and generates unnecessary load. A watch instead establishes a long-lived connection over which the API server pushes a notification to the client whenever a resource in the watched collection changes. The Dynamic Client can perform this watch operation on any CRD.

Resource Identification: GroupVersionResource (GVR)

The key to interacting with any resource via the Dynamic Client is its GroupVersionResource (GVR). As mentioned, this triple uniquely identifies a resource type in the Kubernetes API.

- Group: The group part of your CRD's apiVersion, as set in spec.group (e.g., example.com for apiVersion: example.com/v1). For core resources (Pods, Services, ConfigMaps), it is the empty string.
- Version: The version part of apiVersion (e.g., v1, v1beta1), matching one of the names in the CRD's spec.versions.
- Resource: The plural name of the resource, as defined in the CRD's spec.names.plural (e.g., foos for a Foo CRD, deployments for Deployment).

Constructing a GVR for a CRD:

Let's assume you have a CRD defined as follows:

```yaml
apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  name: foos.example.com
spec:
  group: example.com
  versions:
    - name: v1
      served: true
      storage: true
      subresources:
        status: {} # enables the /status subresource used by UpdateStatus
      schema:
        openAPIV3Schema:
          type: object
          properties:
            spec:
              type: object
              properties:
                message:
                  type: string
            status:
              type: object
              properties:
                processed:
                  type: boolean
  scope: Namespaced
  names:
    plural: foos
    singular: foo
    kind: Foo
    listKind: FooList
```

To watch Foo resources, your GVR would be example.com/v1/foos. In Go, you construct it using schema.GroupVersionResource:

```go
import (
	"k8s.io/apimachinery/pkg/runtime/schema"
	// ...
)

var fooGVR = schema.GroupVersionResource{
	Group:    "example.com",
	Version:  "v1",
	Resource: "foos",
}
```

For a standard Kubernetes resource like Deployment:

```go
var deploymentGVR = schema.GroupVersionResource{
	Group:    "apps",
	Version:  "v1",
	Resource: "deployments",
}
```

The Watch Method and watch.Interface

Once you have your dynamic.Interface and the target GVR, initiating a watch is straightforward:

```go
package main

import (
	"context"
	"fmt"

	apierrors "k8s.io/apimachinery/pkg/api/errors"
	metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"
	"k8s.io/apimachinery/pkg/apis/meta/v1/unstructured"
	"k8s.io/apimachinery/pkg/runtime/schema"
	"k8s.io/apimachinery/pkg/watch"
	"k8s.io/client-go/dynamic"
)

func main() {
	config, err := getKubeConfig() // getKubeConfig from the previous section
	if err != nil {
		panic(fmt.Errorf("error building kubeconfig: %w", err))
	}

	dynamicClient, err := dynamic.NewForConfig(config)
	if err != nil {
		panic(fmt.Errorf("error creating dynamic client: %w", err))
	}

	// GVR for the example Foo custom resource
	fooGVR := schema.GroupVersionResource{Group: "example.com", Version: "v1", Resource: "foos"}

	// Cancelling this context aborts the watch request and closes ResultChan.
	ctx, cancel := context.WithCancel(context.Background())
	defer cancel()

	fmt.Printf("Starting watch for CRD: %s/%s/%s\n", fooGVR.Group, fooGVR.Version, fooGVR.Resource)

	// No Namespace(...) call, so Foos in all namespaces are watched.
	watcher, err := dynamicClient.Resource(fooGVR).Watch(ctx, metav1.ListOptions{})
	if err != nil {
		panic(fmt.Errorf("error starting watch: %w", err))
	}
	defer watcher.Stop() // Ensure the watch connection is closed

	for event := range watcher.ResultChan() {
		// Error events carry a Status rather than a Foo, so handle them before
		// asserting the object type.
		if event.Type == watch.Error {
			fmt.Printf("ERROR: %v\n", apierrors.FromObject(event.Object))
			break // this watch is no longer usable; a real client would relist and rewatch
		}

		obj, ok := event.Object.(*unstructured.Unstructured)
		if !ok {
			fmt.Printf("unexpected type for object in watch event: %T\n", event.Object)
			continue
		}

		switch event.Type {
		case watch.Added:
			fmt.Printf("ADDED: %s/%s, Name: %s\n", obj.GetAPIVersion(), obj.GetKind(), obj.GetName())
			// Read a spec field without a Go type for Foo.
			if msg, found, err := unstructured.NestedString(obj.Object, "spec", "message"); found && err == nil {
				fmt.Printf("  Message: %s\n", msg)
			}
		case watch.Modified:
			fmt.Printf("MODIFIED: %s/%s, Name: %s\n", obj.GetAPIVersion(), obj.GetKind(), obj.GetName())
			// Check for changes, e.g., in status.
			if processed, found, err := unstructured.NestedBool(obj.Object, "status", "processed"); found && err == nil {
				fmt.Printf("  Status Processed: %t\n", processed)
			}
		case watch.Deleted:
			fmt.Printf("DELETED: %s/%s, Name: %s\n", obj.GetAPIVersion(), obj.GetKind(), obj.GetName())
		default:
			// e.g. watch.Bookmark, if bookmarks were requested
			fmt.Printf("OTHER event type: %s, Object: %s/%s, Name: %s\n", event.Type, obj.GetAPIVersion(), obj.GetKind(), obj.GetName())
		}
	}

	fmt.Println("Watch stopped.")
}
```

The Watch method returns a watch.Interface whose ResultChan() is a Go channel of watch.Event values. Each watch.Event contains:
- Type: a watch.EventType (watch.Added, watch.Modified, watch.Deleted, watch.Bookmark, or watch.Error).
- Object: a runtime.Object. For Added, Modified, and Deleted events from the Dynamic Client, this is an *unstructured.Unstructured. For Error events, it describes a metav1.Status rather than the watched resource, and apierrors.FromObject converts it into a Go error.

Practical Considerations for Watching

Watching CRDs with the Dynamic Client involves more than just calling the Watch method; it requires careful consideration of several factors to ensure robustness and correctness in production environments.

- Resource Version and List-Watch Pattern: Kubernetes watches are not guaranteed to stay open indefinitely. Network glitches, API server restarts, or the API server's compaction of old history can terminate a watch connection, and the client must then resume without losing track of state. The resourceVersion field makes this possible. The recommended pattern, known as List-Watch, is:
  - Perform an initial List call to get all existing resources of the target GVR. This provides a consistent snapshot of the cluster's current state.
  - From the List response, take the ResourceVersion of the list itself (metadata.resourceVersion of the UnstructuredList, available via GetResourceVersion()). It identifies the point in the API server's history at which the snapshot was taken.
  - Start a Watch call, passing this ResourceVersion in metav1.ListOptions. The API server then sends only events for changes that occurred after that ResourceVersion.
  - When the watch breaks, repeat the entire List-Watch cycle. A break means the result channel closed or an Error event arrived, such as 410 Gone with reason Expired when the resourceVersion is too old. The fresh List captures whatever changed while the watch was down, and the new watch resumes from a consistent point.
  Implemented correctly, this pattern keeps your view of the resources consistent with the cluster through transient network failures or API server restarts. Changes made during an outage show up as the final state in the new List rather than as individual events.
- Informers vs. Dynamic Client Watches: The Dynamic Client's Watch method is powerful, but complex controllers that keep local caches of Kubernetes objects usually use client-go's shared informers (cache.SharedIndexInformer, created through a shared informer factory). Informers encapsulate the List-Watch pattern, handle re-watches, maintain an indexed local cache, and notify registered event handlers. A shared informer factory hands out one informer per resource type, so multiple consumers share a single watch connection and cache.
  Informers also work with the Dynamic Client. The k8s.io/client-go/dynamic/dynamicinformer package provides NewDynamicSharedInformerFactory and NewFilteredDynamicSharedInformerFactory, whose ForResource(gvr) method returns an informer backed by a dynamic.Interface. By contrast, cache.NewListWatchFromClient expects a REST client, not a dynamic client. The dynamic informer combines the flexibility of the Dynamic Client with the robustness of informers.
  - When to use a Dynamic Client Watch:
    - For simple, single-purpose watch loops where the overhead of an informer (cache, indexers, shared factory) is deemed too heavy.
    - When building very generic tools that watch arbitrary CRDs discovered at runtime, and setting up informers dynamically for every possible GVR seems prohibitive (though dynamic informers make this feasible).
    - For short-lived watches or specific debugging scenarios.
  - When to use Informers:
    - For robust, production-grade controllers and operators that need an up-to-date, consistent local cache of resources.
    - When you need strong guarantees about event ordering and state consistency.
    - When multiple components need to react efficiently to changes on the same resource type.
    - For integrating with a workqueue pattern for controller reconciliation.
- Filtering Watches: You can pass metav1.ListOptions to the Watch method to filter the events received. Filters reduce the amount of data transferred from the API server, which lowers the load on both the client and the server.
  - LabelSelector: Filters resources by their labels (e.g., app=my-app,env=prod).
  - FieldSelector: Filters resources by field values (e.g., metadata.name=my-resource, metadata.namespace=default). Field selectors are far more limited than label selectors. For custom resources, only metadata.name and metadata.namespace are supported unless the CRD declares additional selectable fields, a feature of recent Kubernetes releases.
  - Limit and Continue: Used for pagination in List operations; not relevant for continuous watches.
- Rate Limiting and Backoff: When a watch connection repeatedly breaks and must be re-established, apply exponential backoff between attempts. Retrying in a tight loop without delay can overwhelm the API server. The k8s.io/apimachinery/pkg/util/wait package (for example, wait.Backoff) and k8s.io/client-go/util/retry provide building blocks for this, and informers apply backoff for you.
- Context Management: Always pass a context.Context (from context.WithCancel or context.WithTimeout) when starting watches and making API calls, so your application can shut down gracefully. When the context is canceled, the underlying request is aborted, watcher.ResultChan() is closed, and in-flight API calls return, preventing resource leaks.

By diligently applying these practical considerations, developers can build highly reliable, efficient, and resilient applications that leverage the Dynamic Client to watch and react to all kinds of CRDs within a Kubernetes cluster. The flexibility offered by unstructured.Unstructured coupled with robust watch management practices forms the backbone of powerful Kubernetes extensibility.

Advanced Use Cases and Best Practices for Dynamic Client

The power of the Dynamic Client extends far beyond simple watch operations. Its ability to interact with Kubernetes resources in a schema-agnostic way opens up a plethora of advanced use cases, making it an indispensable tool for anyone building generic Kubernetes utilities, complex operators, or cluster-wide management systems.

Generic Controllers and Operators

One of the most compelling applications of the Dynamic Client is in building generic controllers or operators. Imagine a scenario where you want to enforce a cluster-wide policy, such as adding a specific label or annotation to any new custom resource, or perhaps auditing changes to all CRDs. With statically typed clients, this would require generating a client for every single CRD, a cumbersome and impractical approach.

A generic controller built with the Dynamic Client can:
- Discover CRDs at Runtime: By watching CustomResourceDefinition objects (themselves standard Kubernetes resources under apiextensions.k8s.io/v1), the controller can dynamically learn about new CRD types being introduced into the cluster.
- Dynamically Create Watchers: For each discovered CRD, the controller can construct its GroupVersionResource and instantiate a new Dynamic Client watcher. This allows the controller to react to events from CRDs it didn't know about at compile time.
- Apply Generic Logic: Once an unstructured.Unstructured object is received, the controller can apply generic logic (e.g., check for a specific field, add default values, update status) without needing to know the exact Go type of the resource. For instance, a generic validating webhook could inspect the unstructured object against a set of rules defined in a ConfigMap or another CRD.

This approach creates highly adaptable and future-proof solutions, capable of evolving with the cluster's custom resource landscape.

Auditing and Monitoring Tools

The Dynamic Client is perfectly suited for creating powerful auditing and monitoring tools. A single application can use the Dynamic Client to provide:
- Centralized Event Stream: Subscribe to watch events for a wide range of native resources (Pods, Deployments, Services) and all custom resources.
- Historical Logging: Log every Added, Modified, and Deleted event to a persistent store, creating a comprehensive audit trail of all changes within the cluster. This is invaluable for security compliance, post-incident analysis, and understanding cluster state evolution.
- Real-time Alerts: Trigger alerts based on specific changes to any resource, such as the creation of a sensitive CRD in a particular namespace, or modification of a critical configuration object.
- Dashboards: Build dynamic dashboards that can display statistics or current states of any custom resource type, fetching data through List and keeping it updated via Watch.

Such tools enhance operational visibility and provide crucial insights into the cluster's activities, especially when combined with robust api logging provided by an external gateway for inbound and outbound traffic, such as APIPark.

Interacting with CRD Status and Spec

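When working with unstructured.Unstructured objects, the easiest way to access and modify specific fields within the spec or status is through the helper functions in the unstructured package. They are less type-safe than direct struct access, but they fail gracefully. A missing field is reported through a found flag, and a field of the wrong type is reported through an error, so neither case causes a panic.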

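```go
import (
	"fmt"

	metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"
	"k8s.io/apimachinery/pkg/apis/meta/v1/unstructured"
)

// Assumes obj (*unstructured.Unstructured), dynamicClient, fooGVR and ctx are in scope.

// Example: Reading a field from spec
val, found, err := unstructured.NestedString(obj.Object, "spec", "myStringField")
if err != nil {
	// The field exists but is not a string
} else if found {
	fmt.Printf("My string field: %s\n", val)
}

// Example: Updating a field in status
// Work on a deep copy so the object received from the watch (or an informer cache) is never mutated
objCopy := obj.DeepCopy()
if err := unstructured.SetNestedField(objCopy.Object, true, "status", "processed"); err != nil {
	// Handle error (an intermediate field is not a map)
}

// Write it back through the status subresource. This requires the CRD to enable
// subresources.status; changes outside .status are ignored on this endpoint.
updatedObj, err := dynamicClient.Resource(fooGVR).Namespace(objCopy.GetNamespace()).UpdateStatus(ctx, objCopy, metav1.UpdateOptions{})
if err != nil {
	// Handle error (e.g., retry on conflict)
} else {
	fmt.Println("New resourceVersion:", updatedObj.GetResourceVersion())
}
```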

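These Nested* and SetNested* functions (e.g., NestedMap, NestedSlice, NestedInt64, SetNestedMap, RemoveNestedField) allow flexible navigation and manipulation of the underlying map[string]interface{}. NestedMap and NestedSlice return deep copies, so changing their result does not affect the object until you write it back with a SetNested* call. Combined with the Dynamic Client's Create, Update, and Delete calls, these helpers cover the full read-modify-write cycle on unstructured objects.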

Schema Validation and OpenAPI

Even though the Dynamic Client works with unstructured objects, the underlying CRD still defines an OpenAPI v3 schema for each version in spec.versions[].schema.openAPIV3Schema (apiextensions.k8s.io/v1 requires one). This schema is crucial for validation. When you Create or Update an unstructured.Unstructured object via the Dynamic Client, the Kubernetes API server still validates the submitted object against the CRD's OpenAPI schema. If the object does not conform, the API server rejects the request with a validation error.

This means that while your client code is dynamic, the data you send to the API server must still be valid according to the CRD's definition. Developers using the Dynamic Client should be aware of the CRD's schema, even if they are not using generated types, to ensure their modifications are valid. Tools that dynamically generate unstructured objects (e.g., from user input) should ideally perform their own client-side validation against the OpenAPI schema before sending the object to the API server. The schema can be fetched from the CRD itself, and checking locally avoids unnecessary API calls. This also highlights how OpenAPI serves as a common language for defining and validating API structures, whether for custom Kubernetes resources or external APIs exposed through a gateway.

Performance Implications

Working with unstructured.Unstructured objects carries somewhat more performance overhead than working with statically typed objects. Each field access or modification requires map lookups and type assertions, which are slower than direct struct field access. Additionally, decoding a response into nested map[string]interface{} values generally allocates more than decoding it into Go structs.

For most common use cases, this overhead is negligible. However, in extremely high-throughput scenarios where you are processing tens of thousands of object changes per second, and performance is critical, the choice between dynamic and typed clients (or informers) might need careful benchmarking. For the vast majority of generic controllers and auditing tools, the flexibility and development simplicity offered by the Dynamic Client far outweigh this minor performance consideration.

Error Handling Strategies

Robust error handling is paramount when using the Dynamic Client, especially in watch loops. Beyond generic Go error checks, consider Kubernetes-specific error conditions:

- apierrors.IsNotFound(err): Check whether a resource doesn't exist.
- apierrors.IsAlreadyExists(err): Check whether a resource you're trying to create already exists.
- apierrors.IsConflict(err): Crucial for optimistic concurrency. When updating a resource, retrieve the latest version, modify it, and then update it. If another client modifies the resource between your Get and Update, its resourceVersion changes. The API server then rejects your write with a conflict error (HTTP 409), and you need to retry the whole Get-modify-Update cycle.
- Network Errors: Handle transient network issues by implementing retry logic with exponential backoff.
- Validation Errors: As mentioned, the API server returns errors (detectable with apierrors.IsInvalid(err)) if your unstructured object does not conform to the CRD's OpenAPI schema. Log these detailed errors for debugging.
- Watch Error Events: Handle the watch.Error event type explicitly. It carries a Status object. The most common case is 410 Gone (reason Expired), which means the resourceVersion you are watching from is too old. Recovering from it requires restarting the List-Watch pattern with a fresh List. Separately, a closed ResultChan() means the watch has ended and must be re-established.

By anticipating these error conditions and implementing appropriate retry mechanisms and logging, you can build highly resilient applications using the Dynamic Client.

Security Aspects of Dynamic Client

While the Dynamic Client provides immense flexibility, it does not bypass Kubernetes' inherent security mechanisms. It operates strictly within the boundaries defined by Role-Based Access Control (RBAC), and careful consideration of permissions is crucial to maintain a secure and stable cluster environment.

RBAC Enforcement

Every operation performed by the Dynamic Client, be it List, Get, Create, Update, Delete, or Watch, is subject to Kubernetes RBAC checks by the API server. The service account (for in-cluster applications) or user (for out-of-cluster tools) associated with the rest.Config used to initialize the Dynamic Client must have the necessary permissions for the group and resource of the target GroupVersionResource (GVR) and for the verb (get, list, watch, create, update, delete). RBAC rules match on API group and resource name; they do not distinguish between versions.

For example, if your Dynamic Client application is intended only to watch Foo resources, it should be granted only read permissions (get, list, and watch) on foos.example.com. Granting create, update, or delete permissions would violate the principle of least privilege, potentially allowing the application to make unauthorized changes.

Example RBAC for a Watcher:

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole # Use ClusterRole if watching cluster-scoped CRDs or all namespaces
metadata:
  name: dynamic-crd-watcher
rules:
- apiGroups: ["example.com"] # Replace with the actual API group of your CRD
  resources: ["foos"] # Replace with the plural resource name of your CRD
  verbs: ["get", "list", "watch"]
- apiGroups: ["another.domain.com"] # Add rules for other CRDs you intend to watch
  resources: ["bars"]
  verbs: ["get", "list", "watch"]
- apiGroups: ["apiextensions.k8s.io"] # Essential if you plan to discover CRDs
  resources: ["customresourcedefinitions"]
  verbs: ["get", "list", "watch"]
```

This ClusterRole would then be bound to a service account using a ClusterRoleBinding. If the CRD is namespaced and your watcher is also namespaced, a Role and RoleBinding might suffice.

Least Privilege

Always adhere strictly to the principle of least privilege. Grant only the minimum set of permissions required for your Dynamic Client application to function correctly. Over-privileging a generic tool, even a watcher, can inadvertently create security vulnerabilities. If a compromised watcher has delete permissions on all CRDs, it could lead to significant data loss or service disruption. Regularly review and audit the RBAC policies applied to your Dynamic Client applications.

Sensitive Data Handling

Since unstructured.Unstructured objects represent the full Kubernetes resource, they can contain sensitive information in their spec, status, or annotations. Be extremely cautious about what data you log or expose from unstructured objects, especially in generic auditing or monitoring tools. If you're logging the entire unstructured.Unstructured object, ensure your logging infrastructure is secure and that sensitive fields are redacted or encrypted if necessary.

For instance, core v1 Secrets (a built-in resource rather than a CRD) contain confidential credentials, and custom resources may embed similar data. The Dynamic Client can technically watch Secrets like any other resource, but accessing and logging their data field would be a severe security risk. Developers must implement explicit checks and redaction logic when handling unstructured data that might contain secrets.

API Governance and External Exposure

While the Dynamic Client focuses on internal interaction with the Kubernetes API, organizations often need to expose services and APIs to external consumers or integrate with third-party systems. In such scenarios, an API gateway becomes a critical component for security, management, and governance.

Consider a Kubernetes cluster where CRDs orchestrate various backend services, including AI models. While the Dynamic Client watches these CRDs internally to ensure their correct operation, the actual API endpoints for these AI models or other microservices need a robust gateway for external access. This is where platforms like APIPark come into play.

APIPark is an open-source AI gateway and API management platform that sits at the edge of your network, providing a unified API interface. It offers features like:

- Centralized Authentication and Authorization: Beyond Kubernetes RBAC, APIPark provides its own granular access control, ensuring only authorized consumers can invoke external APIs, often integrating with identity providers.
- Traffic Management: Load balancing, rate limiting, and circuit breaking for external API calls.
- API Lifecycle Management: Design, publish, version, and deprecate external APIs.
- Comprehensive Logging and Analytics: Detailed records of every API call, crucial for auditing and performance monitoring of external integrations. This complements the internal logging provided by Dynamic Client watchers.
- Prompt Encapsulation for AI: For AI models orchestrated by CRDs, APIPark allows prompts to be encapsulated into REST APIs, simplifying their consumption for application developers.

Therefore, while the Dynamic Client is invaluable for internal Kubernetes operations, a platform like APIPark provides the secure and managed gateway needed to externalize the services and APIs that are often deployed and managed within a Kubernetes environment. The two tools address different, yet complementary, aspects of a comprehensive cloud-native architecture.

By carefully managing RBAC, adhering to least privilege, practicing secure data handling, and leveraging appropriate external API governance tools, you can integrate the Dynamic Client safely and effectively into any Kubernetes security strategy.

Integrating with the Wider Kubernetes Ecosystem

The Dynamic Client, while powerful on its own, truly shines when integrated into the broader Kubernetes ecosystem. Its generic nature allows it to serve as a foundational component for various tools and platforms, enhancing the overall manageability and interoperability of a cluster.

Dynamic Informers for Robust Controllers

As briefly touched upon, you don't have to choose between the flexibility of the Dynamic Client and the robustness of informers. client-go allows you to create informers using a Dynamic Client. This pattern is particularly useful for operators that need to manage an arbitrary number of CRDs or CRD versions that may appear and disappear at runtime.

The key is a cache.ListWatch whose ListFunc and WatchFunc call the Dynamic Client. (cache.NewListWatchFromClient is not suitable here: it expects a typed REST client implementing cache.Getter, not a dynamic.Interface.)

```go
import (
	"context"
	"fmt"

	metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"
	"k8s.io/apimachinery/pkg/apis/meta/v1/unstructured"
	"k8s.io/apimachinery/pkg/runtime"
	"k8s.io/apimachinery/pkg/runtime/schema"
	"k8s.io/apimachinery/pkg/watch"
	"k8s.io/client-go/dynamic"
	"k8s.io/client-go/tools/cache"
)

// runFooInformer runs a SharedIndexInformer for fooGVR whose List and Watch
// calls go through the Dynamic Client. It returns once the cache has synced;
// the informer keeps running until ctx is cancelled.
func runFooInformer(ctx context.Context, dynamicClient dynamic.Interface, fooGVR schema.GroupVersionResource) (cache.SharedIndexInformer, error) {
	// Namespace("") means all namespaces (or no namespace for cluster-scoped CRDs)
	resource := dynamicClient.Resource(fooGVR).Namespace("")
	listWatcher := &cache.ListWatch{
		ListFunc: func(options metav1.ListOptions) (runtime.Object, error) {
			return resource.List(ctx, options)
		},
		WatchFunc: func(options metav1.ListOptions) (watch.Interface, error) {
			return resource.Watch(ctx, options)
		},
	}

	informer := cache.NewSharedIndexInformer(
		listWatcher,
		&unstructured.Unstructured{}, // The object type for the informer's store
		0,                            // Resync period (0 means no periodic resync)
		cache.Indexers{},
	)

	logEvent := func(verb string, obj interface{}) {
		// A delete missed during a re-list arrives wrapped in a tombstone
		if tombstone, ok := obj.(cache.DeletedFinalStateUnknown); ok {
			obj = tombstone.Obj
		}
		u, ok := obj.(*unstructured.Unstructured)
		if !ok {
			return
		}
		fmt.Printf("Informer %s: %s/%s, Name: %s\n", verb, u.GetAPIVersion(), u.GetKind(), u.GetName())
	}

	// Add event handlers to the informer
	informer.AddEventHandler(cache.ResourceEventHandlerFuncs{
		AddFunc:    func(obj interface{}) { logEvent("ADDED", obj) },
		UpdateFunc: func(_, newObj interface{}) { logEvent("MODIFIED", newObj) },
		DeleteFunc: func(obj interface{}) { logEvent("DELETED", obj) },
	})

	// Start the informer; it stops when ctx is cancelled
	go informer.Run(ctx.Done())

	// Wait for the initial List to populate the cache
	if !cache.WaitForCacheSync(ctx.Done(), informer.HasSynced) {
		return nil, fmt.Errorf("failed to sync informer cache for %s", fooGVR)
	}
	fmt.Println("Informer cache synced.")

	// The informer now maintains an up-to-date cache; query it via informer.GetStore()
	return informer, nil
}
```

This pattern allows you to build operators that can:

- Manage dynamic sets of CRDs: Watch for CustomResourceDefinition changes, and for each new CRD, spin up a corresponding dynamic informer.
- Maintain local state: Leverage the informer's cache for efficient lookups and consistent state management, avoiding repeated API calls.
- Process events reliably: Use the informer's event handlers together with a workqueue to build robust reconciliation loops that process changes in order and idempotently.

This combination unlocks the full potential for building highly adaptive and resilient Kubernetes automation.

Integrating with API Gateways for External Services

While the Dynamic Client is focused on internal interaction with Kubernetes resources, a complete cloud-native architecture often requires exposing services and APIs externally or connecting to external APIs. This is where the concept of an API gateway becomes critical, and platforms like APIPark provide this functionality.

Imagine a scenario where your Kubernetes cluster hosts several microservices, some of which are managed by custom operators that use the Dynamic Client to watch CRDs. These CRDs might represent specific AI models, data processing pipelines, or external system integrations. While your internal controllers ensure these services are running correctly, the actual application developers or external partners need a single, secure, and managed entry point to consume these services.

An API gateway like APIPark serves as that entry point. It can:

1. Expose Kubernetes Services as Managed APIs: Services deployed in Kubernetes (which might be orchestrated by CRDs watched by your Dynamic Client) can be exposed through APIPark as managed API endpoints. This centralizes authentication, authorization, and traffic management for external consumers, abstracting away the internal Kubernetes complexities.
2. Integrate External APIs: If your CRDs define external API integrations (e.g., a "ThirdPartyService" CRD), APIPark can manage the outbound calls to these external APIs, offering features like rate limiting, caching, and transformation.
3. Unified API Format for AI: Particularly relevant for AI services, APIPark unifies the request data format across different AI models. Your application developers therefore don't need to worry about the underlying AI model's specific API or prompt details; they interact with a standardized API endpoint managed by APIPark. This significantly simplifies AI usage and reduces maintenance costs.
4. Prompt Encapsulation into REST API: APIPark allows users to combine AI models with custom prompts to create new, specialized APIs (e.g., a sentiment analysis API or a translation API). These custom APIs can then be exposed through the gateway, providing a powerful abstraction for AI capabilities orchestrated within Kubernetes.

In essence, the Dynamic Client lets you build the intelligent, self-managing internal workings of your Kubernetes cluster by reacting to CRD changes. APIPark then provides a secure, governed, and performant facade that lets external applications and users consume these internal services reliably and at scale. Internal Kubernetes extensibility and external API governance thus complement each other in a comprehensive and efficient cloud-native strategy.

Custom Tooling and CLI Extensions

Beyond controllers, the Dynamic Client is invaluable for building custom command-line interface (CLI) tools or extending existing ones. For instance:

- A kubectl plugin that can generically inspect and display any CRD in the cluster, even newly installed ones, without needing a go generate step.
- A diagnostic tool that can fetch status information from diverse custom resources to troubleshoot problems.
- An automation script that needs to create or modify custom resources based on dynamic inputs.

These tools benefit from the Dynamic Client's flexibility, allowing them to adapt to any Kubernetes cluster's specific CRD landscape.

Comparison: Dynamic Client vs. Typed Clients vs. Client-go Informers

To clarify when and where to use the Dynamic Client, it helps to compare its characteristics with the other primary ways of interacting with Kubernetes resources in Go: statically typed clients (kubernetes.Interface for built-in resources, or generated clientsets for CRDs) and client-go informers (cache.SharedIndexInformer).

Let's present this comparison in a table format for clarity, followed by a detailed discussion.

| Feature | Dynamic Client (dynamic.Interface) | Typed Clients (kubernetes.Interface or generated clientsets) | Client-go Informers (cache.SharedIndexInformer) |
|---|---|---|---|
| Type Safety | Low (uses map[string]interface{} via unstructured.Unstructured) | High (uses generated Go structs like *appsv1.Deployment) | High when built on typed clients (event handlers receive typed objects from the cache); low when built on the Dynamic Client |
| Flexibility | Very High (works with any CRD, even unknown ones) | Low (requires compile-time knowledge and generated types for each CRD) | High (can be combined with Dynamic Client for CRDs, but typically typed) |
| Setup Complexity | Moderate (GVR construction, unstructured handling) | Low (straightforward Go struct usage) | High (List-Watch pattern, cache, indexers, event handlers, workqueues) |
| Performance | Moderate (map lookups, type assertions) | High (direct struct access) | High (efficient watch, local cache reduces API calls) |
| Resource Usage | Low (single watch connection, no cache overhead unless combined) | Low (single API call per operation) | Moderate to High (local cache, multiple goroutines for processing) |
| Data Consistency | Point-in-time for Get/List, event stream for Watch | Point-in-time for Get/List, event stream for Watch | High (guaranteed eventually consistent local cache) |
| Use Cases | Generic tools, auditing, dynamic operators, discovering CRDs | Simple CRUD, well-defined controllers, testing | Robust controllers, operators, complex state management, cache building |
| Compile-time Knowledge | None required for resource type (GVR required) | Full knowledge of resource type required | Full knowledge of resource type required (or use the Dynamic Client for the ListerWatcher) |
| Re-Watch/Reconciliation | Manual List-Watch loop with resourceVersion | Manual List-Watch loop when using Watch; otherwise N/A (single API calls) | Built-in (automatic re-watch, event handlers drive reconciliation) |

Detailed Discussion of Comparison:

- Type Safety vs. Flexibility:
  - Typed Clients are ideal when you have a fixed set of resources with known Go types. They provide the highest level of type safety, allowing the compiler to catch many errors, which leads to more robust and maintainable code.
  - Dynamic Client sacrifices type safety for maximum flexibility. By treating everything as unstructured.Unstructured, it can interact with any resource. This is invaluable when the resource types are not known at compile time, such as when building generic tools or dynamically discovering CRDs. Developers must perform runtime type assertions and map traversals, which require more vigilance.
  - Informers, when used with generated typed clients, offer the best of both worlds: they provide the robust event-driven, cached approach while allowing event handlers to operate on strongly typed Go objects. If combined with a Dynamic Client for ListerWatcher creation, they retain the flexibility of dynamic interaction while gaining the benefits of caching.
- Setup Complexity:
  - Typed Clients are generally the easiest to set up for basic CRUD operations.
  - Dynamic Client has moderate complexity; the core setup is simple, but handling unstructured.Unstructured objects and implementing robust List-Watch loops manually adds complexity.
  - Informers are the most complex to set up initially, because of their underlying mechanics (Reflector, DeltaFIFO, indexer) and the workqueue they are usually paired with. However, this complexity is offset by the robustness and efficiency they provide for continuous reconciliation.
- Performance and Resource Usage:
  - Typed Clients offer excellent performance for individual API calls due to direct Go struct manipulation. Their resource usage is low for simple operations.
  - Dynamic Client has slightly more overhead due to unstructured marshaling/unmarshaling and map operations. For simple watches, resource usage is low, as it primarily maintains a single HTTP connection.
  - Informers are highly efficient for continuous observation. While they use more memory for their local cache, they drastically reduce the number of API calls over time by only fetching incremental changes (watches) after an initial list. This makes them very performant in high-event environments where you need an always-up-to-date view of the cluster state.
- Data Consistency and Reconciliation:
  - Typed and Dynamic Clients (for Get/List) provide a snapshot of the resource state at the time of the API call. Watch provides a stream of events. For a controller that must keep state consistent, this stream requires careful management (e.g., using resourceVersion).
  - Informers are designed for eventually consistent state management. They guarantee that their local cache will eventually reflect the true state of the API server. Their event handlers feed the reconciliation loop, and together with a workqueue and idempotent reconcile logic, this lets your controller react to changes in a well-ordered manner.

When to Choose Which:

- Choose Typed Clients when:
  - You know all the resource types you'll be interacting with at compile time.
  - You need maximum type safety and IDE support.
  - You are building simple, single-purpose applications or quick scripts that perform CRUD operations on well-defined resources.
  - You are writing an operator for a single, specific CRD where code generation is feasible.
- Choose Dynamic Client when:
  - You need to interact with a wide variety of CRDs, some of which may not exist or be known at compile time.
  - You are building generic tools like cluster auditors, dashboards, or kubectl plugins that need to inspect arbitrary resources.
  - You are developing a dynamic operator that discovers and manages new CRD types on the fly.
  - You need to build a ListerWatcher for an informer for a custom or unknown resource type.
- Choose Informers (often combined with typed clients, or with the Dynamic Client for the ListerWatcher) when:
  - You are building a robust, production-grade controller or operator.
  - You need to maintain an eventually consistent, local cache of resources for quick lookups.
  - You need to process events in an ordered and reliable manner using workqueues.
  - You want to minimize API server load by reducing polling and sharing watch connections.

In summary, the Dynamic Client occupies a critical niche. It lets developers build highly flexible and adaptable Kubernetes applications that can interact with and watch all kinds of CRDs, even those yet to be conceived, unlocking the full extensibility potential of Kubernetes. When this internal extensibility is combined with external API management, such as the consistent and secure API gateway provided by platforms like APIPark, organizations achieve a comprehensive cloud-native infrastructure.

Future Trends and Evolution

The Kubernetes ecosystem is a testament to continuous innovation, and the way we interact with custom resources is no exception. The importance of the Dynamic Client and related patterns for watching CRDs is only set to grow as Kubernetes continues to expand its reach and capabilities. Several trends indicate this evolution:

- Increased Sophistication of Operators: As more complex applications are deployed on Kubernetes, the demand for sophisticated operators will rise. These operators will need to manage not just one but potentially many interdependent CRDs, often dynamically discovering and reacting to new ones as they are introduced or upgraded. The Dynamic Client's ability to create generic controllers will be foundational for these advanced operators, enabling them to be more resilient and adaptable to evolving application architectures. Operators will increasingly become domain-specific control planes, requiring the agility that dynamic resource interaction offers.

- Growth of Multi-Cluster and Federation Solutions: Managing CRDs across multiple Kubernetes clusters or in federated environments introduces new complexities. Tools that can generically watch and synchronize custom resources across clusters, or apply policies universally, will become vital. The Dynamic Client is ideally positioned to enable such cross-cluster generic orchestration, as it doesn't rely on pre-generated types specific to a single cluster's CRD set. This will allow for more seamless deployment and management of applications across diverse Kubernetes landscapes, where each cluster might have a slightly different set of custom resources.

- Enhanced Policy Enforcement and Governance: The ability to watch all kinds of CRDs dynamically is a cornerstone for building advanced policy enforcement and governance tools. Projects like Kyverno, OPA Gatekeeper, and others are already leveraging similar underlying mechanisms to validate, mutate, and generate resources based on policies. The Dynamic Client empowers developers to create custom policy engines that can observe changes to any CRD, ensuring compliance with organizational standards, security postures, and operational best practices. This will move Kubernetes towards a more self-regulating and compliant platform.

- Runtime Discovery and API Autonomy: The trend towards highly autonomous and self-healing systems in Kubernetes will further emphasize runtime discovery. Instead of hardcoding dependencies on specific CRDs, future systems will be better able to inspect the API server's capabilities at runtime (e.g., by listing all CustomResourceDefinition objects) and adapt their behavior dynamically. This moves away from tightly coupled components towards a more loosely coupled, event-driven architecture, where components react to the existence and state of resources rather than relying on prior knowledge.

- Simplified Development Experience for CRDs: While the Dynamic Client provides flexibility, the developer experience for creating and managing CRDs themselves continues to improve. Tools that simplify CRD schema definition, OpenAPI generation, and code generation will make it easier for developers to define their custom resources. This, in turn, will lead to an even richer ecosystem of CRDs, further increasing the need for generic tools that can interact with them using the Dynamic Client. Ongoing advancements in OpenAPI definitions for CRDs will also enable more robust client-side validation for dynamic operations.

- Integration with AI/ML Workflows: As AI and Machine Learning become integral parts of cloud-native applications, CRDs are increasingly used to define and manage AI workloads, model deployments, and data pipelines within Kubernetes. Tools that can dynamically watch these AI-specific CRDs will be essential for building intelligent schedulers, auto-scalers, and monitoring systems for AI/ML operations. This further highlights the synergy between internal Kubernetes extensibility (via the Dynamic Client and CRDs) and external AI API management provided by platforms like APIPark, which streamlines the exposure and consumption of AI models orchestrated within the cluster.

In conclusion, the Dynamic Client is not just a niche tool; it's a vital component in the evolving Kubernetes landscape. Its capability to dynamically interact with and watch all kinds of CRDs is fundamental to building the next generation of resilient, adaptable, and intelligent cloud-native applications and management tools, driving the future of Kubernetes extensibility and automation.

Conclusion

The Kubernetes Dynamic Client stands as a testament to the platform's commitment to extensibility and adaptability. In an ecosystem where Custom Resource Definitions (CRDs) are increasingly becoming the backbone for domain-specific applications and operational logic, the ability to interact with these resources programmatically, without needing prior compile-time knowledge of their Go types, is an indispensable capability.

Throughout this comprehensive exploration, we have delved into the intricacies of the Dynamic Client, understanding its fundamental reliance on unstructured.Unstructured objects and the critical role of GroupVersionResource (GVR) in identifying any Kubernetes resource. We navigated the essential steps of setting up a dynamic client, establishing robust watch mechanisms, and implementing the crucial List-Watch pattern to ensure event consistency and resilience against watch interruptions.

We examined how the Dynamic Client empowers developers to build advanced generic controllers, universal auditing tools, and flexible CLI extensions that can seamlessly adapt to the ever-changing landscape of custom resources within a Kubernetes cluster. We explored best practices for interacting with CRD spec and status fields, understanding the importance of schema validation backed by OpenAPI, and securing dynamic operations through rigorous RBAC and prudent sensitive data handling.

Furthermore, we highlighted the broader ecosystem integration, demonstrating how dynamic informers can combine the flexibility of the Dynamic Client with the robustness of client-go's caching mechanisms. Crucially, we described the complementary roles of internal Kubernetes extensibility (achieved through the Dynamic Client and CRDs) and external API management. For complex services deployed within Kubernetes, especially those involving AI models or requiring secure external exposure, platforms like APIPark emerge as vital components. APIPark is an open-source AI gateway and API management platform that provides secure, efficient, and scalable management of external API traffic, complementing the internal operational capabilities afforded by the Dynamic Client.

By understanding and effectively leveraging the Kubernetes Dynamic Client, developers are equipped to unlock the full potential of Kubernetes extensibility. This empowers them to construct more resilient, adaptable, and intelligent cloud-native solutions, capable of not only embracing the current diversity of custom resources but also seamlessly integrating with the unforeseen innovations that will undoubtedly shape the future of Kubernetes. The ability to watch all kinds of CRDs dynamically is not merely a technical detail; it is a foundational pillar for true Kubernetes mastery and future-proofing your cloud-native investments.

5 FAQs

1. What is the primary difference between client-go's typed clients and the Dynamic Client? The primary difference lies in how they handle resource types. Typed clients require compile-time knowledge of a resource's Go struct (which, for a CRD, usually has to be code-generated from its schema), providing strong type safety. The Dynamic Client, on the other hand, operates on unstructured.Unstructured objects, which wrap a generic map[string]interface{} (the Object field) holding the resource's JSON representation. This allows the Dynamic Client to interact with any resource, native or custom, without needing its specific Go type at compile time, offering immense flexibility at the cost of runtime type assertions and map traversals.

2. When should I choose the Dynamic Client over client-go informers for watching CRDs? You should choose the Dynamic Client's direct Watch method for simpler, single-purpose watch loops, especially when the overhead of an informer (caching, indexers) is unnecessary, or when you need to dynamically watch arbitrary CRDs that are discovered at runtime without setting up a full informer factory for each. For robust, production-grade controllers that require an eventually consistent local cache, efficient event processing, and automatic re-watches, informers are generally preferred. It's also possible to combine them by using the Dynamic Client to create a ListerWatcher for an informer.

3. How does the Dynamic Client handle security (RBAC)? The Dynamic Client fully respects Kubernetes Role-Based Access Control (RBAC). Any operation performed by the Dynamic Client is subject to the same RBAC checks by the Kubernetes API server as operations from typed clients. The service account or user credentials used to initialize the Dynamic Client must have the necessary get, list, watch, create, update, or delete permissions for the specific GroupVersionResource (GVR) it intends to interact with. Adhering to the principle of least privilege is crucial to prevent unauthorized access or modifications.

4. Can the Dynamic Client work with any Kubernetes resource, including native ones like Pods or Deployments? Yes. The Dynamic Client can interact with any Kubernetes resource, whether it is a built-in resource (like Pods, Deployments, Services, ConfigMaps) or a custom resource defined through a CustomResourceDefinition (CRD). The key is identifying the resource correctly by its GroupVersionResource (GVR). For example, Pods have the GVR ""/v1/pods (the empty group denotes core resources), and Deployments have apps/v1/deployments.

5. How can platforms like APIPark complement the use of the Dynamic Client in Kubernetes? While the Dynamic Client is excellent for internal Kubernetes operations, especially watching and reacting to CRDs, platforms like APIPark complement it by managing how services are exposed to and consumed by the outside world. If your CRDs orchestrate backend services or AI models, APIPark acts as an API gateway that provides secure, managed, and scalable access to those services for external applications or users. It offers centralized API governance, authentication, traffic management, and detailed logging for external API traffic; it also unifies request formats across AI models and can encapsulate prompts into REST APIs. The result is a complete solution in which internal Kubernetes extensibility (via the Dynamic Client) meets robust external API management (via APIPark).
