TPROXY vs eBPF: Which is Better for Network Proxying?

In modern distributed systems, network proxies route traffic, enforce policy, improve security, and optimize performance. As applications become more distributed, containerized, and microservice-driven, the demands on the underlying network grow with them. AI workloads and large language models (LLMs) raise the bar further, because they need efficient, low-latency, carefully managed traffic flows. Choosing the right technology for implementing network proxies is therefore a critical decision for architects and engineers.

This article examines two technologies that enable sophisticated network proxying on Linux: TPROXY and eBPF. Each takes a distinct approach to intercepting and manipulating network traffic, with its own advantages, limitations, and best-fit use cases. From traditional gateway functions to API gateway solutions and specialized LLM Proxy implementations, understanding the differences between TPROXY and eBPF is crucial for building robust, scalable, and observable network infrastructure. We will dissect their underlying mechanisms, evaluate their performance characteristics, explore their programmability and flexibility, and provide a framework for deciding which technology is "better" for a specific proxying problem.

1. The Ubiquitous Role of Network Proxying in Modern Systems

Network proxies, at their core, act as intermediaries for requests from clients seeking resources from other servers. Their presence in virtually every modern network architecture is not coincidental; it stems from a fundamental need to manage, secure, and optimize the flow of data across diverse and often hostile environments. Without proxies, direct client-to-server connections would expose backend services to a multitude of threats, lack centralized traffic control, and struggle with performance bottlenecks.

The necessity of proxies has only intensified with the paradigm shift towards cloud-native architectures, microservices, and container orchestration platforms like Kubernetes. In these environments, services are ephemeral, IP addresses change frequently, and traffic patterns are dynamic. Proxies help abstract away this complexity, providing a stable point of contact for clients while intelligently routing requests to healthy backend instances. They are the silent workhorses that enable load balancing, ensuring high availability and distributing incoming traffic across multiple servers to prevent overload. Beyond simple load distribution, proxies are instrumental in implementing fine-grained security policies, such as authentication, authorization, SSL termination, and protection against various cyber threats by inspecting and filtering traffic.

Proxies also improve performance through caching, which reduces latency by serving frequently requested content directly. They provide observability hooks as well, letting engineers inspect traffic patterns, identify bottlenecks, and monitor service health. This data is invaluable for troubleshooting, capacity planning, and proactive system maintenance. From simple HTTP proxies to sophisticated Layer 7 API gateway solutions that manage complex API ecosystems, proxying requirements have kept growing. AI services, particularly large language models (LLMs), add stricter requirements for an LLM Proxy: very low latency, intelligent request routing based on model capabilities or cost, and robust security for sensitive prompts and responses. Meeting these demands takes proxying technologies that are both efficient and flexible.

2. Deep Dive into TPROXY: The Transparent Linux Proxy

TPROXY, a long-standing feature within the Linux kernel, offers a powerful mechanism for implementing transparent proxies. Unlike traditional proxies that require clients to explicitly configure their network settings to route traffic through the proxy, a transparent proxy intercepts traffic without the client's knowledge, making the redirection seamless. This characteristic is particularly valuable in scenarios where client configuration is impractical or impossible, such as intercepting all outbound traffic from a subnet or transparently routing service mesh traffic.

2.1 What is TPROXY? Understanding its Core Mechanics

At its heart, TPROXY leverages the netfilter framework within the Linux kernel, specifically interacting with iptables rules, to redirect network packets. The fundamental distinction that sets TPROXY apart from other iptables redirection mechanisms like REDIRECT is its ability to preserve the original destination IP address of the intercepted packet.

When a packet matches a REDIRECT rule, iptables rewrites its destination address to that of the local machine (the proxy), making the proxy the new destination. This works for simple cases, but the packet itself no longer carries the original intended destination; a proxy can only recover it indirectly, by querying the connection-tracking table with the SO_ORIGINAL_DST socket option. For many proxying scenarios, especially those involving policy enforcement, logging, or services that rely on the original destination, rewriting the packet in this way is undesirable.
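For comparison with TPROXY, the sketch below shows how a proxy sitting behind a REDIRECT rule might recover the pre-NAT destination of an IPv4 TCP connection. The function names are illustrative, and the constant `SO_ORIGINAL_DST` is defined by hand on the assumption that Python's `socket` module does not export it; the lookup only succeeds for connections that were actually rewritten by netfilter.

```python
import socket
import struct

# Value from <linux/netfilter_ipv4.h>; defined here as an assumption that the
# Python socket module does not provide it.
SO_ORIGINAL_DST = 80


def parse_sockaddr_in(raw):
    """Decode a 16-byte struct sockaddr_in into (ip, port)."""
    # Layout: 2 bytes family (skipped), 2 bytes port in network order,
    # 4 bytes IPv4 address, 8 bytes padding.
    port, packed_ip = struct.unpack("!2xH4s8x", raw)
    return socket.inet_ntoa(packed_ip), port


def original_dst_after_redirect(conn):
    """Ask conntrack for the destination the client originally dialled."""
    raw = conn.getsockopt(socket.SOL_IP, SO_ORIGINAL_DST, 16)
    return parse_sockaddr_in(raw)
```

A proxy would call `original_dst_after_redirect(conn)` on each accepted connection. The extra lookup through connection tracking is the step that TPROXY makes unnecessary.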

TPROXY operates differently. When an iptables rule with the TPROXY target matches a packet, it leaves the destination IP untouched, marks the packet, and assigns it to a local socket that is listening in "transparent proxy" mode. The proxy application can then read the original destination IP address and port directly from the socket. With that information the proxy opens a new connection to the intended destination on behalf of the client. Because a socket with IP_TRANSPARENT set may also bind to a non-local address, the proxy can use the client's IP as the source of that upstream connection, so the backend sees the original client address and the illusion of transparency is complete.

The typical packet flow with TPROXY involves several steps:

1. Incoming Packet: A client sends a packet to a destination IP/port.
2. PREROUTING Chain: The packet enters the netfilter PREROUTING chain. An iptables rule (e.g., iptables -t mangle -A PREROUTING -p tcp --dport <target_port> -j TPROXY --on-ip <proxy_ip> --on-port <proxy_port> --tproxy-mark 0x1/0x1) matches the packet.
3. Marking and Redirection: The TPROXY target marks the packet (with a fwmark) and assigns it to the local socket where the proxy application is listening. A policy-routing rule keyed on that mark is what makes the kernel deliver the packet locally instead of forwarding it. Importantly, the destination IP/port of the packet itself remains unchanged.
4. Proxy Application: A user-space proxy application (e.g., Nginx, Envoy, or a custom daemon) listens on a socket with the IP_TRANSPARENT option set. For TCP, the local address of each accepted connection is the original destination; for UDP, the IP_RECVORIGDSTADDR option delivers it alongside each datagram.
5. New Connection: The proxy application then establishes a new connection to the original destination server, using its own IP address as the source or spoofing the original client's IP address (if configured and permitted by kernel routing).
6. Data Forwarding: The proxy forwards data between the client and the backend server.
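The kernel-side setup for steps 2 and 3 can be sketched as a small helper that assembles the commands an administrator would run as root. This is a minimal sketch: the function name, the routing table number 100, and the example ports are assumptions chosen for illustration, and the helper only builds the command strings so they can be reviewed before being executed.

```python
def tproxy_commands(dport, proxy_port, mark=0x1, table=100):
    """Return shell commands for a minimal TPROXY interception setup.

    dport      -- destination port of the traffic to intercept
    proxy_port -- local port the transparent proxy listens on
    mark/table -- fwmark value and routing table number (illustrative)
    """
    return [
        # Packets carrying the mark are looked up in a dedicated table...
        f"ip rule add fwmark {mark:#x} lookup {table}",
        # ...whose only route treats every destination as local, so the
        # kernel delivers the packet to a local socket instead of forwarding.
        f"ip route add local 0.0.0.0/0 dev lo table {table}",
        # Mark matching TCP packets and assign them to the proxy's port
        # without rewriting the destination address.
        f"iptables -t mangle -A PREROUTING -p tcp --dport {dport} "
        f"-j TPROXY --on-port {proxy_port} --tproxy-mark {mark:#x}/{mark:#x}",
    ]


if __name__ == "__main__":
    for command in tproxy_commands(80, 50080):
        print(command)
```

Generating the rules from one function keeps the mark and table number consistent across the three commands, which is an easy thing to get wrong when they are typed by hand.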

This intricate dance ensures that from the perspective of both the client and the backend server, the proxy remains largely invisible, facilitating a wide array of network management capabilities without requiring application-level modifications.
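On the user-space side, a transparent TCP listener could look roughly like the sketch below. The function names are hypothetical, and the fallback value 19 for `IP_TRANSPARENT` is taken from the Linux headers in case the running Python build does not export the constant. Setting the option needs CAP_NET_ADMIN, so the `transparent` flag can be switched off to try the code as an ordinary listener.

```python
import socket

# Linux value from <linux/in.h>, used only if the socket module lacks it.
IP_TRANSPARENT = getattr(socket, "IP_TRANSPARENT", 19)


def open_listener(port, transparent=True):
    """Create a TCP listener; with transparent=True it can accept
    connections diverted to it by a TPROXY rule."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    if transparent:
        # Requires CAP_NET_ADMIN; raises PermissionError otherwise.
        sock.setsockopt(socket.SOL_IP, IP_TRANSPARENT, 1)
    sock.bind(("0.0.0.0", port))
    sock.listen(128)
    return sock


def accept_with_original_dst(listener):
    """Accept one connection and return (conn, client_addr, original_dst)."""
    conn, client_addr = listener.accept()
    # For a TPROXY-diverted TCP connection, the accepted socket's local
    # address is the address the client originally dialled.
    original_dst = conn.getsockname()
    return conn, client_addr, original_dst
```

The proxy would then connect to `original_dst` itself and relay bytes in both directions. Presenting the client's address to the backend would additionally require binding the upstream socket, also with `IP_TRANSPARENT` set, to `client_addr` before connecting.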

2.2 Key Features and Advantages of TPROXY

TPROXY's design offers several significant advantages, making it a viable choice for specific proxying requirements:

- Preservation of Original Source and Destination IP Addresses: This is arguably TPROXY's most compelling feature. By maintaining the original client source IP when connecting to the backend (through socket options like IP_TRANSPARENT and appropriate routing), and by allowing the proxy to see the original destination IP, TPROXY provides critical context for various network functions. For API gateway implementations, this means accurate client logging, IP-based access control, and geo-targeting can be performed effectively. For an LLM Proxy, knowing the client's true origin is vital for usage tracking, billing, and applying regional access policies.

- Simplicity of Integration with Existing netfilter Rules: TPROXY integrates seamlessly into the well-established netfilter framework and iptables tooling. For administrators already familiar with iptables for firewalling and NAT, extending their configurations to include transparent proxying with TPROXY requires learning a new target and some socket options, rather than an entirely new system. This reduces the learning curve and allows for leveraging existing infrastructure and operational knowledge.

- Broad Compatibility and Maturity: TPROXY has been a part of the Linux kernel for a considerable period. This maturity translates into robust stability, extensive testing across various kernel versions and distributions, and a large body of documentation and community support. Its long-standing presence means it's generally well-understood and has fewer unforeseen edge cases compared to newer, rapidly evolving technologies.

- Established Ecosystem: There's a mature ecosystem of tools and applications that either natively support TPROXY or can be configured to do so. Many common load balancers, reverse proxies, and service mesh data planes (when using iptables for traffic interception) leverage TPROXY for its transparent capabilities. This ecosystem makes deployment and troubleshooting relatively straightforward for standard use cases.

- No Client-Side Configuration Required: As a transparent proxy, TPROXY removes the burden of configuring each client application or device to use the proxy. This is invaluable in scenarios involving legacy applications, third-party software, or large numbers of clients where manual configuration is impractical or impossible.

2.3 Limitations and Challenges of TPROXY

Despite its advantages, TPROXY comes with its own set of limitations and challenges that can become significant hurdles in high-performance or highly dynamic environments:

- Kernel Space Involvement and Context Switching: While TPROXY operates in the kernel, the actual proxy logic typically resides in user space. Data intercepted by TPROXY and handled by the user-space proxy therefore crosses the kernel/user boundary at least twice: once when the proxy reads it, and again when the proxy writes it back out. In high-throughput scenarios, these transitions and the accompanying data copies introduce significant overhead and can become a performance bottleneck, limiting the maximum packet processing rate.

- iptables Complexity and Scalability: Managing iptables rules, especially large and intricate sets, can become extremely complex and error-prone. In dynamic environments, where services are constantly being added, removed, or scaled, programmatically managing iptables rules can be cumbersome. Large rule sets also require the kernel to iterate through many rules for each packet, leading to increased latency and CPU utilization. This can be a major issue for a scalable API gateway or LLM Proxy that might need to apply different policies based on hundreds or thousands of routes.

- Lack of Fine-Grained Programmability: TPROXY itself is a fixed kernel mechanism; it simply redirects packets. The actual intelligence and custom logic of the proxy must be implemented in user space. This separation limits the ability to perform complex packet manipulation or routing decisions directly within the kernel. For example, implementing sophisticated load balancing algorithms, advanced security checks, or application-layer parsing (like HTTP header manipulation for an API gateway) requires pushing packets up to user space, incurring the context switching cost.

- Performance Bottlenecks with High Traffic: The combination of iptables rule processing overhead and repeated context switching means that TPROXY-based solutions can struggle to keep pace with extremely high traffic volumes or low-latency requirements. While sufficient for many applications, it might not be optimal for ultra-high-performance use cases that demand near wire-speed processing.

- Limited Observability and Debugging: While iptables provides basic packet and byte counters, obtaining deep, fine-grained insights into the network traffic processed by TPROXY requires additional tooling. Debugging issues can involve tracing netfilter rules, examining socket states, and inspecting user-space proxy logs, which can be a multi-faceted and challenging process. It lacks the built-in, programmable introspection capabilities that more modern kernel technologies offer.
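The scalability point about rule sets can be illustrated with a toy model. The sketch below is not how the kernel is implemented; it only contrasts a first-match scan over an ordered rule list, which is how an iptables chain is evaluated, with a single keyed lookup of the kind a hash table provides. The rule contents and backend names are made up for the example.

```python
def linear_match(rules, dport):
    """First-match scan over an ordered rule list.

    Returns (target, rules_checked) so the cost of the scan is visible.
    """
    for checked, (port, target) in enumerate(rules, start=1):
        if port == dport:
            return target, checked
    return None, len(rules)


def hashed_match(table, dport):
    """Single keyed lookup, independent of how many entries exist."""
    return table.get(dport)


# 1000 illustrative routes: port 8000 + i goes to "backend-i".
rules = [(8000 + i, f"backend-{i}") for i in range(1000)]
table = dict(rules)

if __name__ == "__main__":
    target, checked = linear_match(rules, 8999)
    print(f"linear scan: {target} after checking {checked} rules")
    print(f"keyed lookup: {hashed_match(table, 8999)}")
```

A packet that matches the last rule pays for every rule before it, while the keyed lookup costs roughly the same for any entry. This difference grows with the number of routes.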

3. Deep Dive into eBPF: The Programmable Kernel

Extended Berkeley Packet Filter, or eBPF, represents a revolutionary paradigm shift in how programs interact with the Linux kernel. Evolving from the classic BPF (cBPF) designed solely for packet filtering, eBPF transforms the kernel into a programmable environment, allowing users to run custom, safe, sandboxed programs directly within kernel space. This unprecedented level of kernel programmability opens up vast possibilities for networking, security, tracing, and observability, profoundly impacting how modern infrastructure operates.

3.1 What is eBPF? Unlocking Kernel Superpowers

At its core, eBPF allows developers to write small programs (often in a restricted C-like language) that can be attached to various hook points within the kernel. These hook points include network events (like packet ingress/egress), system calls, kernel tracepoints, user-space probe points (uprobes), and many others. When an event occurs, the associated eBPF program is executed. This execution happens directly in kernel space, avoiding the overhead of context switching inherent in user-space solutions.

The magic of eBPF lies in its meticulous design for safety and efficiency:

- Verifier: Before an eBPF program is loaded into the kernel, a sophisticated verifier statically analyzes the program. It ensures that the program terminates, does not contain loops that could hang the kernel, does not access invalid memory, and adheres to strict security rules. This guarantees kernel stability and security.
- Just-In-Time (JIT) Compiler: Once verified, the eBPF bytecode is translated by a JIT compiler into native machine code optimized for the host architecture. This ensures that eBPF programs run with near-native CPU efficiency, making them incredibly fast.
- Maps: eBPF programs can interact with data structures called "maps." These are key-value stores that can be shared between eBPF programs and between eBPF programs and user-space applications. Maps enable stateful operations, configuration updates, and efficient data exchange.
- Helper Functions: The kernel exposes a set of well-defined "helper functions" that eBPF programs can call to perform specific tasks, such as looking up data in maps, generating random numbers, or redirecting packets. These helpers ensure that eBPF programs operate within a controlled and safe environment.
- Hooks: eBPF programs can be attached to a wide array of kernel hook points, offering granular control over different aspects of the system. For networking, key hooks include XDP (eXpress Data Path) for very early packet processing, tc (traffic control) for packet classification and manipulation, and socket filters for application-level control.

For network proxying, eBPF programs can intercept packets at extremely low levels of the network stack, sometimes even before the full network stack processing begins (with XDP). This allows for lightning-fast decision-making, packet manipulation, and redirection, all within the kernel, bypassing the need for user-space intervention for many common proxy tasks.
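To make the pieces above concrete, here is a minimal sketch that uses the BCC Python bindings to load a small XDP program. It assumes the `bcc` package is installed and that the script runs as root; the program name `count_protocols`, the map name `proto_count`, and the helper function names are illustrative. The program counts IPv4 packets per protocol number in a map and passes every packet on unchanged.

```python
# Restricted-C source compiled by BCC; the bounds checks before each header
# access are what the verifier requires before packet data may be read.
XDP_PROGRAM = r"""
#include <uapi/linux/bpf.h>
#include <linux/if_ether.h>
#include <linux/ip.h>

BPF_HASH(proto_count, u32, u64);

int count_protocols(struct xdp_md *ctx) {
    void *data = (void *)(long)ctx->data;
    void *data_end = (void *)(long)ctx->data_end;

    struct ethhdr *eth = data;
    if ((void *)(eth + 1) > data_end)
        return XDP_PASS;
    if (eth->h_proto != htons(ETH_P_IP))
        return XDP_PASS;

    struct iphdr *ip = (void *)(eth + 1);
    if ((void *)(ip + 1) > data_end)
        return XDP_PASS;

    u32 key = ip->protocol;
    proto_count.increment(key);
    return XDP_PASS;
}
"""


def attach_counter(device):
    """Compile, verify, and attach the program to a network device."""
    from bcc import BPF  # imported lazily so this module loads without bcc

    bpf = BPF(text=XDP_PROGRAM)
    fn = bpf.load_func("count_protocols", BPF.XDP)
    bpf.attach_xdp(device, fn, 0)
    return bpf


def read_counts(bpf):
    """Read the shared map from user space as {protocol: packets}."""
    return {key.value: value.value for key, value in bpf["proto_count"].items()}
```

A caller would run `bpf = attach_counter("eth0")` for some interface name, poll `read_counts(bpf)`, and finally detach with `bpf.remove_xdp("eth0", 0)`. The map is the only channel between the in-kernel program and the Python process, which mirrors the split between data path and control plane described above.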

3.2 Key Features and Advantages of eBPF for Proxying

eBPF's capabilities translate into a multitude of compelling advantages for network proxying, addressing many limitations of older technologies:

- Unparalleled Programmability and Flexibility: This is eBPF's cornerstone. Developers can write custom logic to inspect, modify, drop, or redirect packets based on virtually any criteria extractable from the packet header or payload. This allows for highly sophisticated traffic engineering, custom load balancing algorithms, advanced security policies, and application-aware routing that were previously impossible or extremely difficult to achieve without modifying the kernel itself. For an API gateway or an LLM Proxy, eBPF can enable dynamic routing keyed on attributes such as API version, user subscription, or model cost, provided those attributes are made available to the in-kernel program (for example, through a map populated from user space).

- Exceptional Performance and Efficiency: By executing programs directly in kernel space, eBPF minimizes context switching overhead, which is a major bottleneck for user-space proxies. Combined with the JIT compiler, eBPF programs achieve near-native execution speed. For extremely high-throughput and low-latency environments, such as those required by modern API gateway solutions or an LLM Proxy processing thousands of requests per second, eBPF delivers significant performance gains. XDP, a specific type of eBPF program, can process packets even before they hit the full Linux network stack, which makes it well suited to denial-of-service mitigation and load balancing.

- Deep Visibility and Observability: eBPF provides deep introspection into network traffic and kernel operations. Custom eBPF programs can extract detailed metrics, trace packet paths, log specific events, and generate real-time telemetry, all from within the kernel. This granular observability is crucial for identifying performance bottlenecks, debugging complex network issues, and gaining comprehensive insights into service behavior. For operations teams managing a large API gateway or an LLM Proxy, eBPF can provide the critical data needed for proactive monitoring and rapid incident response.

- Enhanced Security: The eBPF verifier checks every program before it is loaded into the kernel, rejecting code that could compromise kernel stability. Furthermore, eBPF programs can be used to implement advanced security features, such as network policy enforcement, micro-segmentation, and intrusion detection, by analyzing and filtering traffic at the earliest possible point in the network stack. This makes it a strong fit for securing sensitive API gateway endpoints and protecting proprietary LLM models via an LLM Proxy.

- Dynamic Updateability without Kernel Recompilation: eBPF programs can be loaded, updated, and unloaded dynamically without requiring a kernel reboot or recompilation. This flexibility is a game-changer for maintaining dynamic infrastructure, allowing for rapid deployment of new features, bug fixes, or policy changes without service interruption.

- Reduced Overhead and Resource Consumption: By processing packets efficiently in the kernel and avoiding unnecessary data copies between kernel and user space, eBPF solutions generally consume fewer CPU cycles and memory resources compared to traditional user-space proxies, especially at high traffic volumes.

- Seamless Integration with Service Mesh and Container Orchestration: Projects like Cilium have pioneered the use of eBPF to power high-performance, sidecar-less service meshes in Kubernetes, demonstrating its capabilities for advanced traffic management, policy enforcement, and observability in containerized environments.

3.3 Limitations and Challenges of eBPF

While eBPF offers compelling advantages, it also introduces its own set of challenges, particularly related to its complexity and the expertise required:

- Complexity of Development and Debugging: Writing eBPF programs requires a deep understanding of kernel internals, networking stacks, and the specific eBPF programming model (BPF C). Tools like BCC and libbpf simplify development, but the learning curve is steep. Debugging eBPF programs, which run in the kernel and interact with complex kernel structures, can be significantly more challenging than debugging user-space applications. This specialized knowledge can be a barrier to entry for many development teams.

- Kernel Version Dependency: While the eBPF framework itself is stable, specific eBPF features, helper functions, and available hook points can vary between different Linux kernel versions. This dependency means that eBPF solutions might need to be tested and potentially adapted for specific kernel versions, which can complicate deployments across diverse environments. Newer kernels generally offer more advanced eBPF capabilities.

- Security Concerns (Perceived vs. Real): Despite the robust security guarantees provided by the eBPF verifier, the concept of running custom code directly in the kernel can initially raise security concerns for some organizations. Education and clear understanding of the verifier's role are essential to mitigate these perceptions. While the verifier prevents accidental kernel crashes or malicious direct memory access, a poorly designed eBPF program could still potentially cause performance degradation or unintended network behavior.

- Resource Management: While efficient, eBPF programs are still kernel resources. Care must be taken in program design to avoid excessive resource consumption (e.g., CPU cycles due to complex logic, or memory for large maps). The verifier has limits on program size and complexity to prevent resource exhaustion, but efficient coding practices remain paramount.

- Lack of Direct Application-Layer Logic (without user-space bridge): While eBPF can inspect and modify network packets, performing complex application-layer processing (like full HTTP body parsing, JSON schema validation for an API gateway, or complex prompt manipulation for an LLM Proxy) usually still requires a user-space component. eBPF can efficiently steer traffic to the correct user-space proxy instance and perform initial filtering, but deep application logic often remains in user space due to the limitations of kernel-level programming. However, sophisticated eBPF programs can offload a significant portion of the decision-making, reducing the workload on user-space proxies.


4. Direct Comparison: TPROXY vs. eBPF for Network Proxying

Having explored TPROXY and eBPF in detail, we can now compare their capabilities directly. The choice between them for network proxying hinges on performance, flexibility, complexity, and specific use case requirements. This section provides a feature-by-feature comparison and discusses their suitability for different scenarios, from generic gateway functions to specialized API gateway and LLM Proxy implementations.

Comparative Analysis Table

Let's summarize the key differences and similarities in a structured format:

| Feature | TPROXY | eBPF |
|---|---|---|
| Core Mechanism | netfilter (iptables) based transparent redirection | Programmable kernel bytecode execution at various hook points (XDP, tc, syscalls) |
| Execution Location | Kernel (redirection), User-space (proxy logic) | Purely Kernel-space (program execution), with optional User-space control/data plane |
| Source IP Preservation | Yes (requires IP_TRANSPARENT socket option in user-space proxy) | Yes (inherently, as packets are processed in-kernel without modification unless specified) |
| Performance | Good for moderate loads, but suffers from context switching overhead at high rates; iptables rule matching adds latency. | Excellent for high-performance and low-latency workloads; near-native speed with JIT compilation, minimal context switches. |
| Programmability | Fixed kernel functionality; proxy logic must be in user space. | Highly programmable; custom logic can be written and executed directly in the kernel. |
| Flexibility | Limited to netfilter's capabilities for redirection. | Extremely flexible; can intercept, modify, drop, and redirect packets based on complex custom rules. |
| Complexity (Setup) | Relatively straightforward setup using iptables and standard user-space proxies. | Steeper learning curve for eBPF program development; requires specialized BPF C and tooling (BCC, libbpf). |
| Complexity (Operation) | Managing large iptables rule sets can be complex; debugging is multi-layered. | Operational deployment of compiled eBPF programs can be simpler; rich observability tools simplify debugging. |
| Observability | Basic iptables counters; requires external tools for deep insights. | Built-in, highly granular observability; custom metrics and tracing directly from kernel. |
| Deployment | Widely supported across Linux distributions, mature and stable. | Kernel version dependent; rapidly evolving, requires newer kernels for full feature set. |
| Primary Use Cases | Transparent load balancers, simple transparent proxies, basic gateway functions, some legacy API gateway implementations. | High-performance service meshes, advanced traffic engineering, sophisticated security policies, next-gen API gateway and LLM Proxy solutions, observability. |
| Maturity | Very mature, long-standing kernel feature. | Rapidly maturing, active development, cutting-edge technology. |

Discussion: When to Choose Which

The decision between TPROXY and eBPF is rarely a matter of one being universally "better" than the other. Instead, it's about aligning the technology with specific project requirements, existing infrastructure, and team expertise.

Performance: A Clear eBPF Lead

For scenarios that demand very high throughput or very low latency, eBPF has a clear lead. In-kernel execution and JIT compilation let it handle many packets without ever crossing into user space, avoiding the context switching overhead that constrains user-space proxies fed by TPROXY. This makes eBPF well suited to demanding API gateway deployments with very high request rates, or to an LLM Proxy where added latency affects user experience or cost. TPROXY is efficient for moderate loads, but its performance ceiling is lower than eBPF's.

Flexibility and Programmability: eBPF's Defining Strength

If your proxying needs go beyond simple redirection and require custom in-kernel logic, dynamic routing based on complex conditions, or sophisticated packet manipulation, eBPF is the stronger choice. TPROXY, by design, is a static redirection mechanism. All intelligent decision-making must be offloaded to user space, limiting what can be done in-kernel and adding latency. eBPF lets developers embed this intelligence directly into the kernel, enabling use cases like:

- Dynamic Load Balancing: Custom algorithms based on application-level metrics, not just IP hash or round-robin.
- Advanced Policy Enforcement: Fine-grained access control based on granular packet data or application headers.
- Service Mesh Sidecar Optimization: Building "sidecar-less" service meshes that offload much of the proxying logic from dedicated sidecar containers to eBPF programs, reducing resource consumption and latency.
- LLM Proxy Intelligence: Steering LLM requests to different models based on token count, user tier, cost-effectiveness, or real-time model performance, with the in-kernel program applying decisions that a user-space component computes from the request content.
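The LLM routing use case can be sketched as a small policy function. Everything here is hypothetical: the `Model` fields, the model names, the prices, and the tier numbers are invented for illustration. The policy itself runs in user space, since it depends on values parsed from the request; in an eBPF-based design, only the resulting steering decision would be pushed down to the kernel.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Model:
    name: str           # hypothetical model identifier
    max_tokens: int     # largest request the model accepts
    cost_per_1k: float  # illustrative price per 1,000 tokens
    min_tier: int       # lowest user tier allowed to use the model


def choose_model(models: Sequence[Model], token_count: int,
                 user_tier: int) -> Optional[Model]:
    """Pick the cheapest model that fits the request and the user's tier."""
    eligible = [
        m for m in models
        if m.max_tokens >= token_count and m.min_tier <= user_tier
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda m: m.cost_per_1k)


# Invented catalogue used only to exercise the policy.
CATALOGUE = [
    Model("small", max_tokens=4_000, cost_per_1k=0.10, min_tier=0),
    Model("medium", max_tokens=16_000, cost_per_1k=0.50, min_tier=1),
    Model("large", max_tokens=128_000, cost_per_1k=2.00, min_tier=2),
]

if __name__ == "__main__":
    print(choose_model(CATALOGUE, token_count=10_000, user_tier=2))
```

Keeping the policy a pure function of the request attributes makes it easy to test and to swap out, independent of whether TPROXY or eBPF delivers the traffic to the proxy.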

Observability: eBPF's Diagnostic Superpower

Modern distributed systems are notoriously difficult to debug and monitor. eBPF helps by allowing custom programs to extract a wide range of data points from the kernel and present them in real time. This is a major advantage over TPROXY, which offers only basic iptables counters. With eBPF, you can get detailed metrics on packet drops, latency at different points in the network stack, specific request attributes, and much more, with low overhead. For an API gateway or an LLM Proxy, this level of insight is invaluable for proactive monitoring, performance tuning, and rapid troubleshooting.

Complexity: A Trade-off

Setting up basic transparent proxying with TPROXY and iptables is generally simpler for those already familiar with Linux networking tools. The learning curve is less steep for fundamental tasks. However, managing complex iptables rule sets for thousands of services can quickly become a significant operational burden.

eBPF, on the other hand, has a much higher initial learning curve for program development. Writing, verifying, and deploying eBPF programs requires specialized skills and tooling. However, once developed and deployed, eBPF solutions can simplify operational complexity by consolidating logic, reducing the number of moving parts, and providing superior diagnostics. For teams building next-generation infrastructure, investing in eBPF expertise can yield long-term benefits in terms of efficiency and capability.

Use Cases: Matching Technology to Need

- TPROXY is still relevant for:

  - Simpler transparent proxying needs where performance is not the absolute critical factor.

  - Environments with older kernel versions where eBPF might not be fully supported.

  - Scenarios where existing iptables expertise is abundant, and the custom logic is minimal or can be fully handled in user space without extreme performance penalties.

  - Basic gateway functions where packet redirection is the primary concern.

- eBPF is the preferred choice for:

  - High-performance, low-latency applications like modern API gateway solutions, especially those handling intensive loads from AI services or microservices.

  - Advanced traffic engineering, including complex load balancing, rate limiting, and sophisticated routing.

  - Service mesh implementations that aim for high efficiency and reduced resource footprint (e.g., sidecar-less architectures).

  - Deep security enforcement and network policy management at kernel speed.

  - Any scenario demanding unparalleled observability and real-time telemetry from the network stack.

  - Specialized services like an LLM Proxy where dynamic routing, intelligent policy application, and detailed monitoring of AI interactions are paramount.

5. Real-World Applications and Synergies

The comparison between TPROXY and eBPF becomes concrete when we look at how they are used in modern system architectures. From service meshes to high-performance API gateway solutions, these technologies underpin much of today's resilient and scalable infrastructure.

5.1 Transparent Proxying in Service Meshes

Service meshes have revolutionized how microservices communicate, providing capabilities like traffic management, security, and observability at the application layer. Transparent proxying is a foundational requirement for service meshes, allowing the mesh's data plane (typically implemented as a sidecar proxy like Envoy) to intercept and manage all inbound and outbound traffic for a service without requiring application changes.

Historically, iptables has been the workhorse for this transparent interception, using either the REDIRECT or the TPROXY target. When a pod is deployed in Kubernetes with a service mesh sidecar, iptables rules are injected into the pod's network namespace. These rules redirect the application's inbound and outbound traffic through the sidecar proxy. The sidecar then recovers the original destination, which TPROXY preserves on the socket itself, so it can forward the request correctly while applying mesh policies. This approach is well-understood and widely deployed.

However, the iptables-based sidecar model comes with overhead: each sidecar adds its own resource consumption, and iptables rule processing can become a bottleneck in large clusters. This is where eBPF is ushering in a new era. Projects like Cilium have demonstrated how eBPF can replace iptables and even some sidecar functionalities. By attaching eBPF programs to the network interface (e.g., using XDP or tc hooks), traffic can be intercepted and processed directly in the kernel, bypassing the need for sidecar processes to handle every packet. These eBPF programs can perform:

- Transparent Proxying: Redirection to a proxy without iptables rules.

- Policy Enforcement: Network policies applied at kernel speed.

- Load Balancing: Efficient in-kernel load balancing.

- Observability: Generating metrics and tracepoints directly from the kernel.

This eBPF-driven approach can significantly reduce latency, improve throughput, and decrease resource consumption, leading to more efficient and scalable service meshes. While the sidecar model still has its place for complex application-layer functionalities that eBPF cannot (yet) fully handle, eBPF is pushing the boundaries of what's possible in the kernel.
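The in-kernel load balancing mentioned above usually comes down to mapping each flow to a backend in a stable way. The sketch below is a user-space Python model of that idea, not eBPF code: it hashes a connection's 5-tuple to pick a backend. The `FlowKey` type, the backend addresses, and the use of SHA-256 are assumptions made for illustration; a real eBPF data plane would keep its backend table in BPF maps and use its own hashing and connection tracking.

```python
import hashlib
from typing import NamedTuple


class FlowKey(NamedTuple):
    """The classic 5-tuple that identifies a connection."""
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str


def pick_backend(flow: FlowKey, backends: list[str]) -> str:
    """Map a flow to a backend by hashing its 5-tuple.

    The same flow always lands on the same backend as long as the
    backend list is unchanged.
    """
    if not backends:
        raise ValueError("no backends configured")
    digest = hashlib.sha256("|".join(map(str, flow)).encode()).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


if __name__ == "__main__":
    # Hypothetical service address and backend pool.
    pool = ["10.0.0.11:8080", "10.0.0.12:8080", "10.0.0.13:8080"]
    flow = FlowKey("192.0.2.10", 40312, "10.96.0.1", 80, "tcp")
    print(pick_backend(flow, pool))
```

Plain modulo hashing reshuffles many flows when the pool changes, which is one reason production load balancers tend to combine hashing with connection tracking or a consistent-hashing scheme.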

5.2 API Gateways and LLM Proxies

The demands on modern api gateway solutions have grown tremendously. No longer just simple reverse proxies, they are now central to managing complex API ecosystems, enforcing security, handling traffic, providing analytics, and integrating diverse services. With the explosion of AI services, particularly LLMs, a new category of specialized proxies, the LLM Proxy, has emerged, facing even more stringent requirements.

An api gateway typically sits at the edge of a network, routing requests to various backend services. For optimal performance, such a gateway needs highly efficient underlying proxying mechanisms. Whether it uses TPROXY for its initial transparent interception or leverages eBPF for more advanced, in-kernel traffic steering and policy application, the efficiency of this layer directly impacts the overall performance of the api gateway. Features like rate limiting, circuit breaking, authentication, and authorization, while implemented at the application layer within the api gateway, benefit immensely from fast and reliable packet handling beneath.
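Rate limiting, one of the application-layer features named above, is commonly built on a token bucket. The following is a minimal sketch under that assumption; the class name and parameters are illustrative and do not describe any particular gateway's implementation. The clock is injectable so the behaviour can be checked deterministically.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` and a sustained `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, capacity: float, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity          # start with a full bucket
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend `cost` tokens if the request may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(rate_per_sec=5.0, capacity=10.0)
    decisions = [bucket.allow() for _ in range(12)]
    print(decisions.count(True), "of 12 requests admitted in the initial burst")
```

The `cost` argument lets a caller charge more than one token per request, for example by request size, without changing the refill logic.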

Consider a platform like APIPark. APIPark is described as an all-in-one AI gateway and API developer portal that helps developers and enterprises manage, integrate, and deploy AI and REST services with ease. Its key features include quick integration of 100+ AI models, a unified API format for AI invocation, prompt encapsulation into REST APIs, and end-to-end API lifecycle management. APIPark also claims performance rivaling Nginx, achieving over 20,000 TPS with an 8-core CPU and 8GB of memory, and supports cluster deployment for large-scale traffic.

APIPark's core value lies in its application-layer intelligence for API management and AI integration, but throughput at this level also depends on an efficient networking foundation. Whether that foundation is a traditional high-performance proxy configured with TPROXY or an eBPF-based data path for advanced traffic management, a robust proxying mechanism is essential for such a platform to deliver its promised performance, especially under the intensive demands of an LLM Proxy. LLM Proxy functionality within APIPark would build on these layers to route requests to different LLM providers based on cost, performance, or specific model capabilities, apply rate limits to prevent abuse, cache responses, and enforce fine-grained access policies, all while maintaining low latency and high reliability. The detailed API call logging and data analysis features of APIPark can likewise be complemented by the kernel-level observability that eBPF excels at providing. You can learn more about APIPark and its capabilities at ApiPark.

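The specific needs of an LLM Proxy highlight the advanced capabilities that eBPF can provide:

- Dynamic Routing: Based on real-time LLM provider costs, API quotas, or performance metrics.

- Security for Prompts: Filtering and redacting sensitive information within prompts at kernel speed.

- Advanced Rate Limiting: Granular rate limits per user, per model, or per token usage, enforced efficiently in-kernel.

- Observability for AI Workloads: Detailed insights into AI request/response flows, latency, and error rates, crucial for managing and optimizing LLM interactions.

In practice, the payload-dependent items in this list, such as prompt redaction and per-token limits, generally still involve the user-space proxy, because prompts normally travel inside TLS-encrypted requests that in-kernel programs cannot read directly. eBPF contributes most at the connection and packet level.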
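The dynamic routing item can be illustrated with a small selection function. This is a sketch of one plausible policy (cheapest provider that has enough quota and meets a latency bound), written as ordinary proxy-side Python rather than in-kernel code. The `Provider` fields, provider names, and numbers are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Provider:
    name: str
    cost_per_1k_tokens: float   # hypothetical price unit
    p95_latency_ms: float       # recently observed latency
    remaining_quota: int        # tokens left in the current window


def choose_provider(
    providers: list[Provider], needed_tokens: int, max_latency_ms: float
) -> Optional[Provider]:
    """Pick the cheapest provider with enough quota and acceptable latency."""
    eligible = [
        p for p in providers
        if p.remaining_quota >= needed_tokens and p.p95_latency_ms <= max_latency_ms
    ]
    if not eligible:
        return None
    # Cost first, latency as the tie-breaker.
    return min(eligible, key=lambda p: (p.cost_per_1k_tokens, p.p95_latency_ms))


if __name__ == "__main__":
    pool = [
        Provider("provider-a", 0.50, 900, 10_000),
        Provider("provider-b", 0.20, 2_500, 10_000),
        Provider("provider-c", 0.30, 800, 500),
    ]
    choice = choose_provider(pool, needed_tokens=1_000, max_latency_ms=1_000)
    print(choice.name if choice else "no provider available")
```

Returning `None` when nothing qualifies leaves the fallback decision (queue, reject, or relax the latency bound) to the caller.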

While a traditional api gateway might leverage TPROXY for initial traffic interception, the future of high-performance, intelligent api gateway solutions, especially those dealing with dynamic AI workloads, points strongly towards eBPF for its unparalleled flexibility and performance in the kernel.

5.3 Future Trends: Hardware Offloading and Specialized eBPF Hardware

The evolution of network proxying is relentless. One significant future trend involves hardware offloading. Some SmartNICs (smart Network Interface Cards) can already execute eBPF programs on the card itself, pushing packet processing even closer to the wire and approaching line-rate speeds with minimal host CPU utilization. For ultra-high-performance applications and specialized gateway services, hardware-accelerated eBPF could be a game-changer, further cementing eBPF's position as a cornerstone technology for future network architectures. This not only boosts performance but also frees up valuable CPU cycles for application logic, benefiting platforms like APIPark in their quest for ever-higher TPS rates for AI and REST services.

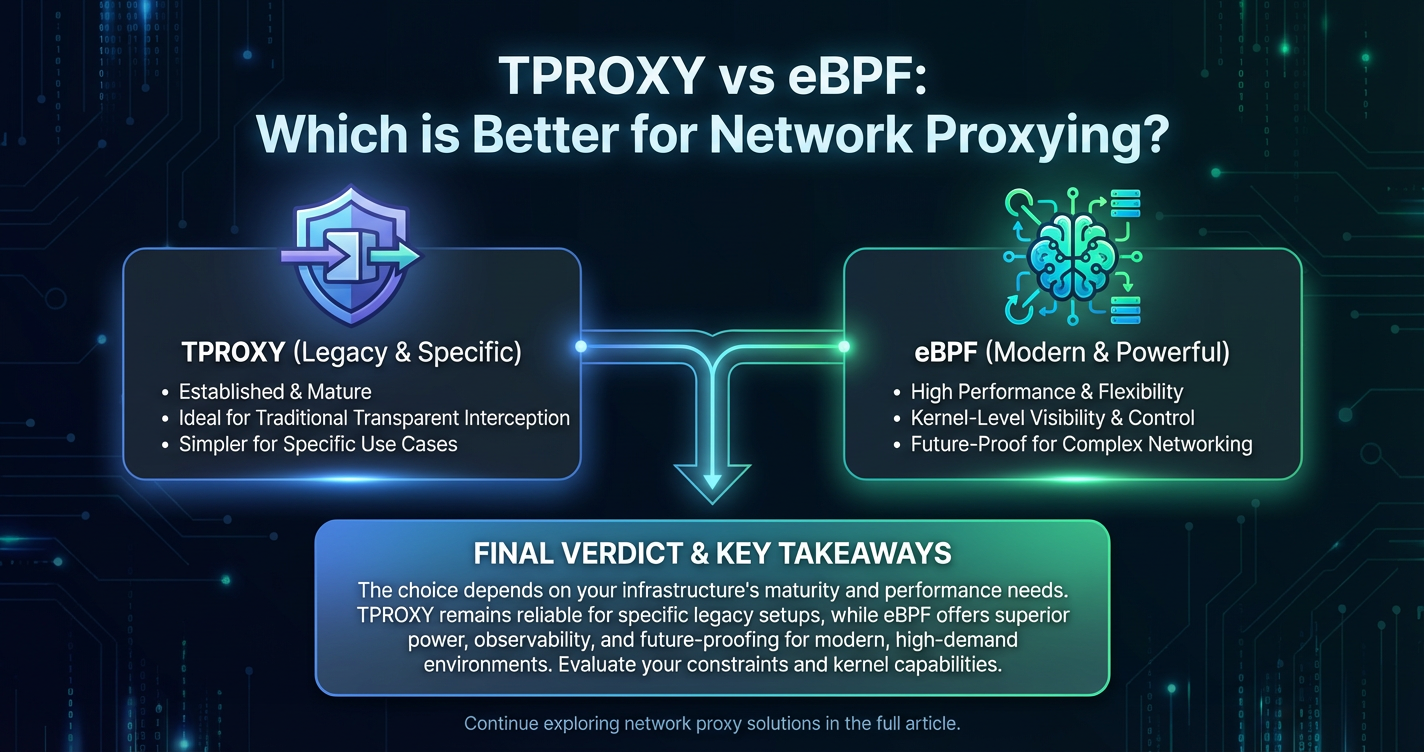

6. Making the Choice: TPROXY or eBPF?

The journey through TPROXY and eBPF reveals two distinct philosophies for network proxying within the Linux kernel. TPROXY, a mature and stable workhorse, offers transparent redirection with crucial IP preservation, ideal for simpler, established use cases. eBPF, a revolutionary and rapidly evolving technology, provides unprecedented kernel programmability and performance, perfectly suited for the dynamic, high-demand, and highly observable needs of modern infrastructure. Making the "better" choice is not about declaring a single victor, but rather about a pragmatic assessment of your specific context.

Factors to Consider

When weighing TPROXY against eBPF, several critical factors should guide your decision:

- Performance Requirements:

- Low to Moderate Traffic, Less Latency-Sensitive: TPROXY can be perfectly adequate. If your api gateway or general gateway traffic volumes are not extreme, and millisecond-level latency isn't a strict requirement, TPROXY's simplicity might be preferable.

- High Throughput, Ultra-Low Latency, Extreme Scale: eBPF is the clear choice. For mission-critical LLM Proxy applications, high-performance service meshes, or a massively scaled api gateway, eBPF’s in-kernel processing and minimal overhead are indispensable.

- Flexibility and Programmability Needs:

- Simple Redirection, User-Space Logic: If your proxy logic is largely handled in user space (e.g., HTTP routing, authentication) and you primarily need transparent traffic interception, TPROXY effectively serves this purpose by redirecting packets.

- Complex Custom Logic, Dynamic Decision-Making, Kernel-Level Manipulation: If you require sophisticated traffic engineering, application-aware routing at the packet level, advanced security filtering, or stateful processing directly in the kernel, eBPF’s programmability is unmatched. This is crucial for innovative api gateway features or intelligent LLM Proxy routing.

- Team Expertise and Learning Curve:

- Existing iptables and Linux Networking Expertise: If your team is proficient in iptables and traditional Linux networking, deploying TPROXY-based solutions will have a lower immediate learning curve.

- Willingness to Invest in New Skills: Adopting eBPF requires a significant investment in learning BPF C, kernel concepts, and specific tooling. However, this investment yields substantial long-term benefits in terms of capability and efficiency.

- Observability Requirements:

- Basic Traffic Monitoring: TPROXY with standard networking tools can provide basic metrics.

- Deep Introspection, Real-time Telemetry, Custom Metrics: eBPF is superior for gaining fine-grained visibility into network behavior, which is vital for complex distributed systems and performance-sensitive services like an LLM Proxy.

- Deployment Environment and Kernel Version:

- Older Kernel Versions or Strict OS Standards: TPROXY has been part of the mainline kernel for well over a decade and is supported on virtually all Linux distributions in use today.

- Modern Linux Distributions with Newer Kernels (5.x+ recommended): To leverage the full power of eBPF, a relatively modern Linux kernel is required. Check your target environment's kernel version for compatibility.

- Security Needs:

- Standard Network Security: Both can contribute, with TPROXY acting as a redirection mechanism for security proxies.

- Advanced In-Kernel Security Policies, Micro-segmentation: eBPF allows for highly effective, performant, and dynamic security policy enforcement directly within the kernel, providing a robust layer of defense for your api gateway and backend services.
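The kernel version factor in the list above is easy to check programmatically before committing to an eBPF-based design. The sketch below parses a kernel release string and compares it with a minimum version. The (5, 0) default simply mirrors the "5.x+ recommended" guidance; individual eBPF features have their own minimum versions, so treat the threshold as an assumption to adjust.

```python
import platform
import re


def parse_kernel_release(release: str) -> tuple[int, int]:
    """Extract (major, minor) from a release string such as '5.15.0-91-generic'."""
    match = re.match(r"(\d+)(?:\.(\d+))?", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2) or 0)


def meets_minimum(release: str, minimum: tuple[int, int] = (5, 0)) -> bool:
    """Return True if the release is at least the given (major, minor)."""
    return parse_kernel_release(release) >= minimum


if __name__ == "__main__":
    release = platform.release()
    print(release, "meets the 5.x baseline:", meets_minimum(release))
```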

When TPROXY Might Still Be Sufficient

Don't dismiss TPROXY too quickly. For many small to medium-sized deployments, for specific transparent proxying tasks that don't demand extreme performance, or in environments where iptables is already deeply embedded and familiar, TPROXY remains a perfectly valid and robust solution. If your primary goal is to simply redirect traffic transparently to an existing user-space proxy application (like Nginx, Envoy, or a custom daemon) that handles all the complex application-layer logic, and if the overhead of context switching is acceptable for your traffic volume, then TPROXY provides a stable and proven mechanism. It’s particularly useful when migrating existing api gateway configurations that rely on transparent interception.

When eBPF Becomes the Clear Choice

eBPF shines brightest when you are pushing the boundaries of what's possible in networking, for example when you are building next-generation infrastructure such as:

- A high-performance api gateway designed to handle massive scale and complex routing for microservices.

- A specialized LLM Proxy that requires intelligent, real-time routing based on AI model performance or cost, alongside robust security and fine-grained observability.

- A cloud-native service mesh seeking to minimize resource overhead and maximize throughput.

- Any system requiring deep, granular, and programmable control over network packets directly in the kernel.

In these scenarios, the initial investment in eBPF expertise is outweighed by the dramatic gains in performance, flexibility, security, and observability. eBPF empowers architects to solve problems that were previously intractable without significant compromises or complex kernel modifications.

The Hybrid Approach

It's also important to recognize that TPROXY and eBPF are not mutually exclusive. In some complex architectures, a hybrid approach might emerge. For instance, eBPF could be used for initial, ultra-fast packet filtering and load balancing at the XDP layer, then intelligently steer specific traffic flows (perhaps those requiring deep application-layer inspection) to a user-space proxy that might have been initially configured with TPROXY. This allows for leveraging the strengths of both technologies, optimizing for performance where it's most critical, while maintaining flexibility for complex application-level processing.
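The division of labour in such a hybrid design can be modelled as a three-way classification: drop early, forward on the fast path, or steer to the user-space proxy. The sketch below is a plain Python model of that decision, not XDP code. The deny list (a documentation address range) and the set of inspected ports are hypothetical placeholders.

```python
from enum import Enum
from ipaddress import ip_address, ip_network


class Verdict(Enum):
    DROP = "drop"            # rejected at the earliest hook
    FAST_PATH = "fast_path"  # forwarded in-kernel, no proxy involved
    TO_PROXY = "to_proxy"    # steered to the user-space proxy for L7 handling


# Hypothetical policy inputs, for illustration only.
BLOCKED_NETS = [ip_network("203.0.113.0/24")]
INSPECTED_PORTS = {80, 443}


def classify(src_ip: str, dst_port: int) -> Verdict:
    """Decide which layer of the hybrid data path should handle a packet."""
    src = ip_address(src_ip)
    if any(src in net for net in BLOCKED_NETS):
        return Verdict.DROP
    if dst_port in INSPECTED_PORTS:
        return Verdict.TO_PROXY
    return Verdict.FAST_PATH


if __name__ == "__main__":
    for src, port in [("203.0.113.7", 443), ("198.51.100.1", 443), ("198.51.100.1", 5432)]:
        print(src, port, classify(src, port).value)
```

Only the `TO_PROXY` traffic pays the cost of a user-space hop, which is the point of the hybrid arrangement.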

Conclusion

The realm of network proxying is continuously evolving, driven by the increasing complexity of distributed systems, the proliferation of microservices, and the surging demands of AI workloads. TPROXY and eBPF stand as two formidable technologies, each offering distinct advantages for intercepting and manipulating network traffic within the Linux kernel.

TPROXY, with its mature netfilter integration and ability to preserve original source/destination IPs, remains a reliable choice for traditional transparent proxying, basic gateway functionalities, and scenarios where simplicity and compatibility with existing iptables configurations are paramount. It offers a solid foundation for many conventional api gateway implementations and continues to serve its purpose effectively in countless production environments.

However, eBPF represents a monumental leap forward in kernel programmability. By allowing custom, safe programs to execute directly within the kernel, eBPF unlocks unprecedented levels of performance, flexibility, and observability. For modern, high-demand applications, particularly those forming the backbone of advanced api gateway solutions and specialized LLM Proxy services, eBPF is rapidly becoming the indispensable technology. Its ability to provide near-native execution speed, dynamic traffic steering, sophisticated policy enforcement, and deep real-time insights makes it ideally suited for the challenges of managing highly dynamic, low-latency, and resource-intensive network flows.

Ultimately, the decision of which technology is "better" is contextual. It hinges on your specific performance requirements, the complexity of your proxying logic, the expertise of your team, and your long-term architectural vision. For those pushing the boundaries of network performance, scalability, and observability, eBPF offers a transformative capability that is reshaping the future of network infrastructure. For those with more modest requirements or a strong existing investment in iptables knowledge, TPROXY remains a robust and proven tool. As network technologies continue to advance, understanding both TPROXY and eBPF will empower architects and engineers to build more resilient, efficient, and intelligent systems capable of meeting the ever-growing demands of the digital age.

Frequently Asked Questions (FAQ)

1. What is the fundamental difference between TPROXY and eBPF for network proxying?

The fundamental difference lies in their approach and programmability. TPROXY is a specific netfilter (iptables) target that transparently redirects packets to a local user-space proxy while preserving original destination IP information. It's a fixed kernel mechanism. eBPF, on the other hand, is a general-purpose programmable framework that allows custom code to run directly inside the kernel at various hook points (like network interfaces). For proxying, eBPF can inspect, modify, and redirect packets within the kernel itself, offering far greater flexibility and performance by avoiding context switches to user space for logic execution.

2. Which technology offers better performance for high-throughput proxying, and why?

eBPF generally offers significantly better performance for high-throughput proxying. This is because eBPF programs execute directly in kernel space with near-native speed (thanks to JIT compilation) and can handle packets without handing them to a user-space process. TPROXY, while fast for redirection, still requires the actual proxy logic to be executed in user space, so traffic pays for system calls, data copies between kernel and user space, and context switches as it is read and written. For demanding applications like a high-volume api gateway or an LLM Proxy, eBPF's in-kernel processing leads to superior throughput and lower latency.

3. Can TPROXY and eBPF be used together in a network architecture?

Yes, a hybrid approach is possible. While they operate at different levels and with different mechanisms, an architecture might leverage eBPF for ultra-fast, early-stage packet processing (e.g., at the XDP layer for initial filtering or load balancing) and then steer certain types of traffic to a user-space proxy that might be using TPROXY for its transparent redirection capabilities to apply more complex application-layer logic. This allows architects to combine the performance benefits of eBPF with the established capabilities of user-space proxies and TPROXY for specific use cases.

4. What are the main benefits of using eBPF for an LLM Proxy?

For an LLM Proxy, eBPF offers several critical benefits:

- Dynamic and Intelligent Routing: eBPF can implement complex routing logic directly in the kernel, directing LLM requests based on factors like model cost, provider availability, user tiers, or real-time performance metrics with extremely low latency.

- High Performance and Low Latency: Essential for AI services, eBPF minimizes overhead, ensuring prompts and responses are processed with maximum speed.

- Enhanced Security: Fine-grained security policies can be enforced at the kernel level, filtering or redacting sensitive information in prompts efficiently.

- Granular Observability: eBPF provides deep insights into LLM traffic, allowing for detailed monitoring, tracing, and analytics of AI interactions.

5. Is eBPF significantly harder to implement compared to TPROXY?

Yes, eBPF generally has a steeper learning curve for implementation compared to TPROXY. Setting up basic transparent proxying with TPROXY involves configuring iptables rules and a user-space proxy, which is straightforward for those familiar with Linux networking. Developing eBPF programs, however, requires knowledge of BPF C, kernel internals, and specialized tooling (like BCC or libbpf). While the operational deployment of compiled eBPF programs can be simpler, the initial development phase demands a higher level of expertise.
