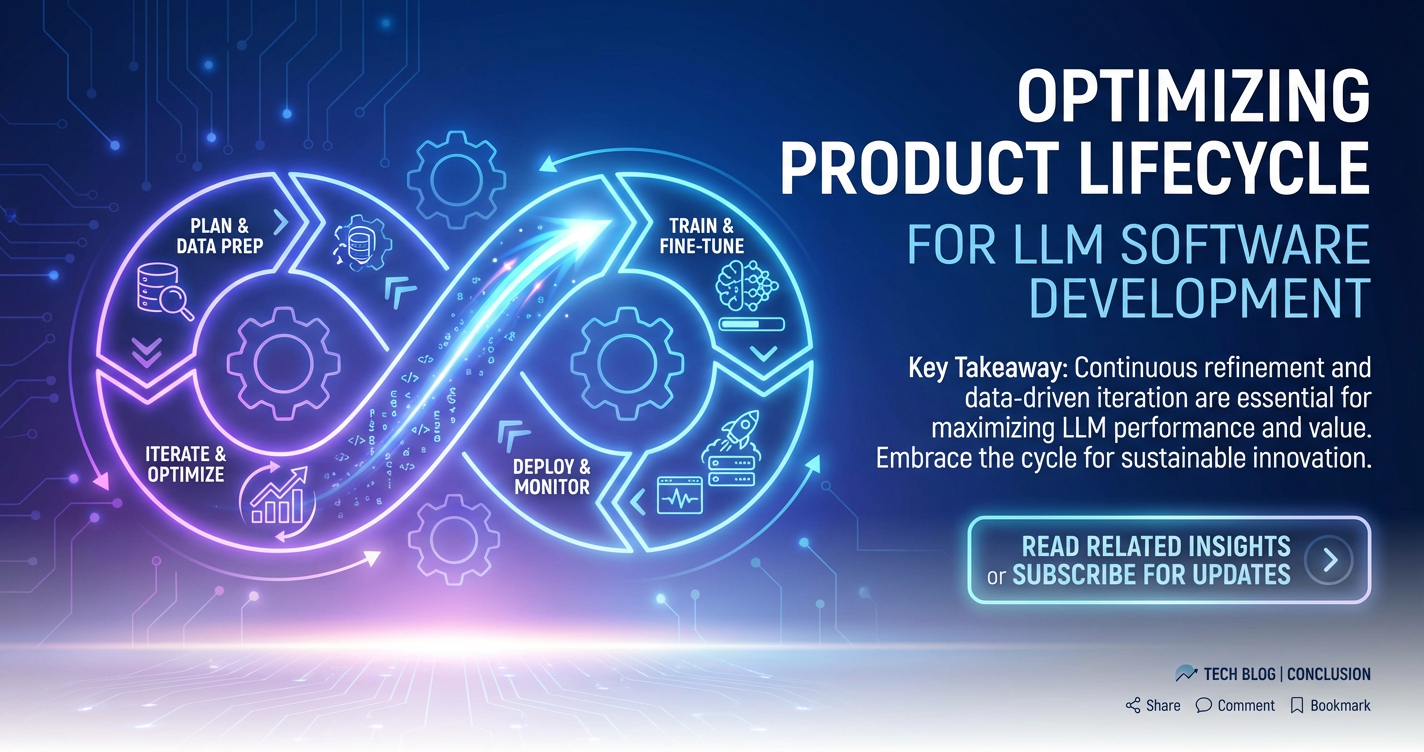

Optimizing Product Lifecycle for LLM Software Development

The advent of Large Language Models (LLMs) has ushered in a transformative era for software development, presenting unprecedented opportunities for innovation across industries. From intelligent assistants and sophisticated content generation tools to advanced data analysis and complex decision-making systems, LLMs are reshaping how applications are conceived, built, and interact with users. However, the rapid evolution, inherent complexities, and unique operational challenges associated with LLMs demand a specialized approach to product lifecycle management (PLM). Unlike traditional software development, building and maintaining LLM-powered applications involves navigating a dynamic landscape of model updates, prompt engineering intricacies, data governance, ethical considerations, and significant computational overheads.

This comprehensive guide delves into the critical strategies and best practices for optimizing the product lifecycle in LLM software development. We will explore each phase, from initial conception and design through development, deployment, and ongoing optimization, emphasizing the unique considerations that LLMs introduce. By adopting a structured and adaptive PLM framework, organizations can harness the full potential of these powerful models, ensuring their LLM-driven products are robust, scalable, cost-effective, and ultimately deliver sustained value to users. We will particularly focus on crucial architectural components like the LLM Gateway and LLM Proxy, and the fundamental importance of a robust Model Context Protocol in building sophisticated and reliable LLM applications.

The Unique Landscape of LLM Software Development

Before diving into the lifecycle phases, it’s crucial to understand why LLM software development requires a distinct PLM approach. Traditional software development often deals with deterministic logic and well-defined rules. LLMs, conversely, operate on probabilistic principles, making their outputs inherently non-deterministic and often challenging to predict or control precisely. This introduces several unique challenges:

1. Rapid Evolution and Obsolescence: The field of LLMs is characterized by breathtaking speed. New models, architectures, and fine-tuning techniques emerge almost weekly, rendering previous best practices or even entire models potentially obsolete in a matter of months. A robust PLM must account for this volatility, facilitating continuous integration of new models and features without disrupting existing services.

2. Non-Determinism and Hallucinations: LLMs can generate plausible but factually incorrect information (hallucinations). Managing this risk, especially in critical applications, requires sophisticated validation, human-in-the-loop systems, and robust error handling strategies throughout the product lifecycle. The probabilistic nature also means that the same prompt might yield different outputs, which can complicate testing and quality assurance.

3. Context Management Complexity: For LLMs to be truly useful in conversational or stateful applications, they need to maintain context across multiple turns or sessions. Managing this "memory" efficiently and effectively, ensuring the model always has the most relevant information without exceeding token limits, is a significant technical challenge. This is where a well-defined Model Context Protocol becomes indispensable.

4. Data Governance and Ethics: LLMs are trained on vast datasets, and their applications often process sensitive user information. Concerns around data privacy, bias in model outputs, fairness, transparency, and potential misuse necessitate rigorous ethical review and responsible AI practices integrated into every stage of the PLM. Compliance with regulations like GDPR or HIPAA adds another layer of complexity.

5. High Computational and Operational Costs: Running and fine-tuning LLMs can be incredibly resource-intensive, often requiring specialized hardware like GPUs. Managing these costs, optimizing inference times, and scaling efficiently are major operational considerations that impact the entire product lifecycle from design to deployment and beyond. Token usage directly translates to cost, making efficient prompt design and response handling critical.

6. Prompt Engineering as a Core Development Skill: Unlike traditional coding, where logic is explicitly written, much of LLM application development involves crafting precise and effective prompts. This "prompt engineering" is an iterative, experimental process that requires its own set of best practices for version control, testing, and optimization, fundamentally altering the development workflow.

By acknowledging these distinctive attributes, organizations can tailor their PLM strategies to effectively address the specific demands of LLM software development, fostering innovation while mitigating risks.

Phase 1: Conception and Strategic Design for LLM Products

The initial phase of any product lifecycle is arguably the most critical, laying the groundwork for all subsequent efforts. For LLM-powered applications, this phase takes on additional layers of complexity due to the unique nature of the underlying technology. Strategic design in this context is not just about identifying a problem, but also about determining if an LLM is the right solution, selecting the appropriate model, and architectural planning for its integration.

1.1 Problem Identification and Value Proposition Definition

Before jumping into LLMs, the fundamental question must be asked: What problem are we trying to solve, and how will an LLM genuinely add value? It’s tempting to apply LLMs everywhere, but their strengths lie in specific areas such as natural language understanding, generation, summarization, translation, and sophisticated pattern recognition in unstructured text.

- Identifying LLM-Native Problems: Focus on use cases where human-like text generation, understanding nuanced language, or synthesizing vast amounts of information are core requirements. Examples include automated customer support agents, personalized content creation, code generation, complex document analysis, or educational tutors.

- Defining Measurable Value: How will the LLM solution improve efficiency, enhance user experience, reduce costs, or unlock new capabilities? Quantifiable metrics must be established early on. For instance, reducing customer support resolution time by X%, increasing content production speed by Y%, or improving search result relevance by Z%.

- Feasibility and Risk Assessment: Beyond technical feasibility, consider the ethical implications, regulatory compliance, and potential for model biases or hallucinations. Can these risks be sufficiently mitigated, or do they pose an unacceptable threat to the product’s integrity or user safety? A deep understanding of these aspects informs the initial design choices.

1.2 Model Selection and Evaluation Strategy

Once a clear problem and value proposition are established, the next crucial step is selecting the appropriate LLM. This decision has profound implications for cost, performance, scalability, and development effort.

- Open-Source vs. Proprietary Models:

- Proprietary Models (e.g., OpenAI GPT series, Anthropic Claude, Google Gemini): These often offer state-of-the-art performance, ease of use via APIs, and significant infrastructure investment by providers. Benefits include reduced operational overhead, continuous model improvements, and potentially faster time-to-market. Drawbacks include vendor lock-in, recurring API costs that can scale unpredictably, and data privacy concerns if sensitive information is sent to third-party APIs.

- Open-Source Models (e.g., Llama 2, Mistral, Falcon): Offer greater control over the model, data privacy (as data stays in-house), customization through fine-tuning, and no direct API costs. However, they require significant infrastructure investment (GPUs), expertise in deployment and management, and ongoing maintenance. The choice often depends on sensitivity of data, budget, performance requirements, and internal expertise.

- Fine-tuning vs. Prompt Engineering vs. RAG (Retrieval Augmented Generation):

- Prompt Engineering: The quickest way to get started, leveraging an existing model by crafting effective prompts. Cost-effective for simple tasks, but limited by the model's inherent knowledge and context window.

- Fine-tuning: Adapting a pre-trained LLM on a specific dataset to make it more specialized for a particular task or domain. It can improve performance on niche tasks, may reduce hallucination within that domain, and can lead to shorter, cheaper prompts. It requires a curated dataset and computational resources.

- RAG: Combining an LLM with a retrieval system that fetches relevant information from a knowledge base before generating a response. Excellent for grounded, up-to-date, and factually accurate responses, mitigating hallucination without expensive fine-tuning of the base model. This approach is becoming increasingly popular for enterprise applications.

- A combination of these strategies is often the most effective, using RAG for knowledge retrieval, fine-tuning for domain-specific nuances, and prompt engineering for dynamic instruction.

- Evaluation Metrics: Define clear metrics for success beyond anecdotal observation. These could include accuracy, relevance, fluency, coherence, toxicity, latency, and token cost per interaction. Establishing baselines early allows for objective performance tracking throughout the lifecycle.

1.3 System Architecture and Integration Strategy

Integrating LLMs into an existing or new software ecosystem demands thoughtful architectural design. This phase should define how the application interacts with the LLM, how data flows, and how security and scalability are maintained.

- API-First Approach: Treat the LLM as a service accessed via a well-defined API. This promotes modularity, allows for easy swapping of models, and simplifies integration with various application components.

- Introducing the LLM Gateway: For complex applications that interact with multiple LLMs (from different providers or different fine-tuned versions of the same model), an LLM Gateway becomes an indispensable architectural component.

- Definition: An LLM Gateway acts as a centralized entry point for all requests to LLM services. It sits between your application and the various LLM providers or deployed models.

- Benefits:

- Unified API Access: Provides a consistent interface for interacting with diverse LLMs, abstracting away provider-specific APIs and data formats. This dramatically simplifies integration for developers.

- Traffic Management: Handles request routing, load balancing across multiple model instances or providers, and rate limiting to prevent abuse or control costs.

- Security: Centralizes authentication, authorization, and API key management, enforcing security policies before requests reach the LLMs.

- Cost Optimization: Can track token usage, implement budget caps, and potentially route requests to the most cost-effective model based on the task.

- Caching: Caches frequent LLM responses to reduce latency and save costs.

- Observability: Provides centralized logging, monitoring, and analytics for all LLM interactions, offering insights into performance, errors, and usage patterns.

- Model Agility: Enables seamless switching between models or providers without requiring changes in the application code, crucial for rapid iteration and managing model evolution.

- APIPark as a Solution: This is where a platform like APIPark is useful. As an open-source AI Gateway & API Management Platform, APIPark is designed to simplify the task of integrating and managing multiple AI models, including LLMs. It offers quick integration of 100+ AI models and provides a unified API format for AI invocation, so that changes in underlying AI models or prompts do not disrupt the application layer. Its end-to-end API lifecycle management covers regulating API processes, traffic management, load balancing, and versioning of LLM-based APIs, which makes it a candidate for organizations looking to optimize their LLM infrastructure.

- Data Flow and Storage: Define how data flows to and from the LLM. Consider data masking or anonymization for sensitive information, and where intermediate outputs or conversational histories will be stored. Edge processing versus cloud processing can also be a key decision.
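The gateway role described in this section can be illustrated with a minimal sketch. It is not APIPark's API or any vendor's implementation: the `LLMGateway` class, the `routes` table, and the two stand-in providers are hypothetical names chosen for illustration, and each provider is modelled as a plain function so the example runs without network access.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Assumption: a provider is modelled as a plain function, prompt in, text out.
Provider = Callable[[str], str]


@dataclass
class LLMGateway:
    """Single entry point that hides provider-specific details from callers."""
    providers: Dict[str, Provider] = field(default_factory=dict)
    routes: Dict[str, List[str]] = field(default_factory=dict)  # task -> ordered provider names
    call_log: List[dict] = field(default_factory=list)          # minimal observability

    def register(self, name: str, provider: Provider) -> None:
        self.providers[name] = provider

    def complete(self, task: str, prompt: str) -> str:
        """Try each provider configured for the task, in order, until one succeeds."""
        errors = []
        for name in self.routes.get(task, []):
            try:
                text = self.providers[name](prompt)
            except Exception as exc:  # a real gateway would catch narrower errors
                errors.append(f"{name}: {exc}")
                self.call_log.append({"task": task, "provider": name, "ok": False})
                continue
            self.call_log.append({"task": task, "provider": name, "ok": True})
            return text
        raise RuntimeError(f"no provider succeeded for task {task!r}: {errors}")


def flaky_provider(prompt: str) -> str:
    # Hypothetical provider that always times out.
    raise TimeoutError("upstream timed out")


def echo_provider(prompt: str) -> str:
    # Hypothetical provider that simply echoes the prompt.
    return f"[echo] {prompt}"


gateway = LLMGateway()
gateway.register("primary", flaky_provider)
gateway.register("backup", echo_provider)
gateway.routes["summarize"] = ["primary", "backup"]

result = gateway.complete("summarize", "Summarize this ticket.")
print(result)
print(gateway.call_log)
```

Because the application only calls `gateway.complete(task, prompt)`, swapping or reordering providers is a change to the `routes` table rather than to application code, which is the model-agility benefit listed above.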

1.4 Ethical and Responsible AI Design

Integrating ethical considerations from the outset is paramount for building trustworthy and sustainable LLM products.

- Bias Mitigation: LLMs can inherit biases from their training data. Design strategies to detect and mitigate bias in outputs, which might include prompt design techniques, input filtering, or output post-processing.

- Transparency and Explainability: While LLMs are often black boxes, consider how to provide users with transparency regarding the AI's involvement (e.g., clearly labeling AI-generated content) and, where possible, some level of explainability for its outputs.

- Safety and Guardrails: Implement mechanisms to prevent the LLM from generating harmful, inappropriate, or malicious content (e.g., prompt injection defenses, content moderation filters). This involves designing safety policies and incorporating them into the system architecture.

- Privacy by Design: Ensure that data privacy principles are embedded into the architecture from the ground up, minimizing data collection, anonymizing sensitive data, and complying with all relevant privacy regulations.

Phase 2: Development and Iterative Prototyping

With a solid design foundation, the development phase focuses on bringing the LLM application to life. This is an iterative process heavily reliant on prompt engineering, robust integration, and continuous testing, distinct from traditional coding-centric development.

2.1 Prompt Engineering and Version Control

Prompt engineering is the art and science of crafting effective inputs to guide LLMs towards desired outputs. It's not a one-time activity but an ongoing iterative process.

- Iterative Prompt Design: Developers will spend significant time experimenting with different prompts, few-shot examples, chain-of-thought instructions, and other techniques to achieve optimal model performance for specific tasks. This involves continuous feedback loops, observing model behavior, and refining prompts.

- Prompt Management and Versioning: Just like code, prompts should be version-controlled. Tools that allow for tracking changes in prompts, testing different versions, and rolling back to previous iterations are crucial. This ensures reproducibility and helps in understanding performance regressions or improvements. Treating prompts as 'prompt-as-code' enables collaboration and systematic refinement.

- Prompt Templating: Using templates for prompts can standardize inputs, make prompts easier to manage, and allow for dynamic insertion of variables. Keeping user input in clearly delimited slots can also help reduce prompt injection risk, although templating alone is not a complete defense.

- Automated Prompt Testing: Develop automated tests for prompts, evaluating their effectiveness against a defined set of inputs and expected outputs. This helps in quickly identifying prompt regressions when models are updated or context changes.
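One way to combine the templating and versioning practices above is a small in-code prompt registry. The sketch below uses only the Python standard library; the registry layout, the prompt name `support_reply`, the version strings, and the `<<< >>>` delimiters are illustrative assumptions rather than an established convention.

```python
import hashlib
from string import Template

# Hypothetical registry: (prompt name, version) -> template text.
PROMPTS = {
    ("support_reply", "1.0"): Template(
        "You are a support agent.\n"
        "Customer message:\n<<<\n$message\n>>>\n"
        "Reply politely."
    ),
    ("support_reply", "1.1"): Template(
        "You are a support agent for $product.\n"
        "Customer message:\n<<<\n$message\n>>>\n"
        "Reply politely in at most three sentences."
    ),
}


def render_prompt(name: str, version: str, **variables: str) -> str:
    """Fill a versioned template; raises KeyError if a variable is missing."""
    return PROMPTS[(name, version)].substitute(**variables)


def prompt_fingerprint(name: str, version: str) -> str:
    """Short content hash, useful for logging which prompt text produced an output."""
    text = PROMPTS[(name, version)].template
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


rendered = render_prompt(
    "support_reply", "1.1", product="Acme Cloud", message="My invoice is wrong."
)
print(rendered)
print(prompt_fingerprint("support_reply", "1.1"))
```

Storing the fingerprint alongside each logged response makes it possible to tell later whether a regression coincided with a prompt change. In practice the templates would more likely live in version-controlled files than in a Python dictionary.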

2.2 Integration Patterns and the LLM Proxy

How the application interacts with the LLM is critical for performance, reliability, and maintainability. Several integration patterns exist, often facilitated by an intermediary layer like an LLM Proxy.

- Direct API Calls: Simplest approach, but bypasses many benefits of a gateway or proxy. Suitable for very simple, low-volume applications with a single LLM.

- Synchronous vs. Asynchronous Calls: For real-time user interactions, synchronous calls are often necessary, but for background tasks or long-running generations, asynchronous processing can improve user experience and system throughput.

- Streaming Responses: For conversational UIs, streaming LLM responses token-by-token improves perceived latency and user engagement. The integration pattern must support this.

- Introducing the LLM Proxy: While an LLM Gateway provides centralized control over multiple LLM services, an LLM Proxy can be thought of as a specialized intermediary that sits closer to the application, often enhancing a specific LLM interaction or providing additional functionality before or after reaching the gateway/model.

- Definition: An LLM Proxy is an intermediary server or service that intercepts requests and responses between an application and an LLM (or an LLM Gateway). It can modify, enhance, or filter these communications.

- Benefits:

- Request/Response Pre-processing & Post-processing: Can sanitize user inputs before sending them to the LLM (e.g., for security or formatting), and parse/filter LLM outputs (e.g., for safety, structure enforcement, or extracting specific entities).

- Caching Layer: Implements caching of LLM responses for identical requests, significantly reducing latency and operational costs, especially for frequently asked questions or common prompts.

- Rate Limiting & Cost Control: Enforces specific rate limits per user or per application, and tracks token usage at a granular level, providing more control over spending.

- Fallbacks & Retries: Implements retry logic for transient errors and can route requests to fallback models or static responses if the primary LLM is unavailable or fails.

- Logging & Monitoring Enhancement: Adds more detailed, application-specific logging and telemetry to LLM interactions, enriching observability data.

- Context Management Integration: Can be tightly integrated with the Model Context Protocol to manage and inject conversational history or external knowledge efficiently.

- An LLM Proxy can operate independently or as a component within a broader LLM Gateway architecture. For example, the response caching listed among the gateway benefits in section 1.3 is effectively proxy functionality delivered inside a gateway.
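Two of the proxy benefits above, caching and retries, can be sketched in a few lines. This is a minimal illustration under stated assumptions: `CachingRetryProxy` and `unreliable_backend` are hypothetical names, the backend is a plain function instead of a real LLM client, and only `ConnectionError` is treated as transient.

```python
import hashlib
import json
import time
from typing import Callable, Dict, Optional


class CachingRetryProxy:
    """Wraps a backend call with an exact-match cache and simple retry logic."""

    def __init__(self, backend: Callable[[str, str], str],
                 max_attempts: int = 3, backoff_seconds: float = 0.0):
        self.backend = backend
        self.max_attempts = max_attempts
        self.backoff_seconds = backoff_seconds
        self.cache: Dict[str, str] = {}
        self.backend_calls = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Identical (model, prompt) pairs map to the same cache key.
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self.cache:
            return self.cache[key]
        last_error: Optional[Exception] = None
        for attempt in range(self.max_attempts):
            try:
                self.backend_calls += 1
                text = self.backend(model, prompt)
            except ConnectionError as exc:
                last_error = exc
                # Exponential backoff between attempts (zero by default here).
                time.sleep(self.backoff_seconds * (2 ** attempt))
                continue
            self.cache[key] = text
            return text
        raise RuntimeError(
            f"backend failed after {self.max_attempts} attempts"
        ) from last_error


_failures = {"remaining": 1}


def unreliable_backend(model: str, prompt: str) -> str:
    # Hypothetical backend that fails once, then succeeds.
    if _failures["remaining"] > 0:
        _failures["remaining"] -= 1
        raise ConnectionError("transient network error")
    return f"{model}: answer to {prompt!r}"


proxy = CachingRetryProxy(unreliable_backend)
first = proxy.complete("small-model", "What are your opening hours?")
second = proxy.complete("small-model", "What are your opening hours?")
print(first)
print(proxy.backend_calls)
```

The second call is served from the cache, so the backend is invoked only twice in total (one failure, one success). Exact-match caching suits requests where a repeated answer is acceptable, such as frequently asked questions; it is a poor fit where varied outputs are desired.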

2.3 Model Context Protocol and State Management

For LLMs to engage in meaningful, multi-turn conversations or generate coherent, context-aware content, they need to retain and utilize information from previous interactions or external data sources. This is where a robust Model Context Protocol becomes fundamental.

- Definition: A Model Context Protocol defines the standardized way in which conversational history, user-specific data, retrieved external information (from RAG), and system-level instructions are structured, transmitted, and managed for an LLM during an interaction. It dictates how an application builds the "prompt" including all necessary context for the LLM to generate an informed response.

- Key Components of a Model Context Protocol:

- Message History Format: Standardizing how user and assistant messages are stored and re-sent to the LLM to maintain conversation flow (e.g., using roles like "system", "user", "assistant").

- System Instructions: Defining how overarching directives or constraints are consistently passed to the LLM (e.g., "You are a helpful assistant", "Always respond in JSON format").

- External Knowledge Injection (RAG): Specifying the format and mechanism for embedding retrieved documents, database snippets, or API call results into the prompt. This ensures relevant, up-to-date information is consistently available.

- User/Session Attributes: How user IDs, preferences, or session-specific variables are included in the context to personalize responses.

- Context Window Management: Strategies for managing the ever-present token limit. This includes:

- Summarization: Summarizing older parts of the conversation to condense history.

- Truncation: Intelligent truncation strategies (e.g., removing the oldest messages) if the context grows too large.

- Sliding Window: Maintaining a fixed-size window of recent interactions.

- Semantic Search: Retrieving only the most semantically relevant past interactions.

- State Persistence: How the context is stored between turns or sessions (e.g., in a database, session store, or cache).

- Importance: A well-defined Model Context Protocol ensures:

- Coherent Interactions: The LLM always has the necessary background to provide relevant and consistent responses.

- Reduced Hallucinations: By grounding responses in provided context.

- Optimized Token Usage: By intelligently managing the amount of information sent to the LLM.

- Easier Development: Developers have a clear blueprint for constructing LLM prompts, reducing errors and inconsistencies.

- Scalability: Efficient context management is crucial for handling many concurrent users without excessive resource consumption.
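The message-history format and the truncation strategy described above can be sketched as follows. The sketch makes two loud assumptions: token counts are approximated by counting whitespace-separated words (a real system would use the model's tokenizer), and `build_context` is a hypothetical helper, not part of any library.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": "system" | "user" | "assistant", "content": "..."}


def estimate_tokens(text: str) -> int:
    # Assumption: crude stand-in for a real tokenizer, one token per word.
    return len(text.split())


def build_context(system: str, history: List[Message],
                  user_input: str, max_tokens: int) -> List[Message]:
    """Keep the system prompt and new input, then as many recent turns as fit."""
    budget = max_tokens - estimate_tokens(system) - estimate_tokens(user_input)
    if budget < 0:
        raise ValueError("system prompt and user input alone exceed the token budget")

    kept: List[Message] = []
    # Walk backwards so the most recent messages are kept first.
    for message in reversed(history):
        cost = estimate_tokens(message["content"])
        if cost > budget:
            break  # drop this message and everything older
        kept.append(message)
        budget -= cost
    kept.reverse()  # restore chronological order

    return [
        {"role": "system", "content": system},
        *kept,
        {"role": "user", "content": user_input},
    ]


history = [
    {"role": "user", "content": "one two three four five"},
    {"role": "assistant", "content": "six seven eight"},
    {"role": "user", "content": "nine ten"},
]
context = build_context("Be brief.", history, "What next?", max_tokens=10)
for message in context:
    print(message["role"], "|", message["content"])
```

With a budget of 10 estimated tokens, the oldest message is dropped while the two most recent turns survive. Summarization or semantic retrieval of older turns could replace the `break` branch when dropping history outright loses too much information.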

2.4 Data Pre-processing and Post-processing

The quality of inputs and the usability of outputs are crucial for LLM applications.

- Input Sanitization: Cleaning and validating user inputs to remove malicious content (e.g., prompt injection attempts), format inconsistencies, or irrelevant information.

- Output Parsing and Validation: LLM outputs often require parsing (e.g., extracting JSON objects from text, identifying entities) and validation (e.g., ensuring structured output conforms to a schema).

- Output Filtering and Moderation: Implementing safety filters to prevent the LLM from generating inappropriate or harmful content, or to ensure compliance with brand guidelines.

- Structured Data Integration: Converting unstructured LLM outputs into structured data for use in downstream systems or databases.
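Output parsing and validation can be as simple as pulling a JSON object out of free text and checking it against the fields the downstream system expects. In this sketch the schema (`sentiment`, `confidence`) and the helper names are hypothetical; the regular expression is a deliberately simple heuristic that grabs everything from the first `{` to the last `}`.

```python
import json
import re
from typing import Any, Dict

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"sentiment": str, "confidence": (int, float)}


def extract_json(text: str) -> Dict[str, Any]:
    """Find the first '{' ... last '}' span in the text and parse it as JSON."""
    match = re.search(r"\{.*\}", text, flags=re.DOTALL)
    if match is None:
        raise ValueError("no JSON object found in model output")
    # json.JSONDecodeError is a subclass of ValueError, so callers can catch one type.
    return json.loads(match.group(0))


def validate(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Check that every required field is present and has an accepted type."""
    for name, expected in REQUIRED_FIELDS.items():
        if name not in payload:
            raise ValueError(f"missing field: {name}")
        if not isinstance(payload[name], expected):
            raise ValueError(f"field {name!r} has unexpected type {type(payload[name]).__name__}")
    return payload


raw_output = (
    'Sure! Here is the result: {"sentiment": "positive", "confidence": 0.92} '
    "Let me know if you need anything else."
)
parsed = validate(extract_json(raw_output))
print(parsed)
```

When validation fails, a common follow-up is to retry the request with the error message appended to the prompt, or to fall back to a safe default. For stricter guarantees, a schema validation library or a provider's structured-output mode would replace the hand-written checks.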

2.5 Testing and Quality Assurance

Testing LLM applications is more complex than testing traditional software because of the probabilistic nature of the models.

- Unit and Integration Testing: Test individual components, including prompt templates, data pre/post-processors, and integration with the LLM Gateway/Proxy.

- Adversarial Testing: Intentionally try to "break" the LLM by feeding it unusual, malicious, or ambiguous inputs to uncover vulnerabilities, biases, or failure modes (e.g., prompt injection, jailbreaking).

- Evaluation Datasets: Create diverse datasets of inputs and expected outputs to systematically evaluate LLM performance against specific criteria (accuracy, relevance, safety). This is crucial for regression testing when models or prompts are updated.

- Human-in-the-Loop (HITL) Testing: For critical applications, human review of LLM outputs is indispensable, especially during development and early deployment. This provides valuable feedback for prompt refinement and model improvement.

- Metrics-Driven Testing: Focus on quantifiable metrics such as accuracy, coherence, latency, and token usage. Establish benchmarks and monitor these metrics over time.
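Because exact string matching is brittle for non-deterministic outputs, an evaluation dataset often checks properties of the output instead. The harness below is a minimal sketch: the case format (`must_include`, `must_exclude`), the `run_eval` function, and the stub model are all assumptions made for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical evaluation cases: required and forbidden phrases per input.
EVAL_SET: List[Dict] = [
    {
        "input": "How do I reset my password?",
        "must_include": ["password"],
        "must_exclude": ["credit card"],
    },
    {
        "input": "What is your refund policy?",
        "must_include": ["refund", "30 days"],
        "must_exclude": [],
    },
]


def run_eval(model: Callable[[str], str], cases: List[Dict]) -> Dict:
    """Run every case through the model and report the pass rate and failures."""
    failures = []
    for case in cases:
        output = model(case["input"]).lower()
        missing = [t for t in case["must_include"] if t.lower() not in output]
        forbidden = [t for t in case["must_exclude"] if t.lower() in output]
        if missing or forbidden:
            failures.append(
                {"input": case["input"], "missing": missing, "forbidden": forbidden}
            )
    passed = len(cases) - len(failures)
    return {"pass_rate": passed / len(cases), "failures": failures}


def stub_model(prompt: str) -> str:
    # Stand-in for a real LLM call, so the harness runs offline.
    if "password" in prompt.lower():
        return "Open Settings and choose 'Reset password'."
    return "We offer a refund on request."


report = run_eval(stub_model, EVAL_SET)
print(report["pass_rate"])
print(report["failures"])
```

Running the same dataset before and after a prompt or model change turns the pass rate into a regression signal. Phrase checks only cover surface properties; criteria such as relevance or tone usually need human review or a separate scoring model.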


Phase 3: Deployment and Operational Excellence

Deploying LLM applications demands robust infrastructure, meticulous monitoring, and stringent security measures. This phase transforms prototypes into production-ready systems that are scalable, reliable, and cost-efficient.

3.1 Infrastructure and Scalability Considerations

The choice and configuration of infrastructure directly impact performance and cost.

- Cloud vs. On-Premise Deployment:

- Cloud: Offers elasticity, scalability, and managed services (e.g., specialized GPU instances for open-source models, direct API access for proprietary models). Requires careful cost management.

- On-Premise: Provides maximum data control and potentially lower long-term costs for very high usage, but demands significant upfront investment in hardware and expertise in managing complex AI infrastructure.

- GPU Requirements: Self-hosting open-source LLMs necessitates powerful GPUs. Proper selection and scaling of these resources are critical. Cloud providers offer various GPU instance types.

- Containerization and Orchestration: Use Docker and Kubernetes (or similar tools) to containerize LLM services, LLM Proxies, and the LLM Gateway. This ensures portability, simplifies deployment, and enables efficient scaling and resource management.

- Load Balancing: For high-traffic applications, distribute requests across multiple instances of your LLM service, potentially across different models or even different LLM providers via the LLM Gateway, to ensure high availability and responsiveness.

3.2 Monitoring and Observability

Comprehensive monitoring is vital for understanding LLM application performance, identifying issues, and optimizing resource usage.

- Key Metrics for LLMs: Beyond standard software metrics (CPU, memory, latency, error rates), specific LLM metrics include:

- Token Usage: Input and output tokens per request, total tokens over time (critical for cost).

- Inference Latency: Time taken for the LLM to generate a response.

- Cost Per Request/Per Session: Directly tie usage to financial outlay.

- Hallucination Rate: Using automated or human evaluation to detect factual inaccuracies.

- Safety Violations: Number of times content moderation filters are triggered.

- Context Window Utilization: How much of the available context window is being used.

- Logging: Implement detailed logging for all LLM interactions, including prompts, responses, metadata (timestamps, user IDs), and errors. This data is invaluable for debugging, auditing, and post-hoc analysis. APIPark's detailed API call logging, for instance, records every detail, enabling quick tracing and troubleshooting.

- Alerting: Set up alerts for anomalies in performance metrics, excessive error rates, sudden spikes in token usage, or repeated safety violations.

- Traceability: Ensure end-to-end traceability of requests through the LLM Gateway, LLM Proxy, and the LLM itself, which is crucial for complex microservices architectures.
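The token, latency, and cost metrics above reduce to simple arithmetic over logged call records. The sketch below assumes a hypothetical price table (the model names and per-1,000-token prices are made up, not real provider pricing) and a hypothetical `CallRecord` structure.

```python
from dataclasses import dataclass
from typing import Dict, List

# Assumption: illustrative prices in USD per 1,000 tokens, not real provider pricing.
PRICES_PER_1K: Dict[str, Dict[str, float]] = {
    "small-model": {"input": 0.0005, "output": 0.0015},
    "large-model": {"input": 0.01, "output": 0.03},
}


@dataclass
class CallRecord:
    model: str
    input_tokens: int
    output_tokens: int
    latency_ms: float


def cost_usd(record: CallRecord) -> float:
    """Cost of one call: tokens / 1000 * price, summed over input and output."""
    price = PRICES_PER_1K[record.model]
    return (record.input_tokens / 1000 * price["input"]
            + record.output_tokens / 1000 * price["output"])


def summarize(records: List[CallRecord]) -> Dict[str, float]:
    """Aggregate request count, tokens, cost, and mean latency."""
    return {
        "requests": len(records),
        "total_tokens": sum(r.input_tokens + r.output_tokens for r in records),
        "total_cost_usd": sum(cost_usd(r) for r in records),
        "avg_latency_ms": sum(r.latency_ms for r in records) / len(records),
    }


def token_spikes(records: List[CallRecord], threshold_tokens: int) -> List[CallRecord]:
    """Records whose total token count exceeds the alert threshold."""
    return [r for r in records if r.input_tokens + r.output_tokens > threshold_tokens]


records = [
    CallRecord("small-model", input_tokens=1000, output_tokens=500, latency_ms=200.0),
    CallRecord("large-model", input_tokens=2000, output_tokens=1000, latency_ms=800.0),
]
summary = summarize(records)
print(summary)
print(token_spikes(records, threshold_tokens=2000))
```

In production these aggregates would typically be emitted to a metrics system and evaluated by its alerting rules instead of being computed in application code.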

3.3 Security and Access Control

Securing LLM applications is paramount, given the potential for data breaches, abuse, and prompt injection attacks.

- API Key Management: Securely manage API keys for proprietary LLMs. Use environment variables, secret management services, and role-based access control. The LLM Gateway should centralize API key management and securely pass them to the underlying LLMs.

- Authentication and Authorization: Implement robust authentication and authorization mechanisms for accessing your LLM application and the LLM Gateway. This ensures only authorized users and services can make requests. APIPark, for example, allows for independent API and access permissions for each tenant, and can activate subscription approval features, preventing unauthorized calls.

- Input Validation and Sanitization: As discussed in development, this is a critical security layer to prevent prompt injection and other adversarial attacks.

- Output Filtering: Filter LLM outputs for sensitive information, malicious content, or data that should not be exposed to end-users.

- Network Security: Deploy LLM services in secure network environments, using firewalls, VPCs, and encryption in transit (TLS) and at rest.
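As one concrete form of output filtering, the sketch below redacts email addresses and API-key-like strings before a response reaches the user. Both patterns are simplified assumptions: the email expression is not a full address validator, and the `sk-` prefix stands in for whatever key format a given deployment needs to catch.

```python
import re

# Simplified email pattern; not a complete validator.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Assumption: secrets look like "sk-" followed by 16+ alphanumeric characters.
SECRET_PATTERN = re.compile(r"\bsk-[A-Za-z0-9]{16,}\b")


def redact(text: str) -> str:
    """Replace matches of each sensitive pattern with a fixed placeholder."""
    text = EMAIL_PATTERN.sub("[REDACTED_EMAIL]", text)
    return SECRET_PATTERN.sub("[REDACTED_KEY]", text)


model_output = "Contact jane.doe@example.com with key sk-abcdefghijklmnop1234"
print(redact(model_output))
```

Pattern-based redaction catches only what the patterns anticipate, so it works best as one layer alongside input-side data masking and access controls.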

3.4 Cost Management and Optimization

LLM costs can quickly escalate if not managed proactively.

- Token Usage Tracking: Continuously monitor token consumption per feature, per user, or per business unit. This helps identify areas of high cost and informs optimization efforts.

- Budget Alerts and Caps: Implement automated alerts when usage approaches predefined budgets, and consider hard caps to prevent unexpected expenditure.

- Model Tiering: Route requests to different LLM models based on their cost-effectiveness and performance requirements. For example, use a cheaper, smaller model for simple tasks and a more expensive, powerful model for complex ones, managed effectively by an LLM Gateway.

- Caching: Leverage the caching capabilities of the LLM Gateway or LLM Proxy to reduce redundant LLM calls, saving both latency and cost.

- Prompt Optimization: Efficient prompt engineering can significantly reduce the number of input/output tokens, directly impacting cost.

- Batching Requests: Where feasible, batch multiple LLM requests to reduce network and processing overhead, accepting that this may increase latency for individual requests.
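Model tiering and budget caps from the list above can be combined in a small router. Everything here is an illustrative assumption: the `TieredRouter` class, the model names, the word-count heuristic for deciding that a task is complex, and the 80% alert threshold.

```python
class BudgetExceeded(Exception):
    """Raised when a request would push usage past the hard cap."""


class TieredRouter:
    def __init__(self, budget_tokens: int, long_prompt_words: int = 50,
                 alert_ratio: float = 0.8):
        self.budget_tokens = budget_tokens
        self.long_prompt_words = long_prompt_words
        self.alert_ratio = alert_ratio
        self.used_tokens = 0

    def choose_model(self, prompt: str) -> str:
        # Assumption: prompt length is a rough proxy for task complexity.
        if len(prompt.split()) > self.long_prompt_words:
            return "large-model"
        return "small-model"

    def record_usage(self, tokens: int) -> bool:
        """Add usage; return True once the alert threshold has been reached."""
        if self.used_tokens + tokens > self.budget_tokens:
            raise BudgetExceeded(
                f"{self.used_tokens + tokens} tokens would exceed cap of {self.budget_tokens}"
            )
        self.used_tokens += tokens
        return self.used_tokens >= self.alert_ratio * self.budget_tokens


router = TieredRouter(budget_tokens=1000)
print(router.choose_model("Translate 'hello' to French."))
print(router.choose_model("word " * 60))
print(router.record_usage(500))
print(router.record_usage(400))
```

A production router would more likely classify requests by task type or by a lightweight classifier than by length, and would track budgets per user or per business unit.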

Phase 4: Optimization, Evolution, and Decommissioning

The product lifecycle doesn't end at deployment; it enters a continuous cycle of optimization and evolution. For LLM applications, this phase is particularly dynamic, driven by new model releases, user feedback, and ongoing performance monitoring.

4.1 Continuous Improvement and Performance Tuning

Maintaining peak performance and efficiency is an ongoing effort.

- Latency Reduction: Optimize every step of the LLM inference pipeline, from input processing to network communication with the LLM Gateway/Proxy, to the LLM's response generation. Techniques include model quantization, batching, and using faster models for specific tasks.

- Throughput Improvement: Scale infrastructure, optimize request handling, and leverage asynchronous processing to maximize the number of requests the system can handle per unit of time. APIPark, for instance, claims performance rivaling Nginx: over 20,000 TPS on an 8-core CPU with 8 GB of memory, with cluster deployment available for larger traffic volumes.

- Model Fine-tuning and Iteration: Continuously evaluate the performance of your deployed LLM. Collect user feedback and operational data to identify areas where the model underperforms. Use this data to fine-tune the model further or refine prompt engineering strategies.

- A/B Testing and Experimentation: Systematically test different model versions, prompt templates, context management strategies, or pre/post-processing logic in production to identify what performs best against your defined metrics. This requires a robust experimentation platform.

4.2 Model Updates and Versioning Strategies

The rapid pace of LLM innovation means that new, improved models are constantly being released. A robust strategy for managing these updates is crucial.

- Semantic Versioning for Models and Prompts: Treat models and prompts like software artifacts, assigning versions (e.g., model-v1.0, prompt-v2.1). This allows for clear tracking of changes and ensures reproducibility.

- Blue/Green Deployments or Canary Releases: For significant model or prompt changes, use strategies like blue/green deployments (deploying the new version alongside the old and switching traffic) or canary releases (gradually rolling out the new version to a small subset of users). This minimizes risk and allows for quick rollbacks.

- Backward Compatibility: Design your API interfaces (especially through the LLM Gateway) to be backward-compatible with older model versions as much as possible, to avoid breaking existing applications during upgrades.

- Knowledge Base Refresh: For RAG-based systems, establish a pipeline for regularly updating and indexing your knowledge base to ensure the LLM always has access to the most current information.
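A canary release needs a stable way to decide which users see the new version. One common approach, sketched here with hypothetical function names and version labels, hashes the user ID into one of 100 buckets so that each user consistently lands on the same side of the split.

```python
import hashlib


def canary_bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def select_version(user_id: str, stable: str, canary: str, canary_percent: int) -> str:
    """Send roughly canary_percent of users to the canary version."""
    return canary if canary_bucket(user_id) < canary_percent else stable


for user in ["alice", "bob", "carol"]:
    print(user, select_version(user, "prompt-v2.1", "prompt-v2.2", canary_percent=10))
```

Raising `canary_percent` gradually widens the rollout without reshuffling users already on the canary, and setting it to 0 acts as an immediate rollback.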

4.3 Feedback Loops and User Experience Enhancement

User feedback is an invaluable resource for driving product improvements.

- Explicit Feedback Mechanisms: Implement features like "thumbs up/down" for LLM responses, free-text feedback forms, or rating systems directly within the application.

- Implicit Feedback Analysis: Analyze user interaction patterns, such as how users rephrase prompts, abandon conversations, or modify LLM-generated content, to infer areas for improvement.

- Human Review of Flagged Content: For safety-critical applications, establish processes for human review of LLM outputs that trigger moderation filters or receive negative user feedback.

- Data Analysis: Analyze historical call data to surface long-term trends and performance changes. APIPark’s data analysis features, for example, display these trends, helping businesses carry out preventive maintenance and identify areas for UX improvement before issues manifest.
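To make explicit feedback actionable, thumbs up/down events can be aggregated per prompt version and used to queue weak versions for human review. The sketch below assumes a hypothetical `FeedbackEvent` record and illustrative thresholds (`min_votes`, `min_approval`); a real system would read these events from its logging or analytics store.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackEvent:
    prompt_version: str  # e.g. "prompt-v2.1"
    thumbs_up: bool      # explicit rating collected in the UI


def approval_rates(events):
    """Return {prompt_version: (approval_rate, vote_count)}."""
    ups, totals = Counter(), Counter()
    for event in events:
        totals[event.prompt_version] += 1
        if event.thumbs_up:
            ups[event.prompt_version] += 1
    return {version: (ups[version] / n, n) for version, n in totals.items()}


def flag_for_review(events, min_votes=20, min_approval=0.7):
    """List versions with enough votes whose approval is below the threshold."""
    return sorted(
        version
        for version, (rate, votes) in approval_rates(events).items()
        if votes >= min_votes and rate < min_approval
    )
```

The `min_votes` floor keeps a handful of early ratings from triggering a review; the right values depend on your traffic and tolerance for noise.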

4.4 Responsible AI in Production and Auditing

Ethical considerations extend beyond design into the operational lifetime of the product.

- Continuous Bias Monitoring: Deploy systems to continuously monitor for emergent biases in LLM outputs as user interactions evolve and data distributions shift.

- Fairness and Transparency Auditing: Regularly audit the system for fairness across different user groups and ensure that transparency claims are upheld in practice.

- Data Governance and Privacy Audits: Conduct periodic audits to ensure ongoing compliance with data privacy regulations and internal data governance policies, especially regarding the data processed by the LLM.

- Security Vulnerability Assessments: Regular penetration testing and vulnerability scanning of the entire LLM application stack, including the LLM Gateway and LLM Proxy, are crucial to identify and address security weaknesses.
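As one concrete form of continuous bias monitoring, a job can periodically compare how often some outcome (for example, a refusal or a moderation flag) occurs for different user groups. The sketch below computes a simple rate gap between groups; the function names, the group labels, and the choice of this particular metric are assumptions for illustration, and a single metric like this is not a complete fairness audit.

```python
from collections import defaultdict


def outcome_rates(records):
    """records: iterable of (group, outcome_flag) pairs. Returns {group: rate}."""
    counts = defaultdict(lambda: [0, 0])  # group -> [flagged, total]
    for group, flagged in records:
        counts[group][0] += int(flagged)
        counts[group][1] += 1
    return {group: hits / total for group, (hits, total) in counts.items()}


def max_rate_gap(records):
    """Largest difference in outcome rate between any two groups."""
    rates = outcome_rates(records)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


def needs_audit(records, tolerance=0.1):
    """True when the gap between groups exceeds the (illustrative) tolerance."""
    return max_rate_gap(records) > tolerance
```

Running this on a rolling window of production logs gives an early signal that output behavior has drifted for some group, which can then trigger the deeper audits described above.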

4.5 Decommissioning

Eventually, all products reach the end of their useful life. For LLM applications, this might involve retiring old models, features, or the entire product.

- Sunset Planning: Plan for the orderly deprecation of older models or features. Communicate changes to users well in advance.

- Data Archiving and Retention: Securely archive relevant operational data, logs, and model artifacts in accordance with data retention policies.

- Resource De-provisioning: Carefully de-provision infrastructure resources (e.g., GPU instances) to avoid incurring unnecessary costs.

Conclusion

Optimizing the product lifecycle for LLM software development is a multifaceted and continuous endeavor that demands a holistic, adaptive, and responsible approach. The unique characteristics of Large Language Models – their probabilistic nature, rapid evolution, contextual dependencies, and inherent ethical considerations – necessitate a departure from traditional PLM strategies. By meticulously addressing each phase, from initial strategic design through iterative development, robust deployment, and ongoing optimization, organizations can unlock the immense potential of LLMs while mitigating significant risks.

The strategic implementation of architectural components such as the LLM Gateway and LLM Proxy proves invaluable for centralizing control, enhancing security, optimizing costs, and streamlining model management. Furthermore, a well-defined Model Context Protocol is the bedrock for building intelligent, coherent, and effective conversational and generative AI applications. Platforms like APIPark exemplify how an open-source AI gateway and API management solution can empower developers and enterprises to navigate this complex landscape, offering quick integration, unified API formats, and end-to-end API lifecycle management essential for the longevity and success of LLM-powered products.

As LLMs continue to advance, the emphasis on structured PLM will only grow. Embracing agile methodologies, prioritizing MLOps best practices, fostering a culture of continuous learning, and embedding ethical AI principles at every stage will be critical for building cutting-edge, responsible, and sustainable LLM software that delivers lasting value in an ever-evolving technological frontier.

Frequently Asked Questions (FAQs)

1. What is the primary difference in PLM for LLM software compared to traditional software? The primary difference lies in managing inherent non-determinism, rapid model evolution, complex context management, significant computational costs, and heightened ethical considerations. Traditional PLM often deals with deterministic logic and slower-evolving technologies, whereas LLM PLM must be highly agile, iterative, and focused on managing model behavior, prompt effectiveness, and data quality throughout the entire lifecycle, including continuous monitoring for bias and hallucinations.

2. Why is an LLM Gateway important for LLM software development? An LLM Gateway is crucial because it acts as a centralized entry point for all LLM interactions, offering numerous benefits. It provides a unified API for disparate LLMs, centralizes security (authentication, authorization, API key management), manages traffic (rate limiting, load balancing), optimizes costs (token tracking, budget caps), and enables model agility by abstracting away provider-specific implementations. This architectural component simplifies integration, enhances control, and ensures scalability and reliability for LLM applications.

3. What role does a Model Context Protocol play in LLM applications? A Model Context Protocol is fundamental for building sophisticated LLM applications, especially those requiring multi-turn conversations or rich, informed responses. It defines the standardized way an application structures and manages all relevant information (conversational history, user data, external knowledge, system instructions) that is sent to the LLM. This protocol helps ensure the LLM receives sufficient and coherent context, which reduces hallucinations, improves response relevance, optimizes token usage, and makes the application more robust and predictable.

4. How does an LLM Proxy differ from an LLM Gateway? While both act as intermediaries, an LLM Gateway typically provides broader, centralized control over multiple LLM services, handling API management, security, and traffic routing across different models or providers. An LLM Proxy, on the other hand, is often a more specialized layer closer to the application, focusing on enhancing specific LLM interactions. Its primary functions include request/response pre-processing (sanitization, formatting), post-processing (parsing, filtering), advanced caching, fine-grained rate limiting, and robust retry/fallback logic, often augmenting the capabilities of a gateway or a direct LLM connection.

5. What are the key strategies for managing costs in LLM software development? Managing LLM costs involves several strategies:

- Token Usage Tracking: Meticulously monitor input and output token consumption across features and users.

- Prompt Optimization: Craft concise and effective prompts to minimize token count while achieving desired results.

- Model Tiering: Utilize different LLM models based on cost-effectiveness and task complexity (e.g., cheaper models for simple tasks, more powerful ones for complex queries).

- Caching: Implement caching at the LLM Gateway or LLM Proxy level to avoid redundant LLM calls for identical requests.

- Budget Alerts and Caps: Set automated notifications and hard limits to prevent unexpected expenditure.

- Batching Requests: Where feasible, combine multiple requests into a single batch to reduce API call overhead.

- RAG over Fine-tuning: Leverage Retrieval Augmented Generation (RAG) to provide up-to-date knowledge without the high computational cost of frequent model fine-tuning.
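Three of these strategies (token usage tracking, caching, and a budget cap) can be combined in a thin wrapper around the model call. The following is a rough sketch under stated assumptions: `call_model` is a hypothetical callable that returns the response text together with input and output token counts, the per-1,000-token prices are placeholders, and the cache lives in process memory rather than at a gateway or proxy.

```python
import hashlib


class BudgetExceeded(RuntimeError):
    """Raised when the spending cap has been reached."""


class CachedBudgetedClient:
    def __init__(self, call_model, budget_usd, usd_per_1k_input, usd_per_1k_output):
        # call_model(model, prompt) -> (text, input_tokens, output_tokens)
        # is a hypothetical stand-in for your provider or gateway client.
        self._call_model = call_model
        self.budget_usd = budget_usd
        self.usd_per_1k_input = usd_per_1k_input
        self.usd_per_1k_output = usd_per_1k_output
        self.spent_usd = 0.0
        self._cache = {}

    def complete(self, model: str, prompt: str) -> str:
        key = hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()
        if key in self._cache:
            return self._cache[key]  # identical request: no new tokens billed

        # Hard cap: refuse new calls once the budget is used up.
        if self.spent_usd >= self.budget_usd:
            raise BudgetExceeded(f"budget of ${self.budget_usd:.2f} reached")

        text, input_tokens, output_tokens = self._call_model(model, prompt)
        self.spent_usd += (
            input_tokens / 1000 * self.usd_per_1k_input
            + output_tokens / 1000 * self.usd_per_1k_output
        )
        self._cache[key] = text
        return text
```

Exact-match caching like this only suits requests where returning a previously generated answer is acceptable; the cap is checked before each call, so the final call may push spending slightly past the budget.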

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is built with Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command:

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.