Optimizing TPS with Step Function Throttling

Transactions Per Second (TPS) is one of the most direct measures of how well a system handles load. It shapes user experience, drives operational cost, and often determines whether an online service, application, or enterprise platform succeeds. As interconnected services, mobile applications, and data volumes keep growing, holding TPS at a healthy level has become harder. Organizations must cope with unpredictable user demand and sudden traffic surges while keeping infrastructure spending under control. Throttling is one of the main traffic management tools for balancing elasticity against stability. Conventional throttling provides a baseline of protection, but it adapts poorly to real-time changes in system load. Step function throttling addresses that gap. This article examines the problems caused by unmanaged traffic, evaluates traditional throttling approaches, and then covers the architecture, implementation, and benefits of step function throttling as an adaptive strategy for system resilience and performance, particularly in API ecosystems managed by API gateways.

The Indispensable Significance of Transactions Per Second (TPS)

To appreciate why advanced throttling mechanisms like step function throttling are needed, it helps to first understand the importance of Transactions Per Second (TPS) in any contemporary digital system. At its core, TPS quantifies the number of atomic operations, or transactions, that a system can successfully process within a single second. These transactions can range from a simple database query, API call, or user login to a complex multi-step financial operation. The metric looks simple, but it has deep implications for system performance and business continuity.

Firstly, and perhaps most immediately, TPS directly correlates with user experience. In an age where instant gratification is the norm, users expect applications and services to be responsive and performant. A system that can handle a high TPS without latency spikes or errors ensures that user requests are processed swiftly, leading to a smooth, frustration-free experience. Conversely, a low or inconsistent TPS can manifest as slow loading times, unresponsive interfaces, and ultimately, user abandonment. For e-commerce platforms, this translates directly to lost sales; for social media, it means reduced engagement; and for critical enterprise applications, it can impact productivity and decision-making.

Secondly, optimal TPS is a cornerstone of system stability and reliability. Every system has a finite capacity. When the incoming transaction rate consistently exceeds this capacity, the system begins to buckle under the pressure. This can lead to resource exhaustion (CPU, memory, network I/O, database connections), queue buildups, cascading failures across interconnected services, and ultimately, system outages. A well-managed TPS ensures that the system operates within its healthy limits, preventing catastrophic failures and maintaining continuous service availability. This is particularly crucial for mission-critical applications where downtime carries severe financial, reputational, and even safety consequences.

Thirdly, TPS has significant ramifications for cost efficiency. In cloud-native environments, resources are often provisioned and billed based on usage. Over-provisioning to handle infrequent peak loads is economically wasteful, leading to underutilized resources and unnecessary expenditure. Conversely, under-provisioning risks performance degradation and outages. Optimizing TPS allows organizations to right-size their infrastructure, ensuring that resources are adequately matched to demand without excessive overhead. Dynamic throttling, in particular, allows for more intelligent scaling decisions, maximizing resource utilization while maintaining performance.

Finally, TPS is intrinsically linked to business continuity and competitive advantage. In today's hyper-competitive market, businesses cannot afford to have their digital services falter. A system capable of sustaining high and consistent TPS under varying loads instills confidence in users and partners, strengthens brand reputation, and provides a distinct competitive edge. It enables businesses to confidently launch new features, handle marketing campaigns, and scale operations without fear of infrastructure collapse. The ability to process more transactions per second, reliably and efficiently, directly translates into the capacity to serve more customers, process more data, and drive more revenue.

However, achieving this "goldilocks zone" for TPS – not too low to hinder performance, not too high to cause overload – is a complex undertaking. It depends on a myriad of factors, including the underlying infrastructure (servers, network, storage), the efficiency of application design and algorithms, database performance and query optimization, and external dependencies. Each component in the service chain contributes to the overall TPS, and a bottleneck in any single area can severely constrain the entire system's throughput. This inherent complexity underscores the need for sophisticated management strategies that can dynamically respond to fluctuations and ensure that TPS remains within acceptable, healthy bounds.

The Perils of Unmanaged Traffic: Why Throttling is Imperative

In a perfect world, system load would be predictable, gradual, and always within capacity limits. Unfortunately, the real world of digital services is far from this ideal. Traffic to applications and APIs is inherently volatile, subject to sudden surges, unexpected dips, and malicious attacks. When this traffic remains unmanaged, the consequences can range from minor annoyances to catastrophic system failures, illustrating precisely why throttling mechanisms are not merely an optional feature but a critical, indispensable component of any robust architecture.

The most immediate and noticeable consequence of unmanaged traffic exceeding system capacity is a precipitous decline in performance. Users experience increased latency, meaning their requests take significantly longer to receive a response. This delay can render interactive applications unusable, cause frustration, and lead to high bounce rates. For transactional systems, extended processing times can lead to timeouts and incomplete operations, creating data inconsistencies and requiring manual intervention.

Beyond latency, an overloaded system is prone to a sharp increase in error rates. As resources like CPU cycles, memory, and database connections become saturated, components begin to fail. Services might respond with HTTP 5xx errors (server errors), indicating that they are unable to process the request. These errors not only degrade the user experience but can also propagate through a microservices architecture, triggering cascading failures where one failing service overwhelms its downstream dependencies, leading to a widespread outage. Imagine a critical user authentication service collapsing under unmanaged load, effectively locking out all users from multiple dependent applications.

The ultimate nightmare scenario for unmanaged traffic is a complete system crash or outage. When resources are fully exhausted, the operating system or application processes might become unresponsive or terminate unexpectedly. This results in complete unavailability of the service, leading to lost revenue, damage to brand reputation, and potential compliance penalties for enterprises. High-profile examples of major websites crashing during flash sales or critical events are stark reminders of the fragility of systems without adequate traffic management.

Several common scenarios highlight the need for robust throttling:

- Sudden Traffic Surges: These can be legitimate, such as during highly anticipated product launches, flash sales, viral marketing campaigns, or major news events that drive a flood of users to a particular service. Without throttling, the sudden influx can overwhelm servers, databases, and APIs, leading to performance degradation or complete collapse. Even well-provisioned systems can be caught off guard by unprecedented demand spikes.

- Denial-of-Service (DoS) and Distributed Denial-of-Service (DDoS) Attacks: Malicious actors deliberately flood a system with an overwhelming volume of traffic to disrupt service or make it unavailable to legitimate users. Throttling acts as a crucial first line of defense, mitigating the impact of these attacks by limiting the rate at which requests are processed, preventing resource exhaustion. While dedicated DDoS protection services offer a broader defense, application-level throttling complements these by protecting specific API endpoints.

- Faulty or Misbehaving Clients: An improperly configured client application, a poorly written script, or even a bug in a legitimate service can inadvertently generate an excessive volume of requests to an API. For instance, a client application stuck in a retry loop due to a transient error can quickly exhaust the API's resources. Throttling, particularly per-client or per-API key throttling, is essential to contain such rogue behavior and prevent it from impacting the broader service.

- Resource Contention and Downstream Dependencies: In a microservices architecture, a single user request might fan out into multiple calls to various internal services and databases. If one of these downstream services is slow or becomes overloaded, the upstream services waiting for its response can accumulate requests, leading to increased latency and resource consumption. Throttling at the API gateway level or even within individual services can prevent this backpressure from overwhelming the entire system, ensuring that bottlenecks in one part of the system do not cause a domino effect.

These scenarios vividly illustrate that simply adding more hardware or scaling out services, while important, is often not enough. There's a critical need for mechanisms that intelligently manage and control the rate of incoming requests, ensuring that the system processes what it can handle gracefully, rejects excess load predictably, and maintains stability even under extreme pressure. This is the fundamental role of throttling, setting the stage for more sophisticated approaches like step function throttling that move beyond static limits to adaptive, real-time control.

Traditional Throttling Mechanisms: A Foundation with Limitations

Before delving into the intricacies of step function throttling, it's essential to understand the traditional approaches to request throttling, as they form the foundational concepts upon which more advanced techniques are built. These methods, while effective for certain scenarios, also highlight the limitations that step function throttling aims to overcome.

At the outset, it's useful to distinguish between rate limiting and throttling, although these terms are often used interchangeably.

- Rate Limiting typically refers to strictly enforcing a maximum number of requests a client can make within a defined time window. Once the limit is reached, subsequent requests from that client are immediately rejected until the window resets. Its primary goal is often to prevent abuse, ensure fair usage among clients, and protect against DoS attacks.

- Throttling, on the other hand, is a more general term that often implies a more nuanced approach. While it also involves limiting request rates, throttling can also involve delaying requests, queuing them, or selectively dropping them based on current system health, rather than just a hard, static client-specific limit. It's often employed to protect the system's overall capacity and ensure stability under varying loads.

For the purpose of this discussion, we will use "throttling" in its broader sense, encompassing both hard limits and adaptive flow control.

The most common traditional throttling mechanisms rely on fixed-rate limits. These involve setting a predetermined maximum number of requests that a system (or a specific component, or a specific client) can handle per unit of time (e.g., 100 requests per second, 1000 requests per minute). When this limit is reached, any additional incoming requests are typically rejected, often with an HTTP 429 "Too Many Requests" status code, or queued if the system is designed to handle temporary backlogs.
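As a rough illustration of a fixed-rate limit, the Python sketch below counts requests in a fixed window and answers with 429 once the limit is reached. The names `FixedWindowLimiter` and `handle`, and the explicit `now` argument (passed in instead of reading a real clock so the behavior is easy to trace), are assumptions made for this example and do not come from any particular gateway.

```python
from dataclasses import dataclass, field


@dataclass
class FixedWindowLimiter:
    """Allow at most `limit` requests per `window_seconds` window."""
    limit: int
    window_seconds: float
    _window_start: float = field(default=0.0, init=False)
    _count: int = field(default=0, init=False)

    def allow(self, now: float) -> bool:
        # Start a fresh window once the current one has expired.
        if now - self._window_start >= self.window_seconds:
            self._window_start = now
            self._count = 0
        if self._count < self.limit:
            self._count += 1
            return True
        return False


def handle(limiter: FixedWindowLimiter, now: float) -> int:
    """Return the HTTP status a gateway might send for one request."""
    return 200 if limiter.allow(now) else 429


limiter = FixedWindowLimiter(limit=2, window_seconds=1.0)
statuses = [handle(limiter, t) for t in (0.0, 0.1, 0.2, 1.0)]
print(statuses)  # [200, 200, 429, 200]
```

The third request falls inside the first window and is rejected; the fourth arrives after the window has expired and is admitted again. A queueing variant would hold the rejected request instead of returning 429.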

Several algorithms are commonly used to implement fixed-rate throttling:

- Token Bucket Algorithm:

- Concept: Imagine a bucket that can hold up to a fixed maximum number of "tokens." Tokens are added to the bucket at a constant rate, and any tokens that arrive while the bucket is full are discarded. Each incoming request consumes one token. If a request arrives and the bucket is empty, the request is rejected or queued until a token becomes available.

- Pros: Allows for bursts of traffic up to the bucket's capacity, as long as tokens are available. This makes it more flexible than a strict fixed window for handling transient spikes. The bucket size determines the allowed burst size, and the token refill rate determines the sustained rate.

- Cons: Still relies on static parameters (bucket size, refill rate). If the system's actual capacity fluctuates dynamically due to internal factors (e.g., database load, downstream service issues), a fixed token bucket might not adapt optimally.

- Leaky Bucket Algorithm:

- Concept: Visualize a bucket with a hole at the bottom, through which requests "leak" out at a constant rate, representing the system's processing capacity. Incoming requests are added to the bucket. If the bucket is full, new requests are rejected.

- Pros: Smoothes out bursty traffic into a steady stream, preventing the system from being overwhelmed by sudden spikes. It effectively enforces a maximum output rate.

- Cons: Similar to the token bucket, its parameters (bucket size, leak rate) are typically fixed. It doesn't inherently understand the system's internal health or real-time ability to process requests. Bursts can still lead to rejections if the bucket fills too quickly, even if the system could handle a higher rate temporarily.
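The two algorithms above can be sketched in a few lines of Python. This is a minimal, single-threaded illustration rather than a production limiter: the class names, the `allow(now)` method, and the explicit `now` timestamps are assumptions chosen for this example, and the leaky bucket is modeled as a fill level instead of a real request queue.

```python
class TokenBucket:
    """Tokens refill at a constant rate; each request spends one token."""

    def __init__(self, capacity: float, refill_rate: float, now: float = 0.0):
        self.capacity = capacity          # maximum burst size
        self.refill_rate = refill_rate    # tokens added per second
        self.tokens = capacity            # start with a full bucket
        self.last = now

    def allow(self, now: float) -> bool:
        # Add the tokens earned since the last call, capped at capacity.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class LeakyBucket:
    """Requests fill the bucket; it drains at a constant rate."""

    def __init__(self, capacity: float, leak_rate: float, now: float = 0.0):
        self.capacity = capacity      # how many requests may be pending
        self.leak_rate = leak_rate    # requests drained per second
        self.level = 0.0              # start with an empty bucket
        self.last = now

    def allow(self, now: float) -> bool:
        # Drain what has leaked out since the last call.
        elapsed = now - self.last
        self.level = max(0.0, self.level - elapsed * self.leak_rate)
        self.last = now
        if self.level + 1.0 <= self.capacity:
            self.level += 1.0
            return True
        return False  # bucket is full, so the request is rejected


tb = TokenBucket(capacity=3, refill_rate=1.0)
print([tb.allow(0.0) for _ in range(4)])  # [True, True, True, False]
print(tb.allow(1.0))                      # True: one token refilled

lb = LeakyBucket(capacity=2, leak_rate=1.0)
print([lb.allow(0.0) for _ in range(3)])  # [True, True, False]
print(lb.allow(1.0))                      # True: one slot drained
```

In both classes the capacity and rate are fixed at construction time, which is exactly the static behavior the "Cons" entries describe.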

Pros of Fixed-Rate Throttling:

- Simplicity: Easy to understand, configure, and implement, especially at the API gateway level where it can be applied broadly.

- Predictability: Provides a clear, hard limit that developers and clients can anticipate and design around.

- Fairness: Can be configured to ensure fair usage among different clients or consumers of an API.

- Basic Protection: Offers a fundamental layer of defense against abuse, DoS attacks, and basic resource exhaustion.

Cons and Limitations of Fixed-Rate Throttling: The primary drawback of fixed-rate throttling is its lack of adaptability. It operates on a static, predetermined maximum, irrespective of the system's actual, real-time capacity and health.

- Suboptimal Resource Utilization:

- Under-utilization: If the fixed limit is set conservatively to handle worst-case scenarios, the system might be underutilized during periods of lower load, wasting resources.

- Overload during degraded state: Conversely, if the system is experiencing internal issues (e.g., a slow database, a failing microservice, high CPU due to garbage collection), its effective capacity might temporarily drop significantly below the fixed throttle limit. In such cases, the fixed throttle would still allow requests up to its limit, potentially pushing the already struggling system into a deeper crisis or even failure.

- Difficulty in Capacity Planning: Determining the "correct" fixed limit is often a challenging exercise. It requires extensive load testing and capacity planning, often leading to either over-provisioning (to be safe) or under-provisioning (to save costs). Adjusting these limits typically requires manual intervention and re-deployment, which is cumbersome and slow to react to dynamic changes.

- Ineffective for Dynamic Workloads: Modern cloud environments and microservices architectures are inherently dynamic. Workloads fluctuate dramatically, and the capacity of services can change due to auto-scaling events, transient network issues, or variable resource consumption by different types of requests. Fixed limits struggle to cope with this fluidity.

- Lack of Graceful Degradation: When the fixed limit is hit, excess requests are abruptly rejected. This provides no mechanism for the system to gracefully reduce load, prioritize critical requests, or offer an alternative experience to users, often leading to a sudden and harsh cutoff.

These limitations underscore the need for a more intelligent, dynamic, and adaptive approach to traffic management, one that can respond in real-time to the internal state of the system rather than relying solely on predetermined, static thresholds. This is precisely where step function throttling offers a compelling evolution, moving beyond the foundational yet rigid principles of traditional methods to a truly resilient strategy.

Introducing Step Function Throttling: An Adaptive Paradigm

The limitations of traditional, fixed-rate throttling mechanisms, particularly their inability to adapt to real-time system conditions, paved the way for more sophisticated approaches. Among these, step function throttling emerges as a powerful, dynamic paradigm designed to overcome the rigidity of static limits, enabling systems to maintain stability and performance even under highly variable and unpredictable loads.

At its essence, step function throttling is an adaptive capacity management strategy. Unlike fixed-rate throttling, which enforces a single, predetermined maximum request rate, step function throttling dynamically adjusts the allowed request rate in a series of discrete "steps," based on continuous monitoring of the system's actual health and performance metrics. It's a feedback-driven mechanism where the system constantly observes its own state and responds by either tightening or loosening the throttle.

How it Differs from Fixed-Rate Throttling:

The core distinction lies in its responsiveness. A fixed-rate throttle makes a simple binary decision: the limit has either been reached or it has not. Step function throttling instead moves the limit itself up or down through graded levels of system health. It recognizes that a system's capacity is not a static ceiling but a fluctuating boundary influenced by a myriad of factors, including:

- Internal Resource Utilization: CPU load, memory consumption, disk I/O, network bandwidth.

- Application-Specific Metrics: Number of active threads, queue lengths, database connection pool saturation, garbage collection activity.

- Performance Indicators: Latency (e.g., p90, p99 response times for key operations), error rates (e.g., HTTP 5xx responses).

- Dependent Service Health: The status and performance of downstream APIs or microservices that the current system relies upon.

Instead of a single "max TPS" value, step function throttling defines multiple operational tiers, or "steps," each associated with a different maximum allowable request rate. The system then transitions between these steps based on predefined thresholds tied to its health metrics.

Core Principles of Step Function Throttling:

- Continuous Monitoring: The system continuously collects and analyzes key operational metrics in real-time. This forms the basis for all throttling decisions.

- Defined Steps and Thresholds:

- Steps: These are distinct operational states, each corresponding to a specific maximum allowable request rate (e.g., "Full Capacity," "Reduced Capacity 1," "Reduced Capacity 2," "Critical Capacity").

- Thresholds: For each metric, specific values are defined that trigger a transition between steps. For example:

- If CPU utilization exceeds 70% for 30 seconds, move to "Reduced Capacity 1" (e.g., throttle to 80% of normal).

- If latency (p99) for a critical API endpoint exceeds 500ms for 15 seconds, move to "Reduced Capacity 2" (e.g., throttle to 50% of normal).

- If the error rate (HTTP 5xx) climbs above 5%, move to "Critical Capacity" (e.g., throttle to 20% or even a complete circuit break).

- Conversely, if conditions improve (e.g., CPU drops below 50% for 1 minute), the system might gradually increase the throttle back to a higher capacity step.

- Feedback Loops: The system forms a closed-loop feedback mechanism. Monitoring data informs throttling decisions, and the throttling itself influences the system's health, which is then re-monitored. This continuous cycle allows the system to self-regulate and adapt to changing conditions.
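To make the threshold idea concrete, here is a small Python sketch of a "metric above X for N seconds" check, wired to the three example rules listed above (CPU above 70% for 30 seconds, p99 latency above 500ms for 15 seconds, 5xx rate above 5%). The names `SustainedCondition`, `make_rules`, and `throttle_fraction` are hypothetical, timestamps are passed in explicitly, and the error-rate rule is assumed to fire immediately because the text gives no duration for it. Gradual recovery is deliberately left out of this sketch.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class SustainedCondition:
    """True once a metric has stayed above `threshold` for `hold_seconds`."""
    threshold: float
    hold_seconds: float
    _since: Optional[float] = field(default=None, init=False)

    def update(self, value: float, now: float) -> bool:
        if value <= self.threshold:
            self._since = None   # breach ended, so the timer resets
            return False
        if self._since is None:
            self._since = now    # first sample of a new breach
        return now - self._since >= self.hold_seconds


def make_rules() -> Dict[str, SustainedCondition]:
    return {
        "cpu": SustainedCondition(70.0, 30.0),
        "p99_ms": SustainedCondition(500.0, 15.0),
        "error_pct": SustainedCondition(5.0, 0.0),  # assumed: no hold time
    }


def throttle_fraction(rules: Dict[str, SustainedCondition], cpu: float,
                      p99_ms: float, error_pct: float, now: float) -> float:
    """Return the fraction of normal traffic to admit; the worst rule wins."""
    # Update every rule on every sample so that all timers stay accurate.
    cpu_hit = rules["cpu"].update(cpu, now)
    latency_hit = rules["p99_ms"].update(p99_ms, now)
    error_hit = rules["error_pct"].update(error_pct, now)
    if error_hit:
        return 0.2   # "Critical Capacity"
    if latency_hit:
        return 0.5   # "Reduced Capacity 2"
    if cpu_hit:
        return 0.8   # "Reduced Capacity 1"
    return 1.0       # "Full Capacity"


rules = make_rules()
print(throttle_fraction(rules, 75, 100, 0, now=0.0))   # 1.0: breach just began
print(throttle_fraction(rules, 75, 100, 0, now=30.0))  # 0.8: held for 30 s
print(throttle_fraction(rules, 60, 100, 6, now=31.0))  # 0.2: error rate over 5%
```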

Advantages of Step Function Throttling:

- Improved Resource Utilization: By dynamically adjusting the throttle based on actual capacity, the system avoids both under-utilization during low load and dangerous overload during high load or degraded conditions. Resources are used more efficiently, which is particularly beneficial in cloud environments where resource costs are significant.

- Enhanced Resilience and Stability: The system can proactively reduce incoming load before it reaches a critical breaking point. This prevents cascading failures, allows struggling components time to recover, and maintains a baseline level of service even under extreme stress. It shifts from a reactive "crash and recover" model to a proactive "adapt and stabilize" one.

- Better User Experience During High Load: Instead of abrupt rejections, step function throttling allows for more graceful degradation. While some requests might be delayed or rejected at lower throttle levels, the overall system remains responsive for a subset of users, rather than becoming completely unavailable for everyone. Prioritization rules can ensure critical transactions still pass through.

- Adaptability to Unforeseen Events: Whether it's a sudden viral traffic spike, an internal service degradation, or even a subtle memory leak slowing down an application, step function throttling can respond without manual intervention, providing an automated safety net.

Disadvantages and Complexities:

- Increased Setup Complexity: Implementing step function throttling requires a robust monitoring infrastructure, careful selection of metrics, precise definition of steps and thresholds, and a mechanism to apply these throttle adjustments. This is significantly more complex than setting a single fixed limit.

- Tuning Challenges: Defining the right thresholds and step increments is crucial. If thresholds are too aggressive, the system might over-throttle; if too lenient, it might not react quickly enough. Incorrect tuning can lead to system oscillations (constantly throttling up and down) or instability. Extensive testing and continuous refinement are necessary.

- Potential for Oscillations: Without proper damping mechanisms or hysteresis, the system might enter a state where it continuously switches between throttle levels, causing instability. Introducing delays in transitions and requiring sustained metric breaches helps mitigate this.

Despite these complexities, the benefits of enhanced resilience, optimized performance, and intelligent resource management make step function throttling a compelling choice for mission-critical applications and dynamic cloud-native architectures. It represents a significant leap forward in traffic management, transforming an often-static defense into an adaptive, self-healing mechanism.


Implementing Step Function Throttling: Components and Metrics

The practical implementation of step function throttling requires a well-orchestrated combination of monitoring, decision-making logic, and enforcement mechanisms. Understanding these components and the metrics they leverage is crucial for designing an effective adaptive throttling system.

Key Metrics for Decision Making

The intelligence of step function throttling stems from its ability to interpret the system's health through a diverse set of metrics. These metrics serve as the vital signs of your infrastructure and applications, signaling when adjustments to the throttle are necessary. They can generally be categorized into resource utilization, performance indicators, and application-specific metrics.

- Resource Utilization Metrics: These provide insights into how heavily the underlying infrastructure is being used.

- CPU Utilization: A high CPU percentage indicates that the processing units are constantly busy, potentially struggling to keep up with demand. Thresholds might be set, for example, at 70% or 80% to trigger a throttle reduction.

- Memory Usage: Excessive memory consumption (especially nearing limits) can lead to swapping to disk, significantly slowing down operations, or even out-of-memory errors. Monitoring heap usage, garbage collection frequency, and page faults is critical.

- Network I/O: High network bandwidth utilization or numerous dropped packets can indicate network saturation, which affects request processing.

- Disk I/O: For systems heavily reliant on persistent storage, high disk read/write operations or long queue depths can be a bottleneck.

- Database Connections/Pool Saturation: Databases are often a common bottleneck. Monitoring the number of active connections, connection pool utilization, and query execution times provides direct insight into database health. If the connection pool is consistently saturated, it's a strong indicator of database stress.

- Performance Indicators: These metrics directly reflect the user experience and system responsiveness.

- Latency (Response Time): This is perhaps the most direct measure of user experience. Instead of just average latency, it's often more informative to monitor percentile latencies, such as p90 (90% of requests complete within this time) or p99 (99% of requests complete within this time). A sudden increase in p99 latency for critical API endpoints is a strong signal for throttling.

- Error Rates (HTTP 5xx): A rising percentage of server-side errors (e.g., 500 Internal Server Error, 503 Service Unavailable) indicates that the system is failing to process requests successfully. This is a critical metric for triggering aggressive throttling or even circuit breaking.

- Request Queue Depths: For systems that queue incoming requests, monitoring the depth of these queues can indicate impending overload before other metrics fully reflect the stress. A rapidly growing queue suggests that the system cannot process requests as fast as they arrive.

- Application-Specific Metrics: These are highly customized to the specific application logic and internal operations.

- Worker Pool Availability: In applications that use worker pools (e.g., thread pools, message queues), monitoring the number of idle workers or the backlog of tasks awaiting processing can be a direct indicator of capacity.

- Business Transaction Success Rates: For critical business processes, monitoring the success rate of these specific transactions can provide a higher-level view of health.

- Rate of Successful Transactions: Raw TPS counts every request, including those that fail. Tracking successful transactions per second separately helps distinguish useful throughput from mere traffic volume.
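Two of the metrics above, percentile latency and 5xx error rate, are easy to compute from a sliding window of recent requests. The following Python sketch shows one way to do it using the nearest-rank percentile method. The class `MetricsWindow` and its method names are assumptions for this example; a real deployment would more likely read these values from its monitoring system.

```python
import math
from collections import deque
from typing import Deque, Tuple


class MetricsWindow:
    """Keep (timestamp, latency_ms, status) samples for the last N seconds."""

    def __init__(self, window_seconds: float):
        self.window_seconds = window_seconds
        self._samples: Deque[Tuple[float, float, int]] = deque()

    def _evict(self, now: float) -> None:
        # Drop samples that have aged out of the window.
        cutoff = now - self.window_seconds
        while self._samples and self._samples[0][0] <= cutoff:
            self._samples.popleft()

    def record(self, now: float, latency_ms: float, status: int) -> None:
        self._samples.append((now, latency_ms, status))
        self._evict(now)

    def percentile(self, pct: int, now: float) -> float:
        """Nearest-rank percentile of latency, or 0.0 if the window is empty."""
        self._evict(now)
        latencies = sorted(s[1] for s in self._samples)
        if not latencies:
            return 0.0
        rank = max(1, math.ceil(pct * len(latencies) / 100))
        return latencies[rank - 1]

    def error_rate(self, now: float) -> float:
        """Fraction of samples in the window with an HTTP 5xx status."""
        self._evict(now)
        if not self._samples:
            return 0.0
        errors = sum(1 for s in self._samples if 500 <= s[2] <= 599)
        return errors / len(self._samples)


window = MetricsWindow(window_seconds=10.0)
for i in range(100):
    # Latencies of 1..100 ms; the first five requests fail with a 500.
    window.record(now=0.0, latency_ms=float(i + 1), status=500 if i < 5 else 200)

print(window.percentile(99, now=1.0))  # 99.0
print(window.error_rate(now=1.0))      # 0.05
print(window.error_rate(now=20.0))     # 0.0: every sample has aged out
```

Sorting on every query keeps the sketch short; at high request rates a histogram or streaming quantile estimator would be a more realistic choice.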

Defining "Steps" and "Thresholds"

Once metrics are identified, the next step is to define the operational "steps" and the "thresholds" that dictate transitions between them. This is the heart of the step function logic.

Example Scenario: Let's consider a simplified example for an API gateway managing a critical service.

- Step 1: Full Capacity (Default State)

- Max TPS allowed: 10,000 requests/second.

- Conditions for maintaining: CPU < 60%, P99 Latency < 200ms, Error Rate < 1%.

- Step 2: Reduced Capacity (Initial Degradation)

- Max TPS allowed: 8,000 requests/second.

- Transition to (Trigger):

- CPU > 70% for 30 seconds, OR

- P99 Latency > 300ms for 15 seconds, OR

- Error Rate > 2% for 10 seconds.

- Transition from (Recovery):

- CPU < 50% for 60 seconds, AND

- P99 Latency < 200ms for 30 seconds.

- Step 3: Severely Reduced Capacity (Significant Stress)

- Max TPS allowed: 5,000 requests/second.

- Transition to (Trigger):

- CPU > 85% for 20 seconds, OR

- P99 Latency > 500ms for 10 seconds, OR

- Error Rate > 5% for 5 seconds.

- Transition from (Recovery):

- System is in Step 3 for at least 5 minutes, AND

- CPU < 60% for 90 seconds, AND

- P99 Latency < 300ms for 60 seconds.

- Step 4: Critical/Circuit Break (Imminent Failure)

- Max TPS allowed: 1,000 requests/second (or even 0, allowing only health checks).

- Transition to (Trigger):

- CPU > 95% for 10 seconds, OR

- P99 Latency > 1000ms for 5 seconds, OR

- Error Rate > 10% for 3 seconds.

- Transition from (Recovery):

- System is in Step 4 for at least 10 minutes, AND

- All metrics return to healthy levels (e.g., CPU < 50%, P99 Latency < 200ms, Error Rate < 1%) for a sustained period (e.g., 2 minutes).
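The four steps above can be expressed as data plus a small state machine. The Python sketch below is one possible encoding, not a reference implementation: `Step`, `StepController`, the metric keys (`cpu`, `p99`, `err`), and the rule tuple layout are all hypothetical. It also makes three assumptions the example does not spell out: the most severe step whose trigger has fired wins, recovery moves down one step per update, and a step is not left while its own trigger is still firing.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Metrics = Dict[str, float]            # e.g. {"cpu": 72.0, "p99": 250.0, "err": 0.5}
Rule = Tuple[str, str, float, float]  # (metric, ">" or "<", threshold, hold seconds)


@dataclass
class Step:
    name: str
    max_tps: int
    triggers: List[Rule]    # ANY sustained trigger escalates into this step
    recovery: List[Rule]    # ALL sustained rules are needed to leave it
    min_dwell: float = 0.0  # minimum seconds in the step before recovering


STEPS = [
    Step("full", 10_000, [], []),
    Step("reduced", 8_000,
         [("cpu", ">", 70, 30), ("p99", ">", 300, 15), ("err", ">", 2, 10)],
         [("cpu", "<", 50, 60), ("p99", "<", 200, 30)]),
    Step("severe", 5_000,
         [("cpu", ">", 85, 20), ("p99", ">", 500, 10), ("err", ">", 5, 5)],
         [("cpu", "<", 60, 90), ("p99", "<", 300, 60)], min_dwell=300),
    Step("critical", 1_000,
         [("cpu", ">", 95, 10), ("p99", ">", 1000, 5), ("err", ">", 10, 3)],
         [("cpu", "<", 50, 120), ("p99", "<", 200, 120), ("err", "<", 1, 120)],
         min_dwell=600),
]


class StepController:
    def __init__(self, steps: List[Step], now: float = 0.0):
        self.steps = steps
        self.level = 0
        self.entered_at = now
        self._since: Dict[Rule, float] = {}  # when each rule started holding

    def _held(self, rule: Rule, m: Metrics, now: float) -> bool:
        metric, op, threshold, hold = rule
        ok = m[metric] > threshold if op == ">" else m[metric] < threshold
        if not ok:
            self._since.pop(rule, None)  # condition broken, timer resets
            return False
        return now - self._since.setdefault(rule, now) >= hold

    def update(self, m: Metrics, now: float) -> Step:
        # Evaluate every rule on every sample so no timer goes stale.
        fired = [any([self._held(r, m, now) for r in s.triggers])
                 for s in self.steps]
        healthy = [bool(s.recovery) and all([self._held(r, m, now) for r in s.recovery])
                   for s in self.steps]
        worst = max((i for i, f in enumerate(fired) if f), default=0)
        current = self.steps[self.level]
        if worst > self.level:
            self.level, self.entered_at = worst, now           # escalate
        elif (self.level > 0 and healthy[self.level] and not fired[self.level]
              and now - self.entered_at >= current.min_dwell):
            self.level, self.entered_at = self.level - 1, now  # recover one step
        return self.steps[self.level]


controller = StepController(STEPS)
hot = {"cpu": 75.0, "p99": 100.0, "err": 0.0}
cool = {"cpu": 40.0, "p99": 100.0, "err": 0.0}
print(controller.update(hot, 0).name)    # full
print(controller.update(hot, 30).name)   # reduced: CPU > 70% held for 30 s
print(controller.update(cool, 31).name)  # reduced: recovery timer just started
print(controller.update(cool, 91).name)  # full: CPU < 50% held for 60 s
```

Keeping the steps in a plain list makes the thresholds easy to tune without touching the transition logic.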

Important Considerations for Steps and Thresholds:

- Hysteresis: Notice in the example that recovery thresholds are often more stringent or require longer durations than trigger thresholds. This prevents rapid, unstable oscillations between steps.

- Multiple Metrics: Decisions should ideally be based on a combination of metrics, as a single metric might not tell the whole story. Logical OR/AND conditions can be used.

- Time Windows: Metrics should be evaluated over specific time windows (e.g., "CPU > 70% for 30 seconds") to avoid reacting to transient spikes.
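The effect of hysteresis is easy to see on a noisy signal. The short Python sketch below compares a single threshold with a pair of enter/exit thresholds and counts how often each one flips state. The CPU samples and the function names are made up for illustration.

```python
from typing import List, Sequence


def single_threshold(samples: Sequence[float], threshold: float) -> List[bool]:
    """Throttle whenever the sample is above one fixed threshold."""
    return [s > threshold for s in samples]


def with_hysteresis(samples: Sequence[float], enter: float, exit_: float) -> List[bool]:
    """Start throttling above `enter`; stop only after dropping below `exit_`."""
    throttled = False
    states = []
    for s in samples:
        if not throttled and s > enter:
            throttled = True
        elif throttled and s < exit_:
            throttled = False
        states.append(throttled)
    return states


def transitions(states: Sequence[bool]) -> int:
    """Count how many times the throttle state changes."""
    return sum(1 for a, b in zip(states, states[1:]) if a != b)


# Hypothetical CPU readings hovering around 70% before load drops off.
cpu = [68, 72, 69, 71, 68, 73, 66, 55, 48, 45]

print(transitions(single_threshold(cpu, 70)))     # 6
print(transitions(with_hysteresis(cpu, 70, 50)))  # 2
```

With one threshold at 70% the throttle flips six times; with a 70% trigger and a 50% recovery threshold it engages once and releases once.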

Feedback Loops: The Self-Adjusting Mechanism

The entire system functions as a continuous feedback loop:

1. Monitor: Collect metrics from the system (applications, infrastructure, network).

2. Analyze: Evaluate collected metrics against defined thresholds.

3. Decide: Based on analysis, determine if a throttle adjustment is needed (move to a different step).

4. Act: Apply the new throttle limit to incoming requests.

5. Observe: Monitor the impact of the throttle adjustment on the metrics.

This loop allows the system to be self-healing and dynamically adaptive, constantly seeking its optimal operational state.
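A toy simulation can show the loop settling on a stable limit. In the Python sketch below, everything is an assumption made for illustration: CPU is modeled as a linear function of admitted load, the limits reuse the 10,000 / 8,000 / 5,000 / 1,000 TPS steps from the earlier example, and time windows are ignored so that each tick reacts immediately.

```python
from typing import List, Tuple

LIMITS = [10_000, 8_000, 5_000, 1_000]  # max TPS per step, healthiest first


def simulated_cpu(admitted_tps: float, capacity_tps: float) -> float:
    """Toy model: CPU% grows linearly with admitted load, capped at 100."""
    return min(100.0, 100.0 * admitted_tps / capacity_tps)


def run_loop(offered_tps: float, capacity_tps: float,
             ticks: int) -> List[Tuple[int, float]]:
    level = 0
    history = []
    for _ in range(ticks):
        limit = LIMITS[level]                        # Act: enforce current step
        admitted = min(offered_tps, limit)
        cpu = simulated_cpu(admitted, capacity_tps)  # Monitor / Observe
        if cpu > 70 and level < len(LIMITS) - 1:     # Analyze and Decide
            level += 1                               # tighten the throttle
        elif cpu < 50 and level > 0:
            level -= 1                               # loosen the throttle
        history.append((limit, cpu))
    return history


for limit, cpu in run_loop(offered_tps=9_000, capacity_tps=10_000, ticks=4):
    print(limit, cpu)
# 10000 90.0
# 8000 80.0
# 5000 50.0
# 5000 50.0
```

The loop tightens twice and then holds at 5,000 TPS, because 50% CPU sits between the 70% trigger and the 50% recovery threshold.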

Placement of Throttling

Where in the architecture should step function throttling be implemented?

- At the Edge (Load Balancers, API Gateway): This is a highly effective point for initial filtering. An API gateway or load balancer sits in front of all backend services, making it an ideal place to apply global or per-API throttling rules. It can reject requests before they even reach the backend, protecting the entire system.

- Service Level (Sidecars, Proxies): In a microservices architecture, individual services might implement their own throttling logic, especially for internal APIs, to protect themselves from misbehaving callers or to manage their specific resources. Sidecar proxies (e.g., within a service mesh) are excellent candidates for this.

- Application Level: Deep within the application code, specific resource-intensive operations might have their own throttling or circuit-breaking mechanisms to protect internal components (e.g., a specific database query or a complex computation).

For managing external API calls and providing a unified control point, the API gateway plays a central and strategic role. It acts as the frontline enforcer of policies, including sophisticated throttling. An open-source AI gateway and API management platform like APIPark is specifically designed to provide robust traffic management capabilities. It can serve as the infrastructure on which to implement such dynamic throttling strategies, offering features like traffic forwarding, load balancing, and detailed API call logging. These features are indispensable for collecting the metrics needed to make intelligent throttling decisions and applying the adjusted limits effectively across a multitude of APIs. APIPark's ability to manage the entire API lifecycle, from design to invocation, also means it has the necessary context to apply granular and intelligent throttling policies, ensuring that each API endpoint, or even specific clients, receive appropriate treatment based on their defined access patterns and the system's current health.

Design Patterns and Best Practices for Step Function Throttling

Implementing step function throttling effectively goes beyond merely setting up metrics and thresholds. It requires integrating it within a broader resilience strategy, adhering to design patterns, and following best practices to ensure stability, maintainability, and optimal performance.

Graceful Degradation and Prioritization

One of the significant advantages of adaptive throttling is its potential to enable graceful degradation. Instead of simply rejecting requests wholesale, the system can make intelligent choices about which requests to prioritize when under stress.

- Prioritize Critical Requests: Not all requests are equally important. For an e-commerce platform, processing an order payment is more critical than loading a product recommendation widget. When throttling, the system can be configured to favor requests from VIP customers, core business transactions, or internal administrative calls, even at lower throttle steps. This ensures that the most vital functions remain operational, albeit with potentially reduced overall throughput.

- Feature Degradation: In extreme cases, less critical features can be temporarily disabled or provided with a reduced quality of service (e.g., serving stale data from a cache instead of hitting the database for real-time updates). This offloads the backend system and allows it to allocate resources to essential services.

- Different Throttling Policies per API/Endpoint: A robust API gateway can apply different step function throttling policies to different API endpoints based on their criticality and resource consumption profile. A `/payment` endpoint might have a very strict, high-priority throttling policy, while a `/user-activity` endpoint might have a more relaxed one.
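Prioritization of this kind can be expressed as a small admission rule. The sketch below is an assumption-laden illustration: the `Priority` levels, the endpoint-to-priority mapping (including the `/cart` entry), the step names, and the one-level VIP bump are all hypothetical choices, and it sheds load by priority class rather than by rate.

```python
from enum import IntEnum


class Priority(IntEnum):
    LOW = 0
    NORMAL = 1
    CRITICAL = 2


# Hypothetical mapping of endpoints to priority classes.
ENDPOINT_PRIORITY = {
    "/payment": Priority.CRITICAL,
    "/cart": Priority.NORMAL,
    "/user-activity": Priority.LOW,
}

# Lowest priority that is still admitted at each throttle step (illustrative).
MIN_PRIORITY_BY_STEP = {
    "full": Priority.LOW,
    "high_load": Priority.LOW,
    "critical": Priority.NORMAL,
    "emergency": Priority.CRITICAL,
}


def admit(path: str, step: str, is_vip: bool = False) -> bool:
    """Decide whether a request is admitted at the current throttle step."""
    priority = ENDPOINT_PRIORITY.get(path, Priority.NORMAL)
    if is_vip:
        # VIP callers are bumped one level, capped at CRITICAL.
        priority = Priority(min(priority + 1, Priority.CRITICAL))
    return priority >= MIN_PRIORITY_BY_STEP[step]
```

With these settings, `/payment` is still served at the emergency step, while `/user-activity` is shed as soon as the system reaches the critical step unless the caller is a VIP.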

Backpressure Mechanisms

Throttling at the edge (e.g., an API gateway) is crucial, but it's equally important for services further downstream to communicate their load status upstream. This concept is known as backpressure.

- Propagating Load Information: If a backend service is struggling, it should signal its degraded state to the API gateway or upstream callers. This could be through dedicated health checks, specific HTTP status codes (e.g., 503 Service Unavailable with a `Retry-After` header), or through distributed tracing systems that show increasing latencies within the service.

- Reactive Throttling: The API gateway can then use this backpressure signal as an additional metric to adjust its step function throttle. If multiple downstream services are reporting degraded status, the gateway can proactively reduce the overall incoming rate, even if its own CPU/memory metrics are still healthy.
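As a rough illustration of consuming such a signal, the sketch below parses a `Retry-After` header (which may carry either a number of seconds or an HTTP date) and remembers how long a backend asked callers to back off. The `BackpressureSignal` class, its one-second default, and the plain-dict header lookup are assumptions made for this example.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
from typing import Dict, Optional


def retry_after_seconds(value: str, now: Optional[datetime] = None) -> Optional[float]:
    """Parse Retry-After as delay-seconds or an HTTP date; None if unparseable."""
    value = value.strip()
    if value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):  # exception type varies by Python version
        return None
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())


class BackpressureSignal:
    """Remembers until when a backend has asked callers to back off."""

    def __init__(self, default_delay: float = 1.0):
        self.default_delay = default_delay  # hypothetical fallback when no header
        self.blocked_until = 0.0

    def on_response(self, status: int, headers: Dict[str, str], now: float) -> None:
        if status == 503:
            delay = retry_after_seconds(headers.get("Retry-After", ""))
            if delay is None:
                delay = self.default_delay
            self.blocked_until = max(self.blocked_until, now + delay)

    def degraded(self, now: float) -> bool:
        return now < self.blocked_until
```

A gateway could treat `degraded()` as one more input to its step decision, alongside its own CPU and latency metrics.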

Complementary Resilience Patterns: Circuit Breakers and Bulkheads

Step function throttling should not operate in isolation. It complements other resilience patterns to create a truly robust system.

- Circuit Breakers: A circuit breaker pattern prevents an application from repeatedly trying to invoke a service that is likely to fail. When a service experiences a high number of failures, the circuit breaker "trips," short-circuiting calls to that service and quickly failing subsequent requests (or redirecting them to a fallback) for a defined period. This allows the failing service to recover without being continuously bombarded. Step function throttling can work in conjunction: if a circuit breaker is open for a critical downstream service, the step function throttle on the upstream API gateway might immediately drop to a "critical" step to prevent further load on the ailing dependency.

- Bulkhead Pattern: Inspired by ship compartments, the bulkhead pattern isolates failures within a system. It limits the resources (e.g., thread pools, connection pools) that a specific component or a call to an external service can consume. This prevents a failure or slowdown in one component from consuming all resources and affecting other parts of the system. Step function throttling can be applied within each bulkhead, ensuring that even isolated sections of the system adapt to their individual loads.
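The interplay between a circuit breaker and the throttle step can be sketched as follows. This is a simplified breaker written for illustration only (a consecutive-failure counter with a timed half-open state); the class, its parameters, and the `throttle_step` helper are assumptions, not the API of any particular library.

```python
from typing import Optional


class CircuitBreaker:
    """Opens after N consecutive failures; allows trial calls after a timeout."""

    def __init__(self, failure_threshold: int = 5, reset_timeout: float = 30.0):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self, now: float) -> bool:
        if self.opened_at is None:
            return True  # closed: calls flow normally
        # Half-open once the timeout has elapsed: let trial calls through.
        return now - self.opened_at >= self.reset_timeout

    def record(self, success: bool, now: float) -> None:
        if success:
            self.failures = 0
            self.opened_at = None  # close the breaker again
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = now  # (re)open and restart the timeout

    def is_open(self, now: float) -> bool:
        return not self.allow(now)


def throttle_step(metric_based_step: str, breaker: CircuitBreaker, now: float) -> str:
    """An open breaker on a critical dependency overrides the metric-based step."""
    return "critical" if breaker.is_open(now) else metric_based_step
```

The override is intentionally one-directional: an open breaker can force a more restrictive step, but a closed breaker never relaxes a step chosen from the gateway's own metrics.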

Observability: The Foundation of Adaptive Throttling

The entire adaptive throttling mechanism hinges on the ability to monitor the system effectively. Without comprehensive observability, the system cannot make informed decisions.

- Comprehensive Monitoring: This includes collecting all the metrics discussed previously (CPU, memory, latency, error rates, queue depths, etc.) from every layer of the stack: infrastructure, network, API gateway, individual services, and databases.

- Detailed API Call Logging: As mentioned in the description of APIPark, detailed API call logging is indispensable. It provides a granular record of every request, including timestamps, request/response bodies (optionally), latency, status codes, and client identifiers. This data is critical for:

  - Troubleshooting: Quickly diagnosing issues related to throttling (e.g., identifying why specific clients were throttled, or if the throttling itself is causing errors).

  - Policy Refinement: Analyzing historical data to identify trends, refine thresholds, and improve the accuracy of the step function.

  - Auditing and Compliance: Ensuring that throttling policies are applied fairly and transparently.

- Real-time Dashboards: Visualizing current system health and throttle status.

- Alerting: Setting up alerts for critical thresholds or for when the system transitions to lower throttle steps ensures that operations teams are immediately notified of potential issues.

- Distributed Tracing: Tools that trace a single request across multiple services are invaluable for understanding latency bottlenecks and identifying which services are causing backpressure, informing more precise throttling adjustments.

Testing and Tuning

Implementing step function throttling is an iterative process that requires rigorous testing and continuous tuning.

- Load Testing: Simulate various load scenarios, including gradual ramp-ups, sudden spikes, and sustained high load, to observe how the step function throttling responds. Test specific failure injection scenarios (e.g., intentionally slowing down a database) to see if the throttle reacts appropriately.

- A/B Testing/Canary Deployments: For complex changes, deploy the new throttling logic to a small subset of traffic first to observe its behavior in a production environment before a full rollout.

- Gradual Rollouts: Implement new thresholds or steps gradually. Start with conservative settings and gradually fine-tune them based on observed system behavior.

- Regular Review: System behavior and traffic patterns evolve. Periodically review the defined steps, metrics, and thresholds to ensure they remain relevant and effective.

Granularity of Throttling

Step function throttling can be applied at different levels of granularity:

- Global Throttling: Applies a single throttle to all incoming requests to a service or the entire API gateway.

- Per-Client/Per-API Key Throttling: Different clients or API keys might have different entitlement levels. The step function can dynamically adjust these individual limits based on the overall system health.

- Per-API/Per-Endpoint Throttling: As discussed, different endpoints might have different criticality or resource consumption, warranting independent step function policies.
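Per-client limits that follow the global step could be sketched like this. The tier names, base rates, and guaranteed floors are invented for the example, and the token bucket is a generic one rather than any specific gateway's limiter.

```python
# Hypothetical entitlements per client tier, in requests per second.
CLIENT_BASE_TPS = {"free": 10, "pro": 100, "enterprise": 1000}
FLOOR_TPS = {"free": 0, "pro": 5, "enterprise": 50}  # never throttle below this


def effective_limit(tier: str, step_percent: int) -> int:
    """Scale a client's entitlement by the current global throttle step."""
    scaled = CLIENT_BASE_TPS[tier] * step_percent // 100
    return max(FLOOR_TPS[tier], scaled)


class TokenBucket:
    """Token bucket whose rate can be changed when the step changes."""

    def __init__(self, rate: int, now: float = 0.0):
        self.rate = rate
        self.capacity = rate          # allow bursts of up to one second of traffic
        self.tokens = float(rate)
        self.updated = now

    def _refill(self, now: float) -> None:
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now

    def set_rate(self, rate: int, now: float) -> None:
        self._refill(now)             # settle tokens at the old rate first
        self.rate = rate
        self.capacity = rate
        self.tokens = min(self.tokens, self.capacity)

    def try_acquire(self, now: float) -> bool:
        self._refill(now)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

When the global step moves from 100% to 20%, calling `set_rate(effective_limit(tier, 20), now)` on each client's bucket tightens every client proportionally while respecting the per-tier floor.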

Auto-Scaling Integration

Step function throttling serves as an excellent first line of defense before auto-scaling actions kick in.

- Throttling as Pre-Scaling: When load starts to increase, throttling can temporarily mitigate the pressure, buying time for auto-scaling mechanisms to provision and bring online new instances. This prevents over-scaling for transient spikes and ensures that new instances are brought online gracefully.

- Signaling for Scaling: The metrics used for step function throttling (e.g., sustained high CPU, growing queue depths) can also be used as triggers for horizontal auto-scaling, ensuring that capacity is added when needed.

By integrating step function throttling with these design patterns and best practices, organizations can build systems that are not only capable of handling massive loads but are also inherently resilient, adaptive, and efficient, ensuring optimal TPS under the most challenging conditions.

Case Studies and Real-World Scenarios: Step Function Throttling in Action

The theoretical underpinnings of step function throttling become most vivid when examined through the lens of real-world applications and challenging scenarios. These examples highlight how an adaptive throttling strategy, often orchestrated by a sophisticated API gateway, provides indispensable resilience and performance.

E-commerce During Black Friday

Imagine a major online retailer preparing for Black Friday, the epitome of unpredictable traffic surges. Historically, they've used fixed-rate throttling, setting a maximum TPS based on their highest-ever recorded peak load, plus a buffer. However, this approach leads to two problems:

1. Over-provisioning: For 99% of the year, their infrastructure runs far below capacity, wasting cloud spend.

2. Rigidity During Unexpected Spikes: If a viral marketing campaign or a competitor's outage redirects even more traffic than anticipated, their fixed throttle still pushes the system to its breaking point, causing outages or severe degradation.

With Step Function Throttling: The retailer implements a step function throttle on their API gateway for all core shopping APIs (product browsing, cart operations, checkout).

- Metrics: CPU utilization, database connection pool usage, p99 latency for the checkout API, and error rates (HTTP 5xx).

- Steps:

  - Full Capacity (100%): Default state.

  - High Load (80%): Triggered if CPU > 70% or p99 latency > 300ms for 30 seconds.

  - Critical (50%): Triggered if CPU > 85% or p99 latency > 600ms or error rate > 5% for 15 seconds.

  - Emergency (20%): Triggered if database connections saturate or error rate > 10% for 5 seconds.

- Prioritization: At lower throttle steps, the system prioritizes existing checkout flows over new product browsing requests, and VIP customer accounts receive preferential treatment.
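The trigger side of this step table can be written down as data plus a small evaluator. The sketch below uses the thresholds listed above; the metric key names, the reading of "connections saturate" as pool usage reaching 100%, and the `TriggerEvaluator` class are assumptions, and recovery rules (hysteresis) are left out for brevity.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple

Metrics = Dict[str, float]


@dataclass
class Step:
    name: str
    percent: int            # share of baseline TPS admitted at this step
    hold_seconds: float     # how long the condition must hold before triggering
    breached: Callable[[Metrics], bool]


# Ordered from most to least severe.
STEPS = [
    Step("emergency", 20, 5,
         lambda m: m["db_pool_used"] >= 1.0 or m["error_rate"] > 0.10),
    Step("critical", 50, 15,
         lambda m: m["cpu"] > 85 or m["p99_ms"] > 600 or m["error_rate"] > 0.05),
    Step("high_load", 80, 30,
         lambda m: m["cpu"] > 70 or m["p99_ms"] > 300),
]


class TriggerEvaluator:
    """Picks the most severe step whose condition has held long enough."""

    def __init__(self) -> None:
        self._since: Dict[str, Optional[float]] = {s.name: None for s in STEPS}

    def evaluate(self, m: Metrics, now: float) -> Tuple[str, int]:
        selected: Optional[Step] = None
        for step in STEPS:
            if step.breached(m):
                if self._since[step.name] is None:
                    self._since[step.name] = now
                sustained = now - self._since[step.name] >= step.hold_seconds
                if sustained and selected is None:
                    selected = step  # first hit is the most severe
            else:
                self._since[step.name] = None  # condition cleared, reset window
        if selected is None:
            return ("full", 100)
        return (selected.name, selected.percent)
```

Because recovery is not modelled here, the evaluator returns to full capacity as soon as the conditions clear; a production version would pair each step with a stricter, longer recovery rule.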

Outcome: During Black Friday, a record-breaking influx of traffic hits.

1. The API gateway detects rising CPU and latency, automatically stepping down the throttle from 100% to 80%, then to 50%.

2. Crucially, it buys time for auto-scaling groups to provision new instances.

3. While some new users might experience brief delays or temporary "we're busy" messages for non-critical actions, the core checkout functionality remains operational.

4. The system avoids a full outage, gracefully managing the load and maximizing successful transactions during the most critical sales period.

As load subsides and new instances come online, the throttle gradually increases back to full capacity.

Social Media Platforms During Viral Events

Social media platforms are constantly at the mercy of viral content. A single tweet or post going global can generate millions of requests per second within minutes, hitting multiple APIs (content delivery, notification, user profile). Fixed-rate throttling would either be too conservative (leading to constant rejections) or too liberal (leading to collapses).

With Step Function Throttling: A social media platform deploys step function throttling at various layers:

- Edge Gateway: Handles global rate limits based on overall system health.

- Notification Service Gateway: Implements its own adaptive throttling based on its queue depth and ability to push notifications.

- Content Service API: Adjusts its rate based on database read replicas' lag and cache hit ratios.

Example Metric-Action:

- If the database read replica lag for the content service exceeds 5 seconds, the content service's internal throttle (managed by its own mini-gateway or sidecar) reduces its allowed request rate by 20%. This, in turn, causes its upstream callers (including the edge API gateway) to detect increased latency or 503 errors from the content service, prompting them to further reduce their own outbound request rates to the struggling service, or activate fallback mechanisms.

- The edge API gateway monitors the overall system and, in response to signs of stress, might prioritize requests from premium users or essential system updates over less critical activities like feed refreshes.
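The replica-lag rule reduces to a few lines of arithmetic. In the sketch below, the function name, the `min_rate` floor, and the choice to apply the 20% cut again on every evaluation while lag stays high (so cuts compound) are assumptions; the scenario above only states the threshold and the size of the reduction.

```python
def adjust_rate(
    current_rate: int,
    replica_lag_s: float,
    lag_threshold_s: float = 5.0,
    reduction_percent: int = 20,
    min_rate: int = 1,
) -> int:
    """Cut the allowed request rate when read-replica lag exceeds the threshold."""
    if replica_lag_s <= lag_threshold_s:
        return current_rate  # lag is acceptable, leave the rate alone
    reduced = current_rate * (100 - reduction_percent) // 100
    return max(min_rate, reduced)  # never drop below the configured floor
```

For example, a service allowed 1000 requests per second drops to 800 on the first evaluation with 6 seconds of lag, and to 640 on the next one if the lag persists.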

Outcome: When a global event causes a massive spike in activity, the system adapts dynamically. Instead of collapsing, it gracefully throttles down specific non-critical functions, ensuring that the platform remains accessible and functional for key interactions. Notifications might be delayed, and some less popular content might take longer to load, but the core ability to post, like, and share remains stable.

Microservices Architecture with Inter-Service Dependencies

In a complex microservices environment, services often depend on each other. A slowdown in one service (e.g., a "Product Catalog" service due to a slow database query) can quickly create a bottleneck that affects all its upstream callers (e.g., "Order Service," "Recommendation Service").

With Step Function Throttling: Each critical microservice implements its own step function throttling, often managed by a local proxy or service mesh sidecar acting as a micro-gateway.

- Product Catalog Service: Monitors its own thread pool utilization, database connection health, and p99 latency for its primary API endpoints.

- Order Service: Calls the Product Catalog. It uses a circuit breaker that wraps calls to the Product Catalog. If the circuit breaker trips, it falls back to a cached version of product data or returns a "product unavailable" message rather than waiting indefinitely.

- Central API Gateway: Monitors the health of all upstream services.

Interplay:

1. The Product Catalog service experiences a sudden slowdown due to a problematic database query or high load.

2. Its internal step function throttle detects rising p99 latency and CPU, and immediately reduces the rate at which it processes incoming requests, to protect its database.

3. The Order Service, making calls to the Product Catalog, sees increased latency or 503 errors. Its circuit breaker trips, causing it to stop calling the Product Catalog for a short period and use a fallback.

4. The central API gateway detects the reduced availability of the Product Catalog (via health checks or increased error rates from upstream services trying to call it). It then adjusts its global step function throttle to reduce the overall inbound requests for APIs that ultimately depend on the Product Catalog, preventing new requests from even hitting the Order Service.

Outcome: This multi-layered adaptive throttling prevents a single point of failure (the Product Catalog) from bringing down the entire system. The Order Service remains functional (albeit with potentially stale data), and the overall system maintains stability. The API gateway acts as the orchestrator, making high-level decisions, while individual services handle their localized adaptive throttling.

These case studies illustrate the profound impact of step function throttling. It transforms a brittle, static defense into a dynamic, intelligent, and resilient mechanism. By continuously monitoring system health and adapting the allowed transaction rate, organizations can confidently operate high-performance, high-availability services even in the face of unpredictable demand and internal stressors, with the API gateway serving as a central enforcer and coordinator of these sophisticated policies.

The Economic and Operational Benefits of Adaptive Throttling

Beyond preventing catastrophic failures and ensuring basic functionality, the adoption of step function throttling yields a myriad of tangible benefits that impact an organization's bottom line, operational efficiency, and strategic positioning. These advantages underscore why investing in adaptive traffic management is not merely a technical necessity but a sound business decision.

Cost Savings: Optimized Resource Utilization

One of the most immediate and significant economic benefits comes from optimized resource utilization. In cloud environments, where infrastructure is billed on a pay-as-you-go basis, inefficient resource allocation directly translates to wasted money.

- Preventing Over-provisioning: Traditional fixed-rate throttling often necessitates over-provisioning infrastructure to handle anticipated peak loads, even if those peaks occur infrequently. This means paying for idle capacity most of the time. Step function throttling, by dynamically adjusting the allowed rate based on real-time capacity, allows systems to run leaner. When demand is low, the throttle is open, using only necessary resources. When demand spikes, it adapts, effectively managing the load until auto-scaling mechanisms can kick in, rather than requiring always-on excess capacity.

- Efficient Cloud Spend: By ensuring that resources are neither idle nor overwhelmed, adaptive throttling maximizes the utility of every dollar spent on cloud infrastructure. It reduces the need for expensive, high-tier instances that might only be fully utilized for a few hours a month. This precise matching of capacity to demand is crucial for cost-conscious organizations operating at scale.

Improved Reliability: Consistent Performance and Reduced Downtime

The core purpose of throttling is to enhance system stability, and step function throttling excels at this, leading to dramatically improved reliability.

- Fewer Outages and Crashes: By proactively shedding load when internal metrics indicate stress, the system avoids being pushed beyond its breaking point. This significantly reduces the likelihood of catastrophic outages, system crashes, and cascading failures that can cripple operations and erode customer trust.

- Consistent Performance: Even during periods of high load, the system maintains a more predictable and consistent level of performance for the requests it does accept. Instead of wildly fluctuating latency and high error rates, users experience a more stable service, albeit potentially with some temporary delays or selective rejections, which is preferable to complete unavailability.

- Enhanced Resilience: The system becomes inherently more resilient to unforeseen events, whether they are legitimate traffic surges, internal service degradations, or even certain types of cyberattacks. It can "bend but not break," adapting to stressors rather than succumbing to them.

Enhanced User Experience: Speed, Responsiveness, and Trust

Ultimately, the goal of any digital service is to serve its users effectively. Adaptive throttling contributes significantly to a superior user experience.

- Reduced Latency and Fewer Errors (for accepted requests): By preventing overload, the system ensures that accepted requests are processed efficiently, leading to lower latency and fewer user-facing errors.

- Graceful Degradation: Users perceive a system that gracefully degrades (e.g., informs them of high load, offers a retry, or temporarily disables non-critical features) much more positively than one that simply crashes or becomes completely unresponsive. This predictability fosters trust and reduces user frustration.

- Brand Reputation: A service known for its consistent availability and performance, even under stress, builds a strong brand reputation. This is a critical asset in today's competitive digital marketplace.

Operational Efficiency: Automation and Reduced Manual Intervention

Step function throttling introduces a significant degree of automation into traffic management, freeing up operations teams and reducing the reliance on manual interventions during crises.

- Automated Response to Load Fluctuations: The system autonomously detects rising stress and adjusts its inbound traffic flow without human involvement. This eliminates the frantic, often error-prone, manual adjustments that operations teams might otherwise undertake during traffic spikes or outages.

- Faster Recovery: By preventing deeper system degradation, the time to recovery (MTTR - Mean Time To Recover) from incidents is often dramatically reduced. The system can stabilize itself more quickly.

- Proactive Problem Management: By using metrics to trigger throttling before a full outage, operations teams can shift from a purely reactive "firefighting" mode to a more proactive stance, having more time to investigate root causes and implement long-term solutions.

- Clearer Monitoring and Alerting: The specific steps and thresholds provide clear indicators of system health, making monitoring dashboards more informative and alerts more actionable.

Strategic Advantage: Building Future-Proof Systems

Organizations that embrace adaptive throttling position themselves strategically for future growth and innovation.

- Scalability Confidence: With a robust adaptive throttling mechanism in place, businesses can launch new products, undertake aggressive marketing campaigns, and onboard new users with greater confidence, knowing their infrastructure can cope with the resulting load.

- Foundation for Innovation: A stable, performant, and resilient platform provides a solid foundation upon which to build new features and services. Developers can focus on innovation rather than constantly firefighting performance issues.

- Competitive Edge: The ability to consistently deliver high-performance services, even under extreme conditions, provides a distinct competitive advantage in any industry.

The economic and operational benefits of step function throttling are multifaceted and profound. By moving beyond static limits to an intelligent, adaptive approach, organizations can achieve a sweet spot of cost efficiency, unwavering reliability, superior user experience, and operational agility. This transformation is pivotal in building future-proof digital architectures capable of thriving in an increasingly dynamic and demanding online world, with a capable API gateway often serving as the strategic control point for these advanced traffic management policies.

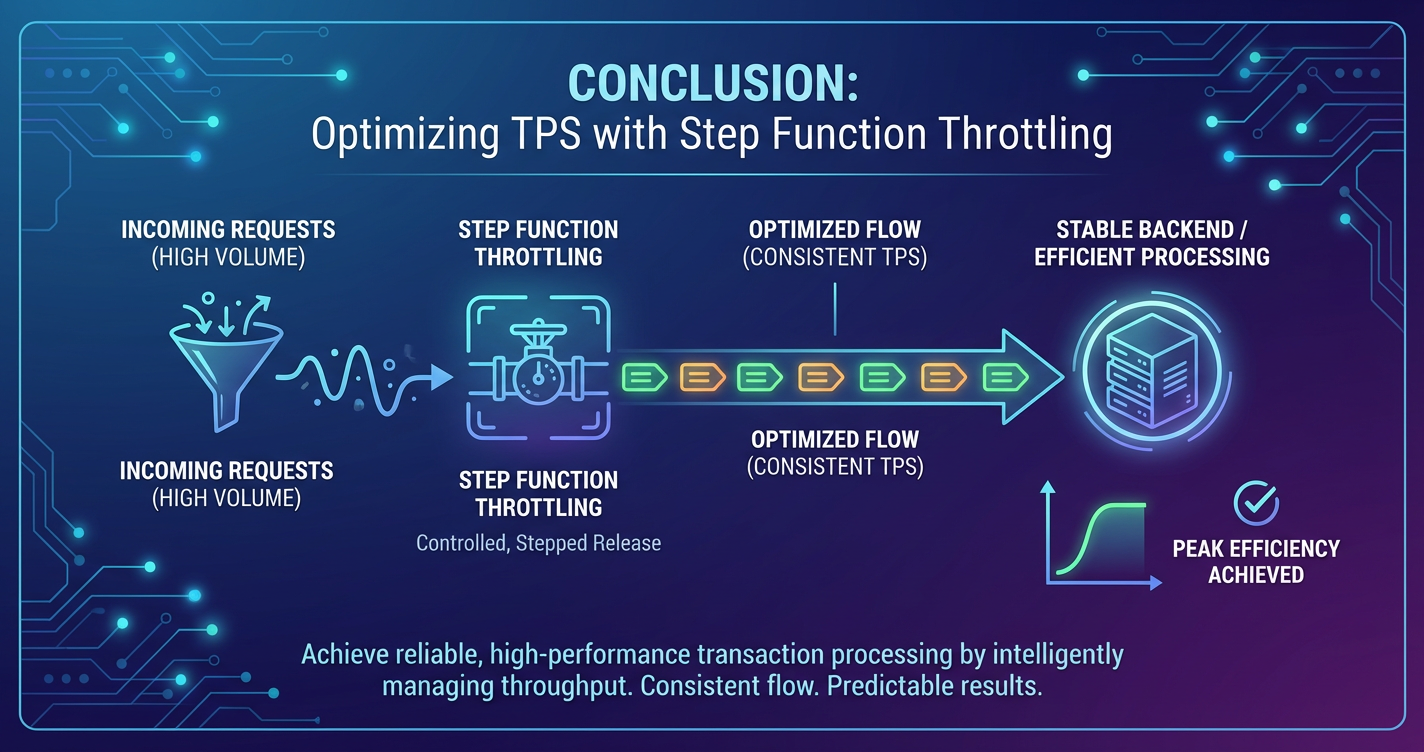

Conclusion: The Imperative of Adaptive TPS Optimization

In the ceaselessly evolving digital landscape, where the velocity of transactions defines the very pulse of an enterprise, optimizing Transactions Per Second (TPS) transcends mere technical preoccupation; it becomes a fundamental pillar of business survival and success. The relentless pressure of burgeoning user demands, the inherent unpredictability of traffic patterns, and the ever-present specter of system overload necessitate a paradigm shift from static, reactive traffic management to dynamic, proactive, and intelligent control. While traditional throttling mechanisms laid a vital groundwork for preventing uncontrolled resource exhaustion, their inherent rigidity often proved to be a double-edged sword, leading to either costly over-provisioning or perilous under-adaptation in the face of fluctuating system health.

This extensive exploration has illuminated the critical shortcomings of fixed-rate approaches and, more importantly, championed the profound benefits of step function throttling. By continuously monitoring a rich array of system health metrics—from CPU utilization and database connection saturation to critical latency percentiles and error rates—step function throttling empowers systems to dynamically adjust their allowed transaction rates in a series of predefined steps. This adaptive methodology ensures that resources are optimally utilized, preventing both wasteful idleness during low demand and catastrophic failure during peak loads or internal degradations. It is a sophisticated dance between observing, deciding, and acting, a continuous feedback loop that allows digital infrastructures to breathe, adapt, and stabilize autonomously.

The implementation of step function throttling, particularly when orchestrated through a robust API gateway, transforms a fragile system into a resilient one. An API gateway acts as the central nervous system for all inbound and outbound API traffic, making it the ideal control point for enforcing these nuanced policies. Products like APIPark, an open-source AI gateway and API management platform, exemplify how modern gateway solutions provide the foundational infrastructure for such advanced traffic management capabilities. With features like comprehensive API lifecycle management, traffic forwarding, load balancing, and crucial detailed API call logging, APIPark facilitates the precise monitoring and granular control necessary to implement effective step function throttling, ensuring optimal performance and security for critical API ecosystems.

Furthermore, the integration of step function throttling within a broader resilience strategy—encompassing graceful degradation, intelligent backpressure mechanisms, and complementary patterns like circuit breakers and bulkheads—creates a multi-layered defense. This holistic approach, underpinned by a rigorous commitment to observability, meticulous testing, and continuous tuning, ensures that systems are not merely protected but are inherently designed for sustained high performance and unwavering reliability.

The economic and operational benefits derived from this adaptive approach are far-reaching. From significant cost savings through optimized cloud resource utilization to dramatically improved system reliability and a superior user experience, step function throttling directly contributes to an organization's bottom line and competitive advantage. It fosters operational efficiency by automating critical responses to load fluctuations, freeing human operators from constant firefighting, and enabling a more proactive stance towards system health.

In conclusion, as the digital world grows ever more complex and demanding, the pursuit of optimal TPS is no longer about simply handling more transactions, but about handling them intelligently, adaptively, and resiliently. Step function throttling is not just an advanced technical capability; it is an imperative strategy for building future-proof, high-performance systems that can confidently navigate the volatile currents of modern digital traffic, ensuring continuity, stability, and success in an interconnected world. The journey towards true digital resilience begins with an intelligent gateway and adaptive throttling at its core.

Frequently Asked Questions (FAQs)

1. What is the primary advantage of step function throttling over fixed-rate throttling?

The primary advantage of step function throttling is its adaptability to real-time system conditions. Fixed-rate throttling enforces a static, predetermined maximum request rate, regardless of the system's actual health or available capacity. In contrast, step function throttling dynamically adjusts the allowed request rate in a series of defined "steps" based on continuous monitoring of metrics like CPU utilization, latency, and error rates. This ensures optimal resource utilization, preventing both wasted capacity during low load and catastrophic overload when the system is stressed or degraded, leading to improved resilience and a more consistent user experience.

2. What key metrics are crucial for effective step function throttling?

Effective step function throttling relies on a diverse set of key metrics to accurately gauge system health and inform throttling decisions. Crucial metrics include:

- Resource Utilization: CPU utilization, memory usage, network I/O, disk I/O, database connection pool saturation.

- Performance Indicators: P90/P99 latency (response times), server-side error rates (HTTP 5xx), and request queue depths.

- Application-Specific Metrics: Examples include worker pool availability, garbage collection activity, or business transaction success rates.

A combination of these metrics, evaluated over specific time windows, provides a comprehensive view of system health, allowing for intelligent and timely throttle adjustments.

3. Can step function throttling replace auto-scaling?

No, step function throttling cannot entirely replace auto-scaling; instead, they are complementary strategies that work best when integrated. Step function throttling acts as a first line of defense, proactively shedding excess load when the system begins to show signs of stress, thereby preventing immediate overload and buying time. Auto-scaling, on the other hand, is designed to dynamically add or remove computing resources (e.g., virtual machines, containers) based on demand. Throttling can prevent transient spikes from triggering unnecessary scaling, and it ensures that the system remains stable while new instances are being provisioned and brought online, creating a more robust and cost-efficient scaling strategy.

4. What are the potential challenges in implementing step function throttling?

Implementing step function throttling comes with several challenges:

- Increased Complexity: It requires a robust monitoring infrastructure, careful selection of relevant metrics, and precise definition of multiple "steps" and their associated thresholds.

- Tuning Difficulties: Defining the "right" thresholds for triggering and recovering, and determining the appropriate throttle increments, is an iterative process that requires extensive testing and continuous refinement to avoid over-throttling or insufficient reaction.

- Risk of Oscillations: Without proper damping mechanisms (e.g., hysteresis, sustained metric breaches over time windows), the system might rapidly switch between throttle levels, leading to instability.

- Observability Requirements: A high degree of visibility into the system's internal state is essential, necessitating comprehensive logging, monitoring, and alerting.

5. How does an API gateway contribute to implementing advanced throttling strategies?

An API gateway plays a central and strategic role in implementing advanced throttling strategies like step function throttling for several reasons:

- Centralized Control Point: It acts as the single entry point for all API traffic, allowing throttling policies to be applied consistently across multiple APIs and services.

- Traffic Interception: The gateway can intercept, inspect, and modify incoming requests before they reach backend services, making it an ideal place to enforce dynamic rate limits and reject excess traffic at the edge.

- Contextual Information: A sophisticated gateway can leverage client-specific information (e.g., API keys, user roles) to apply granular throttling rules.

- Monitoring and Logging Infrastructure: Many API gateways offer built-in monitoring and detailed API call logging capabilities (like those in APIPark), which are crucial for collecting the real-time metrics needed to inform step function throttling decisions.

- Integration with Backend Health: Advanced gateways can be configured to react to the health status of backend services, allowing them to adjust throttling based on downstream dependencies' performance, thus acting as an intelligent orchestrator of system resilience.
